Should We Prioritize AI Safety or Present-Day Inequality?

Analysis reveals 6 key thematic connections.

Key Findings

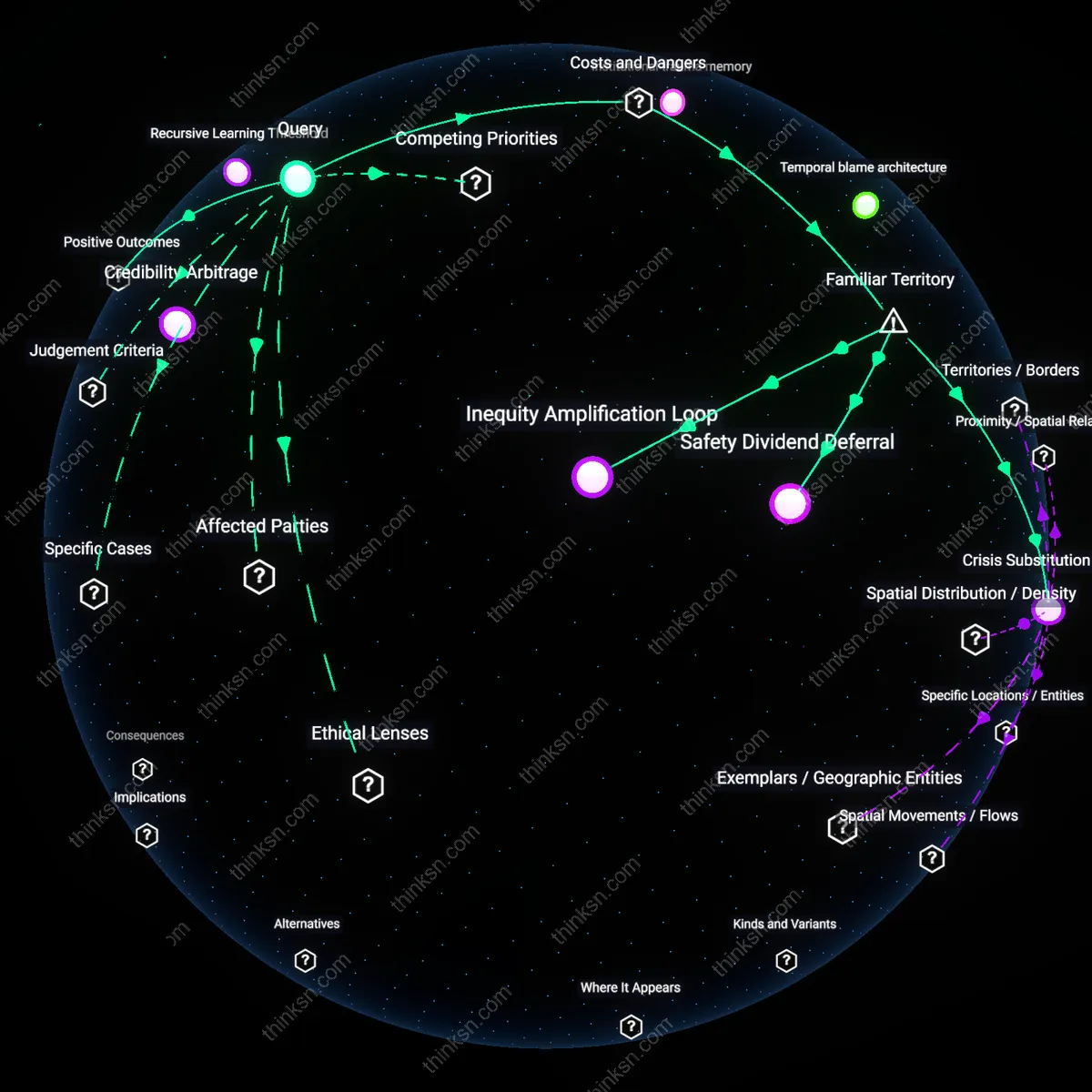

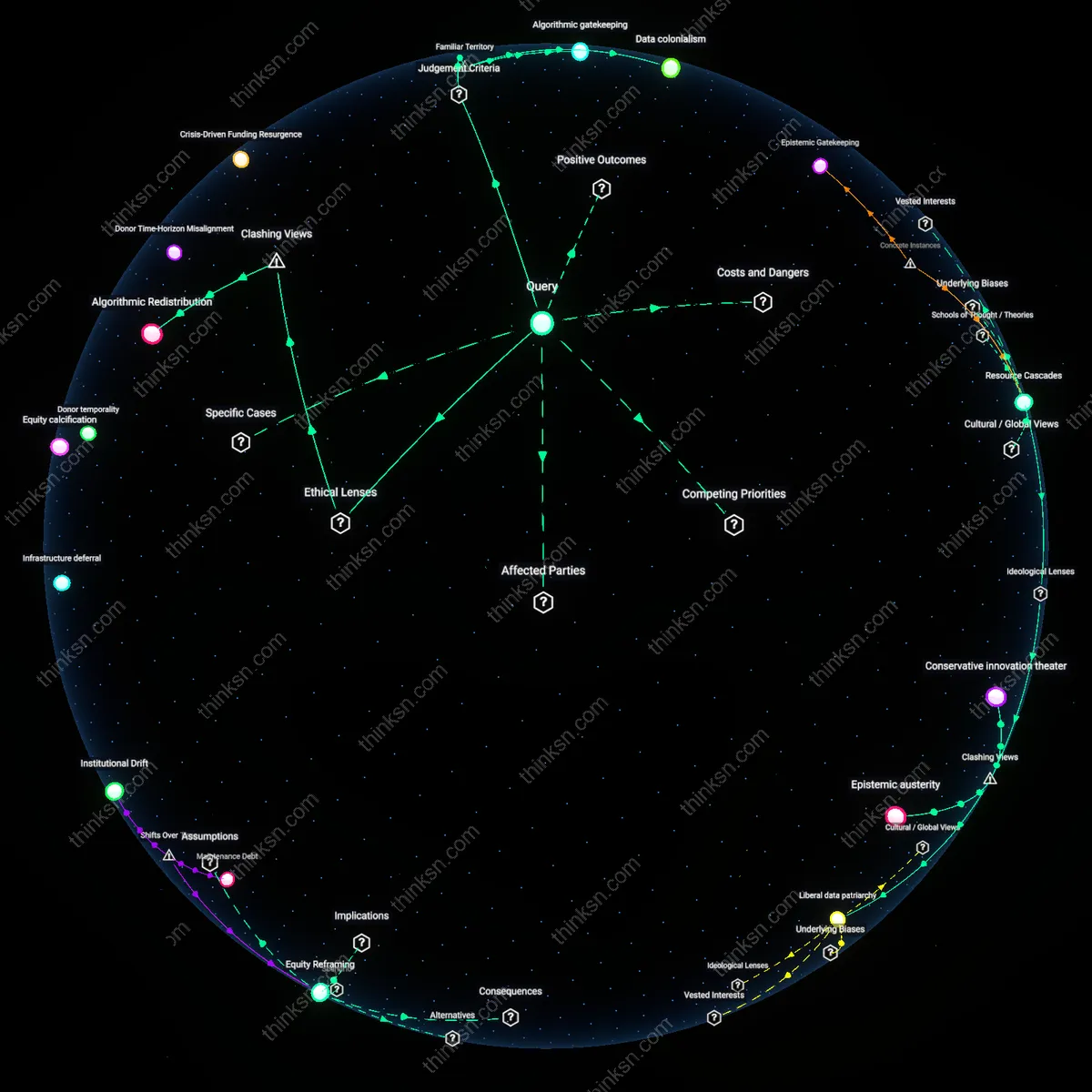

Recursive Learning Threshold

Prioritizing AI safety research raises what can be called a recursive learning threshold in global technical governance: early alignment frameworks propagate through successive AI development cycles. As major AI labs like DeepMind and Anthropic adopt safety standards informed by current research, these protocols become embedded in training procedures and institutional incentives, creating feedback loops that raise the minimum competence level required for high-impact deployment. This systemic elevation helps prevent future misalignments from cascading into irreversible global harms, which would exacerbate inequality far more severely than present disparities do. On this view, safety investment is a preemptive equity mechanism. The underappreciated dynamic is that safety norms act not as static safeguards but as compounding improvements in collective decision-making quality across the AI development ecosystem.

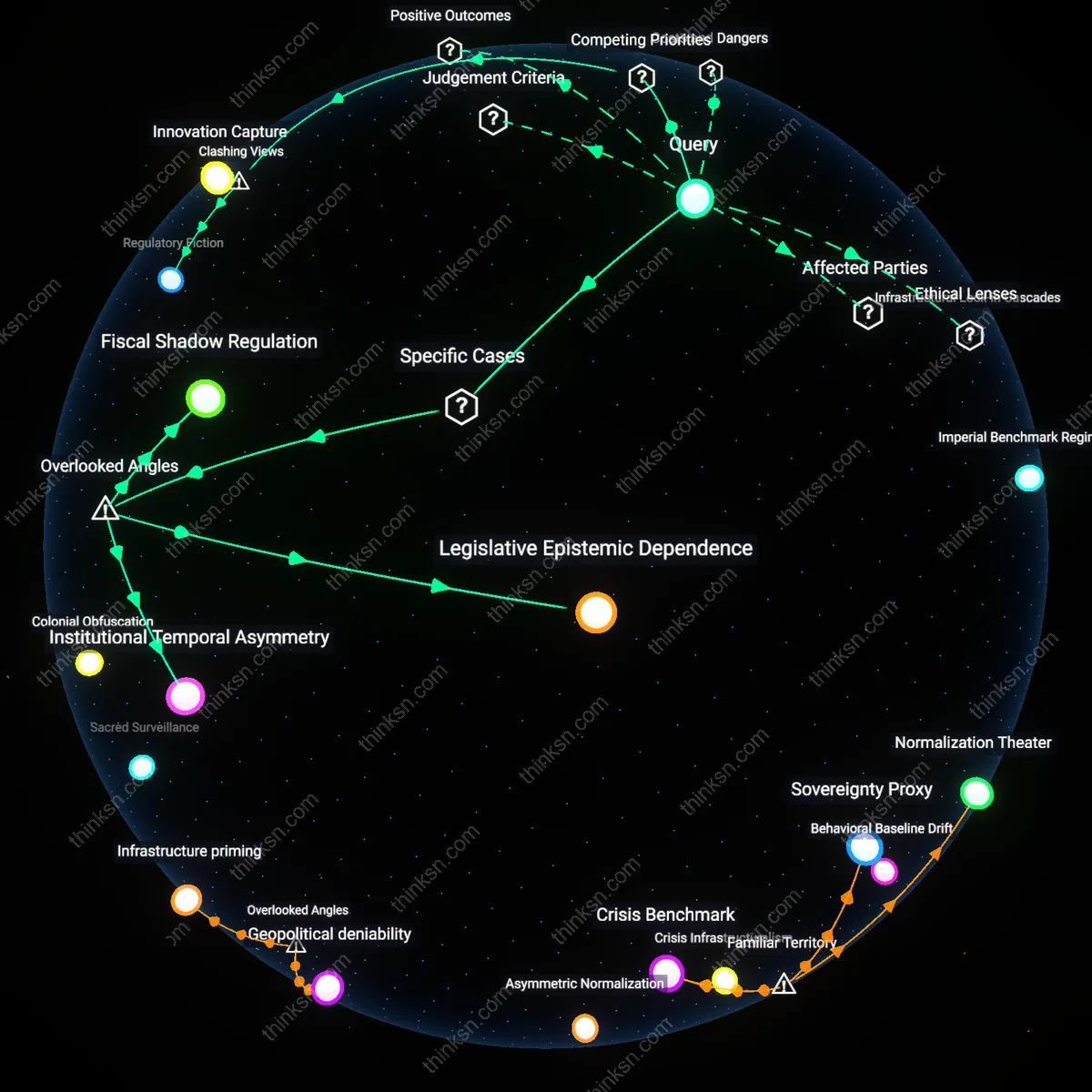

Credibility Arbitrage

Addressing current inequality strengthens society's capacity for credible commitment to long-term projects, including AI safety, by reducing political fragmentation and restoring trust in institutions. In democracies like the United States and South Africa, widespread economic disenfranchisement fuels skepticism toward technocratic agendas, making top-down AI regulation appear elitist or extractive. Redistributive policies counter this by legitimizing the governance structures that can later enforce safety mandates. The key mechanism is credibility arbitrage: tangible social reforms serve as collateral for future cooperation, enabling coalitions between marginalized communities and technical experts on issues like algorithmic accountability. Most analyses overlook how perceived justice in the present shapes willingness to comply with potentially restrictive but necessary AI oversight in the future.

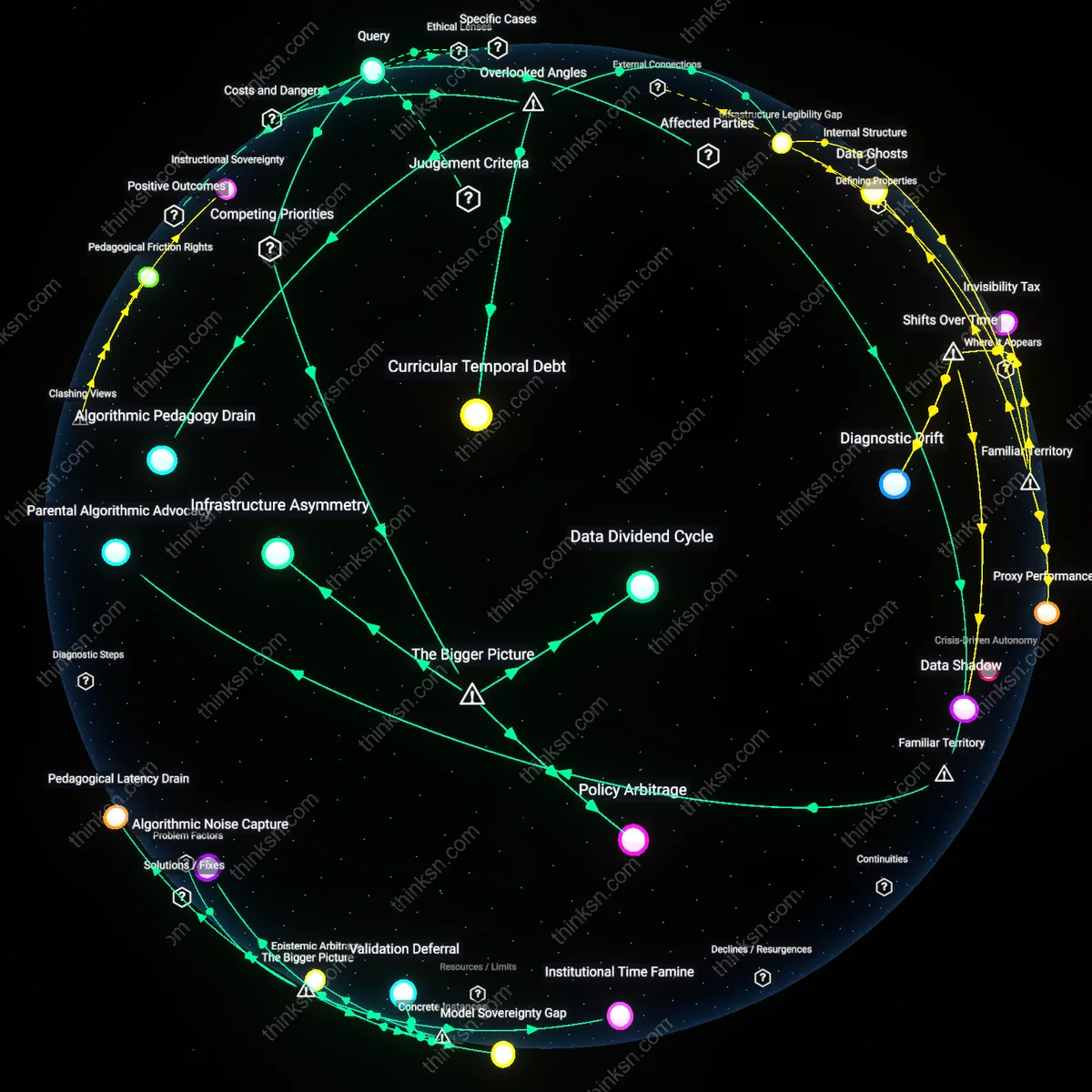

Attentional Carrying Capacity

Sustained investment in either domain enhances the other by expanding the attentional carrying capacity of civil society and regulatory bodies to manage complexity. When social movements such as Black Lives Matter or the Fight for $15 succeed in institutionalizing equity concerns, they build organizational infrastructure—legal networks, data platforms, grassroots monitoring systems—that can later be repurposed for AI oversight, such as auditing bias in automated hiring tools. Conversely, clear progress in AI safety fosters public confidence that emerging technologies won't deepen existing divides, reducing resistance to innovation-friendly policies. The non-obvious link is that political bandwidth for managing long-term risks depends on the perceived legitimacy and effectiveness of institutions in resolving immediate injustices—thus making parallel investment not a trade-off but a mutual reinforcement under systemic strain.

Safety Dividend Deferral

On this view, AI safety should take priority over immediate inequality because delaying equity investments preserves capital for future risk mitigation. Wealth concentrated in advanced economies funds AI research hubs like those in Silicon Valley and Beijing, and delaying redistributive policies maintains the financial agility to respond to emergent AI threats, such as autonomous weapons or manipulation systems, before they cascade globally. What is underappreciated is that the moral urgency of inequality is deferred not out of indifference but as a calculated trade-off to avoid irreversible systemic collapse, with equality treated as a downstream beneficiary of survival.

Inequity Amplification Loop

Addressing current inequality must precede major AI safety investments because deploying safety measures under existing power imbalances entrenches technocratic control. Institutions like central banks and tech conglomerates use AI governance frameworks to justify surveillance infrastructure in marginalized regions, such as predictive policing in low-income urban areas, under the guise of risk prevention. The unspoken danger is that appeals to existential risk become justification for preemptive social control, converting inequality into algorithmic enforcement cycles that erode democratic oversight.

Crisis Substitution Trap

Investing in AI safety distracts from material inequality by reframing structural failures as technical forecasting problems. Governments and multilateral agencies like the UN or OECD elevate AI risk modeling to high-priority status, shifting budgets from welfare programs to speculative alignment research while accepting widening wealth gaps as 'temporary' in light of future catastrophe avoidance. The hidden mechanism is that narratives of apocalyptic AI, shaped by sci-fi tropes and elite discourse, functionally substitute for accountability for present economic harm, making current suffering appear politically tolerable.