Do Code Suggestions Speed Up Development or Introduce Stealthy Security Risks?

Analysis reveals 12 key thematic connections.

Key Findings

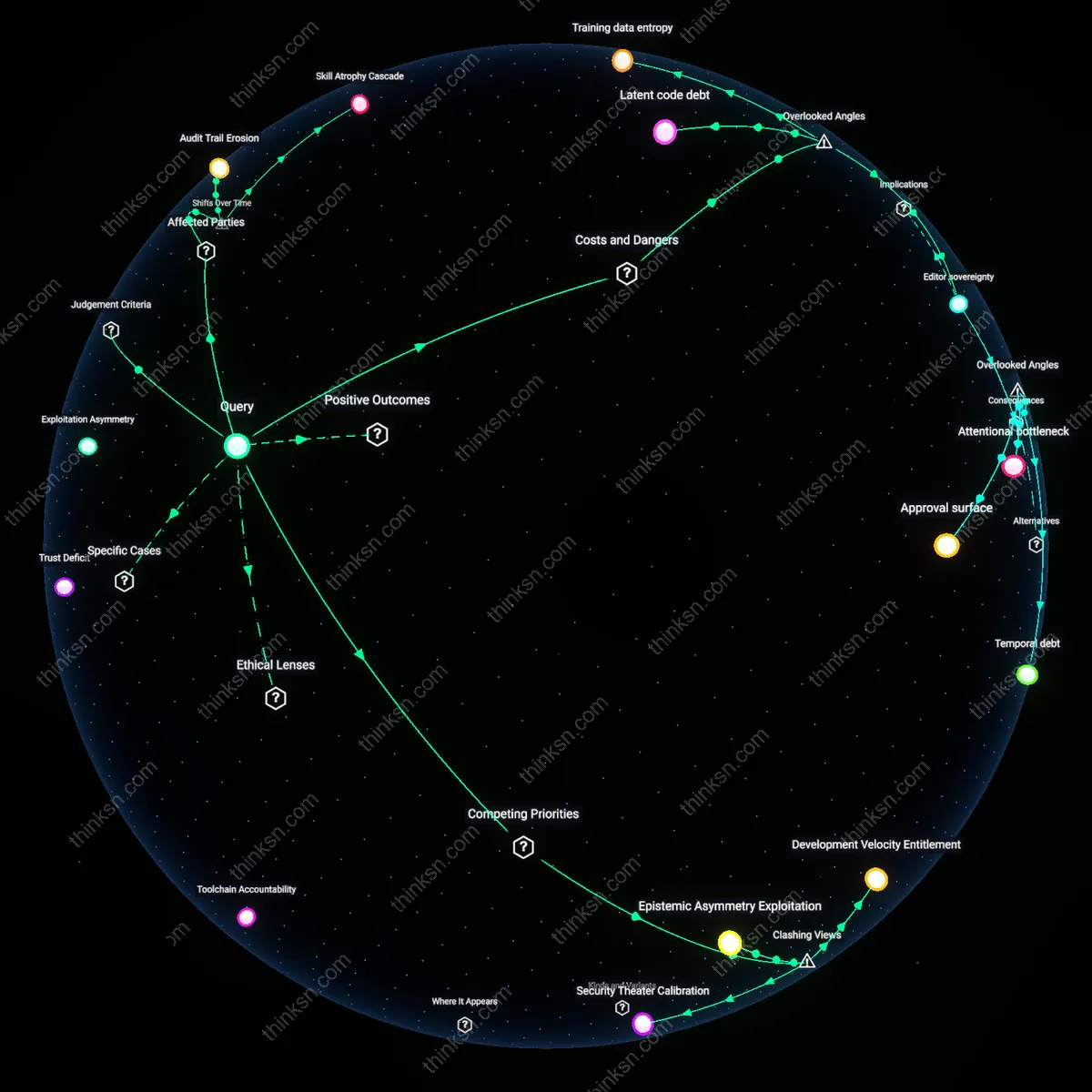

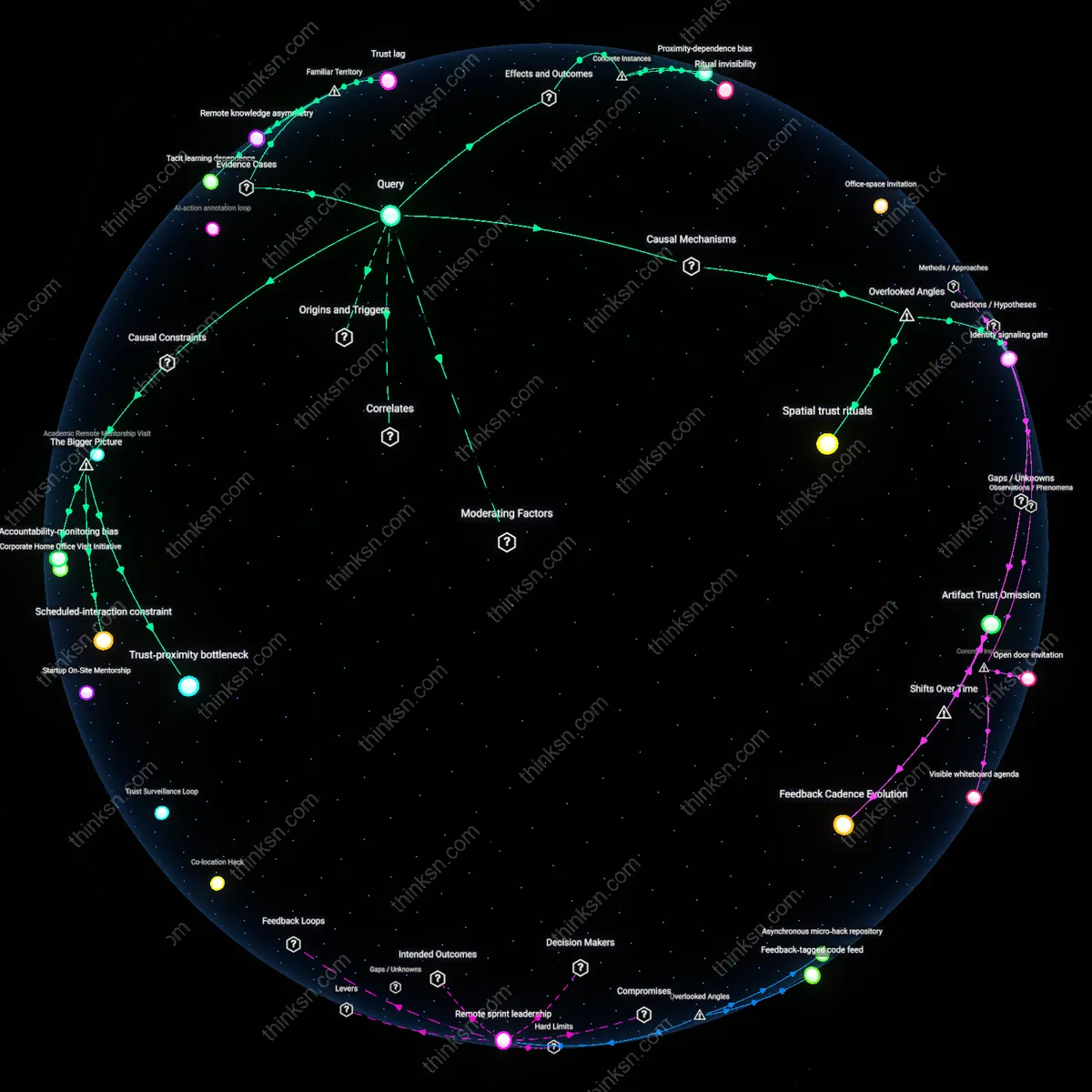

Developer Liability Asymmetry

Software developers now bear disproportionate liability for flaws in AI-generated code, a shift from the 2000s, when vendor warranties and formal verification insulated them from downstream failures. As firms like GitHub and Google shifted responsibility to end users after deploying Copilot and Codey in 2021–2023, developers, especially junior engineers in startups, absorbed risk without commensurate authority over model training data or security governance. This reversal masks an erosion of institutional accountability: platform providers externalize risk while capturing the productivity gains.

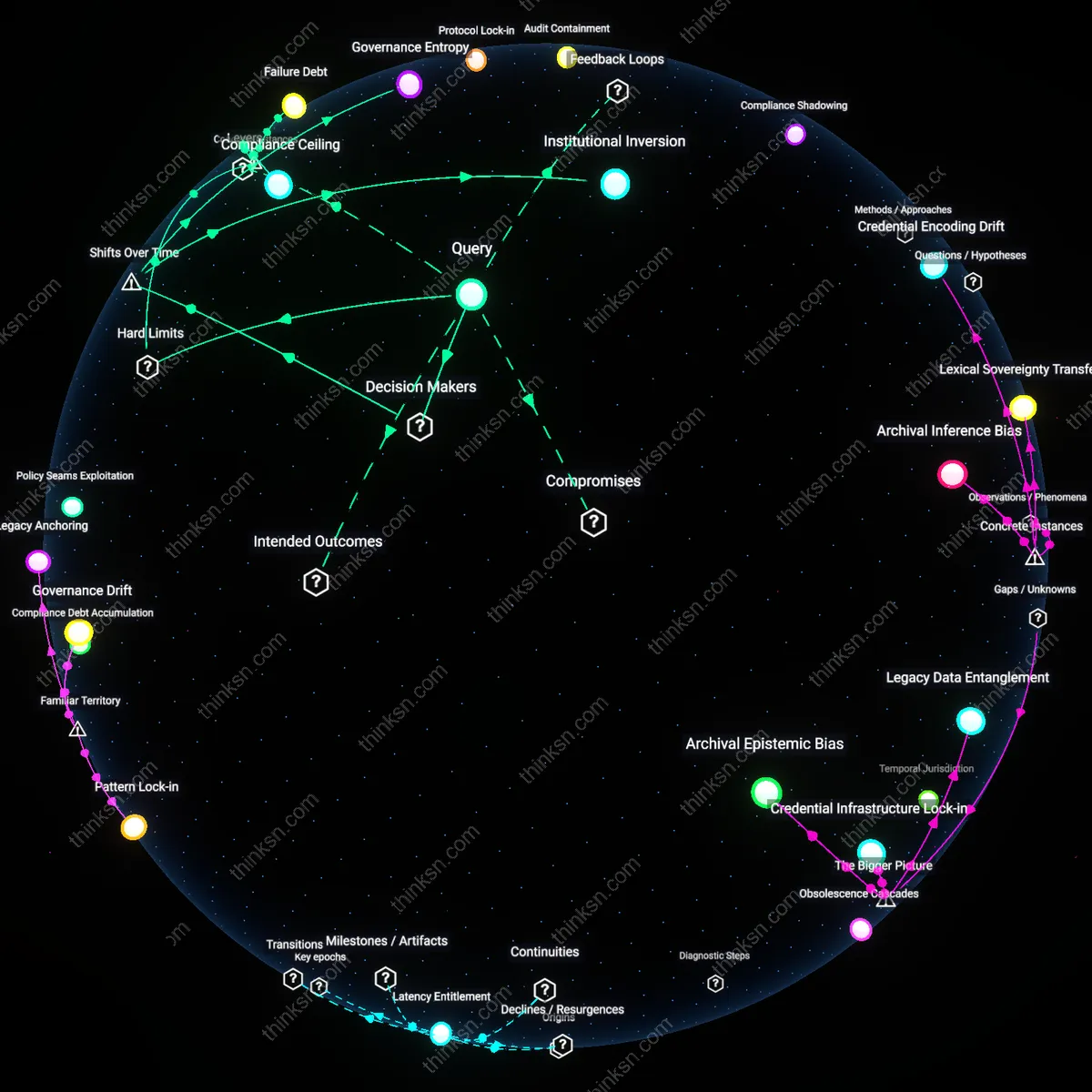

Audit Trail Erosion

The integrity of code provenance has deteriorated since 2022, as CI/CD pipelines in enterprises like JPMorgan and Lockheed Martin began integrating AI-generated snippets without versioned attribution to training data sources or model iterations. In the pre-2015 era, open-source licenses and commit logs enabled forensic tracing of vulnerabilities. Today's obfuscated lineage undermines compliance with standards like NIST SP 800-53 and weakens incident response for federal contractors. This rupture shows how automation outpaced governance infrastructure, to the point where assigning blame can be technically infeasible.
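To make "versioned attribution" concrete, the following is a minimal sketch of what a per-snippet provenance record could look like. It is not a description of any vendor's or enterprise's actual tooling: the `SnippetProvenance` class, its fields, and the `AI-Provenance:` commit trailer name are all hypothetical, and it assumes the assistant exposes a model version at all.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class SnippetProvenance:
    """Hypothetical provenance record for one accepted AI suggestion."""
    tool: str            # assistant name, as reported by the developer
    model_version: str   # model iteration, if the tool exposes one (assumption)
    file_path: str       # file where the snippet was accepted
    snippet_sha256: str  # content hash, so the snippet can be matched later


def record_provenance(snippet: str, tool: str, model_version: str,
                      file_path: str) -> SnippetProvenance:
    """Hash the snippet text and bundle it with the attribution fields."""
    digest = hashlib.sha256(snippet.encode("utf-8")).hexdigest()
    return SnippetProvenance(tool, model_version, file_path, digest)


def as_commit_trailer(record: SnippetProvenance) -> str:
    """Serialize the record as one trailer line (trailer name is invented here)."""
    return "AI-Provenance: " + json.dumps(asdict(record), sort_keys=True)
```

Appending such a line to a commit message would let an incident responder search history for a given snippet hash or model version, which is the kind of forensic tracing the paragraph above says has been lost.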

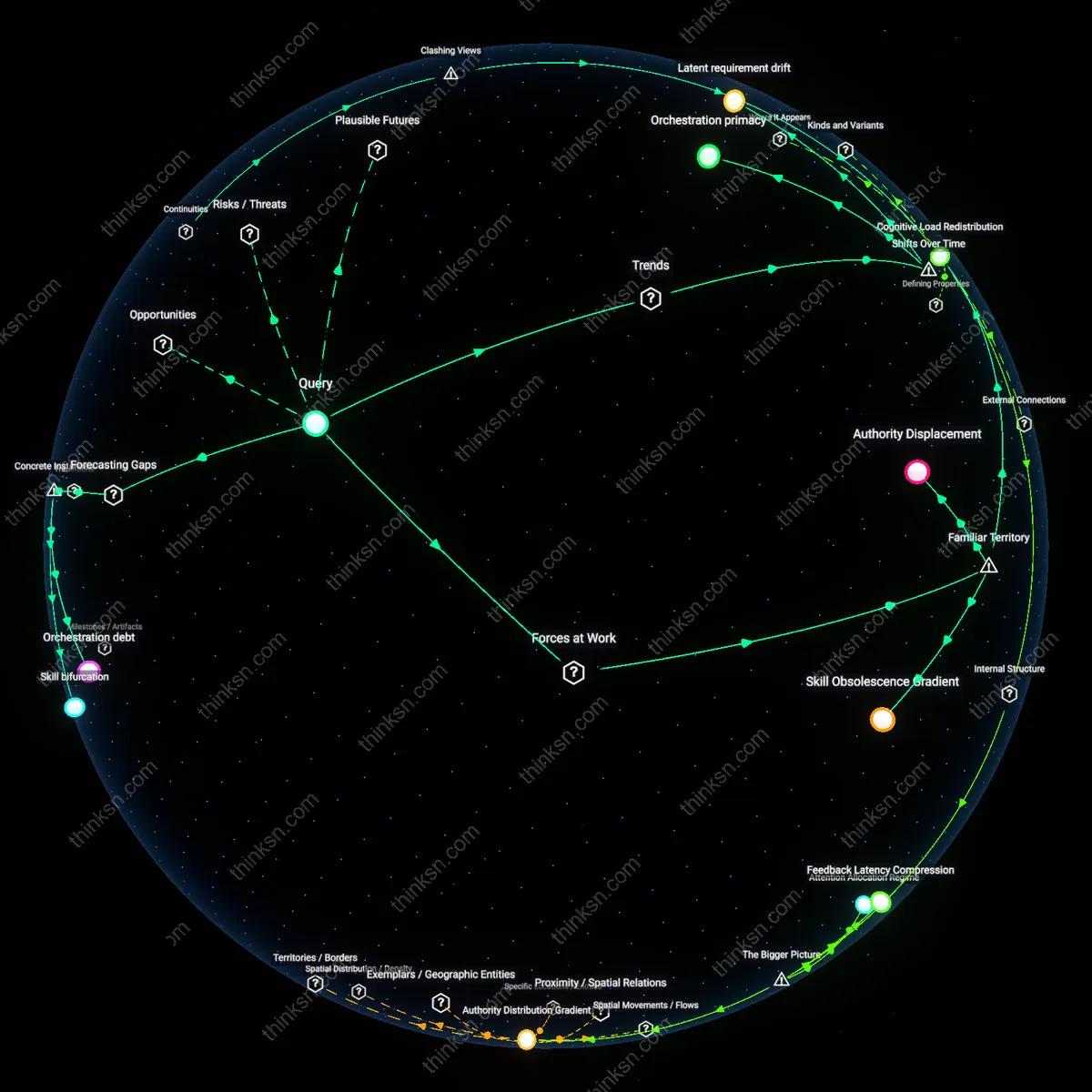

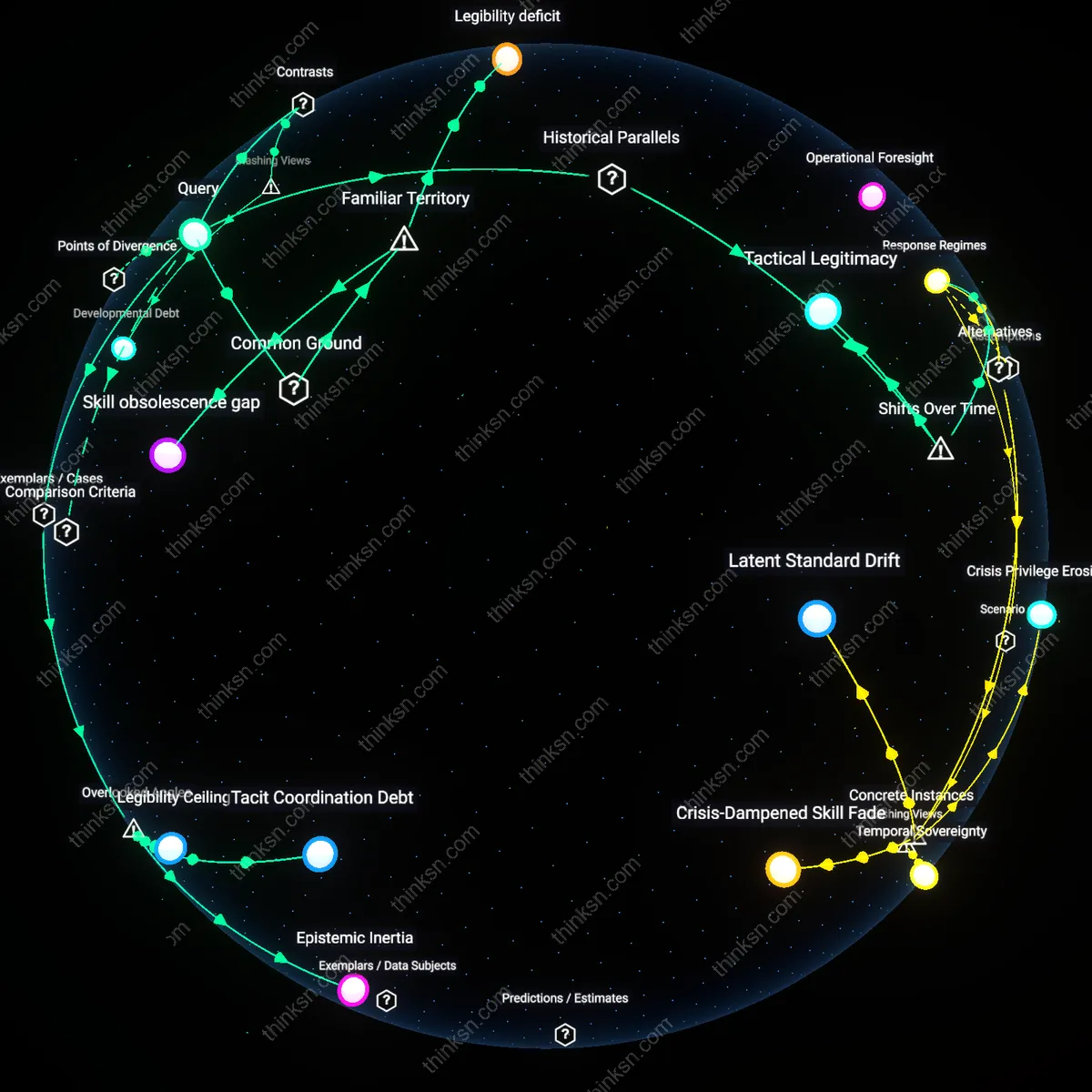

Skill Atrophy Cascade

Mid-level software engineers in the U.S. and Indian tech sectors are experiencing a measurable decline in debugging and systems design proficiency since 2021, directly tied to overreliance on AI code completions in IDEs like Visual Studio Code. Where the 1990s Linux kernel community institutionalized peer review and deep literacy through mailing lists and patch scrutiny, today’s accelerated development cycles prioritize output volume, weakening the transmission of tacit knowledge across generations. This transition risks creating a bifurcated labor market where only a shrinking elite retains the skill to audit or intervene in AI-generated systems.

Trust Deficit

Yes. The security risk from AI-generated code undermines faster development because it erodes developer and organizational trust in automated outputs, especially when vulnerabilities are silently introduced into mission-critical systems such as financial services or healthcare software. Accountability pathways break down when neither developers nor AI vendors assume full responsibility for defects, a mechanism rarely acknowledged in productivity-centric tool adoption. The non-obvious insight is that speed gains are negated not by the bugs themselves but by the organizational paralysis that uncertain liability induces.

Skill Atrophy

Yes. The security risk from AI-generated code undermines the benefits of faster development because it accelerates the erosion of deep coding competency among junior engineers, in startups and enterprises alike, who rely on opaque suggestions from tools like GitHub Copilot. Deliberate practice gives way to pattern absorption from AI models that may themselves be flawed, having been trained on insecure public repositories, and this dynamic compromises long-term system resilience. What is underappreciated is that the most immediate cost is not a breach but a workforce increasingly unable to audit or correct what the AI produces.

Exploitation Asymmetry

Yes. The security risk from AI-generated code undermines faster development because attackers use the same AI tools to rapidly discover and weaponize vulnerabilities in widely adopted auto-generated code patterns, particularly in open-source ecosystems like npm or PyPI. The result is an imbalance in offensive speed: automated penetration testing and exploit generation outpace defensive review cycles in understaffed projects. The overlooked reality is that the benefits of acceleration are broadly shared, while the risks are concentrated in the weakest downstream adopters.

Latent Code Debt

AI-generated code introduces hard-to-detect semantic inconsistencies that accumulate as latent code debt, imperceptible during development but capable of triggering systemic failures in edge-case runtime environments. This occurs because AI models train on fragmented, unvetted public repositories where malformed or context-specific code patterns are indistinguishable from robust ones, and IDE integrations deploy them without runtime accountability layers. The risk is not merely that AI writes insecure code, but that it standardizes patterns that appear functional yet harbor structural fragility across distributed systems, such as microservices in financial clearinghouses, where failure surfaces only under rare synchronization conditions, long after deployment. Most security analyses focus on overt vulnerabilities like injection flaws and overlook how AI systematically introduces low-probability, high-impact instability through constructs that are syntactically valid but logically unsound.
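A small sketch can illustrate what "appears functional yet fails under rare synchronization conditions" means in practice. The `Inventory` class below is a toy invented for this illustration, not taken from any real system. Its unsafe method uses a check-then-act pattern that passes ordinary sequential tests. The `between_check_and_act` hook is an assumption added purely to reproduce, deterministically, an interleaving that a scheduler would produce only occasionally.

```python
import threading


class Inventory:
    """Toy stock counter; all names here are illustrative."""

    def __init__(self, stock: int) -> None:
        self.stock = stock
        self._lock = threading.Lock()

    def reserve_unsafe(self, qty: int, between_check_and_act=lambda: None) -> bool:
        # Check-then-act without a lock: correct when calls never overlap.
        if self.stock >= qty:
            between_check_and_act()  # stands in for a rare scheduler interleaving
            self.stock -= qty
            return True
        return False

    def reserve_safe(self, qty: int) -> bool:
        # Same logic, but the check and the update happen as one atomic step.
        with self._lock:
            if self.stock >= qty:
                self.stock -= qty
                return True
            return False


def simulate_interleaving() -> int:
    """Let a second reservation land between the first one's check and update."""
    inv = Inventory(stock=1)
    inv.reserve_unsafe(1, between_check_and_act=lambda: inv.reserve_unsafe(1))
    return inv.stock
```

Here `simulate_interleaving()` ends with a stock of -1: both reservations pass the check before either update is applied. A sequential test suite would never exercise this path, which is why this class of defect tends to surface only in production.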

Training Data Entropy

The entropy embedded in AI training data—specifically the inclusion of deprecated APIs, abandoned frameworks, and contradictory architectural patterns from obsolete repositories—propagates into generated code, creating hidden obsolescence pathways that accelerate technical decay. Unlike human developers who implicitly filter out defunct paradigms through professional continuity, AI models treat historical code as equally valid, leading to the reintroduction of retired vulnerabilities like insecure deserialization in Java RMI or forgotten attack surfaces in legacy .NET remoting. This reanimation of defunct but dangerous patterns occurs silently in greenfield projects at firms like regional health information exchanges, where audit trails don’t flag deprecated constructs as risks. Security debates center on present-day exploits, missing how AI resurrects dormant systemic risks by treating technological history as a flat, accessible library rather than a curated evolution.
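One way an audit step could flag such constructs is a denylist scan over the syntax tree. The sketch below uses Python's standard `ast` module and treats `pickle` and `marshal` deserialization calls as a Python analogue of the Java RMI example. The `RISKY_CALLS` table and the `find_risky_calls` function are assumptions made for illustration. A real policy would be broader and would also need to handle aliased imports, which this version does not.

```python
import ast

# Assumed, illustrative denylist: (module, function) pairs a team might flag.
RISKY_CALLS = {
    ("pickle", "loads"): "can execute arbitrary code when fed untrusted bytes",
    ("pickle", "load"): "can execute arbitrary code when fed an untrusted stream",
    ("marshal", "loads"): "not designed to be safe against malicious input",
}


def find_risky_calls(source: str) -> list[tuple[int, str, str]]:
    """Return sorted (line, call, reason) tuples for denylisted module.function calls."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        # Only matches the plain `module.function(...)` form, not aliases.
        if isinstance(func, ast.Attribute) and isinstance(func.value, ast.Name):
            key = (func.value.id, func.attr)
            if key in RISKY_CALLS:
                findings.append((node.lineno, f"{key[0]}.{key[1]}", RISKY_CALLS[key]))
    return sorted(findings)
```

Run against a generated file before merge, a check like this turns a deprecated or dangerous construct into an explicit finding rather than something the audit trail silently passes over.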

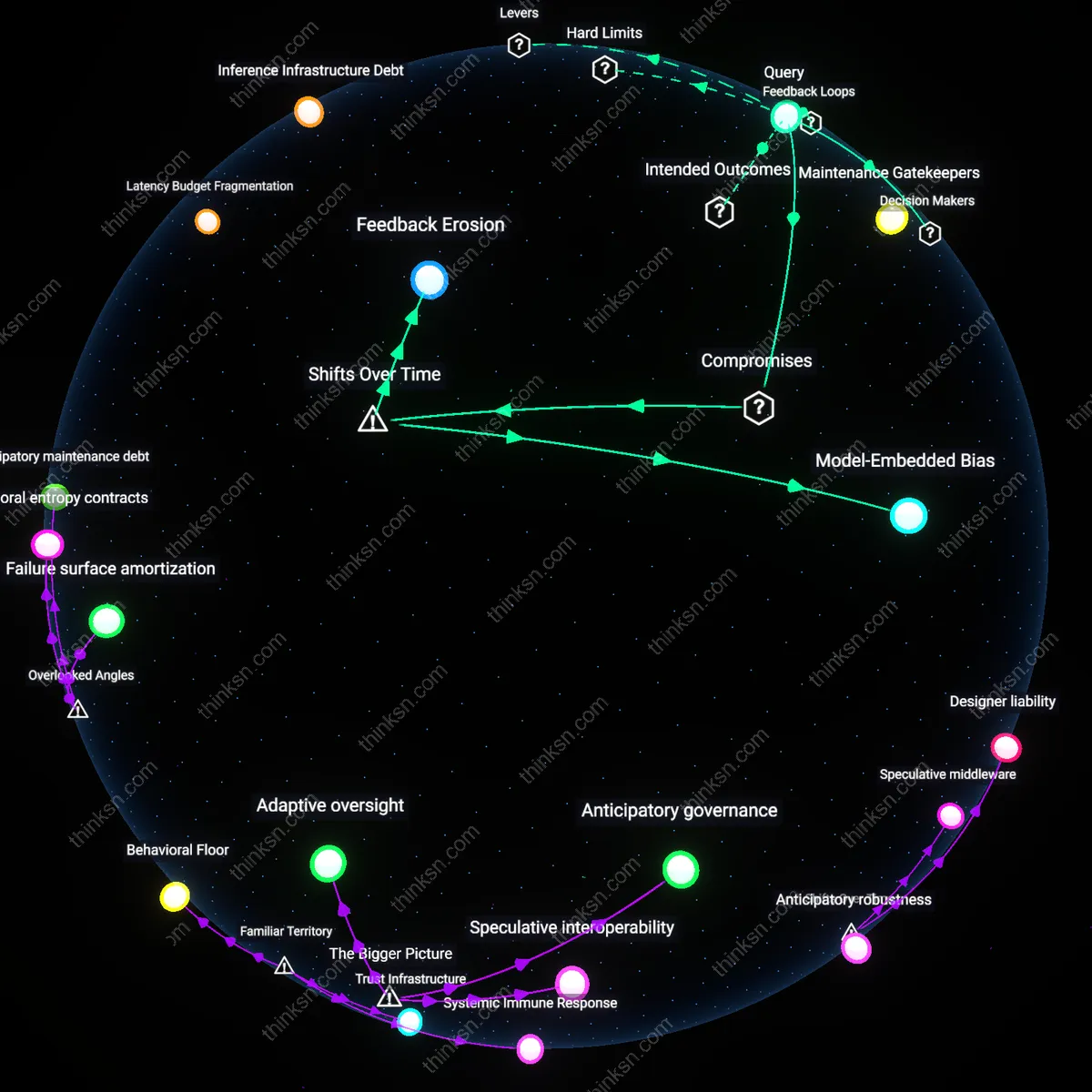

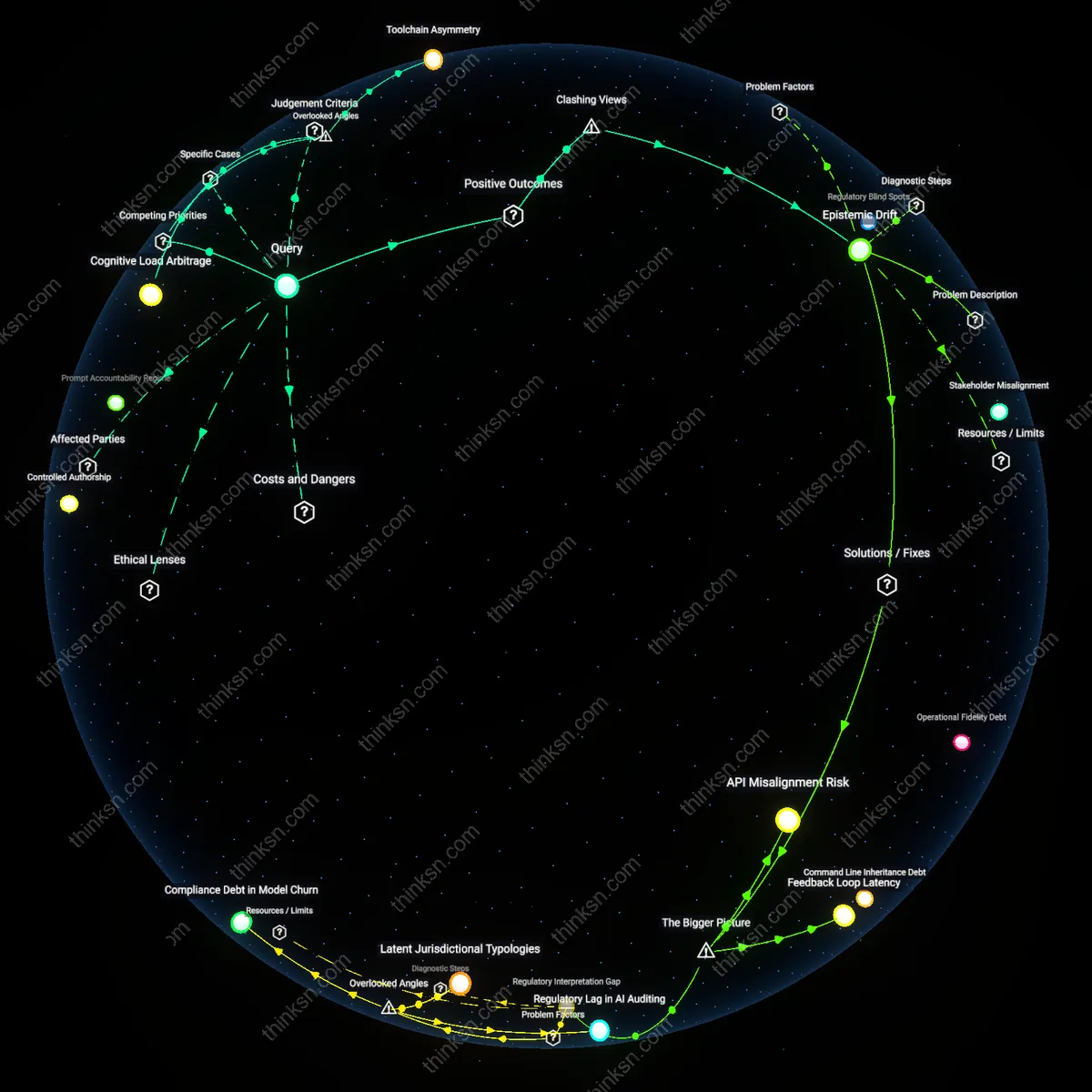

Editor Sovereignty

Real-time AI code completion undermines editor sovereignty—the implicit authority of development environments to act as neutral, deterministic tools—by transforming IDEs into persuasive agents with opaque stakeholder incentives. When autocomplete suggests entire functions or modules, it shifts the developer from author to approver, embedding third-party AI logic (e.g., GitHub Copilot’s OpenAI-trained model) directly into proprietary systems at defense contractors or energy grid operators, where supply chain policies assume tooling does not introduce behavioral code. This erosion occurs not through malice but default integration, where the editor’s role as a passive platform is replaced with an active co-developer whose decision boundaries are hidden and unregulated. Standard security risk models assume code enters systems via developers or repositories, not through the subversion of the development interface itself—a blind spot in both governance and incident forensics.

Development Velocity Entitlement

The security risk from AI-generated code does not undermine the benefits of faster development, because engineering leadership in high-velocity tech firms treats speed as a non-negotiable operational imperative and effectively insulates deployment timelines from security feedback loops. This dynamic emerges not from technical assessment but from organizational incentive structures that reward feature output over resilience, making security a downstream cost center rather than a coequal priority. It is entrenched in product-driven Silicon Valley startups, where investor expectations compress release cycles and render security reviews ceremonial. The non-obvious power asymmetry is that engineering velocity functions as a form of cultural capital that systematically overrides adversarial risk modeling. Because perceived market survival depends on motion rather than robustness, technical debt is legitimized as strategy.

Security Theater Calibration

The security risk from AI-generated code is deliberately left unmitigated not because it outweighs development speed but because the perceived threat is managed through performative compliance rather than actual hardening, as seen in regulated industries like fintech where audit-ready documentation substitutes for functional security. This dynamic operates through risk committees that require only plausible deniability of breaches, enabling leaders to claim due diligence while absorbing AI-coded vulnerabilities as insurable losses, reflecting a shift from prevention to blame deflection. The non-obvious mechanism here is that security is not sacrificed for speed but recalibrated into theater, exposing how institutional risk management prioritizes liability containment over system integrity.

Epistemic Asymmetry Exploitation

Faster development using AI-generated code persists despite security risks because early adopters in platform teams exploit an information gap between those who understand the code's provenance and those responsible for securing it, creating a de facto transfer of risk to overstretched AppSec teams who lack tooling to audit AI outputs at scale. This occurs in cloud-native enterprises where DevOps engineers deploy AI-suggested infrastructure-as-code templates without disclosure, using ambiguity to bypass review gates that would slow deployment. Security is compromised not by malicious intent but by an asymmetry in interpretive authority. The dissonance lies in framing speed as innovation when it is actually a procedural end-run enabled by differential knowledge: velocity gains are extracted from opacity, not efficiency.