Vaccine Safety Debate: Media Literacy or Platform Design?

Analysis reveals 6 key thematic connections.

Key Findings

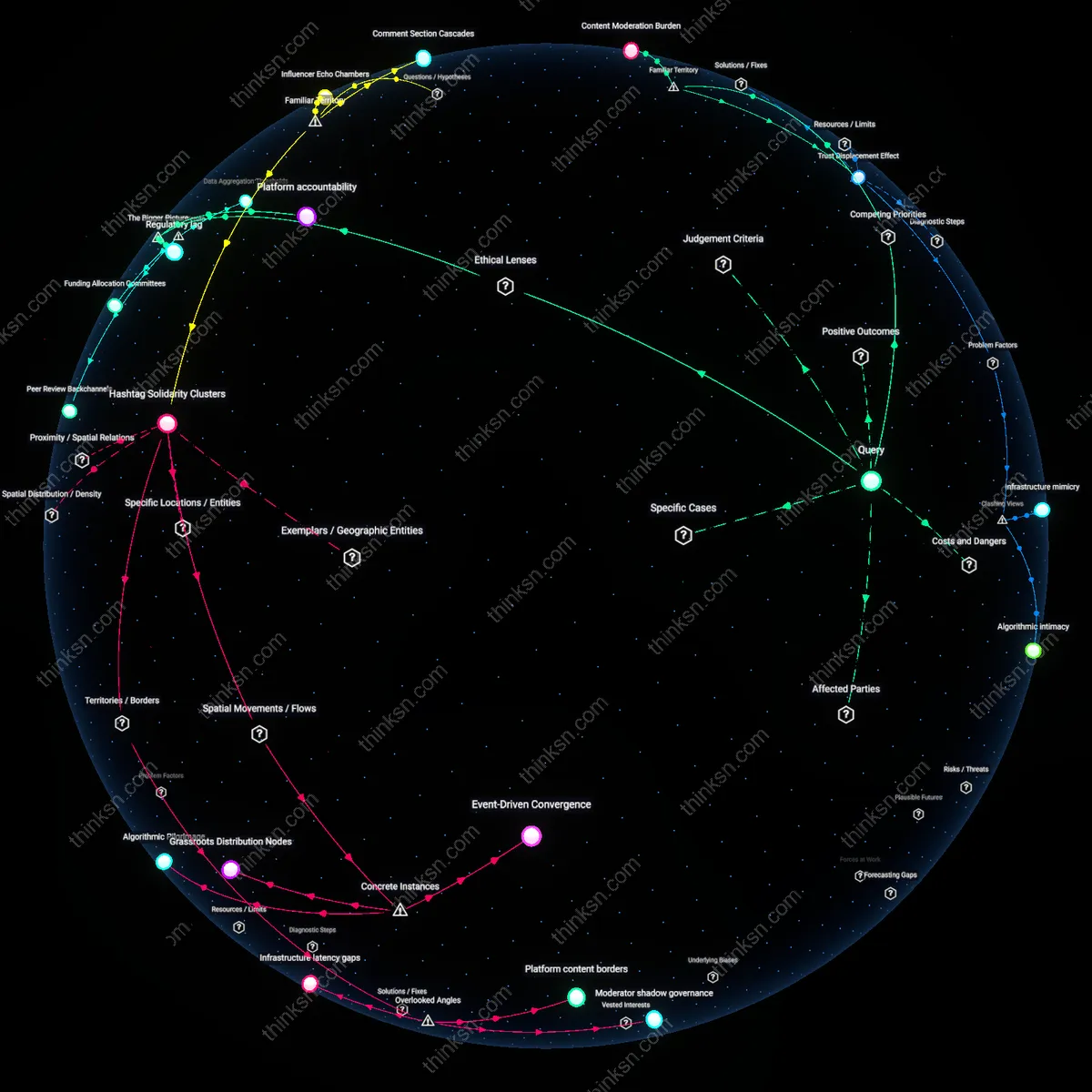

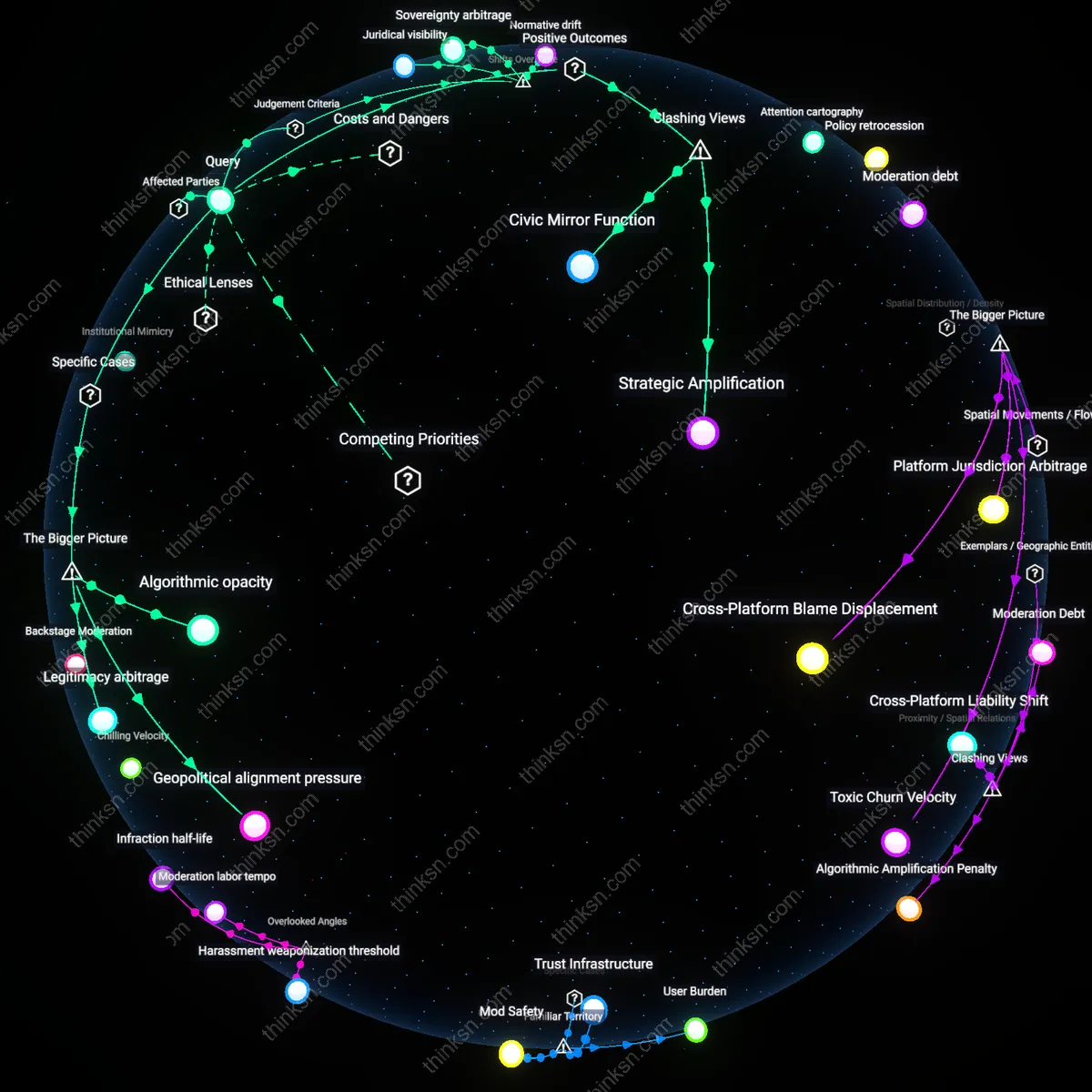

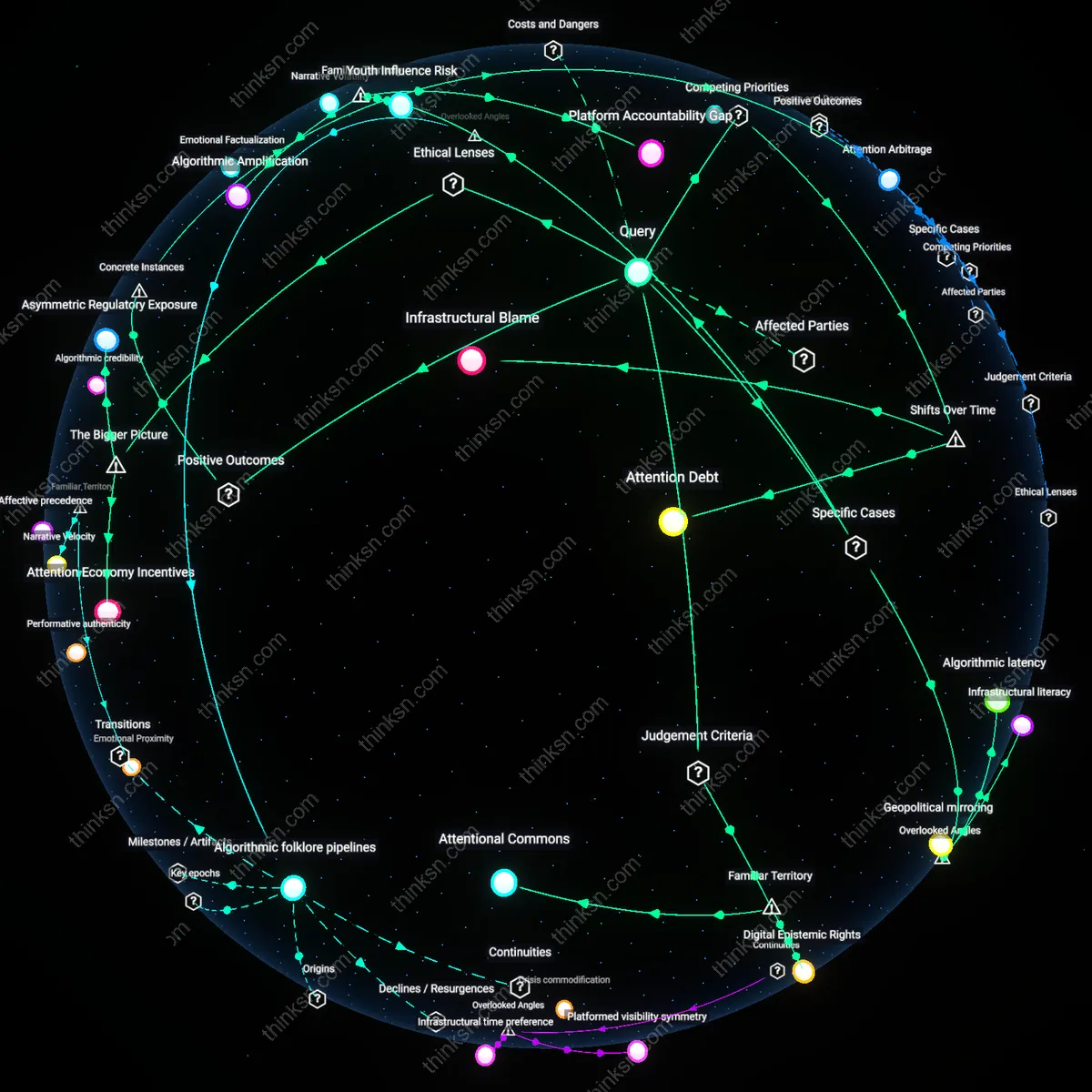

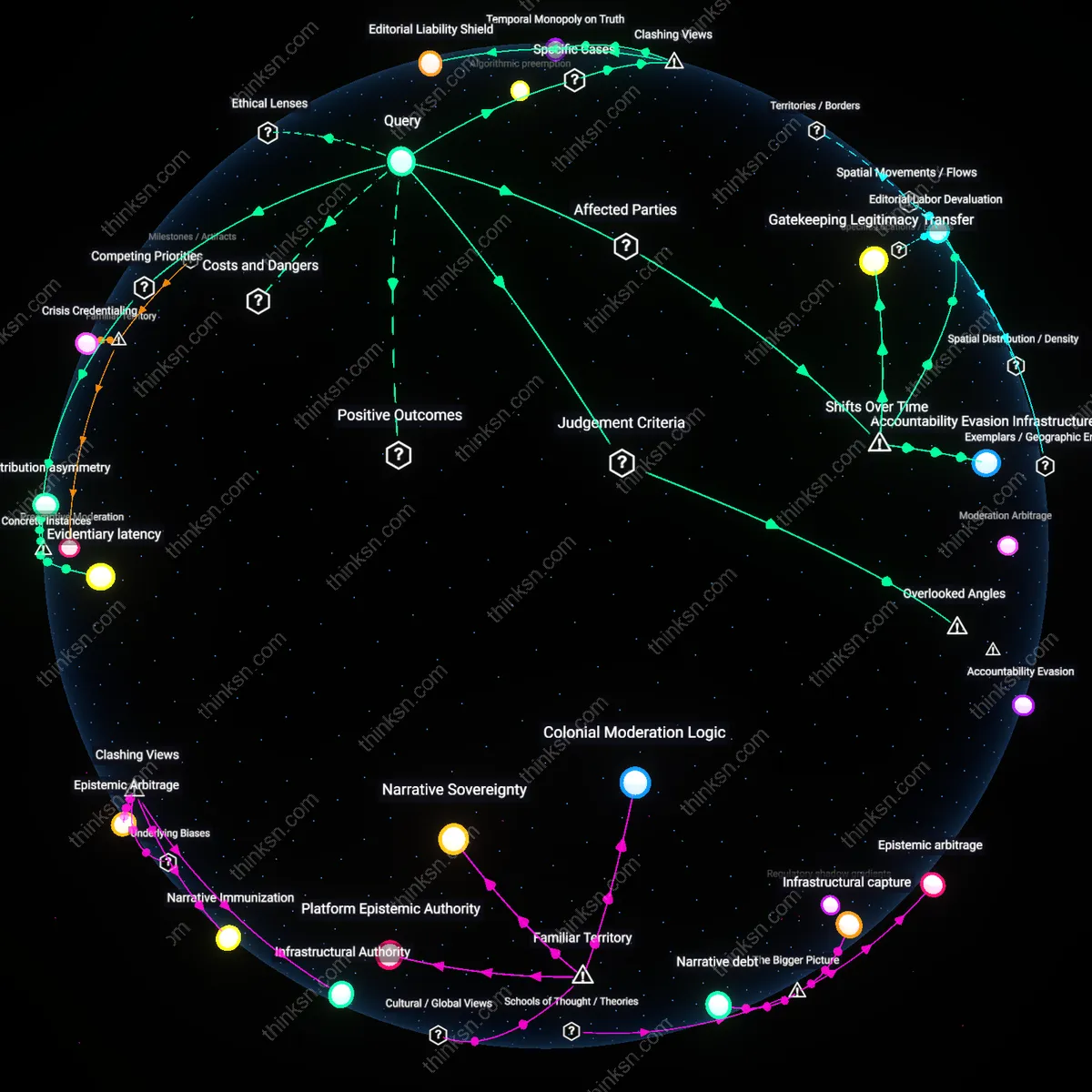

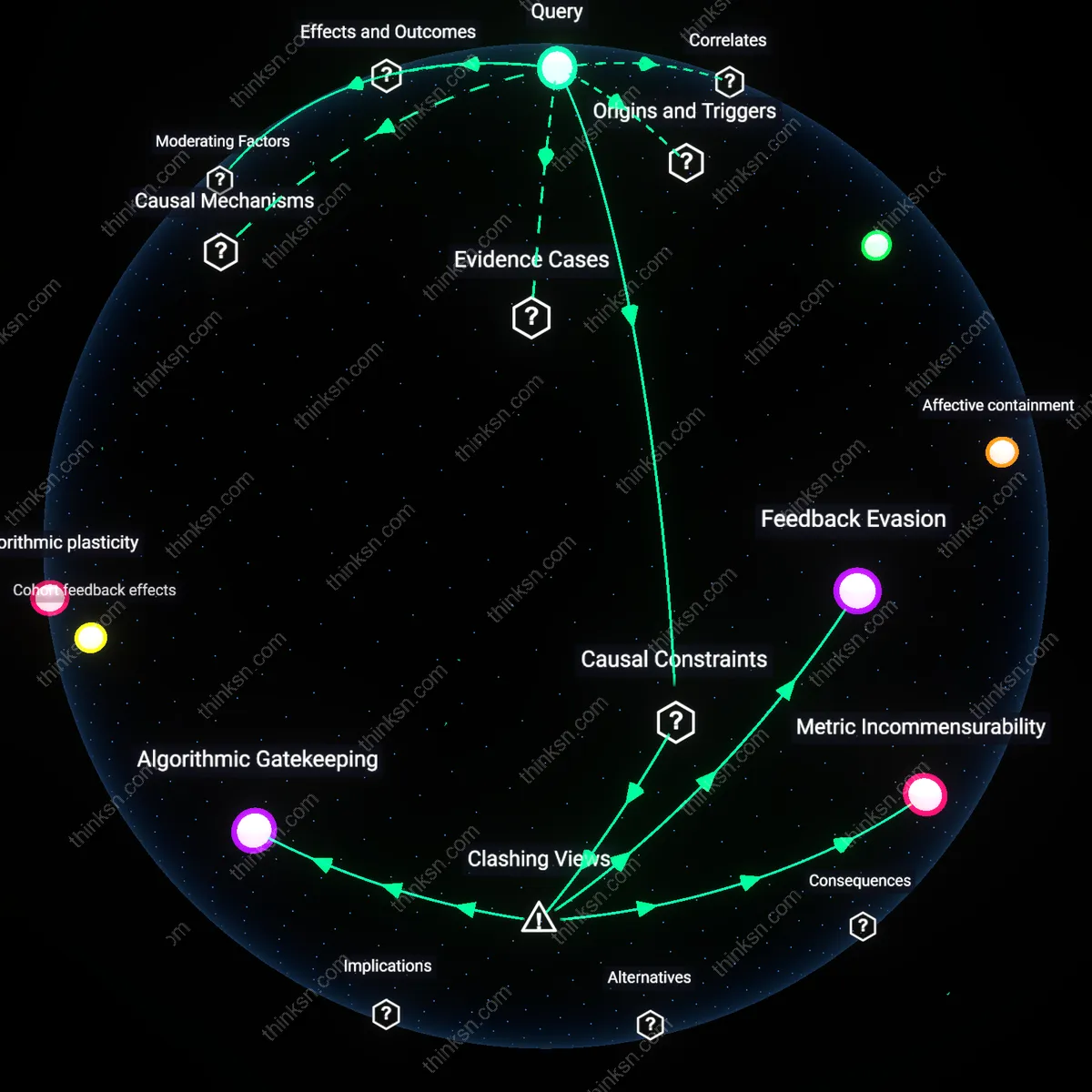

Content Moderation Burden

Platforms must bear primary responsibility because their algorithmic architectures amplify disinformation faster than users can critically evaluate it. Social media systems like Facebook or YouTube prioritize engagement through recommendation engines that reward outrage and novelty, so even media-literate individuals are systematically exposed to persuasive anti-vaccine content. The underappreciated point is that individual literacy cannot scale against automated distribution infrastructure built to capture attention rather than to support rational deliberation.

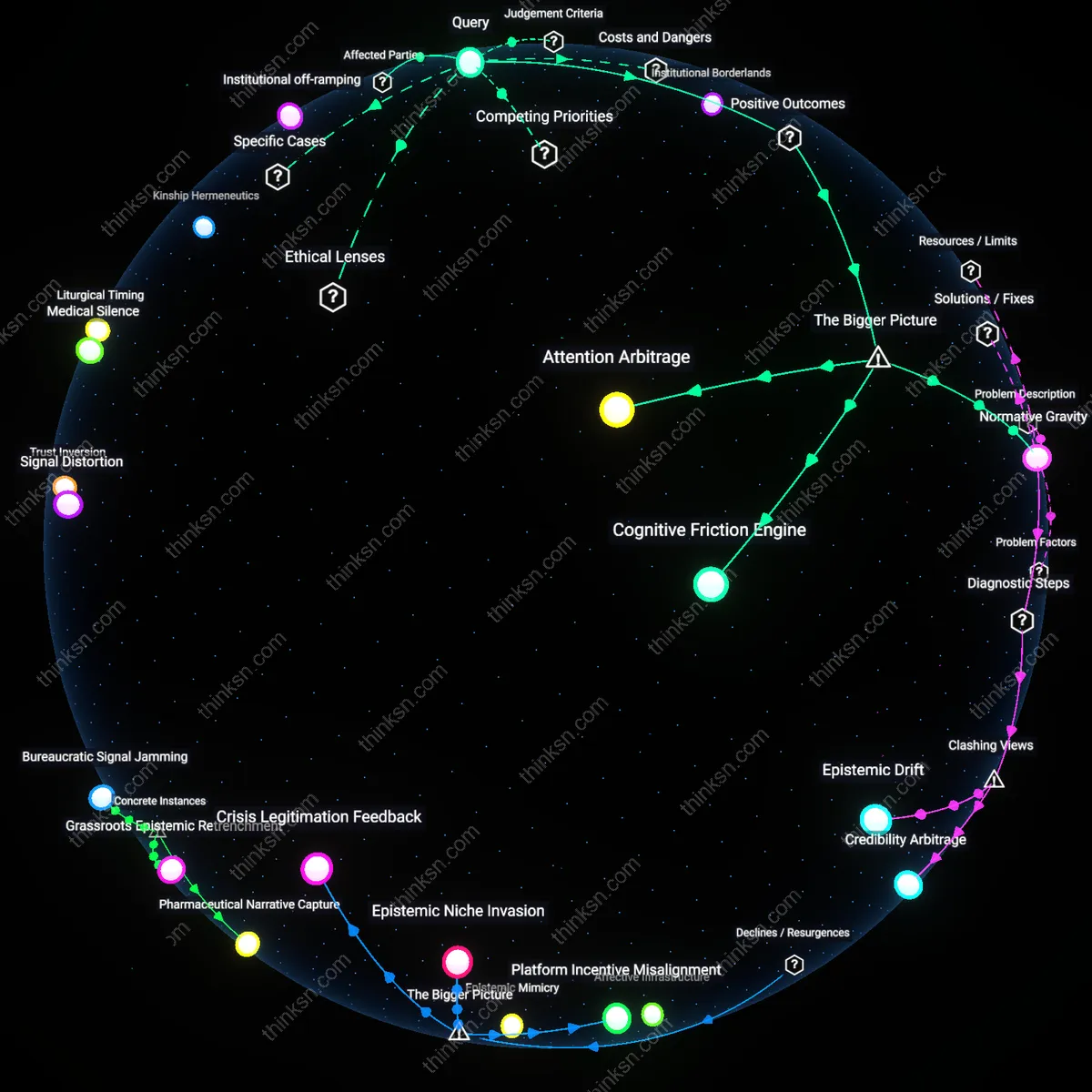

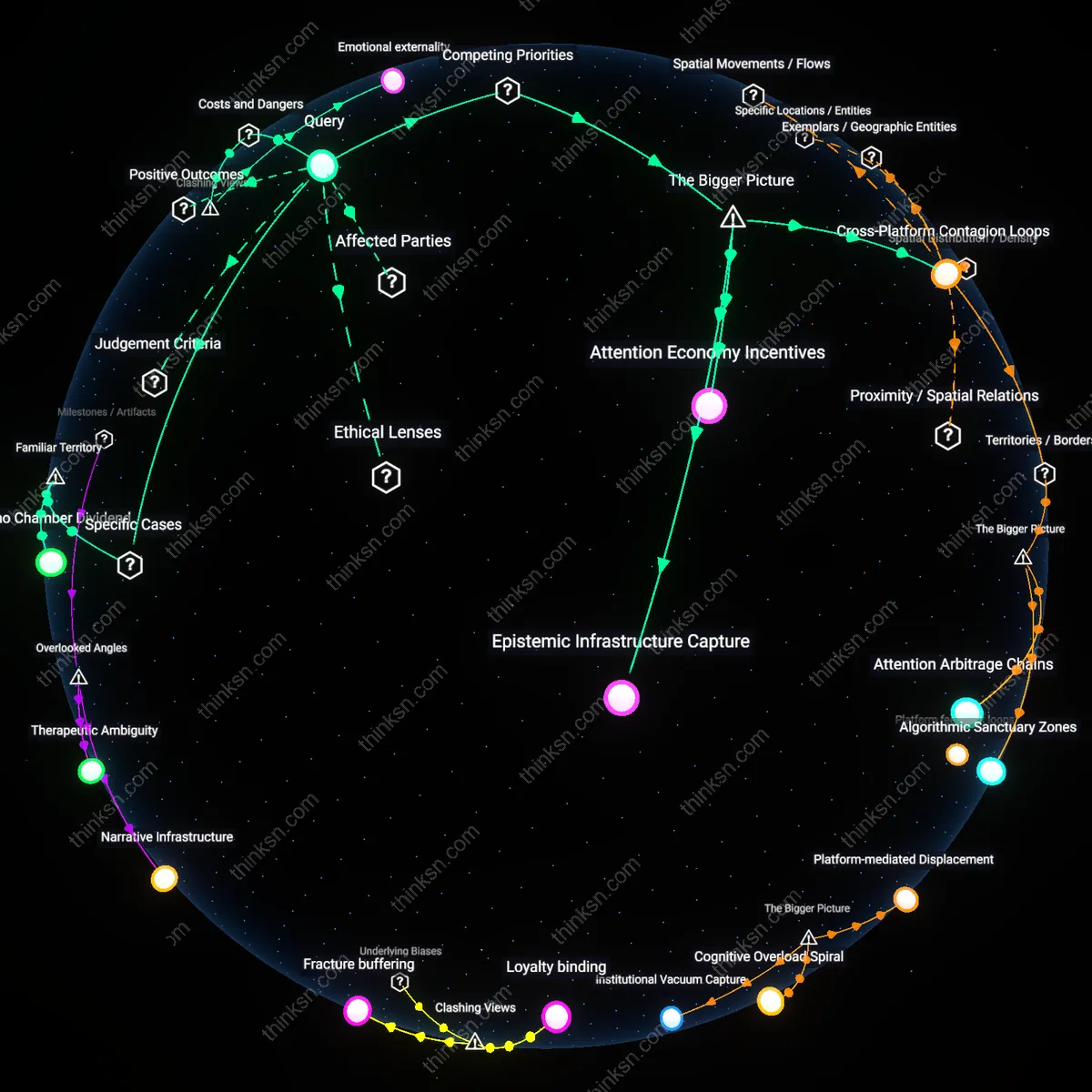

Cognitive Overload Trap

Individual media literacy fails under conditions of information saturation because people rely on heuristic cues, such as social proof or emotional resonance, when processing viral content. On platforms such as Twitter or TikTok, where pro- and anti-vaccine messages flood feeds on equal footing, users default to familiar narratives rather than evidence-based reasoning. The expectation of self-reliant discernment therefore ignores the psychological limits of ordinary cognition in engineered attention economies.

Trust Displacement Effect

When public health authorities lose credibility, social media becomes the default source of medical knowledge, shifting epistemic authority from institutions to peer networks and influencers. This dynamic, visible in measles outbreaks linked to Facebook parenting groups, shows that platform design enables the decentralization of expertise. Literacy becomes irrelevant when the very sources people trust are embedded within closed loops of affirmation, a consequence rarely acknowledged in mainstream debates about online safety.

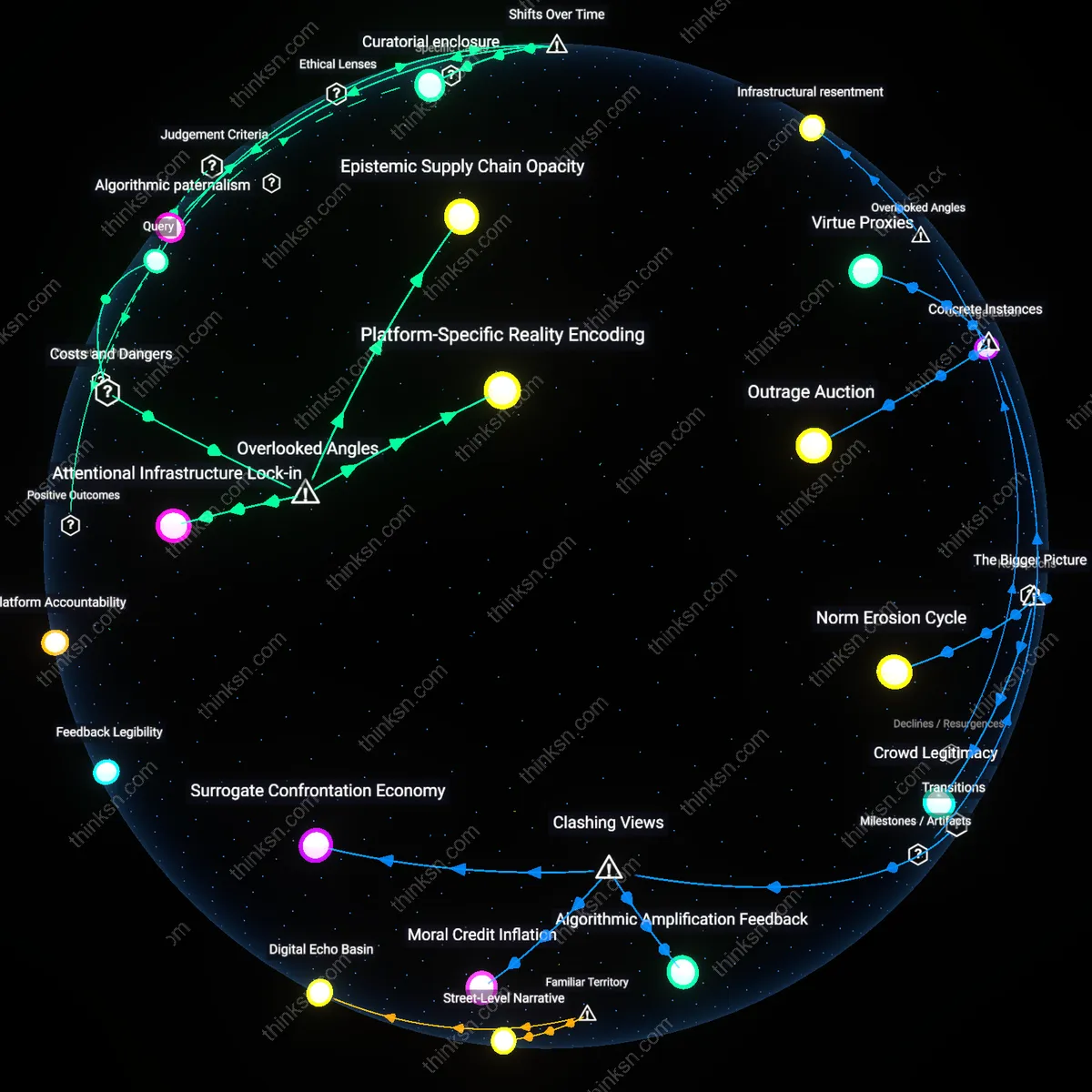

Platform Accountability

Responsibility should fall on platform design because social media architectures rely on engagement-driven algorithms that amplify emotionally charged content, including anti-vaccine disinformation, over scientifically accurate but less viral material. Ad-based revenue models sustain this mechanism by rewarding prolonged user attention, which makes platforms structurally complicit in the spread of harmful misinformation. The non-obvious consequence is that individual media literacy is systematically overwhelmed by a designed information environment it cannot counteract, which shifts ethical responsibility toward the institutions that shape that environment. The argument rests on the interplay between algorithmic curation, corporate incentives, and the erosion of epistemic public goods.

Epistemic Burden

Responsibility should fall on individual media literacy only when robust civic education systems exist to foster critical engagement with scientific evidence. In practice, placing responsibility there imposes an unjust epistemic burden on users under conditions of information asymmetry. Most individuals lack access to primary scientific literature or training in epidemiological reasoning, so they rely on heuristic cues, which disinformation campaigns often exploit by mimicking expert discourse. The non-obvious systemic failure is that expecting individuals to reliably discern truth in a weaponized information ecosystem ignores this deliberate mimicry of scientific debate by bad actors. The burden is structurally unfair because it demands expert-level analysis from laypersons in a context engineered to confuse.

Regulatory Lag

Responsibility falls into the gap created by regulatory lag: digital platforms operate under outdated legal frameworks that treat them as neutral intermediaries rather than publishers with editorial responsibility. This treatment, rooted in statutes like Section 230 of the Communications Decency Act in the U.S., lets platforms avoid liability for harmful user content even though they exercise editorial power through algorithmic curation. The non-obvious systemic dynamic is that legal immunity has become a structural enabler of disinformation ecosystems, allowing platforms to benefit from scientific controversy without bearing its public health costs. What sustains this condition is the misalignment between laws written for an earlier internet and the operational realities of digital influence at scale.