When Inconsistent, Do Community Standards Favor Certain Political Views?

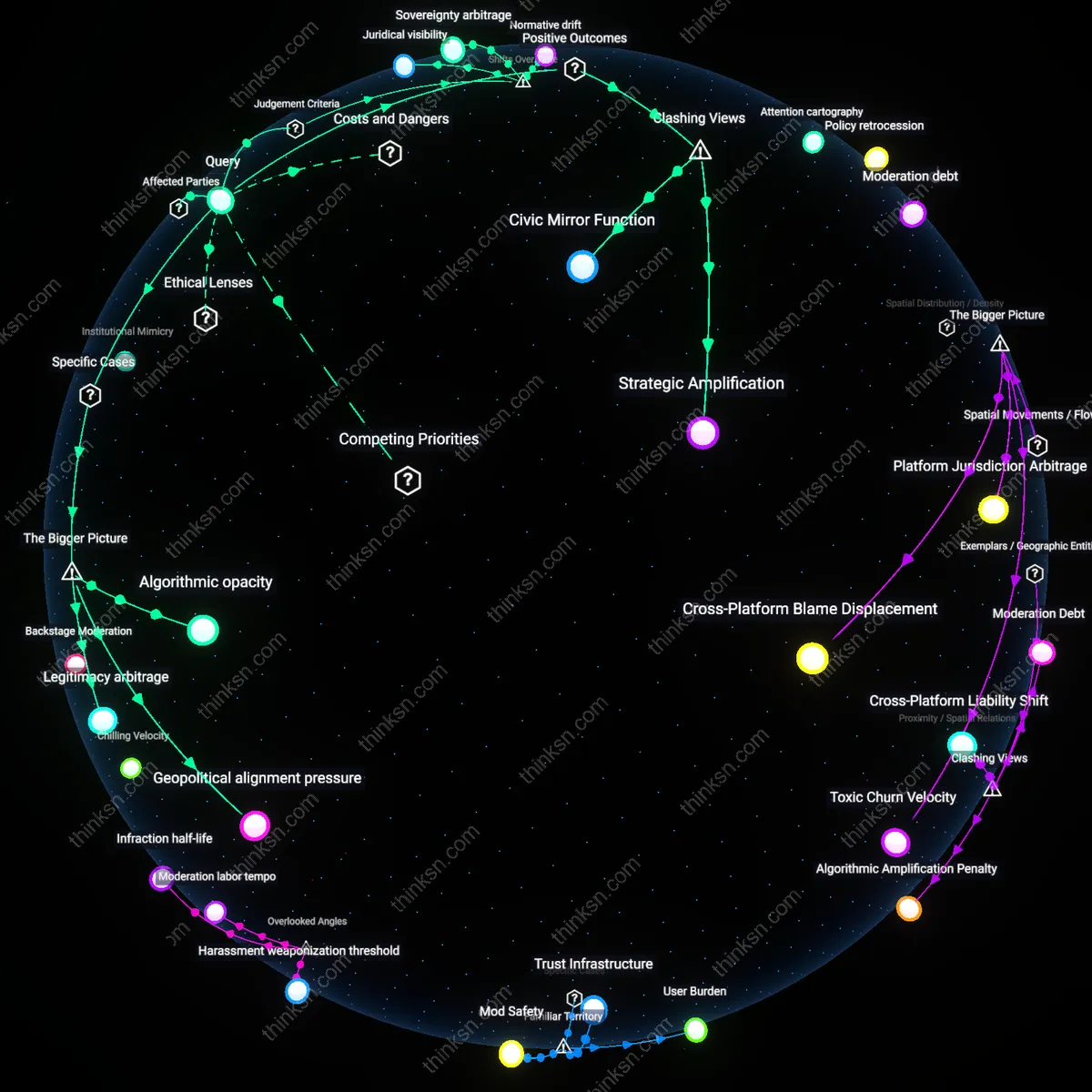

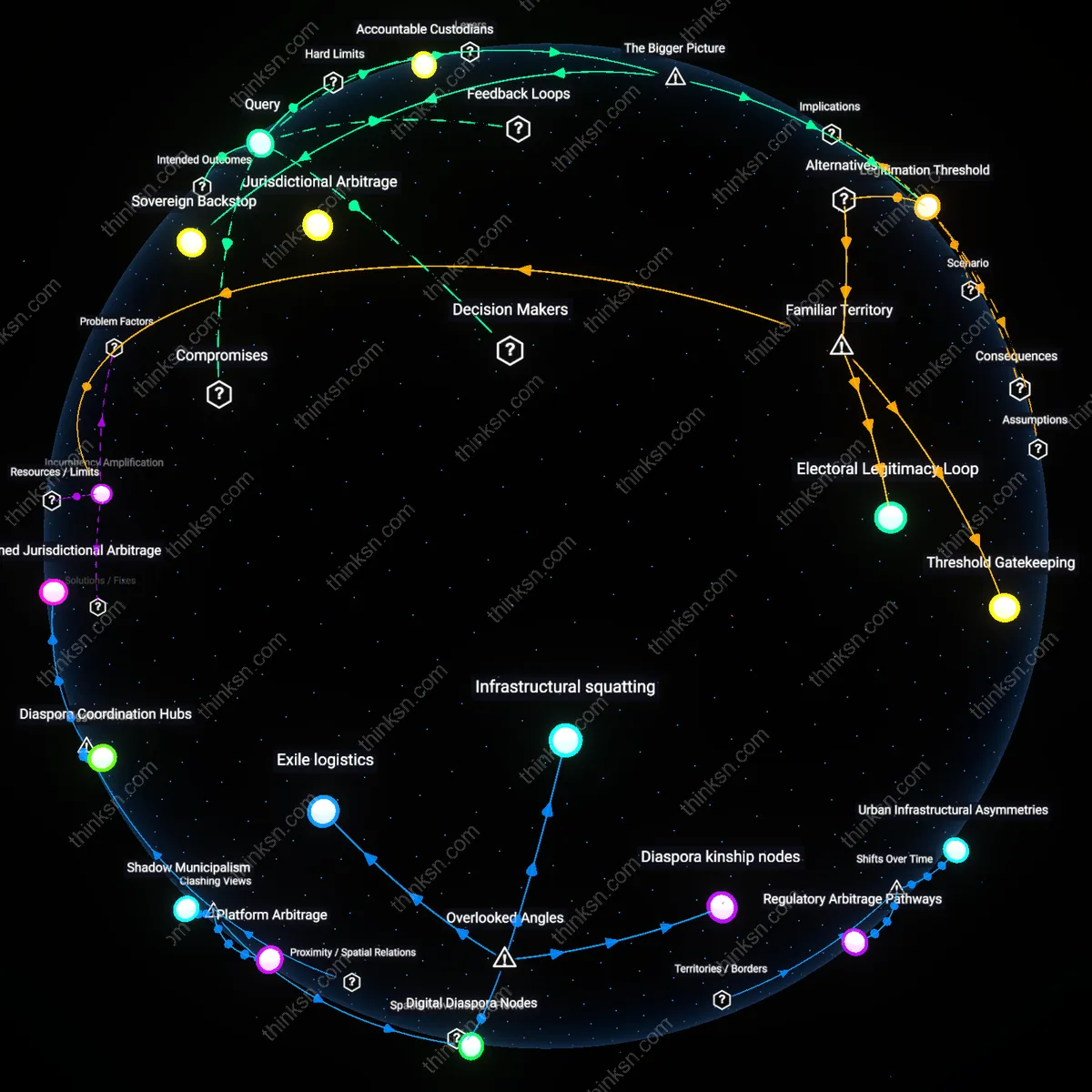

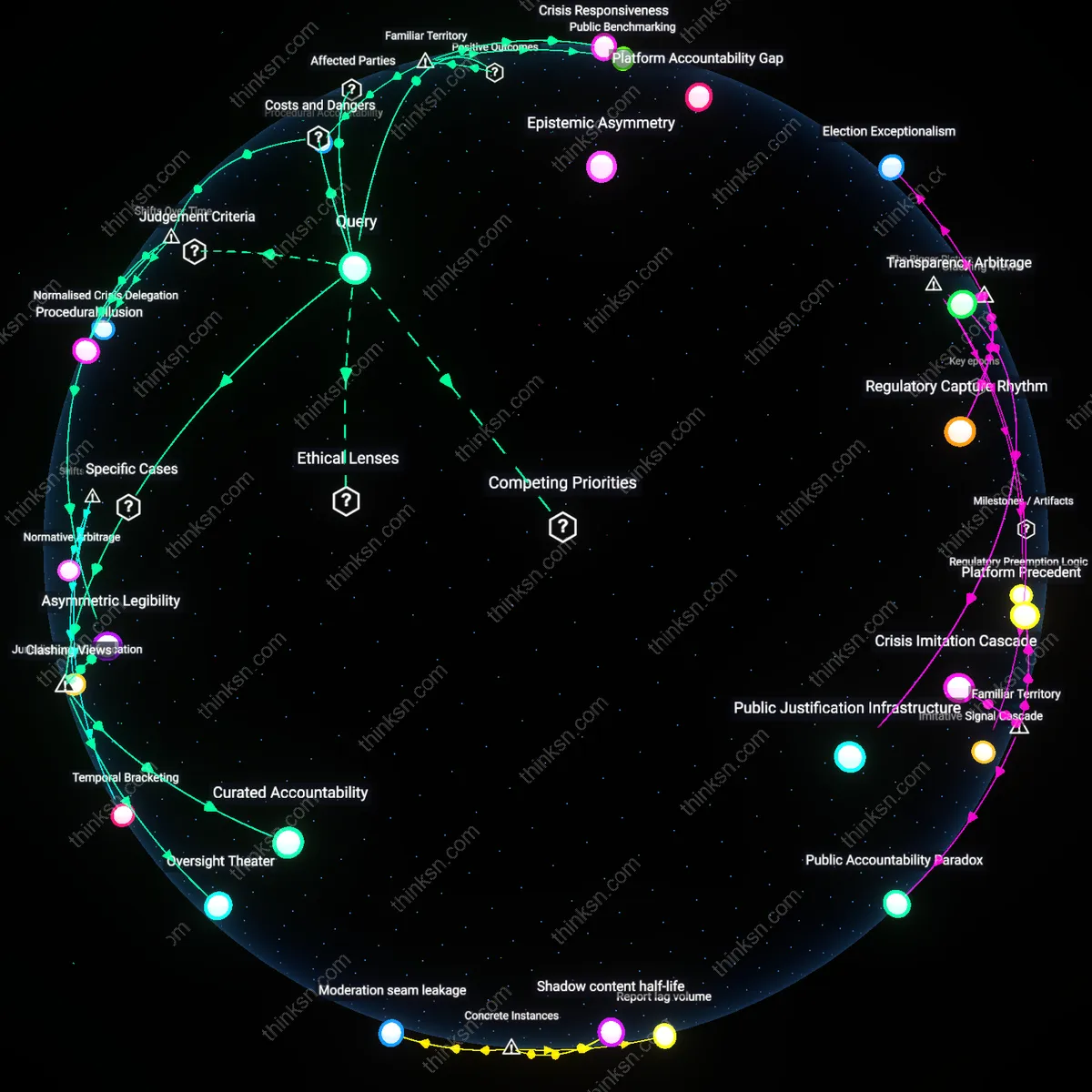

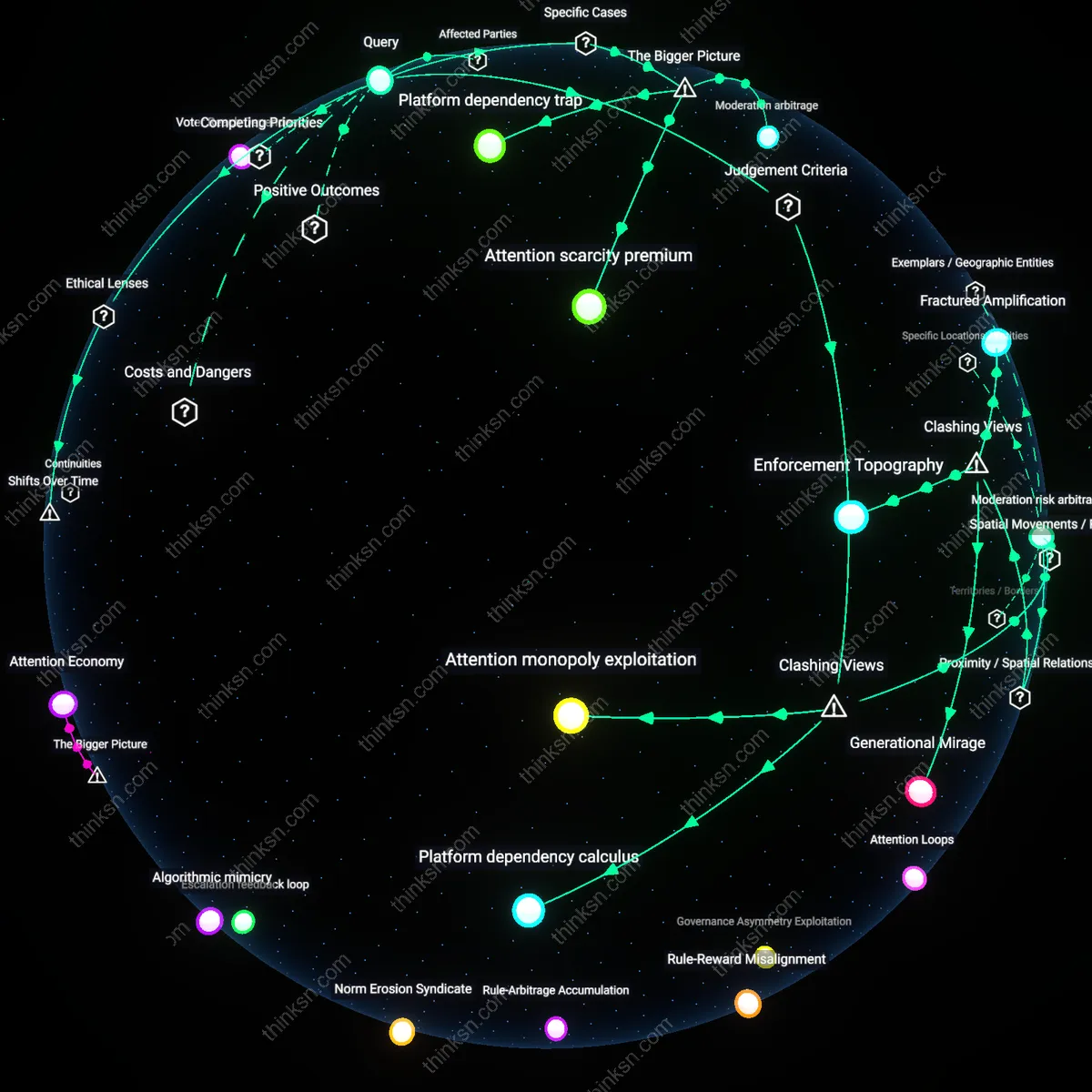

Analysis reveals 11 key thematic connections.

Key Findings

Discursive Vulnerability

Citizens should interpret inconsistent enforcement of platform rules as a mechanism of selective marginalization. Facebook's treatment of Indigenous activists in Canada is one example: activists were silenced for using traditional terms that moderators deemed offensive, while hate speech from settler groups remained unmoderated. The case shows how platform moderation often reproduces colonial power dynamics under neutral policy language. It also places a disproportionate share of the labor of appeal and explanation on marginalized communities, a pattern rarely acknowledged in mainstream discourse on free speech online.

Institutional Mimicry

Citizens should respond to erratic content moderation by recognizing it as a form of regulatory evasion. During Brazil's 2018 election, YouTube enforced its misinformation policies inconsistently: far-right figures spreading false claims faced minimal action, while left-wing politicians were swiftly penalized. This asymmetry did not stem from algorithmic error. It arose because platform actors mimicked state power selectively, acting to avoid political backlash without establishing consistent accountability. The case exposes how private platforms perform governance without being bound by its norms.

Norm Entrepreneurship

Citizens should interpret uneven enforcement as an opening for civic coalition-building. In 2020, the #StopToxicModeration campaign, led by African American Reddit users, successfully pressured the platform to audit subreddit policies after years of tolerating racially hostile communities. The change came not through top-down reform but through persistent, visible organizing that redefined what counted as harm. It shows how targeted user resistance can exploit enforcement inconsistencies to demand institutional transparency and, in doing so, reshape platform norms.

Juridical Visibility

Citizens should treat inconsistent enforcement of platform community standards as a signal to demand procedural transparency. The shift from state-led regulation in the 1990s to platform-governed speech environments after the 2016 election cycle revealed that rules are now applied through opaque moderation infrastructures staffed by contract labor and automated classifiers. This arrangement replaces legal due process with operational expediency. Enforcement appears arbitrary, but it is in fact shaped by geopolitical moderation hubs, such as those in Manila or Nairobi, where regional political sensitivities are unevenly folded into global policies. The non-obvious consequence of this shift is that citizens now depend on discretionary acts of visibility (appeals, leaks, or whistleblower reports) not to access justice but to produce juridical visibility within a system designed to suppress it.

Normative Drift

Citizens should respond to inconsistent enforcement by recognizing it as evidence of normative drift. During the early period of internet governance (1995–2005), platforms deferred to user communities to define acceptable speech; the post-2012 monetization turn instead embedded content moderation within engagement-driven business models. This shift aligned community standards with algorithmic amplification systems that privilege attention retention over consistency, enabling political actors to exploit enforcement gaps by testing and adapting their messaging until it slips beneath detection thresholds. The underappreciated dynamic is that inconsistency is no longer a failure of policy but a structural outcome of platforms learning from user behavior. Norms are not so much enforced as continuously reshaped through evasion, producing normative drift rather than deviation.

Sovereignty Arbitrage

Citizens should interpret politically inconsistent enforcement as a form of sovereignty arbitrage. The post-2010 expansion of platforms into markets with assertive or illiberal state control over online speech, such as India, Turkey, and Brazil, has led them to calibrate moderation policies strategically: they align with the most repressive regulatory demands while maintaining a Western democratic appearance. This calibration operates through localized content-takedown units and jurisdiction-specific AI training sets, which let platforms enforce global standards selectively according to state pressure and turn political inconsistency into a competitive survival tactic. The overlooked reality is that this shift, from neutral intermediary to geopolitically segmented regulator, has dissolved the myth of uniform enforcement. In its place is sovereignty arbitrage, where compliance depends less on ethics than on jurisdictional cost-benefit analysis.

Strategic Amplification

Citizens should exploit politically inconsistent enforcement of platform community standards to amplify marginalized narratives, strategically violating rules in ways that expose double standards. When activists deliberately post content that mirrors speech removed from an opposing ideological camp, using identical framing with only the political target reversed, they can trigger selective moderation that reveals asymmetrical enforcement. This happens because platform moderators rely on context-dependent ideological heuristics rather than neutral rules, which makes the political valence embedded in content governance visible. The tactic works because platforms operate as reactive institutions that prioritize reputational risk over consistency, and the resulting disparities generate public evidence of bias that civil society actors can use to demand structural reform. The non-obvious insight is that inconsistency is not merely a flaw to be corrected but a resource for political dramaturgy that can delegitimize opaque regulatory power.

Civic Mirror Function

Citizens should interpret inconsistent enforcement not as a regulatory failure but as performing a civic mirror function, reflecting the unresolved political fractures within liberal democracies themselves. When platforms remove hate speech in Germany to comply with NetzDG but permit similar rhetoric in the U.S. in keeping with First Amendment norms, they absorb national legal and cultural conflicts into their moderation systems, making visible how little consensus democracies share on the boundaries of acceptable speech. This happens because platforms act as transnational intermediaries that codify local political settlements into global rules, so enforcement gaps become diagnostics of societal division rather than technical flaws. The underappreciated reality is that platform inconsistency does not corrupt public discourse; it exposes the myth of a unified public, revealing instead the competing moral geographies that democratic systems fail to reconcile.

Algorithmic Opacity

Citizens should treat politically inconsistent enforcement of platform community standards as evidence of algorithmic opacity that protects platforms from accountability. Moderation systems at companies like Meta and X rely on proprietary AI models whose decision rules are inaccessible to external auditors, enabling selective enforcement—such as the disproportionate removal of Palestinian solidarity content compared to similar Israeli state-affiliated posts—under the cover of technical neutrality. This opacity is sustained by legal protections like Section 230 and a lack of mandatory transparency frameworks, allowing platforms to appear compliant while embedding political biases in unreviewable code. The underappreciated reality is that the inconsistency is not a failure of policy implementation but a designed feature that preserves operational discretion under legal and geopolitical pressure.

Geopolitical Alignment Pressure

Citizens should interpret inconsistent enforcement as a symptom of geopolitical alignment pressure that shapes content governance in strategic markets. For example, YouTube has been observed to demonetize or suppress content related to Uyghur persecution in Xinjiang while permitting Chinese state media to amplify disinformation about Western democracies, reflecting compliance with Beijing’s extraterritorial censorship demands. This dynamic emerges not from formal policy but from platforms’ dependency on market access and infrastructure in authoritarian states, which creates an invisible enforcement gradient aligned with great power influence. The key insight is that political inconsistency is not random but systematically correlates with platforms’ economic exposure to state coercion, revealing content moderation as an arena of digital realpolitik.

Legitimacy Arbitrage

Citizens should respond to inconsistent enforcement by recognizing platforms’ use of legitimacy arbitrage, where companies selectively invoke democratic norms or free expression to justify enforcement actions that primarily serve investor and state interests. When Twitter under Elon Musk reinstated accounts like Donald Trump’s while keeping others banned, it framed the move as a commitment to free speech, but applied the principle asymmetrically to consolidate user growth and political favor in the U.S. This selective moral framing exploits public trust in normative language while masking instrumental decision-making driven by audience capture and regulatory avoidance. The deeper mechanism is that platforms monetize perceived neutrality, allowing them to shift enforcement standards without losing systemic legitimacy—because there is no independent referent for consistency beyond brand survival.