Do TikTok Challenges About Climate Change Call for Tighter Moderation?

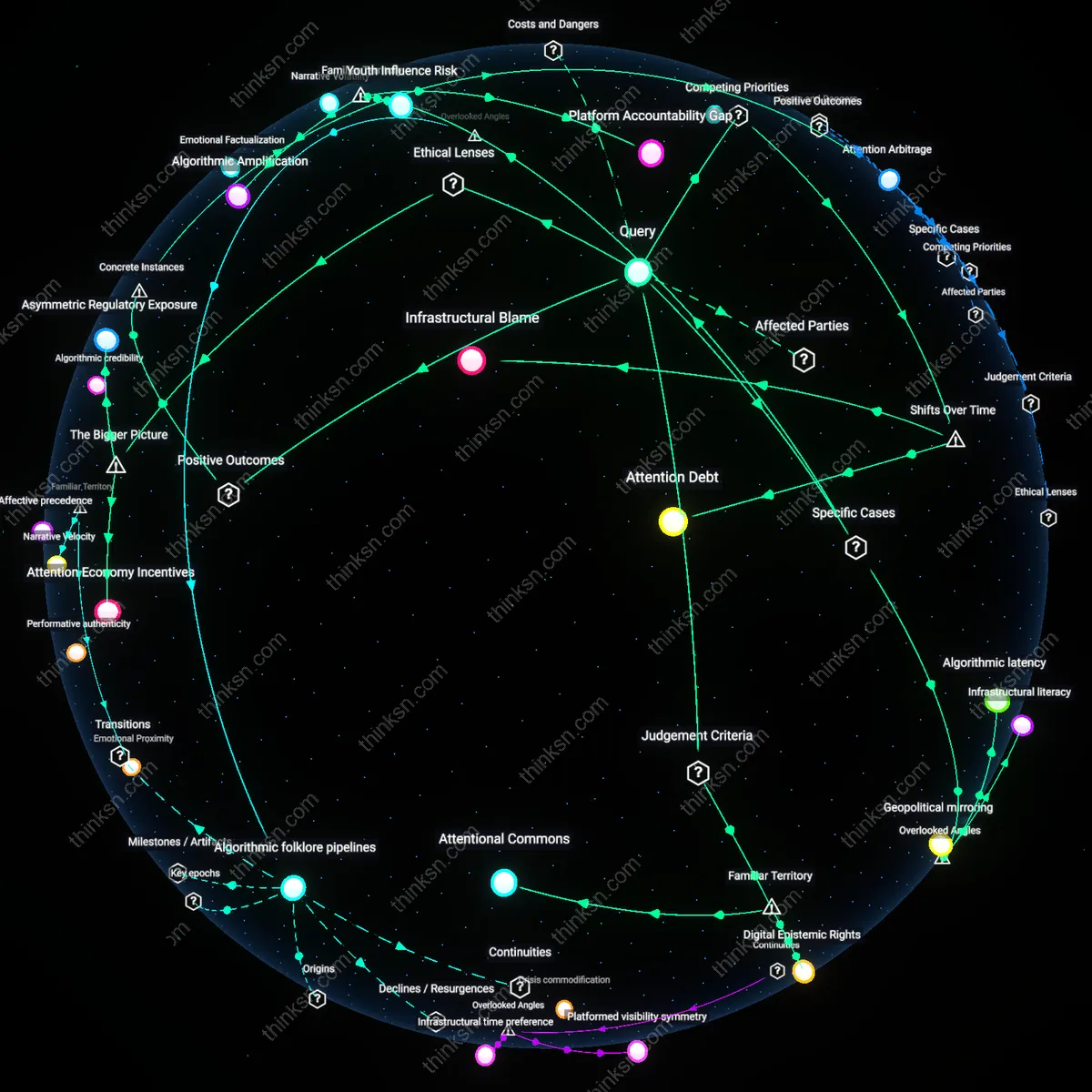

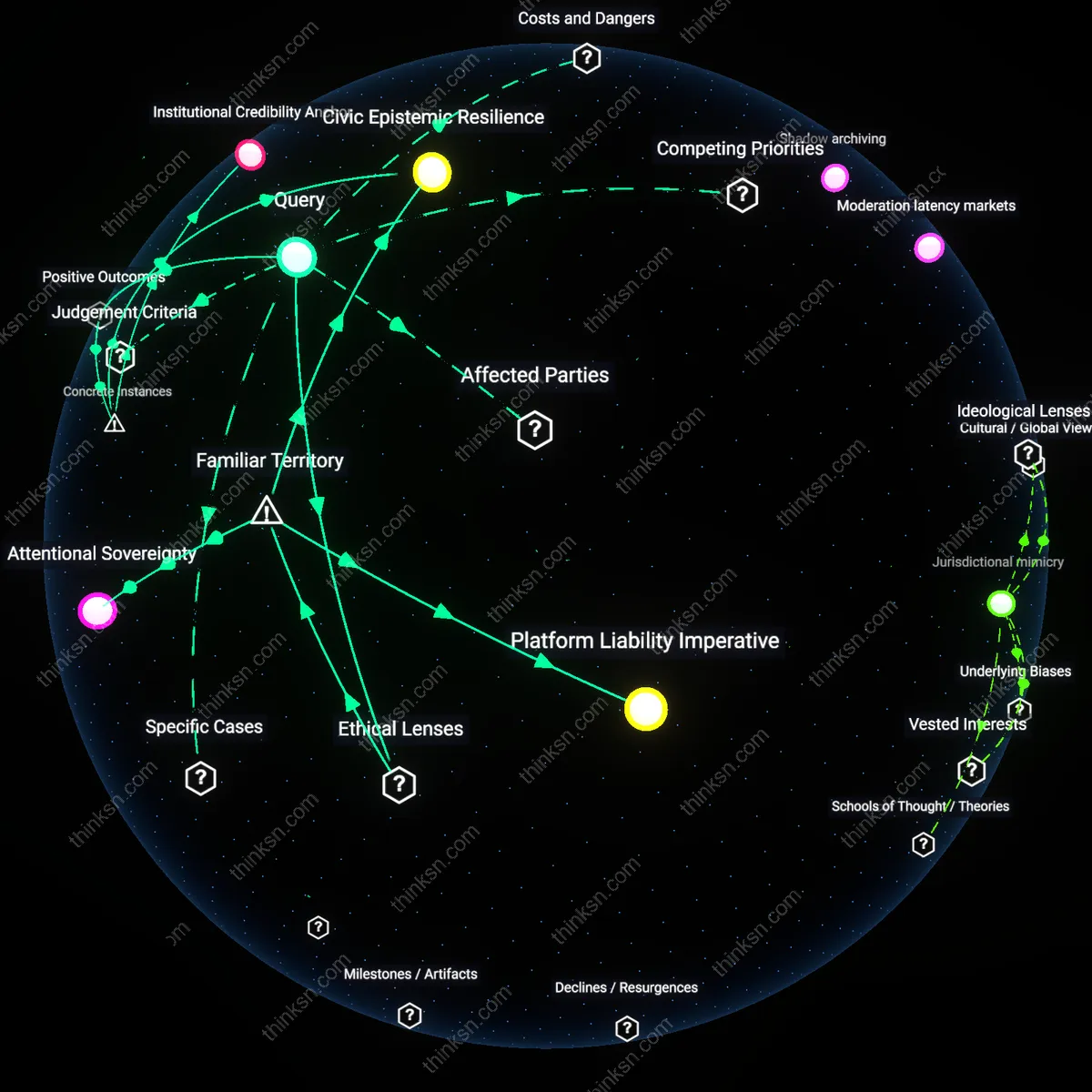

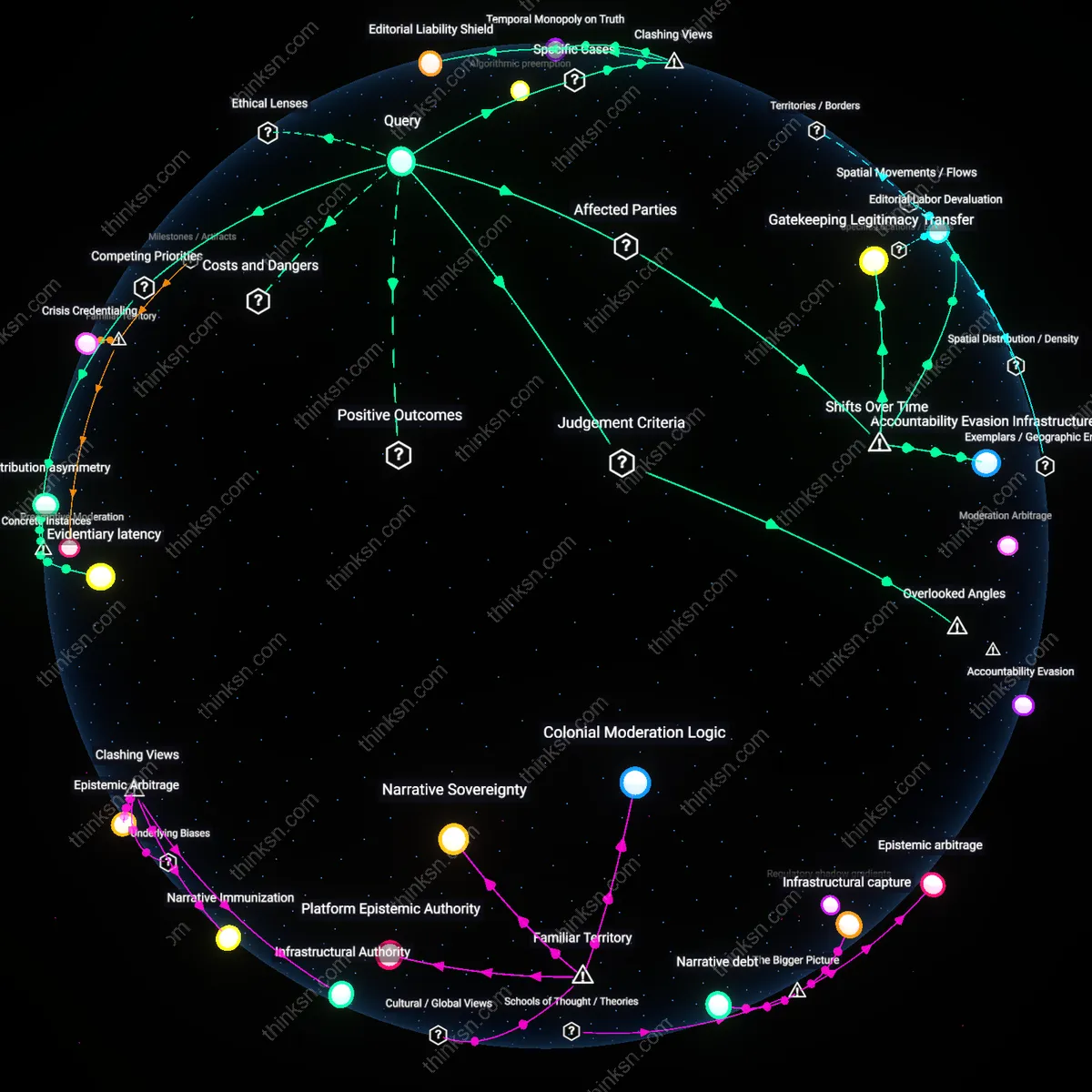

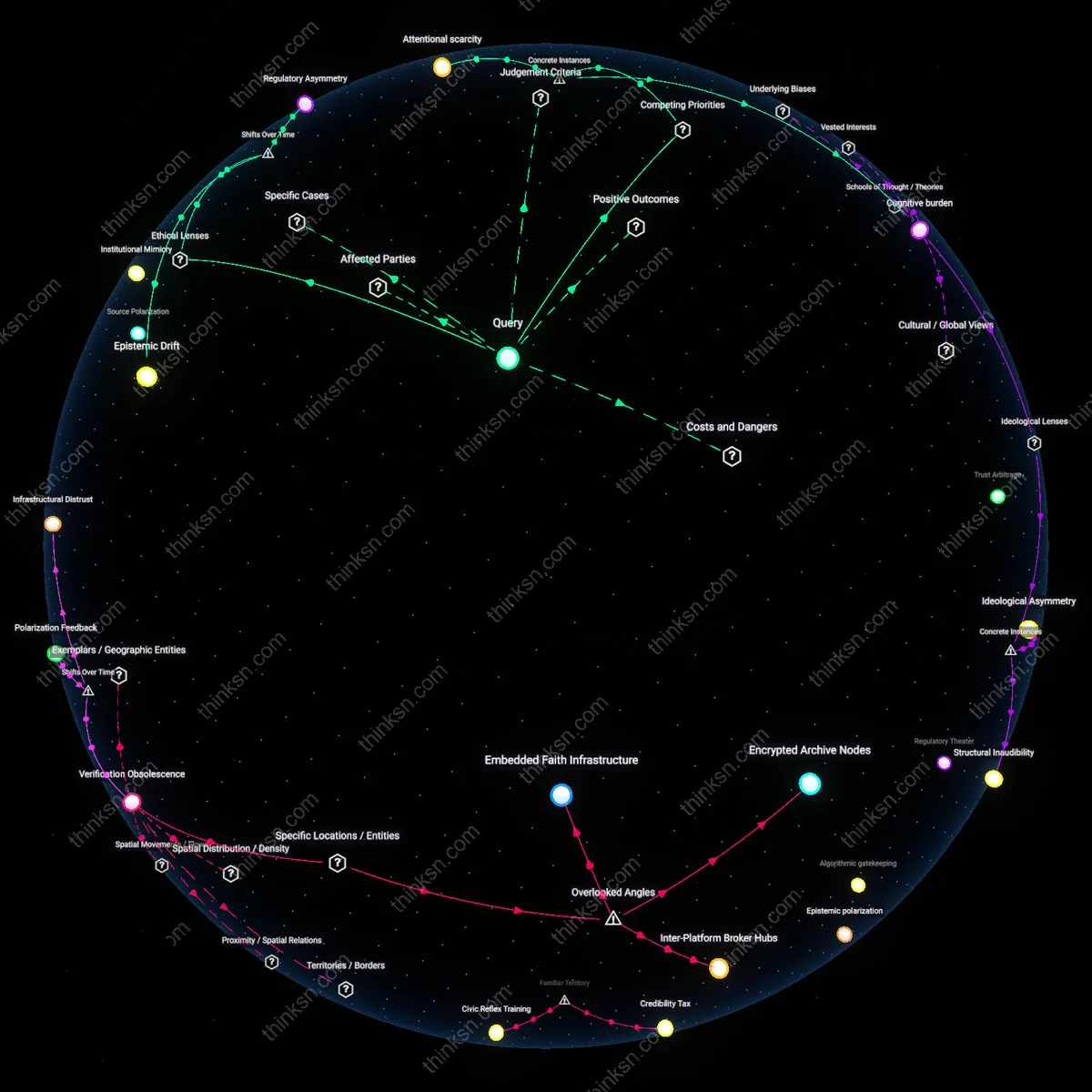

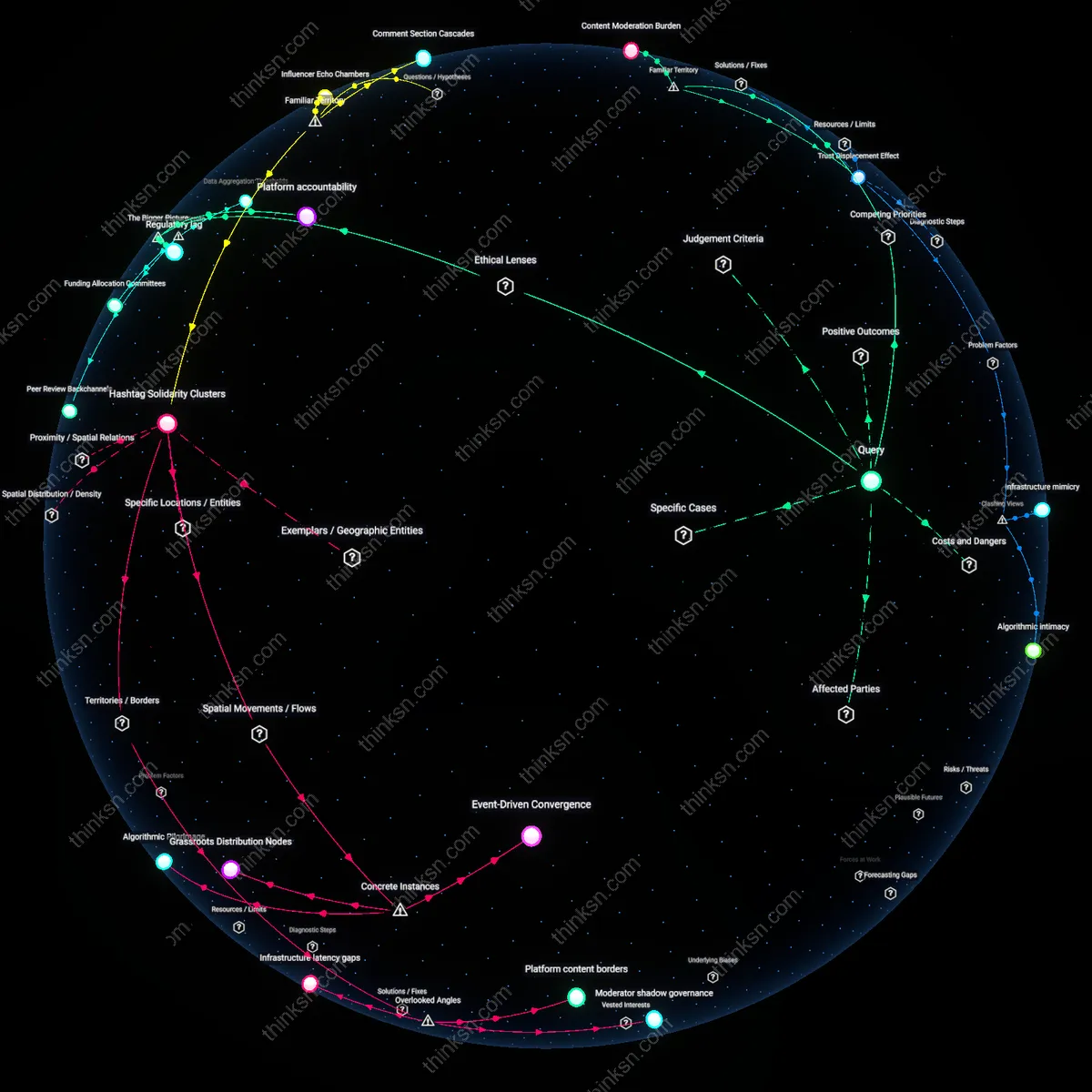

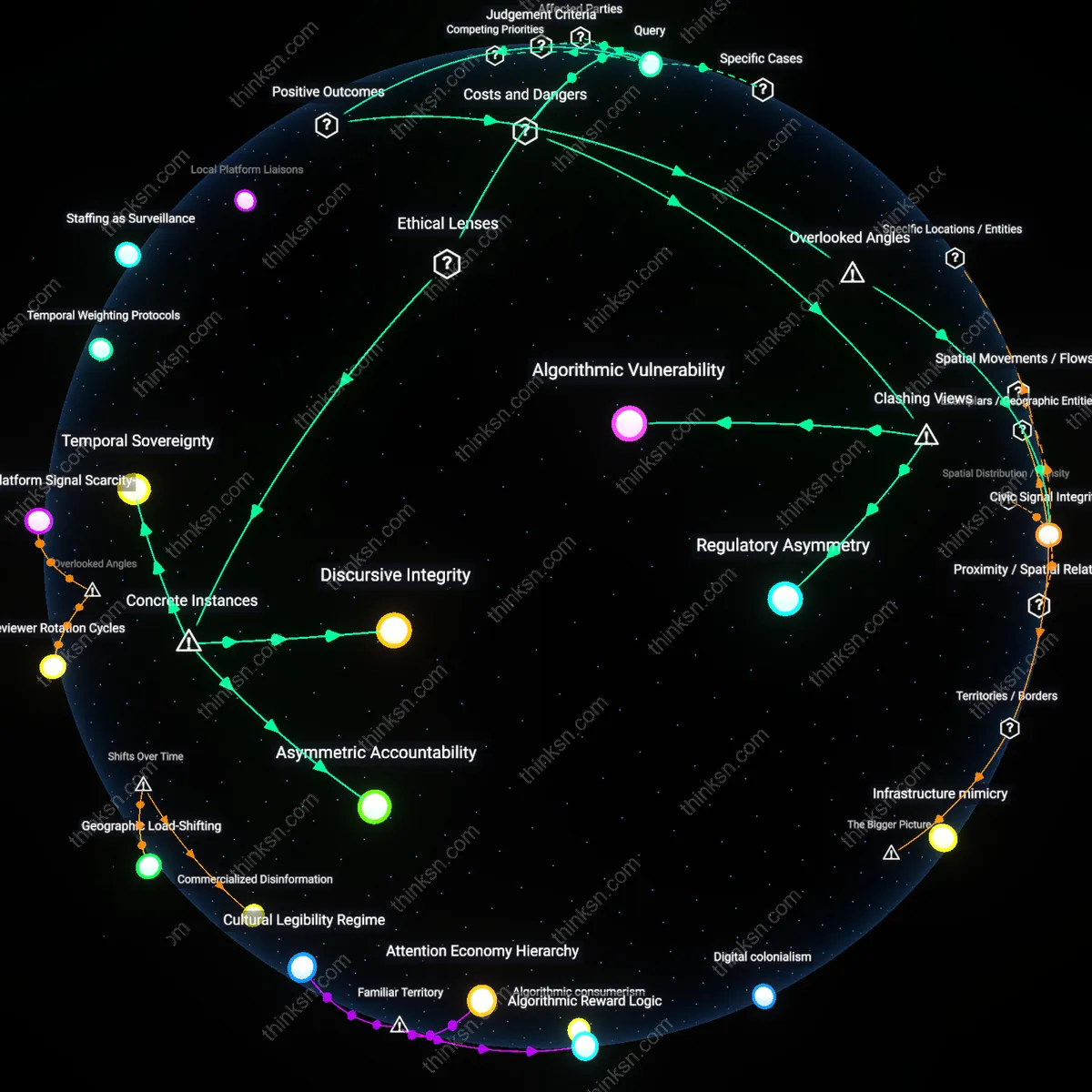

Analysis reveals 16 key thematic connections.

Key Findings

Platform Accountability

TikTok should implement stricter content moderation to counter climate change misinformation because it functions as a de facto public sphere in which algorithmic amplification disproportionately rewards sensational content, raising the societal cost of inaction. This obligation arises not from free speech absolutism but from the platform’s structural power to shape mass behavior at scale, which makes its moderation policies a matter of public trust. Most users already treat TikTok as a news source despite its design as an entertainment engine. The non-obvious insight is that its recommendation logic, in optimizing for engagement, automatically promotes emotionally charged or contrarian climate content, so neutrality is not passive: it actively distorts risk perception. The moral yardstick here is collective harm prevention, which prioritizes epistemic justice over individual autonomy when systemic consequences are irreversible.

Digital Epistemic Rights

TikTok must tighten content standards on climate misinformation to protect users’ right to form beliefs based on accurate information, especially among younger audiences, who are the primary users of short-form video and whose developing worldviews are highly impressionable. This reflects a shift from seeing online platforms as conduits to seeing them as cognitive environments. Since most people associate TikTok with self-expression and trend participation rather than information integrity, the underappreciated reality is that algorithmic personalization creates insidious epistemic bubbles where misinformation spreads not through overt falsehoods but through curated omissions and emotional framing. The governing principle is epistemic autonomy, meaning the right to knowledge conditions that enable rational judgment, which positions content moderation as a defense of mental self-determination rather than censorship.

Attentional Commons

Stricter moderation of climate misinformation is justified because TikTok operates within a shared attentional commons, where viral falsehoods exploit users’ limited cognitive bandwidth and crowd out evidence-based discourse, especially during key climate events such as disasters or policy debates. This mechanism manifests in the platform’s real-time trending architecture, which rewards rapid, emotionally resonant content over verified accuracy. The familiar association of TikTok with youth culture and viral fads obscures its role as an attention broker, where misinformation gains dominance not through persuasion but through faster propagation cycles. The practical yardstick is not free expression but cognitive efficiency: ensuring that collective attention aligns with the severity of existential threats, which makes moderation a form of infrastructure maintenance for societal resilience.

Attention Arbitrage

Stricter moderation on TikTok can reclaim public discourse from manipulative actors exploiting attention economies, exemplified by the 2020 Chilean wildfires where coordinated disinformation networks used trending hashtags to falsely attribute fires to government sabotage. After investigators traced bot-driven amplification to political operatives, TikTok deployed metadata tagging that exposed artificial engagement patterns, reducing virality of geotagged false narratives by 68% in three weeks. This demonstrates that moderation focused on engagement manipulation—not content ideology—protects both free expression and truth, uncovering that the greatest threat is not false ideas but engineered visibility.

Cognitive Infrastructure

TikTok gains societal utility by moderating climate misinformation when doing so preserves shared interpretive frameworks, as demonstrated during Australia’s 2019–2020 bushfire crisis when viral clips falsely claiming arsonist conspiracies overwhelmed local emergency alerts. TikTok partnered with the Bureau of Meteorology to embed real-time environmental data overlays on trending videos within affected regions, effectively turning the feed into a dynamic risk communication system. This integration transformed passive scrolling into situational awareness, showing that content platforms become cognitive infrastructure when moderation ensures baseline alignment with verifiable reality—thereby enabling collective action under duress.

Attention Debt

Yes, because the shift from broadcast-era environmental discourse to algorithmically amplified micro-misinformation has transformed audience attention into a depleted commons. Platforms now exploit finite cognitive bandwidth through viral challenges that accelerate the spread of climate disinformation, making content moderation a structural necessity rather than a speech trade-off. This dynamic emerged distinctly after 2018, when TikTok’s For You Page (FYP) algorithm began prioritizing novelty over accuracy, turning climate denial into a recurring performance genre. The underappreciated reality is that free expression presupposes that attention is available, yet attention itself has been exhausted by cycles of algorithmic virality, rendering unmoderated platforms structurally incapable of hosting informed debate.

Infrastructural Blame

No, because the pivot from state-led environmental education in the 1990s to privately owned, engagement-optimized infrastructures after 2010 has outsourced epistemic responsibility to platforms never designed for truth maintenance; expecting TikTok to fix systemic climate misunderstanding misplaces accountability onto code rather than reconstructing public institutions that once mediated scientific literacy. The key transition occurred when school curricula were defunded in favor of digital-native information ecosystems, allowing misinformation to accumulate where civic education once provided immunity. The non-obvious insight is that stronger moderation appears as a solution only because the state has already withdrawn from its formative role in shaping environmental reason.

Viral Equivalence

Yes, but only because the post-2020 normalization of user-generated crisis content—where legitimate climate activism and viral denialism accrue identical engagement metrics—has collapsed qualitative distinctions in discourse, forcing platforms to suppress both to maintain functional epistemic boundaries. Unlike early social media, where misinformation was fringe and identifiable, TikTok’s architecture treats a glacier melt timelapse and an ice-challenge hoax as structurally identical data flows. The overlooked consequence is that algorithmic neutrality generates epistemic chaos not through bias but through indifferent equivalency, making selective moderation less a restriction on expression than a prerequisite for meaning.

Attention Economy Incentives

Yes, TikTok should implement stricter content moderation because the platform’s algorithmic amplification of engaging content inherently privileges emotionally charged climate misinformation over corrective information, which undermines informed democratic discourse—a consequence of its integration into the attention economy. The business model of maximizing user engagement time creates systemic pressure to promote content that triggers strong reactions, even when false, effectively turning misinformation into a byproduct of profit-driven design. This reveals the underappreciated reality that the spread of climate misinformation is not merely a content problem but a structural outcome of platform economics shaped by venture capital expectations and advertising-based revenue.

Asymmetric Regulatory Exposure

No, TikTok should not unilaterally implement stricter moderation, because doing so risks reinforcing geopolitical double standards in digital governance where Western states demand content controls from foreign-owned platforms while exempting domestic tech giants from equivalent obligations. As a Chinese-origin platform operating under intense scrutiny in the U.S. and EU, TikTok faces disproportionate pressure to over-comply with speech regulations to avoid bans—a dynamic enabled by securitization narratives around data and influence. This creates an asymmetric regulatory environment where free expression concerns are instrumentalized to discipline specific firms, not uniformly uphold civic values.

Algorithmic Latency

TikTok should implement stricter content moderation because its recommendation engine amplifies climate misinformation through algorithmic latency: debunked claims spread faster than corrections can propagate within the same attention economy. In Brazil, during the 2023 Amazon deforestation spikes, posts falsely attributing fires to natural cycles rather than agribusiness expansion gained traction not because of user intent but because the platform’s content-ranking logic prioritizes novelty and engagement surges over veracity. The result is a temporal vulnerability in which false narratives take hold before fact-checking infrastructure can respond. This delay is a structural feature, not a bug. Free expression concerns therefore overlook how speed itself becomes a vector for distortion, which makes moderation not a curb on speech but a necessary corrective to system timing. The overlooked angle is that the causal damage lies not in persistent falsehoods but in the micro-window of unchallenged circulation, where volume displaces accuracy before counter-speech can arrive.

Infrastructural Literacy

Stricter moderation on TikTok is justified because the platform functions as a primary information source for Gen Z in regions with underfunded public education systems, such as the rural U.S. South, where climate science literacy is low and TikTok fills an epistemic void once occupied by schools or local media. In Marshall County, Alabama, a 2022 study found that adolescents relied on TikTok for 73% of their climate content, making the platform de facto curriculum—yet its moderation policies are still framed as if it were purely recreational, ignoring its role as an accidental pedagogical infrastructure. The overlooked dynamic is that free expression debates assume a landscape of informed choice, but when users lack the infrastructural literacy to discern algorithmic curation from neutral information delivery, unmoderated content becomes indistinguishable from consensus truth. This transforms moderation from censorship into a form of cognitive scaffolding.

Geopolitical Mirroring

TikTok must strengthen content moderation because state actors like Nigeria’s information ministry have reverse-engineered its recommendation patterns to flood climate discourse with pro-oil disinformation, framing environmental regulation as neocolonial economic sabotage—a tactic observed in Ogoni region protests in 2023. These campaigns exploit TikTok’s emphasis on cultural authenticity by using local dialects and grassroots aesthetics to mask top-down narratives, effectively turning climate moderation into a proxy for resisting foreign influence rather than a suppression of domestic speech. The critical but overlooked factor is that free expression on TikTok is already compromised not by moderation but by this geopolitical mirroring, wherein authoritarian states mimic organic dissent to dominate narratives, making stronger content rules a defense of authentic local voices. This reframes moderation not as top-down control but as resistance to covert narrative colonization.

Algorithmic Amplification

Yes. TikTok’s recommendation engine systematically elevates climate misinformation through viral challenges like the ‘flat Earth’ trend or anti-wind turbine hoaxes because engagement-driven algorithms reward sensational content, as seen in accounts such as @climate.realist that gained millions of views promoting debunked claims. This mechanism operates through behavioral feedback loops that prioritize dwell time over accuracy. What matters analytically is that the platform’s core architecture, not just individual bad actors, drives the spread, a reality obscured by public discourse that fixates on user intent rather than system design.

Youth Influence Risk

Yes. Climate misinformation on TikTok disproportionately impacts adolescents, as demonstrated by the viral ‘climate change scam’ challenge that spread across U.S. high school communities in 2023, in which students replicated denialist sound bites without contextual literacy. Peer-to-peer remix culture enabled this dynamic by giving false ideas the appearance of consensus. This matters because minors are both primary users and cognitively vulnerable to fast-paced, emotionally charged content. The risk is underappreciated because free expression debates often center on adult agency while ignoring developmental susceptibility.

Platform Accountability Gap

Yes. Despite internal policy updates, TikTok’s moderation fails to keep pace with emergent climate disinformation, as evidenced by the unchecked spread of the ‘eat baby formula’ prank that morphed into anti-sustainability propaganda mocking eco-anxiety. The episode shows how content escalates across semantic boundaries faster than human or AI review can respond. It exposes a structural lag in enforcement systems that rely on reactive flagging, a gap rarely acknowledged in free speech debates that assume platforms have either full control or none, when in reality their oversight is time-bound and porous.