Reddit: Diverse Insights or Coordinated Disinformation?

This analysis identifies five key thematic connections.

Key Findings

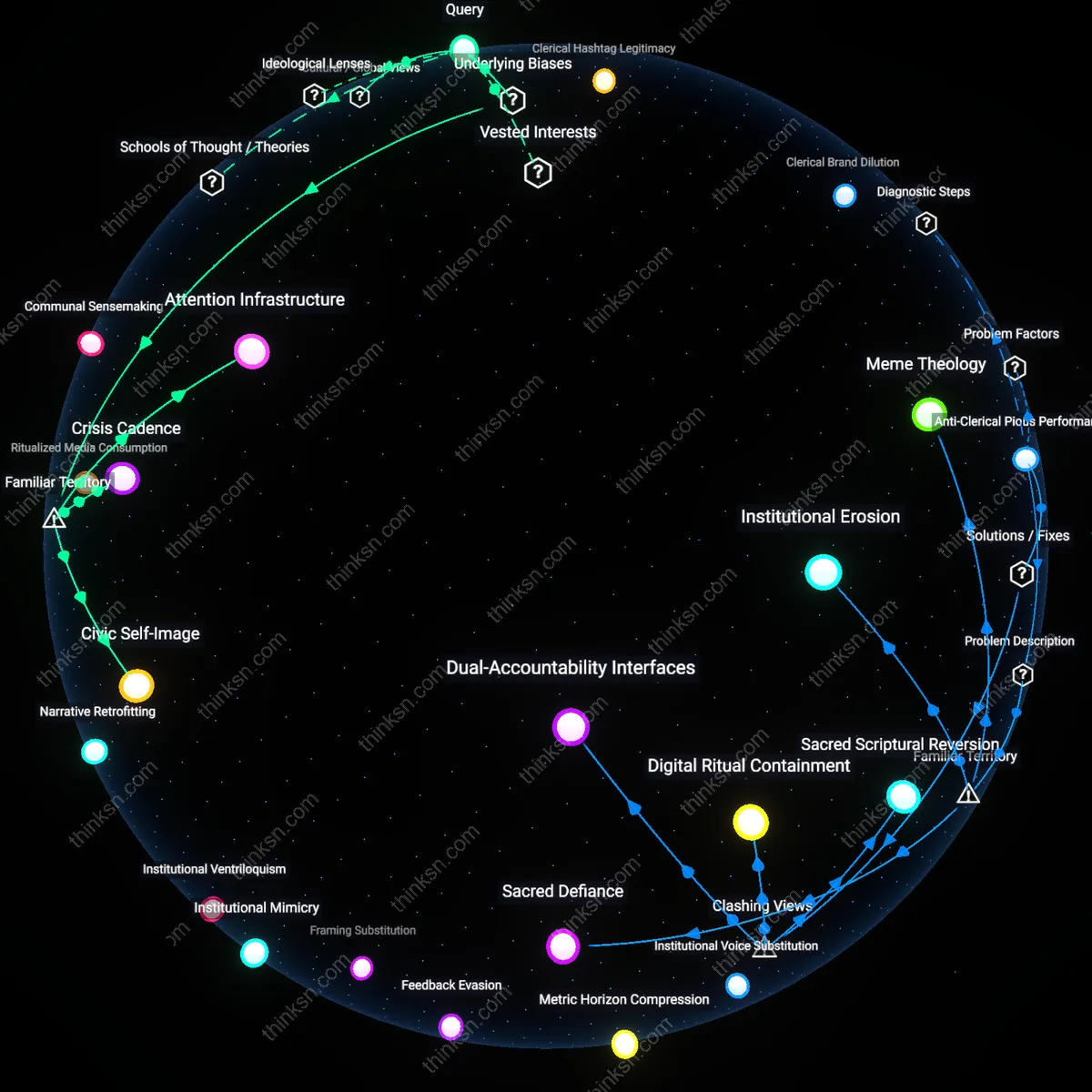

Institutional Off-Ramping

During the 2016 U.S. election, Russian operatives used r/The_Donald to amplify divisive narratives. As the subreddit hardened into an ideological echo chamber, some early contributors, particularly centrist or libertarian users, disengaged or migrated to alternative forums such as r/DebatePoliticalReddit. This exodus was driven not by direct censorship but by the perception that the community's epistemic norms had collapsed under coordinated influence, making diverse discourse futile. The case shows how disinformation can trigger a self-selected flight from pluralism, in which the protective response is withdrawal rather than confrontation. Sustained exposure to manipulation can thus erode the very user base needed to maintain viewpoint diversity.

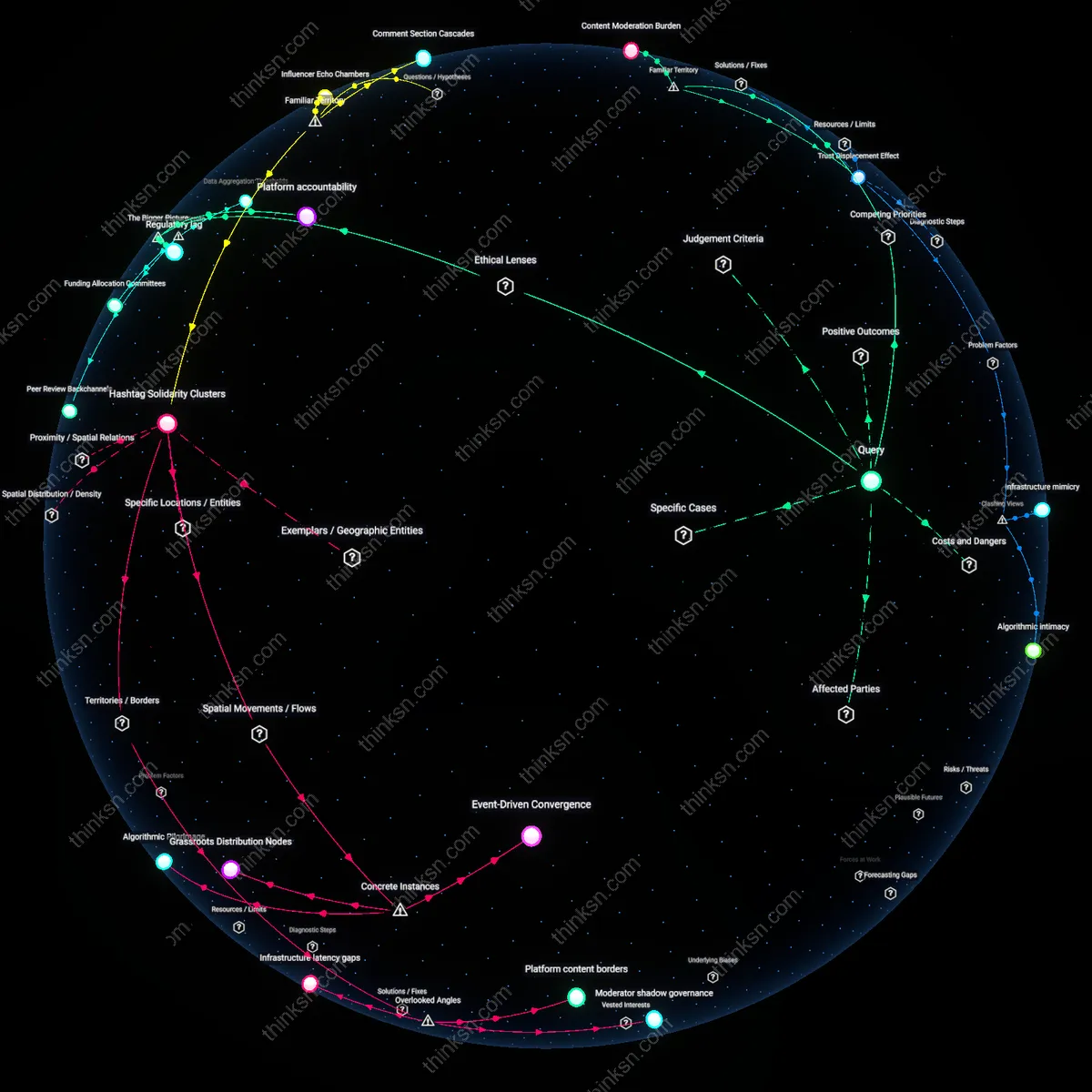

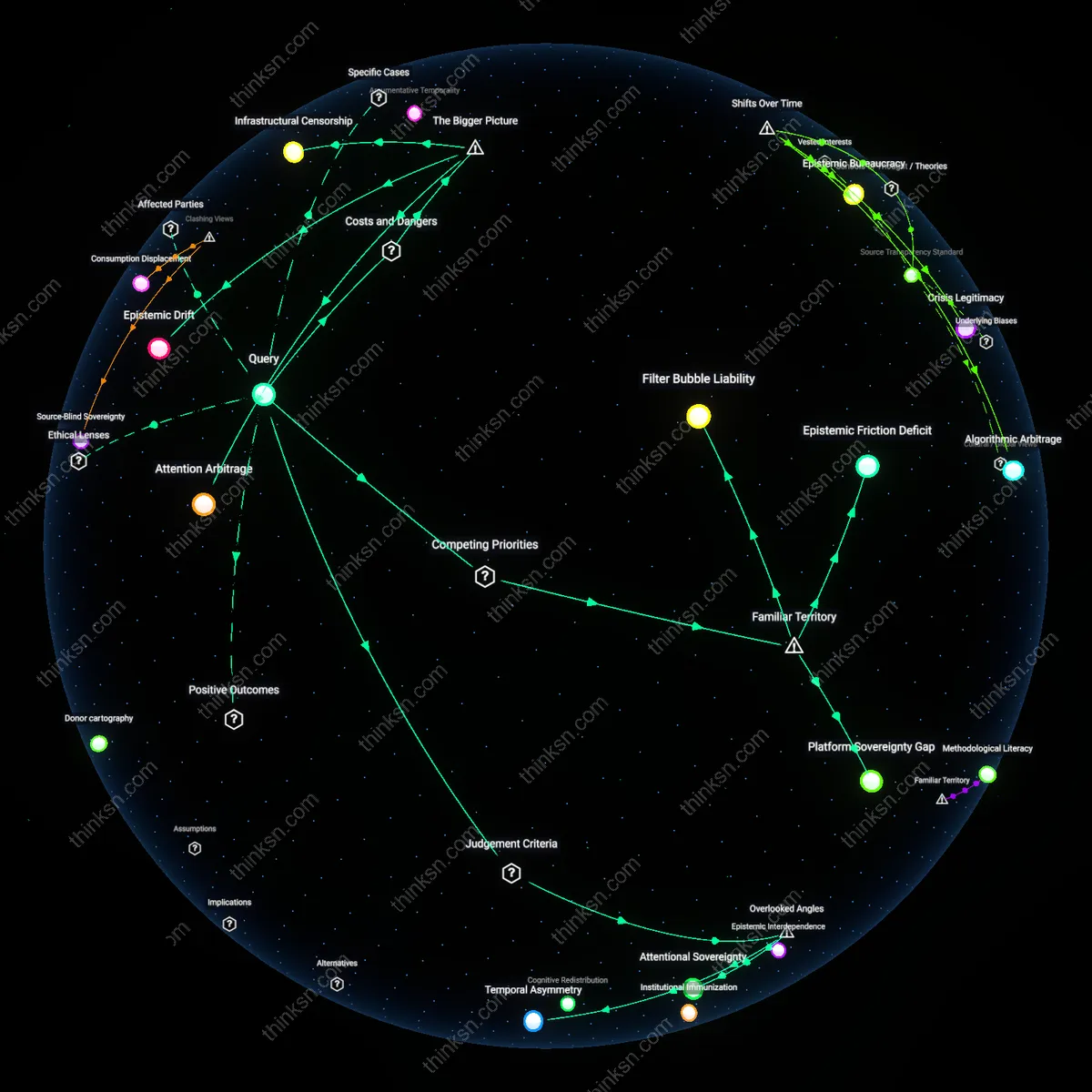

Algorithmic Amplification Debt

In 2021, Reddit's default sorting algorithm promoted r/NoNewNormal posts linking vaccines to widespread side effects, extending their reach across multiple subreddits before moderators or admins intervened. This delay allowed anti-vaccine narratives to gain traction that persisted even after corrections, as seen in user surveys from r/TwoXChromosomes in which previously vaccine-confident members reported doubt. The structural lag between algorithmic distribution and human moderation opens a temporal window in which disinformation exploits the platform's openness faster than accurate information can respond, embedding falsehoods in personal belief systems before community norms can correct them. This is a hidden cost of algorithmic design that rewards engagement over epistemic stability.
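To make the "temporal window" concrete, the following is a toy model, not a description of Reddit's systems: it assumes a post gains views at a rate that grows by a fixed factor each hour while it trends, and asks how many views accumulate before moderators remove it. `ToyPost`, `views_before_removal`, and all parameter values are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class ToyPost:
    """Hypothetical spread parameters for one post; illustrative, not Reddit data."""
    initial_views_per_hour: float  # assumed views in the first hour after posting
    hourly_growth: float           # assumed multiplicative growth while the post trends


def views_before_removal(post: ToyPost, removal_delay_hours: int) -> float:
    """Sum hourly views from posting until moderators remove the post.

    Assumes the post keeps trending (constant growth) for the whole delay;
    real exposure eventually saturates, so long delays are overstated here.
    """
    total = 0.0
    rate = post.initial_views_per_hour
    for _ in range(removal_delay_hours):
        total += rate                # views gained during this hour
        rate *= post.hourly_growth   # amplification raises next hour's rate
    return total


if __name__ == "__main__":
    post = ToyPost(initial_views_per_hour=100.0, hourly_growth=1.5)
    for delay in (1, 6, 12, 24):
        views = views_before_removal(post, delay)
        print(f"removed after {delay:2d} h -> ~{views:,.0f} views")
```

Under these invented numbers, doubling the moderation delay from 6 to 12 hours multiplies pre-removal exposure by more than ten. The cost of lag grows faster than the lag itself whenever distribution is still accelerating, which is one way to read the "debt" framing.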

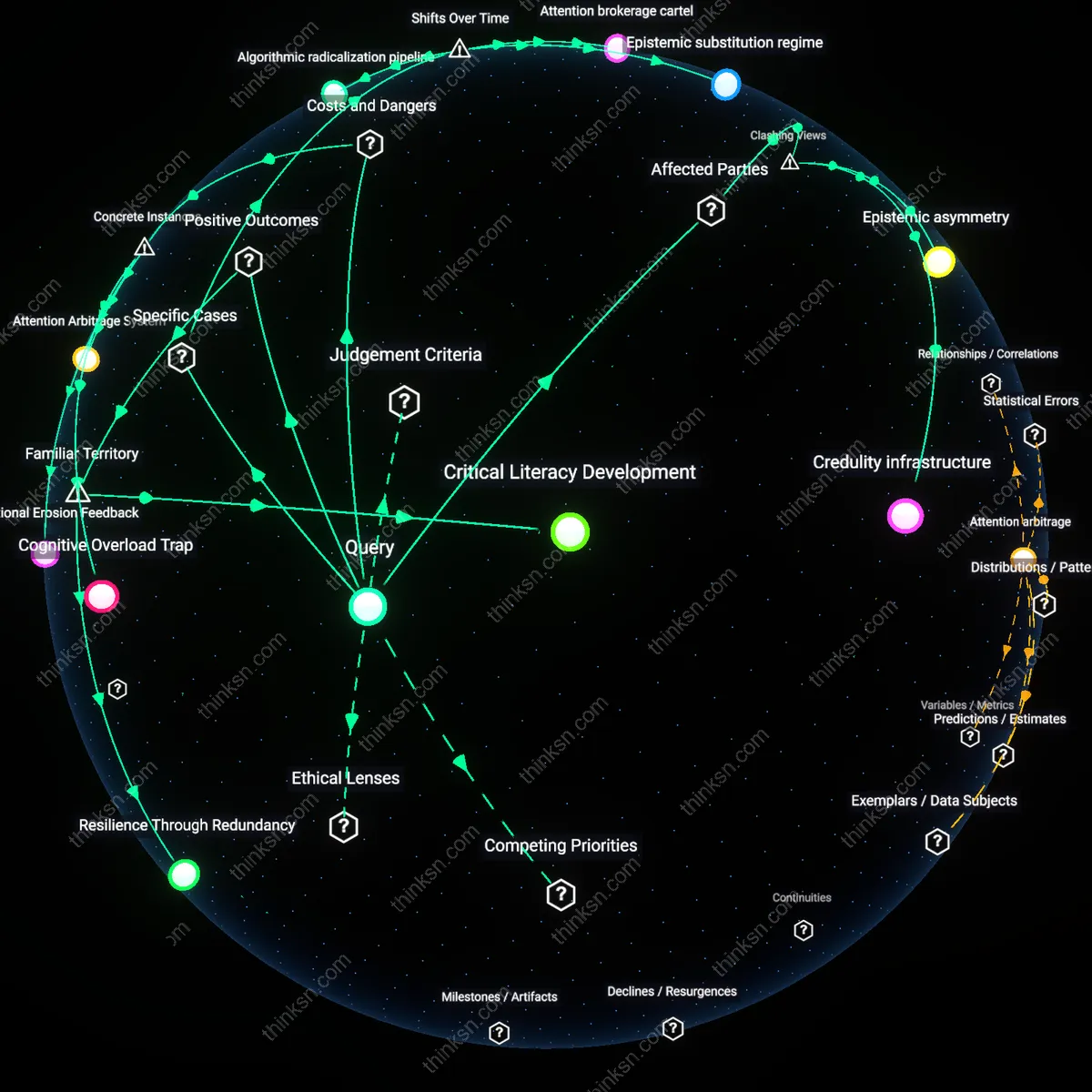

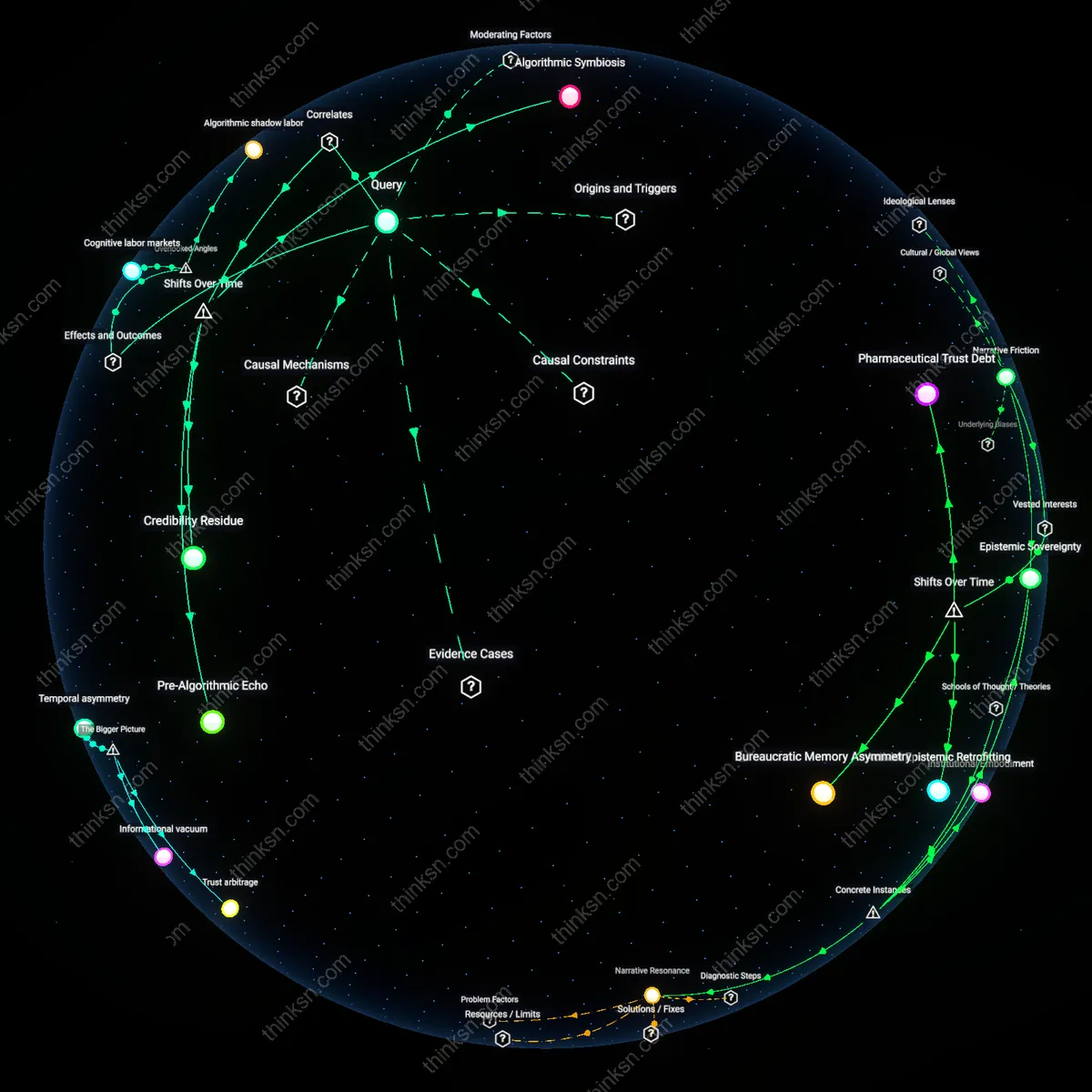

Cognitive Friction Engine

Exposure to diverse viewpoints on Reddit strengthens collective sense-making by introducing controlled cognitive friction that disrupts belief calcification. When users encounter ideologically or epistemically dissimilar perspectives, such as climate skeptics engaging with climate scientists or anti-vaccine communities encountering epidemiological data, the resulting mental tension forces them to justify, reevaluate, or refine their beliefs. Reddit's upvote-driven visibility amplifies this process by surfacing compelling counterpoints without centralized editorial control. Because the mechanism runs through decentralized discourse networks in which no single actor curates truth, it is resilient to ideological monopolies but depends on the presence of minimally credible alternative views. The non-obvious implication is that disinformation, while dangerous, can paradoxically activate epistemic vigilance in well-informed subreddits, turning exposure into a distributed stress test for ideas rather than mere contamination.
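One concrete way upvote-driven visibility can favor well-supported counterpoints over lucky one-liners is ranking by the lower bound of a Wilson score confidence interval, which Reddit adopted for its "best" comment sort. The sketch below assumes that approach; the 80% confidence constant mirrors, to our understanding, the value in Reddit's formerly open-source code, and the current production ranking may differ. The vote counts are hypothetical.

```python
import math

# z-score for an 80% confidence level (assumed to match Reddit's former
# open-source "best" sort; treat as illustrative).
Z = 1.281551565545


def wilson_lower_bound(ups: int, downs: int, z: float = Z) -> float:
    """Lower bound of the Wilson score interval for the true upvote fraction."""
    n = ups + downs
    if n == 0:
        return 0.0
    p = ups / n
    centre = p + z * z / (2 * n)
    margin = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n))
    return (centre - margin) / (1 + z * z / n)


if __name__ == "__main__":
    # Hypothetical comments: a contested but well-argued counterpoint
    # versus a short quip with a small, unanimous sample.
    comments = {"counterpoint": (60, 10), "quip": (5, 0)}
    for name, (ups, downs) in comments.items():
        ratio = ups / (ups + downs)
        print(name, round(ratio, 3), round(wilson_lower_bound(ups, downs), 3))
```

The counterpoint ranks above the quip even though its raw upvote ratio is lower, because the interval penalizes small samples. Ranking on evidence of sustained approval, rather than on raw ratios, is what lets contested but credible replies surface without an editor choosing them.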

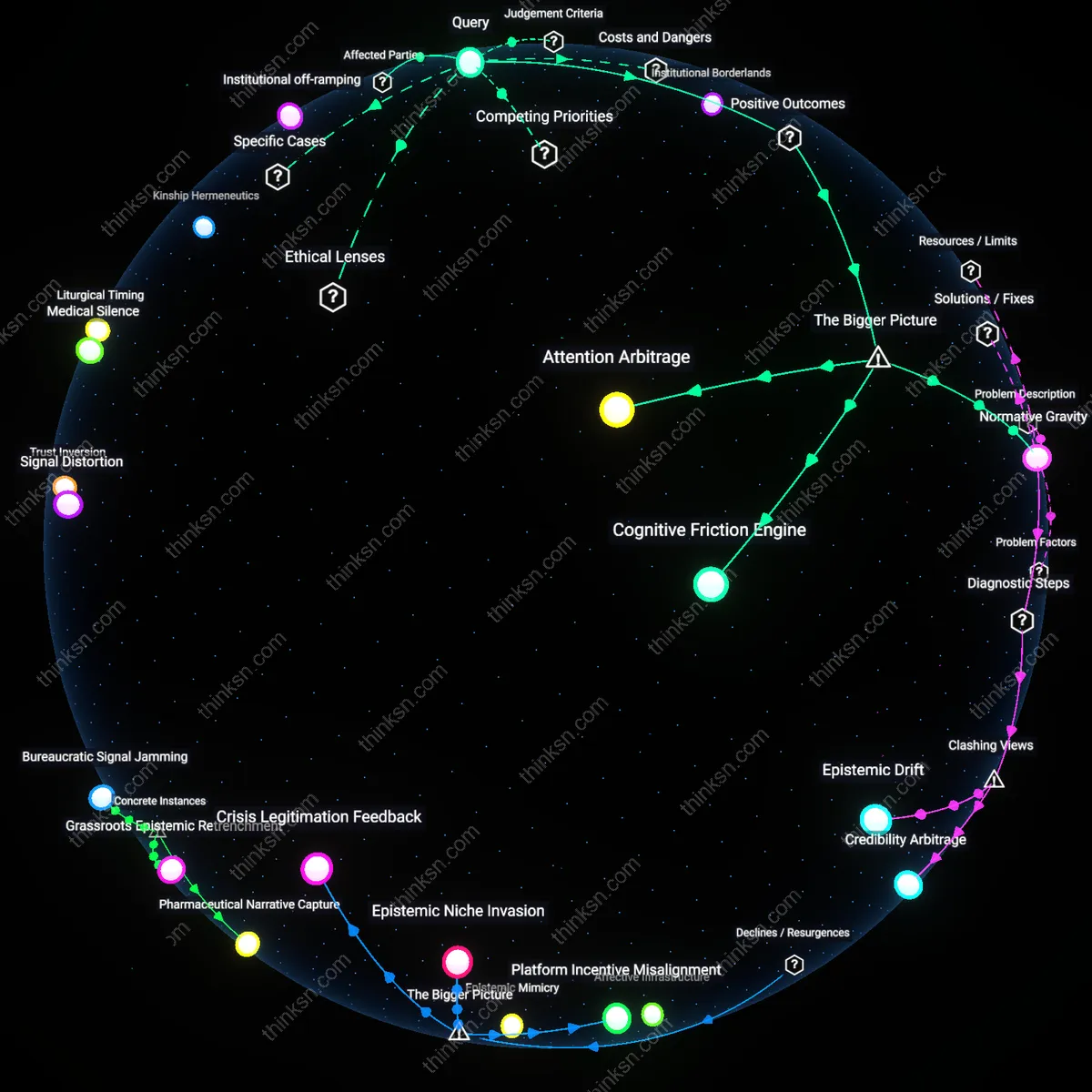

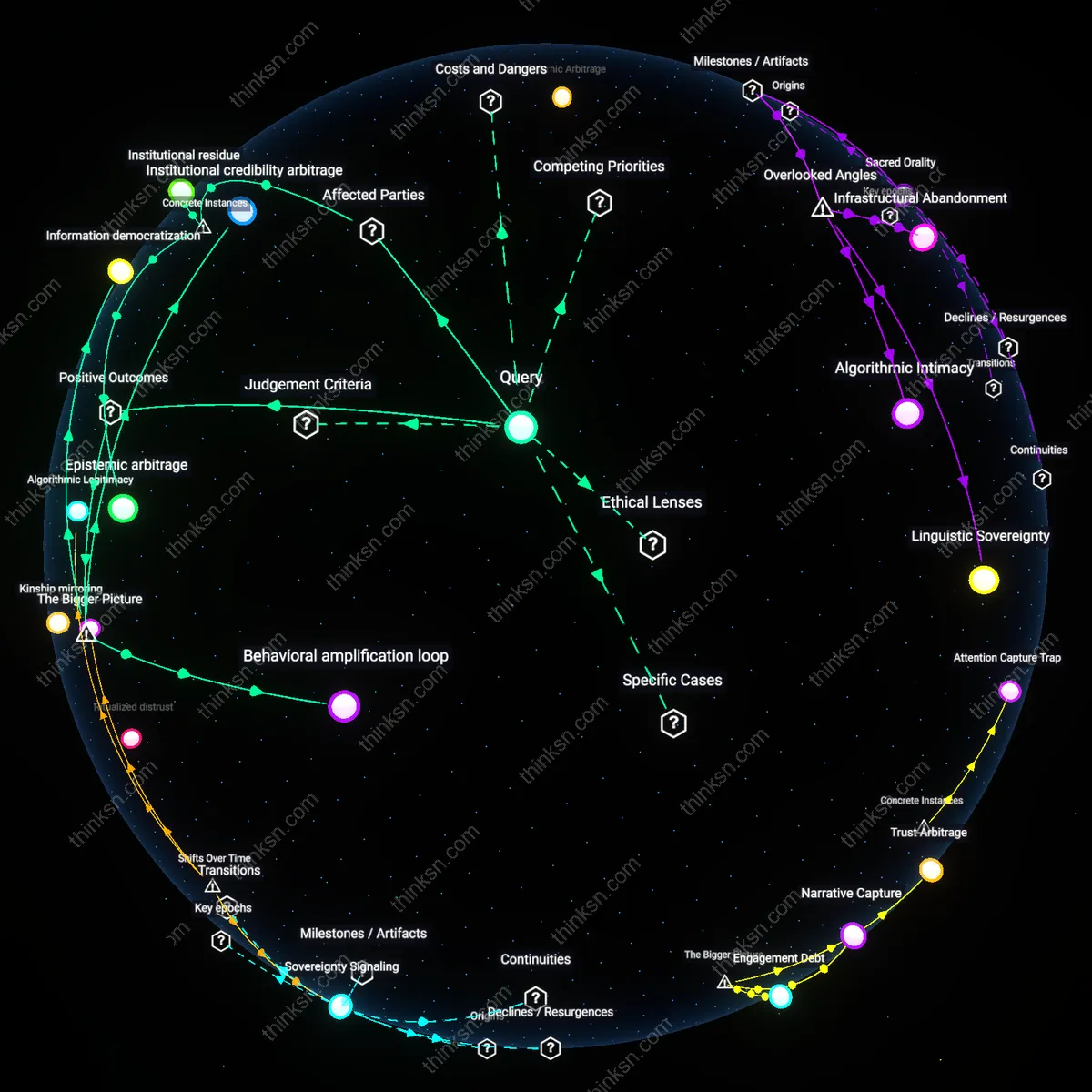

Attention Arbitrage

Coordinated disinformation on Reddit persists not because users are gullible but because it exploits a systemic imbalance in attention economics: sensational or emotionally charged content gains disproportionate reach through algorithmic amplification and user behavior. Bad-faith actors, including state-affiliated troll farms and domestic grifters, strategically inject narratives into high-traffic communities such as r/worldnews or r/politics, where engagement incentives prioritize virality over veracity, letting falsehoods piggyback on genuine discourse peaks such as elections or disasters. Platform architecture sustains this dynamic by rewarding engagement metrics over epistemic quality, so malign actors can convert minimal investment into outsized influence. The underappreciated consequence is that viewpoint diversity becomes a vulnerability when the system cannot distinguish authentic pluralism from weaponized noise.
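The "minimal investment, outsized influence" claim can be illustrated with the "hot" post-ranking formula from Reddit's formerly open-source code. This is a sketch assuming that historical formula; Reddit's current ranking is not public and likely differs. The function and constant names below are our own labels, and the posts are hypothetical.

```python
import math
from datetime import datetime, timedelta, timezone

# Constants as they appeared in Reddit's formerly open-source "hot" ranking
# (assumed here; production ranking may differ).
EPOCH_OFFSET_SECONDS = 1134028003        # a December 2005 timestamp
SECONDS_PER_ORDER_OF_MAGNITUDE = 45000   # 12.5 hours


def hot(ups: int, downs: int, posted: datetime) -> float:
    """Historical 'hot' score: log-scaled net votes plus a recency bonus."""
    net = ups - downs
    order = math.log10(max(abs(net), 1))  # each 10x in net votes adds 1
    sign = 1 if net > 0 else -1 if net < 0 else 0
    seconds = posted.timestamp() - EPOCH_OFFSET_SECONDS
    # Every 12.5 hours of recency is worth the same as a 10x vote increase.
    return round(sign * order + seconds / SECONDS_PER_ORDER_OF_MAGNITUDE, 7)


if __name__ == "__main__":
    t0 = datetime(2021, 1, 1, tzinfo=timezone.utc)
    older_organic = hot(100, 0, t0)                          # 100 net votes
    fresh_seeded = hot(10, 0, t0 + timedelta(hours=12.5))    # 10 net votes, newer
    print(older_organic, fresh_seeded)
```

Under this formula, 10 early net votes on a fresh post earn the same score as 100 net votes on a post 12.5 hours older, and the first 10 votes move a post as far as the next 90. Because the vote term is logarithmic and the time term is linear, a small coordinated push right after submission is disproportionately effective, one concrete form of the arbitrage described above.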

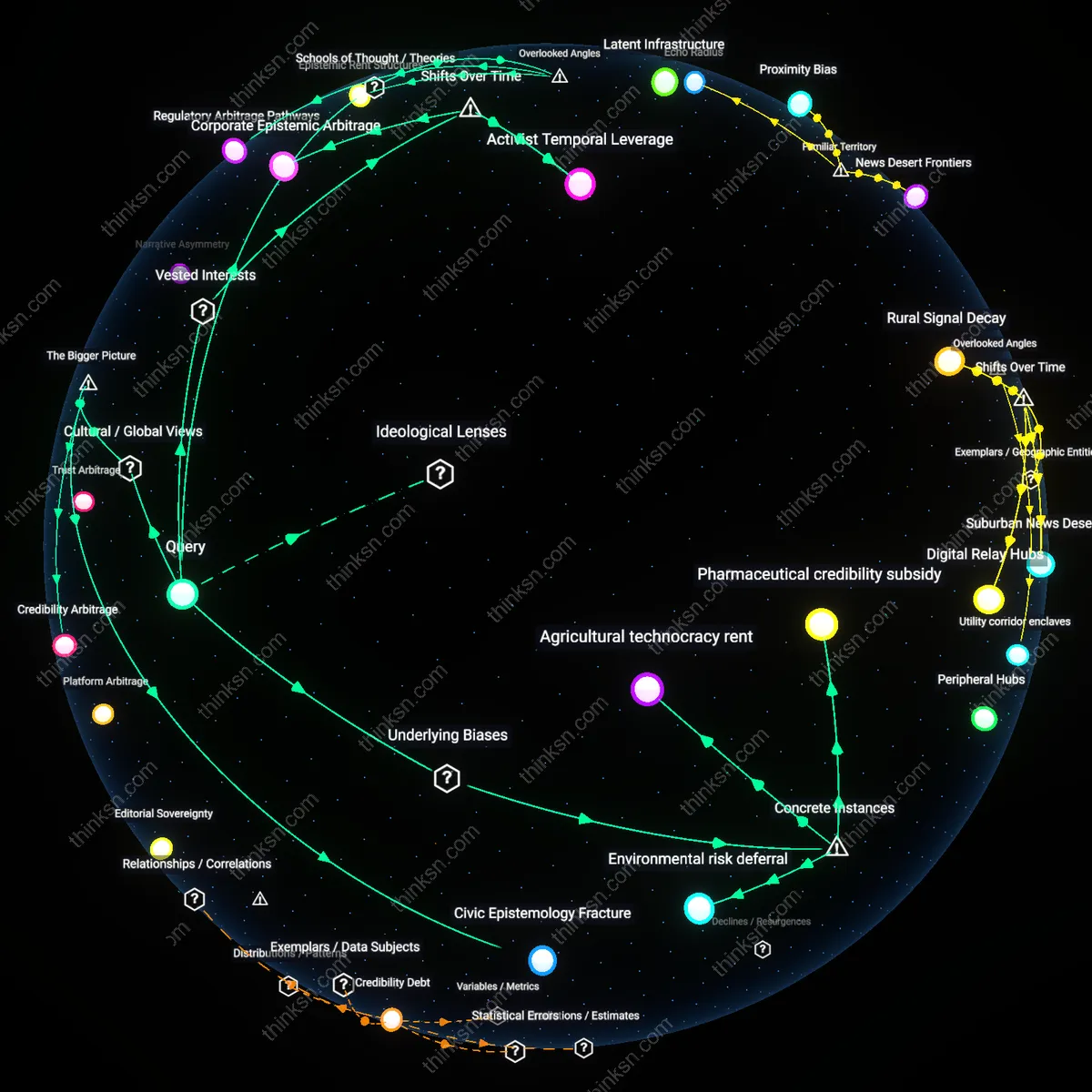

Normative Gravity

Highly moderated subreddits with strong epistemic norms, such as r/science or r/DataIsBeautiful, act as counterweights to disinformation. By establishing local truth standards that attract users seeking credible discourse, they create a normative gravity that shapes broader platform culture. These communities exert influence not through top-down enforcement but through the pull of consistent moderation, citation requirements, and community-sanctioned expertise, which train users to expect evidence-based discourse even in adjacent, less rigorous spaces. The dynamic emerges from the interaction of volunteer moderators, subreddit-specific rules, and user migration patterns. It suggests that diversity's benefit lies not in the volume of opinions but in anchored, rule-governed enclaves that stabilize the epistemic ecosystem, a point easily overlooked in debates that treat Reddit as a monolithic attention arena rather than a constellation of competing normative zones.