Is Community Worth the Misinformation on Facebook?

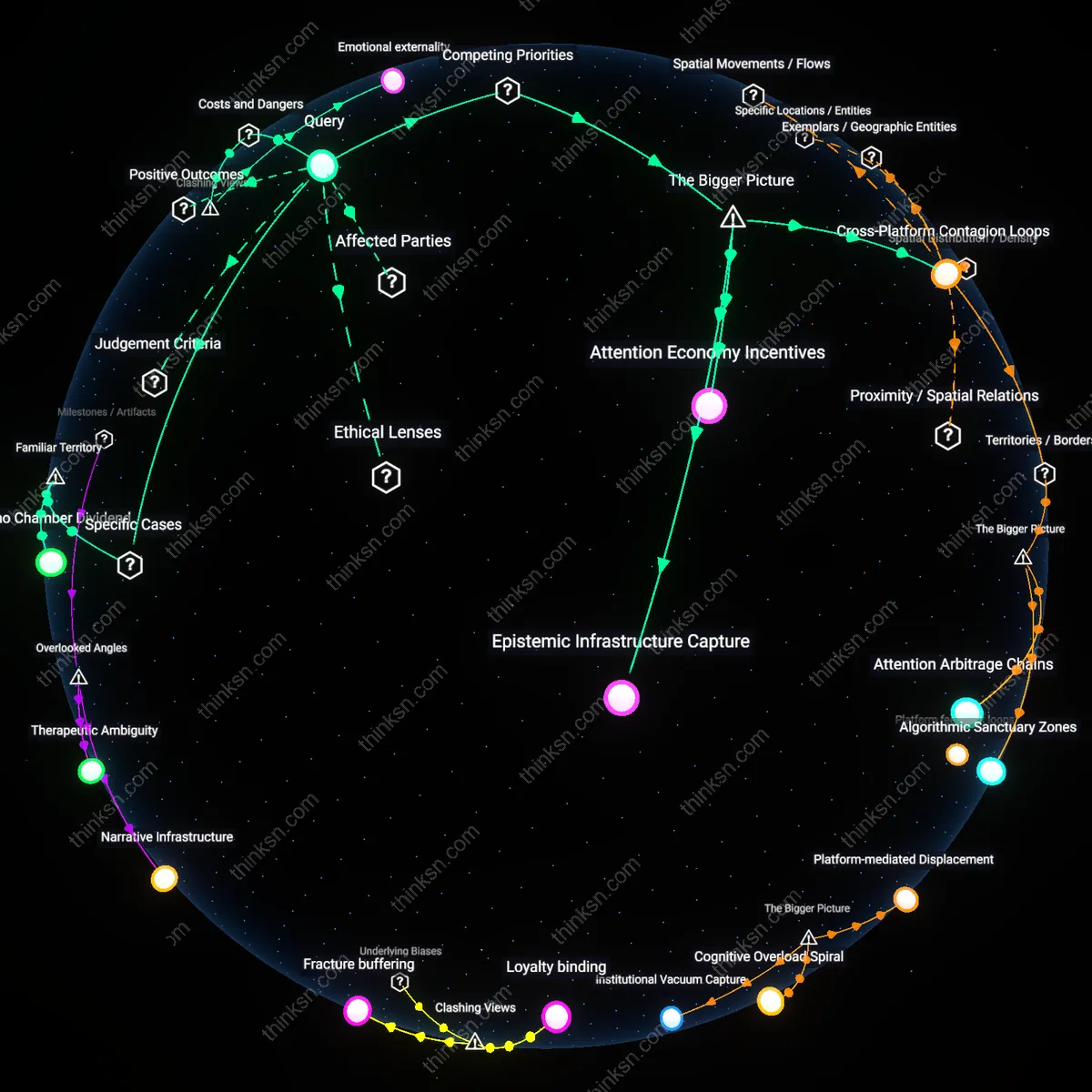

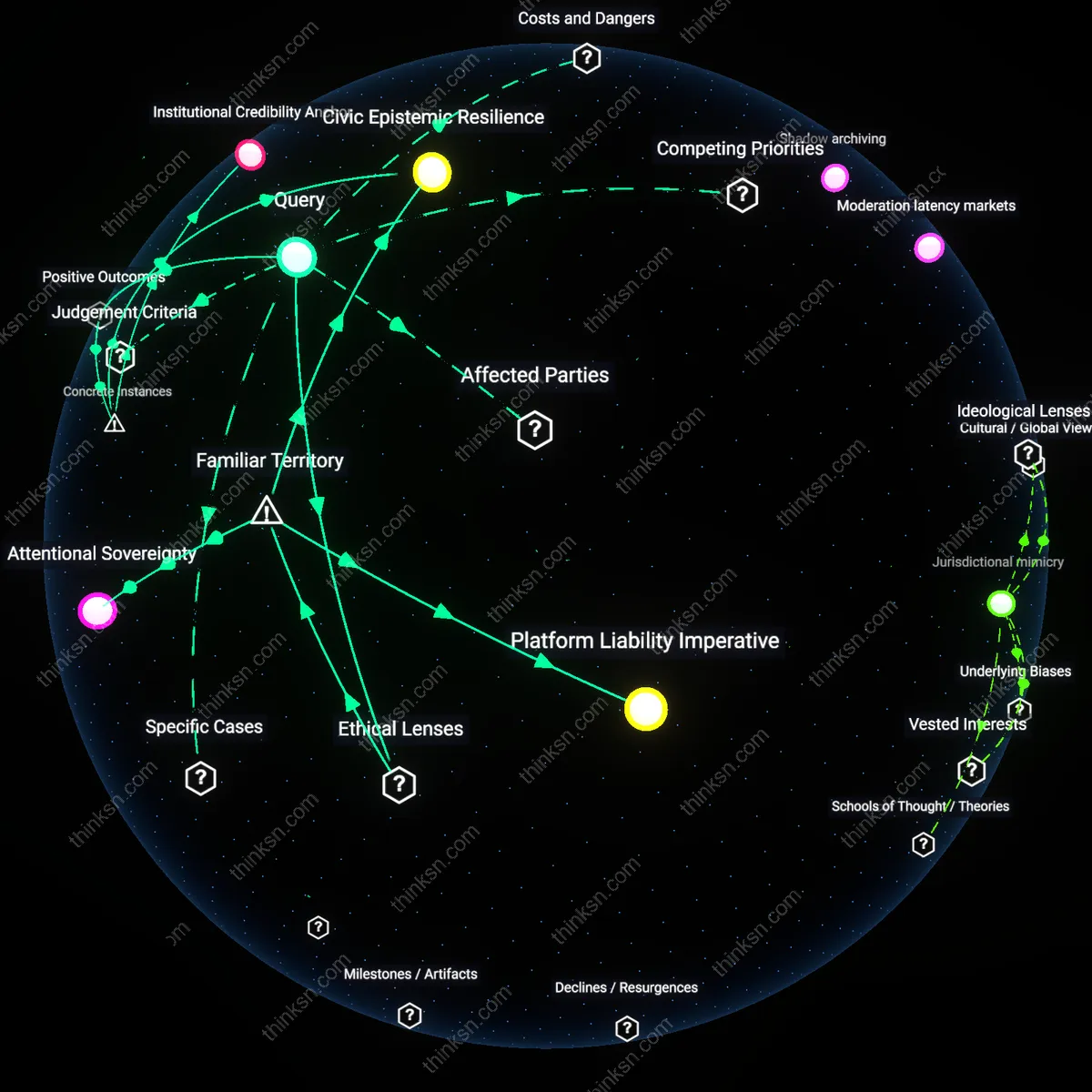

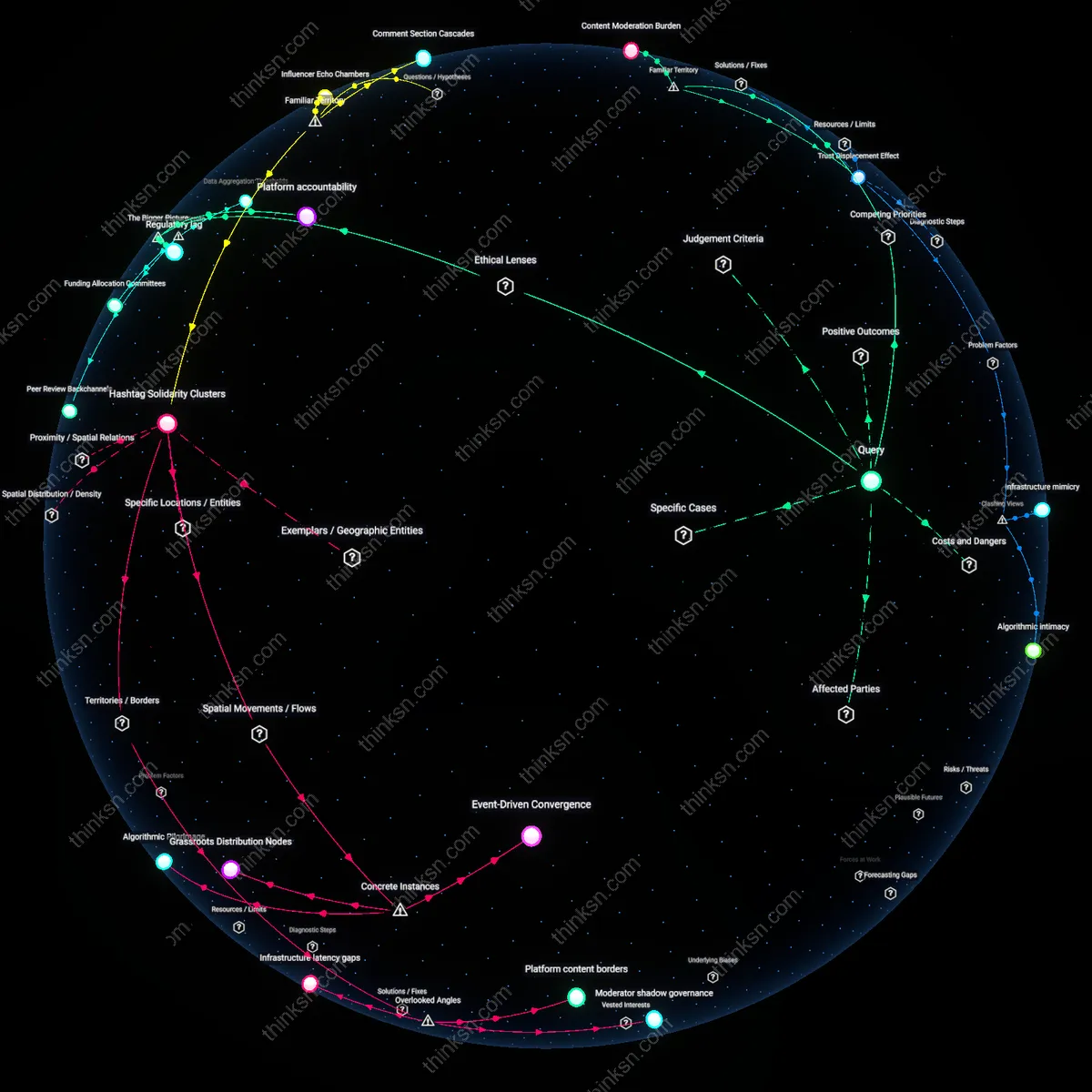

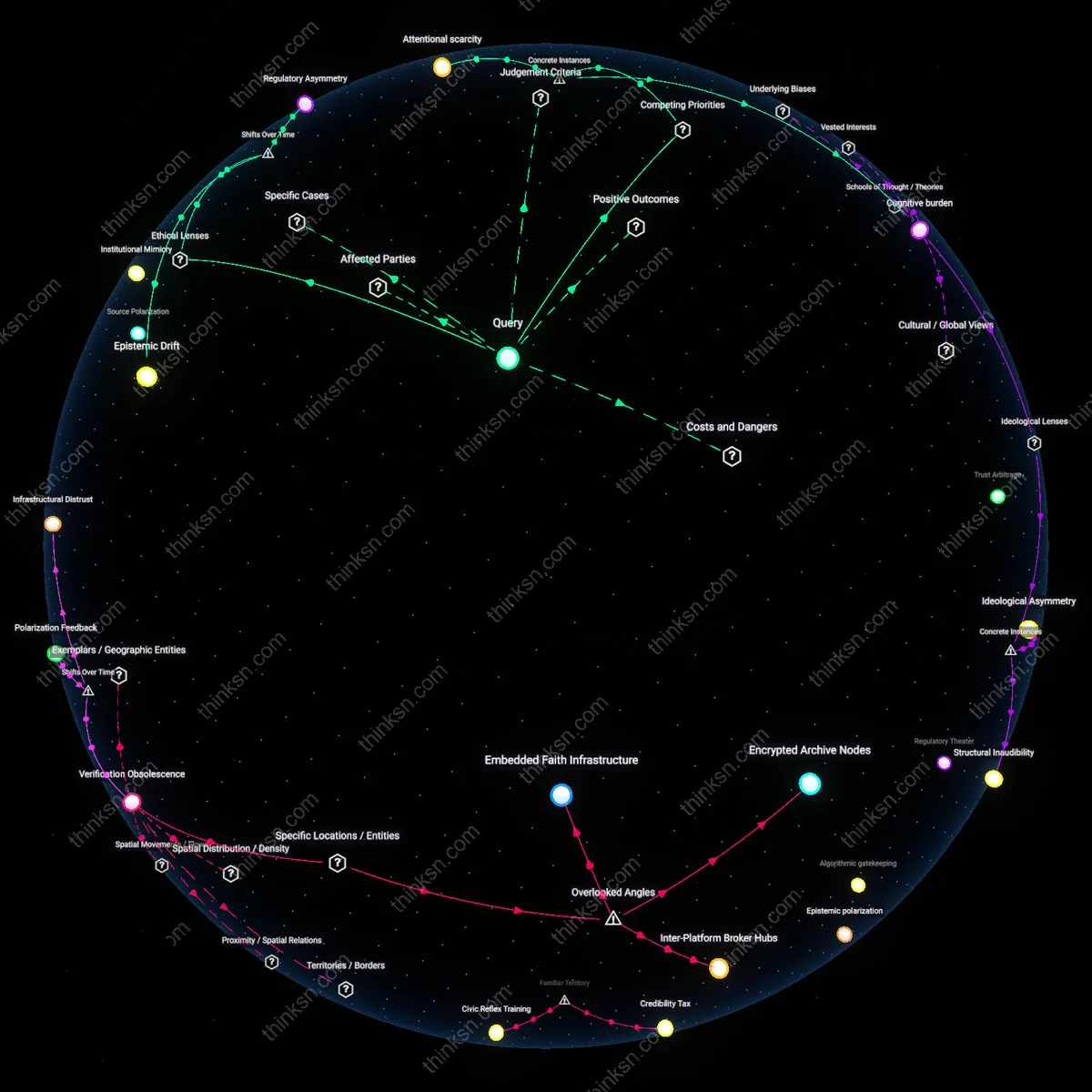

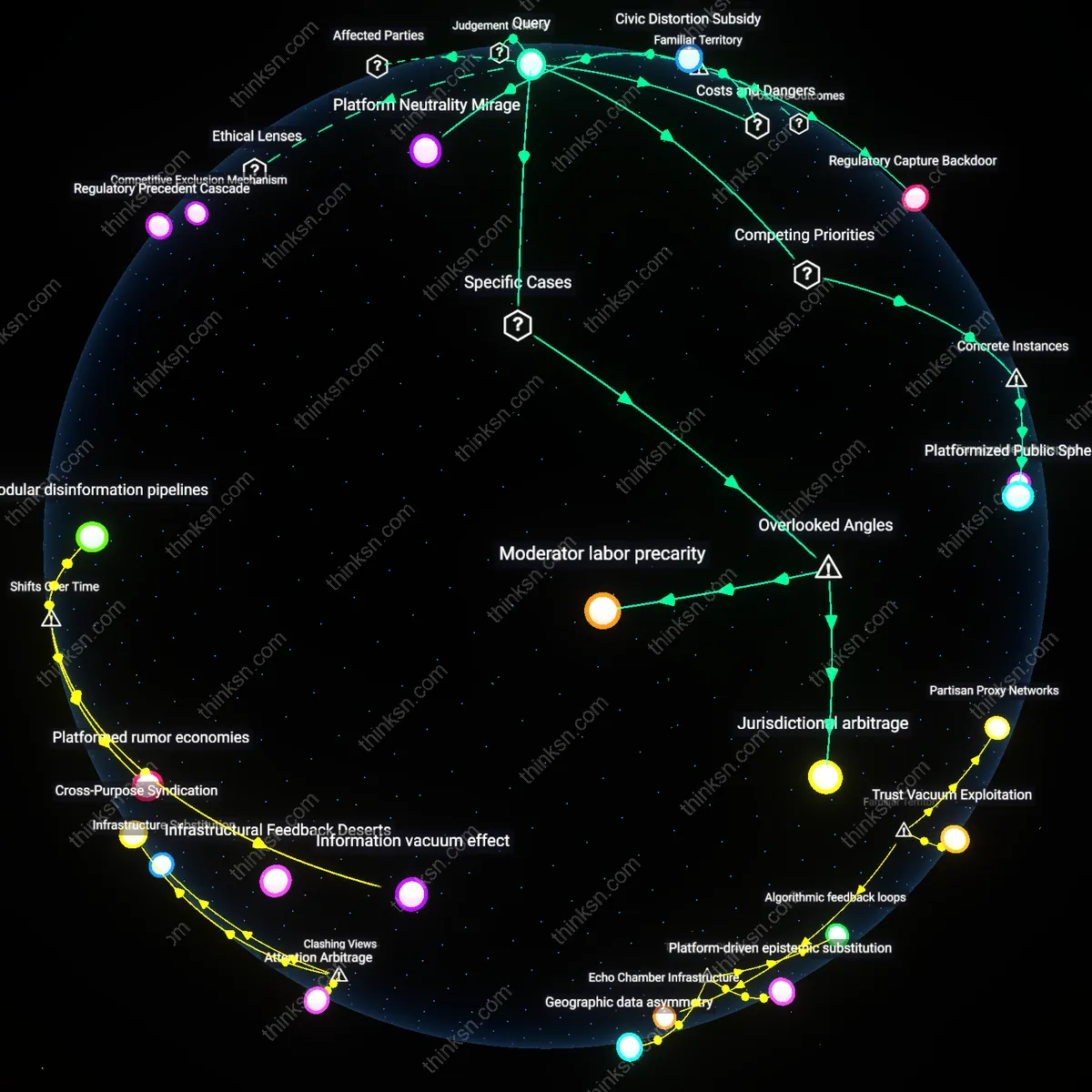

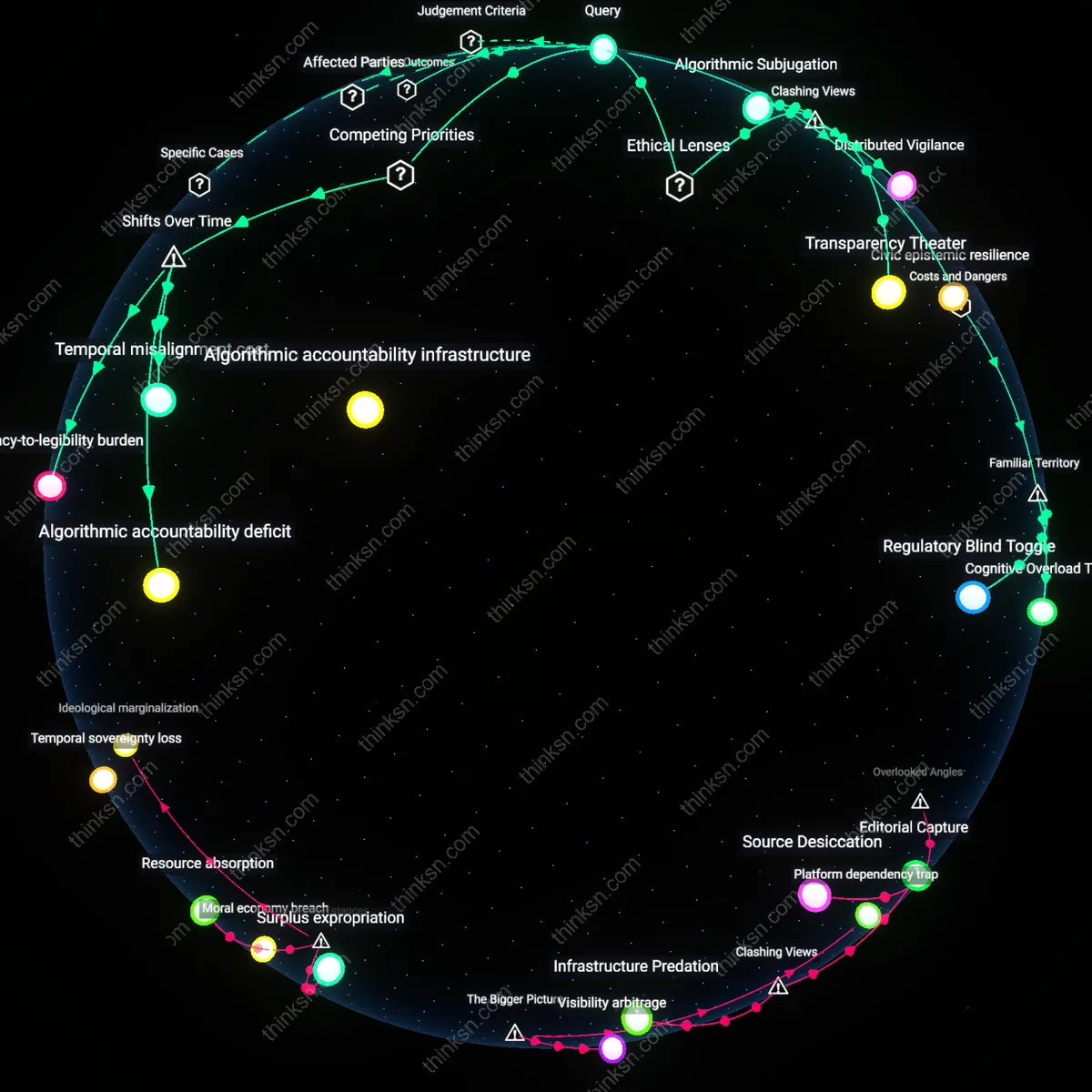

Analysis reveals 7 key thematic connections.

Key Findings

Algorithmic Entrapment

No, the sense of community in niche Facebook groups exacerbates misinformation by locking vulnerable users into algorithmically reinforced feedback loops that prioritize engagement over truth. Facebook’s recommendation engine amplifies emotionally resonant content within these groups, gradually steering even well-intentioned users toward increasingly extreme or false narratives as a condition of belonging. This mechanism operates through the platform’s engagement-based ranking system, which rewards outrage, affirmation, and identity-based signaling more than factual accuracy, thereby converting communal bonds into vectors of radicalization. The non-obvious danger is that tightly knit communities do not resist misinformation by default; they often accelerate it, because social cohesion becomes dependent on shared belief rather than critical scrutiny.

Emotional Externality

No, because the psychological benefits of belonging in niche Facebook groups are privatized as emotional relief while the societal costs of misinformation—such as public health failures or election interference—are externalized across the information ecosystem. Users gain immediate affective rewards from validation within closed groups, but the platform’s scale ensures that distortions originating in seemingly harmless niches propagate into mainstream discourse via cross-network leakage. This operates through the asymmetry between micro-level social reinforcement and macro-level disinformation cascades, where Facebook profits from both user retention and ad-driven amplification. The underappreciated friction is that community here functions not as a social good but as an emotional subsidy that masks the user’s complicity in a larger epistemic crisis.

Attention Economy Incentives

No, the sense of community in niche Facebook groups does not justify tolerating the platform’s role in spreading misinformation, because Facebook’s algorithmic curation is structurally dependent on engagement maximization, which systematically amplifies emotionally charged or divisive content over accurate content. This mechanism, driven by advertisers’ demand for user attention, ensures that even benign communities exist within an ecosystem engineered to reward outrage and ideological reinforcement. The non-obvious connection is that localized belonging does not operate in isolation but is parasitic on a platform-wide incentive structure that monetizes cognitive bias, meaning community value cannot be decoupled from misinformation risk without undermining Facebook’s core business model.

Epistemic Infrastructure Capture

No, the community benefits of niche Facebook groups are insufficient justification for tolerating the platform, because Facebook has effectively replaced public-facing civic information intermediaries, such as local newspapers or civic bulletin boards, with proprietary, unaccountable infrastructures that privatize community formation while embedding opaque content moderation and visibility rules. This shift allows the platform to act as a de facto gatekeeper of social trust, where the conditions for belonging are covertly shaped by corporate policy changes, data practices, and geopolitical lobbying, making localized cohesion vulnerable to sudden, externally imposed disruptions. The underappreciated dynamic is that community on Facebook is not self-sustained but structurally dependent on a fragile, profit-driven epistemic backbone that can reconfigure or dissolve social ties without public oversight.

Cross-Platform Contagion Loops

No, the value of community in niche Facebook groups cannot outweigh the platform’s role in spreading misinformation, because localized interactions frequently serve as on-ramps into larger, algorithmically fueled networks of disinformation that spill beyond Facebook into real-world institutions such as healthcare, elections, and education. For example, a closed group for natural parenting may begin with non-political discussions but gradually normalize anti-vaccine sentiment that migrates to offline organizing or influence campaigns. The systemic danger lies in Facebook’s function as a 'low-threshold incubator', where intimacy and trust are exploited to build epistemic resistance to counterevidence, enabling misinformation to achieve social legitimacy before it proliferates across platforms and jurisdictions.

Echo Chamber Dividend

The sense of community in anti-vaccination Facebook groups justifies tolerating misinformation because it fulfills deep emotional needs for belonging among parents who feel dismissed by mainstream medicine. Members of groups like ‘Vaccinated Kids Are Sick Kids’ rely on shared narratives that reinforce identity and mutual support, creating a self-sustaining system where misinformation spreads through trusted peer validation rather than algorithmic amplification alone. This dynamic reveals that the platform’s harm is not incidental but instrumental—users accept misinformation as the cost of admission to a rare space of affirmation, making the community itself a vector for ideological insulation. The non-obvious insight is that these groups function not because people distrust science, but because they trust their community more than institutions, reframing misinformation tolerance as a rational trade-off in fragmented social ecosystems.

Crisis Coproduction

Cancer survivor groups on Facebook, such as ‘Metastatic Breast Cancer Network,’ sustain life-saving emotional resilience and practical advice exchange, leading members to accept misinformation about alternative treatments as a tolerated byproduct of open dialogue. These communities operate on peer-led moderation and urgency-driven sharing, where speed and empathy outweigh verification, especially during late-night emotional crises. The system thrives because Facebook enables immediate, unfiltered connection during moments of extreme vulnerability, something no regulated platform has replicated. The overlooked truth is that misinformation is not merely tolerated here; it is coproduced by caregiving instincts, where sharing any potential hope, however unproven, feels ethically mandatory, thus embedding epistemic risk into the moral fabric of support.