Curbing Misinfo: Where Does Free Press Meet Regulation?

Analysis reveals 9 key thematic connections.

Key Findings

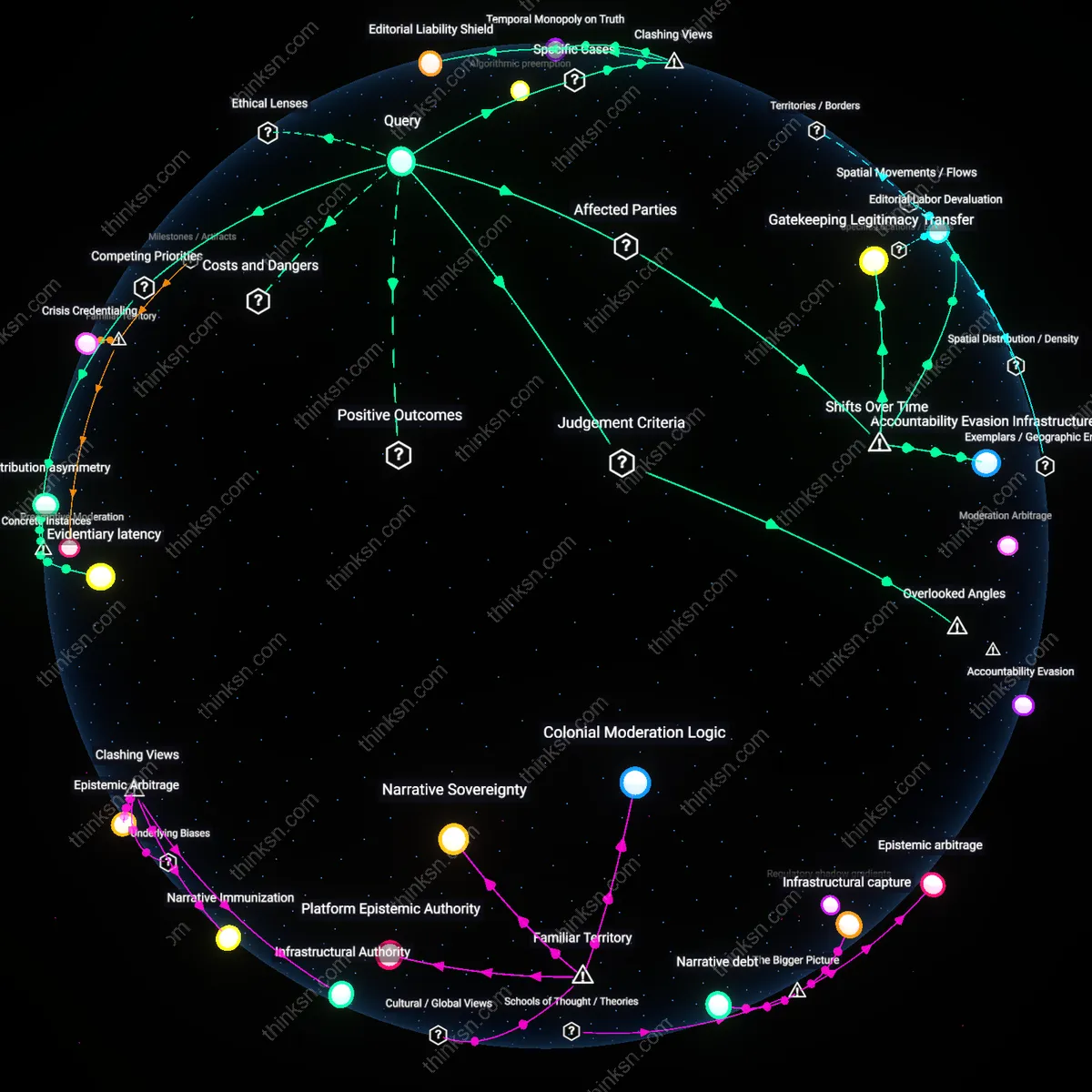

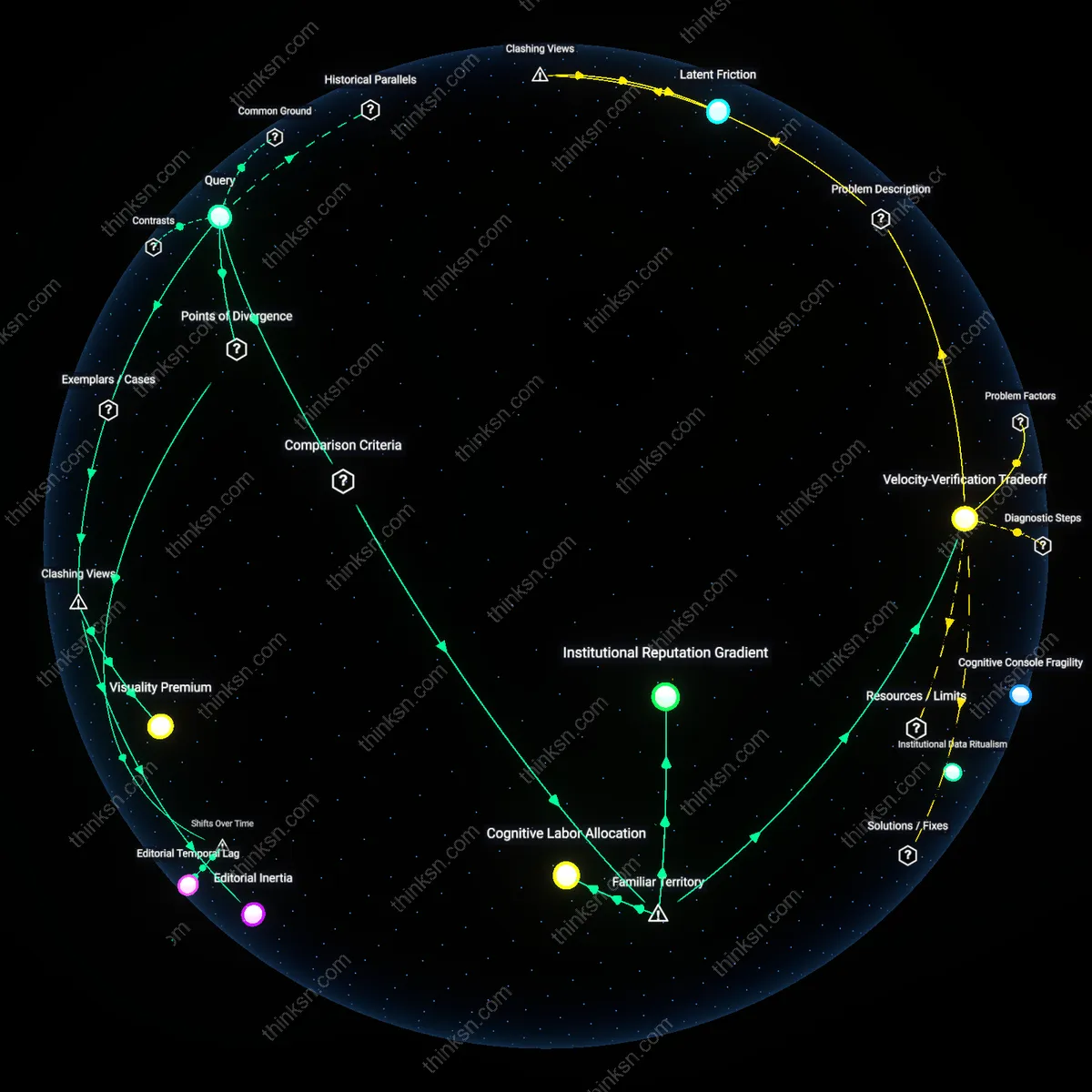

Editorial Labor Devaluation

Platforms' algorithmic enforcement against misinformation after 2016 inadvertently degraded investigative journalism by equating time-intensive, source-dependent reporting with real-time false claims, collapsing epistemic distinctions in content moderation systems. Moderation infrastructures built to respond rapidly to viral falsehoods—such as third-party fact-checking partnerships and automated takedowns—prioritize speed and scale, marginalizing journalistic practices that rely on delayed verification, anonymous sourcing, and institutional accountability, thus redefining rigorous reporting as procedural risk. This shift, crystallized during the post-2016 electoral disinformation crackdowns, exposed how platform governance began treating editorial judgment not as a safeguard against misinformation but as a liability akin to malinformation, particularly affecting local journalists and whistleblowing intermediaries. The non-obvious consequence is that the very actors historically tasked with uncovering institutional deception became structurally vulnerable to the same moderation mechanisms designed to protect public discourse.

Gatekeeping Legitimacy Transfer

The transition from legacy media dominance to platform-mediated news distribution after 2010 transferred gatekeeping authority from newsrooms to tech firms, allowing platform policies framed as neutrality to selectively delegitimize investigative work that challenges powerful actors. As platforms assumed editorial-like roles through recommendation algorithms and community guidelines—especially after the 2020 U.S. election cycle—they began to arbitrate truth claims not by journalistic standards but by reputational risk, resulting in the suppression of investigative reports under broad misinformation labels when they involved classified leaks or corporate wrongdoing. This reconfiguration placed investigative journalists aligned with marginalized communities or adversarial to state power—such as those covering police misconduct or surveillance programs—at disproportionate risk of content removal, revealing how platform policy, under the guise of consistency, replicates state or corporate norms of legitimacy. The underappreciated outcome is that the historical function of journalism as a counter-power has been destabilized not by overt censorship but by the temporal and procedural mismatch between investigative timelines and platform enforcement cycles.

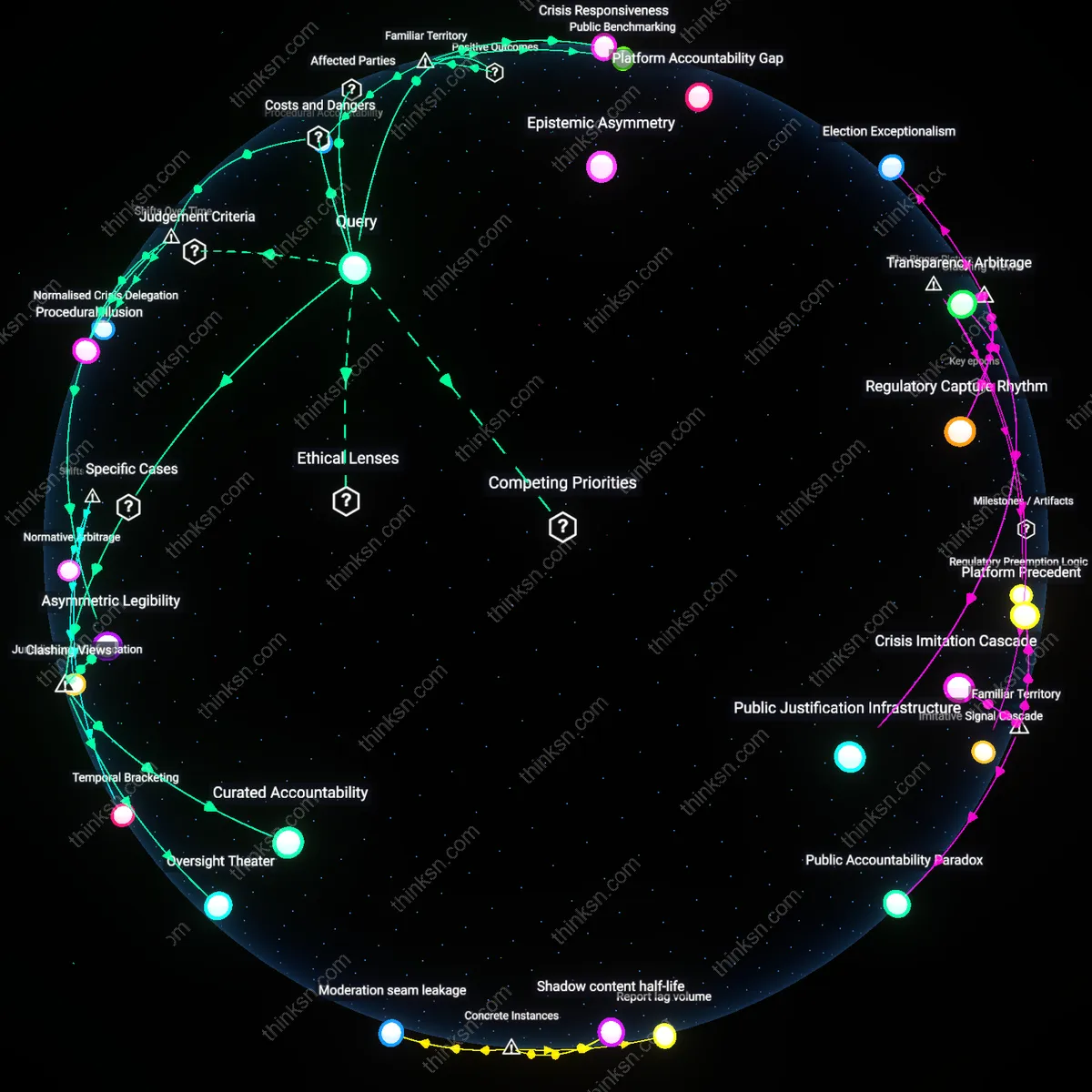

Accountability Evasion Infrastructure

Following the 2018 Cambridge Analytica scandal, platforms formalized misinformation policies that obscured their own role in amplifying deceptive content, framing enforcement as neutral hygiene rather than political judgment, thereby insulating themselves from accountability when those policies hinder investigative journalism. By adopting standardized definitions of misinformation rooted in consensus verification—often tied to government or scientific authorities—platforms avoided responsibility for decisions that disproportionately removed content exposing institutional malfeasance, especially from freelance or nonprofit investigative units operating without institutional backing. This shift toward procedural compliance over epistemic transparency created a system where the removal of investigative material could be automated or outsourced without public justification, effectively transforming content moderation into a mechanism for deflecting scrutiny. The overlooked consequence is that platforms, under pressure to act decisively against misinformation, built infrastructures designed not to resolve truth claims but to minimize liability—rendering investigative journalism a collateral casualty of institutional self-preservation.

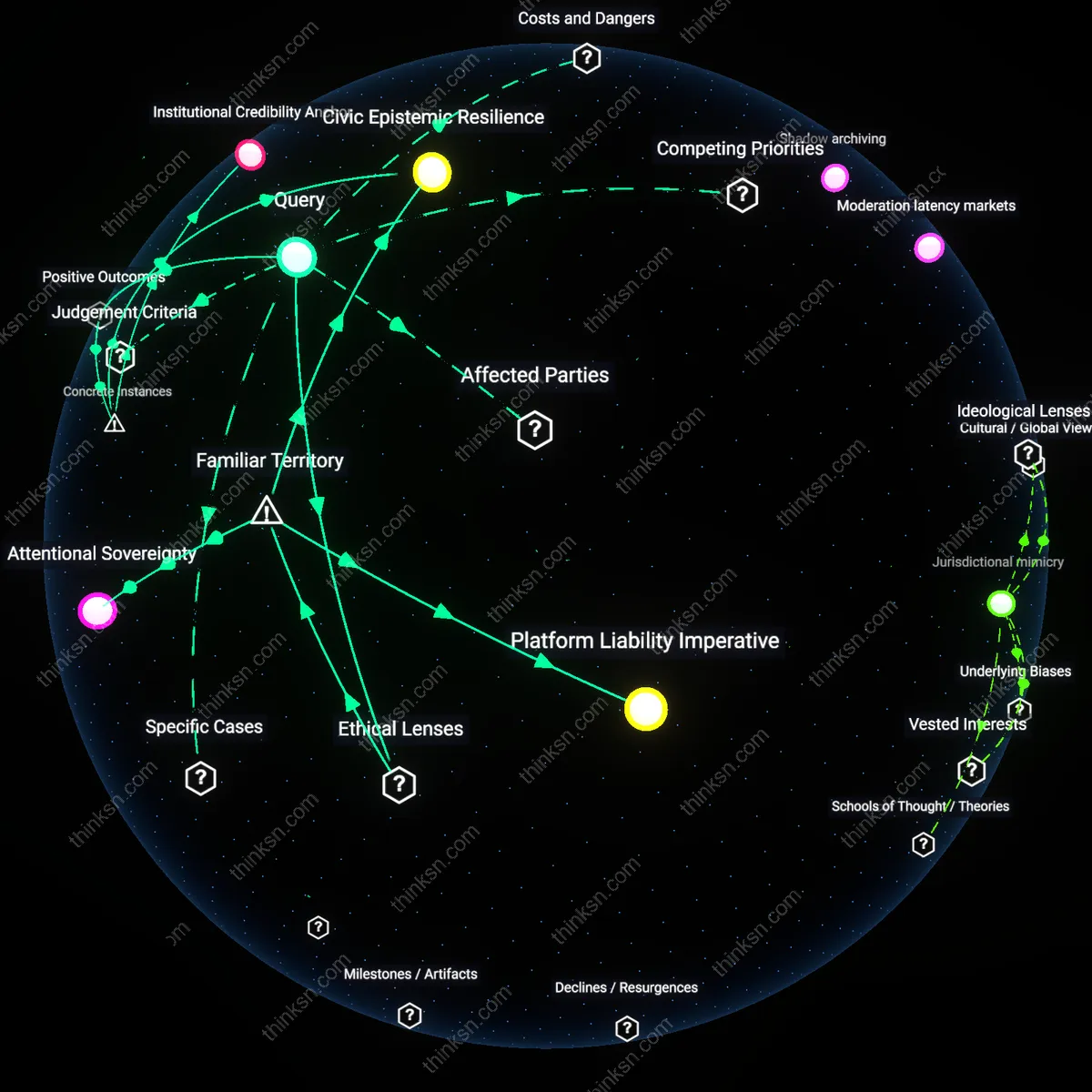

Temporal Preemption

Efforts to suppress misinformation cross into suppression of investigative journalism when preemptive content takedowns based on probabilistic risk models displace stories before publication. The risk is greatest where journalists rely on platform infrastructure for source coordination and encrypted data transfer, as seen in the throttling of Signal-linked file shares or the shadow-banning of draft tweets under AI surveillance systems like Twitter’s Trust & Safety algorithms. The overlooked dynamic is temporal preemption: the platform’s ability to act on anticipated harm before an investigative piece materializes. This transforms risk assessment from response to anticipation and shifts the burden of proof onto the journalist, who must demonstrate legitimacy in advance, a reversal unseen in traditional media law and rarely acknowledged in transparency reports. The result is a hidden chokepoint at the pre-publication stage, where the speed of algorithmic governance outpaces editorial timelines and deters high-risk reporting by design.

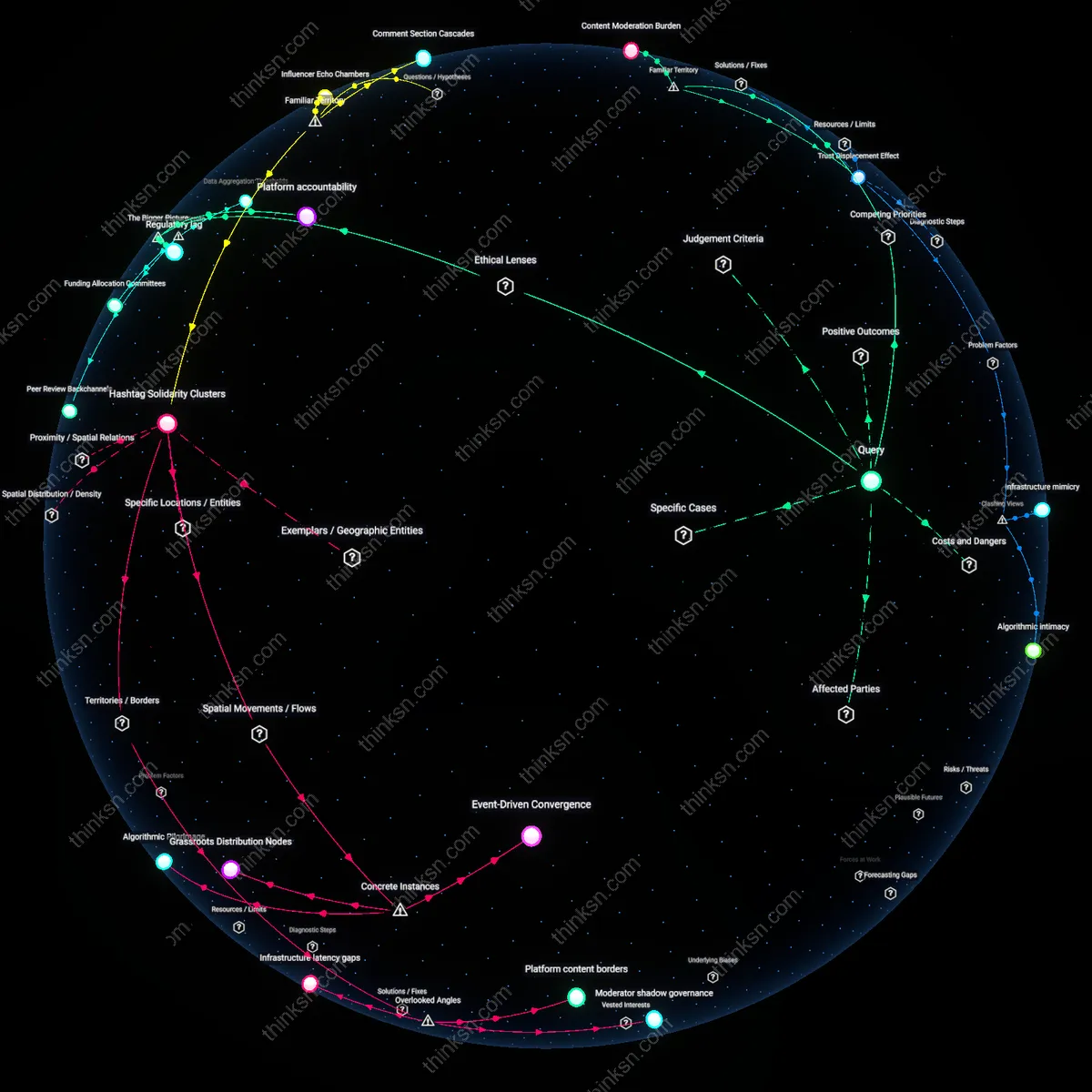

Moderator Capture

Platform content moderation during the 2020 U.S. election disproportionately removed reports from freelance journalists and independent investigators sharing unverified ballot chain-of-custody footage, while retaining officially sourced misinformation about voter fraud. Centralized trust mechanisms prioritized institutional sourcing over procedural accountability, revealing how the pursuit of informational stability systematically disables grassroots verification actors.

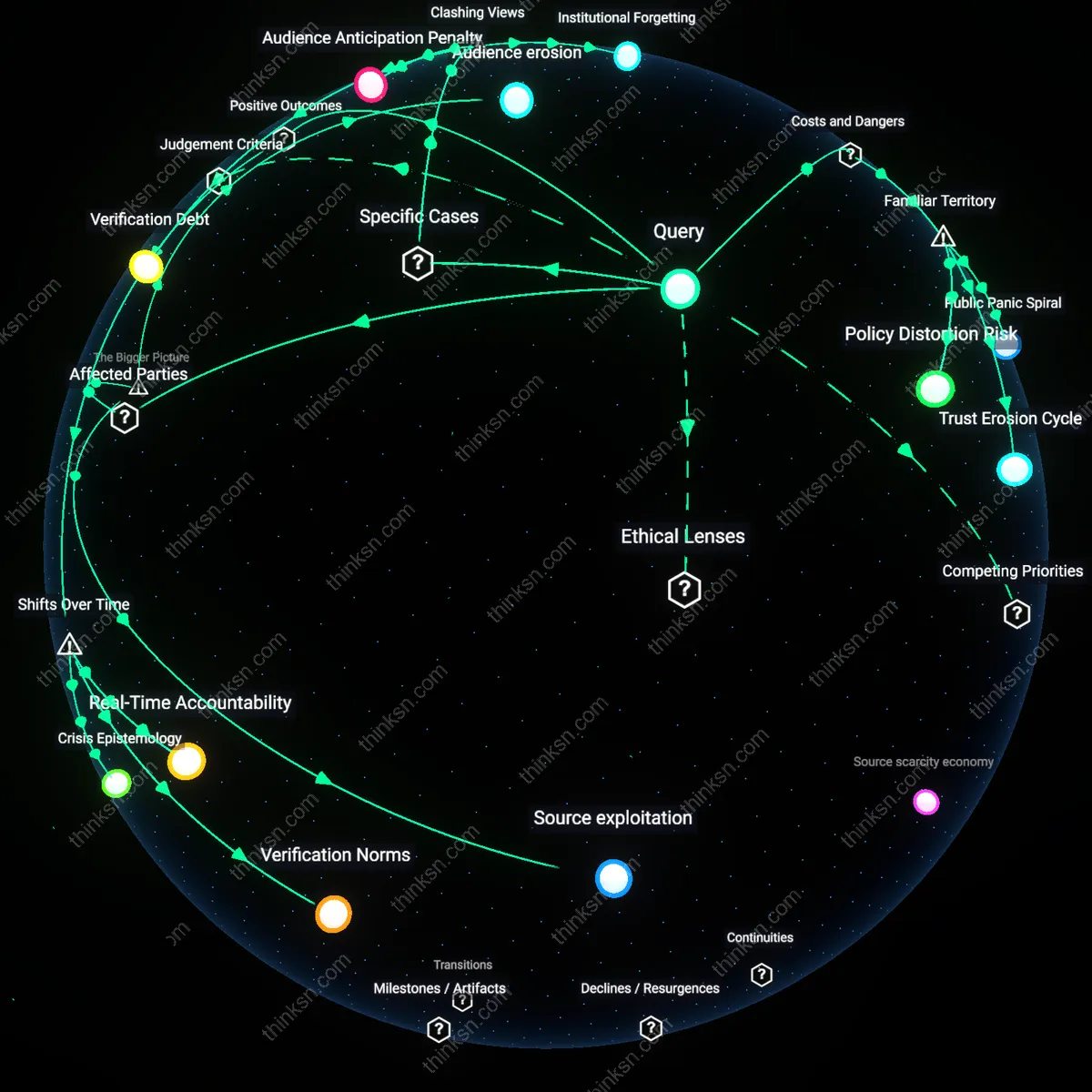

Evidentiary Latency

In 2021, Facebook’s restrictions on posts discussing the origins of SARS-CoV-2 silenced early hypotheses about lab leaks, including hypotheses that some U.S. intelligence agencies later assessed as plausible, because its anti-misinformation protocols classified non-consensus scientific inquiry as harmful falsehood. The episode demonstrates how real-time moderation systems cannot accommodate the slow, iterative timeline of investigative discovery.

Attribution Asymmetry

When Twitter in October 2020 invoked its hacked-materials policy to block links to the New York Post’s Hunter Biden laptop story, it applied provenance standards to journalistic artifacts that were not required of state actors disseminating disinformation. The episode exposed how platform policies designed to reduce harm can privilege the provenance of material over the veracity of the content, thus weaponizing authenticity standards against adversarial journalism.

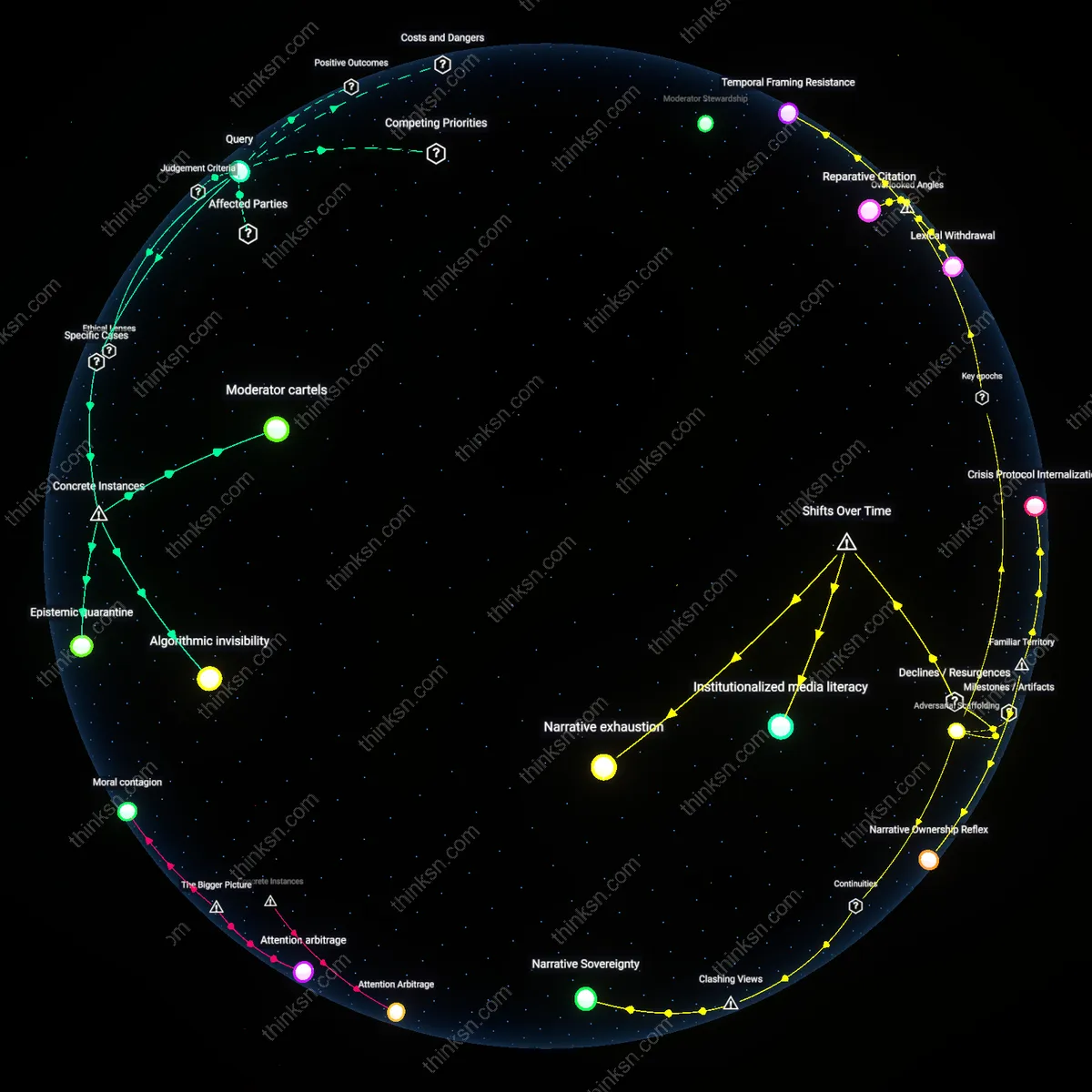

Editorial Liability Shield

Platforms that retroactively penalize news outlets for factual reporting under misinformation policies distort liability norms, as seen when Meta downgraded and defunded outlets like The Local in Germany after they published leaked documents on migration statistics that contradicted official narratives. The mechanism is not content moderation per se but the imposition of reputational and economic sanctions on organizations whose sourcing meets journalistic standards, thus creating a chilling effect on legally protected speech. The non-obvious consequence is that platforms become de facto arbiters of state truth, undermining the press’s legally recognized role as a counterweight to institutional power.

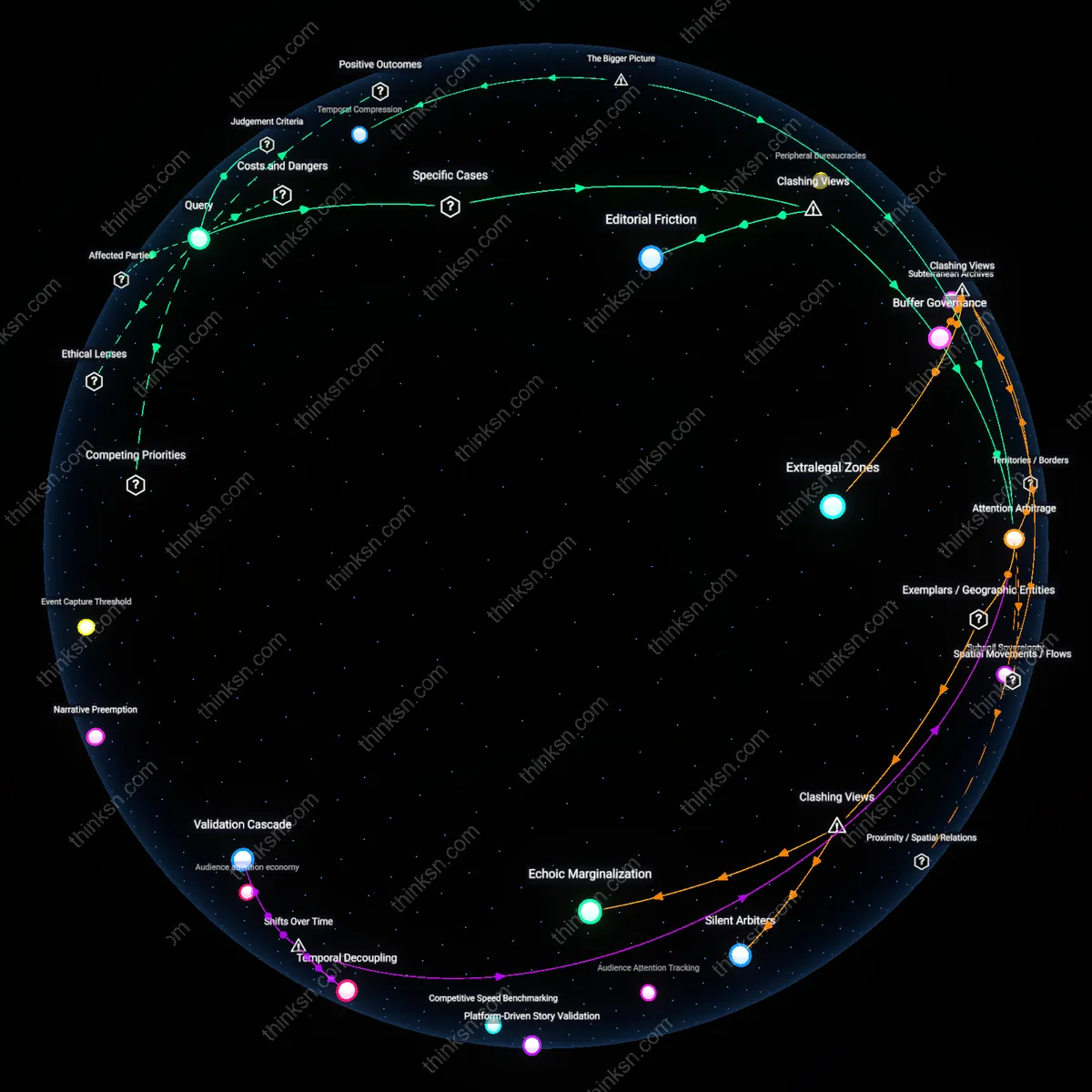

Temporal Monopoly on Truth

Investigative journalism becomes misclassified as misinformation when platforms enforce real-time alignment with emergent consensus, as occurred during the 2021 U.S. Capitol breach when Twitter suspended journalists sharing unverified footage of protester demographics before official reports existed. The dynamic hinges on platforms privileging temporal proximity to institutional validation over evidentiary rigor, thereby penalizing the first draft of history. This reframes censorship not as overt suppression but as the monopolization of truth-timing, in which delay is weaponized against the press’s core function of preceding official narratives.