Self-Audits by AI Labs: Fix or Facade for Bias?

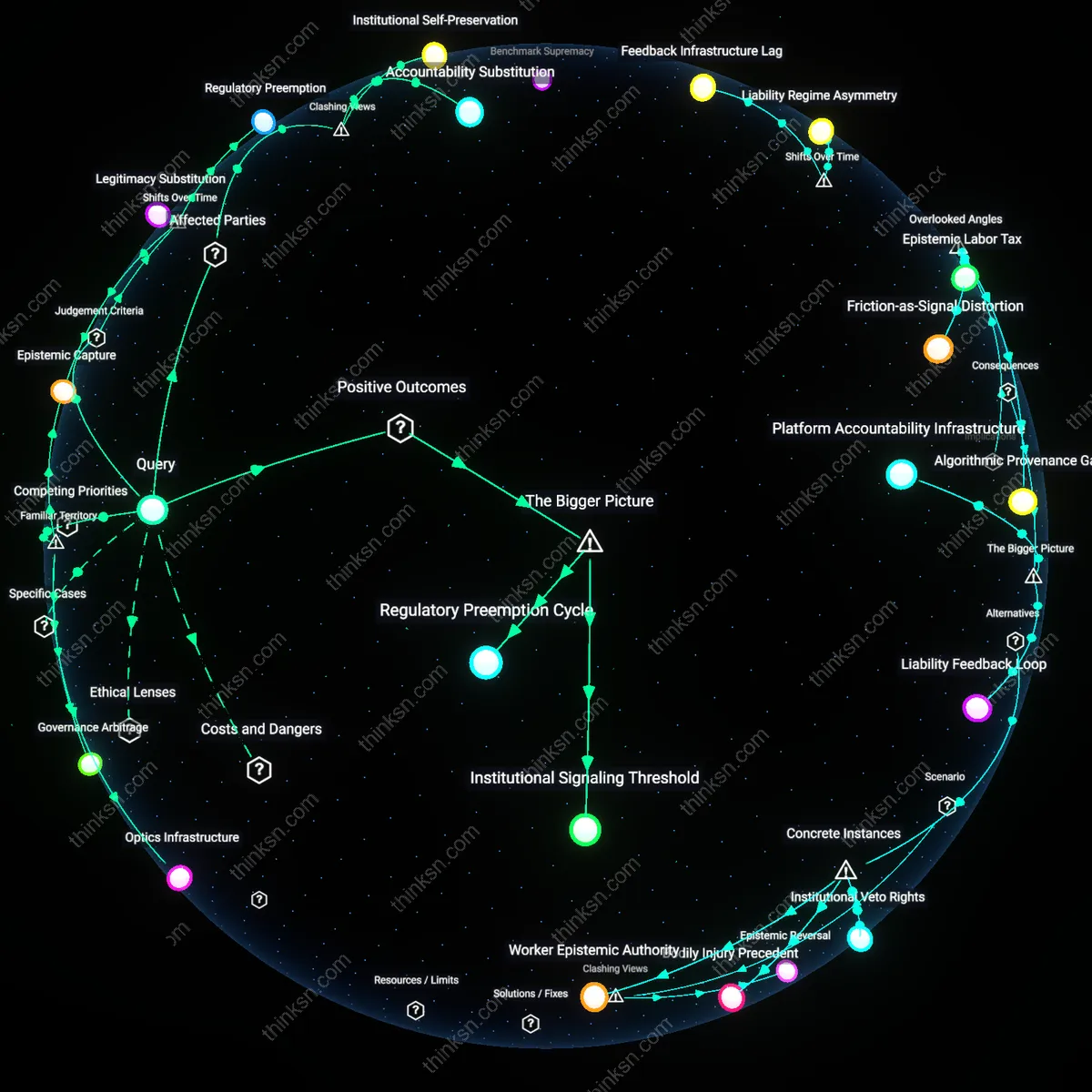

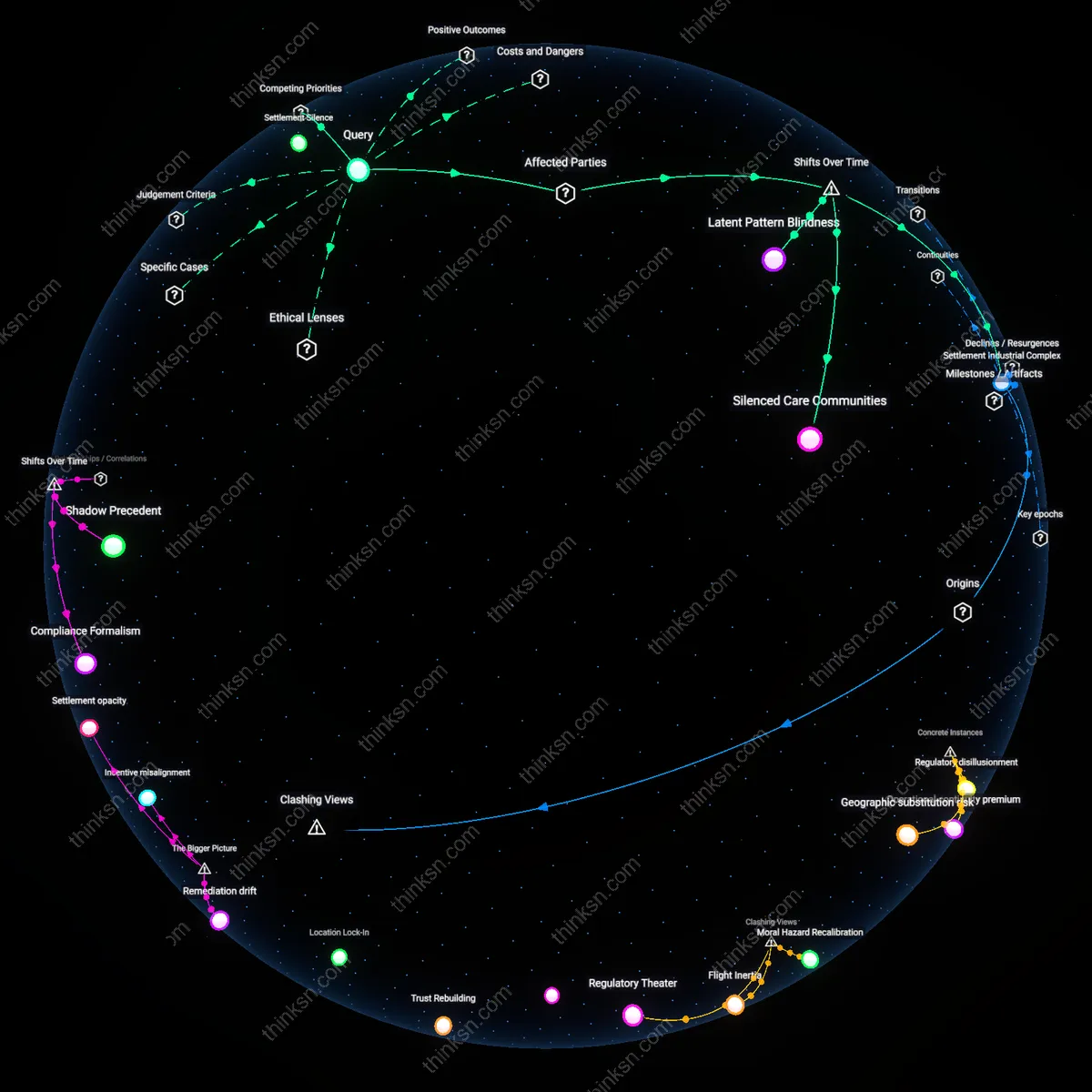

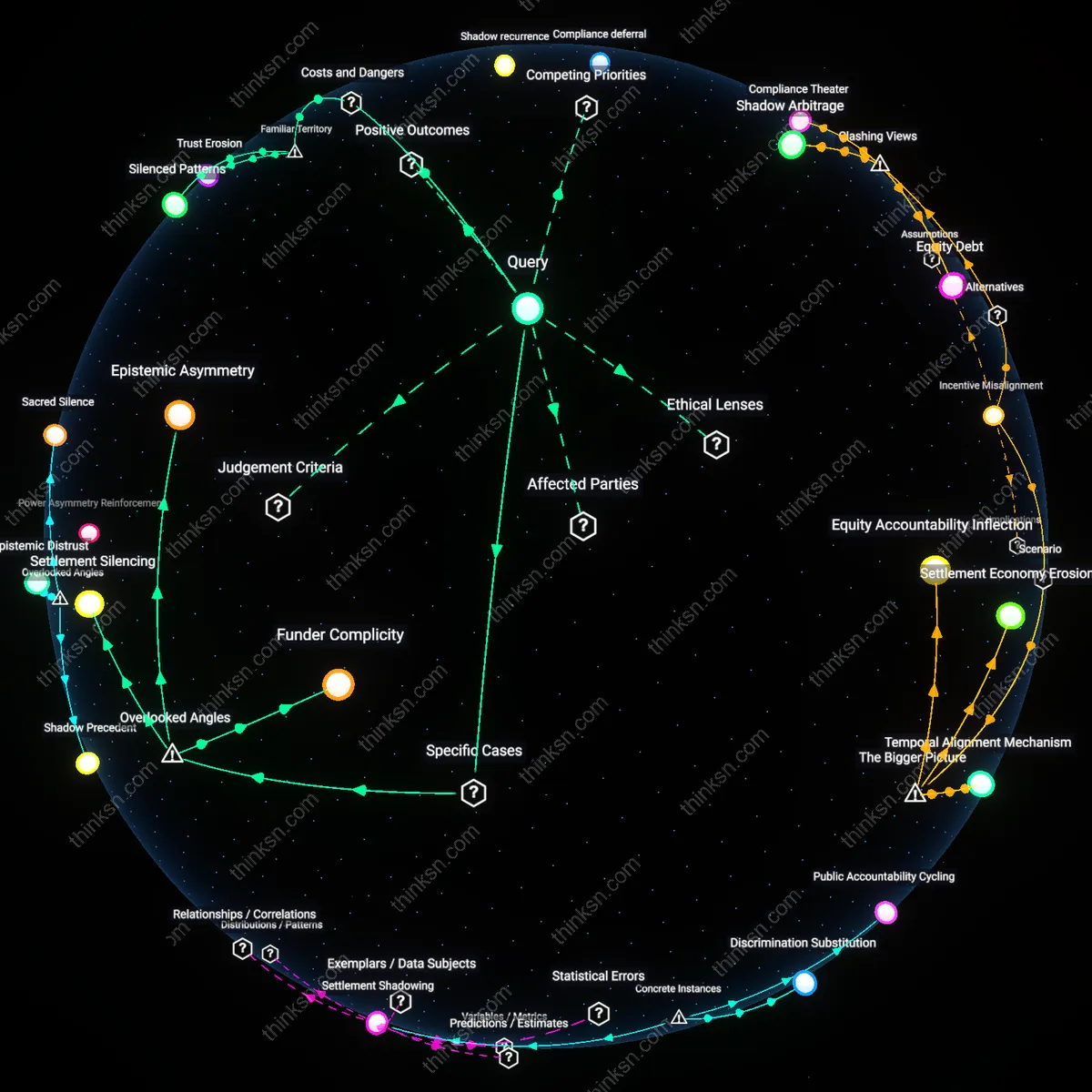

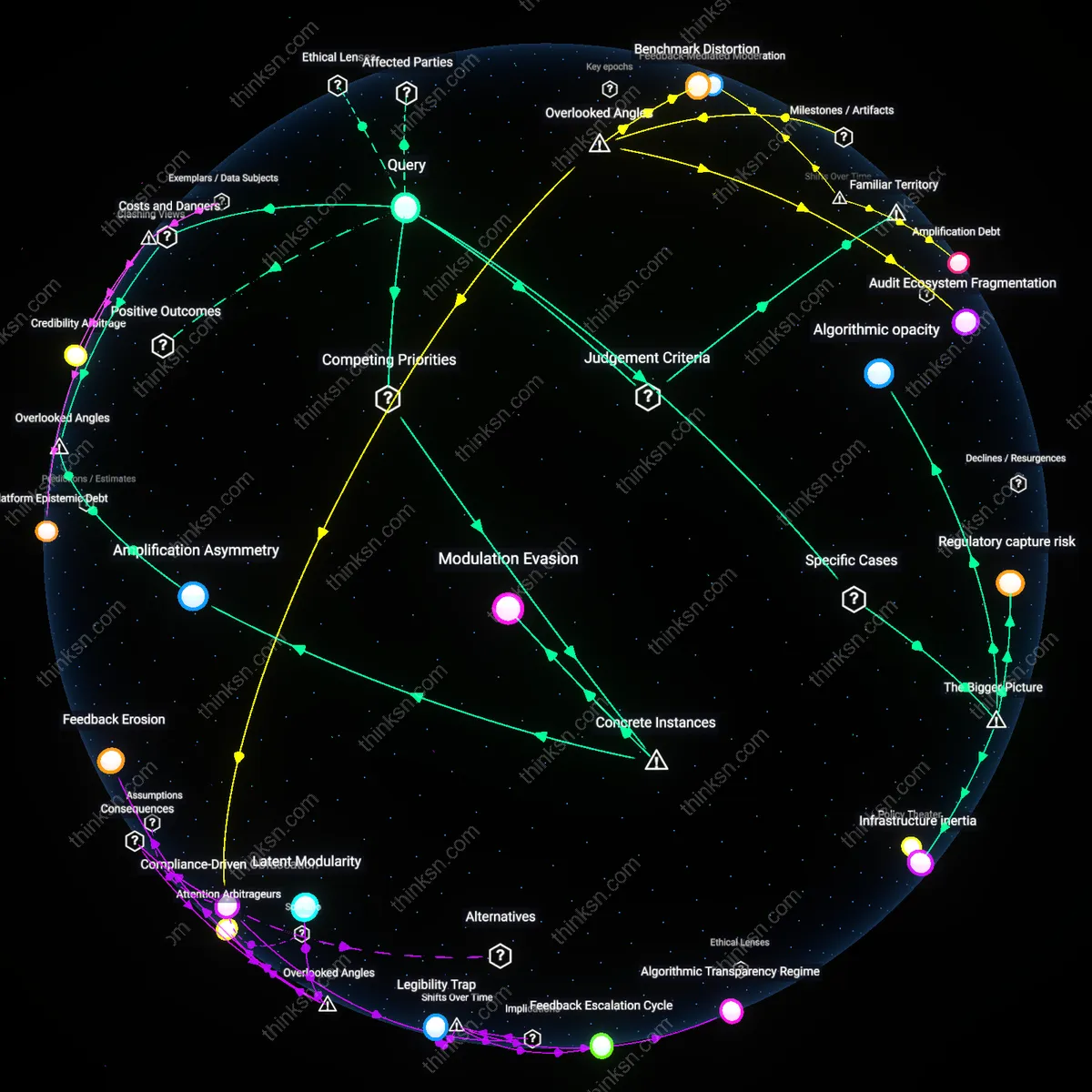

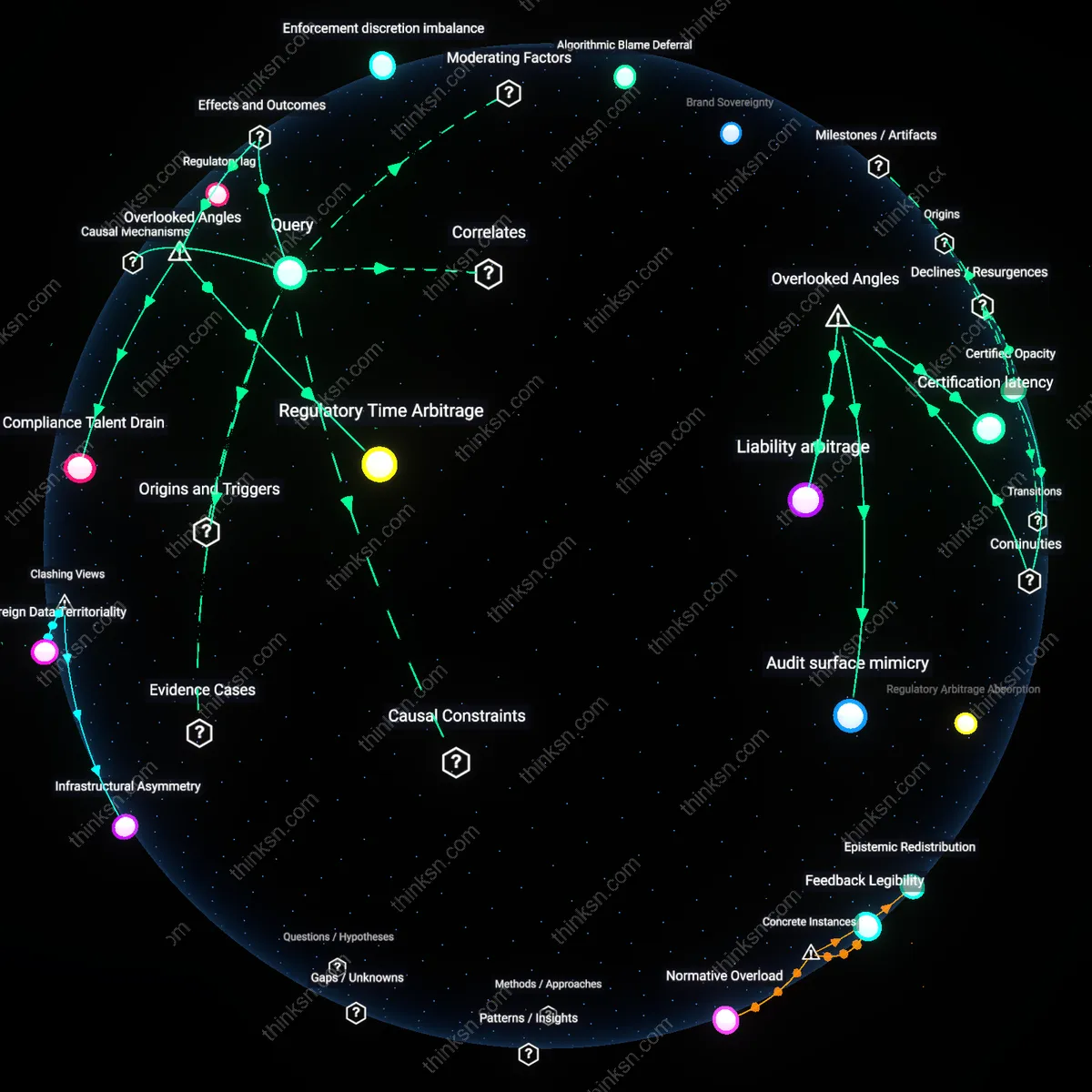

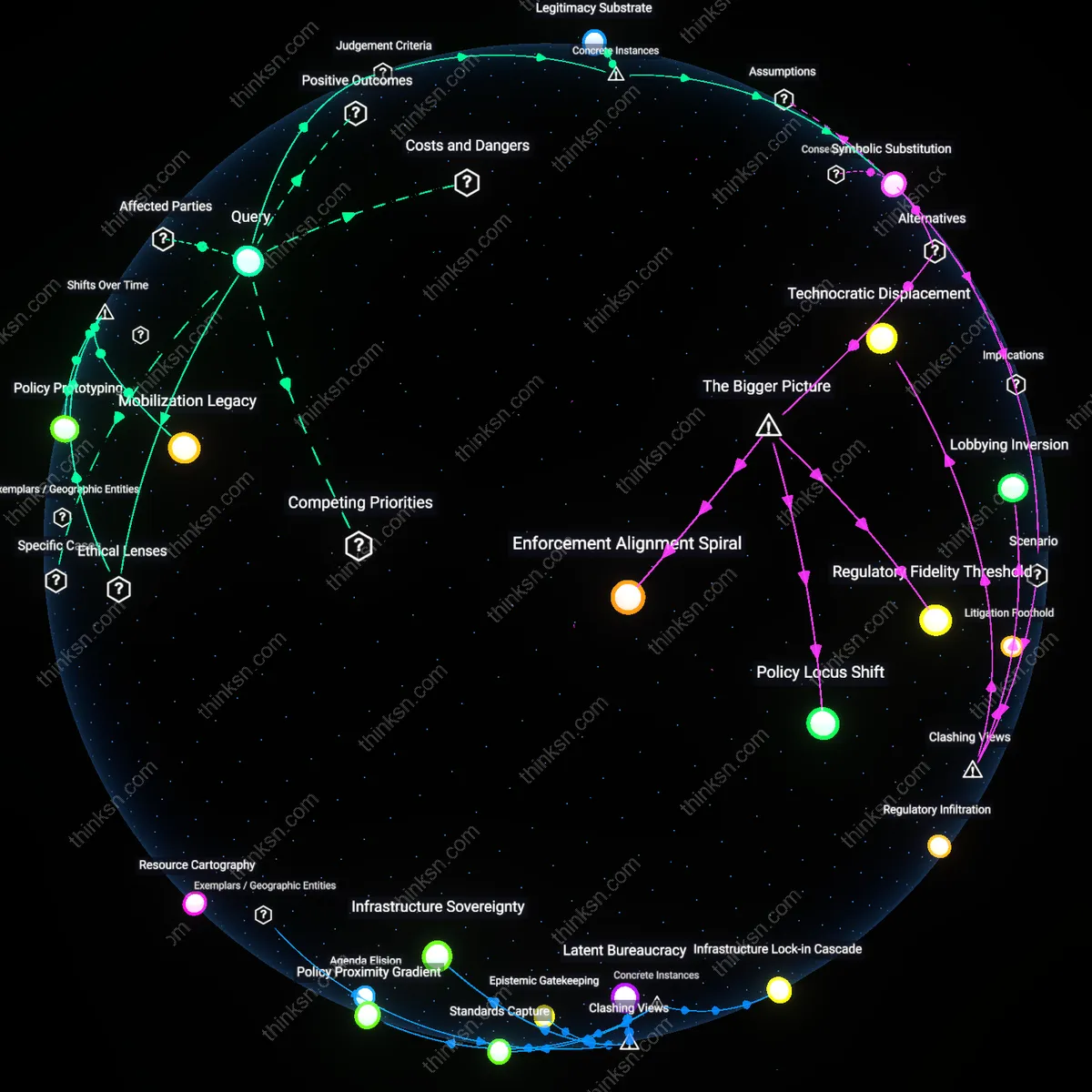

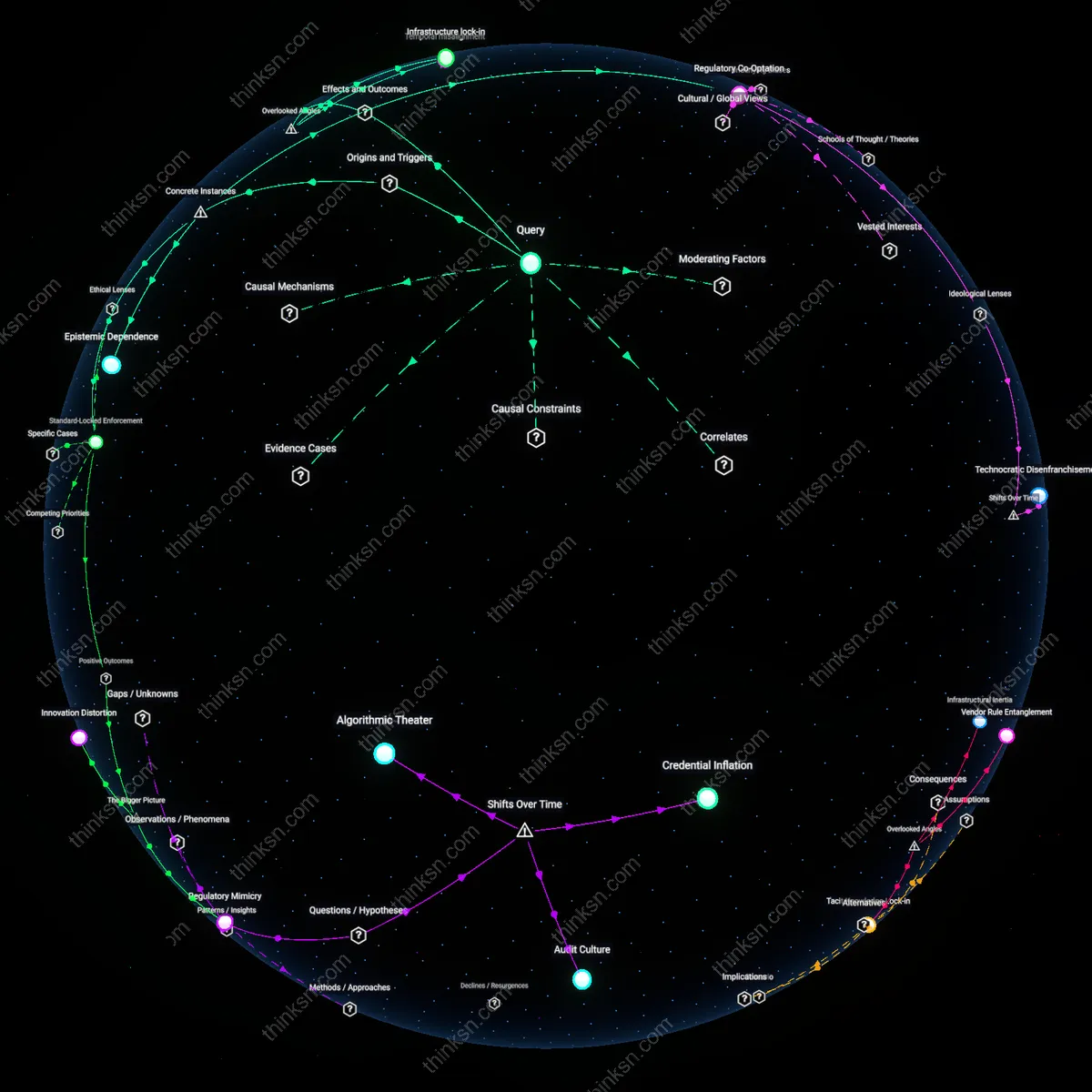

Analysis reveals 10 key thematic connections.

Key Findings

Institutional Self-Preservation

A major AI lab's pledge to self-audit for bias primarily protects its operational autonomy rather than dismantling discriminatory systems, because the auditing framework is designed and interpreted by the same corporate structures that benefit from unimpeded development cycles. The mechanism is controlled transparency: the lab releases summaries without giving access to its methodology, which lets it signal ethical compliance while insulating high-impact decisions from public scrutiny or regulatory intervention. The non-obvious point is that auditing becomes a strategic performance of accountability that displaces more rigorous external oversight. Self-auditing then works not as a check on power but as a tool to consolidate it.

Epistemic Marginalization

Self-audits by AI labs exacerbate systemic discrimination by excluding frontline communities from defining what constitutes harm, because assessment criteria are derived from internal engineering benchmarks rather than lived experiences of targeted populations. This dynamic functions through epistemic gatekeeping—where only quantifiable, technical definitions of bias are validated—rendering invisible structural inequities that don’t fit algorithmic metrics. The counterintuitive result is that these pledges, presented as inclusive safeguards, actually deepen marginalization by legitimizing a narrow, technocratic interpretation of justice that overrides community-based knowledge.
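To make "quantifiable, technical definitions of bias" concrete, here is a minimal sketch of one widely used fairness metric, the demographic parity gap. The function name, the toy decisions, and the group labels are all hypothetical and chosen only for illustration; nothing here is drawn from any lab's actual audit.

```python
from collections import defaultdict


def demographic_parity_gap(decisions, groups):
    """Return (gap, rates): the spread in positive-decision rates across groups.

    decisions: iterable of 0/1 outcomes (1 = favorable decision).
    groups: iterable of group labels, aligned with decisions.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += int(decision)
    # Favorable-decision rate per group.
    rates = {g: positives[g] / totals[g] for g in totals}
    # The metric is the largest difference between any two group rates.
    gap = max(rates.values()) - min(rates.values())
    return gap, rates


# Hypothetical toy data: eight decisions split across two groups.
decisions = [1, 0, 1, 1, 0, 1, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

gap, rates = demographic_parity_gap(decisions, groups)
print(rates)  # per-group favorable-decision rates
print(gap)    # single number an internal audit might report
```

A single number like this is easy to compute and report, which is part of its appeal for internal benchmarks. It does not capture who defined the groups, which decisions were measured, or what harms fall outside the dataset, and those are the gaps this section describes.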

Accountability Substitution

Voluntary self-audits function as substitutes for enforceable regulation, allowing AI labs to position themselves as ethical leaders while lobbying against binding legislative standards. This occurs when public pledges are timed to coincide with policy debates, such as those over the EU AI Act or the proposed U.S. Algorithmic Accountability Act, in order to demonstrate 'proactive responsibility' and deter external mandates. What is obscured is that these audits lack third-party verification, penalties, or remediation pathways, which makes them ritualistic rather than corrective. Self-auditing is thereby revealed as a political maneuver that simulates reform without altering the distribution of power.

Legitimacy Substitution

A major AI lab's pledge to self-audit for bias functions as a mechanism of legitimacy substitution, displacing demands for external oversight by mimicking accountability processes without ceding control. This shift emerged prominently after 2018, when public scandals over biased facial recognition, especially law-enforcement use of Amazon's Rekognition, spurred corporate commitments to ethical review. Yet these internal audits consistently lack enforceable standards, peer validation, or public scrutiny. They operate instead through PR-aligned task forces that redefine ethical compliance as voluntary performance. What is underappreciated is how this mimicry paradoxically strengthens institutional autonomy by allowing labs to absorb criticism while neutralizing structural reform, transforming accountability from a binding obligation into a procedural aesthetic.

Regulatory Preemption

Such self-audits serve as instruments of regulatory preemption, strategically forestalling binding legislation by positioning industry self-regulation as a sufficient alternative. Following the 2022 White House Blueprint for an AI Bill of Rights, several labs, including Google and Microsoft, launched transparency reports and bias impact assessments that closely mirrored proposed regulatory frameworks, thereby constructing a narrative of responsibility that lawmakers could point to when deferring legal action. The non-obvious consequence is that these pledges do not merely respond to oversight concerns. They actively shape the political imagination of what governance looks like, freezing regulation in a voluntary phase by presenting self-policing as both pragmatic and progressive.

Epistemic Capture

Self-auditing by major AI labs enacts epistemic capture, wherein the defining criteria for bias and fairness are monopolized by technical insiders who exclude sociopolitical expertise from historically marginalized communities. This trajectory crystallized after 2016, as researchers such as Joy Buolamwini, whose Gender Shades study with Timnit Gebru appeared in 2018, exposed racial and gender bias in commercial AI systems. Labs responded by institutionalizing fairness research, but within engineering-led teams using narrowly quantifiable metrics that erase context-sensitive harms. The underappreciated shift is how technical governance absorbs dissent by reformatting systemic discrimination into computationally tractable problems, rendering structural inequality legible only through algorithms' own logics and silencing alternative forms of knowledge essential to justice.

Institutional Signaling Threshold

A major AI lab's pledge to self-audit for bias can catalyze sector-wide transparency norms by establishing a minimum public benchmark that forces peer organizations to respond, either by matching the commitment or by risking reputational decoupling from industry leaders. This occurs because early adopters in concentrated tech ecosystems alter the cost-benefit calculus of inaction, leveraging their asymmetric influence over public and regulatory expectations. The mechanism operates through status competition among peer labs and investor pressure for ESG alignment, which together transform voluntary acts into de facto competitive requirements. What is underappreciated is that such pledges function less as compliance tools than as signals that shift the institutional threshold for what counts as legitimate behavior in the field.

Regulatory Preemption Cycle

Self-auditing pledges by dominant AI labs preempt more stringent external oversight by supplying just enough procedural transparency to satisfy incrementalist policymakers, thereby preserving the firm's operational autonomy. This happens because regulatory agencies with limited technical capacity and political will interpret corporate self-reporting as evidence of good faith, which delays or dilutes legislative interventions. The dynamic is reinforced by revolving-door labor flows between these labs and government advisory bodies, which align the framing of risks with corporate risk-management paradigms. The non-obvious consequence is that voluntary audits become institutional circuit breakers, converting systemic accountability demands into managed public relations cycles.

Optics Infrastructure

A major AI lab's pledge to self-audit for bias primarily functions as a public relations alignment tool rather than a structural intervention, because it activates preexisting media and policy expectations around corporate responsibility without altering internal incentives. The mechanism operates through familiar narratives of corporate benevolence, such as Google's 'Don't be evil' motto or Facebook's stated mission of connecting people, which resonate in public discourse and neutralize regulatory urgency. This dynamic substitutes the appearance of accountability for external oversight, leveraging societal trust in technocratic benevolence despite documented gaps in enforcement. The underappreciated reality is that the pledge's power lies not in its technical rigor but in its ability to saturate the symbolic space where scrutiny would otherwise grow.

Governance Arbitrage

When major AI labs commit to self-auditing, they strategically shift oversight from democratic arenas to closed technical forums, where the definition of 'bias' can be narrowly operationalized and depoliticized. This occurs through the delegation of ethical decisions to in-house review boards whose metrics prioritize model performance over distributive justice, effectively converting systemic discrimination into isolated statistical anomalies. The process mirrors how financial firms used self-regulation to bypass SEC scrutiny in the 2000s, exploiting regulatory lag and expertise asymmetries. What’s rarely acknowledged is that self-audits aren’t failures of governance—they are refinements of it, designed to preserve autonomy under the guise of compliance.