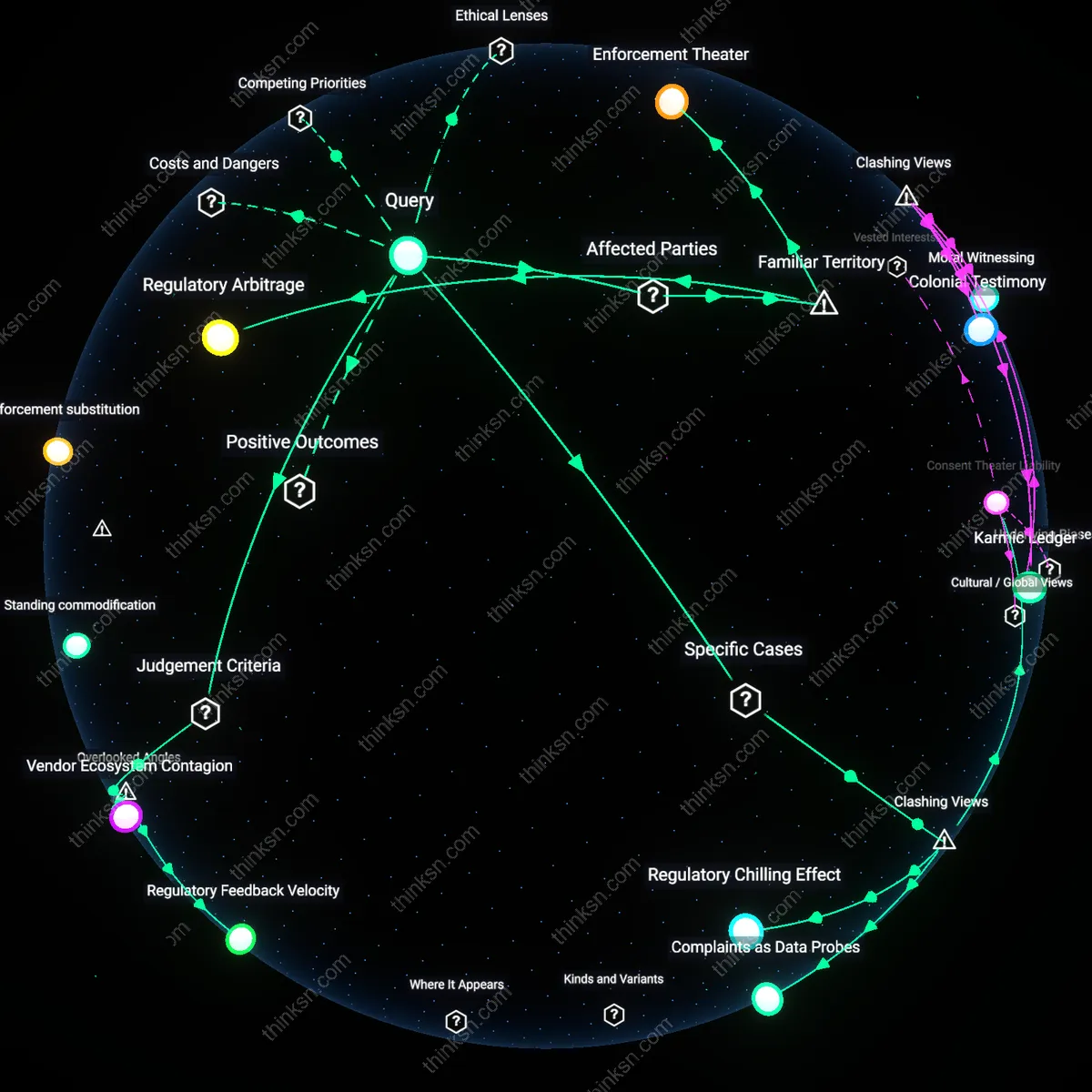

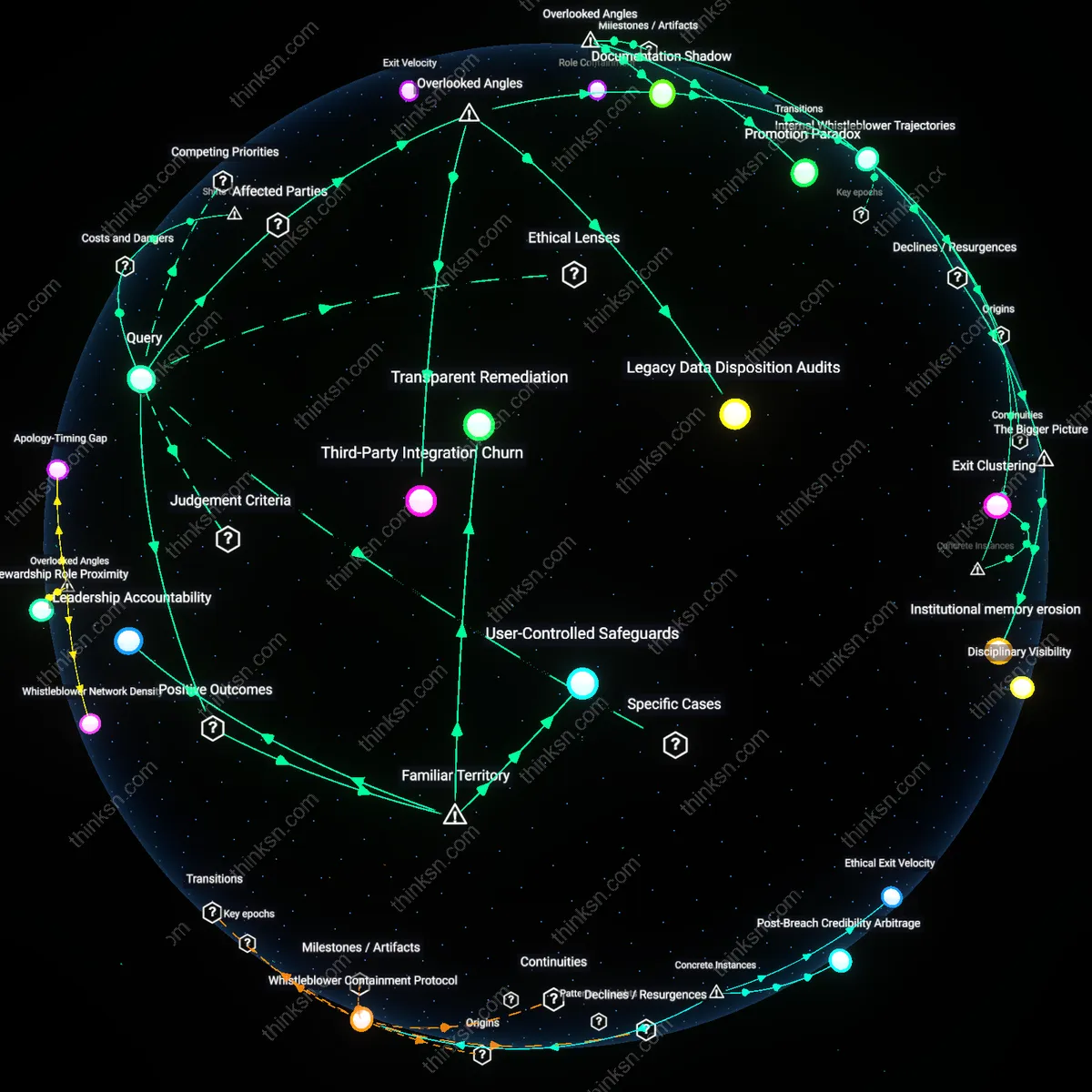

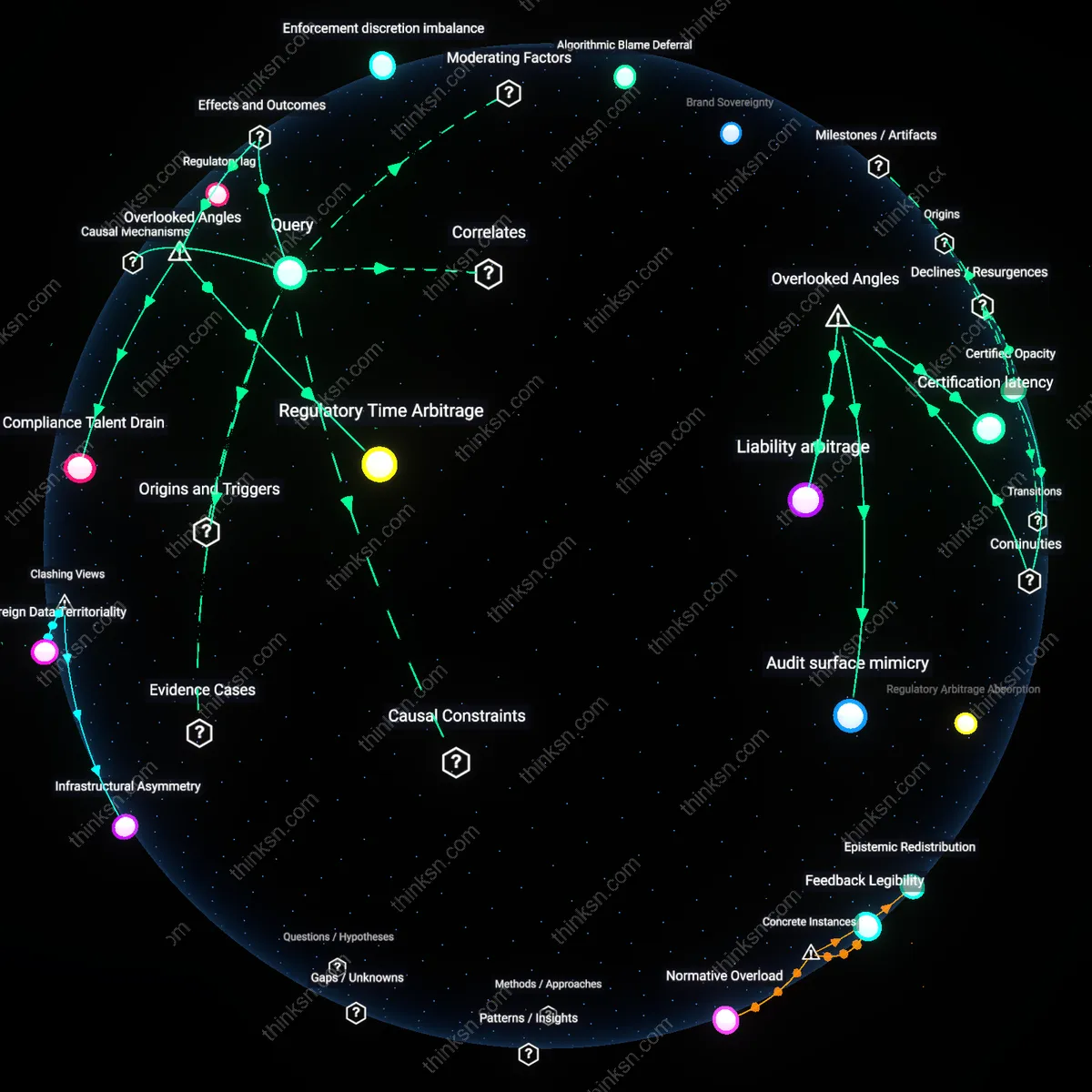

Epistemic Authority Structures

The designers of data infrastructures—such as algorithmic scoring models in education—retain decision-making power in systems using anonymized data because they control the selection, weighting, and curation of inputs that shape outcomes, while affected individuals lack access to or influence over these hidden criteria. Engineers and data scientists at institutions like testing agencies or ed-tech firms embed normative judgments into anonymized data systems through feature selection and model design, which then produce seemingly neutral outputs that determine student tracking or funding allocations. This concentration of interpretive control within technical teams, shielded from public scrutiny, sustains an invisible epistemic hierarchy where the logic of decision-making is opaque but authoritative. The non-obvious consequence is that anonymization does not remove bias but instead relocates moral and technical agency to unaccountable actors who define what counts as 'valid' data.

Representational Inertia

Institutional actors such as school administrators and policymakers rely on legacy data categories within anonymized datasets—like past disciplinary records or historical test scores—which systematically underrepresent marginalized students' evolving competencies and contexts, thereby amplifying the voices of dominant demographic groups. These datasets, often drawn from decades of uneven surveillance and assessment practices, become entrenched in decision algorithms that assume static, ahistorical patterns, failing to incorporate community-based knowledge or alternative indicators of potential. The systemic feedback loop emerges when decisions based on outdated proxies are themselves fed back into the system as evidence of 'objective' outcomes, reinforcing initial biases. The underappreciated dynamic is that anonymization masks not only identity but also temporal change, locking in past inequities as neutral baselines.

Auditability Deficit

Vendors of automated student analytics platforms in public education—such as those providing early warning systems for dropout risk—operate without independent verification of their models, ensuring that corporate teams, not students or parents, determine what evidence counts in high-stakes decisions. Because proprietary algorithms process anonymized student data behind legal and technical firewalls, third parties cannot inspect, challenge, or reproduce how risk scores are generated, giving private firms de facto regulatory power over educational trajectories. This structural absence of public audit mechanisms enables unverifiable claims about student behavior to circulate as facts within schools, shaping interventions without recourse. The overlooked consequence is that anonymization colludes with secrecy regimes to dissolve democratic oversight, privileging commercial logic over civic accountability.

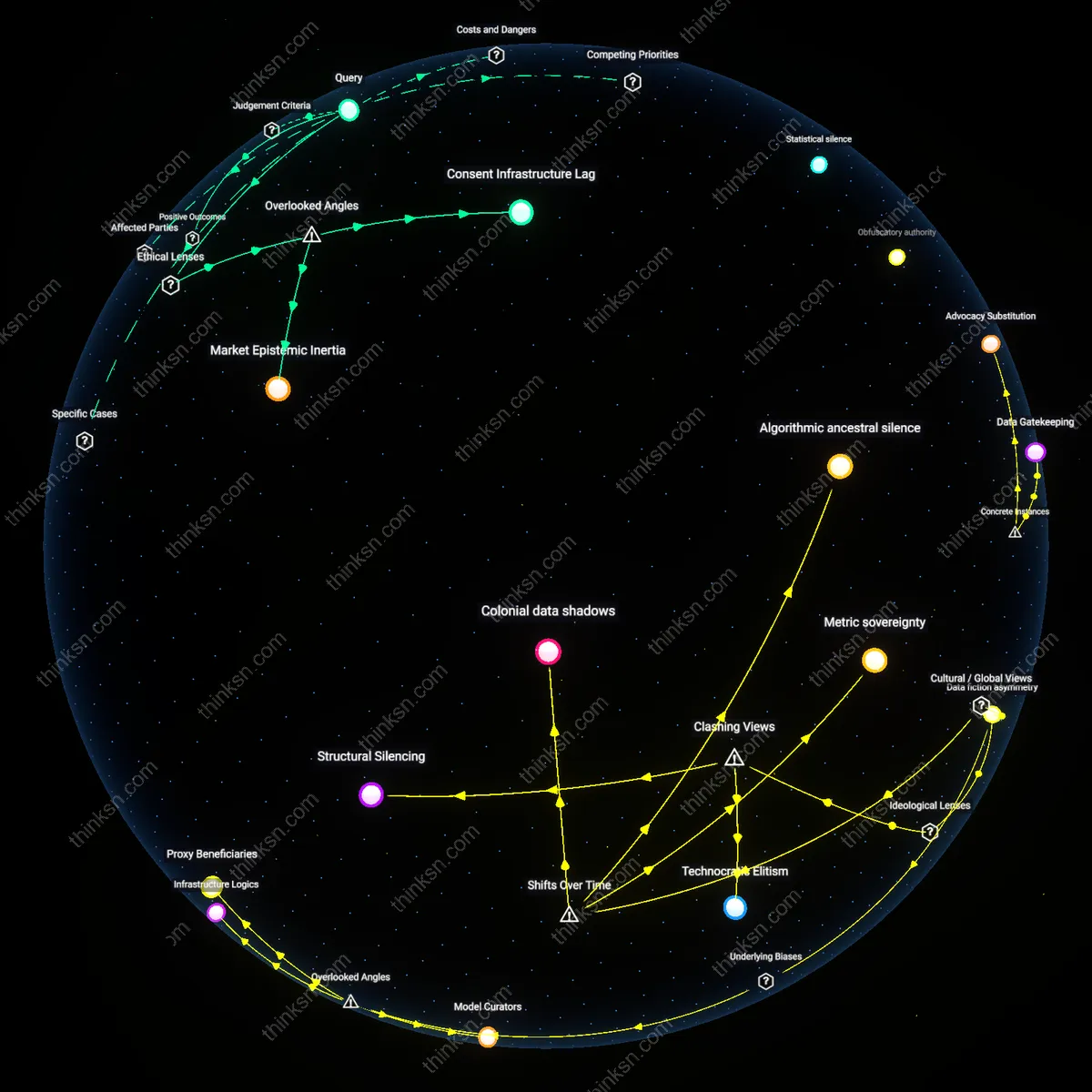

Technocratic Elitism

The voices of algorithmic designers and data scientists dominate anonymized systems, not because they intend to impose ideology but because their technical authority displaces contestable social questions into 'neutral' computational logic. Engineers at firms like Palantir or public-sector data teams in UK local councils operationalize fairness as statistical parity, rendering political judgments about equity invisible under the guise of optimization—this erasure privileges those fluent in machine-learning syntax over affected communities. The non-obvious mechanism is not bias in data but the delegation of normative decisions to technical interfaces that only a narrow epistemic community can access or influence.

Structural Silencing

Anonymized data systems amplify institutional actors—regulators, actuaries, credit raters—while erasing the very populations whose behavior generates the patterns being modeled, not due to malice but because anonymity severs feedback loops needed for democratic redress. When the US Federal Reserve uses aggregated consumer spending data to set monetary policy, the lived realities of low-income households appear only as statistical noise, not political claims, entrenching a form of governance where the governed cannot recognize themselves in the decisions made in their name. This reveals that anonymization doesn’t protect individuals so much as it dissolves collective agency into inert inputs.

Colonial data shadows

Western statistical systems, formalized during the late 19th-century imperial censuses, displaced Indigenous epistemic authorities by anonymizing local identities into administrative categories, rendering community-specific knowledge invisible despite its prior centrality in governance—this erasure, institutionalized through colonial bureaucracy, established that voices heard in data systems would align with administrative legibility rather than lived experience, a shift most visible in British India’s ethnographic surveys which replaced oral genealogies with racialized occupational typologies.

Algorithmic ancestral silence

Post-2000 global health data platforms, such as those used by WHO in sub-Saharan Africa, began anonymizing patient records to meet Western privacy standards, but in doing so severed data from kinship-based consent protocols long upheld in Yoruba and Akan traditions, where medical decisions are communally held—this technical shift privileged individualized data ownership models rooted in Enlightenment liberalism, making family elders and healers invisible in algorithmic decision-making despite their ongoing cultural authority in care ecosystems.

Metric sovereignty

Since the 2015 Paris Climate Agreement, Pacific Island nations have challenged the anonymization of climate vulnerability data by transnational risk models that aggregate their territories into ‘small island states’ categories, obscuring distinct ancestral land practices and localized adaptation knowledge—this institutional flattening contrasts with pre-1970s regional meteorological systems where navigation and forecasting were community-anchored, revealing how contemporary data anonymity enables powerful states to define risk while marginalizing Indigenous temporalities embedded in ecological observation.

Model Curators

Corporate data science teams, not affected publics, dominate feedback loops in anonymized systems because retraining of algorithms relies on performance metrics selected and interpreted by in-house engineers who filter which errors merit correction—this mechanism silently privileges commercial continuity over civic redress, making the class of technical gatekeepers who define 'accuracy' the de facto arbiters of fairness, a dynamic rarely acknowledged when debates focus on transparency alone.

Infrastructure Logics

The physical and temporal constraints of legacy database architectures—such as batch processing schedules and data retention policies in municipal record systems—actively suppress real-time challenges to decision-making by rendering individual appeals invisible until aggregate thresholds trigger audits, thereby privileging systemic inertia over personal contestation, a technical dependency that shapes whose experiences register even when identities are anonymized, a factor almost entirely absent from ethical AI discourse.

Proxy Beneficiaries

Third-party vendors selling compliance certifications for anonymization technologies gain credibility and contracts when errors remain diffuse and unattributed, because persistent uncertainty about data influence increases demand for proprietary audit tools, creating a covert economic incentive to maintain opacity, whereby firms that commodify trust in anonymized data profit precisely because individuals cannot trace or correct it, revealing a parasitic relationship obscured by rights-based narratives.

Data Gatekeeping

Corporate entities like Facebook determine the boundaries of what anonymized user data can be accessed or corrected by individuals, as seen in the 2018 Cambridge Analytica scandal where third parties exploited psychographic profiling from non-consensual data aggregation; this mechanism privileges Facebook’s internal logic of data as proprietary infrastructure, making transparency a competitive liability rather than a user right, which reveals how control over data access becomes a tool for sustaining corporate dominance under the guise of privacy and security.

Advocacy Substitution

In the development context of India’s Aadhaar biometric identification system, activist groups such as the Internet Freedom Foundation challenged state claims of anonymized data use in welfare distribution, exposing how officials substituted technical assurances for democratic deliberation; the government justified opacity through the logic of efficiency and national scale, thereby marginalizing rural beneficiaries whose exclusion errors could not be contested due to inaccessible data processes, showing how anonymization regimes enable policymakers to replace affected voices with expert rationalization.

Regulatory Capture

In the European Union’s implementation of GDPR, financial institutions like Deutsche Bank influenced the interpretation of ‘legitimate interest’ to retain broad rights over processing anonymized transaction data, shaping regulatory guidance through lobbying that framed privacy compliance as a barrier to market innovation; this dynamic allowed banks to define the limits of data subject rights in algorithmic credit scoring, revealing how anonymized systems become arenas where compliance infrastructure is quietly captured by incumbent actors who reframe fairness as economic stability.