Are AI Audit Trails Transparent or a Trade Secret Risk?

Analysis reveals 9 key thematic connections.

Key Findings

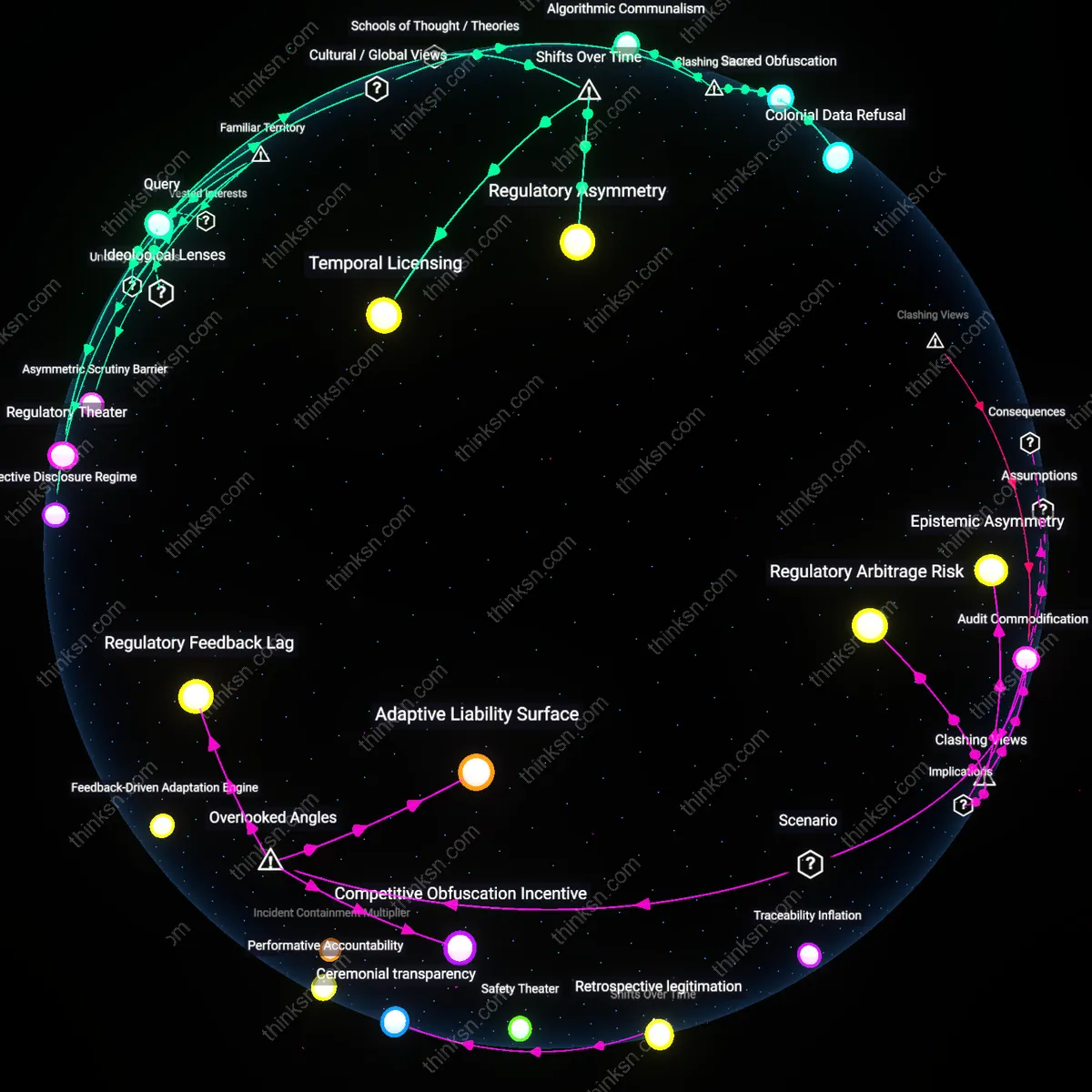

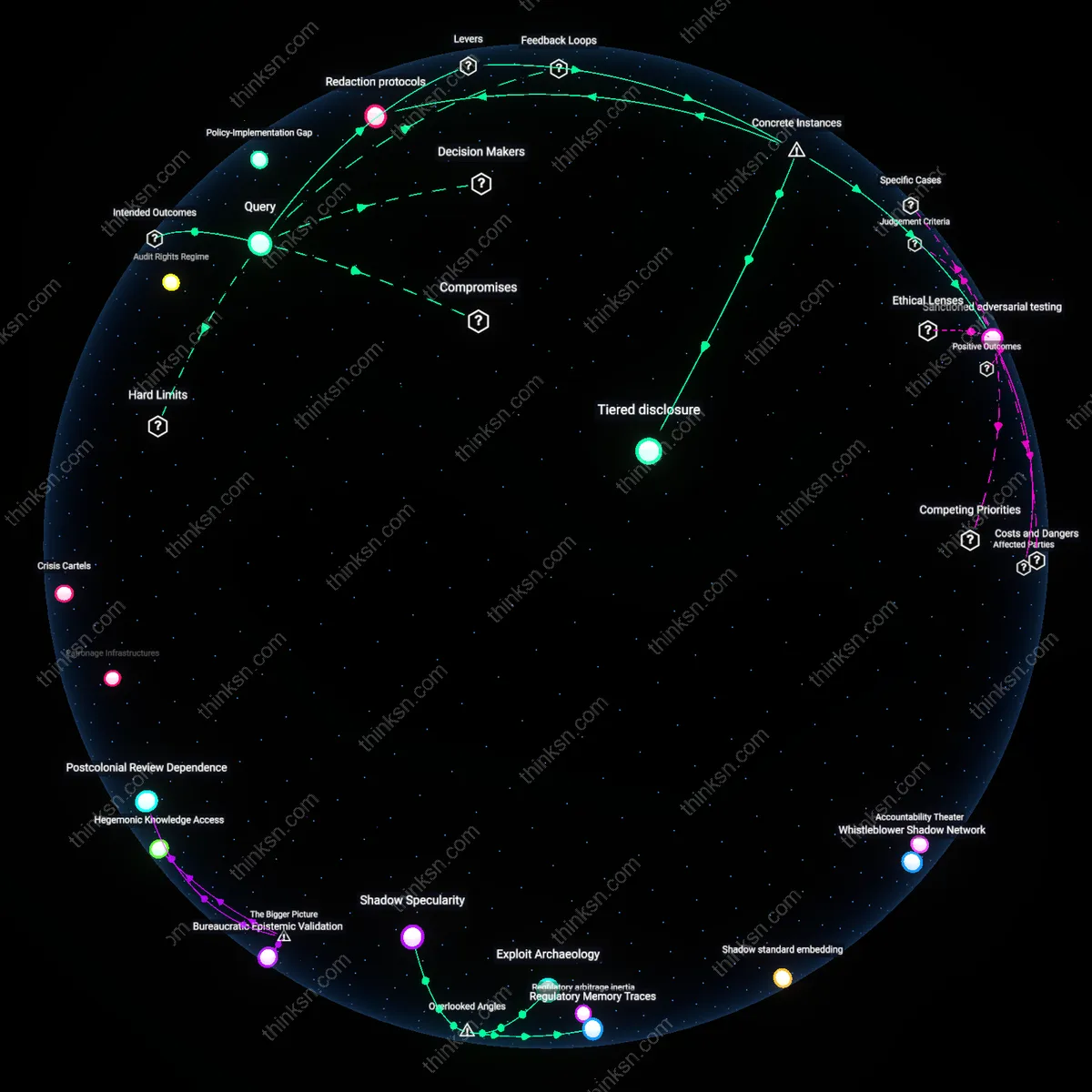

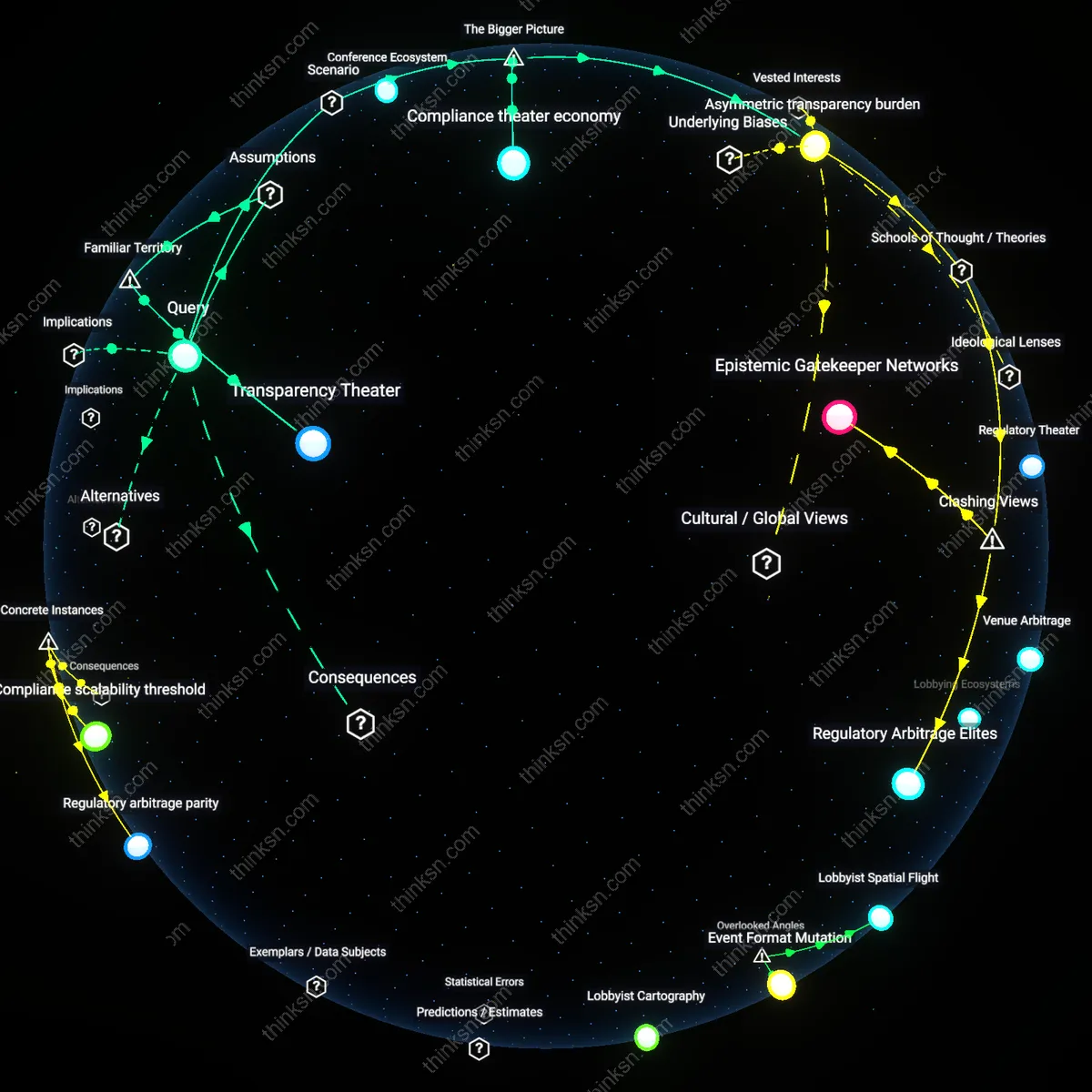

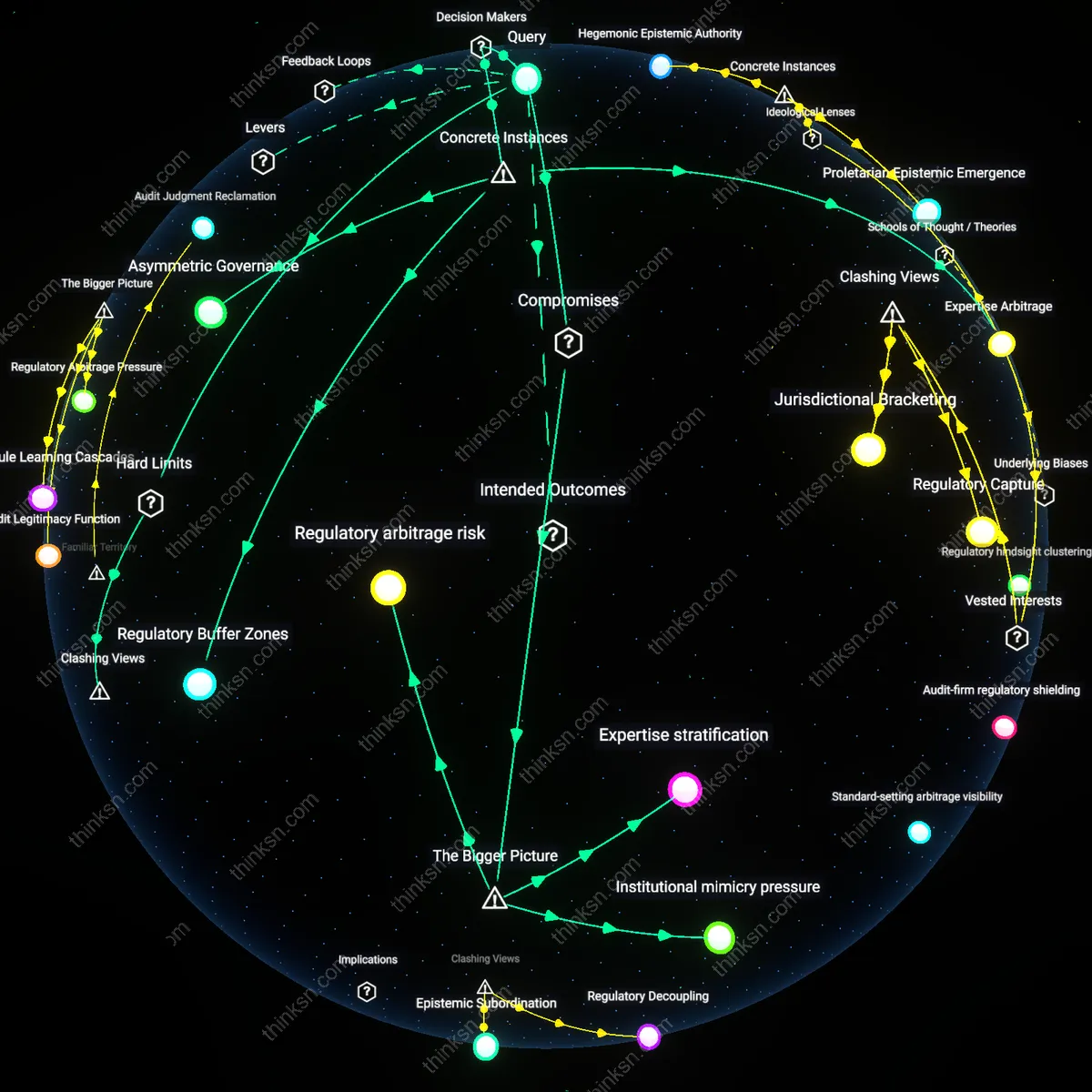

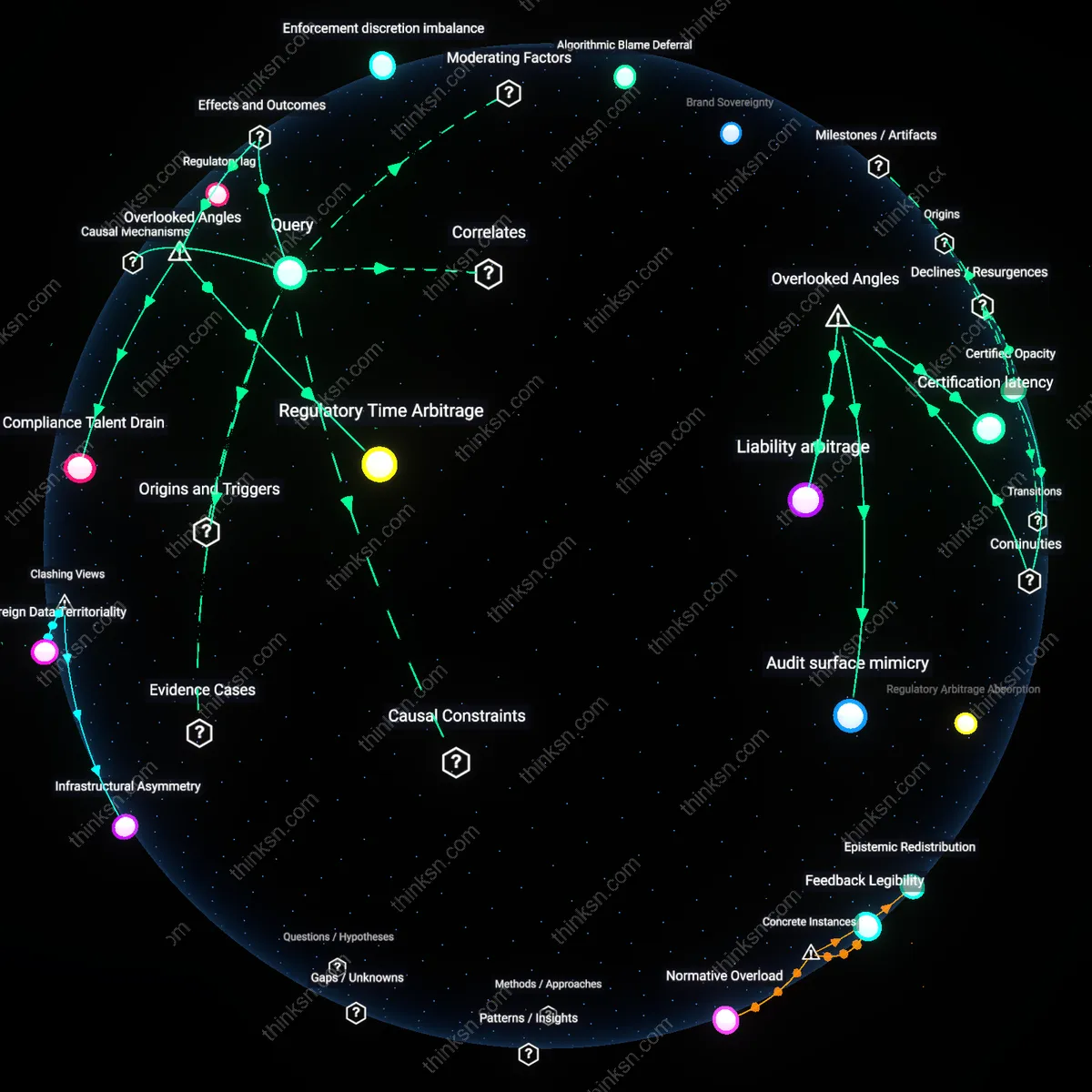

Regulatory Asymmetry

Mandatory AI audit trails institutionalize a regulatory asymmetry: state actors gain privileged access to algorithmic logic in the name of public accountability, while competitors remain excluded, preserving proprietary secrecy. This arrangement took shape after the AI ethics backlash of roughly 2016–2018, when public pressure over opaque decision-making in hiring and lending pushed regulators to assert oversight without appropriating intellectual property. They often did so by relying on third-party auditors and on voluntary guidance such as NIST’s AI Risk Management Framework, which sets out risk-management practices but does not itself certify auditors. The non-obvious insight is that the state does not need full algorithmic transparency to enforce compliance. Instead, it relies on selective visibility, creating a tiered information order in which governance and commercial secrecy coexist through procedural gatekeeping rather than full disclosure.

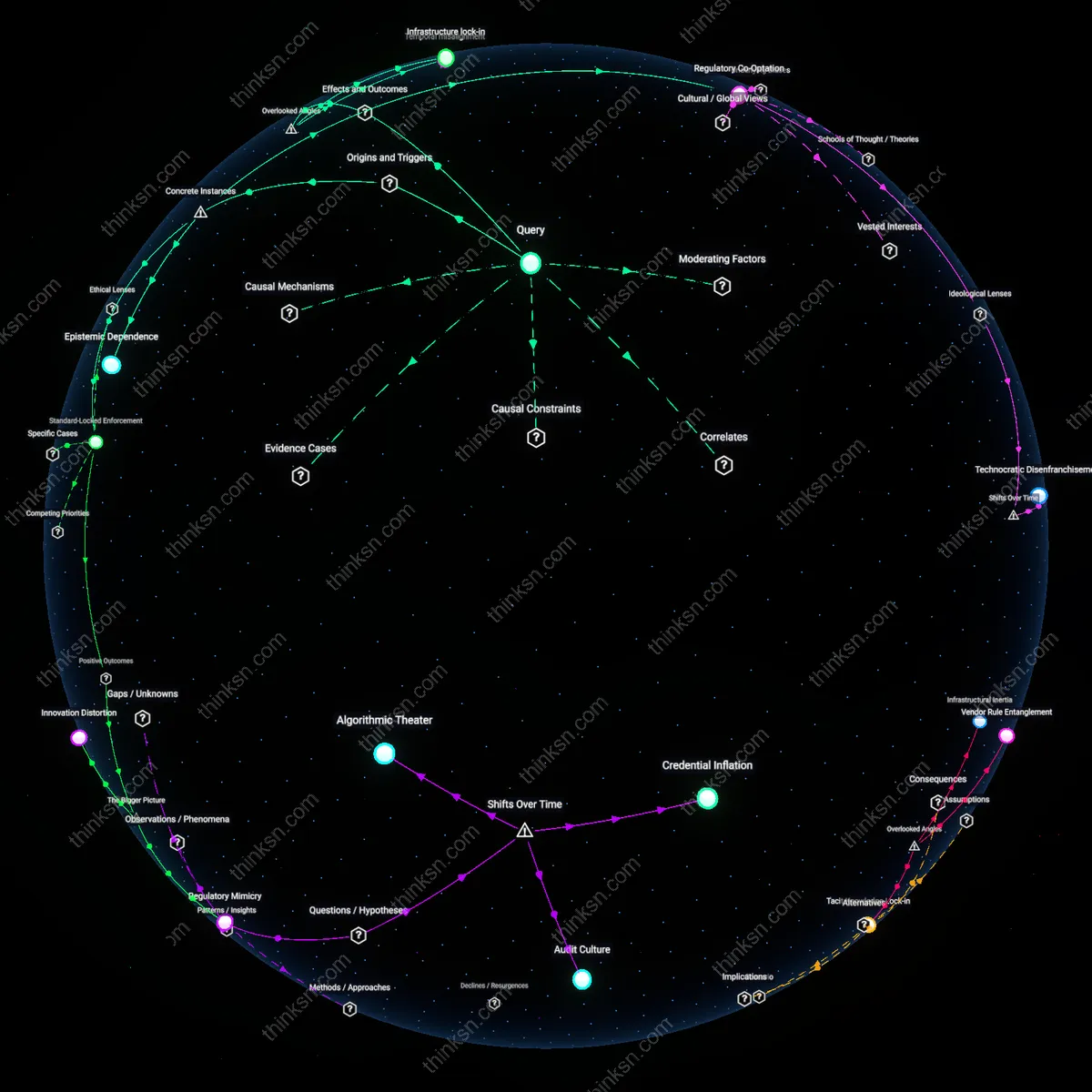

Audit Fiction

Mandatory AI audit trails sustain an audit fiction: a procedural performance of transparency that satisfies regulatory expectations without transferring meaningful algorithmic knowledge to external parties. The practice crystallized in the early 2020s as corporations, particularly large technology firms, adopted audit-ready documentation that mimics accountability while obscuring core innovation logic through modular obfuscation and deliberately ambiguous reporting metrics. What is underappreciated is that audits can become compliance theater, in which proprietary protection is maintained not by secrecy per se but by overwhelming inspectors with granular yet inconsequential data. Transparency thus becomes a ritual rather than a revelation.

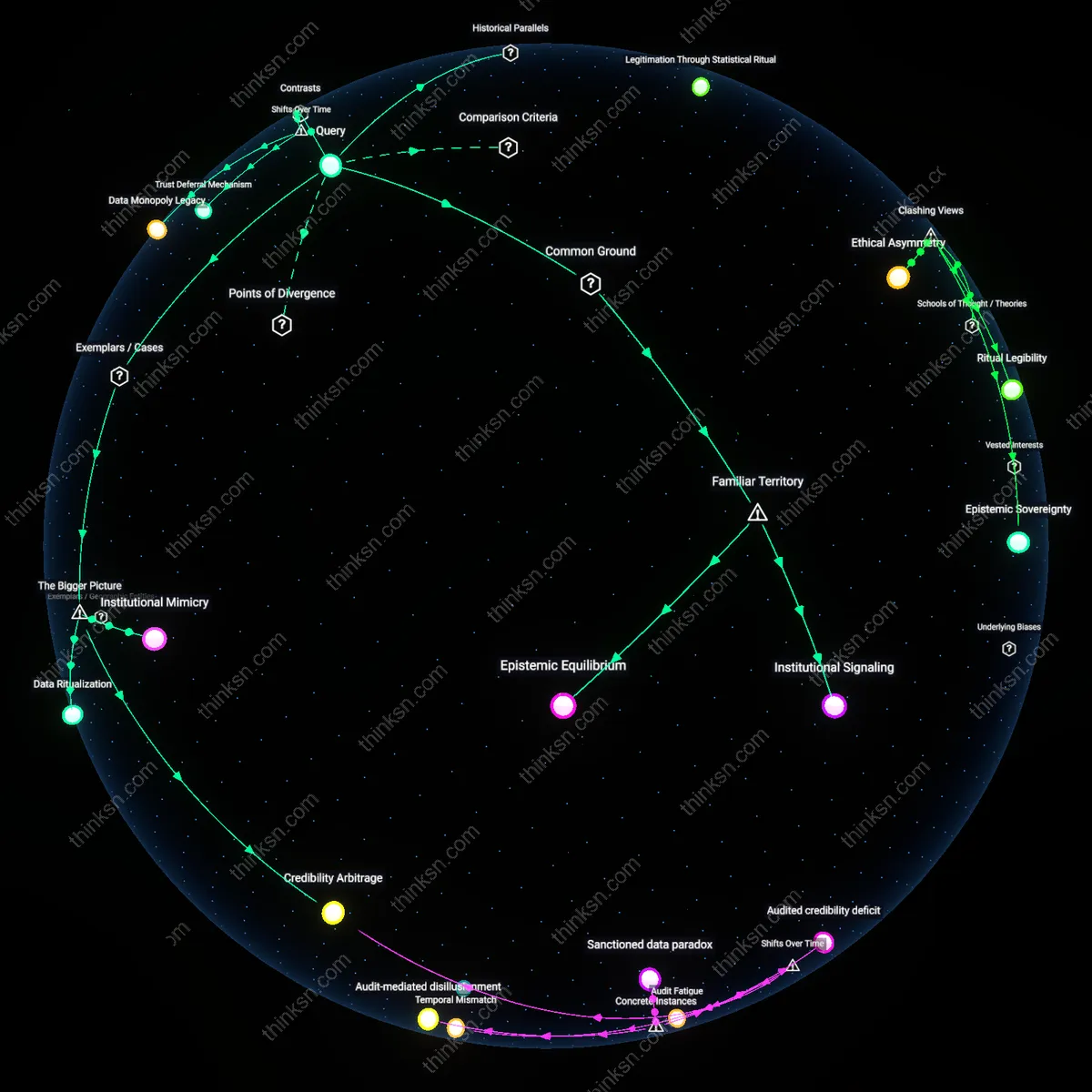

Temporal Licensing

Mandatory AI audit trails enable temporal licensing: algorithmic transparency is granted conditionally, and only for a limited time, to authorized entities during formal review periods, after which the material reverts to proprietary status. This reflects a post-2020 pivot from perpetual secrecy toward dynamic access models, influenced by financial regulation and clinical trial transparency regimes. Proprietary protection is thereby redefined not as static concealment but as controlled, episodic disclosure, governed by contractual and cryptographic enforcement and managed through secure data enclaves like those piloted by the EU’s AI Office. The overlooked development is that ownership is now decoupled from constant exclusivity: firms maintain competitive advantage while ceding ephemeral oversight that, by design, leaves no traceable knowledge residue for competitors.
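To make episodic, cryptographically enforced access more concrete, here is a minimal Python sketch. It assumes a hypothetical setup in which the disclosing firm signs each review grant with an HMAC key it controls. The names `AccessGrant`, `sign`, `may_access`, and `SIGNING_KEY` are illustrative only and do not correspond to any regulator’s or enclave provider’s actual API. The sketch covers only the time window and the tamper check, not the contractual terms or the enclave itself.

```python
import hashlib
import hmac
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical key held by the disclosing firm; a real deployment would keep
# this in a key-management system, never in source code.
SIGNING_KEY = b"demo-key-not-for-production"


@dataclass(frozen=True)
class AccessGrant:
    """A time-boxed permission for one auditor to inspect one artifact."""
    auditor_id: str
    artifact: str
    not_before: datetime  # review window opens (inclusive)
    not_after: datetime   # review window closes (exclusive)

    def payload(self) -> bytes:
        # Canonical byte string covering every field, including the window.
        fields = [self.auditor_id, self.artifact,
                  self.not_before.isoformat(), self.not_after.isoformat()]
        return "|".join(fields).encode("utf-8")


def sign(grant: AccessGrant) -> str:
    """HMAC-SHA256 tag binding the grant's fields to the firm's key."""
    return hmac.new(SIGNING_KEY, grant.payload(), hashlib.sha256).hexdigest()


def may_access(grant: AccessGrant, tag: str, now: datetime) -> bool:
    """Allow access only if the tag is valid and `now` is inside the window."""
    if not hmac.compare_digest(sign(grant), tag):
        return False  # grant was altered after signing
    return grant.not_before <= now < grant.not_after


# Usage: a hypothetical 14-day review period, after which access reverts.
start = datetime(2025, 3, 1, tzinfo=timezone.utc)
grant = AccessGrant("auditor-42", "training-config", start, start + timedelta(days=14))
tag = sign(grant)

# A copy of the grant with a stretched window, presented with the old tag.
extended = AccessGrant("auditor-42", "training-config", start, start + timedelta(days=60))

print(may_access(grant, tag, start + timedelta(days=3)))      # True: inside the window
print(may_access(grant, tag, start + timedelta(days=20)))     # False: window has closed
print(may_access(extended, tag, start + timedelta(days=20)))  # False: tampered grant
```

Because the window boundaries are part of the signed payload, an auditor who edits `not_after` invalidates the tag rather than extending access, which is the sense in which disclosure here is episodic rather than permanent.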

Algorithmic Communalism

Mandatory AI audit trails can enhance transparency without undermining proprietary protections if algorithmic oversight is treated as a collective ritual obligation, on the model of Japan’s keiretsu networks, where affiliated firms share audited operational data under mutual trust structures that prioritize ecosystem resilience over individual ownership. This reframes transparency not as disclosure to competitors but as accountability to a culturally bound business kinship. It suggests that proprietary boundaries are not absolute but relationally defined, maintained through exclusionary inclusion: disclosure extends to members of the network precisely because outsiders remain shut out.

Sacred Obfuscation

Within Hindu-inflected business ethics in India, mandatory AI audit trails can gain legitimacy not through full disclosure but through ritualized partial revelation. Algorithms are treated like temple knowledge, accessible to priestly auditors (regulators) who validate spiritual (ethical) compliance without publishing inner workings, so that commercial secrecy is preserved under a framework of dharma-based accountability. This challenges the Western assumption that transparency requires public visibility and exposes transparency as a culturally contingent performance rather than an epistemic ideal.

Colonial Data Refusal

Mandatory AI audit trails are resisted in some Global South contexts not because of proprietary concerns but as a form of anti-colonial boundary maintenance. States such as Senegal or Bolivia may treat algorithmic transparency demands from Western-led institutions as epistemic extractivism and instead deploy minimalist, sovereignty-preserving audit protocols that satisfy formal compliance while withholding functional insight. This suggests that the protection of algorithms often serves not corporate interest but postcolonial defense against cognitive imperialism, reframing opacity as a political shield rather than an economic one.

Regulatory Theater

Mandatory AI audit trails create the appearance of transparency by standardizing documentation that regulators can review without accessing core algorithmic logic. This satisfies public demand for oversight while allowing firms to redact or abstract proprietary components under trade secret claims, with oversight bodies like the FTC or EU AI Office relying on self-reported compliance artifacts. The non-obvious outcome is that audit trails become performative—designed more for legal defensibility than substantive insight, shielding dominant tech firms from competitive exposure while projecting accountability.

Selective Disclosure Regime

Firms disclose only pre-approved, non-core elements of AI systems—such as data lineage or model version logs—through audit trails, preserving strategic advantage in optimization techniques and architecture design. This operates through tiered access protocols where third-party auditors from major consulting firms (e.g., Deloitte, PwC) validate compliance without transferring knowledge to competitors or regulators. The underappreciated effect is that transparency becomes a choreographed exchange of low-risk information, reinforcing an ecosystem where established players control what constitutes 'meaningful' disclosure.
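A tiered access protocol of this kind can be sketched as a field-level filter over an audit record. The Python sketch below assumes a hypothetical four-tier scheme (`Tier`) and a made-up record whose field names and placeholder values are illustrative, not drawn from any real audit standard or firm. Core elements such as architecture and optimization details are tagged so that only internal staff can read them, while lower-risk items like the model version and data lineage flow outward.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical disclosure tiers; a higher tier sees everything below it."""
    PUBLIC = 0
    REGULATOR = 1
    AUDITOR = 2
    INTERNAL = 3


# Hypothetical audit-trail entry. Each field maps to
# (value, minimum tier allowed to read it). All values are placeholders.
AUDIT_RECORD = {
    "model_version": ("example-model v1", Tier.PUBLIC),
    "data_lineage": (["source-a", "source-b"], Tier.REGULATOR),
    "compliance_checklist": ("placeholder checklist", Tier.AUDITOR),
    "architecture": ("<proprietary>", Tier.INTERNAL),
    "optimization_recipe": ("<proprietary>", Tier.INTERNAL),
}


def disclose(record: dict, viewer: Tier) -> dict:
    """Return only the fields whose minimum tier is at or below the viewer's tier."""
    return {
        name: value
        for name, (value, min_tier) in record.items()
        if viewer >= min_tier
    }


# Show which field names each tier would receive.
for viewer in Tier:
    print(viewer.name, sorted(disclose(AUDIT_RECORD, viewer)))
```

In this sketch the firm, not the viewer, assigns each field’s tier, which is where control over what counts as "meaningful" disclosure sits.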

Asymmetric Scrutiny Barrier

Mandatory audits raise compliance costs in a way that disadvantages startups and open-source projects, which lack the infrastructure to generate standardized, legally defensible audit logs, while large incumbents absorb these expenses as routine. The dynamic works because regulatory complexity maps onto existing corporate hierarchies (lawyers, compliance officers, and internal review boards), turning audit requirements into a competitive moat. The overlooked consequence is that transparency mandates, framed as democratic safeguards, become tools of market consolidation, protecting proprietary algorithms not through secrecy alone but through institutionalized, high-cost verification processes.