Does AI Hypothesis Generation Stifle Scientific Diversity?

This analysis identifies seven key thematic connections.

Key Findings

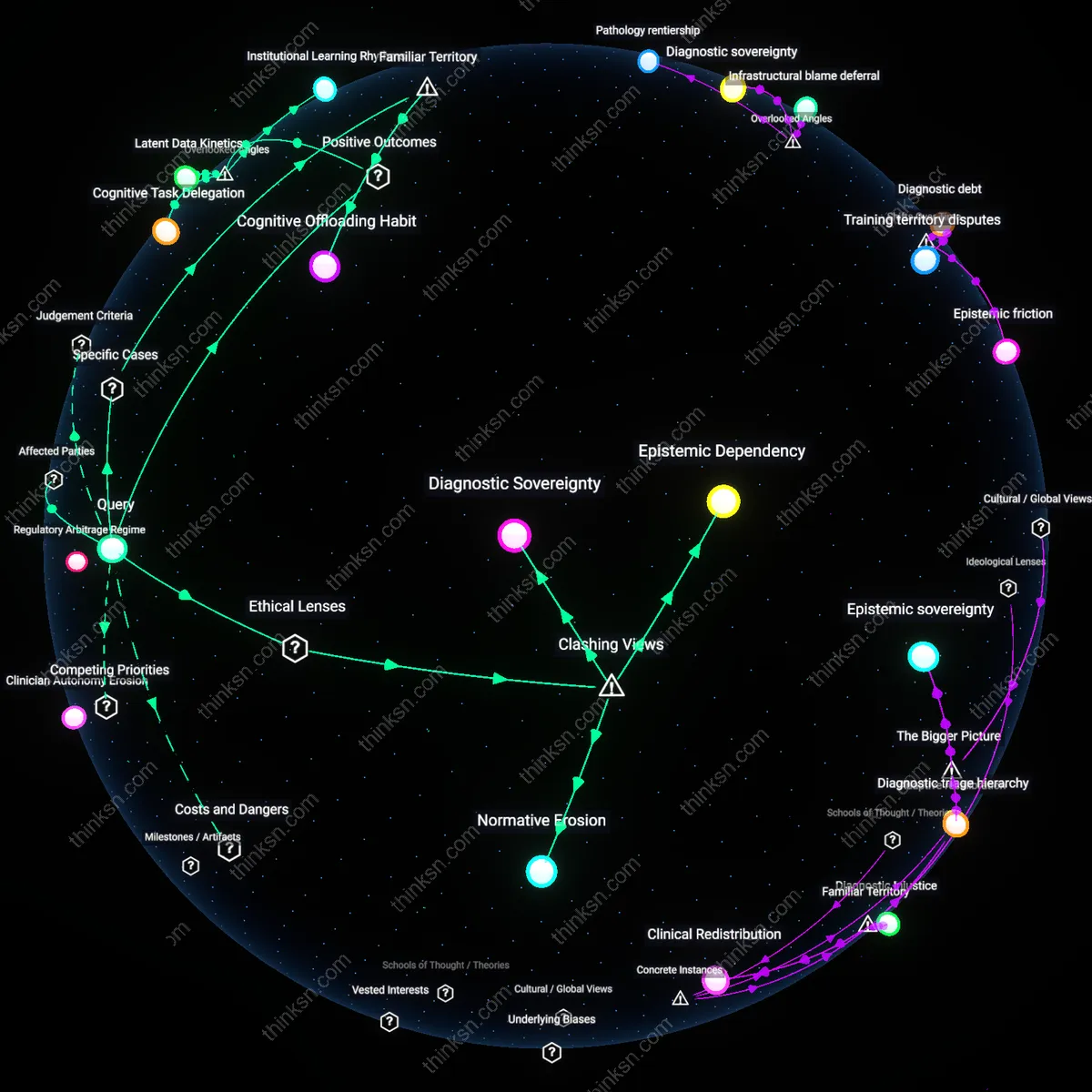

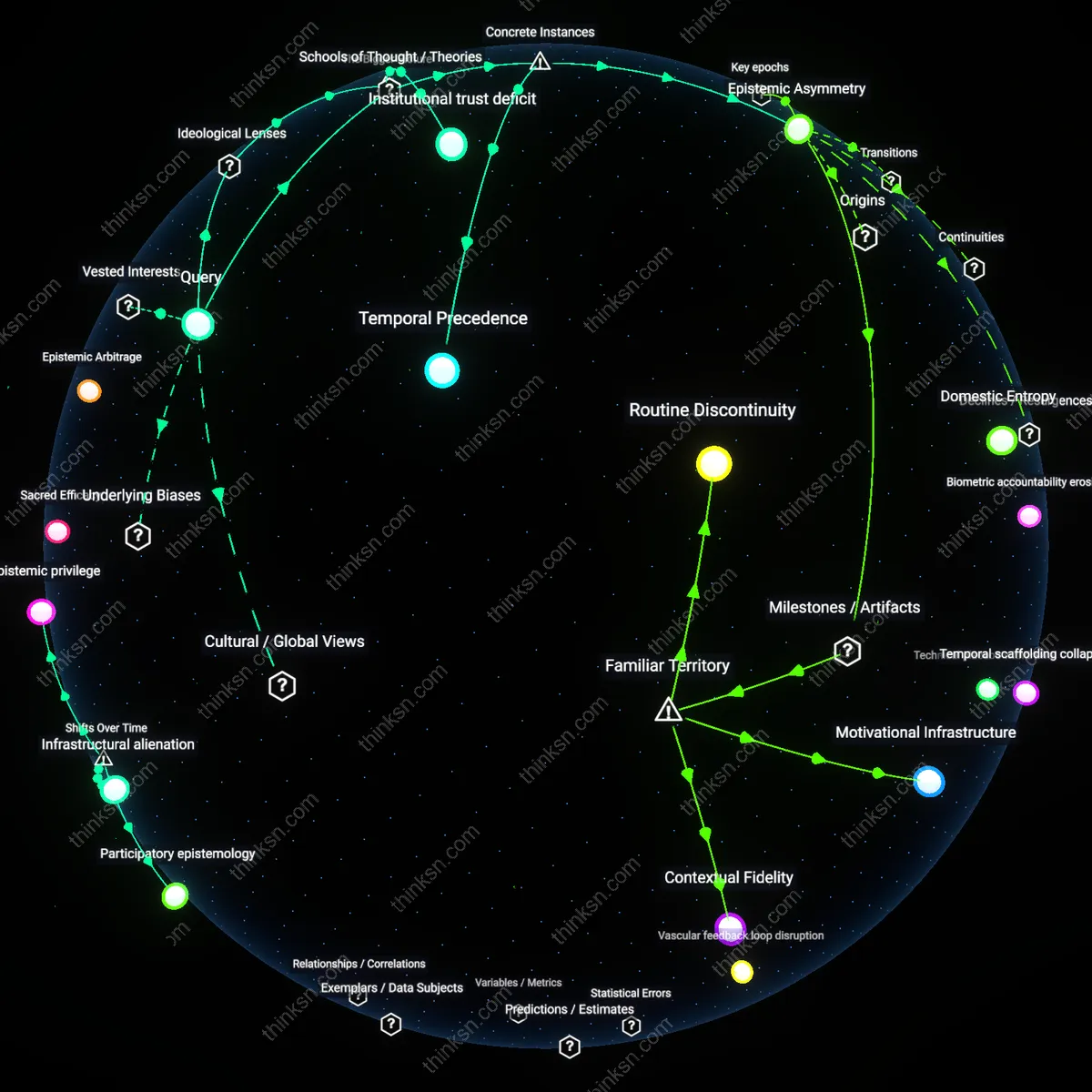

Automated Consensus Drift

AI-generated hypothesis suggestion entrenches dominant research paradigms by statistically amplifying associations that were historically prevalent in the biomedical literature. This marginalizes low-frequency but potentially transformative ideas that have little prior textual representation. The mechanism runs through large language models trained on citation-dense corpora from the post-2000 publication boom, a period in which high-impact journals systematically favored mechanistic studies over exploratory work, so the models encode a normative bias toward pathophysiological continuity rather than disruption. The shift from hypothesis-led to data-led discovery after the Human Genome Project adds a feedback loop: AI tools reinforce the epistemic value of 'plausibility', defined narrowly by past success, so outlier hypotheses come to look aberrant rather than merely rare. Over time this erodes a discipline's tolerance for innovation that lacks empirical precedent.
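
To make the amplification loop concrete, here is a deliberately simplified toy model. It is not a description of how any real language model or hypothesis generator works. It assumes a "suggester" that ranks candidate hypotheses purely by how often they already appear in the corpus. It also assumes that every suggested hypothesis gets studied and published, which adds to its count. The hypothesis labels, the counts, and the functions `suggest`, `simulate`, and `share_of_top` are hypothetical illustrations.

```python
from collections import Counter


def suggest(counts: Counter, k: int) -> list[str]:
    """Toy (hypothetical) suggester: rank hypotheses purely by prior corpus frequency."""
    # Sort by descending count; ties are broken alphabetically so runs are deterministic.
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [hypothesis for hypothesis, _ in ranked[:k]]


def simulate(initial: dict[str, int], rounds: int, k: int = 2) -> Counter:
    """Toy feedback loop: each round, the suggested hypotheses are studied and published once more."""
    counts = Counter(initial)
    for _ in range(rounds):
        for hypothesis in suggest(counts, k):
            counts[hypothesis] += 1  # new papers feed back into the next round's corpus
    return counts


def share_of_top(counts: Counter, k: int) -> float:
    """Fraction of all corpus mentions held by the k most frequent hypotheses."""
    total = sum(counts.values())
    return sum(n for _, n in counts.most_common(k)) / total


# Hypothetical starting corpus: two dominant mechanistic associations, one rare
# ecological one, and one novel idea with no prior textual representation.
corpus = {"mechanism_A": 50, "mechanism_B": 30, "ecological_C": 5, "novel_D": 0}
final = simulate(corpus, rounds=100)

print(final["novel_D"])                             # never suggested, so its count never grows
print(round(share_of_top(Counter(corpus), 2), 3))  # concentration before the loop
print(round(share_of_top(final, 2), 3))            # concentration after the loop
```

The run starts from counts of 50, 30, 5, and 0. After one hundred rounds of top-2 suggestions, `novel_D` is still at zero, and the two dominant associations have grown to 150 and 130. Their combined share of the corpus rises from about 94% to about 98%. Real systems typically sample probabilistically rather than taking a strict top-k, so this sketch exaggerates the effect. It shows only the direction of the feedback the paragraph describes.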

Algorithmic Path Dependence

AI-generated hypothesis suggestion risks epistemic homogenization because early model outputs can harden into self-reinforcing research trajectories. The rapid uptake of DeepMind’s AlphaFold by structural biologists after 2020 illustrates the risk. Its protein-structure predictions are distributed at scale through the European Bioinformatics Institute. As those predictions become embedded in grant prioritization and publication pipelines, alternative experimental methods can lose funding and visibility. They lose ground not because they fail, but because the technical authority of a high-performing AI compresses methodological diversity. The mechanism is infrastructural entrenchment, in which tools shape not just what is known but what can be proposed. It reveals an underappreciated way that non-human actors discipline scientific imagination: by privileging computationally tractable problems over empirically divergent ones.

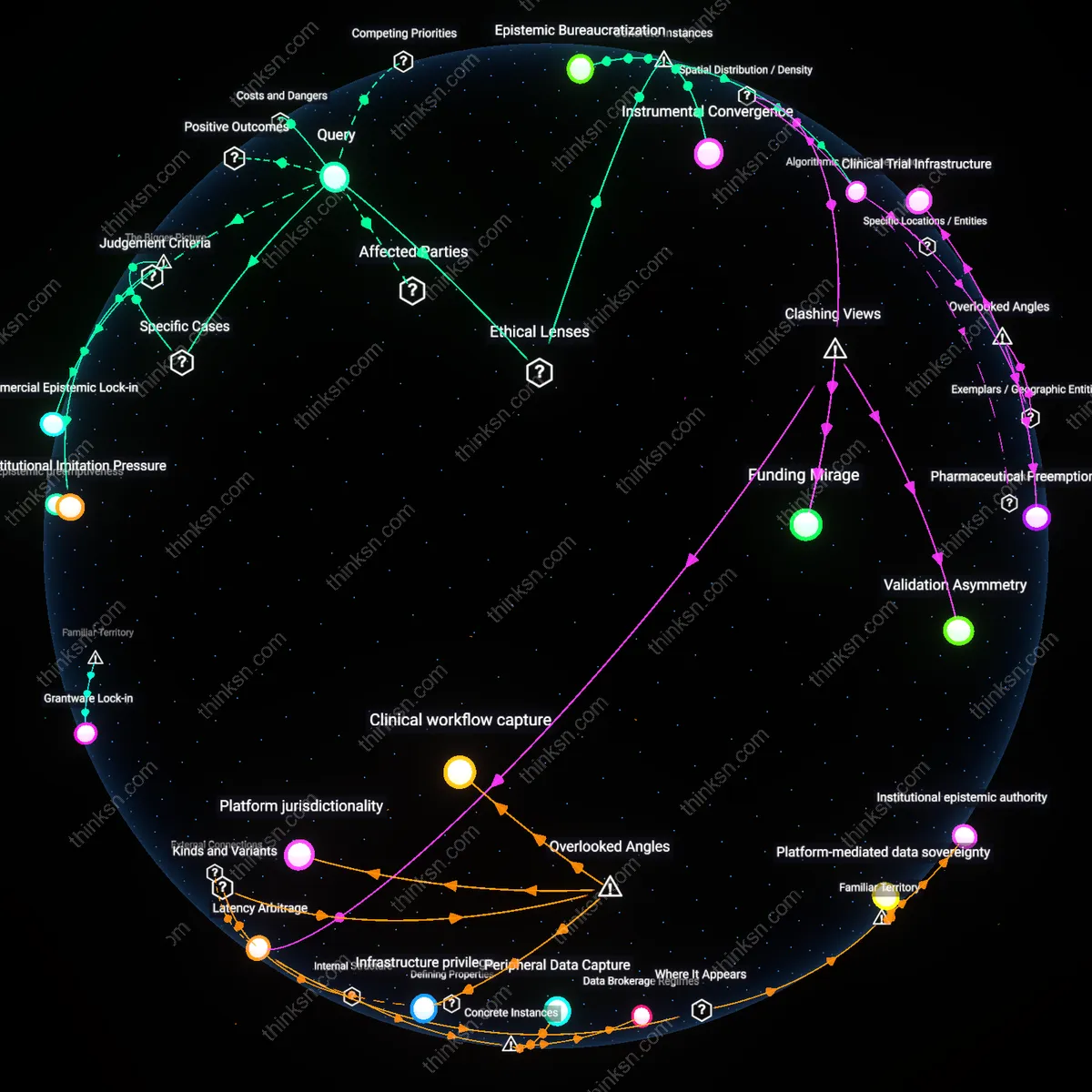

Epistemic Bureaucratization

Integrating AI hypothesis generators into the review of NIH-funded research would systematically advantage proposals aligned with machine-suggested pathways. The telling evidence would be declining approval rates for investigator-initiated studies of non-model organisms, for example at the National Institute of Allergy and Infectious Diseases. Here utilitarian ethics, which prioritizes the greatest good through scalable, AI-optimized research, collides with the Rawlsian value of fair opportunity for diverse epistemic traditions. The result is a selection bias in which non-dominant forms of biological reasoning (e.g., field ecology or ethnobotanical insight) are excluded not because they fail but because they are incompatible with algorithmic input formats. This exposes how ethical frameworks coded into administrative systems silently reclassify legitimate scientific pluralism as inefficiency.

Instrumental Convergence

Reliance on AI-generated hypotheses in global health research risks a convergence on molecular interventions that marginalizes social-structural analyses. Gates Foundation-supported malaria studies in sub-Saharan Africa since 2021 are a particular case. The risk arises because machine learning models trained on Western biomedical databases tend to underweight contextual variables such as housing quality or healthcare access. Under the political ideology of technocratic globalism, this shift reframes complex health disparities as problems solvable through targeted innovation. It thereby aligns with neoliberal governance preferences for depoliticized solutions. The non-obvious consequence is not just homogenization but the erasure of historically rooted, community-validated knowledge systems under the guise of evidence-based progress.

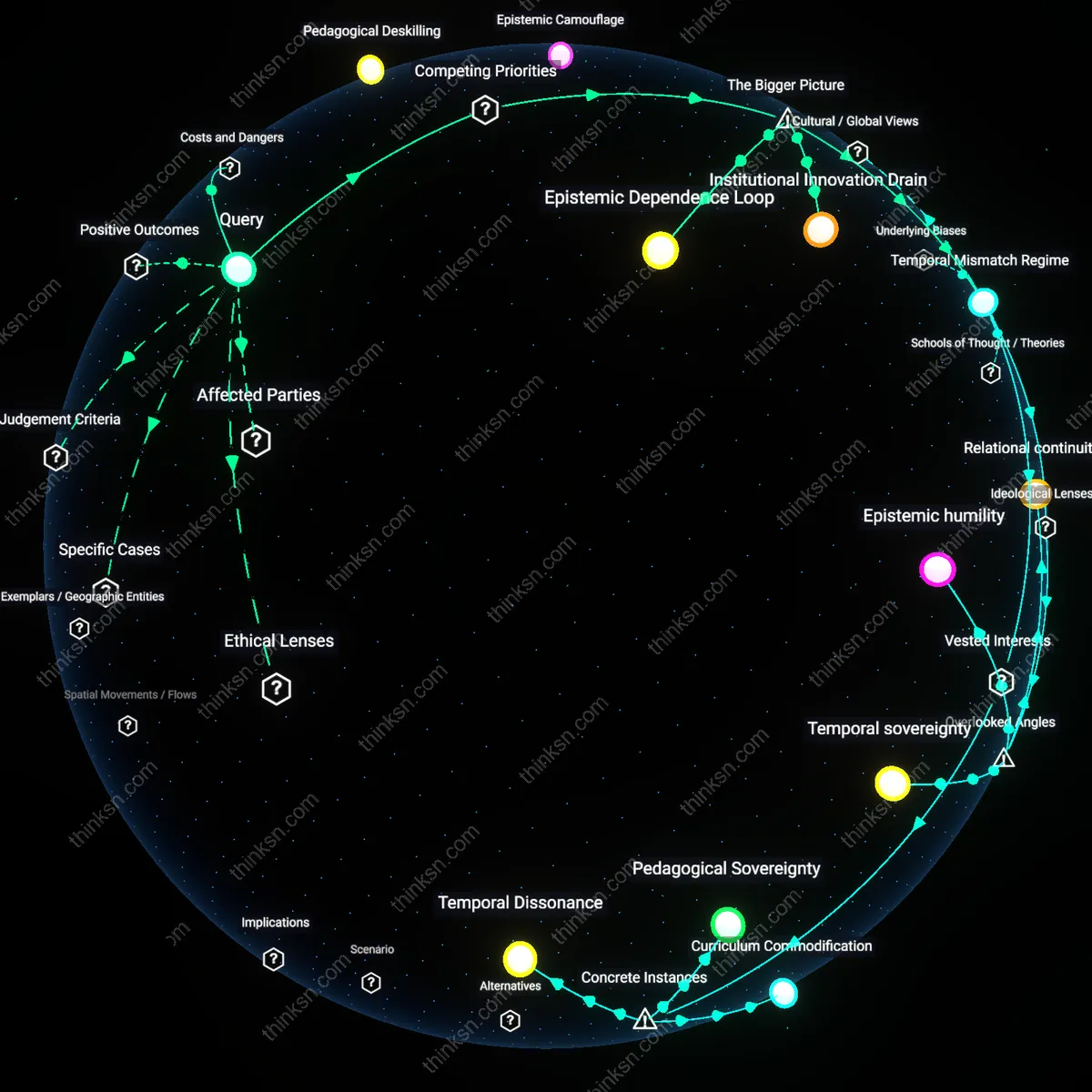

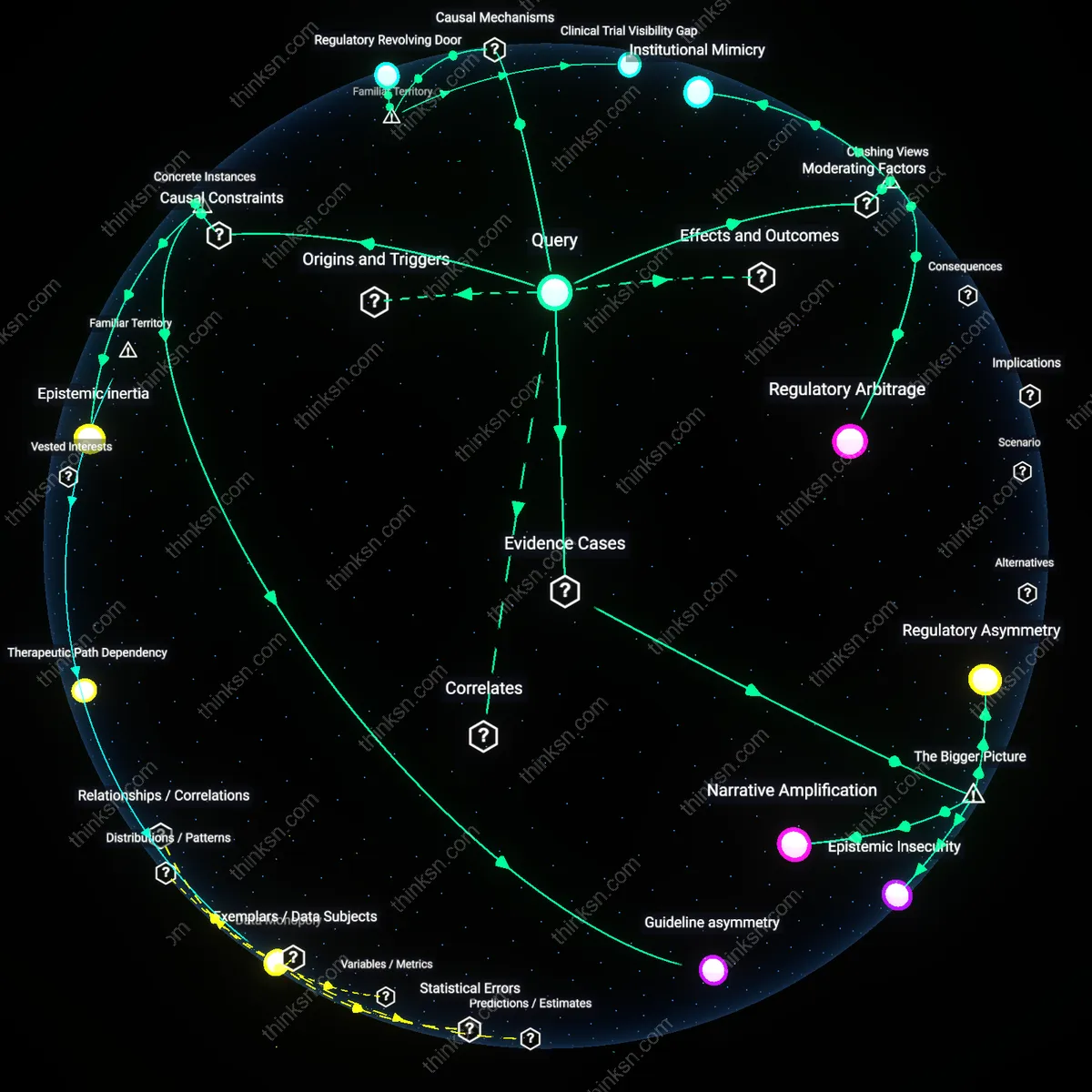

Institutional Imitation Pressure

AI-generated hypothesis suggestion reinforces epistemic homogenization when major research institutes such as the Broad Institute or Mount Sinai adopt the same AI platforms from vendors such as Owkin or Google Health. These vendors' models are trained on dominant public datasets like UK Biobank or The Cancer Genome Atlas. High-impact institutions then validate similar hypotheses in a feedback loop that marginalizes alternative research trajectories rooted in underrepresented populations. This convergence is driven not by scientific superiority but by shared algorithmic priors and reputational incentives. Funding bodies and journals reward methodological alignment with these standardized AI tools, systematically disadvantaging hypotheses that emerge from regionally grounded or minority-serving institutions. The non-obvious mechanism is not the AI’s bias per se but the institutional race for legitimacy, which turns AI suggestions into self-fulfilling norms.

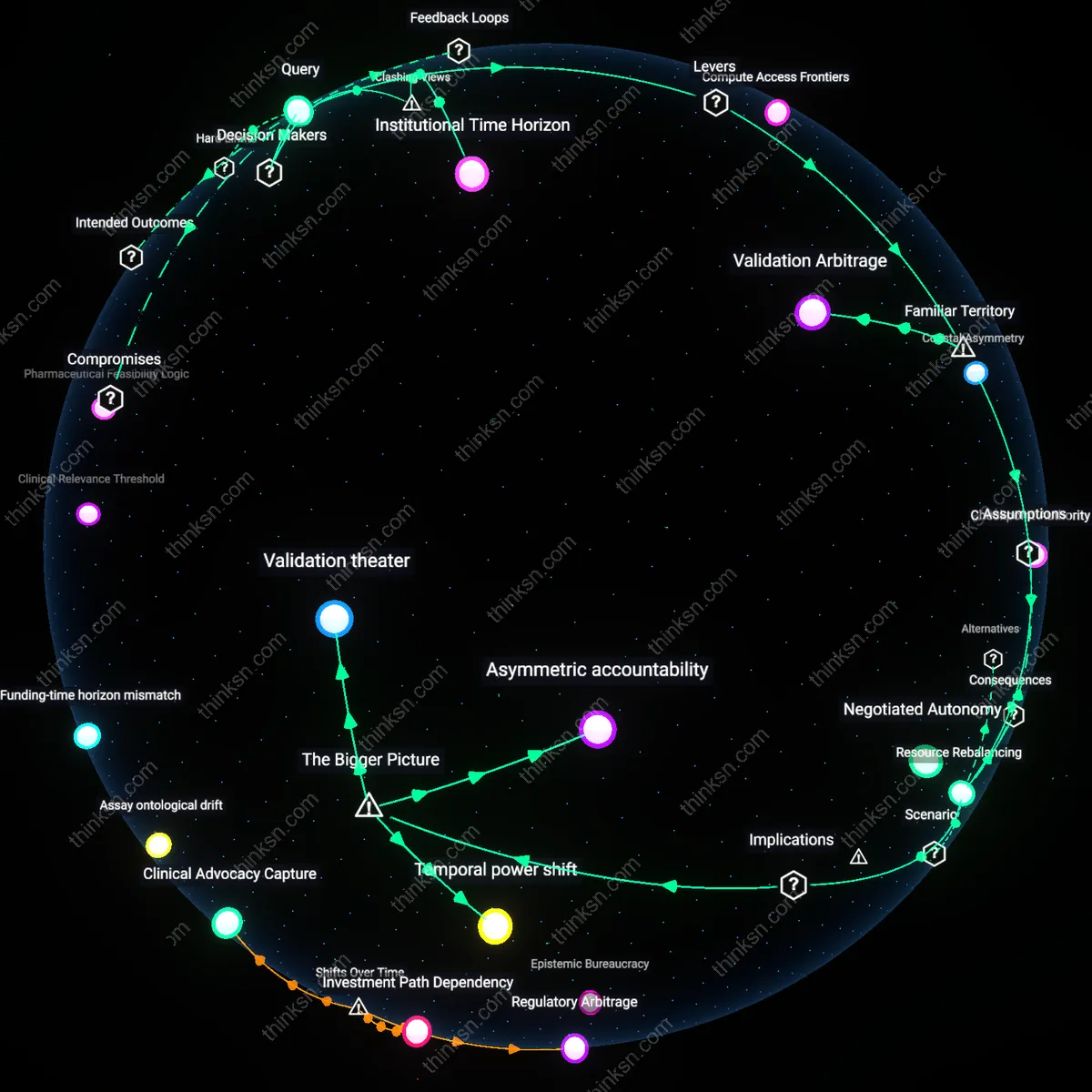

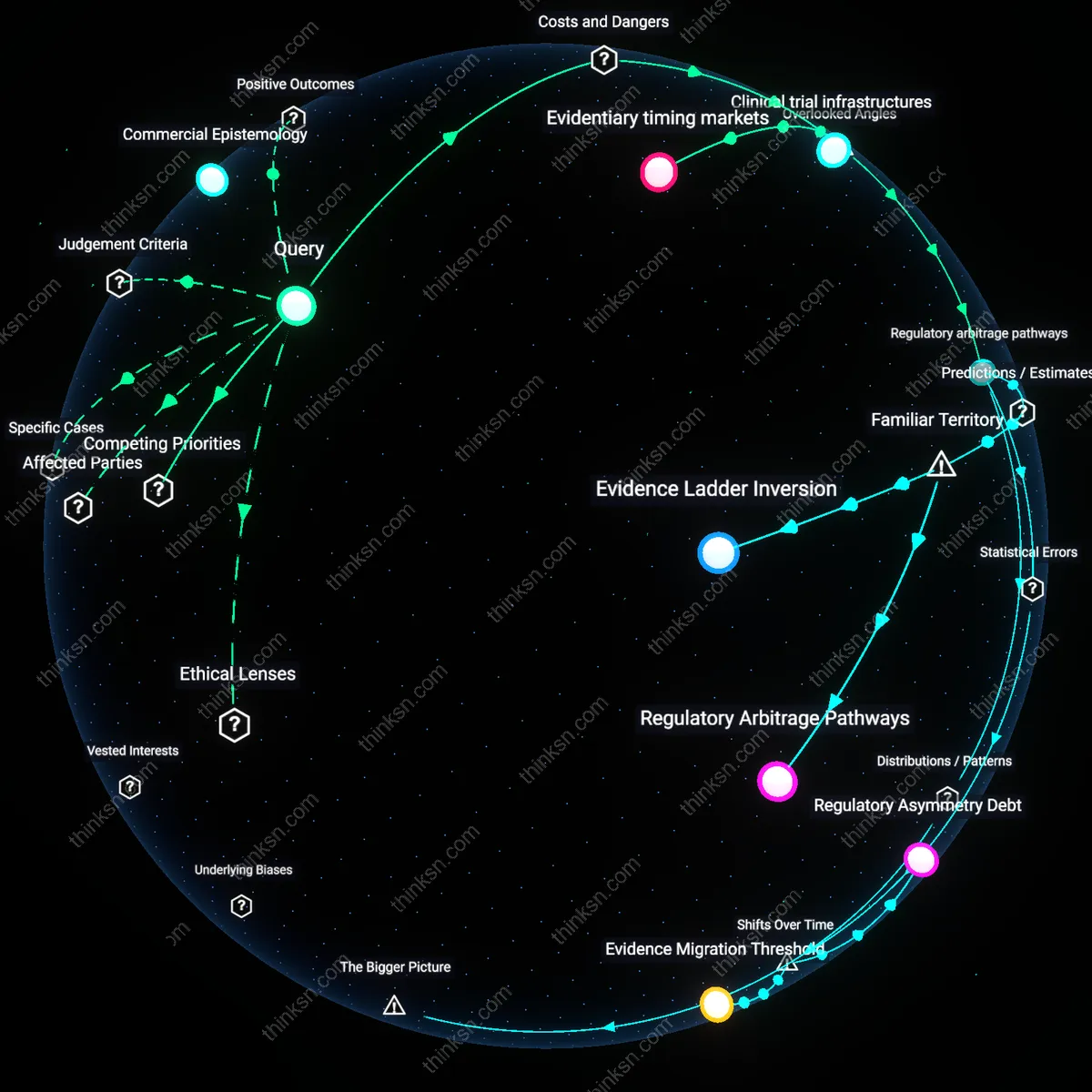

Commercial Epistemic Lock-in

Pharmaceutical companies such as Pfizer and Roche increasingly outsource parts of early discovery to AI firms like Recursion Pharmaceuticals and Insilico Medicine. These firms' proprietary models suggest hypotheses optimized for druggability and patentability within existing biochemical paradigms. This narrows the set of disease mechanisms deemed 'actionable' and systematically excludes non-molecular or ecological models of illness. The homogenization arises because commercial AI platforms are structurally incentivized to reproduce hypotheses aligned with regulatory approval pathways and return on investment rather than theoretical diversity. Their black-box architectures also prevent external critique or modification. The underappreciated dynamic is that epistemic diversity erodes not through flawed science but through the enclosure of hypothesis generation within proprietary systems that treat knowledge as intellectual property.

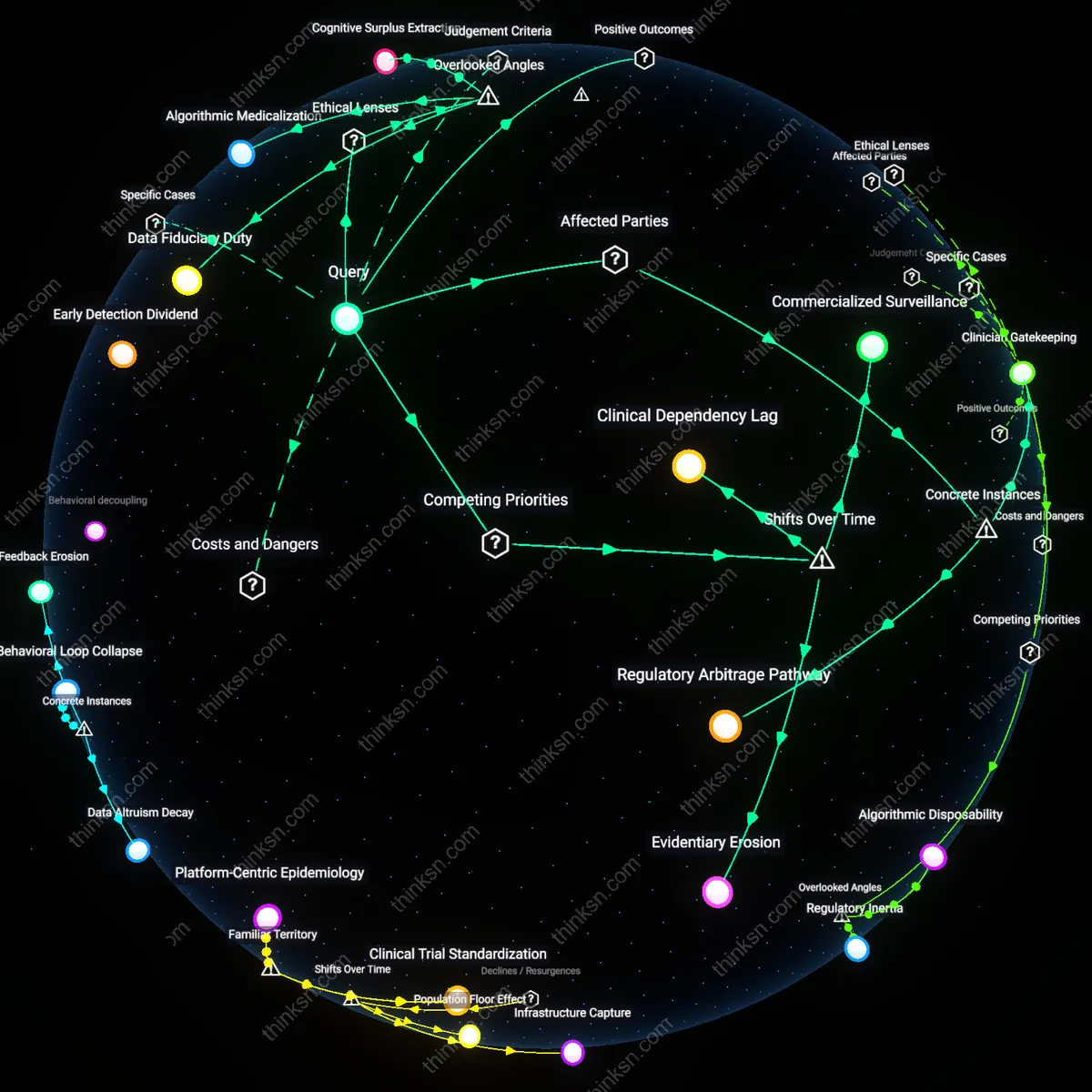

Funding Feedback Atrophy

When National Institutes of Health grant panels favor proposals that cite AI-driven preliminary data, they create a path dependency. Investigators at universities like Duke or UCSF must then adopt mainstream AI-suggested hypotheses to secure funding, even when local clinical observations point to alternative etiologies. Rural and Indigenous health research is especially exposed, because data scarcity in these populations biases AI toward urban, Western norms. The shift entrenches epistemic homogeneity by making AI suggestions gatekeepers of legitimacy. This crowds out investigator-initiated, context-sensitive research that lacks algorithmic validation. The overlooked consequence is not reduced innovation per se but the gradual atrophy of funding ecosystems that once supported outlier thinking, turning AI from a tool into a systemic filter.