Should Biotech Researchers Bet on AI or Deepen Biology Skills?

Analysis reveals 4 key thematic connections.

Key Findings

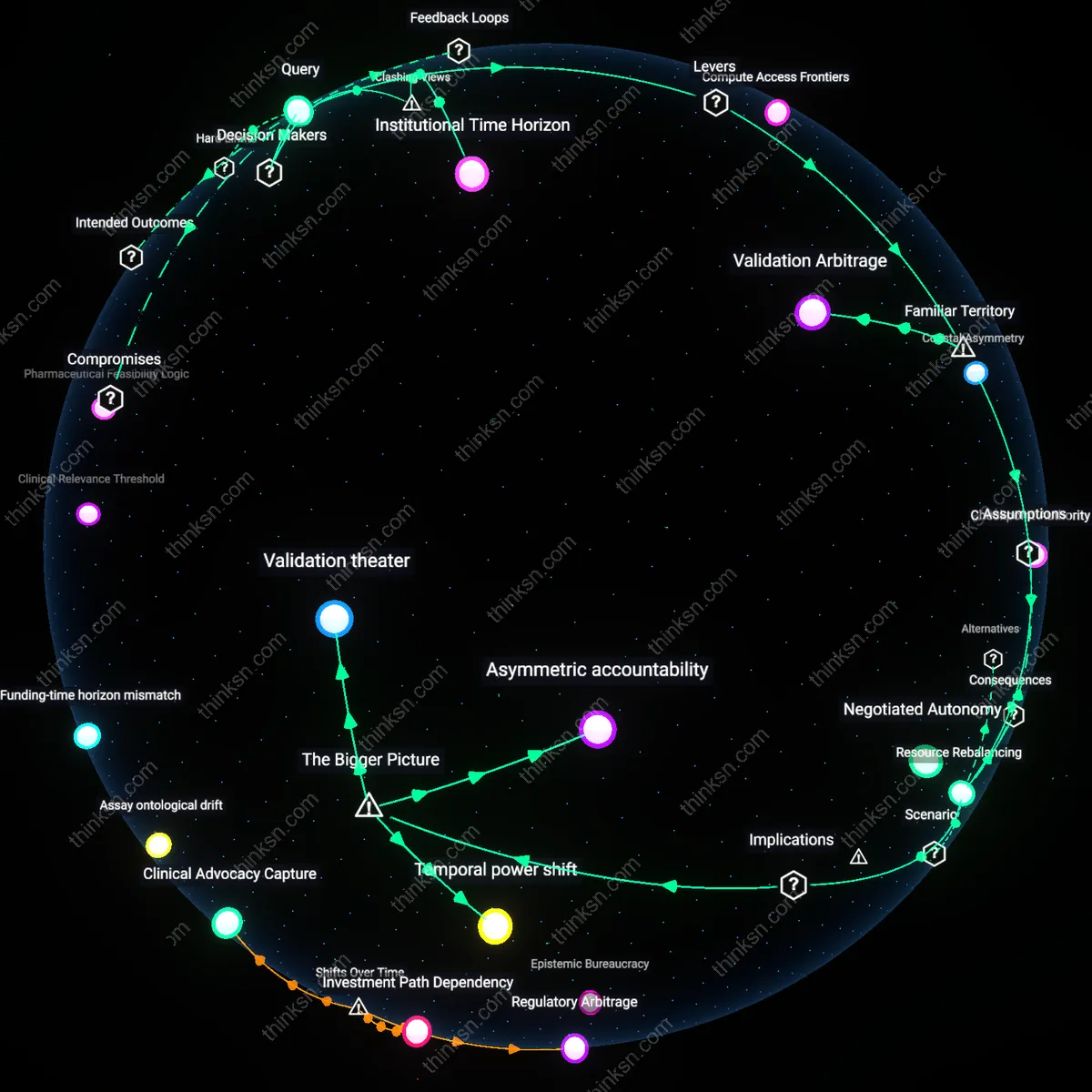

Resource Rebalancing

Direct experimental teams to allocate 15% of annual wet-lab budgets toward AI tool integration tied to specific, milestone-driven pilot projects. This frames the investment not as a replacement for bench work but as conditional co-investment. Bench scientists retain control over validation gates, which creates feedback loops between computational prediction and biological grounding. This is often overlooked because public discourse frames AI as an autonomous disruptor rather than as a collaborator calibrated through experimental constraints. What is actually at stake is not competition for funding but renegotiation of authority over experimental design.
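
As a rough illustration of this conditional co-investment, the Python sketch below earmarks 15% of a wet-lab budget and releases it in equal tranches, one per milestone. It stops at the first milestone the bench team has not validated. The equal-tranche split, the stop-at-first-failure rule, and the names `Milestone`, `ai_reserve`, and `released_funds` are assumptions made for this example, not a prescribed policy.

```python
from dataclasses import dataclass


@dataclass
class Milestone:
    """One pilot-project milestone; `bench_validated` is set by the wet-lab team."""
    name: str
    bench_validated: bool


def ai_reserve(annual_wet_lab_budget: float, share: float = 0.15) -> float:
    """Portion of the wet-lab budget earmarked for AI tool integration."""
    if not 0.0 <= share <= 1.0:
        raise ValueError("share must be between 0 and 1")
    return annual_wet_lab_budget * share


def released_funds(annual_wet_lab_budget: float, milestones: list[Milestone],
                   share: float = 0.15) -> float:
    """Release the reserve in equal tranches (an assumed split), stopping at the
    first milestone that bench scientists have not validated."""
    if not milestones:
        return 0.0
    tranche = ai_reserve(annual_wet_lab_budget, share) / len(milestones)
    released = 0.0
    for milestone in milestones:
        if not milestone.bench_validated:
            break  # validation gate closed: remaining tranches stay with the wet lab
        released += tranche
    return released
```

Consider a budget of 2,000,000 with three milestones, where only the first two pass bench validation. In that case `released_funds` returns 200,000, and the unreleased tranche stays under wet-lab control.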

Validation Arbitrage

Design incentive structures that reward teams for reducing time-to-validation with AI, but only if error rates fall below project-specific biological noise baselines derived from historical assay performance. This ties AI's value to its ability to outperform existing workflows rather than merely accelerate them, a distinction buried under widespread enthusiasm for speed and scale. The mechanism operates through internal R&D accounting systems that track opportunity cost per failed experiment. These systems reveal that fast decisions are not always valuable when biological context is underspecified.
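
The incentive rule can be made concrete with a short Python sketch. It assumes that the project's noise baseline is the mean error rate of its historical assays; a lower percentile would be an equally defensible choice. Time saved is credited only when the AI-assisted workflow is both faster and below that baseline. The function names and the use of "days saved" as the reward unit are hypothetical.

```python
from statistics import mean


def noise_baseline(historical_error_rates: list[float]) -> float:
    """Assumed baseline: mean error rate of the project's historical assays."""
    if not historical_error_rates:
        raise ValueError("need at least one historical assay error rate")
    return mean(historical_error_rates)


def reward_days_saved(baseline_days: float, ai_days: float,
                      ai_error_rate: float,
                      historical_error_rates: list[float]) -> float:
    """Credit days saved only if the AI-assisted workflow is both faster and
    less error-prone than the project's own biological noise baseline."""
    faster = ai_days < baseline_days
    below_noise = ai_error_rate < noise_baseline(historical_error_rates)
    if faster and below_noise:
        return float(baseline_days - ai_days)
    return 0.0  # speed alone earns no credit
```

Take historical assay error rates of 0.10, 0.12, and 0.08. A workflow that cuts validation from 60 to 40 days at a 5% error rate earns 20 days of credit. The same speedup at a 15% error rate earns none.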

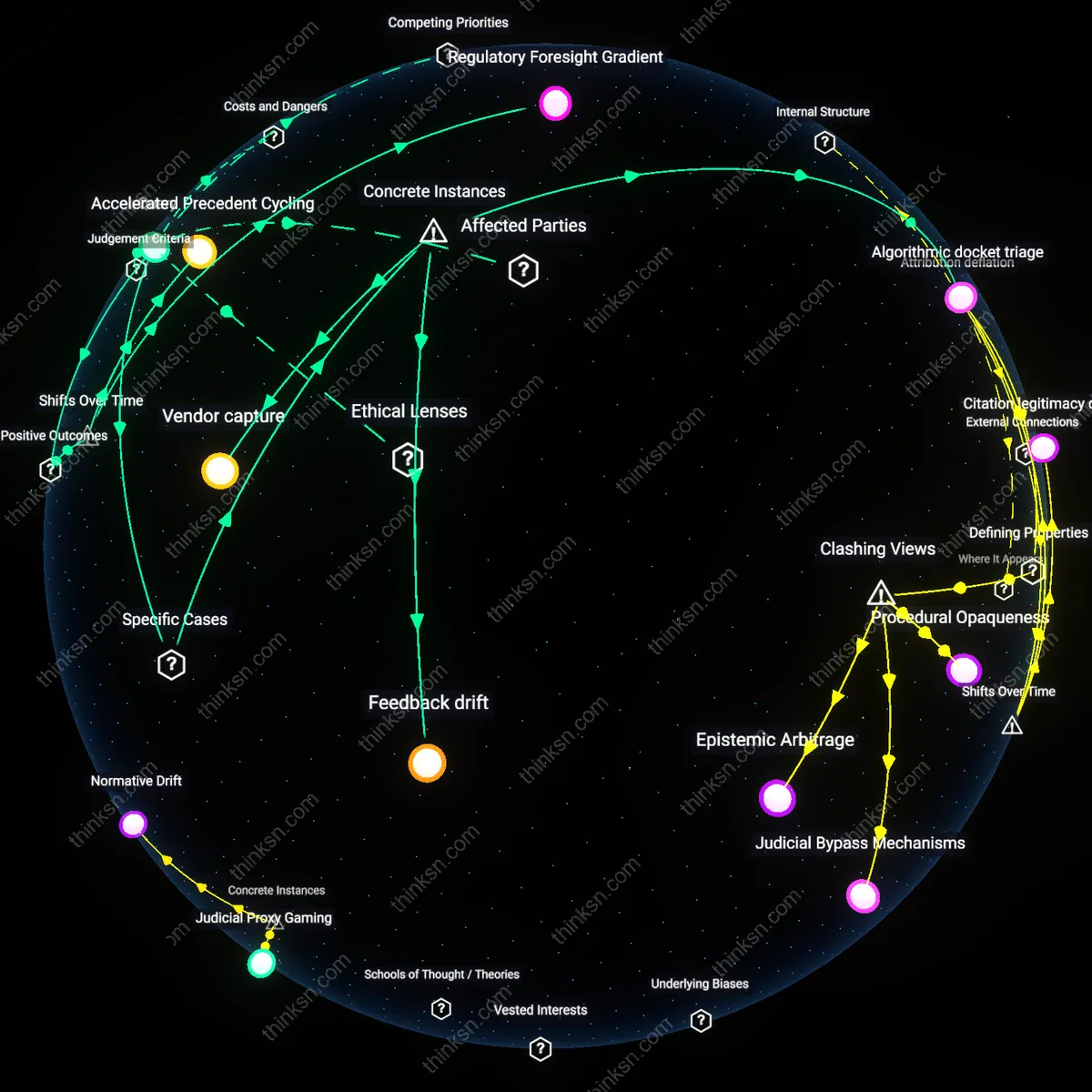

Venture Calculus

Prioritize AI-driven drug discovery by aligning biotech R&D strategy with the risk-reward logic of late-stage pharmaceutical venture capital rather than with scientific novelty. Senior researchers must recognize that AI investments are being funneled through consortiums like Lab1636 or flagship companies such as Insitro. In these settings, machine learning outputs are treated as de-risked assets for rapid monetization regardless of biological interpretability, which shifts the unit of innovation from mechanism to marketable signal. This recasts research leaders as financial arbitrageurs within an ecosystem that rewards speed and scalability over biological depth. It also turns experimental biology into a cost center unless it is embedded in scalable data pipelines. The non-obvious insight is that AI's dominance stems not from superior science but from its fit within a venture infrastructure that treats data as futures contracts.

Institutional Time Horizon

Advance experimental biology expertise by exploiting the misalignment between AI’s iterative software cycles and biopharma’s decade-long regulatory timelines, in which wet-lab mastery remains the sole validator of clinical translatability. Decision-makers at organizations like the NIH or the Wellcome Trust, unlike VC-backed AI startups, still fund deep phenotyping and target validation work that resists algorithmic compression, preserving space for investigator-driven biology. The friction arises because AI promises near-term hits, while regulators, payers, and physicians demand mechanistic accountability that only wet labs can provide. This makes experimental biology a regulatory necessity rather than a legacy function. Biological expertise therefore persists not through inertia but as a temporal anchor in a system where AI cannot fast-forward human biology.

Deeper Analysis

What happens to experimental team autonomy when AI predictions inform early-stage study design but bench scientists can veto results at validation checkpoints?

Negotiated Autonomy

At Amgen's Thousand Oaks R&D site in 2018, AI-driven design recommendations were overridden during validation when bench teams rejected predicted CRISPR guide RNAs because of observed off-target effects. Veto power thus institutionalized a feedback loop in which algorithmic authority was contingent on empirical ratification by lab scientists. The resulting co-governance structure did not erode autonomy but renegotiated it at technical chokepoints. This nuance is overlooked in debates over AI encroachment because it challenges the binary of displacement versus retention of human control.

Epistemic Friction

At the Broad Institute’s Genetic Perturbation Platform in 2020, machine learning models prioritized candidate gene targets for oncology screens. Validation bottlenecks then triggered repeated re-runs when experimentalists invalidated top-ranked predictions. Divergent epistemic criteria, algorithmic confidence versus replicability under lab conditions, produced structured delays that functioned as a form of resistance. The clash between statistical and experimental epistemologies thus becomes a site of de facto redistribution of control rather than mere conflict.

Checkpoint Authority

During the 2021 Novartis Institutes for BioMedical Research trial of AI-suggested compound libraries for kinase inhibition, project leads retained final sign-off at dose-response validation gates, and 40% of the algorithmically optimized molecules were rejected. This gatekeeping role made bench scientists arbiters of practical realizability. It also turned validation checkpoints into institutionalized veto arenas where tacit knowledge overruled probabilistic inference. Procedural power at sequential decision nodes can therefore outweigh informational dominance in shaping research trajectories.

Asymmetric Accountability

AI-informed study design with scientist veto rights concentrates responsibility for failure on bench scientists while insulating algorithm developers from feedback loops. This happens because validation-stage rejections are logged as experimental anomalies rather than model shortcomings. Machine learning teams therefore face no institutional pressure to recalibrate their predictions, which silently privileges computational authority over empirical scrutiny in drug discovery pipelines at institutions like Genentech or the Broad Institute.
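
The attribution asymmetry comes down to how a rejection is labeled. In the hypothetical log below, the machine learning team's recorded error rate counts only rejections tagged as model shortcomings. As a result, a log that files every rejection as an experimental anomaly reports no model error even when half the predictions failed at the bench. The `ValidationRecord` fields and the two label strings are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class ValidationRecord:
    """One AI-predicted candidate in a hypothetical validation log."""
    candidate: str
    rejected_at_bench: bool
    logged_as: str = ""  # assumed labels: "model_shortcoming" or "experimental_anomaly"


def recorded_model_error(log: list[ValidationRecord]) -> float:
    """Error rate the ML team sees: only rejections attributed to the model count."""
    if not log:
        return 0.0
    charged = sum(1 for r in log
                  if r.rejected_at_bench and r.logged_as == "model_shortcoming")
    return charged / len(log)


def actual_rejection_rate(log: list[ValidationRecord]) -> float:
    """Share of predictions the bench team rejected, regardless of how they were logged."""
    if not log:
        return 0.0
    return sum(1 for r in log if r.rejected_at_bench) / len(log)
```

The gap between the two functions' outputs is the feedback the model developers never receive.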

Temporal Power Shift

When AI shapes early-stage design but scientists retain late-stage veto power, authority subtly shifts from lab-based practitioners to data infrastructure designers. The shift occurs because the space of experimental possibilities is framed before bench work begins. Even if results are rejected at validation, the scope of which questions get asked, and which hypotheses are deemed plausible, has already been constrained by training data curated by computational biologists at centers like the Allen Institute. Resistance therefore becomes reactive rather than generative.

Validation Theater

The ability of bench scientists to veto AI-predicted outcomes at validation checkpoints creates an illusion of epistemic control without changing who dominates decisions. Rejections are costly and socially fraught within grant-driven universities like Stanford or MIT, so teams adjust their interpretations rather than challenge the models. Validation thus becomes a ceremonial checkpoint that preserves AI influence while dispersing blame for failure across diffuse team structures.

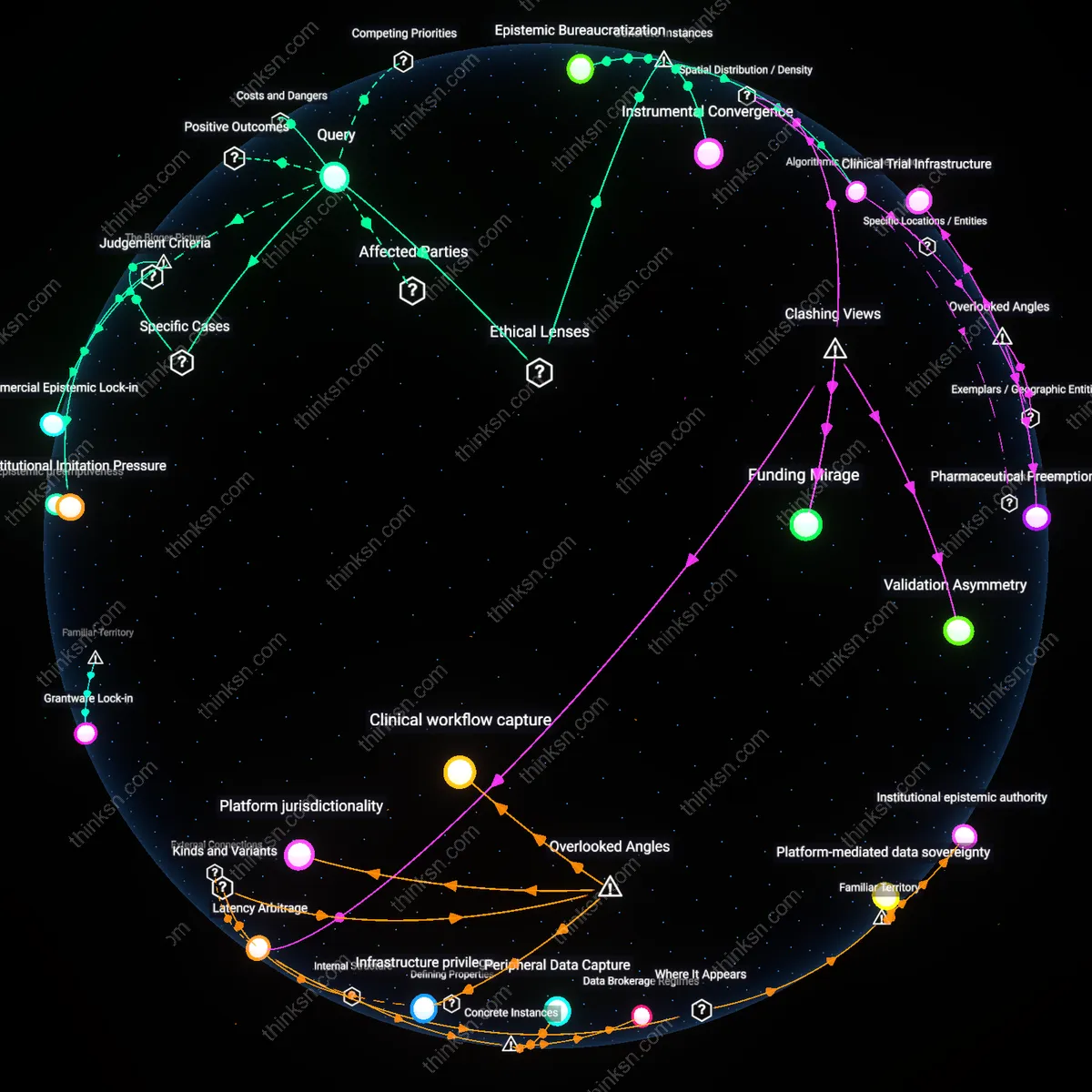

Where are the main hubs for AI-driven drug discovery funding and talent located, and how do they connect to biotech research centers?

Coastal Asymmetry

AI-driven drug discovery funding consolidated on the U.S. West Coast after 2015. This was not simply because of pre-existing biotech density but because venture capital shifted toward hybrid AI-biology startups centered in San Francisco and Seattle, where Seattle-area cloud infrastructure firms like AWS and Microsoft formed early partnerships with emerging biotechs such as Recursion Pharmaceuticals. This reconfigured the spatial economy of drug discovery. It pulled talent away from traditional East Coast academic hubs like Boston and redirected machine learning engineers into biopharma applications, part of a post-2010 recalibration in which computational access became more decisive than proximity to medical institutions. The non-obvious outcome is that AI’s geographic pull inverted historical biotech centrality, making West Coast tech ecosystems the new upstream source of innovation.

Pharma Pivot

Between 2018 and 2022, European biotech research centers such as the Francis Crick Institute and the Max Planck Institutes began integrating AI consortia. This did not come from grassroots startup growth but from legacy pharmaceutical firms like Novartis and AstraZeneca strategically repurposing existing drug screening pipelines and relocating computational teams near academic clusters in Cambridge and Heidelberg. The transition marked a reversal from decentralized academic funding to corporate-led AI integration, in which talent was reabsorbed into vertically structured discovery units. It exposed a lag in Europe’s startup formation that favored institutional consolidation over entrepreneurial dispersion. The underappreciated dynamic is that Europe’s AI drug discovery network emerged through the reconfiguration of mid-20th-century research infrastructures rather than through new spatial concentrations.

Migration Gradient

After 2020, a transnational flow of AI talent toward Singapore and Shanghai emerged as Beijing’s regulatory tightening on data usage coincided with the Singapore Economic Development Board’s targeted subsidies for AI-biotech convergence. These conditions attracted spinouts from U.S. institutions like Stanford and MIT that run dual-node operations across Pacific time zones. The shift bypassed traditional biotech anchor cities by leveraging state-backed compute resources and relaxed clinical data export rules. It formed a new eastward-trending innovation corridor in which talent clustering depends not on university proximity but on alignment with geopolitical data policy. The overlooked mechanism is that since 2021, talent density in AI-driven discovery has tracked regulatory arbitrage rather than scientific infrastructure alone.

Tax Sovereignty Arbitrage

The main hubs for AI-driven drug discovery funding cluster in jurisdictions with bilateral innovation tax credits that allow double-dipping on R&D expenditures. One example is the combination of the UK’s RDEC regime and Singapore’s Productivity and Innovation Credit, which together create de facto offshore funding siphons. These overlapping incentives let firms like BenevolentAI and other AI-driven biotechs in the Cambridge-Singapore corridor reroute capital through affiliated entities, amplifying effective R&D returns without physically relocating talent. Biotech research centers are thus bound together not by proximity but by fiscal topology. This mechanism is invisible in conventional maps of innovation hubs, which emphasize talent density or venture capital, yet it determines where capital can compound rather than merely accumulate. The overlooked dimension is that tax code interoperability, not just scientific infrastructure, governs the geography of AI drug discovery investment flows.

Clinical Trial Jurisdiction Chains

AI drug discovery talent increasingly clusters not around traditional pharma headquarters but near 'regulatory edge' sites such as Budapest, Cape Town, and Medellín. In these places, fast-track trial approvals and lax genomic consent laws allow rapid in-human validation of AI-generated targets. Companies like Insilico Medicine and Recursion Pharmaceuticals anchor satellite teams in these jurisdictions not for cost savings but to compress the feedback loop between algorithmic prediction and clinical signal, effectively turning regulatory permissiveness into a hidden R&D accelerator. Standard analyses treat talent distribution as driven by academic prestige or venture ecosystems. The real pull, however, is access to jurisdictions that function as clinical validation spillover zones, which creates a shadow network of trial-ready hubs that determines where AI-driven discoveries become biological realities.

Compute Access Frontiers

The dominant hubs for AI-driven drug discovery are co-locating with national high-performance computing (HPC) reserves in energy-abundant states, such as Finland’s LUMI supercomputer or the U.S. Department of Energy’s Oak Ridge National Laboratory. At these sites, non-commercial access to exascale and pre-exascale systems is granted under 'strategic health computing' mandates. Unlike public cloud-based AI research, these facilities enable training of whole-body physiological models at a fidelity unattainable by venture-backed startups. They draw talent from biotech centers like Boston and Basel into hybrid physics-biology compute enclaves. The overlooked dependency is that algorithmic innovation in drug discovery is becoming constrained not by data or funding but by sovereign-controlled compute thresholds, making HPC borderlands the new pivot points in the global biotech knowledge chain.

Infrastructural Hijacking

AI-driven drug discovery funding and talent are not concentrated near biotech research centers. Instead, they are actively repurposed from foundational tech infrastructure in digital platform hubs like Silicon Valley and Seattle, where cloud providers and AI labs operate at scale. Major investments flow through platforms like NVIDIA’s Cambridge-1 supercomputer or Amazon’s AWS HealthOmics, which extend infrastructure developed for general-purpose AI and cloud workloads into biomedicine and reframe biomedical research as a data optimization problem. This diverts both capital and skilled engineers away from traditional biotech clusters like Boston or San Diego. The shift suggests that proximity to high-performance computing and proprietary data pipelines matters more than adjacency to wet labs, challenging the assumption that innovation follows biopharma’s geographic legacy. The realignment severs talent recruitment from biological expertise and anchors it instead to software-centric epistemic cultures that redefine what counts as a discovery.

Talent Arbitrage Zones

The primary hubs for AI-driven drug discovery talent are not where biotech research centers are strongest. They are in lower-cost, academically agile cities like Copenhagen, Tel Aviv, and Edmonton, where elite AI researchers are drawn by concentrated university-industry partnerships that decouple innovation from venture density. Institutions like Amii at the University of Alberta or Israel’s Weizmann Institute cultivate hybrid scientists trained equally in deep learning and molecular modeling. These institutions license breakthroughs to U.S. biotech firms without relocating personnel, effectively exporting intellectual value while retaining human capital locally. This contradicts the dominant narrative that innovation requires physical co-location with venture capital in Boston or San Francisco. It points instead to a globalized talent arbitrage in which cognitive surplus in semiperipheral science cities is exploited without structural investment in their biotech ecosystems. This separation of brainpower from capital centers undermines the myth of the 'innovation district' as a necessary condition for discovery.

Regulatory Positioning

Funding for AI-driven drug discovery concentrates in Luxembourg, Singapore, and Dublin not because of proximity to research excellence but because these cities are regulatory enclaves whose data sovereignty regimes shield cross-border biometric AI training from stringent clinical oversight. These jurisdictions attract firms like BenevolentAI and Recursion Pharmaceuticals. Such firms route algorithmic development through them to exploit legal ambiguities around synthetic data and black-box models, deferring scrutiny until late-stage validation occurs elsewhere. This spatial strategy inverts the expected flow: scientific activity is relocated from discovery sites to compliance gateways, privileging jurisdictional advantage over scientific collaboration. The most critical 'proximity' in AI drug discovery is therefore not to labs or talent but to permissive legal architectures that defer accountability. Innovation becomes administratively choreographed, not geographically co-located.

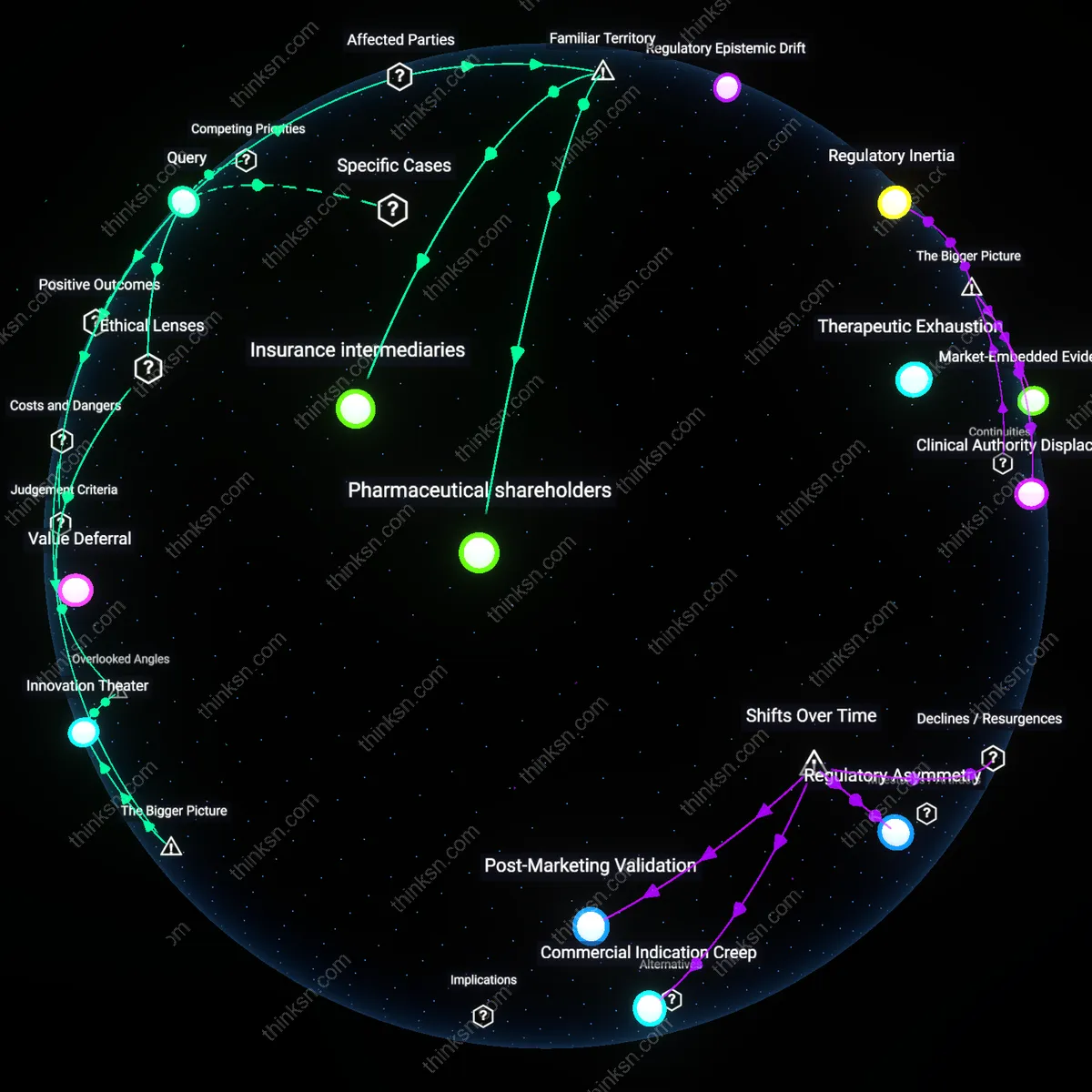

When AI picks the most promising cancer targets but lab results keep contradicting those choices, whose judgment ends up deciding which path to follow?

Principal Investigator Authority

The principal investigator (PI) of the laboratory ultimately decides which cancer targets to pursue, because the PI controls experimental design, resource allocation, and validation criteria within the research program. Despite AI-generated predictions, the PI retains epistemic authority by virtue of funding responsibility, publication record, and peer recognition, which anchor what counts as credible science in academic oncology. This gatekeeping role is underappreciated in public narratives that portray AI as a disruptive force. In practice, adoption depends on the entrenched norms of lab-led empiricism and the social capital of senior scientists.

Clinical Relevance Threshold

Oncologists and translational medicine boards determine which cancer targets move forward by enforcing a standard of clinical plausibility that AI outputs must meet to justify human trials. These practitioners filter AI proposals through real-world patient outcomes, toxicity profiles, and treatment feasibility, which are practical constraints that lab models rarely encode. The power of this medical gatekeeping is obscured in popular discourse focused on discovery. Yet it is the patient-facing weighing of harm against benefit that ultimately translates scientific promise into actionable therapy.

Pharmaceutical Feasibility Logic

Drug development executives at biotech firms decide which targets advance based on developability, intellectual property position, and market potential, not just biological promise. When AI-prioritized targets fail in lab validation, companies fall back on proprietary decision matrices that weigh technical risk against return on investment. They often revert to targets with existing platform compatibility or regulatory precedent. This commercial calculus operates silently beneath scientific debates, setting the de facto direction of target selection even when it goes unacknowledged in academic discussions of 'objective' discovery.
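
The text does not describe what these proprietary matrices contain. The sketch below therefore substitutes a simple risk-adjusted expected-value score: expected return discounted by the probability of technical failure, minus development cost, with an assumed 10% bonus for platform compatibility. All field names, weights, and numbers are hypothetical. The sketch only shows how a target that failed validation, and so carries a higher technical risk, can be outranked by a platform-compatible legacy target.

```python
from dataclasses import dataclass


@dataclass
class TargetCandidate:
    """Hypothetical inputs to a commercial target-selection score."""
    name: str
    technical_risk: float      # assumed probability of technical failure, 0..1
    expected_return: float     # assumed value if the program succeeds
    development_cost: float    # assumed cost to reach the next decision point
    platform_compatible: bool  # fits an existing modality or platform


def risk_adjusted_score(t: TargetCandidate, platform_bonus: float = 0.1) -> float:
    """Expected return discounted by technical risk, net of cost, with an
    assumed multiplicative bonus for platform-compatible targets."""
    value = (1.0 - t.technical_risk) * t.expected_return - t.development_cost
    if t.platform_compatible and value > 0:
        value *= 1.0 + platform_bonus  # bonus only rewards positive value
    return value


def rank_targets(candidates: list[TargetCandidate]) -> list[str]:
    """Order candidate names by score, highest first."""
    ranked = sorted(candidates, key=risk_adjusted_score, reverse=True)
    return [t.name for t in ranked]
```

Consider an AI-prioritized target with 80% technical risk, an expected return of 1,000, and a cost of 100; it scores about 100. A platform-compatible legacy target with 50% risk, a return of 600, and the same cost scores about 220. `rank_targets` therefore places the legacy target first.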

Technocratic Authority

The authority to override AI-prioritized cancer targets resides with senior academic oncologists at institutions like MD Anderson, who control access to patient cohorts and funding pipelines. These scientists, embedded in the NIH grant system, use peer review networks and institutional prestige to validate or dismiss computational findings. This was seen when the 2017 PancanQTL consortium’s AI-recommended target in pancreatic ductal adenocarcinoma was shelved for lack of mechanistic plausibility in mouse models. Epistemic gatekeeping rooted in experimental tradition, not predictive accuracy, thus determines translational momentum. The non-obvious insight is that AI outputs are treated as hypotheses only when vouched for by established biological narratives, not on the strength of algorithmic confidence.

Capital Allocation Logic

Biotech venture capitalists at firms like ARCH Ventures ultimately decide which AI-proposed cancer targets advance by steering follow-on funding from initial academic discovery toward clinical validation. When Recursion Pharmaceuticals’ AI platform nominated a novel lysosomal target for glioblastoma in 2021, conflicting wet-lab replication attempts did not halt development; investors instead prioritized speed and patent position over consensus biology. This reflects a capitalist triage mechanism in which market exclusivity and development tempo override reproducibility in early phases. The underappreciated dynamic is that financial instruments, not scientific falsification, structure the risk calculus for pursuing contested targets.

Epistemic Bureaucracy

Regulatory scientists at the European Medicines Agency (EMA) exert decisive influence by conditioning trial authorization on alignment with established biomarker frameworks. This occurred in 2019, when an AI-suggested immuno-oncology target from BenevolentAI was rejected for Phase I because of non-standard validation protocols. These officials work through standardized assessment grids that privilege continuity with historical data over novel inference, effectively turning regulatory templates into de facto scientific orthodoxy. The overlooked mechanism is that bureaucratized evaluation, ostensibly neutral, embeds a conservative epistemology that renders AI-driven disruptions illegible unless they are translated into legacy paradigms.

Funding Time-Horizon Mismatch

The trajectory of cancer target validation is ultimately steered by the shortest institutional time horizon among funders, not by scientific consensus or AI confidence. Venture capital deadlines, typically 3–5 years, override academic patience and AI’s probabilistic long-range forecasts. They force the abandonment of targets that need longer to mature despite their computational promise. This mechanism is embedded in grant cycles and portfolio management rhythms at institutions like the NIH and accelerators like Flagship Pioneering. It systematically biases pipelines toward rapid, iterative validation, even when that contradicts AI output. The non-obvious dimension is not error in the AI or in wet-lab execution but the temporal misalignment between computational foresight and capital deployment cycles, which determines which path receives sustained attention.
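
The binding-constraint logic can be sketched in a few lines of Python. The shortest horizon among a project's funders sets the cutoff, and any target whose expected maturation exceeds it is dropped, however confident the model was. The funder names, horizons, and maturation times used with it are illustrative assumptions.

```python
def binding_horizon(funder_horizons_years: dict[str, float]) -> float:
    """The shortest funder horizon is the one that constrains the project."""
    if not funder_horizons_years:
        raise ValueError("need at least one funder")
    return min(funder_horizons_years.values())


def sustained_targets(maturation_years: dict[str, float],
                      funder_horizons_years: dict[str, float]) -> list[str]:
    """Keep targets whose expected maturation fits inside the binding horizon.
    AI confidence is deliberately absent from this filter."""
    horizon = binding_horizon(funder_horizons_years)
    return [target for target, years in maturation_years.items()
            if years <= horizon]
```

With funder horizons of 4, 5, and 10 years, the 4-year horizon binds. A target expected to mature in 7 years is therefore dropped even if the AI ranked it first.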

Assay Ontological Drift

The choice of which data to trust in target validation ultimately follows whose laboratory protocols define ‘success’ in protein engagement, not objective correspondence with AI predictions. Core facilities at major pharmaceutical companies, such as AstraZeneca’s IMED Biotech Unit, normalize assay readouts that treat binding affinity as a proxy for functional inhibition. This distorts what counts as a contradiction when AI-prioritized targets behave unexpectedly in cell-based assays. This operational definition of biological truth is rarely challenged, even when AI models incorporate more complex regulatory cascades. What is overlooked is that the contradiction is not between AI and reality but between AI and a stabilized, tautological assay regime, in which persistence in the face of discord hinges on which lab’s ontology holds interpretive authority.

Regulatory Arbitrage

The judgment of pharmaceutical regulators ultimately decides which AI-suggested cancer targets advance when lab validation fails. Post-2010 shifts toward accelerated approval pathways enabled companies to bypass traditional preclinical consensus by leveraging AI-generated probabilistic evidence. Firms can thus frame inconclusive lab results as transitional noise rather than disproof, especially at the U.S. FDA’s oncology center, where surrogate endpoints and real-world data now supplement animal studies. Regulatory bodies have therefore become decisive arbiters not through scientific oversight but through procedural flexibility that emerged during the precision medicine era.

Investment Path Dependency

Venture capital consortia determine the trajectory of AI-prioritized cancer targets when experimental results diverge. After the 2015–2018 boom in AI biotech funding, early financial commitments created irreversible momentum in which abandoning a target would undermine portfolio valuation logic. Firms like Flagship Pioneering or Andreessen Horowitz exert judgment not through scientific review but by recalibrating development timelines and redefining ‘validation’. This transforms what was once a discovery-phase decision into a sunk-cost governance mechanism. It also exposes how the financialization of biomedical innovation has replaced lab consensus with capital persistence as the de facto gatekeeper.

Clinical Advocacy Capture

Oncologist-led patient advocacy networks end up validating contested AI-generated targets when contradictory lab data emerge. In the 2020s, digital trial platforms and decentralized enrollment systems have empowered clinician-patient coalitions to demand access to AI-proposed therapies under compassionate use or basket trial designs, bypassing traditional gatekeeping structures. This shift reflects the post-blockbuster era's erosion of centralized R&D control, in which therapeutic credibility is increasingly shaped not by preclinical reproducibility but by mobilized clinical demand. It reveals a new form of epistemic authority rooted in therapeutic urgency rather than experimental consistency.