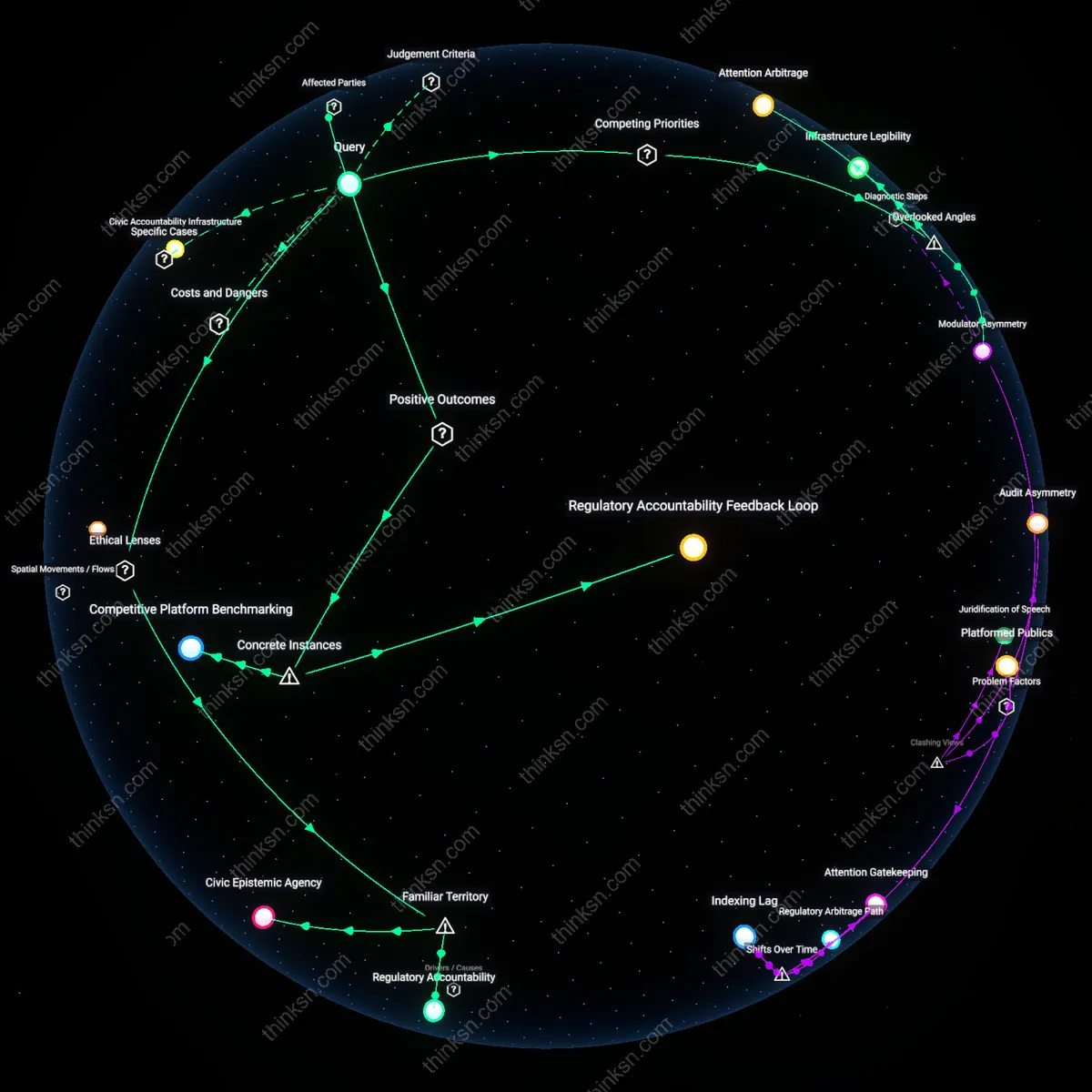

Judicial Empowerment

France's Constitutional Council (Conseil constitutionnel) rapidly invalidated key provisions of the Avia Law in June 2020, conditioning platform liability on judicial review rather than administrative takedown orders. The ruling forced the state to route content moderation through courts and established a precedent that constrained executive overreach in non-election periods. This shift empowered judges as gatekeepers of free expression online, embedding legal scrutiny into misinformation governance and deterring hasty removals by platforms seeking to comply with state demands. The non-obvious consequence was not weaker enforcement but a systemic rebalancing toward judicial oversight, transforming how legality was enforced in digital speech regulation.

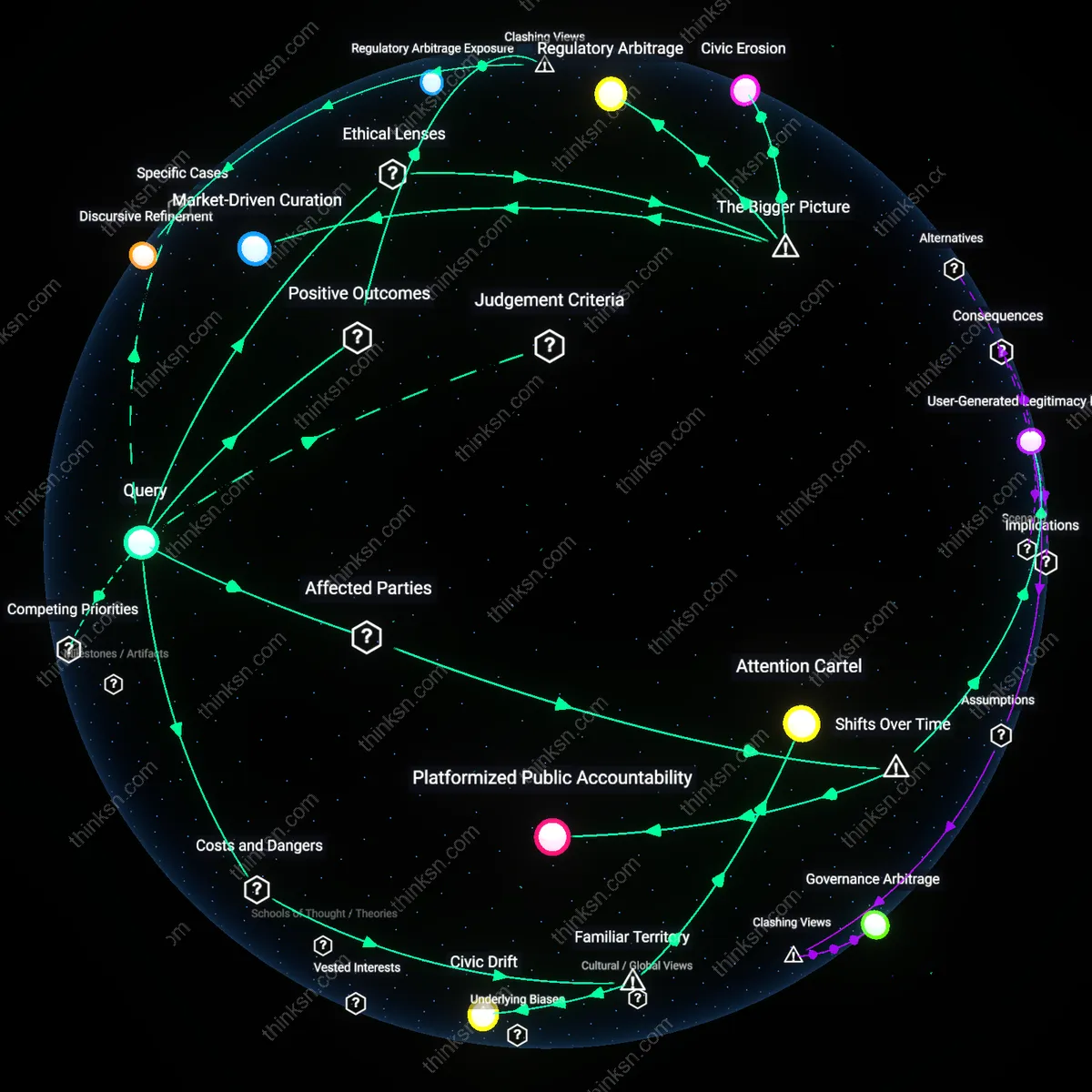

Regulatory Arbitrage

After the Avia Law’s partial invalidation, major platforms strategically aligned their content moderation practices with the emerging EU Digital Services Act (DSA) framework, using its harmonized rules to resist fragmented national measures like Avia, thereby standardizing responses to misinformation across member states. This alignment allowed platforms to replace ad hoc compliance with centralized moderation protocols governed by Luxembourg-based compliance units and Brussels-facing legal teams. The underappreciated dynamic was that platforms leveraged supranational regulation to depoliticize national pressure, turning EU-level standardization into a shield against aggressive domestic regimes.

Public Accountability Deflection

The Avia Law’s failure to survive constitutional review led French regulators to shift blame onto platforms’ opacity, accelerating demands for algorithmic transparency and auditability under the DSA. This in turn recast misinformation management as a technical governance problem solvable through disclosure rather than state-directed removal. The reframing allowed policymakers to maintain political momentum by targeting ‘black box’ systems instead of asserting direct control, redirecting public accountability toward platform design rather than state efficacy. The overlooked mechanism was the substitution of procedural scrutiny for regulatory ambition, allowing both states and platforms to appear responsive without decisive intervention.

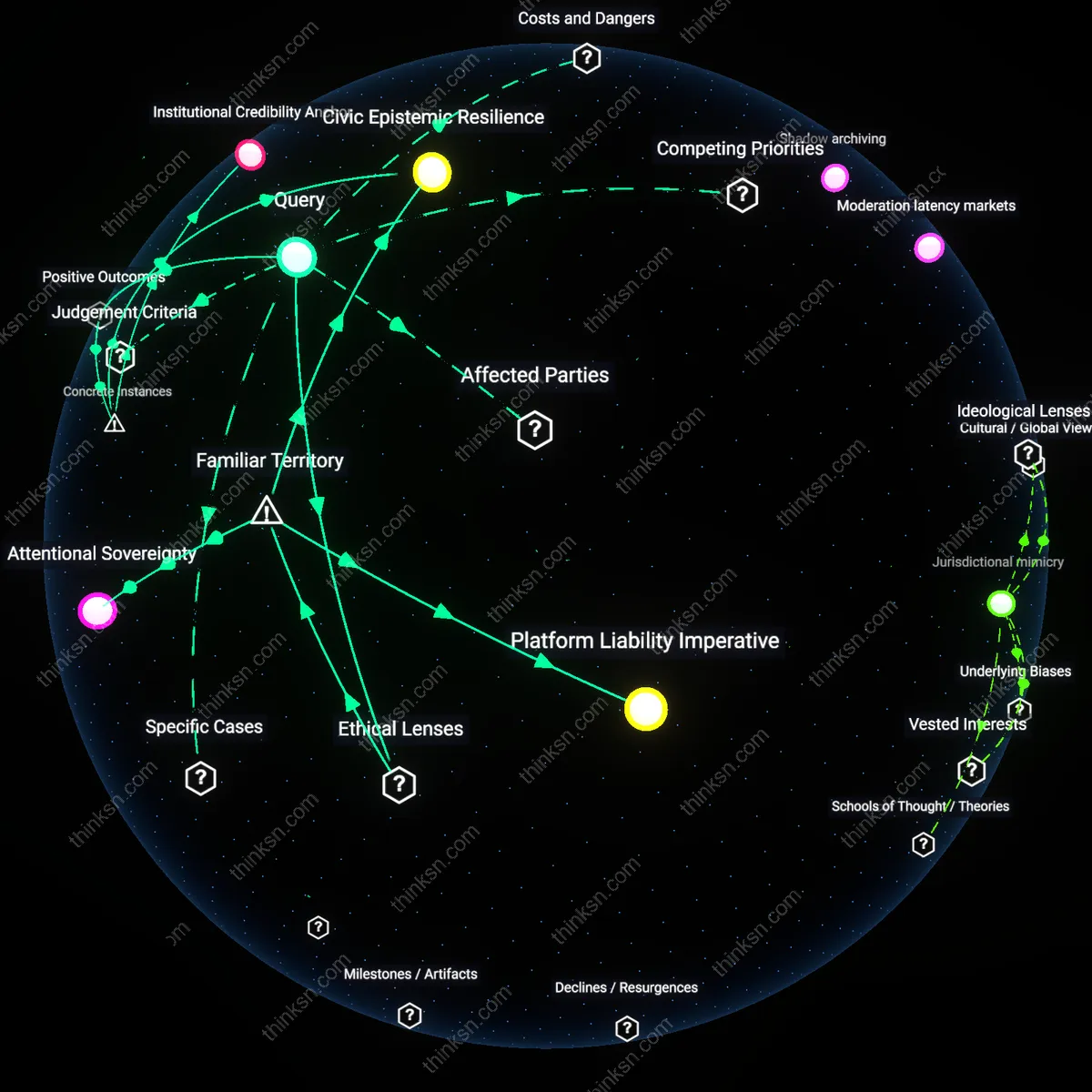

Shadow Archiving

Platforms began systematically preserving deleted misinformation content for internal threat intelligence rather than public transparency, a shift triggered by insurers requiring demonstrable risk-mitigation behaviors post-Avia Law. This created a clandestine feedback loop where deplatformed narratives were analyzed to pre-identify destabilizing content patterns beyond electoral crises, yet these archives remained inaccessible to researchers. The non-obvious mechanism is actuarial pressure from cyber-insurance underwriters, who treated unregulated misinformation flows as liability exposures, thereby incentivizing data hoarding over disclosure—a move that redefined platform accountability as a forensic resource for private risk management, not public scrutiny.

Moderation Latency Markets

After the Avia Law, third-party fact-checking vendors started pricing their services based on the speed of 'pre-emptive tagging' of borderline content, leading platforms to optimize for temporal proximity to upload rather than contextual accuracy. This created an implicit market in moderation latency, where faster takedowns generated higher compliance scores under French regulatory expectations, even outside election periods. The overlooked dynamic is how billing models for outsourced moderation—structured around response time—rewired content governance toward performative swiftness, privileging algorithmic flagging over editorial assessment and effectively laundering regulatory pressure into automated acceleration.

Jurisdictional Mimicry

Smaller EU member states began adopting imitation versions of the Avia Law’s procedural mechanisms—such as mandatory reporting timelines and escalation tiers—not because of domestic demand, but to force platforms into standardized cross-border enforcement templates that reduced local operational overhead. This cascade of mimicry allowed platforms to consolidate moderation pipelines under uniform compliance interfaces, effectively outsourcing national policy variation to procedural harmonization. The hidden dependency is that platform scalability interests inadvertently rewarded legislative isomorphism, turning national laws into interoperable modules and shifting the center of gravity in content governance from legal substance to administrative formatting.

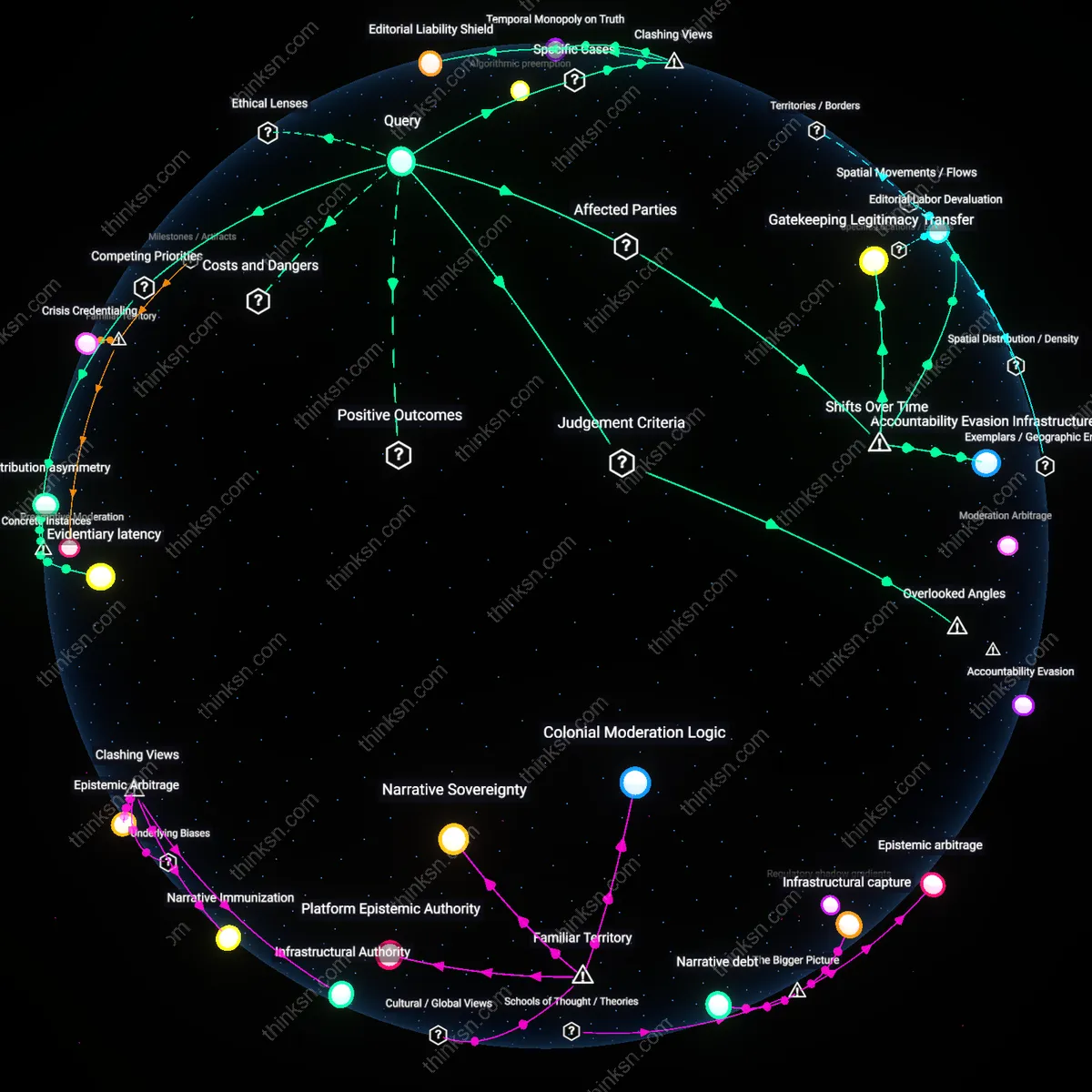

Judicial Bypass Mechanism

France's Avia Law was struck down in 2020 by the Constitutional Council, yet platforms expanded automated takedown systems for non-election misinformation by aligning with the law’s procedural skeleton—urgency, definitional breadth, and state-backed legitimacy—while shifting enforcement to opaque internal tribunals shielded from public scrutiny. This post-legal adaptation reveals how platforms adopted the law’s operational tempo and scope without its accountability, treating constitutional invalidation not as a setback but as a clearance to mimic its powers through private governance. The non-obvious insight is that legal defeat became the condition for more durable, less contestable control—a reversal of the intuitive link between judicial rejection and policy termination.

Regulatory Imaginary Infrastructure

After the Avia Law’s annulment, European regulators and platforms began treating the law as if it had succeeded, referencing its categories and timelines in subsequent legislation like the Digital Services Act, thereby constructing a fictional continuity where a failed law became the blueprint for binding norms. This collective pretense operates through institutional memory laundering—where the symbolic force of a law outlives its legal death, shaping compliance behaviors and technical designs despite having no force. The counterintuitive outcome is that legislative failure can generate stronger normative pressure than enactment, exposing how regulatory legitimacy is often performative rather than juridical.

Content Hyperlegality

In the years following the Avia Law, platforms began classifying non-election misinformation as potential 'hate-adjacent' speech to justify preemptive removal under broader human rights frameworks, reframing public disinformation as a threat to dignity rather than to truth. The move was enabled by French civil society groups citing the Avia Law as evidence of societal harm. This pivot leveraged the law’s ethos while discarding its letter, embedding its intent into AI moderation models trained on French legal rhetoric that is now detached from its statutory origin. The overlooked mechanism is that laws can posthumously colonize algorithmic logic, making their nullity irrelevant to their influence.

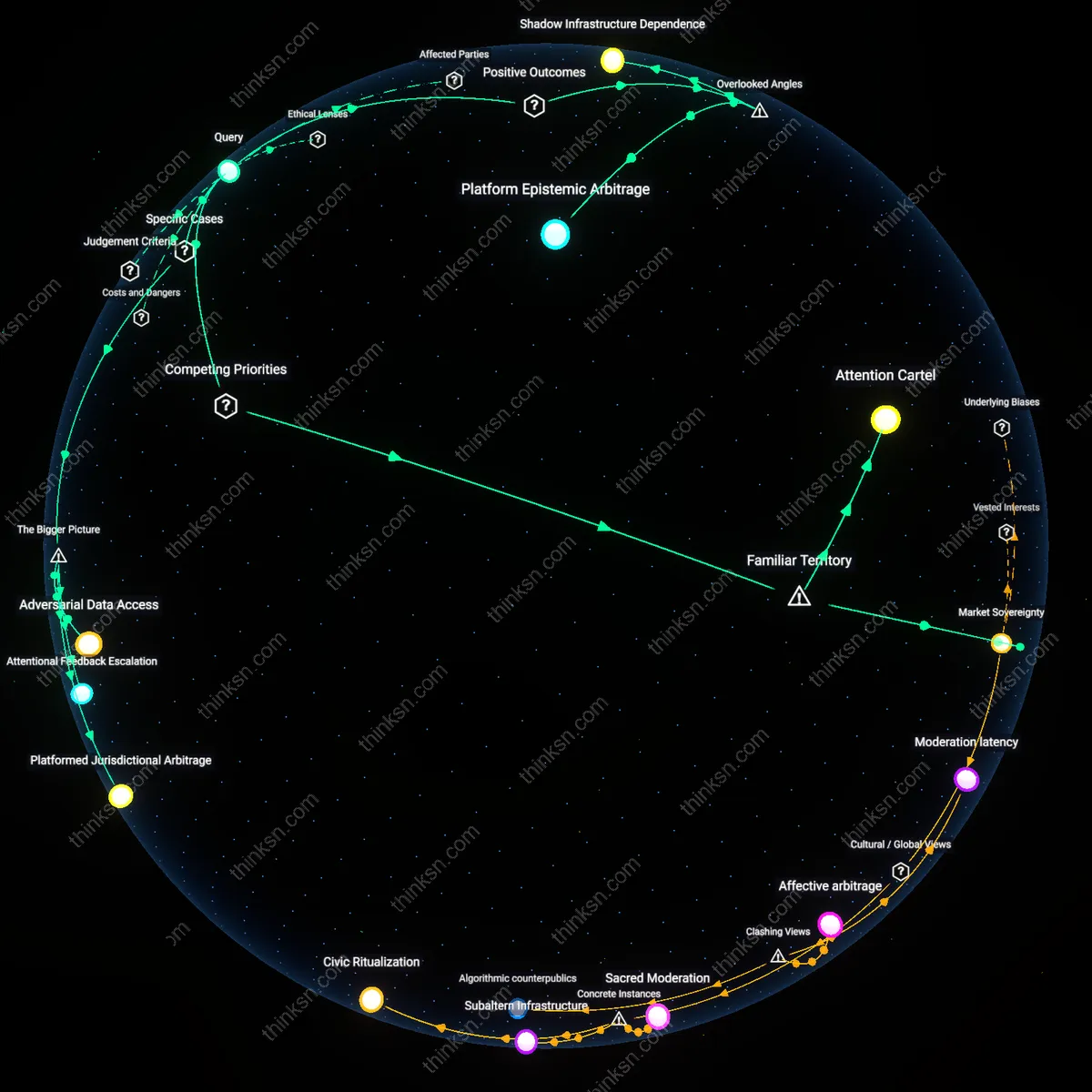

Moderation Drift

After France's Avia Law was adopted in 2020, YouTube shifted its enforcement of misinformation policies on non-election content by retroactively adjusting age-restriction algorithms originally designed for extremist content to limit visibility of vaccine misinformation on channels like Doctissimo and Jean-Michel Cohen in 2021. This recalibration was not a new policy but a repurposing of existing flagging systems, revealing how platforms absorb regulatory pressure by silently migrating moderation tools across domains, making enforcement less visible and less accountable. The non-obvious mechanism here is gradual functional creep in AI moderation systems, where rules built for one domain, in this case extremist content, become latent infrastructure for broader behavioral control.

Regulatory Arbitrage Spaces

Following the Avia Law's emphasis on fast takedowns, Telegram opened dedicated French-language channels in 2022—such as Groupe France Info Parallèle—hosting material previously removed from Facebook and Twitter, capitalizing on its unmoderated infrastructure to become a haven for persistent misinformation actors like Étienne Chouard’s network. This migration demonstrated how platform ecosystems respond to national regulation not by compliance alone, but by niche specialization, where underregulated services expand their influence by absorbing overflow from compliant platforms. The overlooked dynamic is not evasion per se, but the intentional structural asymmetry between platforms that enables regulated spaces to offload rather than eliminate harmful content.

Policy Shadow Testing

In the wake of Avia Law enforcement challenges, TikTok launched its 'Civic Integrity Pilot' in Marseille in 2023, trialing AI-driven downranking of non-election health and civic misinformation in a region with high disinformation activity, using local university partnerships with Aix-Marseille Université to validate signals. This localized experiment functioned not as a direct response to French law but as a controlled test bed for scalable moderation templates under legal duress, showing how platforms use geographic policy shocks to justify internal innovation labs. The underappreciated reality is that regulatory friction can be productively weaponized by platforms to advance internal R&D under the guise of compliance.