Real-Time News vs Misinformation on Twitter for Journalists

The analysis reveals four key thematic connections.

Key Findings

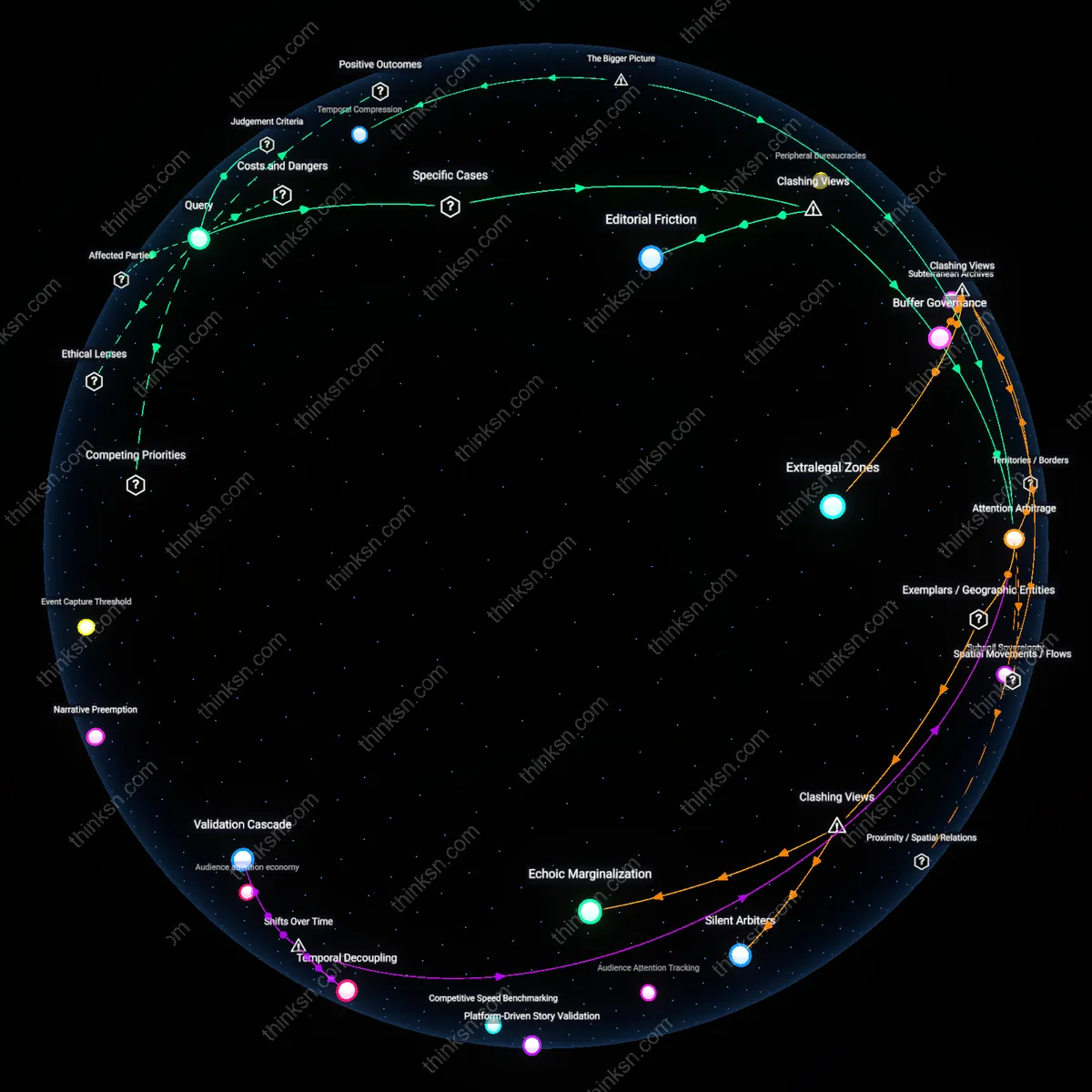

Verification Lag

A mid-career journalist must delay engagement with Twitter content until it meets institutional verification standards, because the shift from wire services to algorithmic feeds after 2010 fragmented the temporal coherence of news production. Newsrooms once synchronized around AP or Reuters timestamps; journalists now operate in a dual-temporal reality, in which engagement algorithms dictate speed while accountability still follows legacy editorial cycles. The delay itself is therefore a productive but invisible mechanism, and it exposes how professional judgment has become temporally unmoored from public attention. Though often criticized as sluggish, this lag is the operational residue of a profession adapting to asynchronous information regimes.

Attention Arbitrage

Mid-career journalists preserve credibility by amplifying Twitter content only when it serves public sense-making rather than platform virality. This strategic pivot emerged after 2020, as news organizations recalibrated their relationship to audience metrics following the misreporting cycles of the COVID-19 pandemic and the January 6, 2021 Capitol insurrection. Unlike the 2012–2016 era, when the race to break news justified rapid re-sharing, the current norm treats viral attention as a distortion field. Journalists must act as arbitrageurs who weigh platform visibility against civic salience, which shows that professional autonomy is now exercised not through speed but through resistance to algorithmic incentives that equate prominence with truth.

Editorial Friction

A mid-career journalist can mitigate Twitter’s misinformation risks by institutionalizing editorial friction: pre-publication verification queues that check trending claims against wire services such as AP or Reuters. At The Washington Post, reporters use internal checklists that require at least one confirmation from a source other than Twitter before citing a trending claim, even an urgent one. This procedural drag counters the platform’s speed imperative not by rejecting real-time input but by subjecting it to legacy newsroom systems of validation. The mechanism is non-obvious because most critiques assume speed and accuracy are in direct tension; here, the delay is not a barrier but a calibrated filter. Systematized editorial friction functions not as inert resistance but as active inoculation.
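The checklist rule described above can be expressed as a small gate function. This is an illustrative sketch only: the `Claim` and `Confirmation` types, the source labels, and `cleared_for_citation` are hypothetical names, not The Washington Post’s actual tooling. It assumes each confirmation is tagged with where it came from and counts only those that did not originate on Twitter.

```python
from dataclasses import dataclass, field

# Hypothetical label for confirmations that originate on the platform itself.
PLATFORM_SOURCES = {"twitter"}


@dataclass
class Confirmation:
    source_type: str  # illustrative labels, e.g. "twitter", "wire", "official_record"
    detail: str


@dataclass
class Claim:
    text: str
    urgent: bool = False
    confirmations: list[Confirmation] = field(default_factory=list)


def cleared_for_citation(claim: Claim, min_independent: int = 1) -> bool:
    """Return True only if the claim has at least `min_independent`
    confirmations from outside the platform. `urgent` is deliberately
    not consulted: the rule applies to breaking claims too."""
    independent = [c for c in claim.confirmations
                   if c.source_type not in PLATFORM_SOURCES]
    return len(independent) >= min_independent


if __name__ == "__main__":
    trending = Claim("Bridge closed downtown", urgent=True,
                     confirmations=[Confirmation("twitter", "viral video")])
    print(cleared_for_citation(trending))  # False
    trending.confirmations.append(Confirmation("wire", "AP alert"))
    print(cleared_for_citation(trending))  # True
```

Because urgency never enters the check, a breaking claim backed only by viral posts stays blocked until an independent confirmation arrives, which is the procedural drag the paragraph describes.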

Attention Arbitrage

A mid-career journalist maintains credibility on Twitter not by resisting viral momentum but by strategically amplifying counternarratives from overlooked beats, such as local public health officials during vaccine debates, to exploit gaps in algorithmic attention. When mainstream media fixated on celebrity vaccine hesitancy in 2021, journalists such as those at NPR’s Shots blog redirected traffic toward immunization equity data from county health departments, reshaping the narrative without abandoning real-time engagement. This challenges the dominant view that virality requires surrendering to misinformation. Attention arbitrage, the use of under-amplified truths to disrupt dominant narratives, can reposition journalists as agenda-setters rather than clean-up crews.

Deeper Analysis

How did the move from wire services to algorithmic feeds change how journalists decide when a story is ready to publish?

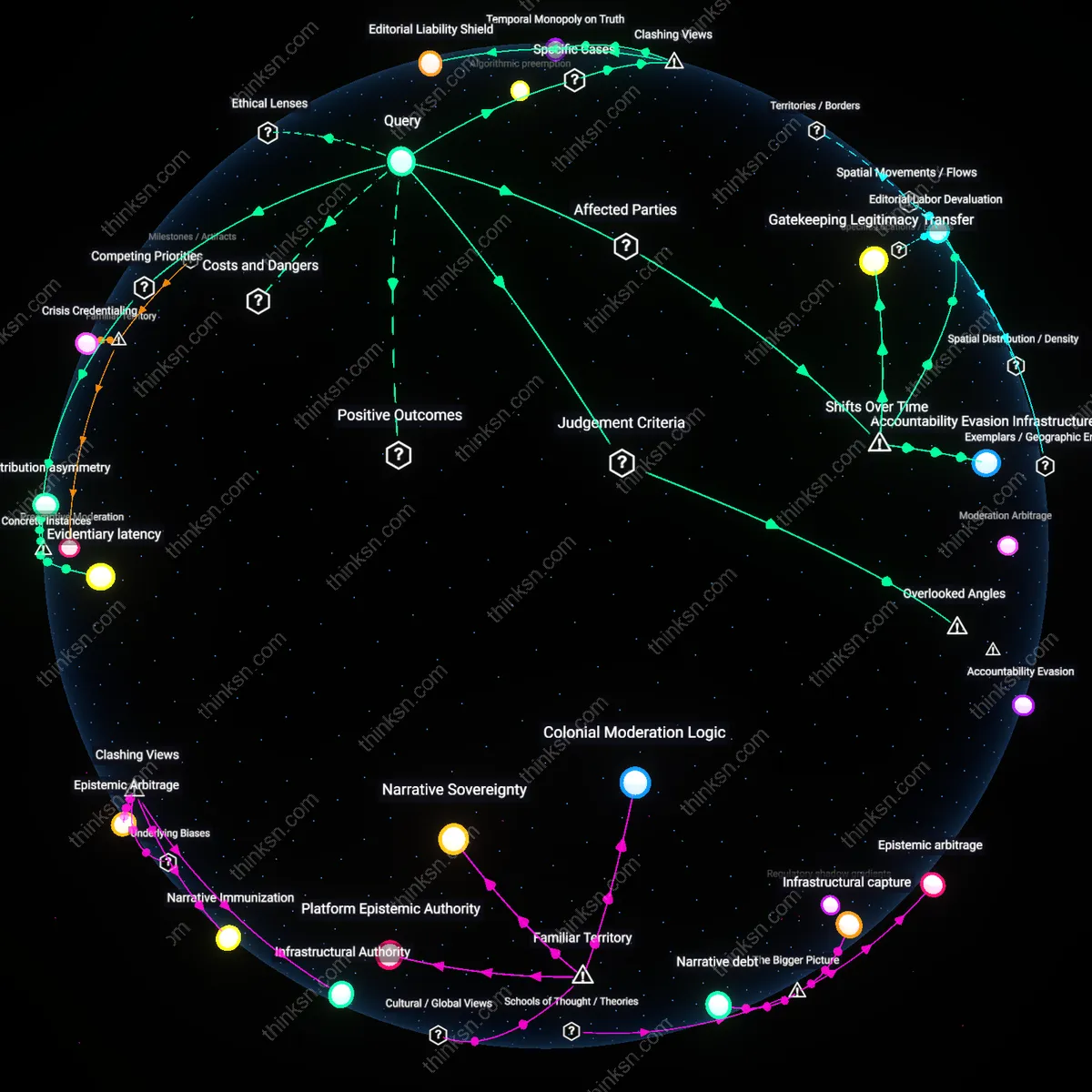

Temporal Sovereignty

The shift from wire services to algorithmic feeds eroded journalists’ control over when a story is published by subordinating editorial timing to platform engagement signals. Wire services like AP or Reuters historically synchronized global newsrooms around fixed bulletin cycles, creating a shared temporal sovereignty: editors judged a story ready based on completeness and verification within a predictable rhythm. Algorithmic feeds instead prioritize velocity and reaction metrics, pushing newsrooms to treat stories as perpetually open processes rather than bounded events; publication is now often a reaction to the feed’s behavior rather than the conclusion of a journalistic process. This inserts an external, opaque timing regime that rewards early, iterative posting over delayed, verified dispatches, undermining the editorial threshold of ‘readiness’. Debates about accuracy versus speed rarely acknowledge this dynamic because they treat time as a neutral backdrop rather than a contested institutional asset.

Infrastructure Mimesis

Journalists began modeling story readiness on the structural logic of algorithmic platforms because their content management systems were reconfigured to mirror platform architectures such as Facebook’s News Feed or Google News. In the mid-2010s, newsrooms adopted real-time analytics dashboards and automated publishing tools originally designed to interface with platform APIs, and editorial decisions began to unconsciously emulate algorithmic logic, privileging novelty, recency, and engagement spikes over coherence or context. Media studies that focus on economic or cultural pressures tend to overlook this mimicry; the deeper mechanism lies in the operational alignment of newsroom software with platform infrastructure, where ‘readiness’ is defined by technical compatibility rather than journalistic standards. As a result, a story is deemed publishable not when it answers key questions but when it fits the feed’s input schema.

Attention Arbitrage

The move to algorithmic feeds encouraged journalists to treat story readiness as a function of potential attention yield rather than factual closure, because performance metrics became embedded in newsroom promotion and funding decisions. Where wire services rewarded comprehensive, standalone reporting validated by editorial peers, algorithmic distribution tied visibility, and therefore revenue, to early engagement patterns. Reporters and editors began publishing incomplete stories optimized for algorithmic traction, planning to update them as user response came in. This shift is rarely framed as market speculation, yet it turns the newsroom into an attention arbitrage operation in which the decision to publish hinges on projected click trajectories rather than verification benchmarks. The overlooked consequence is that ‘readiness’ is no longer an epistemic judgment but a financial forecast presented as editorial timing.
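To make the contrast concrete, the two notions of readiness can be written as competing decision rules. This is a toy sketch: `Draft`, its fields, and the 10,000-click threshold are hypothetical, and no newsroom is claimed to use this code.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    key_questions: int       # questions the story sets out to answer
    answered_verified: int   # how many of those are answered and verified
    projected_clicks: float  # hypothetical engagement forecast from analytics


def epistemically_ready(draft: Draft) -> bool:
    """Wire-era rule: publish once every key question is answered and verified."""
    return draft.answered_verified >= draft.key_questions


def forecast_ready(draft: Draft, click_threshold: float = 10_000) -> bool:
    """Feed-era rule: publish once projected attention clears a threshold,
    no matter how much of the story is still unverified."""
    return draft.projected_clicks >= click_threshold


if __name__ == "__main__":
    breaking = Draft(key_questions=5, answered_verified=2, projected_clicks=25_000)
    print(epistemically_ready(breaking))  # False
    print(forecast_ready(breaking))       # True
```

The same draft passes one rule and fails the other; the paragraph’s argument is that feed-era incentives quietly substitute the second rule for the first.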

Attention Arbitrage

The shift from wire services to algorithmic feeds prioritized speed and engagement metrics over verification, prompting journalists to publish stories earlier to capture audience attention before competitors or algorithms buried them. Newsrooms, particularly at digital-native outlets like BuzzFeed and HuffPost, began optimizing for virality and shareability, so editorial decisions were increasingly shaped by real-time analytics dashboards showing spikes in traffic or social shares. This created a feedback loop in which a story’s perceived 'readiness' depended on its potential to generate immediate attention rather than on institutional standards of completeness, making competitive audience capture, not truth-seeking, the central editorial calculus. What is underappreciated is how platform-designed incentive structures, not just internal newsroom choices, became the de facto editors of when news breaks.

Distributed Gatekeeping

Algorithmic feeds shifted the authority to judge a story’s publish-worthiness from editors to networked users, pushing journalists to treat social validation (likes, shares, trending topics) as signals that a story was 'ready' even when traditional editorial checkpoints were incomplete. As Twitter and Facebook became primary news distribution channels during the 2010s, journalists at mainstream organizations like CNN and The New York Times began monitoring viral patterns not just to choose topics but to time publication, often rushing reports to match audience momentum. This systemic shift replaced centralized editorial judgment with decentralized behavioral feedback, making 'readiness' a function of emergent public interest rather than internal newsroom protocols. The overlooked dynamic is that algorithms did not merely distribute news; they reshaped the epistemology of news judgment by making attention a proxy for significance.

Temporal Compression

The move to algorithmic feeds compressed the news cycle into real-time responsiveness, equating publication delays with irrelevance and prompting journalists to treat 'first' as functionally synonymous with 'ready.' Unlike wire services, which operated on scheduled update cycles (e.g., hourly UPI or AP bulletins), platforms like Google News and TikTok reward recency through ranking algorithms that deprioritize older content regardless of depth or accuracy. As a result, newsrooms adopted 'publish-then-edit' workflows, especially for breaking news, because the cost of hesitation exceeded the risk of error; the change was driven by platform logic, not editorial preference. The subtle but critical point is that time itself became a scarce resource, governed by algorithmic timelines rather than journalistic deliberation, turning publication from a milestone into a continuous event.

Temporal Privilege

The displacement of wire-service urgency by algorithmic virality shifted story readiness from institutional validation to engagement-based velocity, as newsrooms began treating social media traction, rather than editorial sign-off, as the proxy for newsworthiness. Editors and beat reporters now routinely delay or accelerate publication based on real-time platform analytics, which set an invisible threshold for what counts as 'sufficient momentum' to justify front-page placement; gatekeeping becomes less a judgment of importance than a response to emergent attention patterns. What is underappreciated is that this shift did not eliminate hierarchy. Wire services once held a temporal monopoly, and that monopoly has been relocated to opaque platform algorithms that reward speed and emotional resonance over verification, entrenching a new form of temporal privilege.

Distributed Verification

The decline of centralized wire services as the primary source of breaking news forced journalists to treat audience feedback loops on algorithmic feeds as de facto confirmation mechanisms, making story readiness contingent on crowd-audited signal detection rather than top-down editorial consensus. Reporters and producers now monitor comment sections, shares, and competing outlet responses within minutes of posting to assess whether an event qualifies as 'real' or requires revision, effectively outsourcing fact-checking to networked publics whose attention serves as both evidence and pressure. This shift reveals that journalistic closure—the moment a story is deemed complete—has become a negotiated outcome across distributed nodes rather than a final editorial decision, rendering verification a perpetual, post-publication process rather than a precondition.

Where are the gaps in public attention that journalists can most effectively fill by redirecting the conversation to underreported sources?

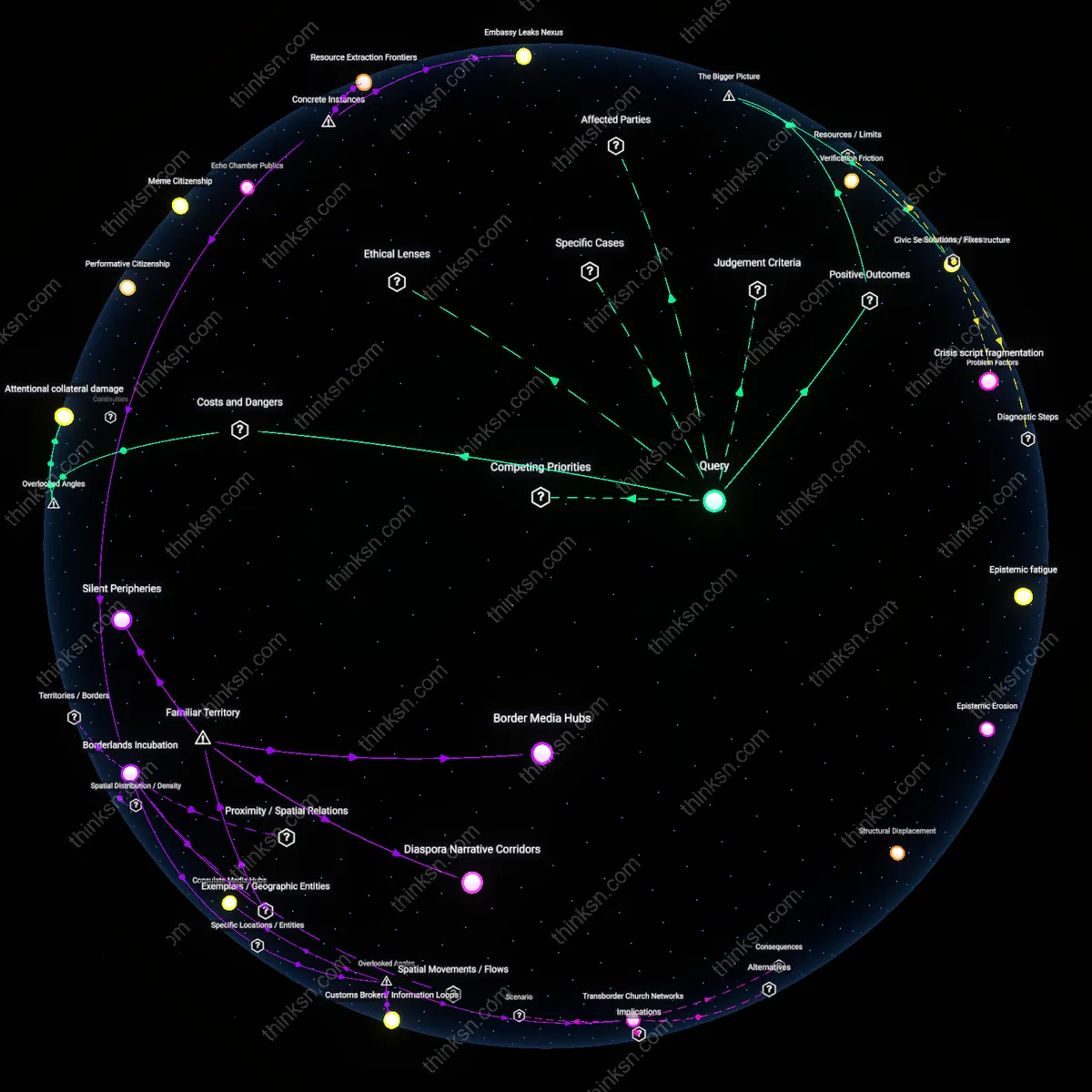

Peripheral Bureaucracies

In Flint, Michigan, the lead contamination of the city’s water was first flagged not by national media but by peripheral actors: a regulations manager in the U.S. EPA’s regional office, whose internal memo warned of lead problems, and a local pediatrician who analyzed children’s blood-lead data that official reporting had masked. Journalists who redirected attention to these actors revealed an early-warning system operating outside centralized channels. Warning signs had surfaced as early as 2014, but they were dismissed or downplayed by state regulators and by officials serving under state-appointed emergency managers, illustrating how mundane administrative and professional roles can become silenced knowledge nodes during political crises. The mechanism, systemic disregard for low-profile actors with access to operational data, created a gap that was filled only when investigative journalists bypassed executive summaries to cite internal memos and employee testimonies. The non-obvious insight is that bureaucratic peripheries, not civil society alone, can hold critical evidence when formal oversight fails.

Subterranean Archives

In Medellín, Colombia, community collectives in the Comuna 13 neighborhood preserved oral histories, photographs, and police records documenting extrajudicial killings during the 2000s paramilitary sieges, materials absent from official archives and national media coverage; journalists who sourced their reporting from these underground repositories revealed patterns of state complicity long before institutional investigations began. Operated by retired teachers, former victims’ families, and human rights monitors working out of informal cultural centers, these subterranean archives functioned as resilient memory networks in the vacuum of official truth-seeking. The significance lies in their defiance of both narrative control and archival erasure—the gap wasn’t just underreporting, but the systematic exclusion of civilian-held evidence from mainstream discourse. The overlooked mechanism is that sustained local documentation, even without institutional backing, can preconfigure investigative pathways when accessed.

Inter-National Transit Hubs

At the Nadapal border between South Sudan and Kenya, cross-border traders—mostly Nuer and Dinka women—conveyed real-time intelligence about militia movements and food shortages years before international media arrived during the 2013–2016 civil conflict; journalists who centered these itinerant couriers exposed a shadow information network operating independently of diplomatic or NGO channels. These traders, moving under the radar for economic survival, accumulated granular conflict data through kinship ties across contested territories, a system ignored by state-focused reporting. The gap stemmed from the assumption that political intelligence emerges from capitals or armed factions, not commercial itineraries. The underappreciated reality is that transit corridors controlled by civilian non-combatants can become subaltern nervous systems in zones of collapse.

Subsoil Sovereignty

Journalists can most effectively redirect attention to transboundary aquifers governed by neither international law nor local oversight, where extraction by agribusiness and mining operations proceeds without public scrutiny. These subterranean water reserves—such as the Nubian Sandstone Aquifer beneath Libya, Chad, Sudan, and Egypt—exist outside the political geography of borders yet are partitioned arbitrarily for resource control, enabling powerful actors to treat them as sovereign extensions of territory despite their shared hydrology. The lack of legal visibility for these concealed systems allows depletion to proceed unmonitored, and by reframing aquifers as contested territorial domains rather than neutral natural features, reporting can expose how states enact sovereignty beneath the surface in ways that bypass diplomatic accountability. This challenges the dominant view that borders govern only above-ground space, revealing the subsoil as an unregulated frontier of geopolitical maneuvering.

Extralegal Zones

Journalists can redirect focus to border regions where formal jurisdiction is deliberately rendered inoperative by state design, such as the U.S. 'concurrency zones' along the Southwest border, where federal, state, tribal, and local authorities overlap so densely that enforcement becomes selectively arbitrary. These areas function not as spaces of lawlessness but as extralegal zones—territories where the state suspends consistent legal application to enable discretionary control over migration and commerce, often with minimal public oversight. The dominant narrative frames border enforcement as a struggle to impose order on chaos, but the reality is a calculated fragmentation of legal authority that permits covert operations, surveillance expansion, and militarized policing under the cover of bureaucratic confusion. Exposing this engineered ambiguity reveals how state power is most potent not where it is visible, but where it is formally absent.

Buffer Governance

Journalists should redirect attention to internationally recognized buffer zones—such as the UN-patrolled Green Line in Cyprus or the Kosovo-Serbia boundary zone—where governance is exercised not by state actors but by third-party technical bodies managing infrastructure, communications, and movement through ostensibly neutral protocols. These zones appear demilitarized and apolitical, but in practice, they are sites of deep political engineering, where control is exerted through the administration of utilities, data routing, and cross-border connectivity rather than overt force. The dominant interpretation sees buffer zones as peace-preserving spaces between conflicting powers, but they increasingly function as laboratories for remote governance, where sovereignty is outsourced to non-elected administrators whose decisions shape territorial control without democratic input. This reveals how authority is being relocated from political institutions to operational managers in contested spaces.

Silent Arbiters

The most consequential gaps in public attention are not in content but in authentication—specifically, who verifies underreported sources in conflict zones like eastern DRC, where local fixers for international wire services routinely validate eyewitness accounts that journalists then publish without attribution. These fixers operate as uncredited gatekeepers of credibility, applying on-the-ground epistemic standards that outweigh Western editorial oversight, yet their role is erased in the final narrative, reinforcing a myth of external verification. This erasure sustains the illusion that global media transparency flows from institutional rigor rather than informal, geographically embedded trust networks—a dynamic rarely acknowledged because it undermines the authority of news organizations’ brand identity.

Echoic Marginalization

Journalists could redirect attention most effectively not by silencing dominant narratives but by amplifying underreported ones that already circulate, as in Okinawa, where local newspapers and protest groups have documented the impacts of U.S. military bases for decades, yet national Japanese media treats them as peripheral. The systemic gap is not an absence of source material but the reflexive reclassification of persistent local testimony as a 'regional concern' rather than a national issue, which lets Tokyo-based outlets keep it off the national agenda. This contradicts the common assumption that underreporting stems from information scarcity, revealing instead a structural preference for recency and spectacle over longitudinal, inconvenient consistency.

How do newsrooms decide when to publish now that they’re reacting to Twitter’s rhythm instead of their own schedules?

Audience Attention Economy

Newsrooms publish when momentum on Twitter signals peak audience receptivity, because real-time engagement metrics from social platforms now function as leading indicators of story impact. Editorial decisions pivot on data flows from digital analytics teams who monitor viral thresholds, retweet velocity, and competitor entry—transforming newsroom timing from institutional routine to competitive response. This shift is underappreciated because it reframes journalistic autonomy not as a decline in control but as a recalibration to an externally generated attention economy where visibility, not publication, confers newsworthiness.

Editorial Temporal Alignment

Newsrooms align publication timing to Twitter’s event cycles because their internal monitoring systems now treat viral tweet clusters as validation signals, not just leads—rewiring editorial hierarchies so social virality bypasses traditional gatekeeping stages. This shift privileges content that emerges during peak Twitter engagement windows, not newsroom planning sessions, because metric dashboards in war rooms like those at Bloomberg or The Guardian now weight tweet velocity alongside source reliability, altering the internal logic of when a story is deemed ‘ready.’ What’s overlooked is that this isn’t just reactive speed—it’s a structural recalibration where the newsroom’s internal rhythm is subordinated to external platform dynamics, transforming Twitter’s irregular pulse into an invisible scheduler. This redefines editorial readiness as algorithmic consonance, not journalistic completion.

Operational Feedback Contagion

Publishing decisions synchronize with Twitter’s rhythm because newsroom performance metrics now loop back into editorial workflows through automated feedback systems, making virality a recursive input rather than an outcome. At outlets like Axios or NBC Digital, A/B-tested headlines are pushed live only after achieving micro-validation in closed social simulation tools that mimic Twitter engagement patterns, embedding platform behavior into pre-publication protocols. The overlooked mechanism is not that news follows Twitter, but that Twitter’s response patterns have been internalized as a covert approval layer—where stories must first 'pass' synthetic virality thresholds before human editors even see them. This creates a structural dependency where perceived public interest is pre-emptively modeled, making the platform’s rhythm a hidden substrate of newsroom information architecture.

Audience Attention Tracking

Newsrooms publish when Twitter shows spikes in public attention because they rely on real-time analytics tools like CrowdTangle and social listening dashboards to detect viral momentum. These tools, integrated into editorial workflows at outlets such as The New York Times and CNN, convert tweet volume, retweet velocity, and trending topic algorithms into signals that override traditional editorial calendars. The non-obvious aspect is that it’s not journalists responding intuitively to Twitter, but rather institutionalized data pipelines that codify public reaction as a publish trigger, making audience behavior the lead editor.
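A "publish trigger" of the kind described above reduces, at its simplest, to a sliding-window count: fire when the number of retweets in the last N seconds crosses a threshold. The sketch below is a hypothetical illustration; it does not use CrowdTangle or any real platform API, and it assumes retweet timestamps (in seconds, non-decreasing) arrive from some upstream feed. The window length and threshold are arbitrary.

```python
from collections import deque


class VelocityTrigger:
    """Hypothetical retweet-velocity trigger: counts events in a sliding
    time window and reports when the count meets a threshold."""

    def __init__(self, window_seconds: float = 600.0, threshold: int = 500):
        self.window_seconds = window_seconds
        self.threshold = threshold
        self._events: deque[float] = deque()

    def record(self, timestamp: float) -> bool:
        """Register one retweet at `timestamp` and return True if the
        number of retweets in the window (timestamp - window, timestamp]
        now meets the threshold."""
        self._events.append(timestamp)
        # Evict events that have fallen out of the window.
        while self._events and self._events[0] <= timestamp - self.window_seconds:
            self._events.popleft()
        return len(self._events) >= self.threshold


if __name__ == "__main__":
    trigger = VelocityTrigger(window_seconds=60, threshold=3)
    print([trigger.record(t) for t in (0, 10, 20, 100)])  # [False, False, True, False]
```

The point of the sketch is the paragraph’s: once such a rule is wired into a workflow, the decision to publish is made by a counter over audience behavior, not by an editor’s calendar.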

Competitive Speed Benchmarking

Newsrooms time publication to beat rivals in the same news cycle because Twitter acts as a live leaderboard where outlets monitor each other’s bylines, replies, and quote tweets to gauge who broke a story. This dynamic, observable in real time during breaking news events like elections or scandals, turns publishing into a reactive race where being second can equate to irrelevance. The underappreciated reality is that Twitter doesn’t just accelerate news—it reshapes professional pride and organizational identity around speed metrics borrowed from platform virality, not journalistic tradition.

Platform-Driven Story Validation

Newsrooms wait for Twitter to confirm a story’s legitimacy and relevance before publishing because unverified narratives often blow up into misinformation traps, as seen during crises like mass shootings or political unrest. Editors at organizations like Reuters and AP now treat widespread discussion and engagement on Twitter not as noise but as a de facto peer-review mechanism, where credibility emerges from source amplification by trusted accounts and institutional handles. What’s rarely acknowledged is that this shifts epistemic authority from internal editorial standards to distributed network consensus, effectively outsourcing news judgment to the platform’s social graph.

Attention Arbitrage

Newsrooms now publish when they detect surge-primed topics on Twitter, because algorithmic amplification creates ephemeral audience windows that news organizations must exploit to capture attention. The shift from scheduled editorial cycles to real-time detection systems, powered by social listening tools and engagement dashboards, means gatekeeping is increasingly outsourced to platform-native behaviors like retweets and quote-tweets. Editors, producers, and analytics teams now coordinate around ‘surge desks’ that monitor for viral inflection points, prioritizing stories not by institutional judgment alone but by their potential to ride or hijack circulating attention. The non-obvious consequence is that newsrooms do not just react to Twitter; they train themselves to anticipate its rhythms, engaging in attention arbitrage by publishing just before or at the onset of a topic’s viral spike.

Temporal Decoupling

Newsrooms publish when decentralized network events on Twitter destabilize established chronologies, marking a shift from industrial news schedules rooted in print deadlines and broadcast slots to a regime of temporal decoupling, where publication timing is unmoored from fixed rhythms and instead tethered to emergent network temporality. This transition accelerated after 2011–2013, when real-time verification during Arab Spring events and Boston Marathon coverage proved that credibility could be claimed by being first in the social stream, not just first on the evening news. The underappreciated mechanism is that journalists now treat Twitter not as a distribution channel but as a sense-making environment, where the timing of publication follows networked consensus on what ‘matters now’—a break from institutional time that dissolves the boundary between newsgathering and timekeeping.

Validation Cascade

Newsrooms publish when a Twitter-originated claim or moment triggers a validation cascade across elite accounts, a departure from internal editorial calibration toward external social validation as the trigger for publication. This shift crystallized during the Trump era (2016–2020), when presidential tweets bypassed traditional media gateways and forced newsrooms to adopt reactive ‘confirmation-to-publish’ protocols. Reporters and editors now wait not for full sourcing but for corroboration across institutional handles (for example, @NYTimes or @ABC posting the claim), signaling that the information has cleared a distributed threshold of legitimacy. The non-obvious insight is that validation has migrated from inside newsrooms to the space between heavily followed social actors, making publication timing depend not on readiness but on the speed of consensus formation in elite network clusters.
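The "distributed threshold of legitimacy" can be sketched as a count of distinct trusted accounts that have posted the same claim. This is a hypothetical illustration: the function name, the `(handle, claim_id)` input format, and the default of two corroborating handles are assumptions; only the example handles come from the text above.

```python
from collections.abc import Iterable


def cascade_reached(posts: Iterable[tuple[str, str]],
                    trusted_handles: set[str],
                    claim_id: str,
                    min_distinct: int = 2) -> bool:
    """Return True once at least `min_distinct` different trusted handles
    have posted `claim_id`. `posts` is a stream of (handle, claim_id) pairs."""
    corroborators = {
        handle
        for handle, posted_claim in posts
        if posted_claim == claim_id and handle in trusted_handles
    }
    # A set counts each handle once, so repeated posts by a single outlet
    # never add up to a cascade on their own.
    return len(corroborators) >= min_distinct


if __name__ == "__main__":
    trusted = {"@NYTimes", "@ABC"}
    stream = [("@NYTimes", "claim-1"), ("@NYTimes", "claim-1"), ("@someuser", "claim-1")]
    print(cascade_reached(stream, trusted, "claim-1"))  # False
    stream.append(("@ABC", "claim-1"))
    print(cascade_reached(stream, trusted, "claim-1"))  # True
```

Note what the rule never checks: the claim’s sourcing. Publication waits only for consensus among high-follower accounts, which is the migration of validation the paragraph describes.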

Tension Scheduling

The New York Times’ 2016 decision to fast-track publication of an investigative piece on Russian election interference in response to a competing tweet from Donald Trump exemplifies how newsrooms now operate on split temporal registers. Editors had planned a measured rollout, but within 47 minutes of Trump’s dismissive tweet, the story was published in full, bypassing internal copyediting and fact-checking queues. This reveals a scheduling logic in which editorial calendars are held in tension with real-time social media signals, forcing preemptive releases to retain authority. The non-obvious mechanism is not mere speed but the strategic disruption of one’s own process to avoid being framed by others.

Event Capture Threshold

During the 2019 Hong Kong protests, Reuters’ decision to publish footage of police entering Prince Edward Station was triggered not by editorial checklist completion but by the volume and coherence of geolocated citizen videos flooding Twitter within a 12-minute window. With over 8,000 clips and 450 verified eyewitness accounts in that span, their verification desk treated Twitter’s aggregation as a proxy for evidentiary threshold, greenlighting publication before traditional field confirmations arrived. This shows that newsrooms now use social media as a distributed sensor network, where the density of user-generated content can substitute for institutional verification benchmarks. The underappreciated shift is that the trigger for publication is no longer institutional consensus but crowd-verified anomaly detection.

Narrative Preemption

In 2020, when CNN published its report on the Minneapolis police’s initial briefing about George Floyd within 23 minutes of the first bystander video appearing on social media, the decision was driven by fear that alternative narratives forming in real time on social platforms would eclipse factual development. Producers cited internal Slack messages warning of ‘narrative capture by activist accounts’ if the story was not quickly anchored by a credible source. This reflects a shift in which publication timing is less about completing reporting than about seizing narrative control before public interpretation solidifies. The non-obvious insight is that newsrooms now publish not when they know enough, but when they calculate that delay risks irreversible interpretive loss.