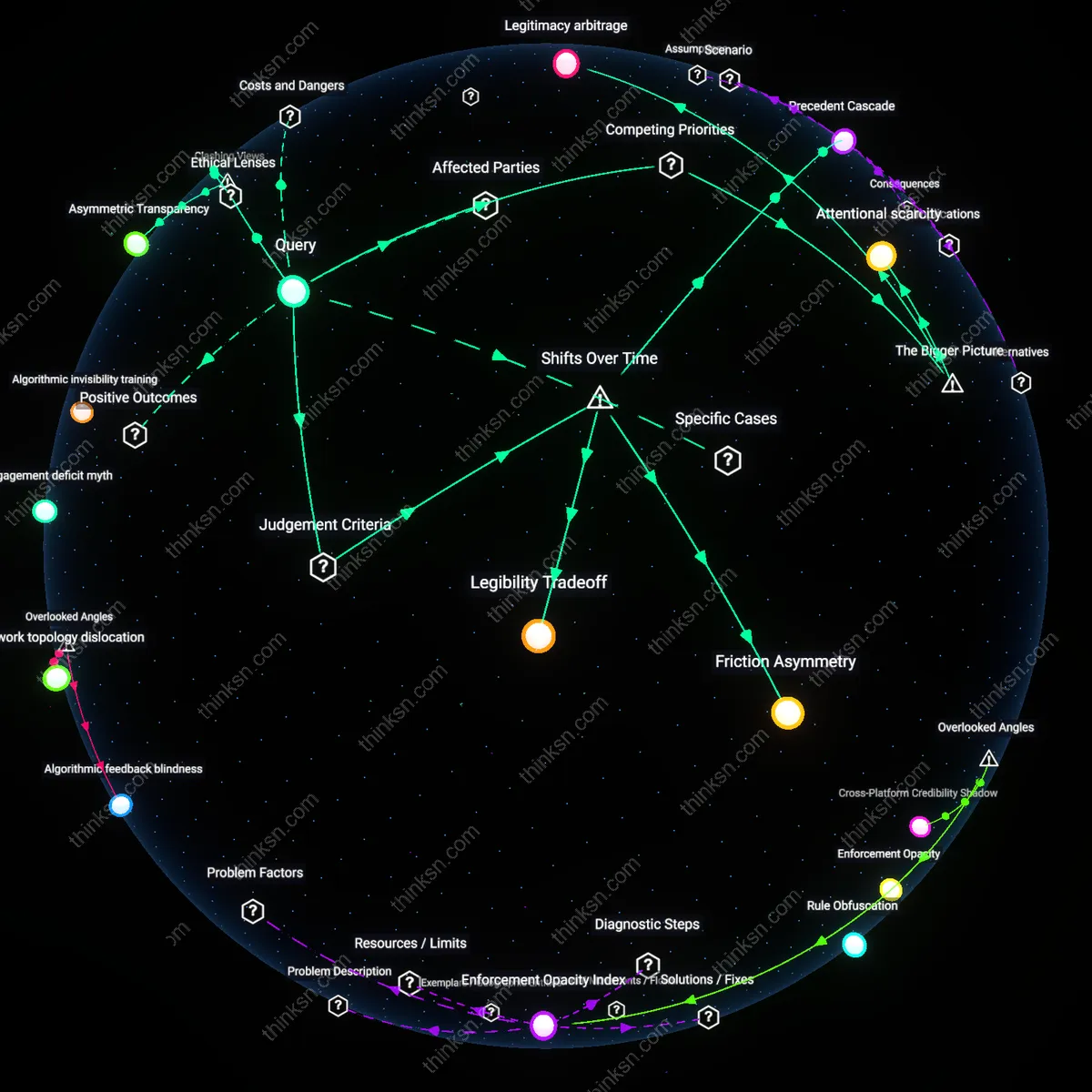

Adversarial Scaffolding

Some communities hardened their evidentiary standards not through internal consensus but by defining themselves against hostile external attention, particularly media misrepresentation and co-option by fringe groups. When subreddits like r/Coronavirus or r/Astronomy were cited inaccurately in polarized public debates, members intensified their source-checking rituals to distinguish their own discourse from the distorted echoes circulating elsewhere online. In effect, public scrutiny became a feedback loop that tightened internal verification. This runs contrary to the intuitive view that external pressure breeds defensiveness and insularity. Instead, evidence-based rigor became a reputational shield, suggesting that the threat of misappropriation can catalyze epistemic vigilance rather than retreat.

Incentivized Skepticism

Communities that tied social rewards to attempts at falsification, not merely to sharing facts, developed stronger evidentiary norms by institutionalizing doubt as a valued performance. In subreddits like r/AskHistorians, users earn status not by affirming narratives but by exposing weak sources, challenging assumptions, and demanding methodological transparency. Upvotes and flair privileges turn skepticism into a publicly recognized skill, so adherence to evidence shifts from passive rule-following to competitive demonstration. This contradicts the common assumption that strong community bonds arise from mutual affirmation. Shared standards of evidence can also emerge from structurally rewarded conflict, in which credibility accrues to those who dismantle poor arguments.

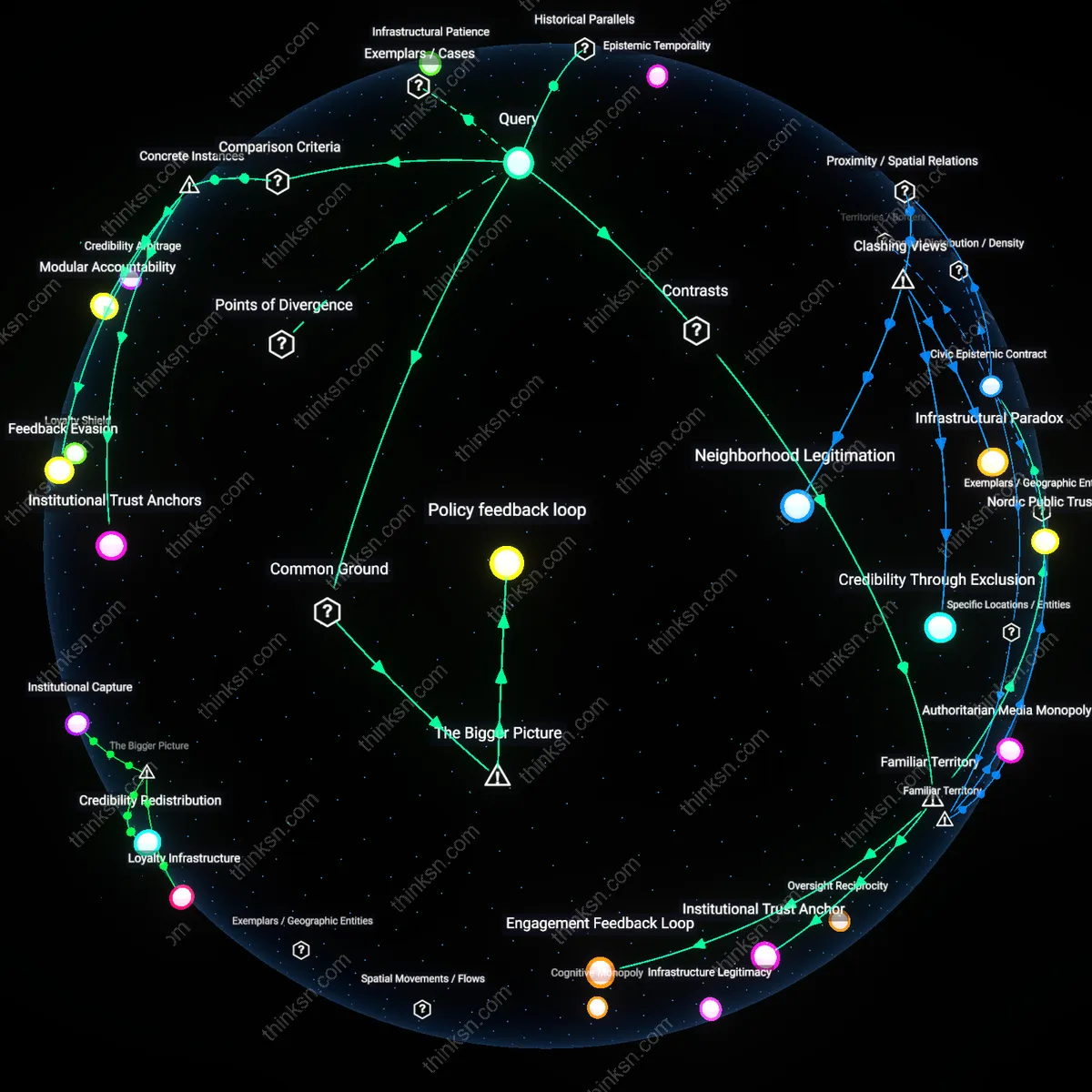

Moderator Succession Planning

Some Reddit communities developed stronger evidence standards because experienced moderators systematically mentored successors who prioritized epistemic norms over engagement metrics. This mentoring created institutional memory that resisted drift toward insular loops, whereas communities without such pipelines defaulted to reactive moderation that rewarded emotional resonance over verification. The overlooked mechanism is not the initial rule set but the quiet, long-term transfer of normative expectations during administrative transitions; most analyses focus on user behavior or content policies rather than on the pipeline of community governance. r/AskHistorians illustrates the pattern: its high evidentiary bar appears to have held largely because emeritus moderators remained visible in advisory roles, stabilizing expectations across cycles of user turnover.

Citation Infrastructure

The durability of evidentiary standards in certain Reddit communities hinged on in-thread citation practices that became embedded in linguistic conventions, such as the expectation that primary sources appear directly in a response rather than being supplied afterward as justification. This norm, distinct from top-down rule enforcement, emerged endogenously in communities where early adopters treated sourcing as a performative marker of credibility, much like the rituals of academic discourse. Most treatments overlook how comment formatting and sourcing conventions act as a low-level epistemic scaffold that makes weak arguments visibly dissonant. Subreddits with strict citation rituals appear to resist fragmentation even during sudden influxes of traffic, because the infrastructure itself enforces accountability.

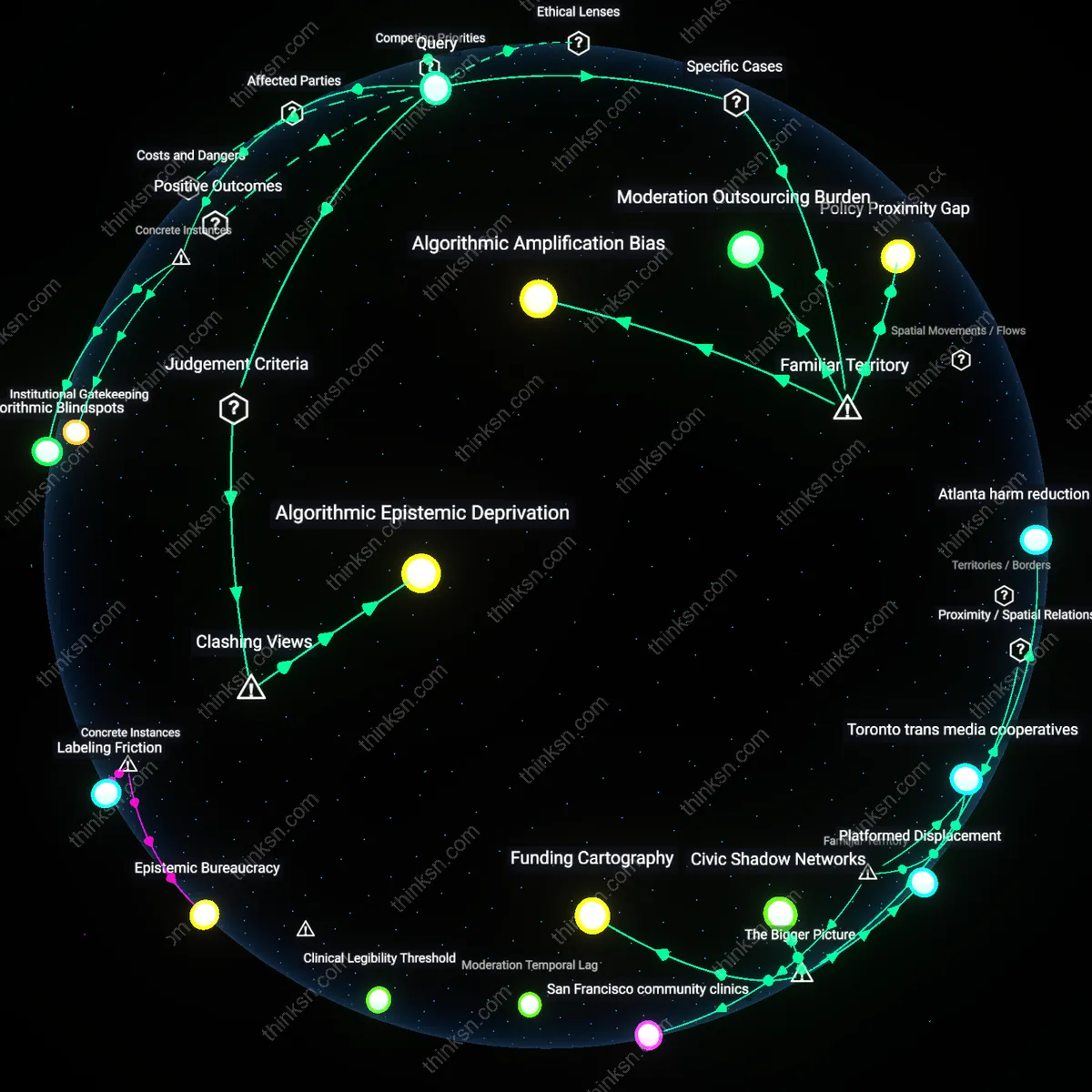

Cross-Moderator Coalitions

Communities that avoided insulated discourse loops often took part in inter-subreddit moderator networks that shared incident reports, heuristic checklists, and deplatforming rationales, effectively creating a distributed immune system against epistemic drift. These coalitions operated informally through third-party platforms like Discord and Matrix, organizing responses to coordinated brigading or pseudoscientific propaganda campaigns before those efforts could gain traction. What is often overlooked is that local evidentiary strength depended not on internal culture alone but on external intelligence-sharing. This form of lateral governance shielded moderators from isolation and user backlash when they enforced standards, complicating the usual narrative that community health is purely a function of consistent internal moderation.

Moderator Institutionalization

Stronger evidence norms emerged in Reddit communities where early moderators formalized content evaluation protocols, creating durable procedural templates that new members then internalized. This institutionalization, modeled through consistent enforcement, documented rules, and repeated citation of prior moderation decisions as precedent, embedded evaluative discipline into routine participation and made adherence to evidence a condition of belonging rather than an aspiration. The non-obvious force here is not community size or topic alone but the crystallization of moderation into a quasi-administrative role that mirrors bureaucratic governance and insulates norm development from populist sway. This structural shift kept norms stable over the long term even as the user base changed.

Cross-Platform Accountability Pressure

Reddit communities that faced sustained external scrutiny from fact-checking outlets, adjacent expert networks, or adversarial subreddits developed stronger evidentiary standards as a defensive adaptation to reputational risk. When a subreddit's posts were regularly cited in public debates or challenged by credentialed actors on Twitter, in news media, or in academic forums, participants adjusted their claims to withstand scrutiny in those outside contexts, turning internal discussion into a rehearsal for external contestation. The overlooked mechanism is that insulation fails once a community's outputs feed into larger information ecosystems, which forces evidentiary discipline as a survival strategy in a broader epistemic marketplace.

Epistemic Entrepreneurship

In certain Reddit communities, individual users with specialized knowledge or verification skills, such as scientists, data analysts, or investigative hobbyists, gained influence by repeatedly supplying well-evidenced interventions that resolved disputes or revealed hidden patterns. These epistemic entrepreneurs reshaped communal expectations by demonstrating the instrumental value of rigorous evidence for shared goals, whether debunking scams, mapping conspiracies, or predicting trends. Over time, the consistent payoff of their contributions encouraged others to emulate them, turning evidence-based reasoning into a socially rewarded competence rather than a formal rule. The underappreciated dynamic is that norms evolved not from top-down enforcement but from the bottom-up allocation of prestige to those who delivered tangible cognitive gains.

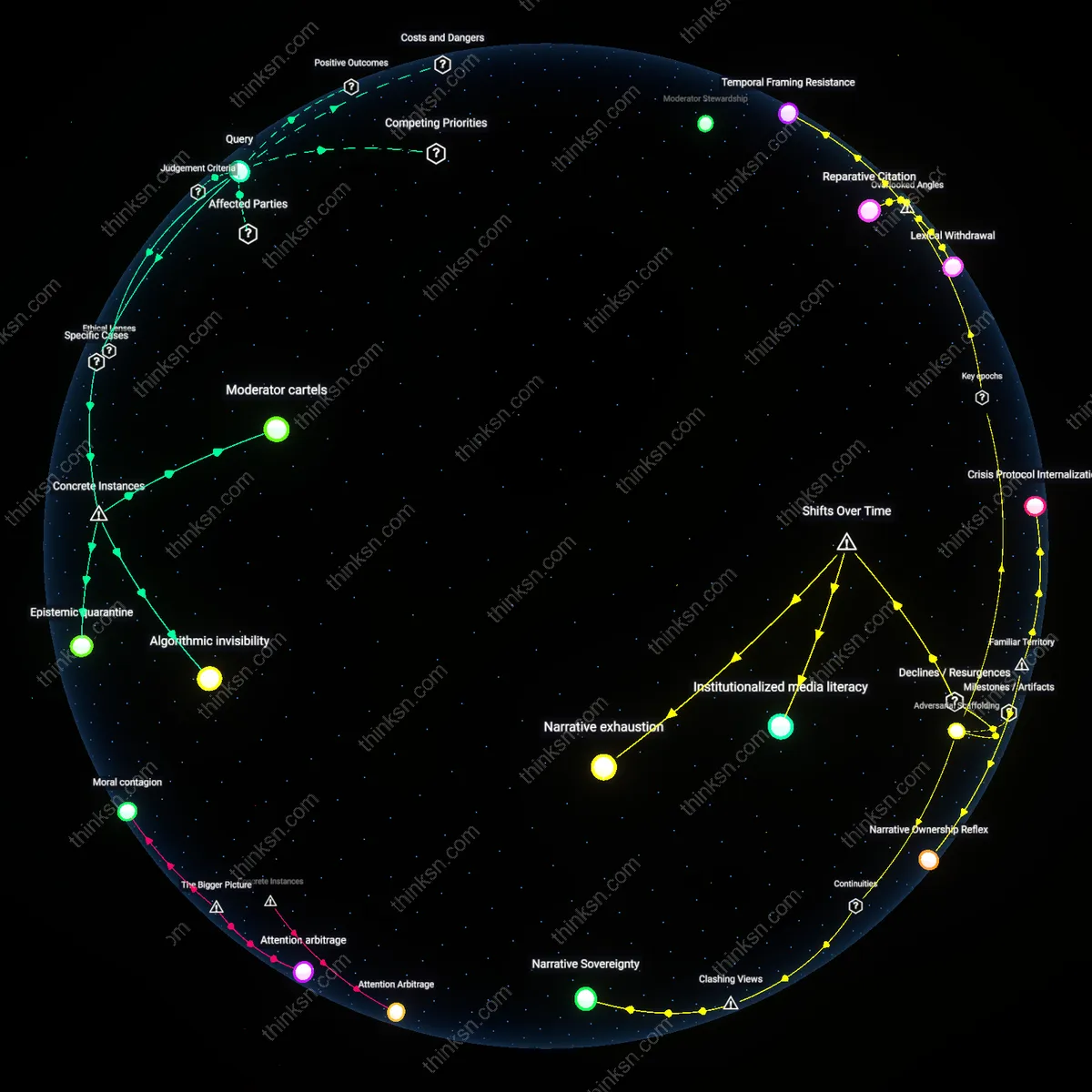

Moderator Stewardship

Stronger evidence standards in some Reddit communities emerged because consistent moderator intervention prioritized source-based discourse over repeated opinion. Moderators in subreddits like r/science and r/dataisbeautiful enforce rules requiring citations, remove anecdotal claims, and ban users who derail discussions, creating a feedback loop in which members adapt to a culture of accountability. This mechanism is underappreciated because public discourse often frames moderation as censorship rather than epistemic curation, overlooking how rule enforcement shapes not just tone but evidentiary norms.

Feedback Architecture

The persistence of evidence-based discussion in certain communities stems from the interaction between Reddit's upvote/downvote system and a community's early size and posting volume, which together amplify credible contributions and marginalize unfounded ones. In smaller, slower-moving subreddits, so few votes are cast that off-topic or poorly supported posts can stay prominent by default, whereas in larger communities with dense engagement, the collective signal of thousands of votes quickly surfaces high-quality content and drowns out noise. What is overlooked is that the same platform affordances produce divergent epistemic outcomes depending on usage density: platform structure alone does not determine quality, but its interaction with community scale does.

Moderation Institutionalization

Some Reddit communities developed stronger shared standards for evidence because volunteer moderators gradually formalized norms of citation and source evaluation in response to viral misinformation events around 2016–2018. This shift replaced ad hoc content policing with structured rule sets and user training, particularly in evidence-focused subreddits like r/science and r/AskHistorians, where moderators created tiered verification systems and citation requirements that mimicked the scaffolding of peer review. The formalization is easy to overlook because it emerged not from top-down platform policy but from community-level responses to crises of external scrutiny, showing how reactive governance can evolve into durable epistemic infrastructure.

Attention Drain

Certain Reddit communities drifted into insulated discussion loops when core contributors migrated to niche alternatives like BitChute or Truth Social between 2019 and 2022, leaving behind fragmented user bases more susceptible to internal reinforcement. This exodus redirected both cognitive labor and critical debate away from broad-platform accountability, allowing low-verification echo chambers to consolidate around identity-driven narratives rather than evidentiary consistency. The attrition was easy to miss because it looked like a decline in moderation when it was actually a redistribution of epistemic attention across platforms: a hollowing out that masqueraded as stagnation.