Is Economic Data More Credible in Editorials or Startup Dashboards?

Analysis reveals 7 key thematic connections.

Key Findings

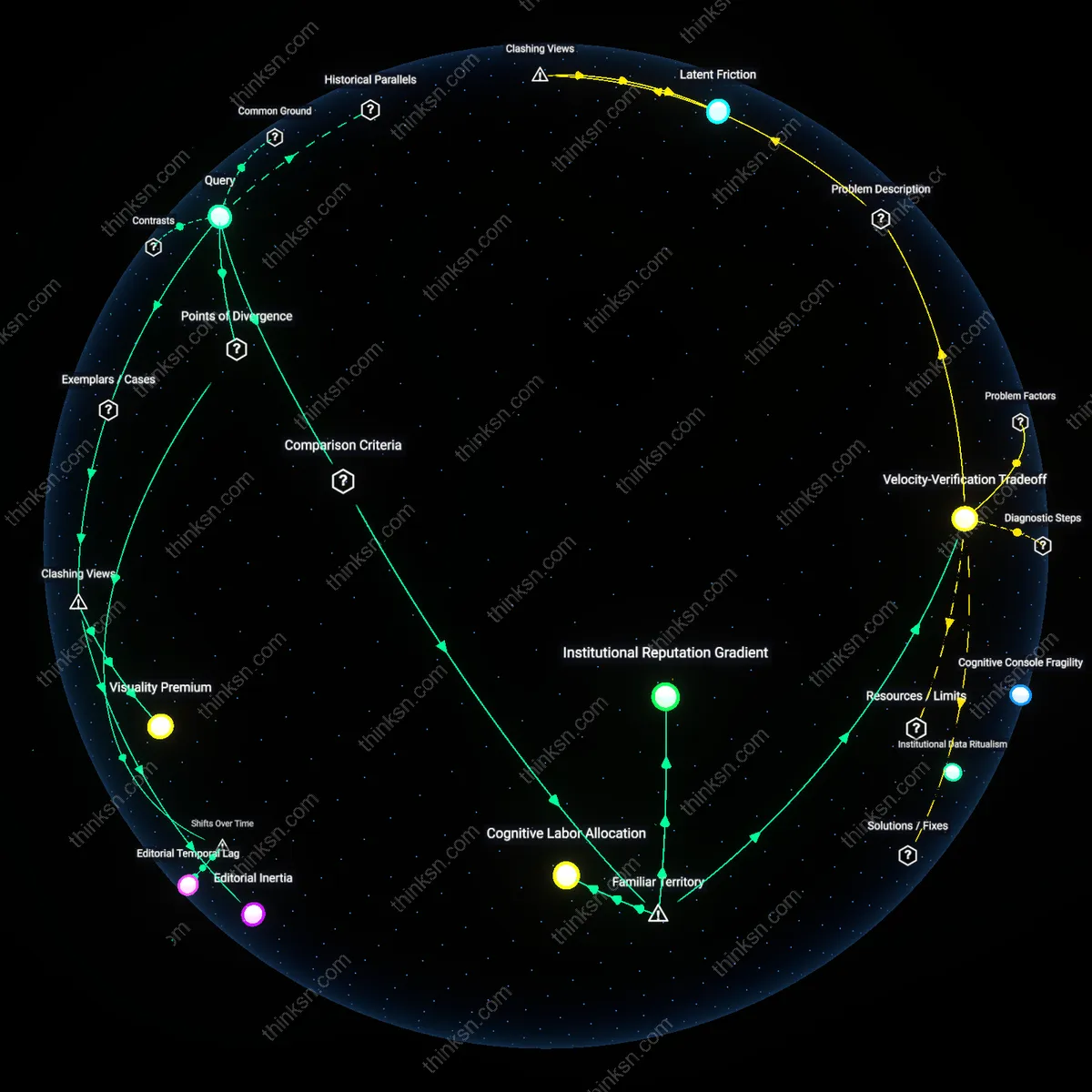

Institutional Reputation Gradient

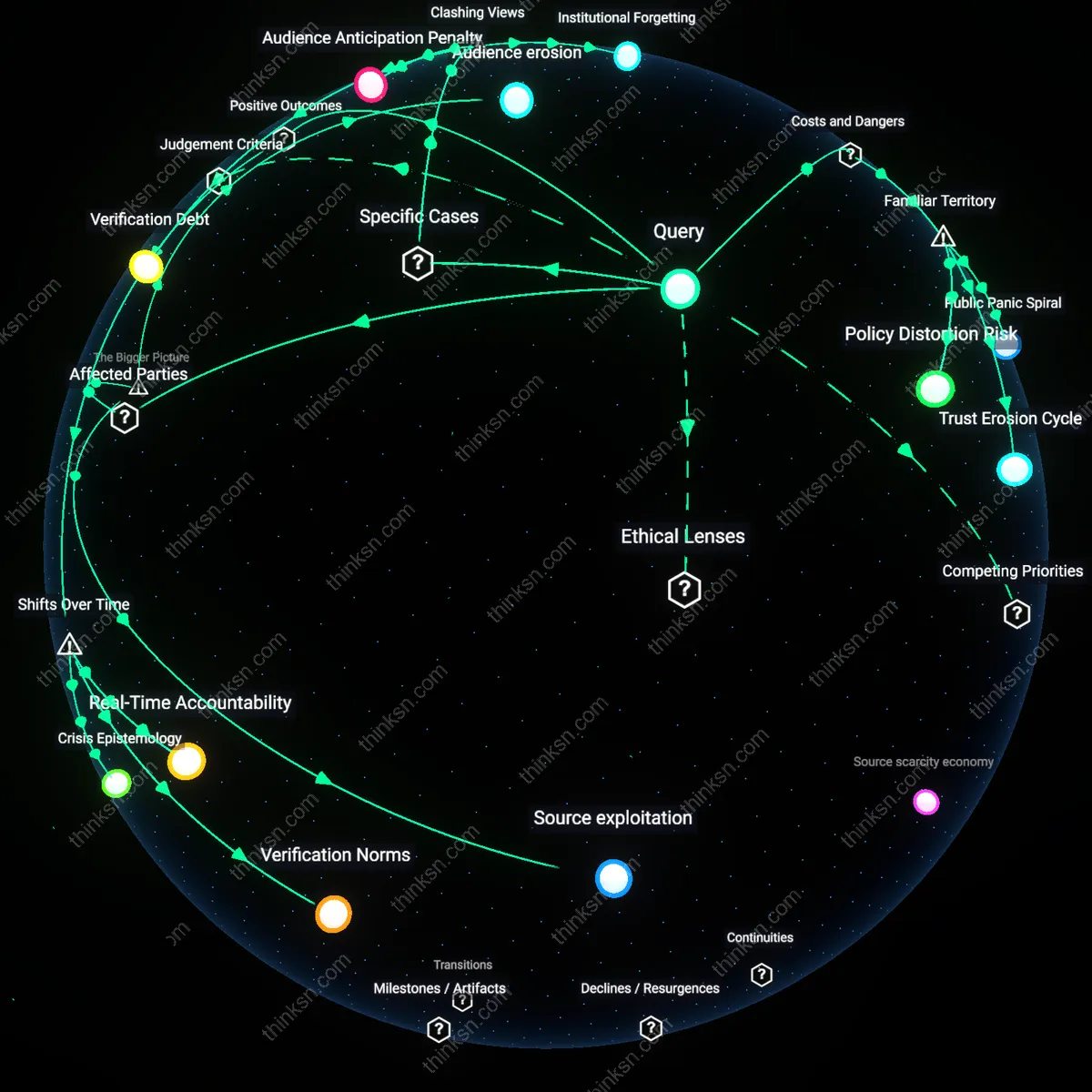

Legacy newspapers sustain higher perceived credibility in economic data reporting because of their historically embedded accountability mechanisms, such as editorial boards, ombudsmen, and long-standing relationships with institutional sources like central banks and treasury departments. This credibility emerges not from superior data but from decades of reputational compounding, in which errors are publicly acknowledged and educated readerships expect methodological transparency. The non-obvious insight is that people trust the newspaper’s editorial constraints (its slowness, its hierarchy) not in spite of them but because they mimic due process, reinforcing the sense that the data have been vetted through adversarial review, unlike the often opaque real-time pipelines of startups.

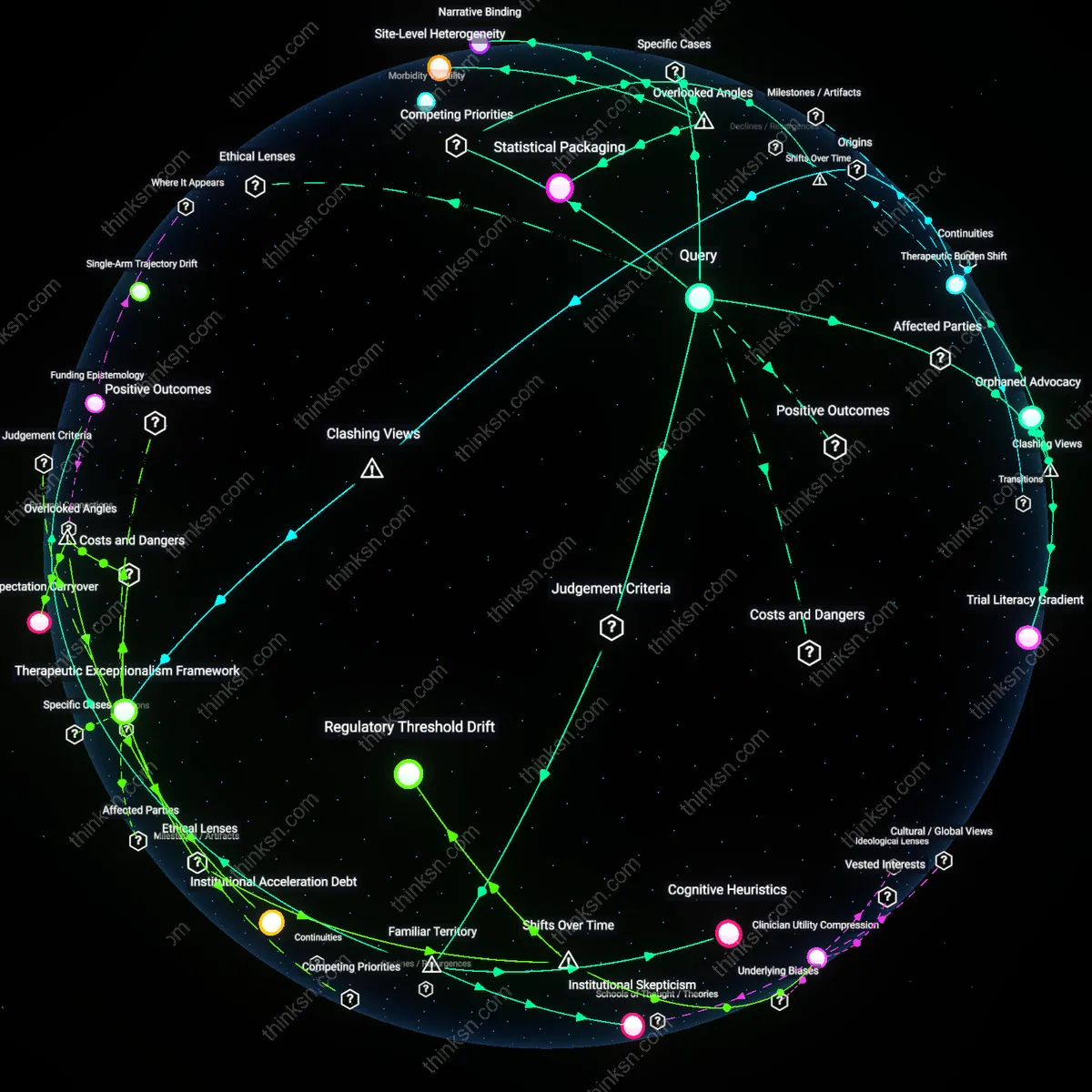

Velocity-Verification Tradeoff

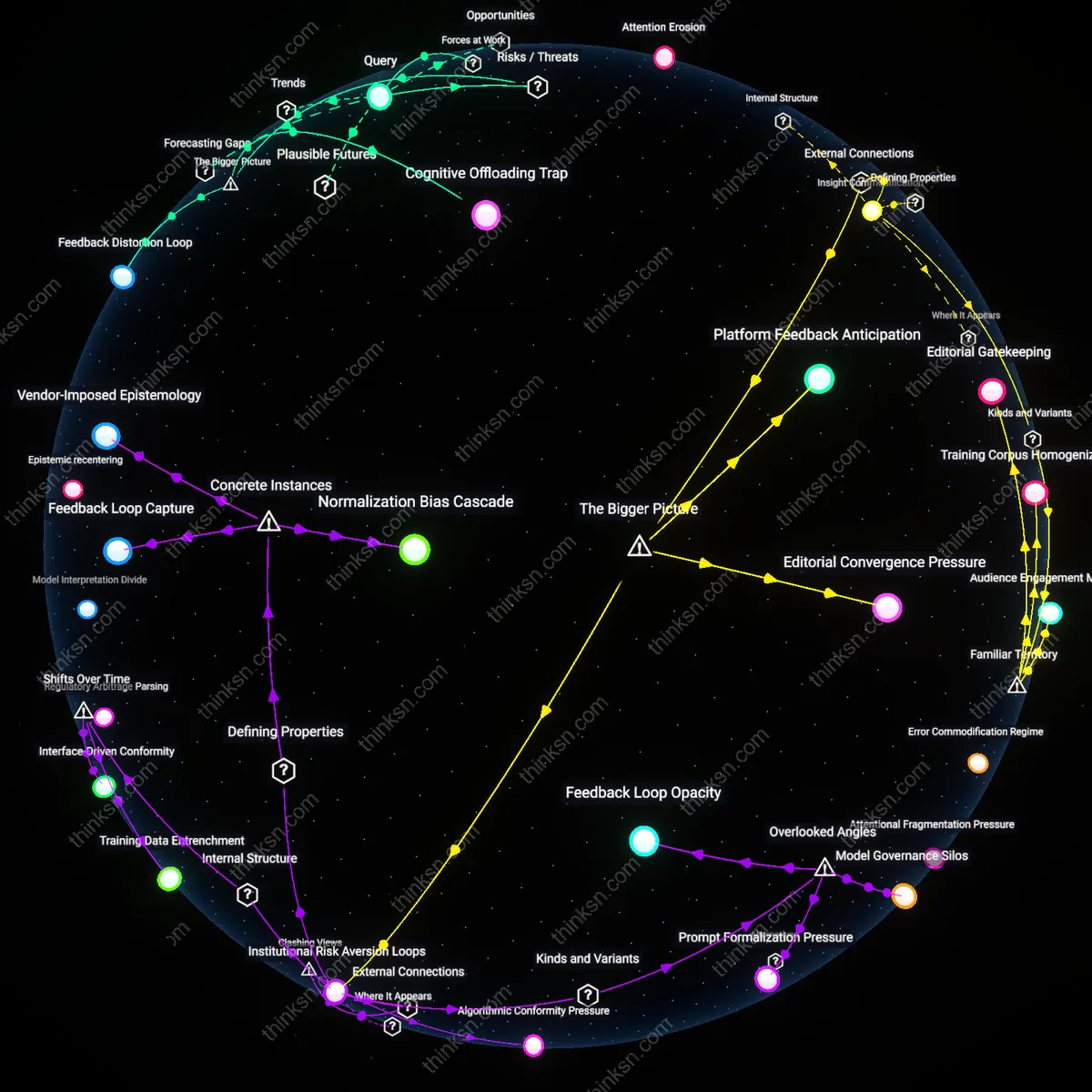

Data-driven startups prioritize speed and granularity in economic data presentation, leveraging APIs, machine learning models, and alternative datasets like credit card transactions or shipping logistics to update dashboards in near real time. While this responsiveness enhances relevance for traders and tech firms, it reduces space for editorial scrutiny, leading users to implicitly accept data as neutral output rather than socially constructed measurement. The underappreciated reality is that the startup’s credibility is not built on provenance or process but on perceived technological modernity—the assumption that faster data is inherently more accurate, even when metadata or sample biases are undisclosed.

Cognitive Labor Allocation

Readers of legacy newspaper editorials outsource data credibility assessment to the brand’s gatekeepers, relying on visible editorial controls like named columnists, citations, and retractions to reduce their own cognitive burden. In contrast, users of startup dashboards engage in active interpretation (comparing trends across tabs, adjusting filters, and cross-referencing signals), which makes credibility a contingent, user-constructed outcome rather than a pre-packaged assurance. The overlooked dynamic here is that credibility in the startup context is not a property of the data itself but emerges from the user’s sense of control and interaction, so that users can mistake navigational fluency for epistemic reliability.

Editorial Temporal Lag

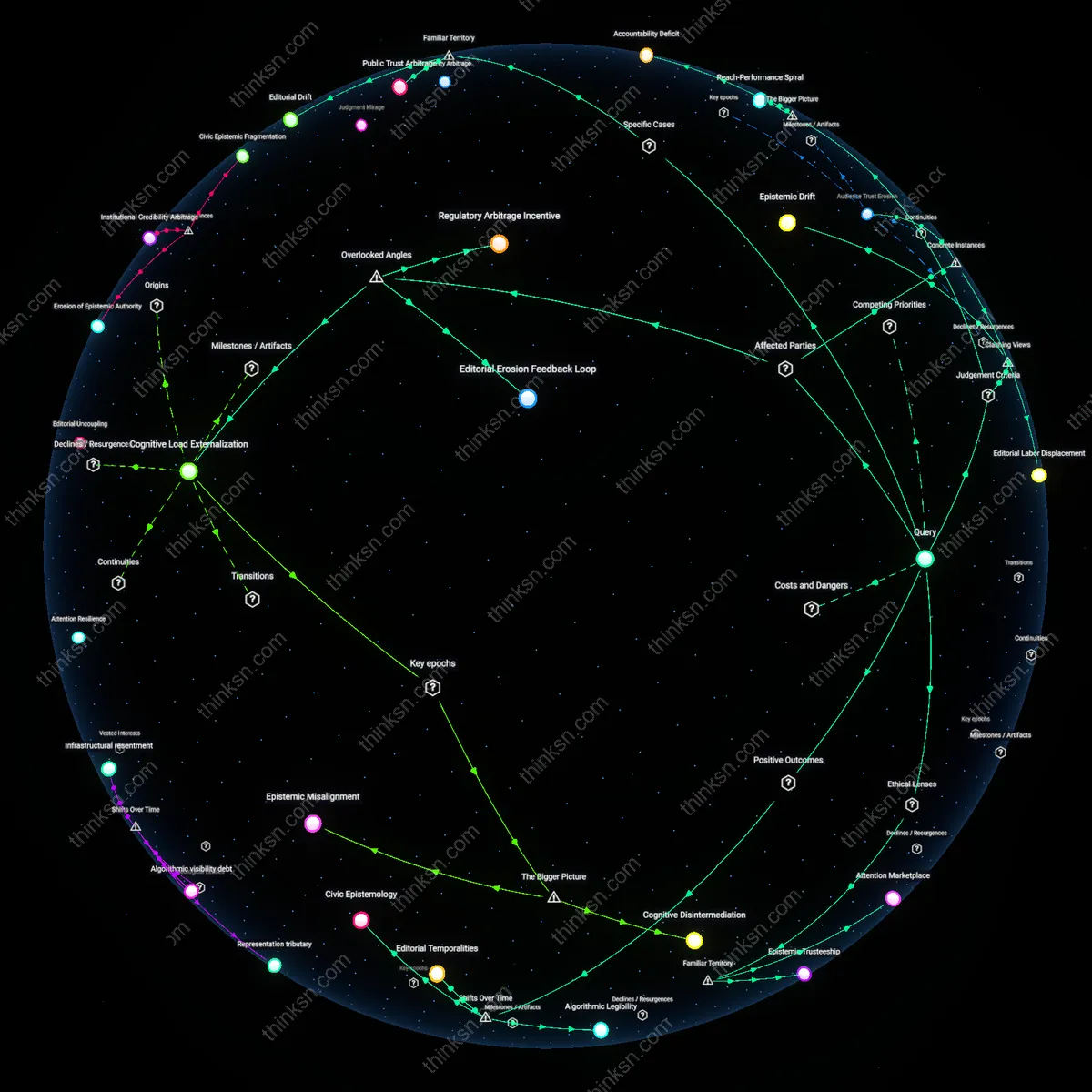

Established newspapers slow the release of economic data by subjecting indicators to hierarchical editorial review cycles that originated in the mid-20th-century print production model, delaying publication until the material aligns with institutional narrative standards. This process, managed by op-ed editors and senior columnists embedded in legacy media hierarchies, filters data through precedent-based expectations of tone and relevance, and it often excludes or reframes figures that are statistically significant but contextually disruptive. The mechanism is a gatekeeping protocol designed to secure credibility with a mass readership in the analog era, and it now produces a temporal lag between the emergence of data and its public presentation, privileging coherence over contemporaneity. What is underappreciated is that this lag is not accidental but a preserved artifact of pre-digital workflow regimentation, in which credibility was equated with deliberation. Mid-century newspaper production rhythms thus continue to distort real-time economic interpretation.

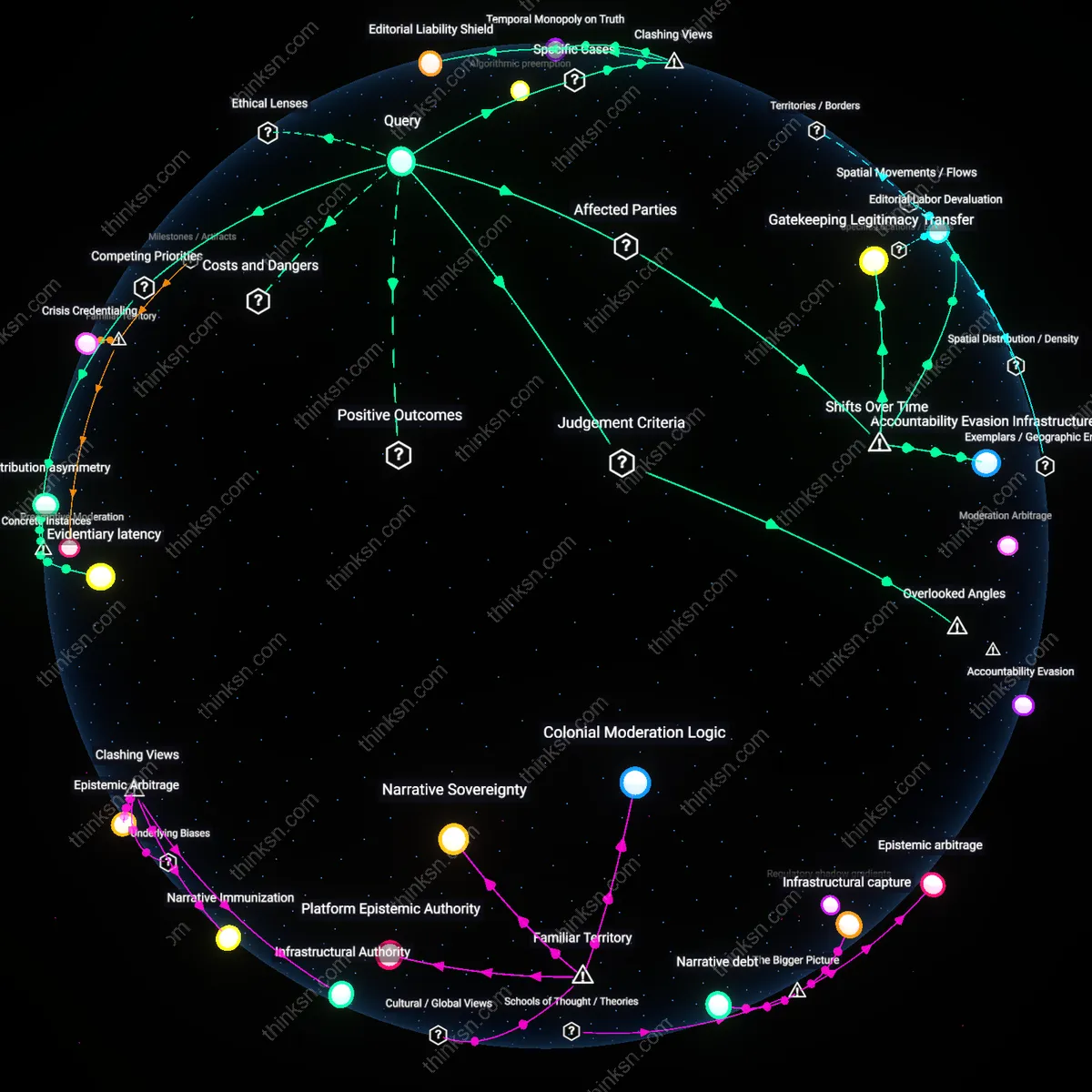

Asymmetric Accountability Regimes

The credibility gap between legacy editorial pages and data startups crystallized after the 2008 financial crisis, when public mistrust of institutional economic narratives grew even as faith in quantified, 'unfiltered' data displays rose, creating divergent accountability structures. Legacy newspapers, under pressure to maintain reader trust, intensified internal review protocols involving fact-checkers and economics editors, who now reference Federal Reserve publications and academic working papers to ground claims. This makes credibility a function of traceable attribution to recognized sources. Meanwhile, startups, especially those backed by venture capital from 2012 onward, avoided comparable oversight by framing dashboards as 'tools' rather than 'opinions,' thus evading journalistic standards while still shaping economic perception. The critical shift is that post-crisis demand for transparency produced stronger internal checks in traditional media while incentivizing startups to exploit regulatory ambiguity. Credibility is therefore no longer determined by accuracy alone but by whether an entity is perceived to stand within the line of institutional accountability.

Editorial Inertia

The credibility of economic data is lower on legacy newspaper editorial pages because institutional resistance to updating narrative frameworks distorts how new data are interpreted. The Wall Street Journal’s editorial board is the example given here: it is described as consistently reframing Federal Reserve inflation metrics through a hawkish, deregulatory lens regardless of statistical revisions. On this view, credibility is a function not of data accuracy but of alignment with entrenched ideological templates. The mechanism operates through editorial continuity: long-tenured opinion editors treat data as substantiating evidence for preexisting worldviews rather than as inputs that could falsify them, which undermines the impression of objectivity even when the datasets they cite are legitimate. The non-obvious insight is that frequent citation of official statistics does not enhance credibility when those citations are systematically curated to affirm a fixed editorial stance, challenging the intuitive belief that data-rich commentary implies data-responsive thinking.

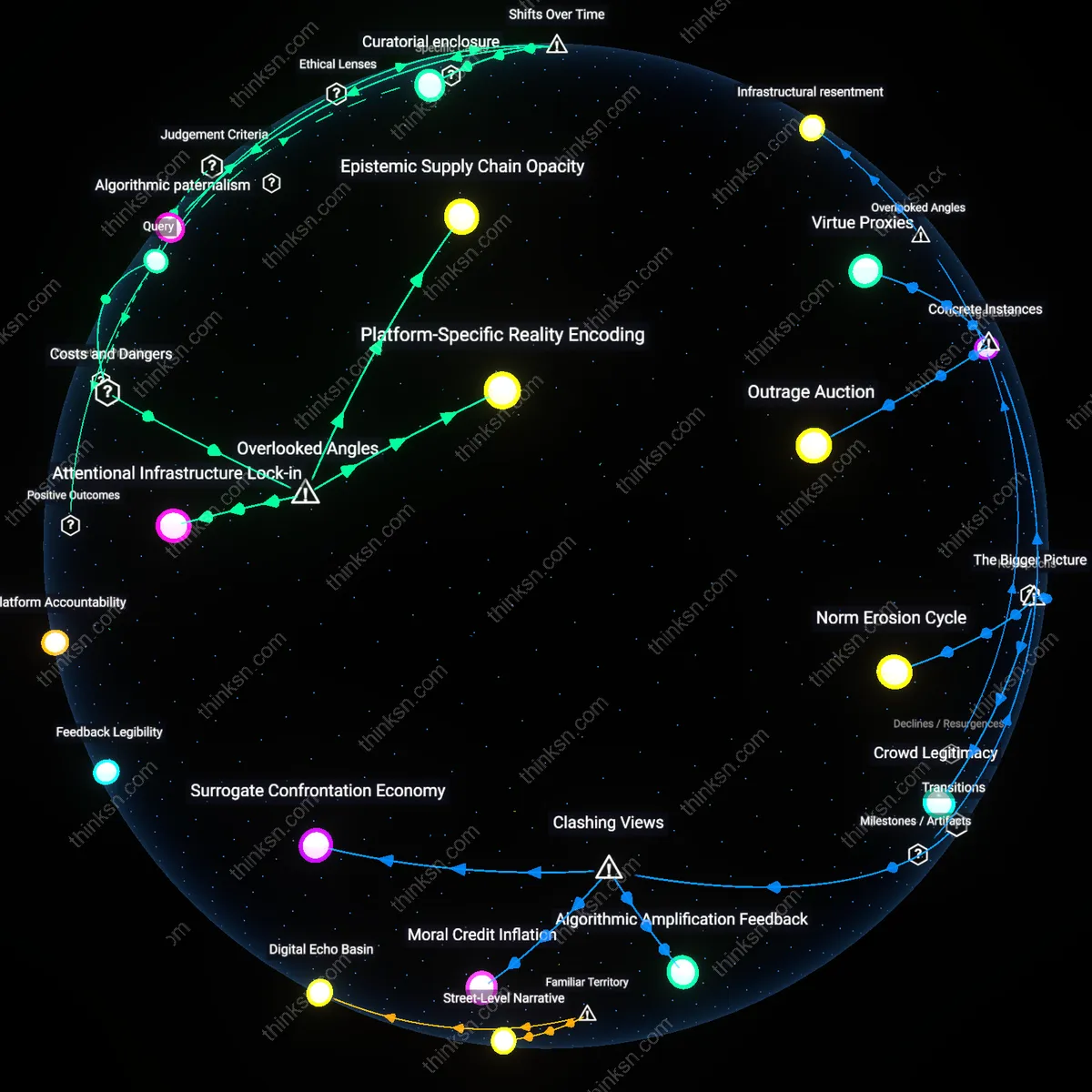

Visuality Premium

A data dashboard such as FRED (Federal Reserve Economic Data), which is maintained by the Federal Reserve Bank of St. Louis rather than by a startup, gains perceived credibility not from neutrality but from the ritual displacement of judgment onto visualization mechanics: algorithmic updates and real-time graphs simulate objectivity more effectively than editorial disclaimers ever could. The same pattern is said to appear in fintech users’ preference for TradingView’s automated GDP trackers over IMF reports embedded in Financial Times articles. This credibility stems from interface design that suppresses authorial presence, removing or downplaying bylines, timestamps, and revision histories so that data appear self-generating, governed by code rather than human curation, which makes the dashboard more trusted even when the underlying sources are identical. The dissonance is that reduced editorial control paradoxically increases perceived reliability, contradicting the assumption that oversight improves data quality.