Facial Biometrics in Dating: Convenience vs. Privacy Risks?

Analysis reveals 10 key thematic connections.

Key Findings

Platform Algorithmic Debt

Free dating apps that integrate biometric facial analysis accumulate platform algorithmic debt: they embed facial data collected without meaningful consent into their matching systems and favor false-positive matches over long-term user autonomy. The debt builds when developers repurpose third-party facial recognition APIs without transparent opt-in protocols, binding future iterations to inherited biases. Those biases degrade trust in algorithmic fairness, especially among neurodivergent users who express attraction outside dominant physiognomic norms. The overlooked mechanism is technical path dependency in open-architecture platforms: early integration choices silently constrain ethical redesign, so de-biasing later versions becomes structurally costly and meets resistance from venture stakeholders. The risk calculus therefore shifts from individual privacy violations to the systemic erosion of adaptive platform governance.

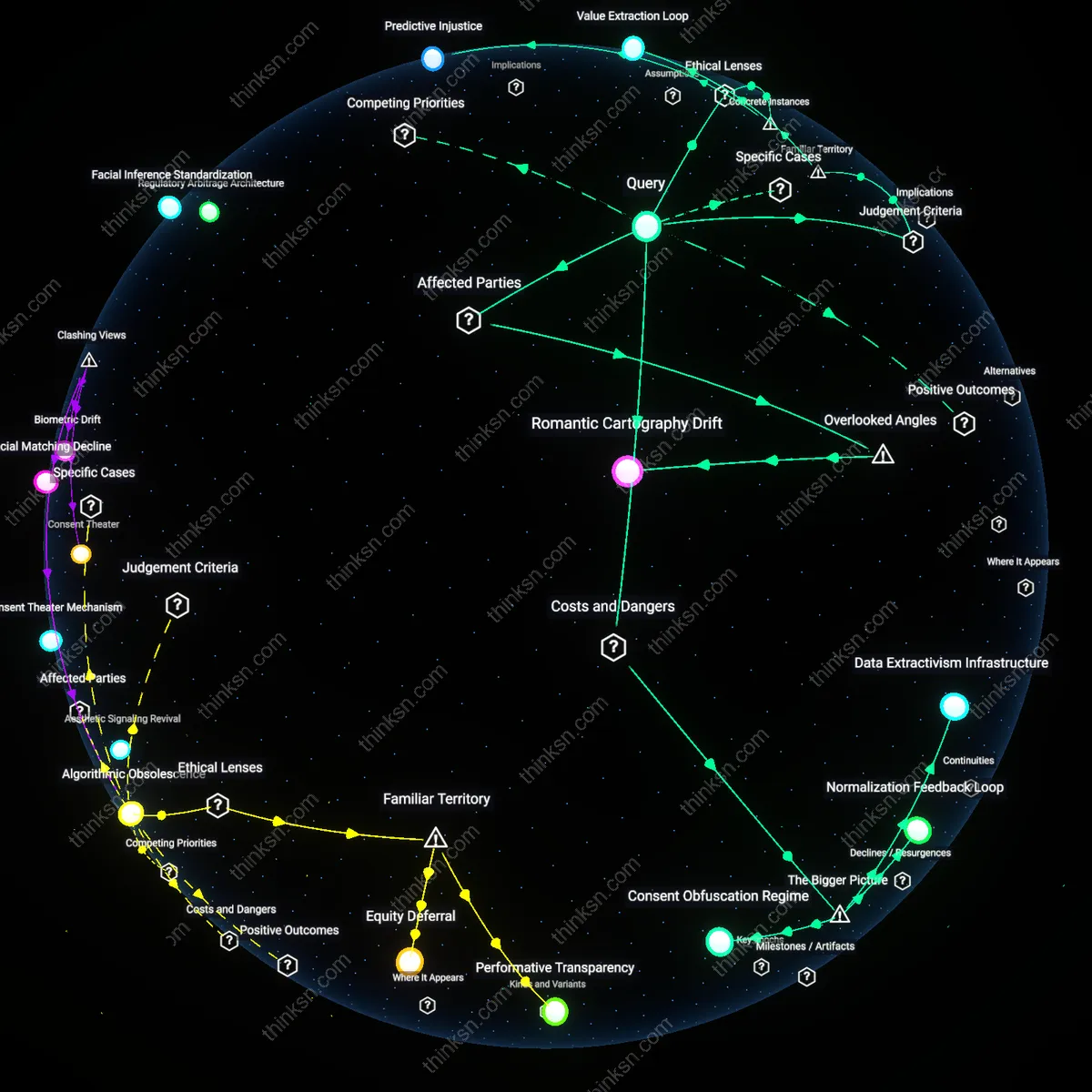

Romantic Cartography Drift

Biometric facial analysis produces romantic cartography drift: it redefines proximity as algorithmic likeness rather than physical adjacency. As a result, municipal public health initiatives, such as STD prevention campaigns in transit hubs, lose targeting precision when users' movement patterns no longer align with their app-generated 'compatibility zones'. The overlooked dependency is institutional reliance on dating app mobility data for urban health forecasting, data that becomes less valid as facial-matching algorithms promote long-distance emotional investment over local interaction. This lowers the public utility of behavioral datasets, turning what was once treated as a neutral input into a commercially skewed artifact with downstream civic consequences.

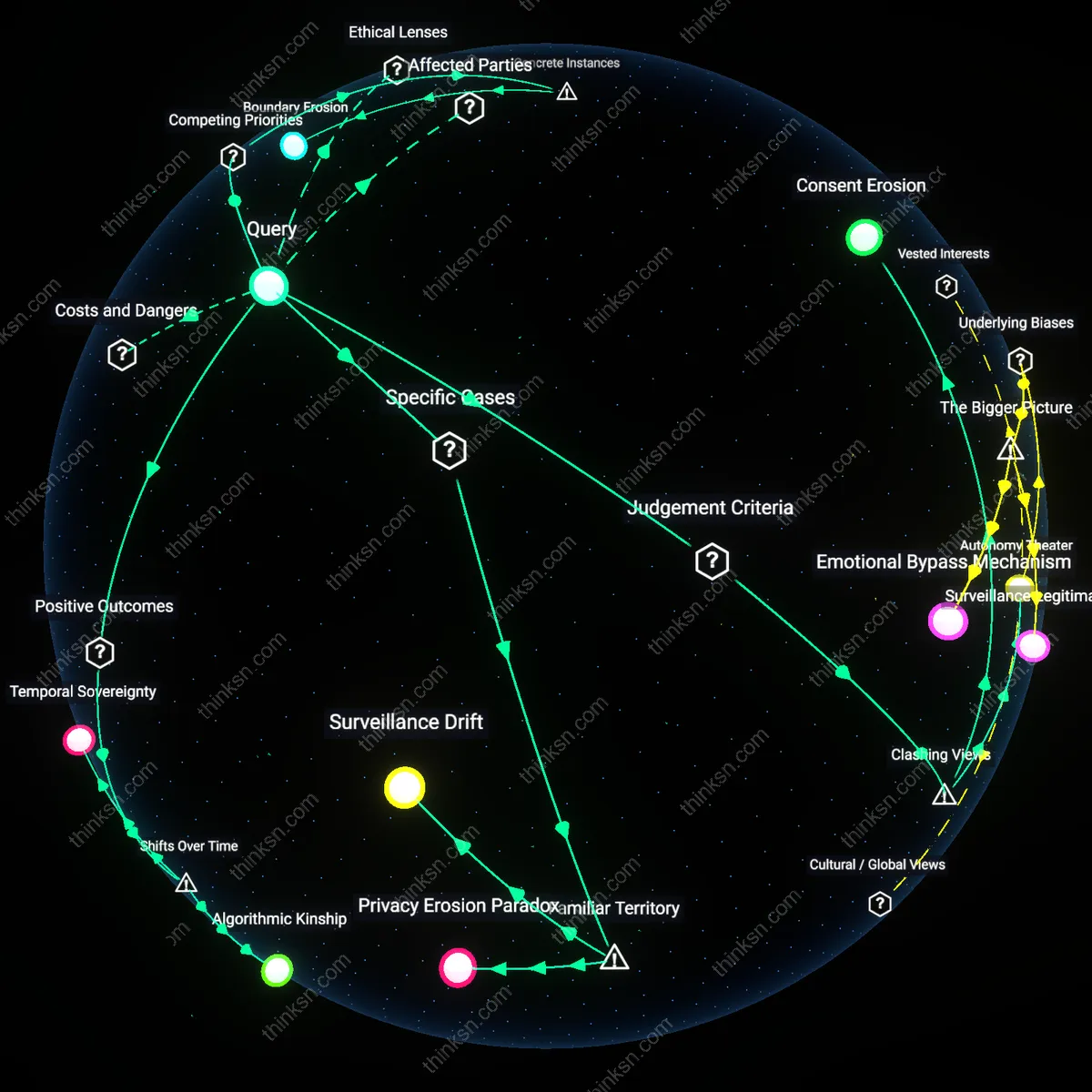

Consent Erosion

No, the compatibility benefit does not justify the profiling, because users on free dating apps rarely give informed consent to biometric data use, and the mechanisms that enable facial analysis, such as opaque privacy policies and bundled permissions, exploit behavioral inertia. Most platforms treat consent as a procedural checkbox rather than an ongoing, meaningful choice, which allows continuous profiling under the guise of service improvement. Personal autonomy is undermined not through overt coercion but through systemic design that discourages active decision-making, showing how convenience normalizes surveillance in consumer tech.
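To make the contrast between a one-time checkbox and an ongoing choice concrete, here is a minimal sketch of purpose-scoped, expiring, revocable consent. It is an illustration under stated assumptions, not a description of how any dating platform works: the class `ConsentLedger`, the 90-day lifetime, and the purpose strings are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class ConsentLedger:
    """Hypothetical ledger: consent is per purpose, expires, and can be revoked."""

    ttl: timedelta = timedelta(days=90)  # assumed lifetime before re-asking
    _grants: dict = field(default_factory=dict)  # (user_id, purpose) -> expiry

    def grant(self, user_id: str, purpose: str, now: datetime) -> None:
        # Consent covers exactly one purpose and carries an expiry date.
        self._grants[(user_id, purpose)] = now + self.ttl

    def revoke(self, user_id: str, purpose: str) -> None:
        # Revocation takes effect immediately; revoking twice is harmless.
        self._grants.pop((user_id, purpose), None)

    def allows(self, user_id: str, purpose: str, now: datetime) -> bool:
        # Default deny: no record, or an expired record, means no processing.
        expiry = self._grants.get((user_id, purpose))
        return expiry is not None and now < expiry


t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
ledger = ConsentLedger()
ledger.grant("u1", "face_matching", t0)

day_one = t0 + timedelta(days=1)
matching_ok = ledger.allows("u1", "face_matching", day_one)
ads_ok = ledger.allows("u1", "ad_targeting", day_one)
expired_ok = ledger.allows("u1", "face_matching", t0 + timedelta(days=91))
```

In this sketch a grant for matching does not extend to advertising, and it lapses unless the user is asked again, which is the opposite of the bundled, persistent permission the paragraph criticizes.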

Value Extraction Loop

No, because biometric facial analysis on free apps primarily serves to refine user profiles for third-party data markets, turning intimate preferences into monetizable behavioral patterns. The compatibility benefit is incidental, a retention tactic to sustain engagement, while the core economic mechanism extracts value from personal biology without reciprocal ownership or compensation. The result is a structural asymmetry: personal data fuels profitable markets in behavioral prediction, yet users bear the risk and gain minimal control.

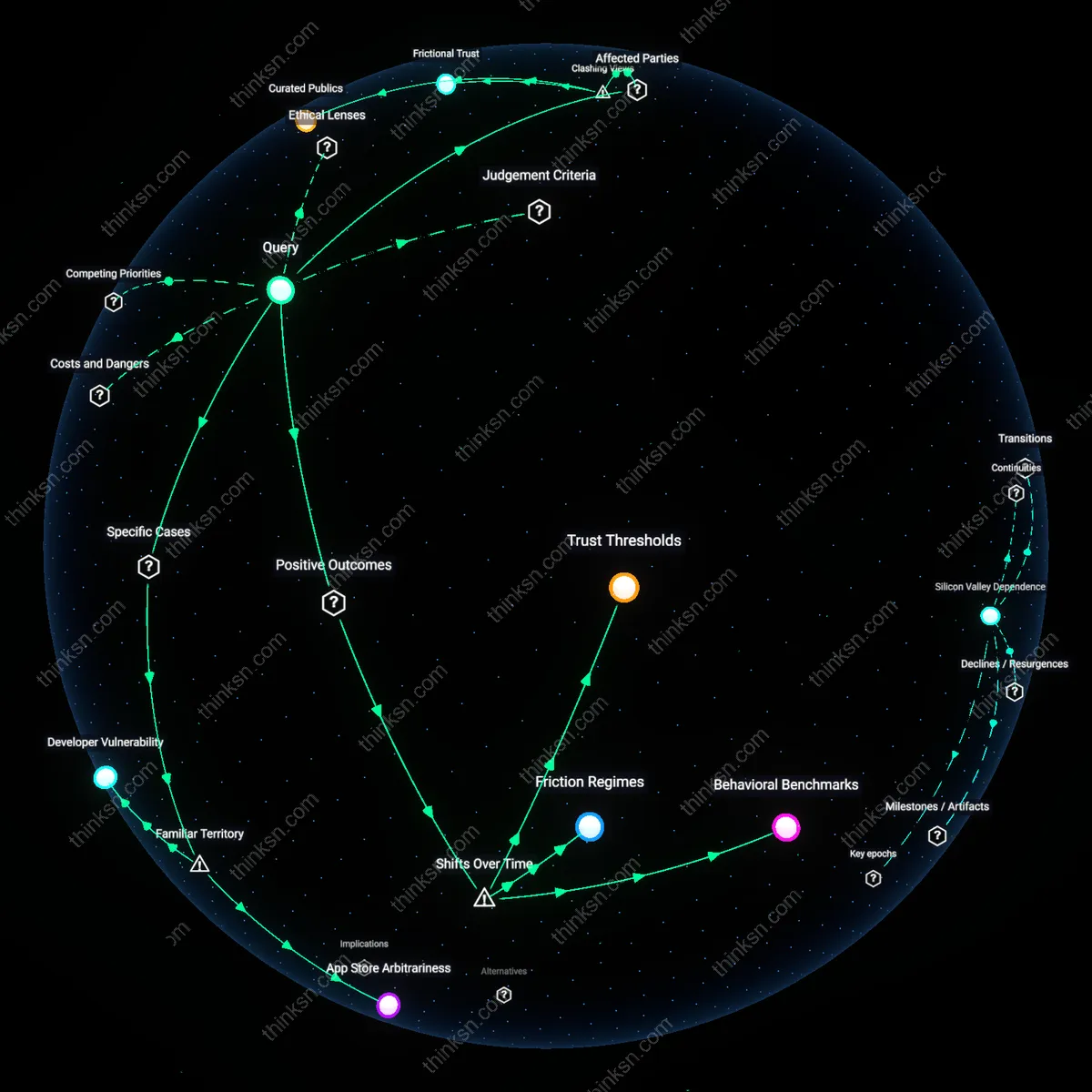

Trust Infrastructure Deficit

Yes, because where biometric matching demonstrably increases long-term relationship formation, as in trials using facial symmetry and emotional valence detection, public health gains such as reduced loneliness and more stable households can justify measured data use. The familiar fear of profiling often overlooks that medical and social institutions already rely on embodied signals (e.g., voice stress, gait). This suggests that the real issue is not the analysis itself but the absence of auditable, regulated systems to govern its use on consumer platforms.
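One building block of an auditable system is a tamper-evident record of every access to biometric data. The sketch below shows a hash-chained log using only the Python standard library. The field names (`actor`, `purpose`, `subject`) and the function names are assumptions chosen for illustration, and a real audit regime would also need independent oversight, which code alone cannot supply.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry
FIELDS = ("actor", "purpose", "subject", "prev")


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same body always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list, actor: str, purpose: str, subject: str) -> None:
    """Append an access record that commits to the hash of the previous one."""
    prev = log[-1]["hash"] if log else GENESIS
    body = {"actor": actor, "purpose": purpose, "subject": subject, "prev": prev}
    log.append({**body, "hash": _digest(body)})


def verify(log: list) -> bool:
    """Return True only if no entry was altered, removed, or reordered."""
    prev = GENESIS
    for entry in log:
        body = {key: entry[key] for key in FIELDS}
        if entry["prev"] != prev or entry["hash"] != _digest(body):
            return False
        prev = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "matching-service", "face_matching", "u1")
append_entry(audit_log, "analytics-job", "model_evaluation", "u1")
intact = verify(audit_log)
```

Because each entry commits to the one before it, quietly rewriting the stated purpose of an earlier access invalidates the chain, which gives an outside auditor something concrete to check.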

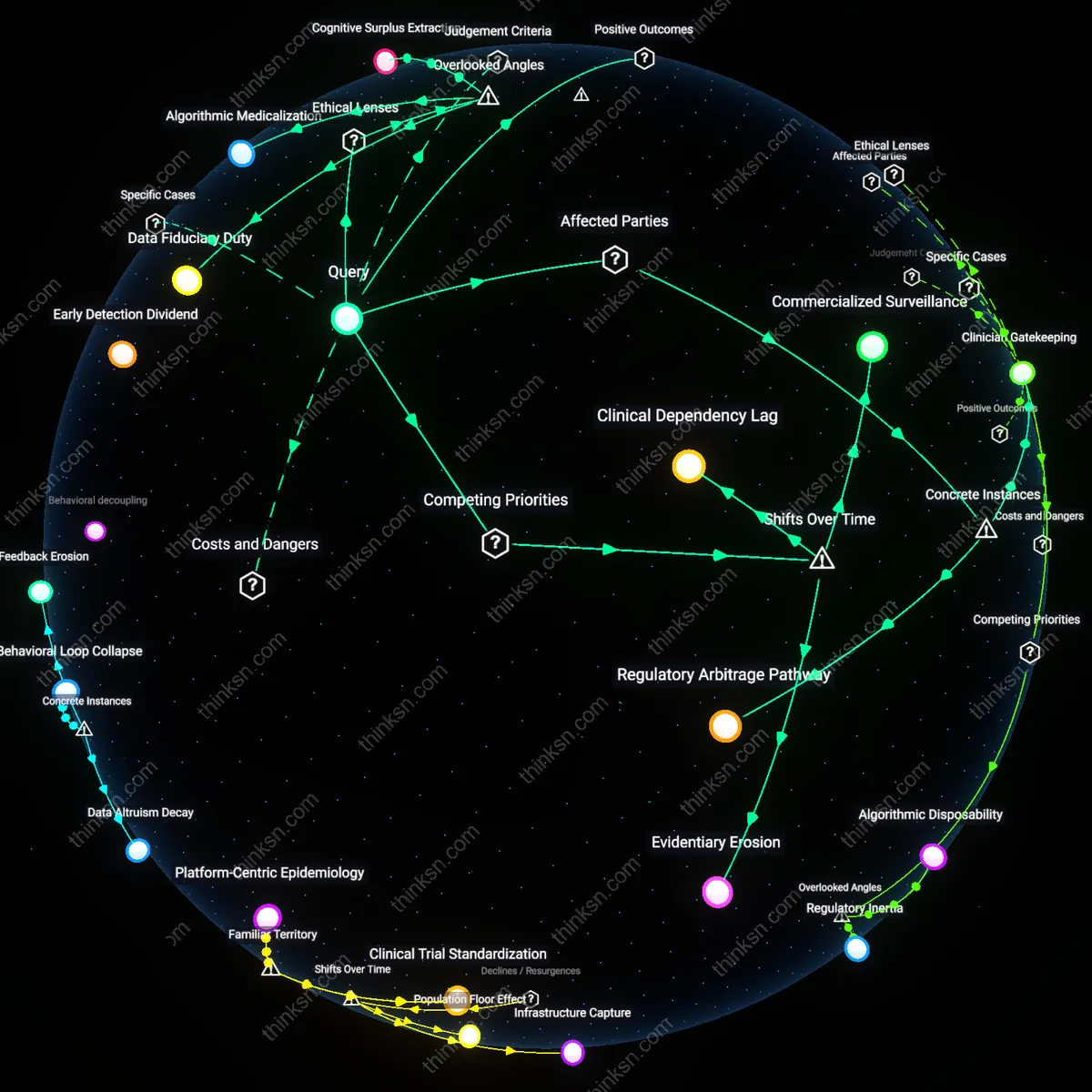

Data Extractivism Infrastructure

Biometric facial analysis on free dating apps enables pervasive user profiling whose harms outweigh the compatibility benefits, because it feeds intimate biometric data into advertising and surveillance economies. Free apps monetize user attention and data through surveillance-based business models, in which facial biometrics become high-value inputs for cross-platform identity tracking and behavioral prediction. This integration is driven not by romantic matching efficacy but by the ad tech ecosystem's infrastructural demand for granular personal data, and that ecosystem repurposes intimate biometrics beyond anything users consented to. The non-obvious consequence is that facial analysis functions less as a matching tool and more as a conduit that embeds users in systems of data extractivism operating globally with minimal regulatory oversight.

Normalization Feedback Loop

The routine collection of facial biometrics on dating apps risks normalizing biometric surveillance in everyday social interactions, eroding societal resistance to automated physical scrutiny. As users acclimate to facial scanning as a prerequisite for intimacy, they implicitly accept that personal attraction must be mediated by algorithmic systems often trained on data gathered without consent. This normalization is amplified by platform design that frames biometric analysis as innovative and consensual, masking the broader institutional drift toward ambient biometric monitoring. The underappreciated systemic danger is that these apps act as cultural testing grounds: they habituate populations to biometric scrutiny under benign branding while preparing the infrastructural and normative pathways for expanded state and corporate surveillance.

Consent Obfuscation Regime

Free dating apps obscure meaningful consent through interface design and legal architecture, making users complicit in their own profiling while believing they are optimizing romance. Terms of service and default settings are structured to funnel users into biometric enrollment without conveying the downstream reuse of facial data in third-party risk assessment, insurance, or employment screening systems. This obfuscation is sustained by asymmetries in technical literacy and power between users and platform operators, who benefit from regulatory gray zones in biometric data governance. The critical systemic mechanism is not user ignorance per se, but the deliberate engineering of consent processes that simulate choice while functionally eliminating it, thus enabling exploitation under the guise of service improvement.

Predictive Injustice

The use of facial analysis to infer compatibility mirrors the logic of predictive policing tools such as Chicago's Strategic Subject List, which used biased data to flag individuals as future crime risks and thereby reproduced structural inequities. Both rest on utilitarian cost-benefit frameworks, and facial matching adds the false assumption that neural correlates of attraction are universally measurable. The parallel shows that biometric matching systems inevitably encode dominant cultural biases, such as Eurocentric beauty standards, into seemingly neutral algorithms. The result is a form of epistemic injustice: marginalized users are systematically misclassified not because the system is inaccurate but because of the political ontology embedded in its training data.
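A per-group error audit is one way to surface the systematic misclassification described above. The following is a minimal sketch on made-up records, not results from any real system: the group labels, the record layout `(group, predicted, actual)`, and both function names are hypothetical. An audit like this can show that a disparity exists, but it cannot by itself explain the cause, which the argument locates in the training data.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Map each group to its share of records where predicted != actual."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for group, predicted, actual in records:
        totals[group] += 1
        if predicted != actual:
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Highest group error rate divided by the lowest."""
    low, high = min(rates.values()), max(rates.values())
    if low == 0:
        # Avoid division by zero: any error against a zero baseline is unbounded.
        return float("inf") if high > 0 else 1.0
    return high / low


# Illustrative records only: (group, predicted_match, actual_match).
sample = [
    ("A", True, True), ("A", False, False), ("A", True, False), ("A", True, True),
    ("B", True, False), ("B", False, True), ("B", True, True), ("B", False, False),
]
rates = error_rates_by_group(sample)
ratio = disparity_ratio(rates)
```

On this toy input, group A has one error in four records and group B has two in four, so the ratio flags B as misclassified twice as often.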

Surveillance Drift

When free dating platforms adopt facial analysis, they expose themselves to the trajectory of Facebook's facial recognition work, including DeepFace: it was presented as a photo-tagging convenience, yet the face templates it accumulated drew biometric privacy litigation, and the company announced in 2021 that it would shut the system down. The case illustrates how biometric data gathered for one consumer feature is prone to function creep under neoliberal data governance regimes, where data exhaust is treated as a capital asset. On this pattern, the initial social benefit of improved matching is structurally inseparable from long-term surveillance expansion: monetization pressures transform compatibility tools into behavioral prediction infrastructures with no juridical boundary between private preference and public control.