Does Shadow Banning by App Stores Undermine User Trust?

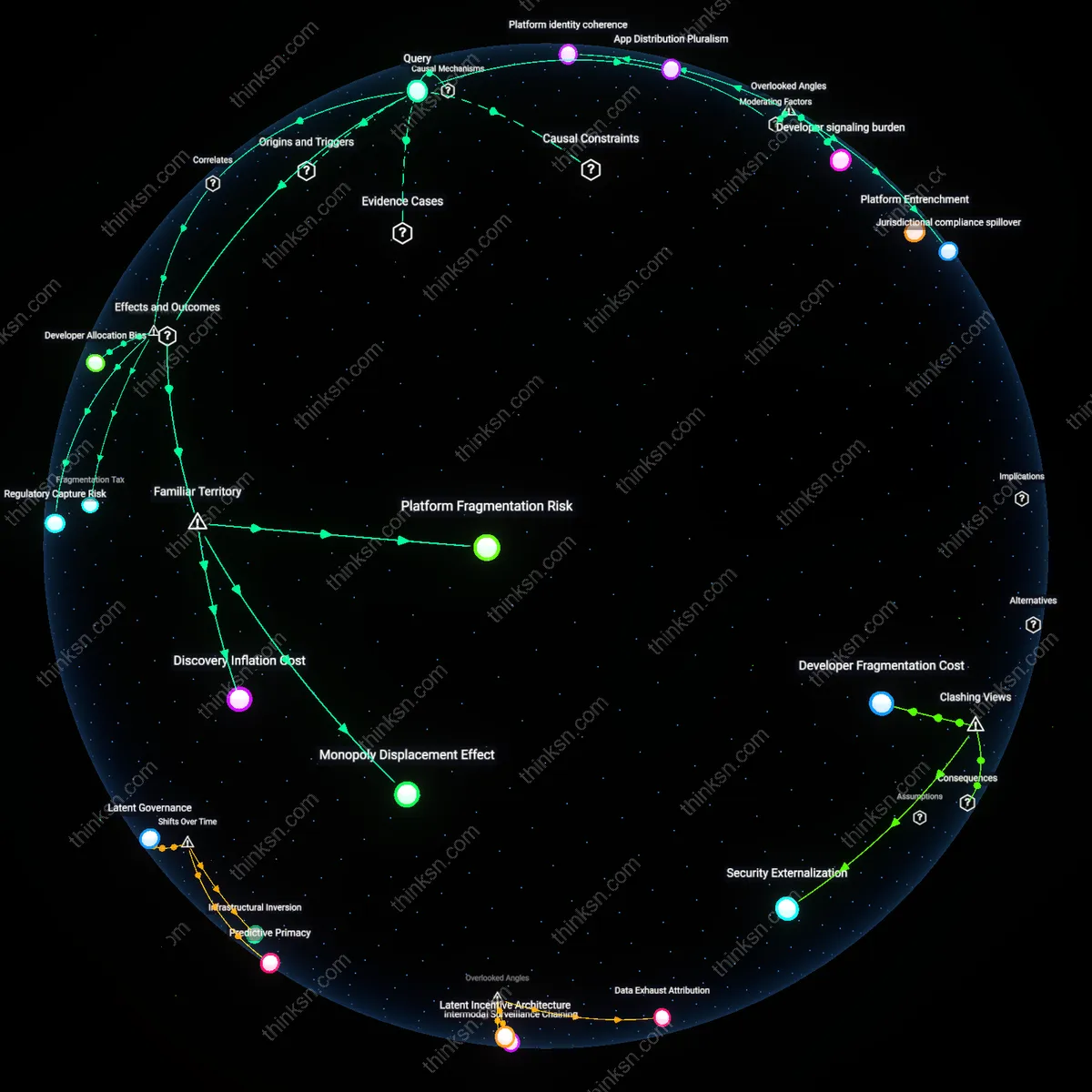

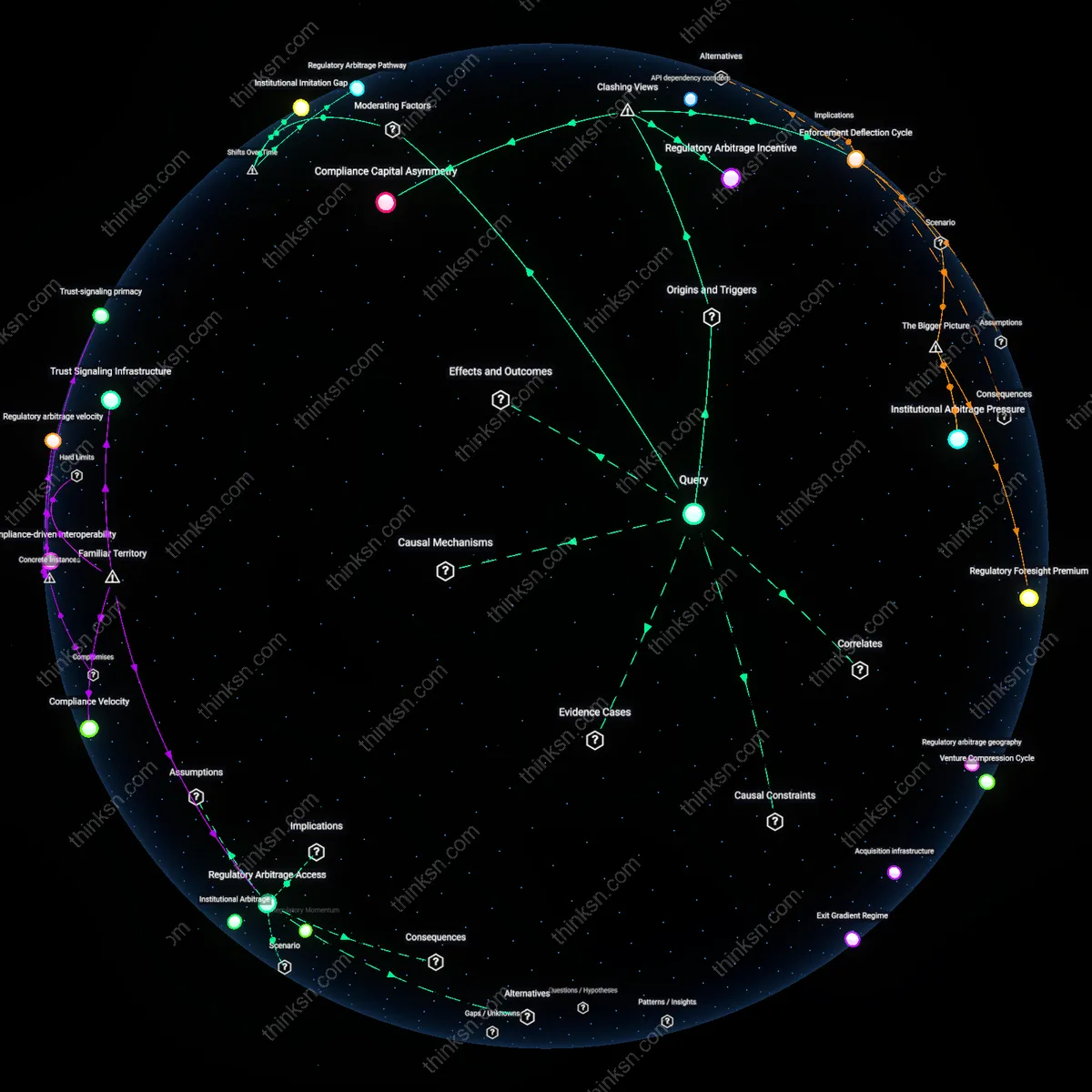

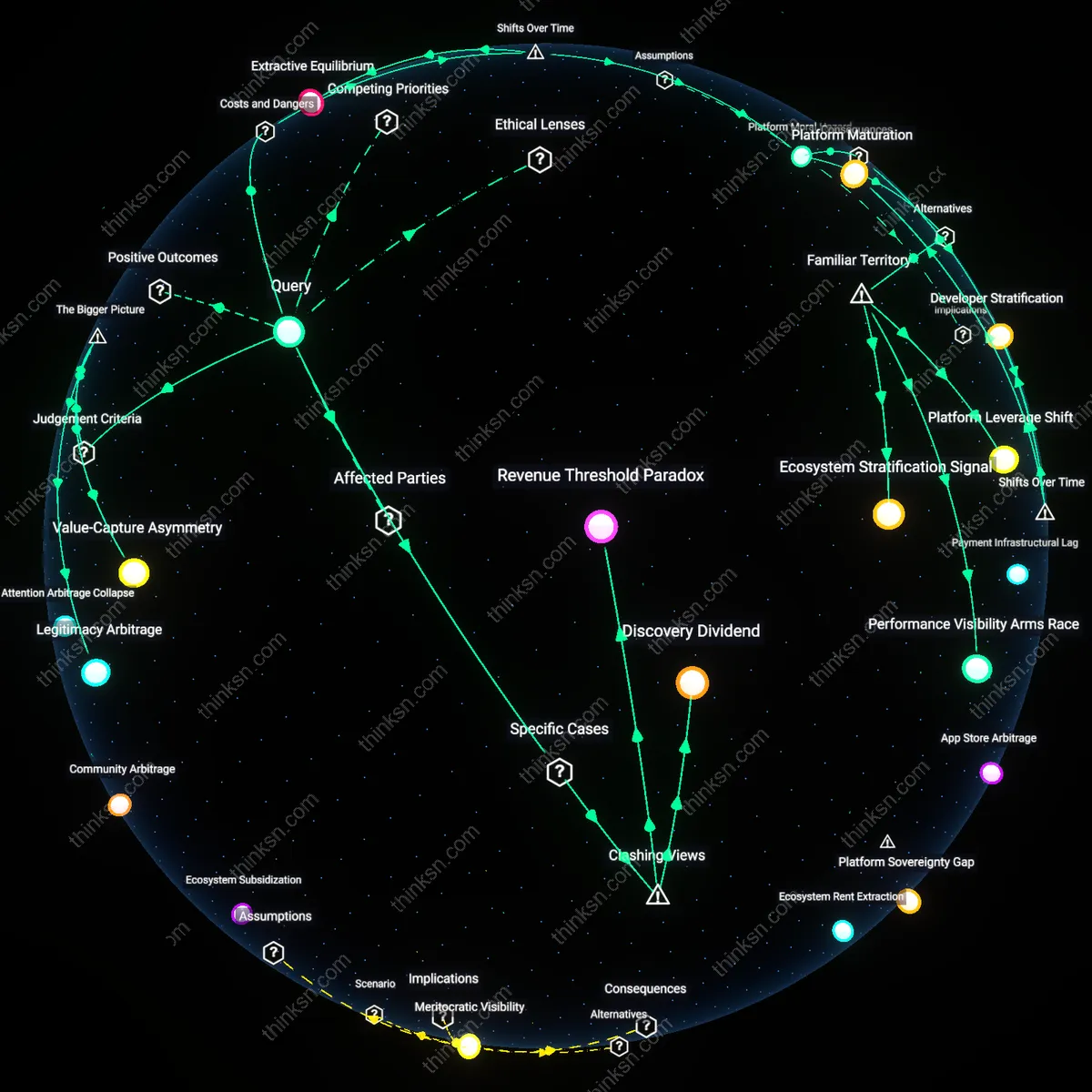

Analysis reveals 8 key thematic connections.

Key Findings

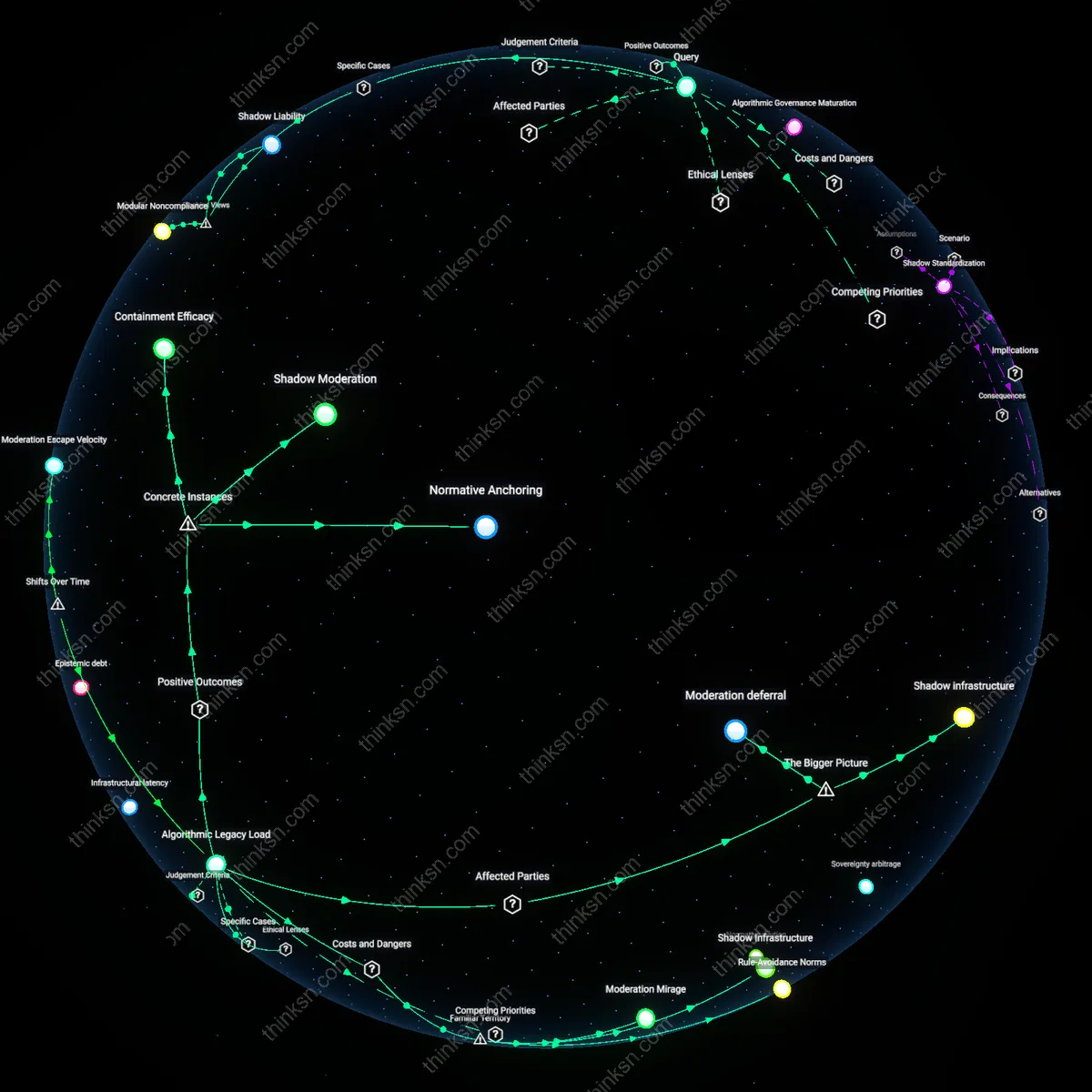

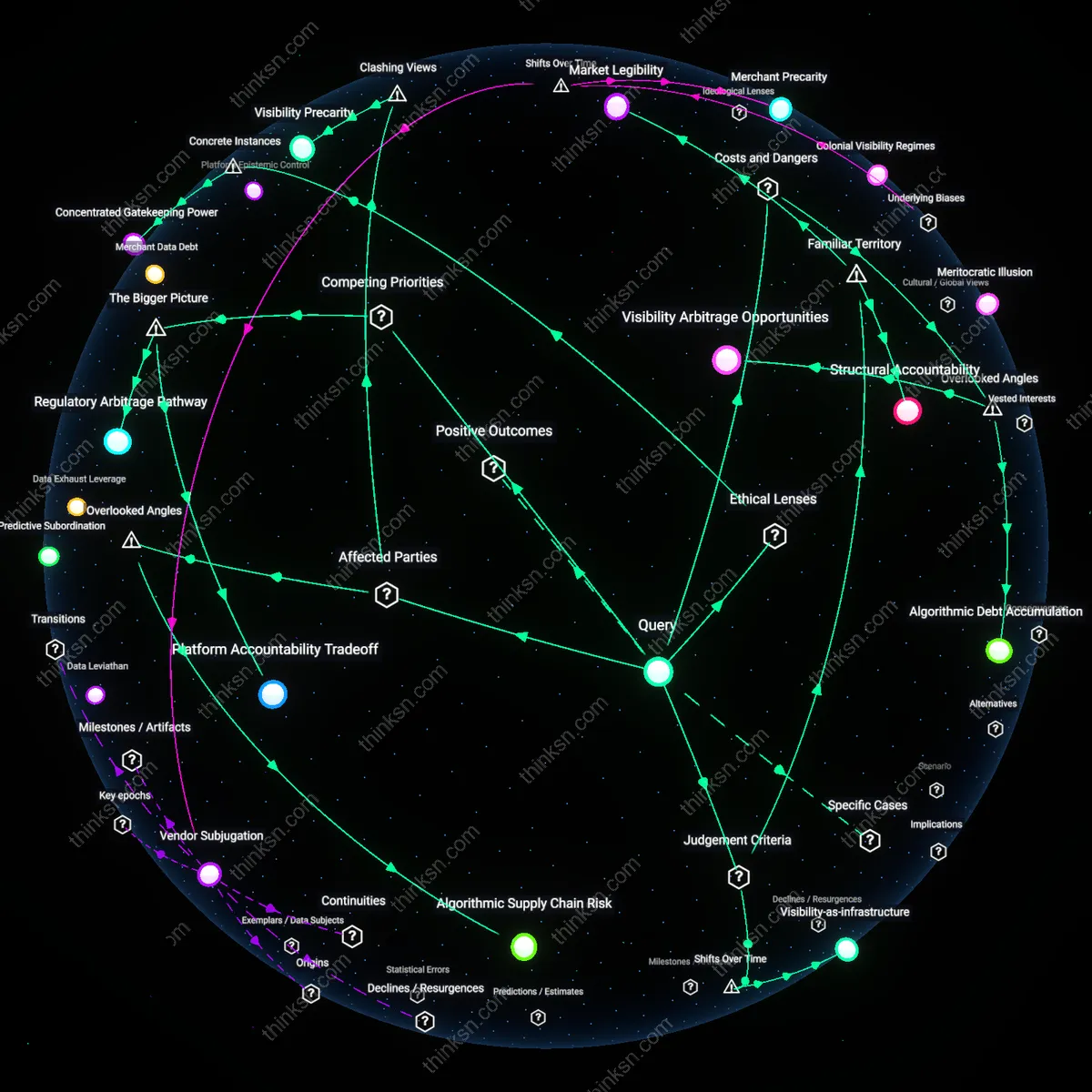

Curated Publics

App store shadow banning is ethically necessary because it lets platforms uphold community standards that reflect local legal and cultural expectations, particularly in jurisdictions with strict rules on hate speech or misinformation, such as Germany’s NetzDG or India’s IT Rules. The mechanism allows app stores to quietly demote or deprioritize applications that comply with U.S.-centric free speech norms but violate the civic thresholds of other polities, thereby preserving the platform’s operational legitimacy abroad. The non-obvious insight is that shadow banning, rather than undermining trust, can help multinational platforms meet the expectations of diverse publics without triggering diplomatic or legal clashes. Seemingly opaque enforcement may therefore enable pluralistic governance.

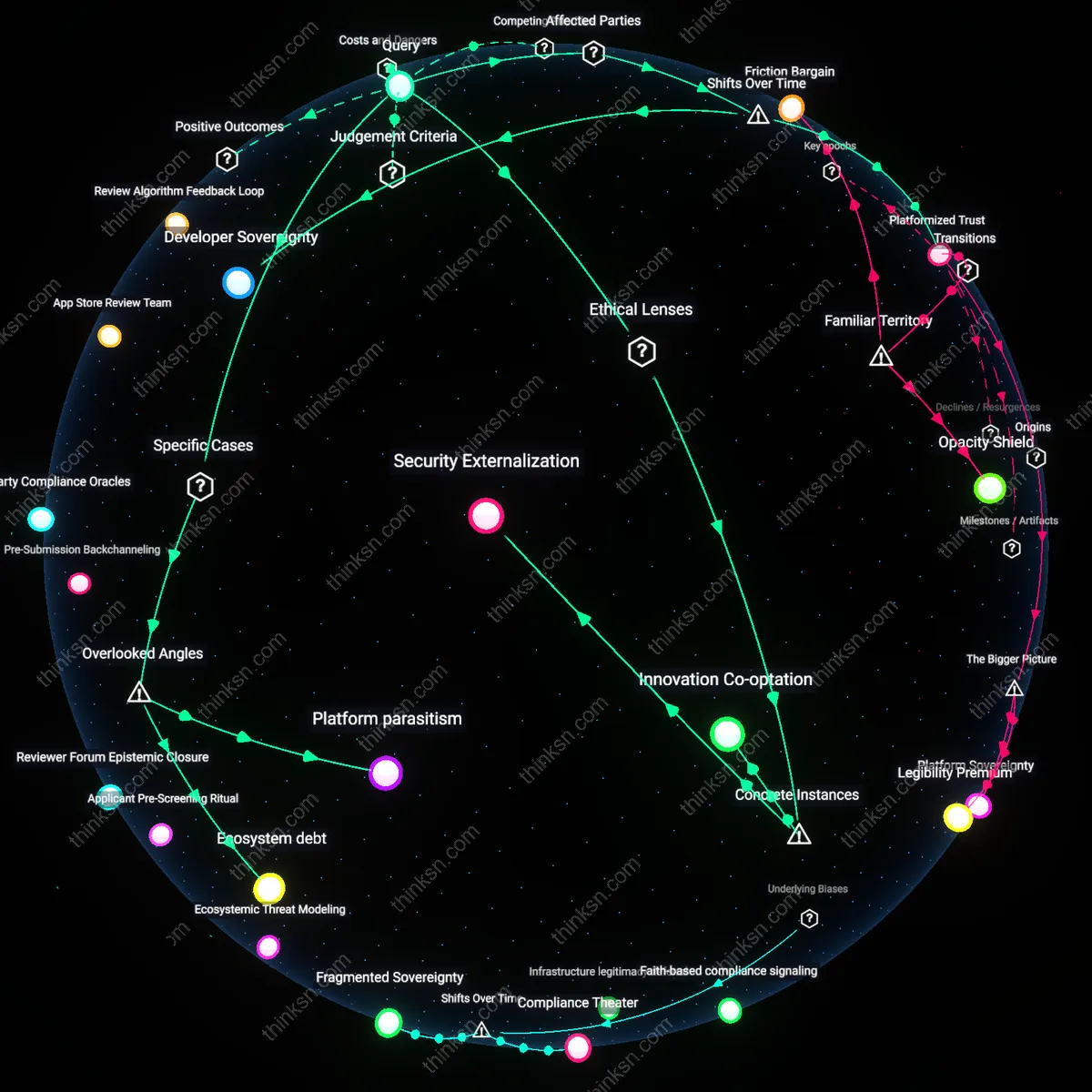

Asymmetric Accountability

Shadow banning in app stores entrenches a power differential in which developers from marginalized regions or with limited resources bear disproportionate harm from unexplained delistings or reduced visibility, while well-connected firms can navigate or contest these decisions through lobbying or legal teams. The mechanism combines non-transparent algorithmic ranking with appeal processes that reward institutional knowledge: indie app creators in Southeast Asia, for example, report sudden traffic drops without notification, whereas their Silicon Valley counterparts receive early warnings. The real friction is therefore not visibility per se but the way opacity shields dominant platforms from reciprocal scrutiny. Accountability flows downward only, and fairness is structurally inverted.

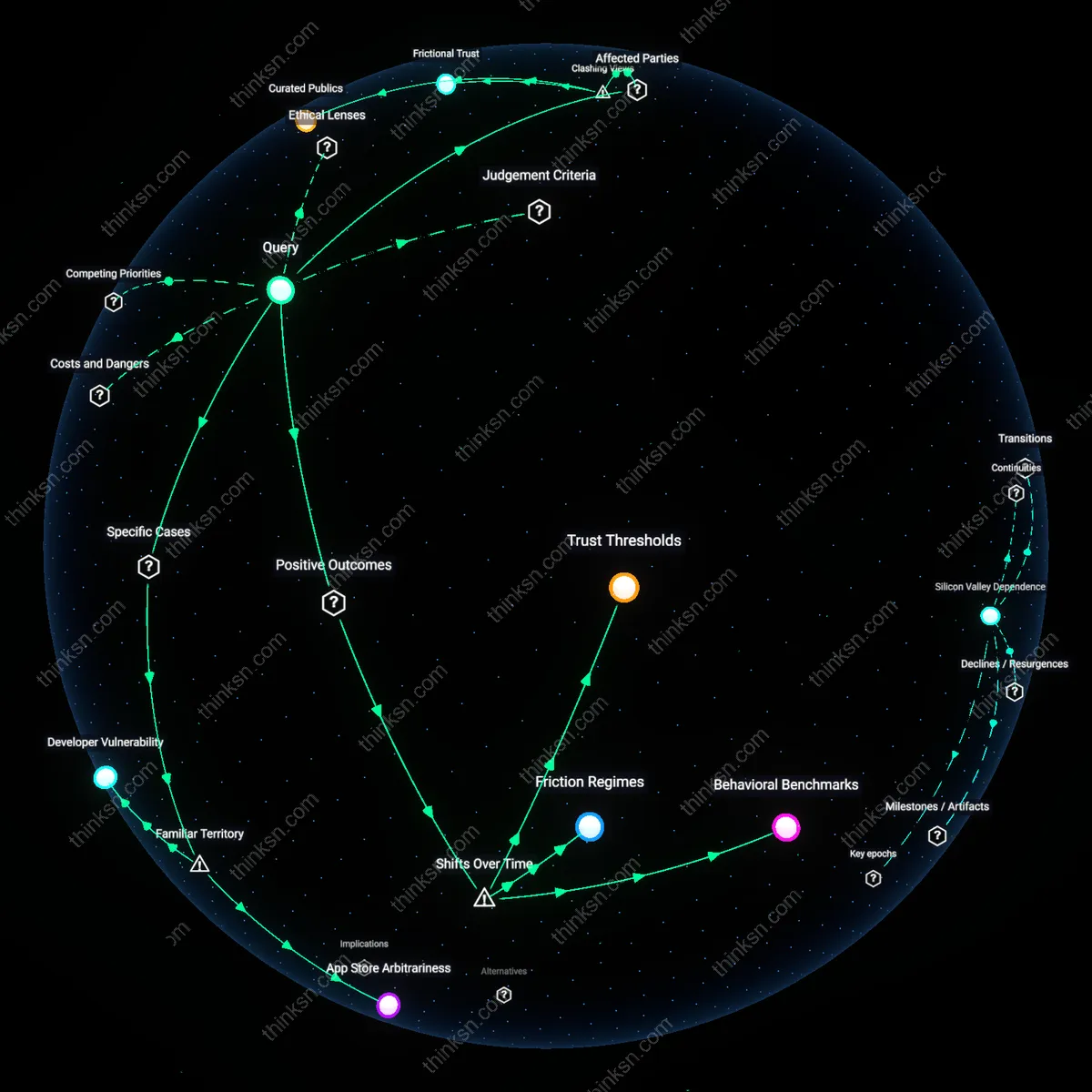

Frictional Trust

Shadow banning paradoxically sustains user trust by limiting mass exposure to harmful or deceptive apps that could otherwise proliferate under strict transparency mandates. The wave of deceptive health apps during the 2020 pandemic, which led app stores to tighten their curation of COVID-19 apps, illustrates the risk. The practical justification lies in delaying or downranking suspicious apps just long enough to allow human review, mimicking immunological latency in biological systems, where a delayed response prevents systemic infection. The non-obvious point is that momentary obscurity, not immediacy of information, enables long-term ecosystem resilience, which suggests that ethical legitimacy can emerge from calibrated invisibility rather than full disclosure.

Trust Thresholds

Shadow banning in app stores became ethically and practically justifiable after the 2016–2018 inflection point, when platform governance shifted from reactive content removal to proactive behavioral modulation, a transition catalyzed by widespread manipulation during pivotal elections. Before this period, app delistings were overt and final: blunt enforcement tools that disrupted user ecosystems abruptly and publicly. The shift to shadow measures allowed platforms like Google Play and Apple’s App Store to demote or marginalize apps subtly, particularly those exploiting algorithms for disinformation or surveillance, without triggering backlash from developers or users accustomed to all-or-nothing visibility. This calibrated suppression preserved the functional integrity of app ecosystems while quietly raising the threshold for trustworthiness. It became meaningful only once platforms began treating visibility as a conditional privilege rather than a default right.

Friction Regimes

The justification for shadow banning crystallized around 2020, as app stores came to be treated less as private digital marketplaces and more as quasi-public infrastructure. Antitrust scrutiny of Apple’s App Store and Google’s Play Store in that year pushed both companies to formalize opaque moderation practices into structured but non-transparent friction systems. Unlike earlier models, in which banned apps were removed outright and the removal created legal liability and public relations crises, shadow banning introduced graduated interference, such as reduced search rankings or restricted API access. This allowed platforms to degrade harmful functionality without triggering appeal rights or due process expectations. On this view, the regime of calibrated friction emerged not as a loophole but as an adaptation to judicial and regulatory pressure, turning shadow banning from a covert tactic into a systemic governance tool that preserves operational control while limiting the platform’s exposure to accountability.
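
The idea of graduated interference can be made concrete with a toy ranking model. The sketch below is purely illustrative: the tier names, the multiplier values, and the `App`, `effective_score`, and `rank` helpers are assumptions introduced here, not a description of how any real app store ranks results. It only shows how a demoted app can remain listed while sinking in search results, whereas a removed app disappears entirely.

```python
from dataclasses import dataclass

# Hypothetical enforcement tiers and visibility multipliers (illustrative values only).
TIER_MULTIPLIER = {
    "none": 1.0,             # no intervention
    "demoted": 0.5,          # mild ranking penalty
    "heavily_demoted": 0.1,  # near-invisible, but still listed
    "removed": 0.0,          # overt delisting
}


@dataclass
class App:
    name: str
    relevance: float  # base relevance to a search query, in [0, 1]
    tier: str = "none"


def effective_score(app: App) -> float:
    """Scale the base relevance by the penalty attached to the app's tier."""
    return app.relevance * TIER_MULTIPLIER[app.tier]


def rank(apps: list[App]) -> list[str]:
    """Return app names in display order; removed apps are not shown at all."""
    listed = [a for a in apps if a.tier != "removed"]
    return [a.name for a in sorted(listed, key=effective_score, reverse=True)]


apps = [
    App("A", 0.9, "demoted"),
    App("B", 0.6),
    App("C", 0.8, "removed"),
    App("D", 0.5, "heavily_demoted"),
]
print(rank(apps))  # ['B', 'A', 'D']
```

In this toy example, app A is the most relevant result yet ranks below B, and nothing in the output tells a developer or user why. That invisibility is the property the surrounding argument turns on.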

Behavioral Benchmarks

Shadow banning gained legitimacy through the post-2019 normalization of machine learning-based compliance monitoring, when app stores shifted from rule-based enforcement to behavioral pattern detection, using AI systems trained on historical data from removed or flagged apps to identify emerging threats before they scale. In this phase, transparency was no longer prioritized because the criteria for restriction became emergent properties of algorithmic models rather than fixed policies, which made public justification impractical without exposing proprietary logic or enabling adversarial manipulation. With dynamic, data-driven thresholds, shadow actions were no longer arbitrary but served as early interventions calibrated to evolving threat landscapes. The result was a feedback loop in which enforcement precision improved at the cost of explainability, redefining trust as an outcome of predictive accuracy rather than procedural clarity.

App Store Arbitrariness

Shadow banning in app stores is ethically unjustifiable because it enables unilateral, opaque enforcement by dominant platforms like Apple and Google, which can suppress apps without public justification or meaningful appeal. Even overt enforcement shows how much discretion the platforms hold: when Apple removed Fortnite in 2020 over payment policy violations, the decision was public but the process behind it offered minimal procedural transparency, and covert demotion offers even less. The mechanism operates through proprietary review systems that lack independent oversight, turning curated ecosystems into unaccountable gatekeepers. Beneath familiar concerns about fairness, the less obvious consequence is not censorship per se but the normalization of arbitrary authority masked as quality control.

Developer Vulnerability

Shadow banning erodes trust because small and nonprofit developers, such as those behind privacy-focused apps like Signal or third-party clients for alternative social platforms like Mastodon, depend heavily on app store visibility yet receive no notification or recourse when their discoverability is suppressed. The suppression occurs through algorithmic ranking and indexing decisions made internally by Google Play or Apple’s App Store teams, which function as invisible levers of market access. While users associate app stores with convenience, the underappreciated reality is that developers operate in a state of radical dependency, where commercial survival hinges on opaque, unchallengeable backend judgments.