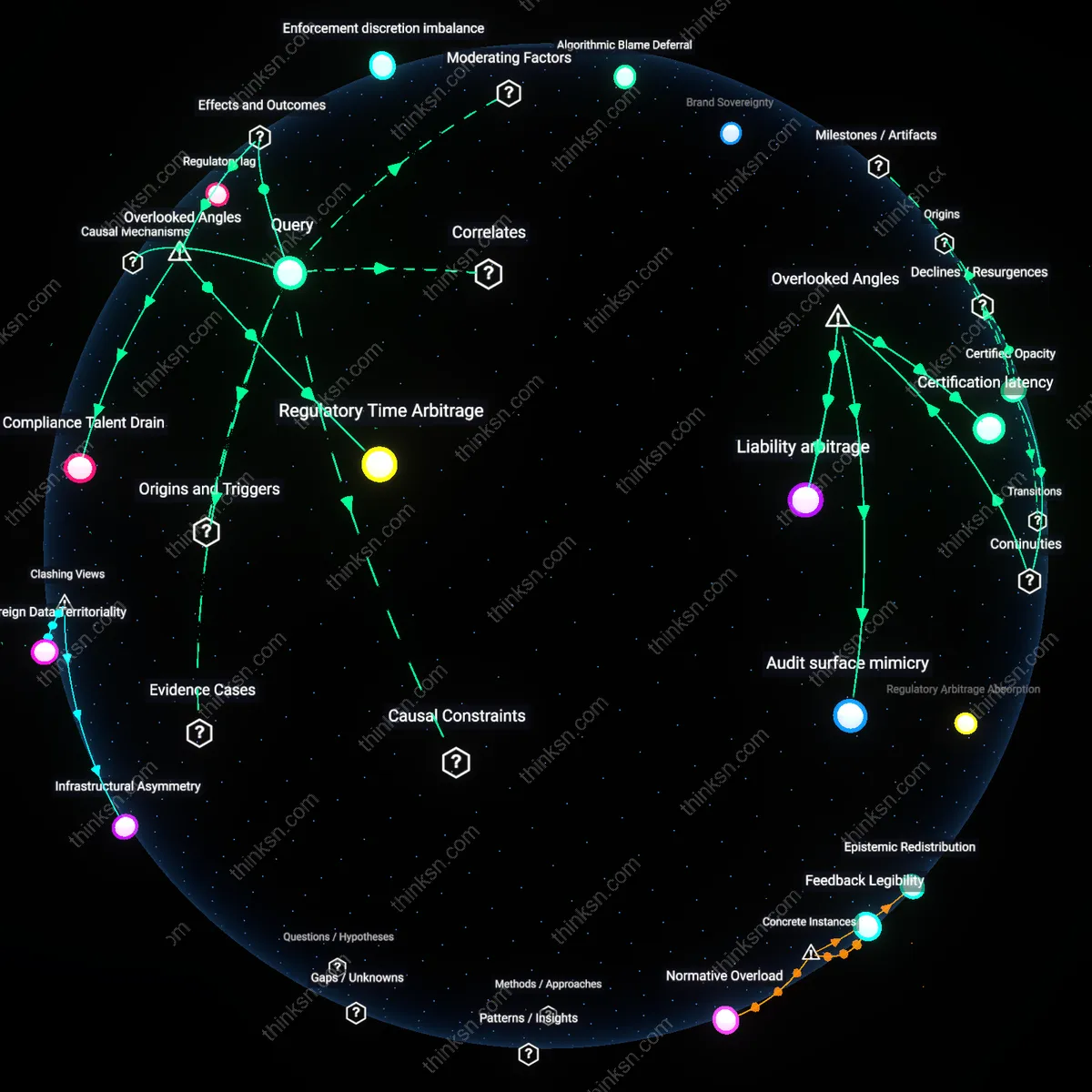

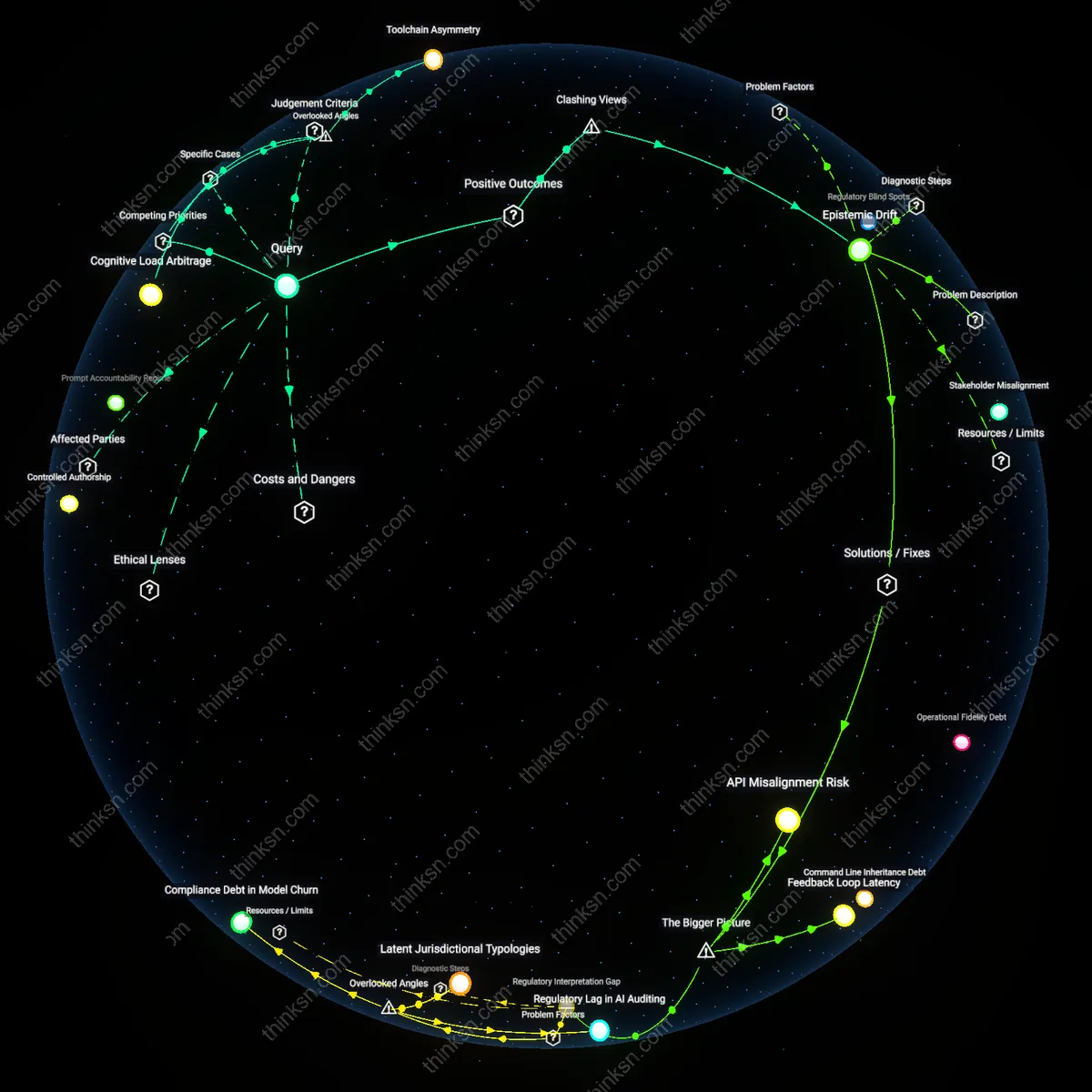

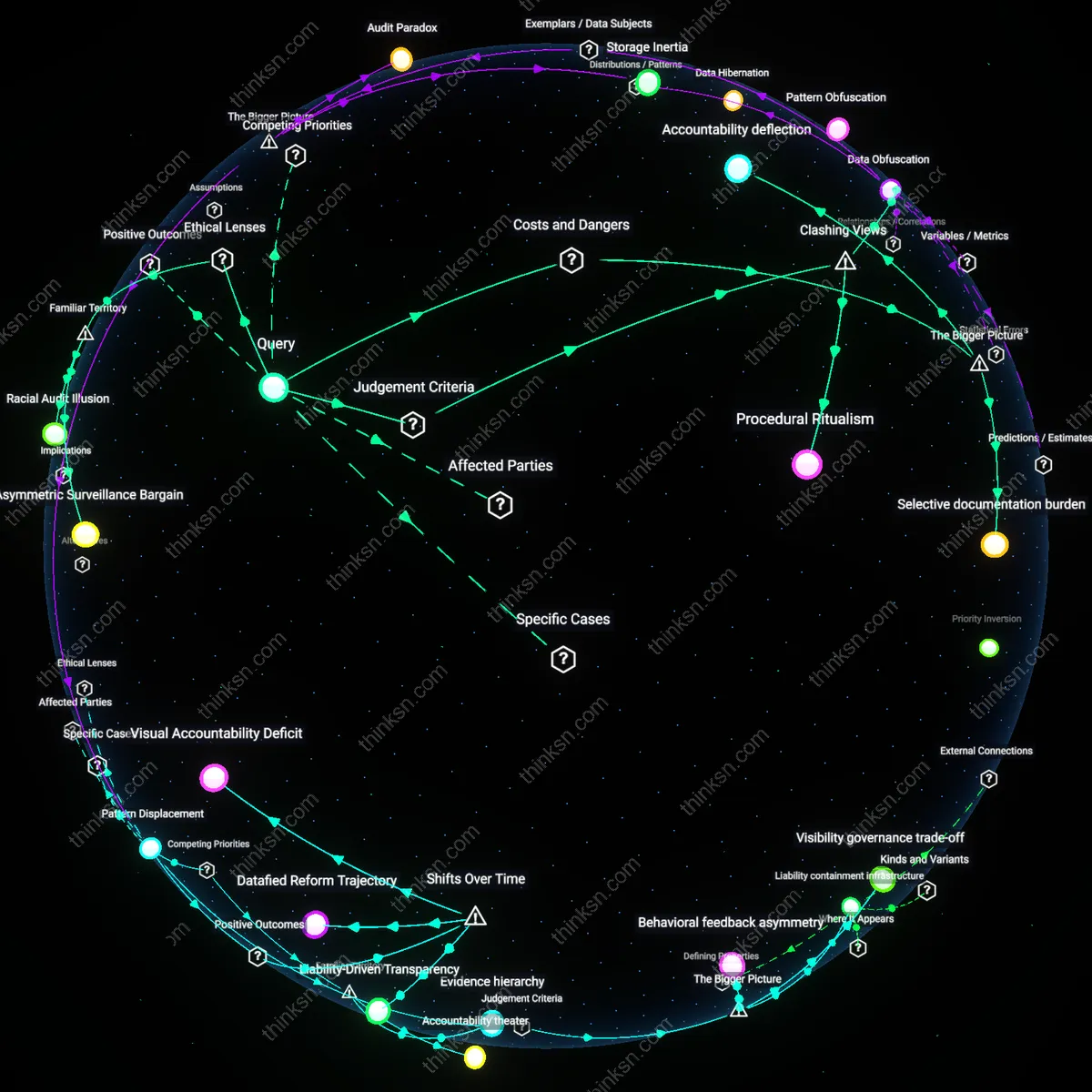

Why Do Biometric Rights Fizzle When Vendors Hide in the Dark?

Analysis reveals 12 key thematic connections.

Key Findings

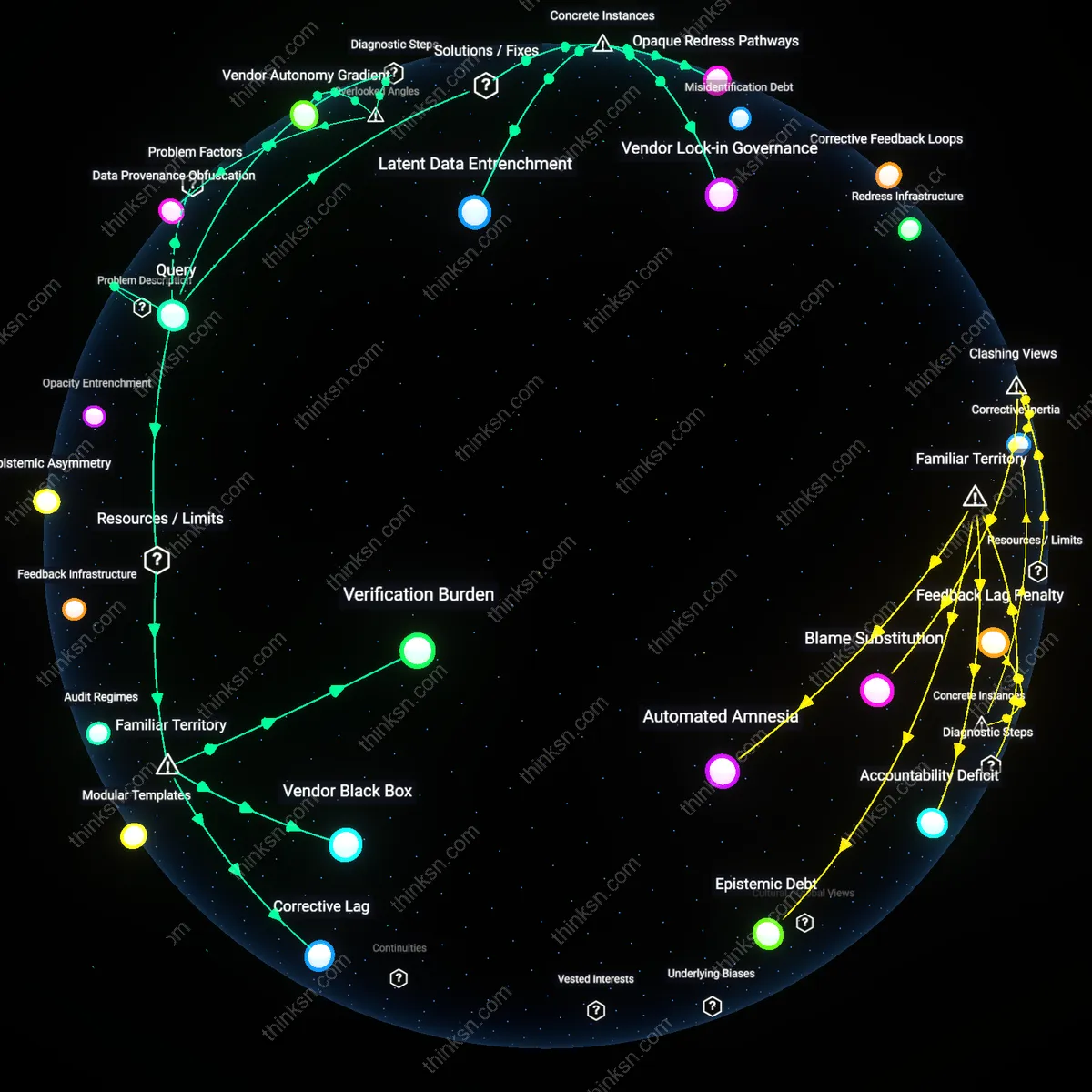

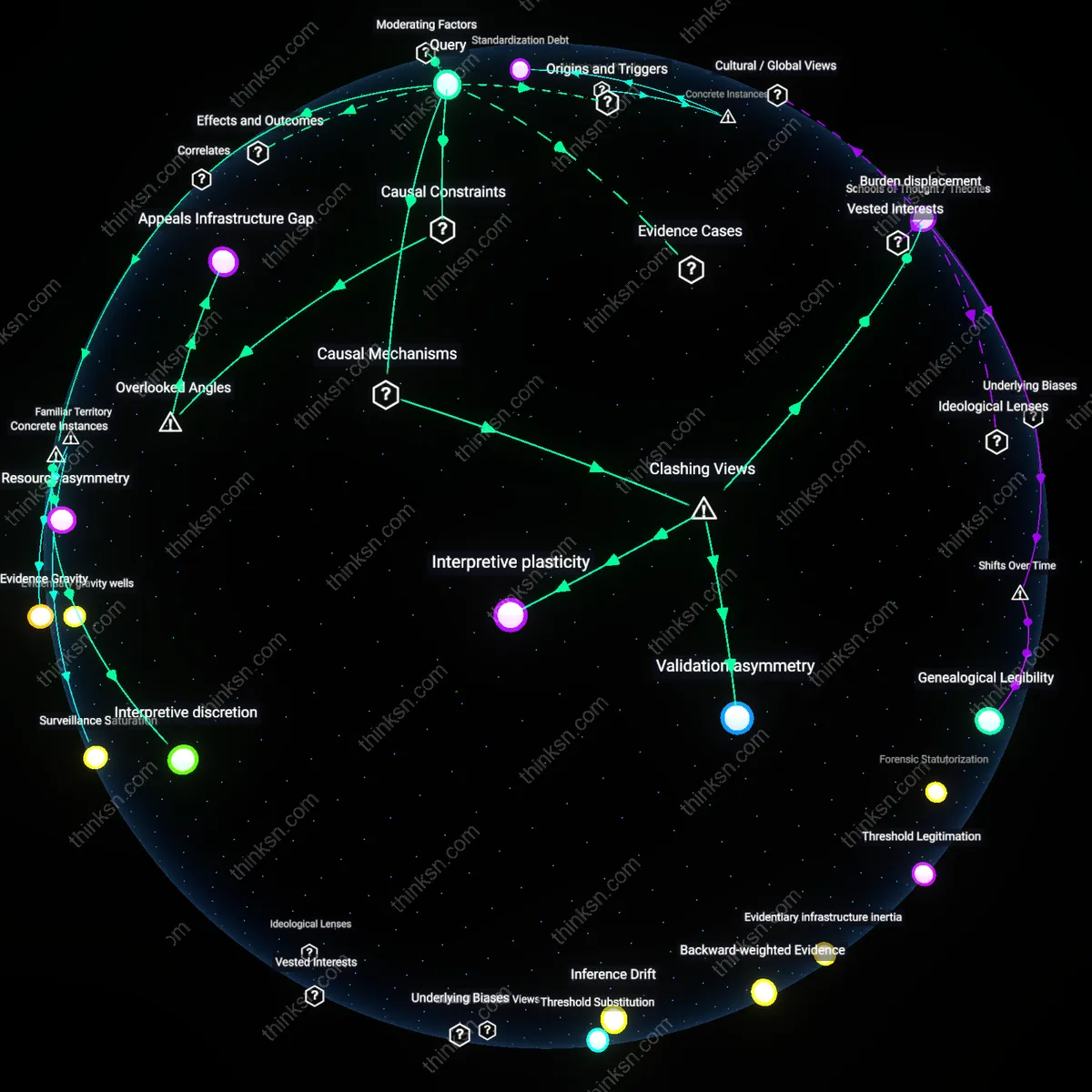

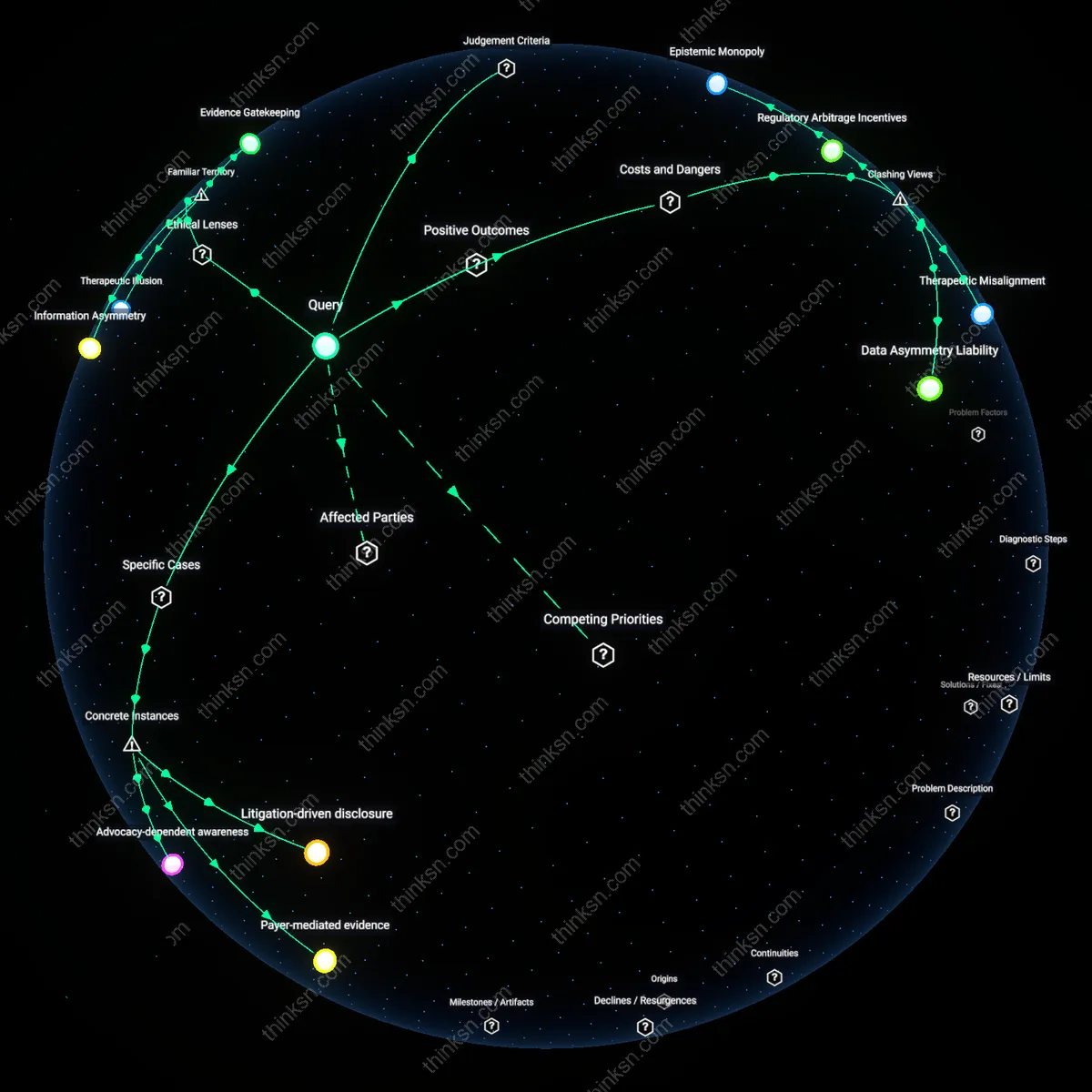

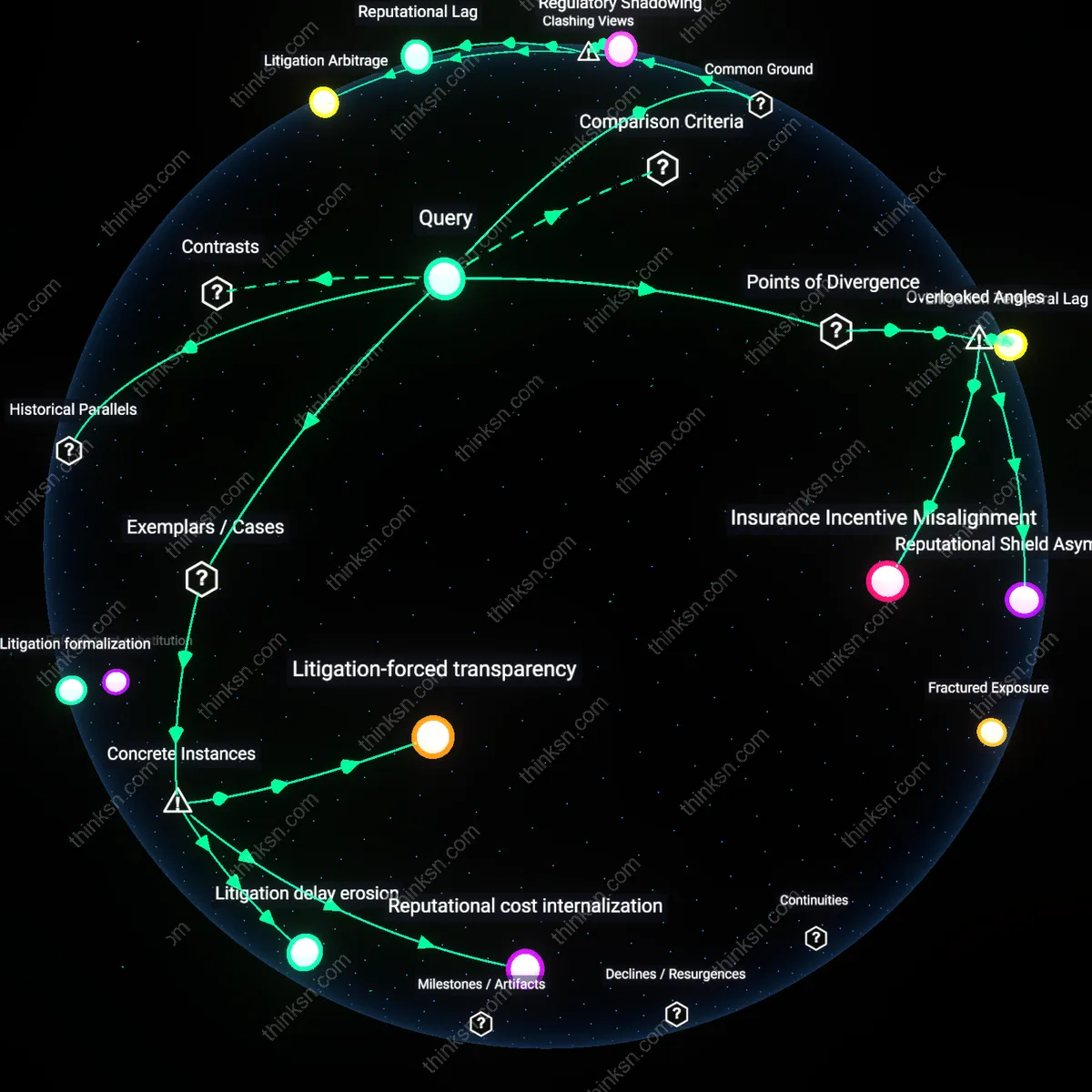

Epistemic Asymmetry

The right to correct inaccurate biometric data fails because the individual cannot independently verify the system's baseline against which corrections are assessed, since the vendor controls both data storage and the algorithms that interpret biometric inputs. Individuals lack access to the feature vectors or template generation processes used to encode their biometrics, rendering any dispute over accuracy speculative rather than evidentiary, and placing the burden of proof on the user without granting them tools to meet it. This creates a structural dependency where the vendor's definition of 'accuracy' becomes unchallengeable through technical argument, undermining the corrective right in practice. The non-obvious insight is that the failure lies not in refusal to correct, but in the impossibility of constructing a technically coherent challenge to the system’s output.

Opacity Entrenchment

Correction mechanisms fail because the vendor’s lack of transparency legally insulates it from accountability even when errors are demonstrated: courts and regulators tend to defer to proprietary claims over algorithmic processes. When a plaintiff cannot inspect the model or training data behind a facial recognition match, judges are often left to accept the vendor’s assertion of compliance on the strength of procedural checkboxes rather than functional validity, which lets erroneous determinations persist under the guise of technical legitimacy. This entrenches error within legally accepted frameworks, making transparency a precondition for correction that is systematically denied. The dissonance is that regulatory compliance can actively prevent redress by rewarding the appearance of governance without requiring operational truth.

Feedback Opaqueness

Biometric correction fails because the system does not reflect corrections back into the operational pipeline, as the vendor’s model retraining cycles are infrequent, undocumented, and decoupled from user-initiated updates. Even when a correction is accepted, it may only alter a metadata flag or raw data entry without reprocessing the entire biometric sample through the enrollment pipeline, leaving the operational template unchanged and error rates unaffected at scale. This creates a false equivalence between data modification and functional correction, where the interface promises resolution but the backend architecture nullifies it. The overlooked reality is that correction is treated as a recordkeeping act, not a system recalibration, rendering it functionally inert.
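The gap between a recordkeeping correction and a functional one can be sketched in a few lines. This is a minimal illustration under stated assumptions, not any vendor's actual pipeline: `derive_template` is a hypothetical stand-in (a plain hash) for proprietary template generation, and `BiometricRecord` and its methods are invented names.

```python
import hashlib
from dataclasses import dataclass


def derive_template(raw_sample: bytes) -> str:
    """Hypothetical stand-in for a vendor's template generation step."""
    return hashlib.sha256(raw_sample).hexdigest()


@dataclass
class BiometricRecord:
    raw_sample: bytes  # the stored capture
    template: str      # what the matcher actually compares against

    @classmethod
    def enroll(cls, raw_sample: bytes) -> "BiometricRecord":
        return cls(raw_sample, derive_template(raw_sample))

    def correct_record_only(self, new_sample: bytes) -> None:
        # Recordkeeping-style correction: the stored sample changes,
        # but the operational template is left untouched.
        self.raw_sample = new_sample

    def correct_and_reenroll(self, new_sample: bytes) -> None:
        # Functional correction: the sample is pushed back through enrollment.
        self.raw_sample = new_sample
        self.template = derive_template(new_sample)

    def template_is_current(self) -> bool:
        return self.template == derive_template(self.raw_sample)


record = BiometricRecord.enroll(b"blurry-capture")
record.correct_record_only(b"clean-capture")
print(record.template_is_current())  # False: the matcher still uses the old template

record.correct_and_reenroll(b"clean-capture")
print(record.template_is_current())  # True
```

A check like `template_is_current` is only possible for someone who can see both the stored sample and the template, which is the access the data subject lacks.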

Data Provenance Obfuscation

A private vendor’s opaque data integration pipeline breaks the traceability of biometric corrections back to original enrollment sources, preventing verification of whether the fix propagated system-wide. Because biometric records often fuse inputs from multiple ingestion points—kiosks, mobile apps, third-party contractors—the vendor’s internal data provenance architecture determines whether a correction applies to all derivative instances or only the queried node. This failure mode is invisible without access to lineage graphs, and most regulatory audits assume correction mechanisms are atomic, not distributed—a non-obvious rupture in accountability that undermines rights at scale.
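To make the lineage point concrete, the following sketch walks a toy lineage graph and lists the derived copies a correction never reached. The graph, the node names, and both helper functions are hypothetical; a real vendor's provenance model is not public and may look nothing like this.

```python
from collections import deque

# Hypothetical lineage: each key is a copy of the record,
# each value lists the copies derived from it.
LINEAGE = {
    "kiosk-enrollment": ["central-store"],
    "central-store": ["mobile-cache", "contractor-export"],
    "mobile-cache": [],
    "contractor-export": ["contractor-archive"],
    "contractor-archive": [],
}


def derived_copies(lineage, source):
    """Return every copy reachable from `source`, including itself (breadth-first)."""
    seen = {source}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def uncorrected_copies(lineage, source, corrected):
    """Copies downstream of `source` that the correction did not reach."""
    return sorted(derived_copies(lineage, source) - set(corrected))


stale = uncorrected_copies(
    LINEAGE,
    "kiosk-enrollment",
    corrected={"kiosk-enrollment", "central-store"},
)
print(stale)  # ['contractor-archive', 'contractor-export', 'mobile-cache']
```

An audit that queries only `central-store` would see the correction applied and stop there, while three derived copies still carry the old data.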

Temporal Policy Drift

A correction can be undone by retrospective model retraining. If the vendor's pipeline marks every input captured during a period of ‘low data quality’ as invalid in bulk, a corrected record from that period is dropped from the retraining set along with the rest. Vendors often reprocess entire datasets with new algorithms without announcing these sweeps, so a correction the user verified today could be erased tomorrow by a backend recalibration that no one outside the vendor sees. This temporal instability of data rights, governed by undocumented internal quality thresholds, is rarely considered in legal frameworks premised on static data states, and it creates a hidden layer of policy volatility beneath apparent compliance.
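A rough sketch of how such a sweep could discard a verified correction follows. Everything here is assumed for illustration: the `Sample` fields, the date window, and the `keep_verified` switch are hypothetical, and the source does not describe any vendor's actual retraining logic.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Sample:
    subject_id: str
    captured_on: date
    user_verified: bool  # True if the subject confirmed a correction


def retraining_set(samples, low_quality_windows, keep_verified=False):
    """Drop samples captured inside any flagged window.

    With keep_verified=False the sweep ignores user-verified corrections,
    which is the failure mode described above.
    """
    kept = []
    for s in samples:
        in_window = any(start <= s.captured_on <= end
                        for start, end in low_quality_windows)
        if in_window and not (keep_verified and s.user_verified):
            continue
        kept.append(s)
    return kept


samples = [
    Sample("A", date(2023, 3, 10), user_verified=True),
    Sample("B", date(2023, 3, 12), user_verified=False),
    Sample("C", date(2023, 6, 1), user_verified=False),
]
windows = [(date(2023, 3, 1), date(2023, 3, 31))]

print([s.subject_id for s in retraining_set(samples, windows)])
# ['C']
print([s.subject_id for s in retraining_set(samples, windows, keep_verified=True)])
# ['A', 'C']
```

Whether a verified correction survives comes down to one internal flag that the data subject can neither see nor set.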

Vendor Autonomy Gradient

The right to correction fails because contractually delegated operational autonomy allows vendors to define ‘accuracy’ algorithmically, not juridically—so long as final system performance metrics meet threshold obligations, internal inconsistencies are tolerated. Security-conscious clients often prioritize false-negative rates over individual data integrity, enabling vendors to absorb correction requests into noise thresholds rather than propagate them, effectively deprioritizing individual rights in favor of aggregate system behavior. This trade-off reflects a hidden hierarchy of priorities where fiduciary responsibility to the contracting institution overrides individual data subjects, a dependency rarely disclosed or contestable.
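The arithmetic behind absorbing individual errors into an aggregate threshold can be shown with invented numbers. The function name, the per-10,000 threshold format, and the figures below are all assumptions made for illustration; actual contract metrics vary and are not given in the source.

```python
def remaining_error_budget(total_attempts: int,
                           observed_errors: int,
                           max_errors_per_10k: int) -> int:
    """How many more errors could go unfixed while the aggregate threshold is still met."""
    allowed = total_attempts * max_errors_per_10k // 10_000
    return max(allowed - observed_errors, 0)


# Hypothetical contract: at most 100 errors per 10,000 attempts (1%).
print(remaining_error_budget(1_000_000, 8_500, 100))  # 1500
```

Under these assumed figures the vendor could leave 1,500 further individual errors unresolved and still report full compliance, because the obligation is measured on the population rather than on any one person's record.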

Opaque Redress Pathways

When the UK’s Metropolitan Police partnered with NEC to deploy live facial recognition on public streets, individuals flagged by faulty matches had no direct access to the biometric template or algorithmic logic used, nor any standardized channel to contest false positives—corrective action depended on third-party police discretion, not user agency. This lack of procedural clarity and direct interface between subject and system means errors persist not due to technical inevitability but institutional delegation, where the vendor's black-box infrastructure is shielded by operational secrecy and public authorities act as gatekeepers rather than facilitators. The non-obvious insight is that the failure of correction is not a data flaw but a structural disconnection between accountability and access.

Vendor Lock-in Governance

In 2019, the city of Chicago faced widespread criticism after Clearview AI scraped billions of facial images from public social media to build its law enforcement biometric database without consent or transparency, leaving individuals with no practical mechanism to audit or remove their data even as lawsuits alleged misuse. Because the biometric model was entangled with proprietary scraping pipelines and inference algorithms inaccessible to municipal auditors or citizens, the city lacked technical and contractual levers to enforce data correction even when legally mandated. The underappreciated reality here is that governance erodes not from malice but from dependency: public bodies outsource core identity systems without securing correction rights in service-level agreements.

Latent Data Entrenchment

India’s Aadhaar system, managed in part by Tata Consultancy Services and other private vendors, embedded inaccurate biometric enrollments, such as failed iris scans in rural areas, into national identification records. Citizens could file correction requests, but the centralized template update process often replicated errors because of version mismatches across distributed authentication nodes. Once flawed biometric templates propagated into backend authentication microservices used by banks and hospitals, correcting the source data did not cascade to all dependent systems, rendering individual fixes ineffective. What is overlooked is that correction fails not at input but at synchronization: biometric inaccuracies become structurally inertial once diffused across interdependent, loosely coupled infrastructures.
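The synchronization failure can be pictured as a version check across authentication nodes. The node names, version numbers, and helper function below are hypothetical and do not describe Aadhaar's actual architecture; the sketch only shows how a corrected source can coexist with nodes still serving an older template.

```python
def nodes_serving_stale_template(source_version: int, node_versions: dict) -> list:
    """Names of nodes whose cached template version lags the corrected source."""
    return sorted(name for name, version in node_versions.items()
                  if version < source_version)


# The source record was corrected and is now at version 4.
node_versions = {
    "bank-auth-1": 3,
    "bank-auth-2": 4,
    "hospital-auth": 2,
}
print(nodes_serving_stale_template(4, node_versions))
# ['bank-auth-1', 'hospital-auth']
```

From the citizen's side the correction request shows as completed, yet two of the three nodes would still authenticate against the flawed enrollment.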

Verification Burden

Individuals must independently prove biometric inaccuracies to opaque private systems lacking accessible audit mechanisms. Because users lack direct access to enrollment logs, algorithmic error logs, or raw biometric templates stored by vendors like Clearview AI or NEC Corporation, they cannot produce evidence regulators or courts recognize as valid—rendering the right to correction unactionable despite legal recognition. This reflects the hidden procedural cost of challenging data in systems designed for one-way authentication, not two-way verification, exposing how user rights dissolve when proof requirements exceed available tools.

Vendor Black Box

Private biometric vendors such as IDEMIA or Thales deploy proprietary matching algorithms and data-processing pipelines that are legally protected as trade secrets, preventing public or regulatory inspection. As a result, individuals and oversight bodies cannot trace how a fingerprint or facial scan was misclassified—whether due to poor image quality, biased training data, or software drift—making targeted corrections impossible even if inaccuracies are suspected. This opacity converts technical secrecy into operational immunity, a dynamic rarely challenged because public discourse assumes data errors are user-side, not system-side.

Corrective Lag

Even when a private vendor acknowledges a biometric error, the remediation process (re-enrollment, template regeneration, system-wide sync) runs on internal IT cycles that prioritize infrastructure stability over individual timelines. A facial recognition error in a corporate access system built on a service such as Amazon Rekognition might take weeks to propagate across distributed endpoints because of staged update protocols, and the individual remains misidentified throughout. This mechanical inertia shows how real-time harms outpace slow, resource-bounded backend operations, a bottleneck unacknowledged in rights frameworks built for static records rather than continuously running identification systems.
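How staged remediation adds up to weeks of exposure can be sketched with a simple schedule calculation. The stage names follow the steps named above, but the durations, the extra canary stage, and the `propagation_schedule` helper are assumptions chosen for illustration, not measured figures from any vendor.

```python
from datetime import datetime, timedelta


def propagation_schedule(acknowledged_at, stage_delays):
    """Map each stage to its completion time, assuming stages run one after another."""
    finished_at = acknowledged_at
    schedule = {}
    for stage, delay in stage_delays:
        finished_at += delay
        schedule[stage] = finished_at
    return schedule


# Hypothetical staged rollout after the vendor acknowledges the error.
stages = [
    ("re-enrollment", timedelta(days=2)),
    ("template regeneration", timedelta(days=1)),
    ("canary endpoints", timedelta(days=7)),
    ("all endpoints", timedelta(days=14)),
]
acknowledged = datetime(2024, 1, 1)
schedule = propagation_schedule(acknowledged, stages)

exposure = schedule["all endpoints"] - acknowledged
print(exposure.days)  # 24
```

In this assumed schedule the person stays misidentified at some endpoints for 24 days after the error is acknowledged, even though no single stage looks unreasonable on its own.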