Is AI Loan Underwriting a Fair Trade-off Between Speed and Discrimination?

Analysis reveals six key thematic connections.

Key Findings

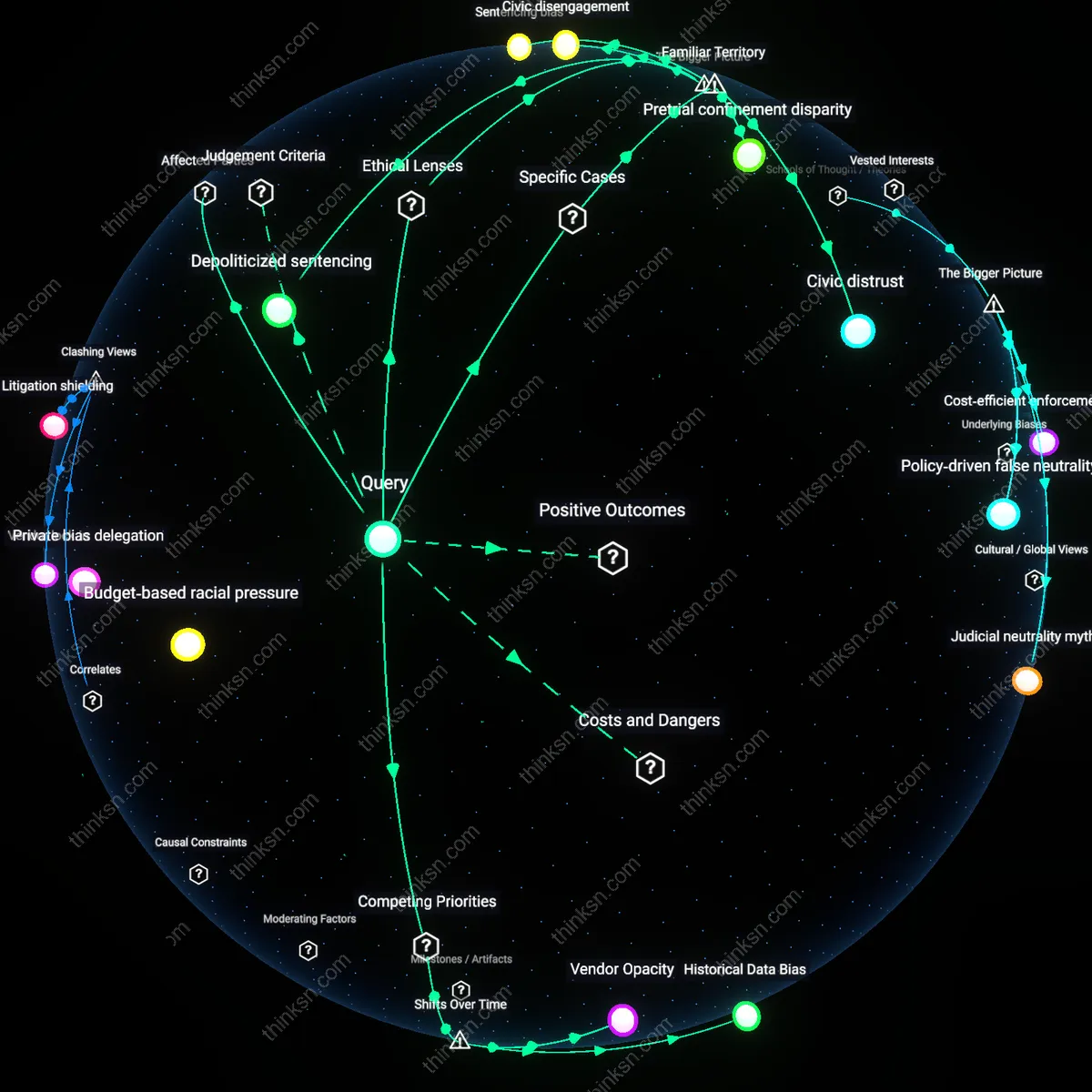

Normative Inertia

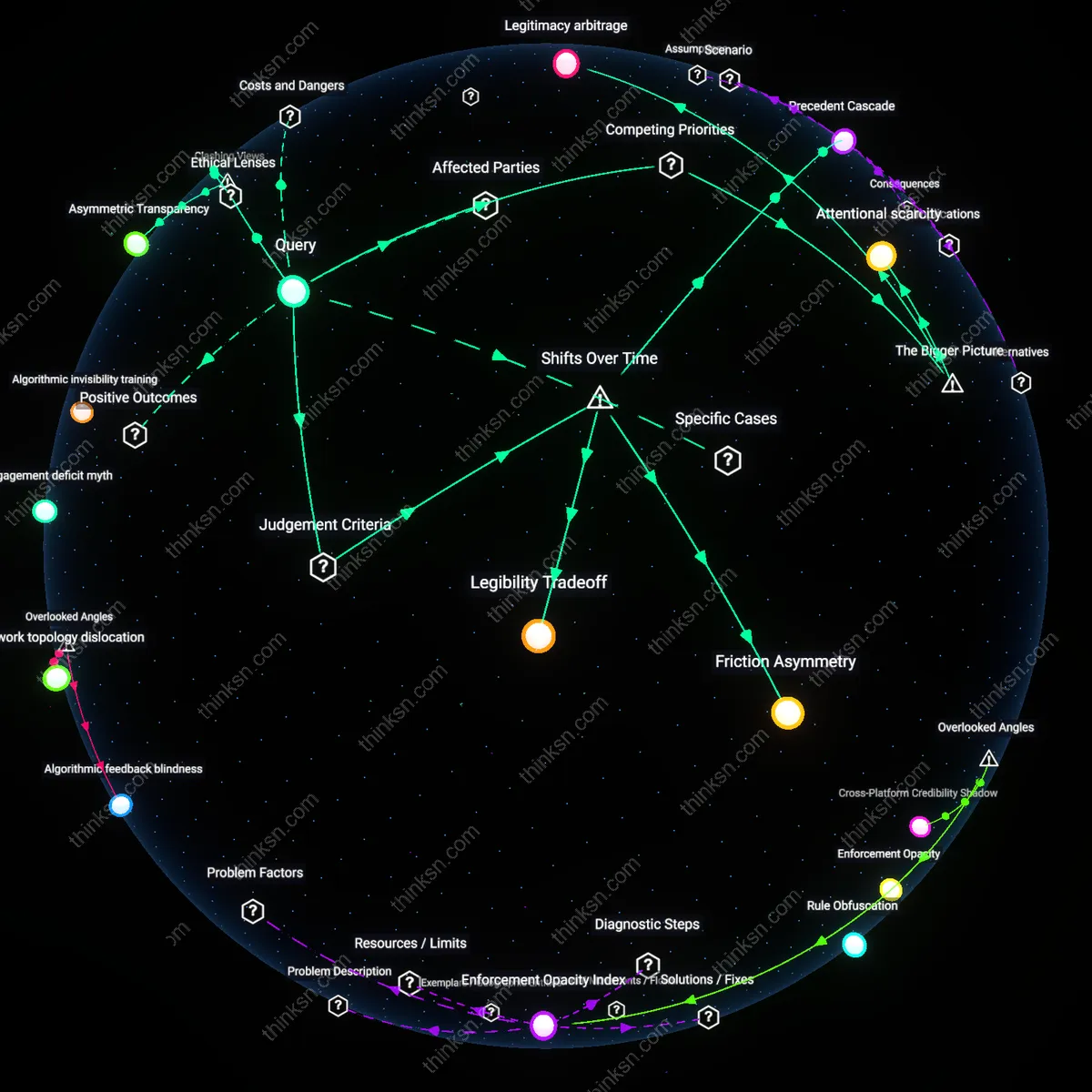

No. The use of AI in automated loan underwriting entrenches historical inequities by codifying past lending patterns into algorithmic rule systems, which then reproduce disparities under the guise of technical neutrality. Banks, fintech platforms, and regulators rely on data shaped by decades of discriminatory housing and credit policy, such as redlining and income verification practices that recognize only formal employment. As a result, AI models can systematically disadvantage Black, Latino, and gig-economy applicants even when 'fairness' metrics are optimized. The mechanism is statistical mimicry: models learn not just risk signals but the social hierarchies embedded in them. Its significance lies in revealing how efficiency serves as cover for unexamined moral deference to legacy systems. The non-obvious insight is that the more accurately the AI replicates past decisions, the deeper the entrenchment of exclusion, which makes high performance itself a danger.

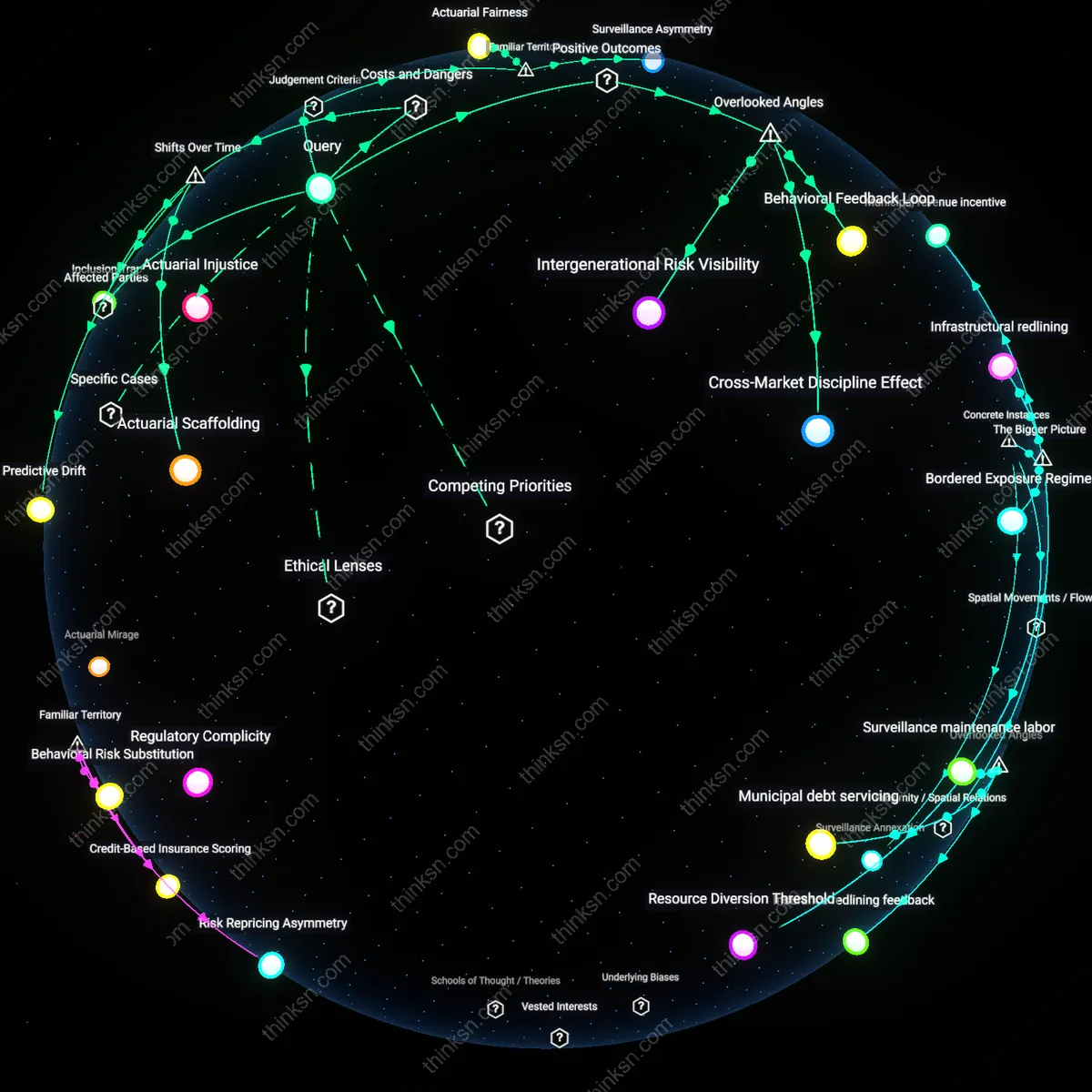

Epistemic Opacity

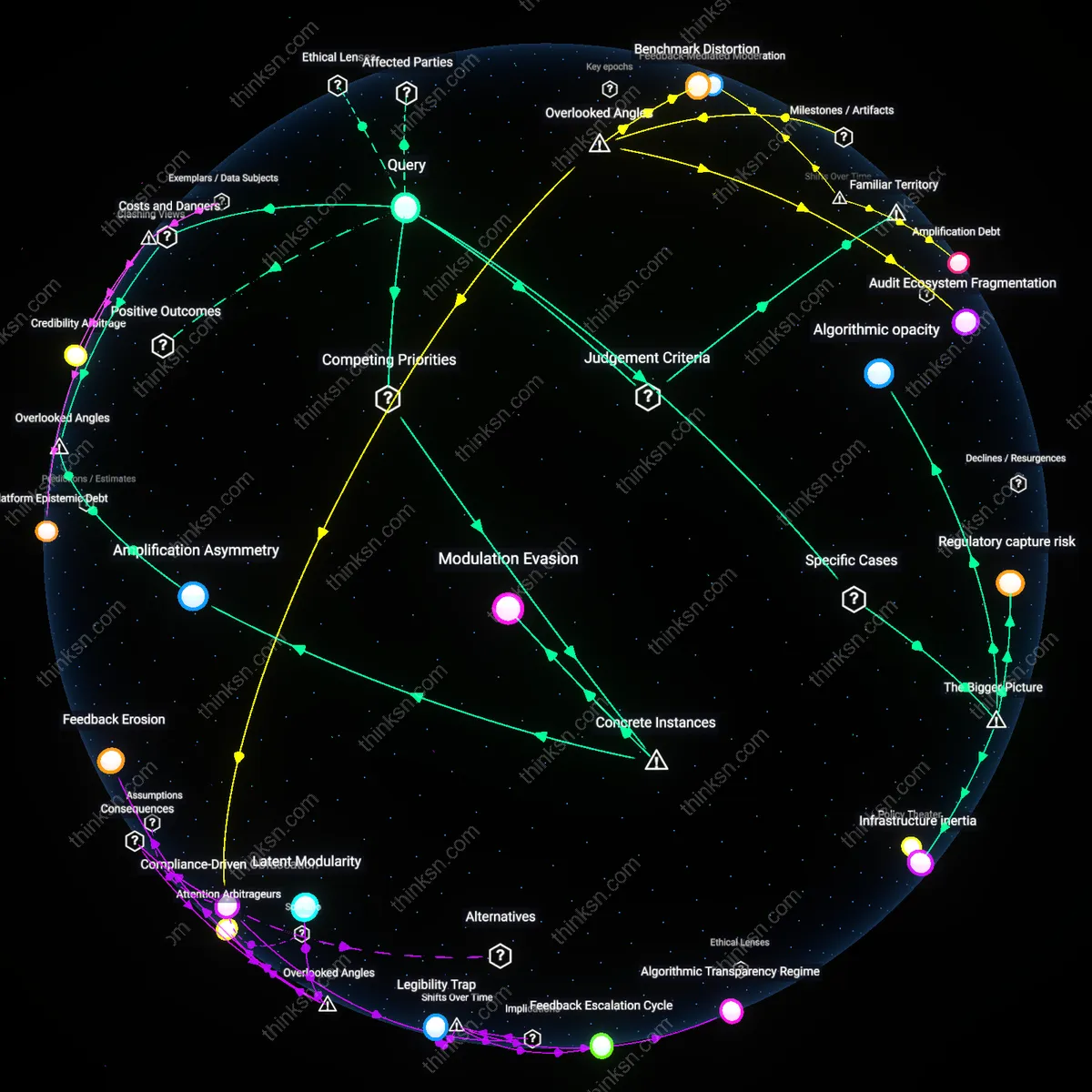

No, because the primary risk of AI underwriting is not overt bias but the erosion of accountable reasoning in credit denial. Applicants, regulators, and even auditors cannot trace or contest the logic behind decisions when model architectures are proprietary and feature engineering is obfuscated. Human underwriters can articulate reasons for rejection based on verifiable criteria. Machine learning systems, especially deep learning models used by firms like Upstart or ZestFinance, generate decisions through high-dimensional interactions among thousands of variables, including behavioral proxies like device type or browsing speed, which evade scrutiny under current disclosure laws. The result is a systemic shift from justification-based governance to outcome-validated black boxes, and its significance is the quiet dismantling of due process in financial inclusion. The dissonance lies in recognizing that transparency efforts fail not from lack of will, but because interpretability is structurally incompatible with the complexity that enables AI's touted efficiency.

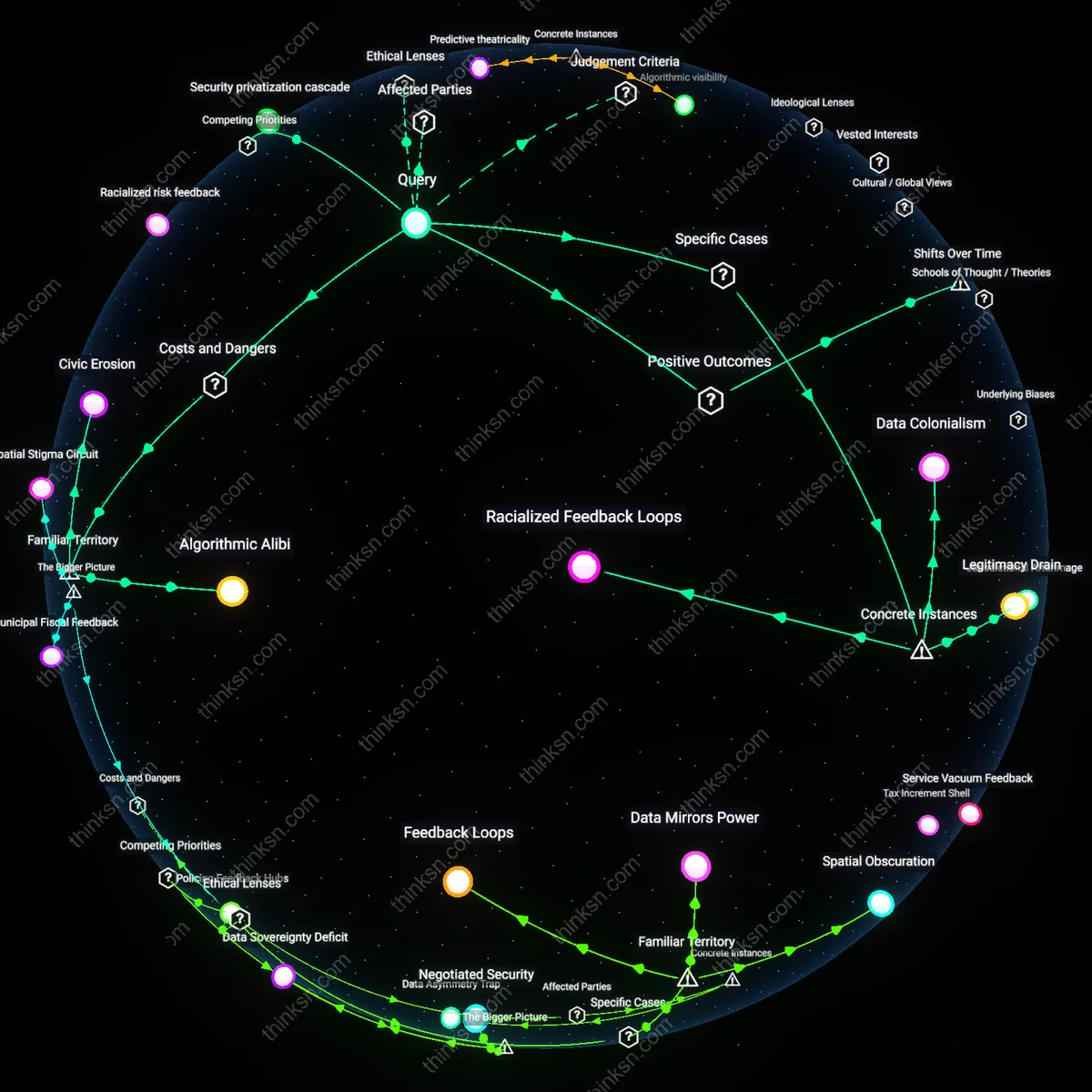

Feedback Abyss

No, because AI underwriting systems generate self-reinforcing feedback loops that degrade the very data they depend on: biased rejections reduce future applications from marginalized groups, shrinking their representation in training data and amplifying exclusion over time. For example, when an AI model denies credit to rural or low-income applicants slightly more often, fewer such applicants re-enter the pipeline, so subsequent models perceive them as rarer and riskier. This dynamic has been reported in subprime auto lending algorithms used by major lenders in the U.S. South. The mechanism is data feedback erosion in closed-loop decision systems, and its significance is the creation of invisible, automated market thinning that mimics natural market behavior while being entirely artifact-driven. The challenge to the dominant view is that the system does not merely reflect bias; it actively constructs new categories of undesirability through its own operational success.
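The shrinkage dynamic described above can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not a model of any real lender: the group labels, the approval rates, and the `discouragement` parameter (the fraction of denied applicants who never reapply) are all hypothetical. It captures only the loss of representation in the applicant pool, not the retraining step that would lower approval rates further.

```python
def simulate_feedback(rounds=10, approve_a=0.60, approve_b=0.55, discouragement=0.5):
    """Toy closed-loop model of applicant-pool thinning.

    All parameters are illustrative assumptions:
    - approve_a / approve_b: fixed approval rates for two hypothetical groups
    - discouragement: share of denied applicants who drop out permanently
    Returns group b's share of the applicant pool at the start of each round.
    """
    applicants = {"a": 1000.0, "b": 1000.0}
    approve = {"a": approve_a, "b": approve_b}
    shares = []
    for _ in range(rounds):
        total = applicants["a"] + applicants["b"]
        shares.append(applicants["b"] / total)
        for group in applicants:
            denied = applicants[group] * (1 - approve[group])
            # Denied applicants who give up never re-enter the pipeline.
            applicants[group] -= discouragement * denied
    return shares


shares = simulate_feedback()
print([round(s, 3) for s in shares])
```

With the default values, a five-point gap in approval rates is enough to make group `b`'s share of applicants fall in every round, even though nothing in the sketch assumes that group is a worse credit risk.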

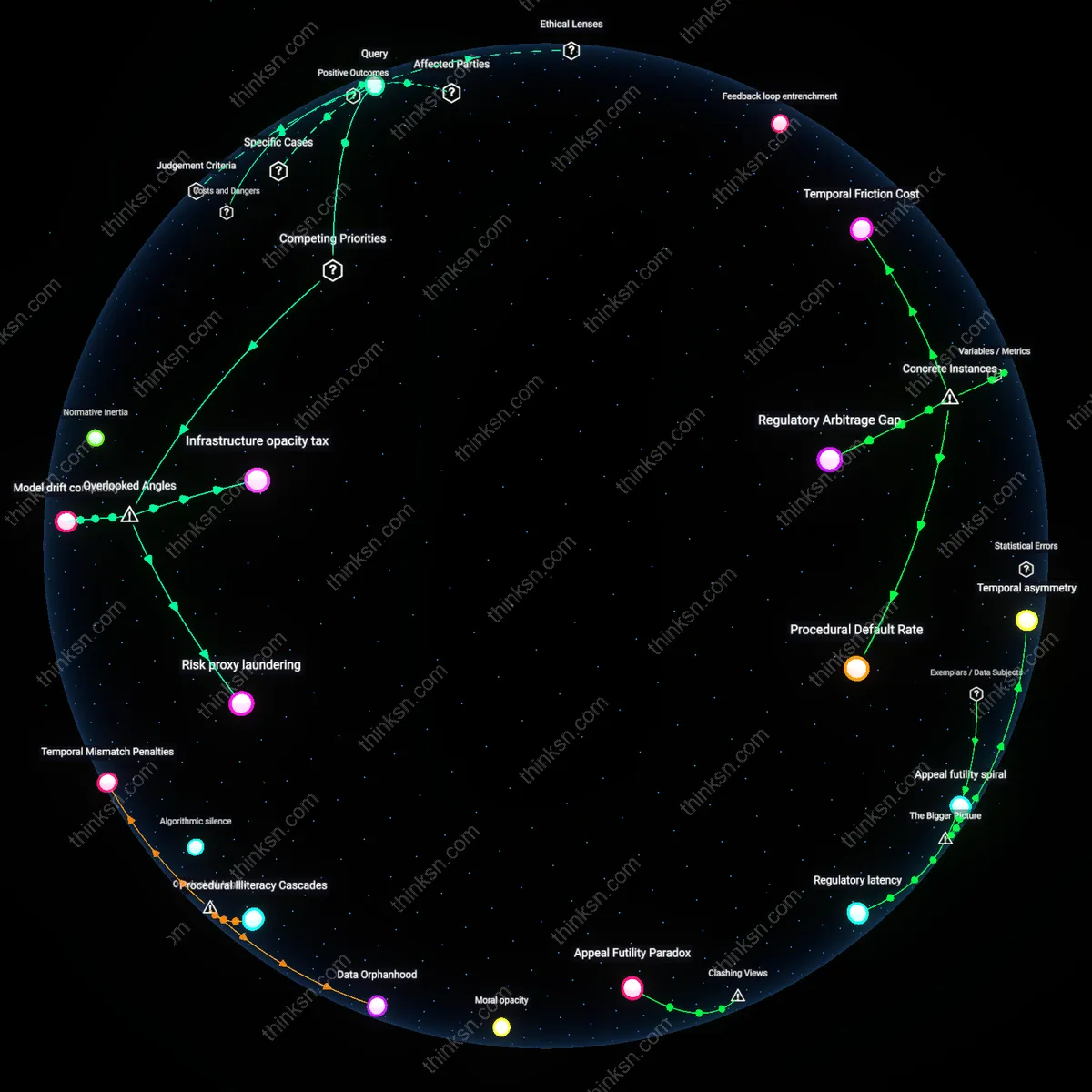

Model Drift Complicity

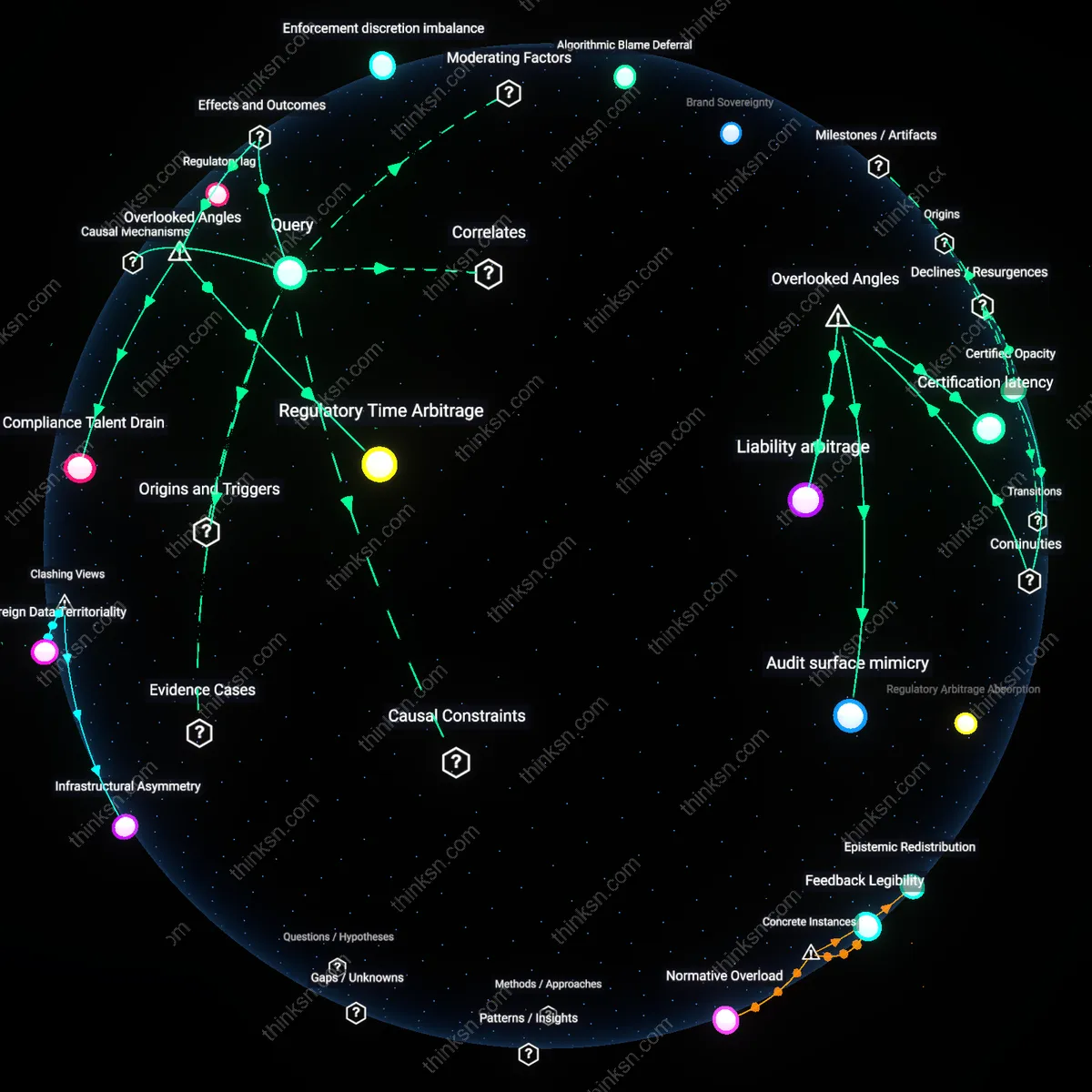

The use of AI in automated loan underwriting cannot be ethically justified because institutional actors systematically ignore model drift, and that neglect amounts to active complicity in discriminatory outcomes: ongoing accuracy decay disproportionately harms marginalized borrowers over time. Regulatory reliance on static validation cycles lets financial institutions outsource ethical accountability to outdated performance benchmarks. Meanwhile, learning algorithms silently shift decision thresholds in response to macroeconomic feedback loops, such as regional unemployment spikes, that correlate with demographic variables. This dynamic is overlooked because most bias audits focus on initial training data rather than longitudinal decision erosion, masking how technical neglect functions as a covert mechanism of exclusion.
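A longitudinal audit of the kind this argument calls for can be sketched as a per-period comparison of approval rates, rather than a single check at validation time. The example below is a hedged illustration: the record layout, the group labels, the `make_rows` helper, and the sample figures are hypothetical, and the 0.8 threshold borrows the four-fifths heuristic from US employment-selection guidance as an assumption, not as a lending standard.

```python
from collections import defaultdict


def approval_ratio_by_period(decisions, protected="b", reference="a"):
    """Ratio of protected-group to reference-group approval rates per period.

    decisions: iterable of (period, group, approved) tuples (hypothetical layout).
    Returns {period: ratio}, or None where a rate cannot be computed.
    """
    # period -> group -> [approved_count, total_count]
    counts = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for period, group, approved in decisions:
        cell = counts[period][group]
        cell[0] += int(approved)
        cell[1] += 1

    ratios = {}
    for period in sorted(counts):
        prot = counts[period][protected]
        ref = counts[period][reference]
        if prot[1] == 0 or ref[1] == 0 or ref[0] == 0:
            ratios[period] = None  # group missing, or no reference approvals
            continue
        ratios[period] = (prot[0] / prot[1]) / (ref[0] / ref[1])
    return ratios


def make_rows(period, group, approved, total):
    """Hypothetical helper: expand counts into individual decision records."""
    return [(period, group, i < approved) for i in range(total)]


# Synthetic data: the gap is modest at validation time and widens a year later.
decisions = (
    make_rows("2023Q1", "a", 60, 100) + make_rows("2023Q1", "b", 55, 100)
    + make_rows("2024Q1", "a", 60, 100) + make_rows("2024Q1", "b", 42, 100)
)
ratios = approval_ratio_by_period(decisions)
flagged = [p for p, r in ratios.items() if r is not None and r < 0.8]
print(ratios, flagged)
```

A model that passes this check in the first period and fails it in a later one is the case a static validation cycle would miss.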

Infrastructure Opacity Tax

AI-driven underwriting embeds ethical compromises not through overt bias but by concentrating decision-making opacity within the third-party cloud and API infrastructures that smaller lenders use, where audit access is restricted by proprietary barriers and computational cost. Community banks and credit unions relying on off-the-shelf AI platforms from firms like Feedzai or Upstart cannot inspect or modify the underlying inference logic, so they are forced to accept adverse outcomes as operational defaults. In effect, they pay an 'opacity tax' that transfers ethical risk downstream. This dependency is rarely considered in fairness debates, which assume direct control over models. In reality, the physical and legal layers of AI deployment infrastructure dictate whose values get encoded and whose are overruled.

Risk Proxy Laundering

Automated underwriting systems fail ethically not because they use biased data, but because they institutionalize the laundering of illicit risk proxies through legally permissible variables. Inputs such as geocoded mobile app usage or utility payment histories are functionally inseparable from protected attributes yet shielded by regulatory blind spots. Fintech lenders like Affirm or Branch use behavioral telemetry that, while compliant on paper, reconstructs socioeconomic vulnerability through chains of indirect measurement, allowing discrimination to persist under the guise of innovation. This laundering process is structurally ignored because regulators focus on input variables rather than the synthetic composites generated within latent model spaces, where proxies are reborn as 'neutral' signals.
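One simple way to make the proxy problem concrete is to ask how much better a protected attribute can be guessed once a supposedly neutral variable is known. The sketch below is an illustration under stated assumptions, not an established regulatory test: the function name `proxy_strength`, the zip-code feature, and the synthetic counts are all hypothetical. The same check could in principle be applied to a binned model score or a composite feature, which is where the paragraph above locates the real blind spot.

```python
from collections import Counter, defaultdict


def proxy_strength(feature_values, protected_values):
    """Compare two naive guessers of the protected attribute.

    baseline: always guess the most common protected value overall.
    with_feature: guess the most common protected value within each feature value.
    Returns (baseline_accuracy, with_feature_accuracy).
    """
    n = len(protected_values)
    baseline = Counter(protected_values).most_common(1)[0][1] / n

    by_value = defaultdict(Counter)
    for feature, protected in zip(feature_values, protected_values):
        by_value[feature][protected] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_value.values())
    return baseline, correct / n


# Synthetic example: a two-valued "neutral" feature that tracks group membership.
zip_codes = ["z1"] * 50 + ["z2"] * 50
groups = ["a"] * 45 + ["b"] * 5 + ["a"] * 10 + ["b"] * 40

baseline, with_feature = proxy_strength(zip_codes, groups)
print(baseline, with_feature)
```

A large gap between the two accuracies suggests the variable carries much of the protected attribute's information, even though the attribute itself never appears among the model's inputs.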