Algorithmic audibility

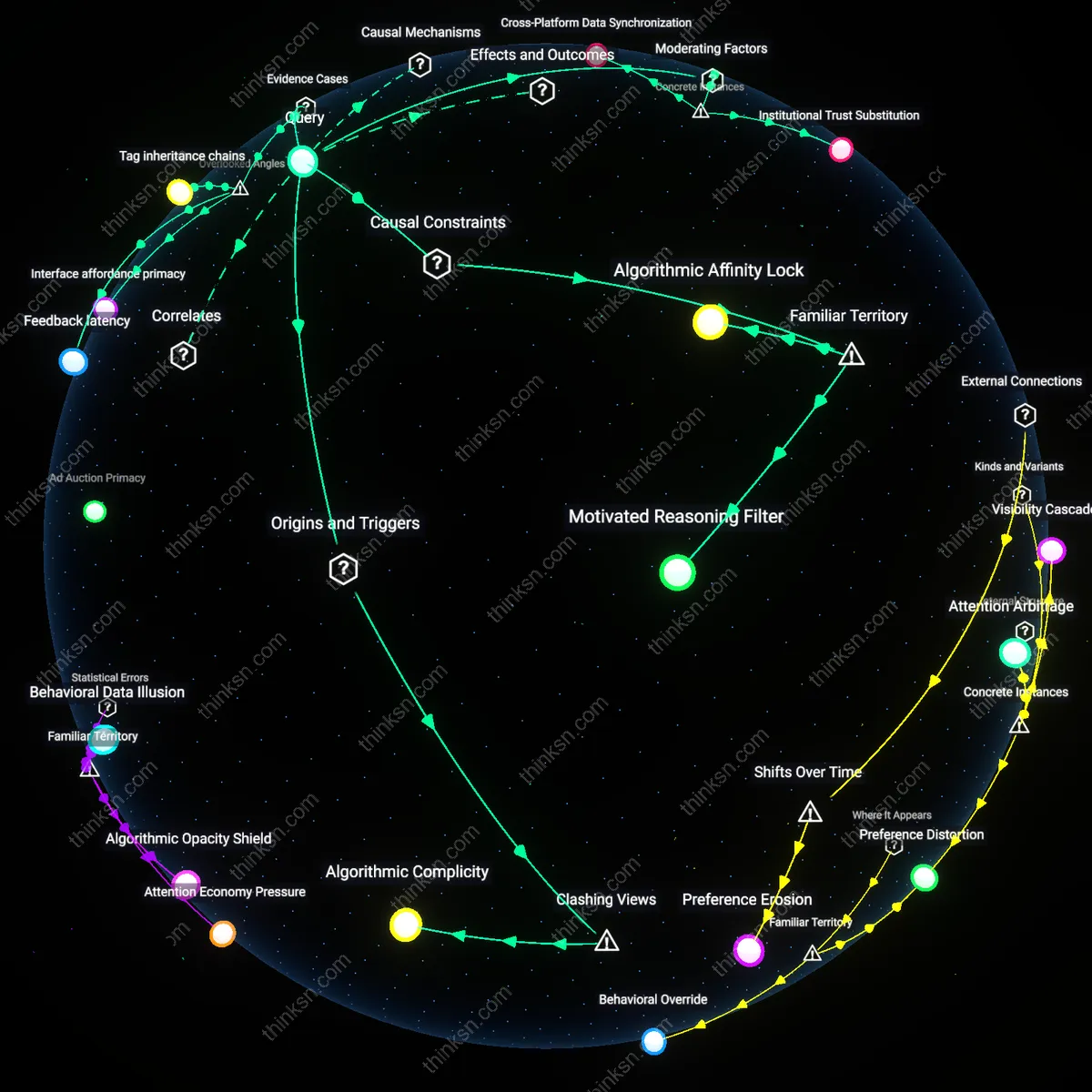

Platforms' acoustic optimization incentives render certain vocal timbres, regional accents, and non-normative speech patterns less detectable to automated recommendation systems, which prioritize audio features such as speech clarity, tempo, and keyword density over semantic or cultural richness. The result is a hidden filter that acts not on content but on sound itself: voices are systematically suppressed not because they are controversial but because they do not register efficiently in machine-learned models trained on dominant linguistic norms. Most analyses focus on the visibility or discoverability of content, yet the pre-processing layer of audio feature extraction operates as a perceptual gatekeeper long before any video is ranked or recommended. This mechanistic silencing remains invisible even to the creators it affects, who alter how they speak before they know what they are being judged on.

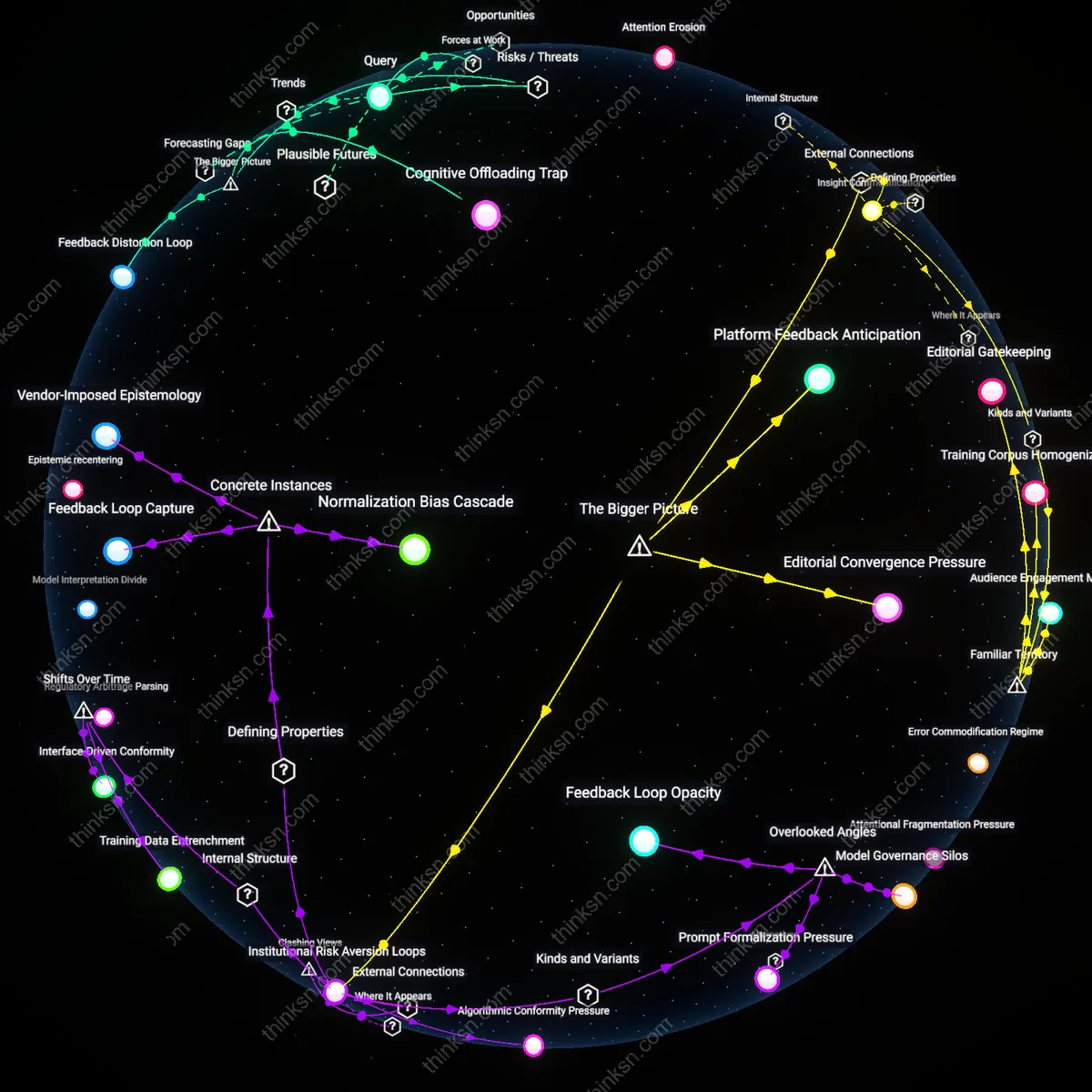

Creative latency debt

Creators who iteratively adjust their vocal delivery (pitch, pacing, emotional valence) to match perceived algorithmic preferences accumulate an invisible cost in delayed artistic development: time spent mastering algorithmic mimicry displaces time for narrative experimentation, skill diversification, or audience intimacy. This deferred creative agency manifests not as immediate censorship but as a gradual erosion of stylistic risk-taking across cohorts of emerging filmmakers, podcasters, and educators. Standard critiques emphasize content suppression or homogenization, yet they overlook how the feedback loop between performance and platform reward reshapes human development trajectories. That loop disadvantages those who can least afford to lose income during periods of artistic uncertainty, and so reinforces socioeconomic stratification in voice representation under the guise of engagement optimization.

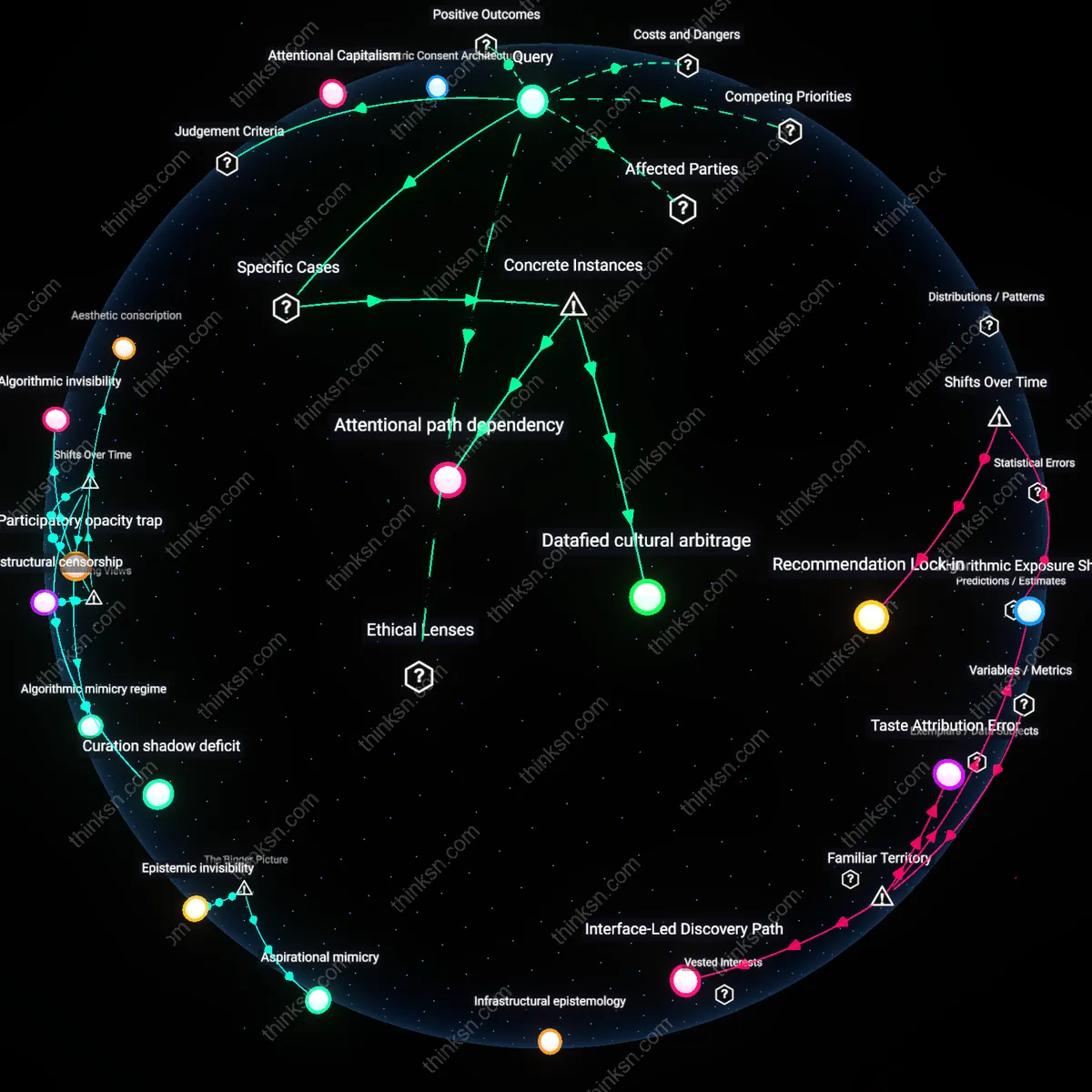

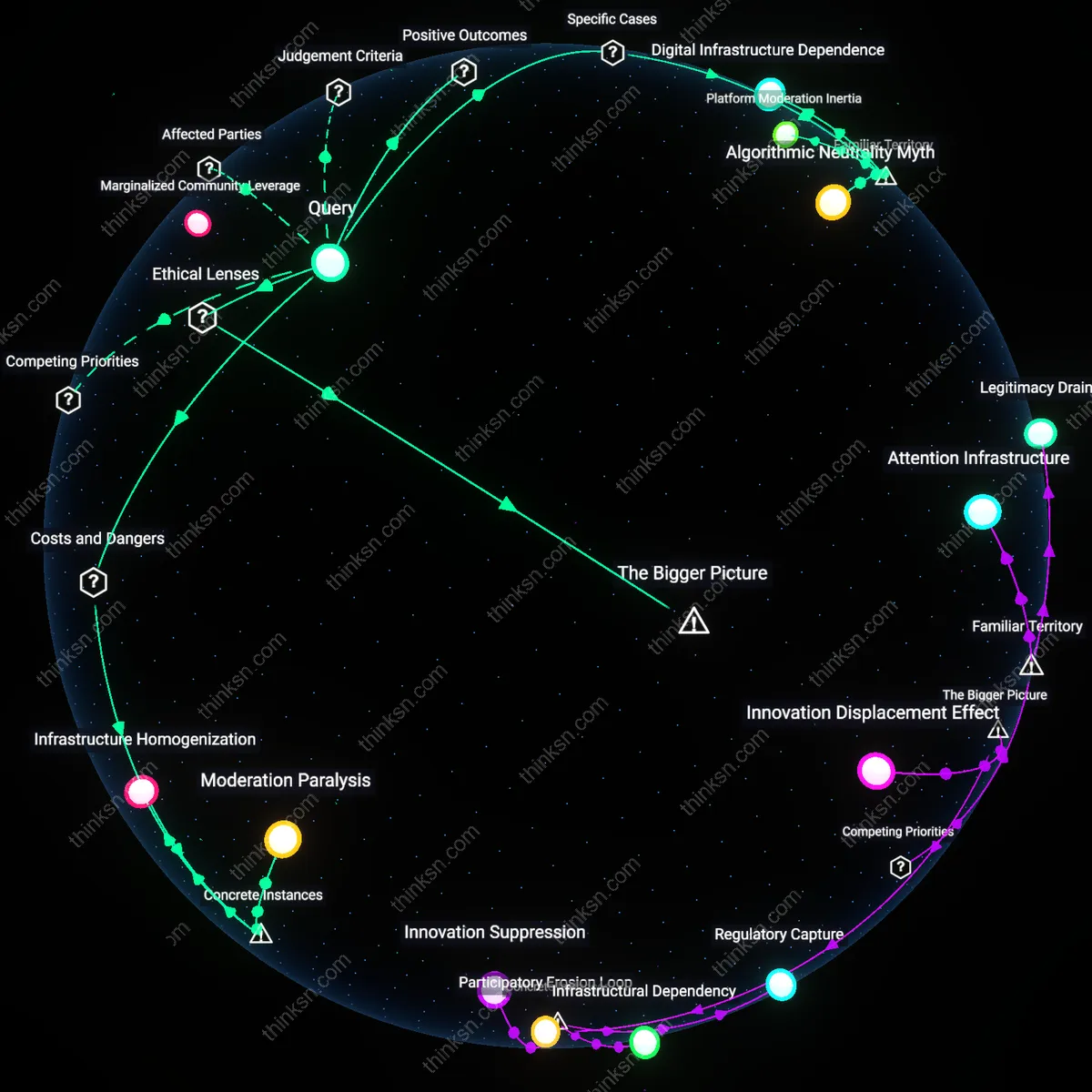

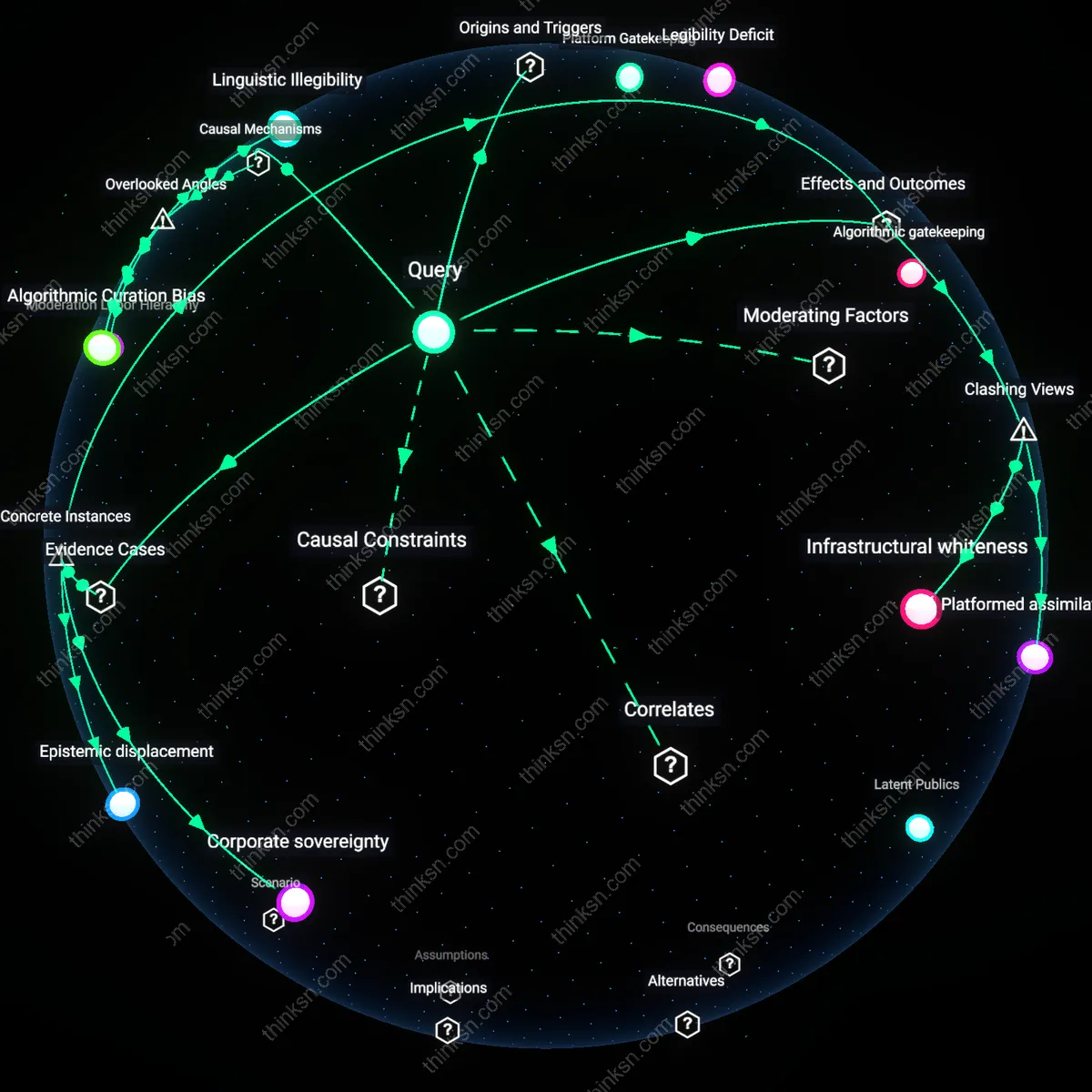

Infrastructural epistemology

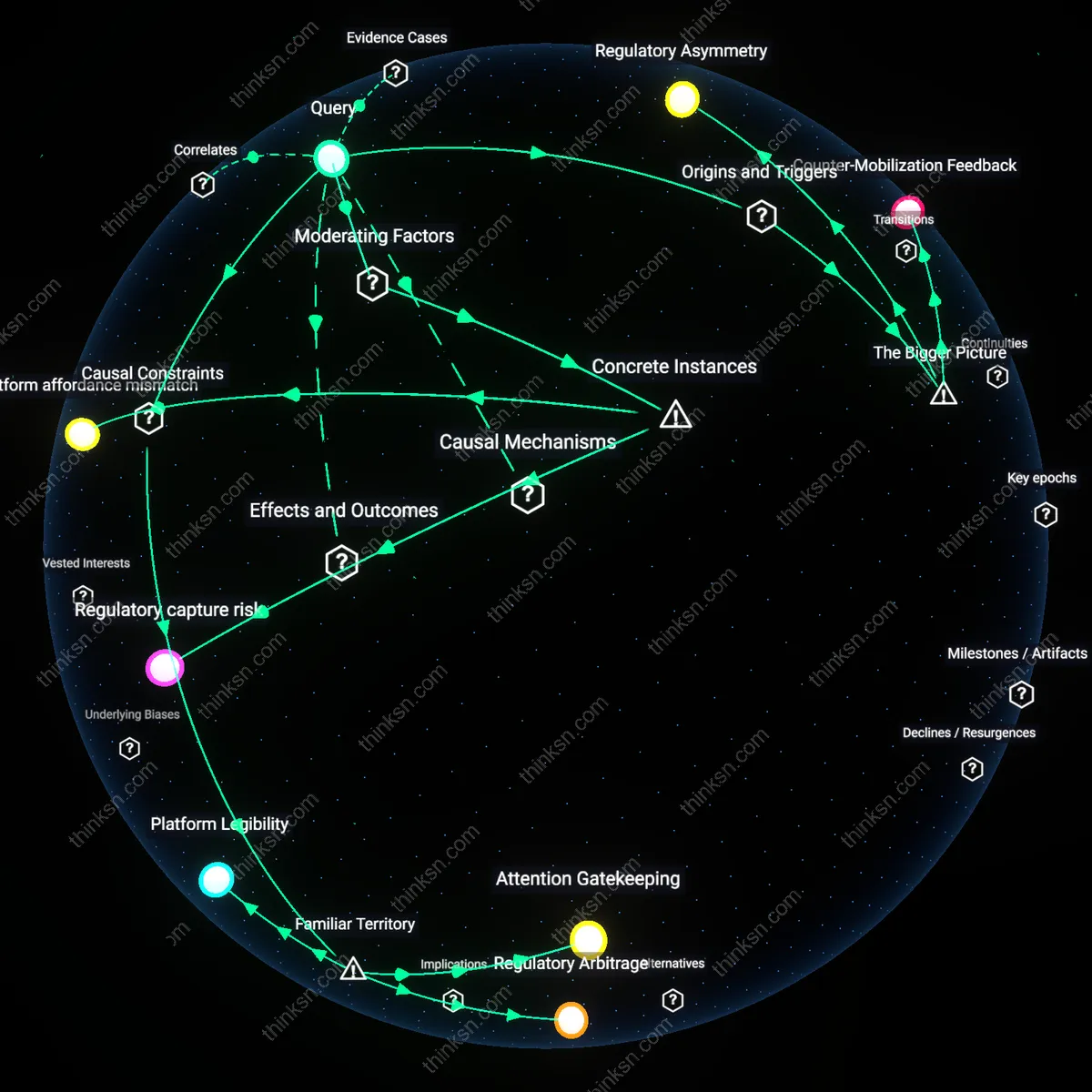

The unobservable architecture of recommendation algorithms produces a distorted epistemology among creators. They develop beliefs about 'what works' from fragmented, second-order data points such as view-duration spikes, retention curves, and traffic sources, which leads them to mistake statistical noise for causal rules and to reshape their vocal storytelling accordingly. Nonlinear, polyphonic, or ambiguity-tolerant narratives that do not generate clean algorithmic signals are lost, not because they fail but because they are misread. Conventional discourse assumes that creators respond to real algorithmic logic. The deeper issue is that they act within a belief system forged by partial observability and speculative inference: a cognitive distortion built into the platform’s opacity, which makes certain kinds of truth-bearing stories feel empirically disfavored even when they are not directly penalized.
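The claim that creators mistake statistical noise for causal rules can be illustrated with a small simulation. The sketch below is a hypothetical toy model, not a description of any platform's analytics. It assumes two delivery styles whose retention scores are drawn from the same distribution (mean 0.5, standard deviation 0.1, both invented for illustration), so any gap a creator observes between them is noise by construction. The function name `spurious_gap_rate` and the 0.05 threshold for a gap that "looks meaningful" are likewise assumptions.

```python
import random


def spurious_gap_rate(videos_per_style, trials=2000, threshold=0.05, seed=0):
    """Fraction of trials in which two identical styles appear to differ.

    Both styles draw retention scores from the same Gaussian (an assumed
    toy distribution), so every gap above `threshold` is pure noise.
    """
    rng = random.Random(seed)  # seeded so repeated runs give the same result
    hits = 0
    for _ in range(trials):
        style_a = [rng.gauss(0.5, 0.1) for _ in range(videos_per_style)]
        style_b = [rng.gauss(0.5, 0.1) for _ in range(videos_per_style)]
        gap = abs(sum(style_a) / len(style_a) - sum(style_b) / len(style_b))
        if gap > threshold:
            hits += 1
    return hits / trials


if __name__ == "__main__":
    # Compare how often a noise-only gap appears at different sample sizes.
    for n in (5, 50, 500):
        print(n, spurious_gap_rate(n, trials=500))
```

Under these assumptions, a creator comparing only a handful of videos per style sees a seemingly meaningful gap in a large share of trials, while the gap all but disappears with hundreds of videos per style, a sample size few individual creators ever reach.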

Algorithmic invisibility

Platforms like TikTok and YouTube enforce sonic standardization through opaque recommendation systems, pressuring creators to mimic successful audio patterns regardless of cultural or personal authenticity. This mimicry is not a free choice but a survival tactic within engineered attention economies. The real mechanism is not algorithmic manipulation per se but the structural concealment of how audio metrics are scored: creators are not optimizing for discoverability so much as adapting to a black box they are not permitted to audit. What is underappreciated is that voice loss is not incidental. It is the system’s design outcome, in which algorithmic invisibility becomes a form of disciplinary control that erases dissenting sonic identities by making them statistically invisible.

Aesthetic conscription

The pressure to conform to algorithmic audio norms functions less as a market signal than as a form of ideological drafting, in which creators, especially those from marginalized communities, are conscripted into producing content that aligns with platform-approved emotional registers such as up-tempo enthusiasm or performative vulnerability. This conscription operates through the material reality of monetization thresholds and visibility caps, which reward compliance and penalize deviation in ways that disproportionately affect non-Western or low-income voices. Contrary to the liberal narrative of platform empowerment, what is lost is not just stories but modes of storytelling that resist algorithmic legibility, which reveals aesthetic conscription as the hidden cost of participation.

Infrastructural censorship

Dominant platforms do not merely filter voices—they preemptively shape them through infrastructural design choices like default audio filters, auto-caption timing, and voice-to-text conversion biases, which systematically degrade or silence non-standard accents, tonal languages, and slower narrative pacing. This form of censorship operates not through content moderation but through signal distortion, where certain voices literally fail to register as coherent input for recommendation models trained on dominant linguistic datasets. The non-obvious truth is that the loss isn't in the content selected against, but in the modes of expression that are rendered technically indistinct before they’re even heard—what emerges is infrastructural censorship, an erasure built into the platform’s sensory interface.

Aspirational mimicry

In countries like India and Indonesia, young creators increasingly imitate American or K-pop-influenced vocal stylizations—fast speech, high-pitched enthusiasm, lexical borrowing—not because these styles reflect local norms but because they associate them with visibility and economic mobility on global platforms. This mimicry is enabled by the structural condition of platform dependency, where algorithmic gatekeeping is perceived as a neutral technical challenge rather than a culturally biased system, leading creators to self-modify in ways that align with imagined Western preferences embedded in platform design. The consequence is a flattening of narrative diversity, as stories rooted in community ethics, elder-led wisdom, or devotional silence—such as Balinese *kakawin* chanting or Tamil folk parables—are abandoned for more 'platform-compatible' performances. What is rarely acknowledged is that this mimicry is not voluntary cultural exchange but a survival strategy within a digital attention economy where cultural capital is systematically assigned to voices that resemble dominant (i.e. Anglo-American) media archetypes.

Epistemic invisibility

Indigenous and rural creators in the Amazon and Central Asia often use narrative structures that emphasize circularity, environmental sound integration, and collective voice. These are the qualities that audio normalization algorithms, optimized for solo, foregrounded speech with minimal ambient noise, systematically suppress. Because the platforms’ recommendation engines interpret deviation from this standard as low production quality or poor engagement potential, entire storytelling traditions, such as the Aymara use of silence as narrative punctuation or Kyrgyz *manaschi* oral epics, are algorithmically deprioritized. The cause is not a lack of audience interest but automated audio evaluation that misreads cultural difference as technical deficiency. A downstream consequence follows: institutions and funders, observing low engagement metrics, conclude that these forms are irrelevant, which reinforces underrepresentation. The overlooked dynamic is that algorithmic systems do not merely reflect cultural bias. They actively produce it by redefining what counts as a legitimate voice, rendering non-conforming expressions epistemically invisible even when they persist offline.

Algorithmic mimicry

YouTube creators in Brazil increasingly adopt exaggerated vocal cadences and scripted emotional peaks not to engage viewers but to trigger algorithmic favor, exemplified by the rise of 'NostalgiaTube' channels that fabricate 1990s childhood memories with synthetic affect. This shift sidelines quieter, testimonial forms such as indigenous oral histories from the Amazon, which lack the sonic markers of algorithmic engagement. The mechanism is not user preference but platform-defined reward signals that prioritize auditory intensity, which shows how algorithmic mimicry suppresses epistemic diversity under the guise of authenticity.

Visibility tax

TikTok influencers in Jakarta who speak in Javanese dialects report suppressing local idioms and pitch patterns to adopt a standardized Indonesian Mandarin cadence after analytics show lower reach for regionally accented content. The case of @BundaJogja illustrates the pattern: she is a rice farmer whose original videos on intergenerational farming knowledge gained traction only after she mimicked Beijing-accented pronunciation rhythms. Non-Western vocal geographies thus pay a visibility tax, in which intelligibility to centralized moderation systems becomes a precondition for circulation, obscuring subaltern articulations that fail to align with Sinophone or Anglophone templates.

Silent curation

Deaf content creators on Instagram Reels who use sign language report lower engagement unless they add exaggerated mouth movements and vocal sounds, even though their audience is not a hearing one. The marginalization of @ASLStories NYC is an example: its nuanced facial grammar was deprioritized until theatrics were introduced. The algorithm interprets the absence of vocal spikes as low energy and thereby enforces a form of silent curation in which bodily authenticity is sacrificed for acoustic legibility. This exposes that algorithmic hearing is not auditory at all but a textual proxy system tuned to written sound cues, one that indirectly disciplines bodily expression to simulate voice.

Algorithmic mimicry regime

Platforms like TikTok and YouTube have incentivized creators to emulate algorithm-favored audio patterns, such as high-energy hooks or viral soundbites, not because these styles are artistically dominant but because visibility now depends on mimicking black-boxed engagement metrics. This shift marks a decisive break from the 2000s-era YouTube ethos, in which idiosyncratic voices could gain traction through niche communities rather than engineered performability. It reveals how platform capitalism since the mid-2010s has replaced organic expression with standardized auditioning for recommendation systems.

Curation shadow deficit

Media conglomerates such as Warner Music Group and Netflix now license and promote videos that conform to algorithm-optimized templates because automated discoverability makes ad revenue predictable. This is a pivot from the 1990s public broadcasting model, which privileged editorial curation over scalability. It exposes how the erosion of human gatekeeping since the 2010s has created a cultural deficit in which entire affective tonalities, such as subdued narration or nonlinear storytelling, are rendered structurally invisible even when they retain audience resonance.

Participatory opacity trap

Grassroots activist networks in climate justice and racial equity movements have increasingly adopted algorithm-compatible audio formats, such as 15-second testimonials with text overlays, not to maximize reach but to survive in platform-dominated discourse. This marks a strategic departure from the 2008–2012 era of video activism, when raw, unedited testimony held disruptive power. It underscores how algorithmic mediation since the mid-2010s has transformed testimonial authenticity from an ethical norm into a tactical liability.