Will AI Tools Diminish Critical Thinking in Consumer Insights?

The analysis reveals six key thematic connections.

Key Findings

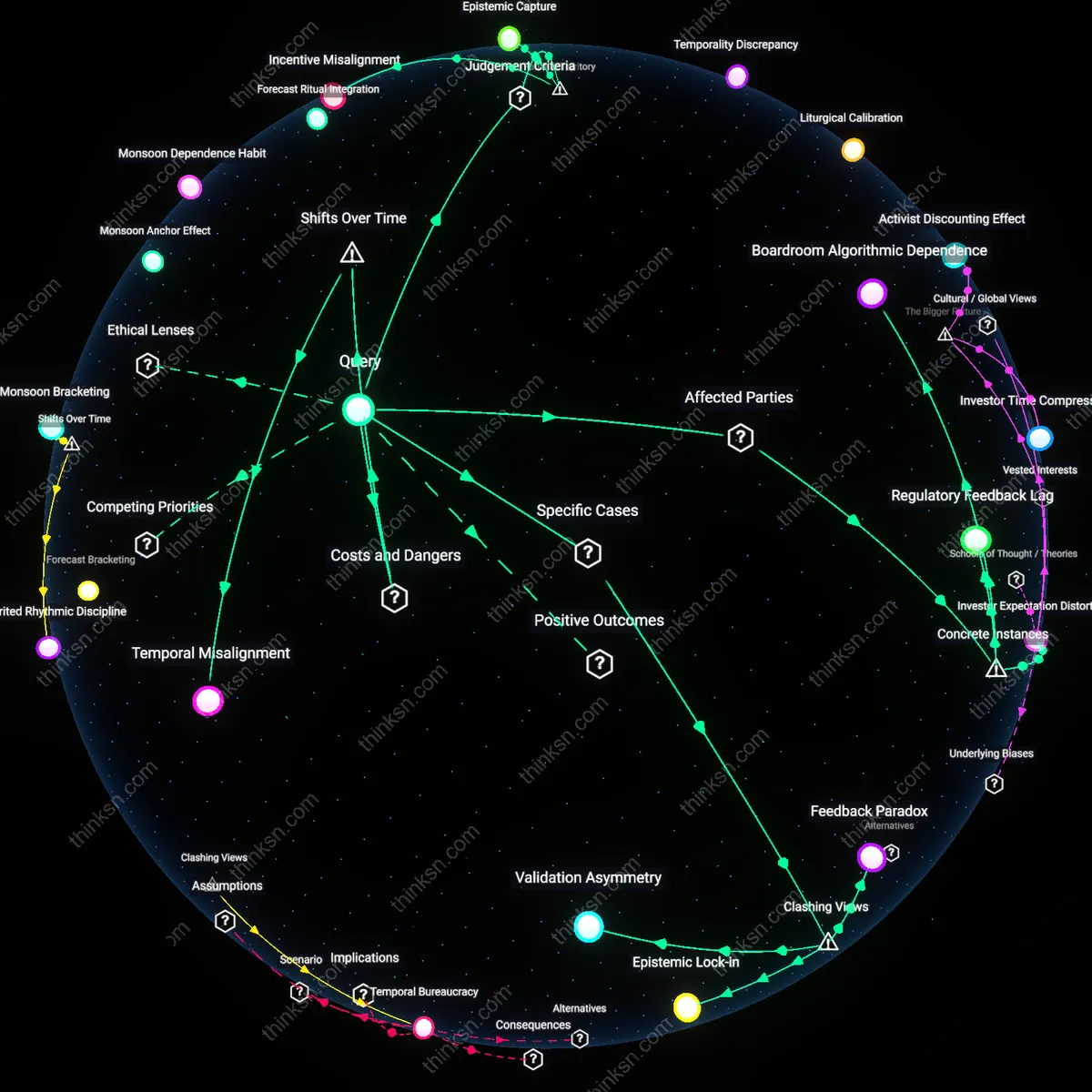

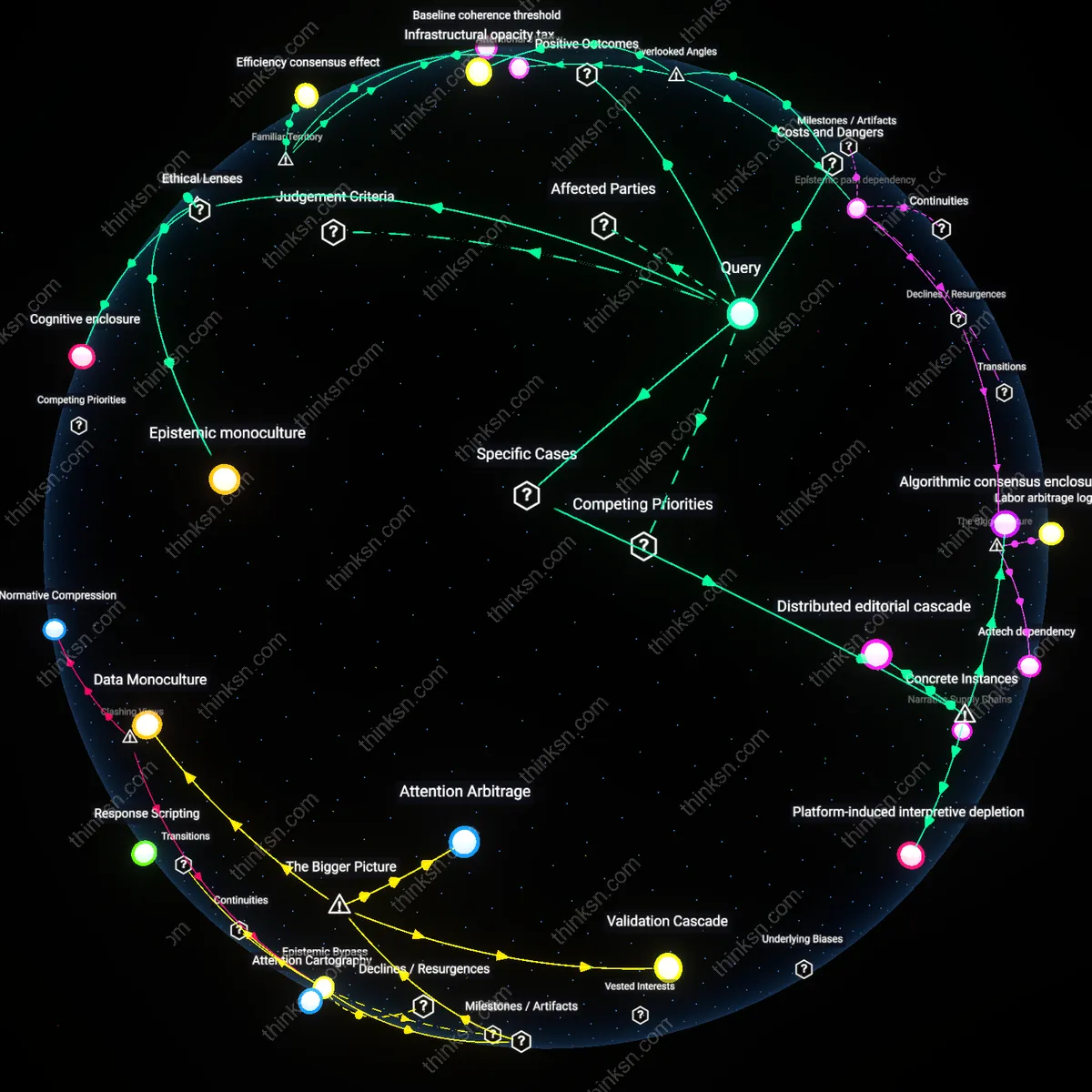

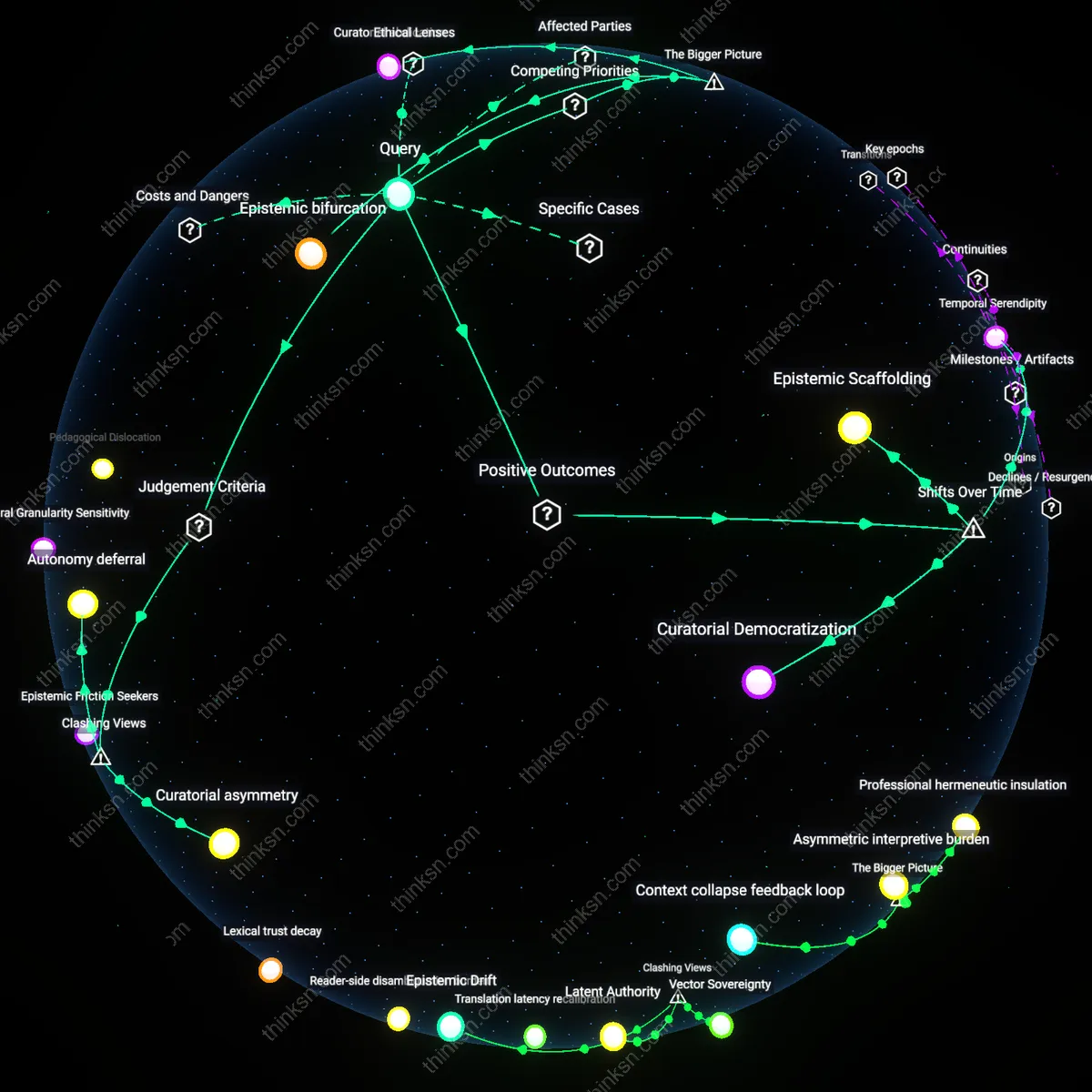

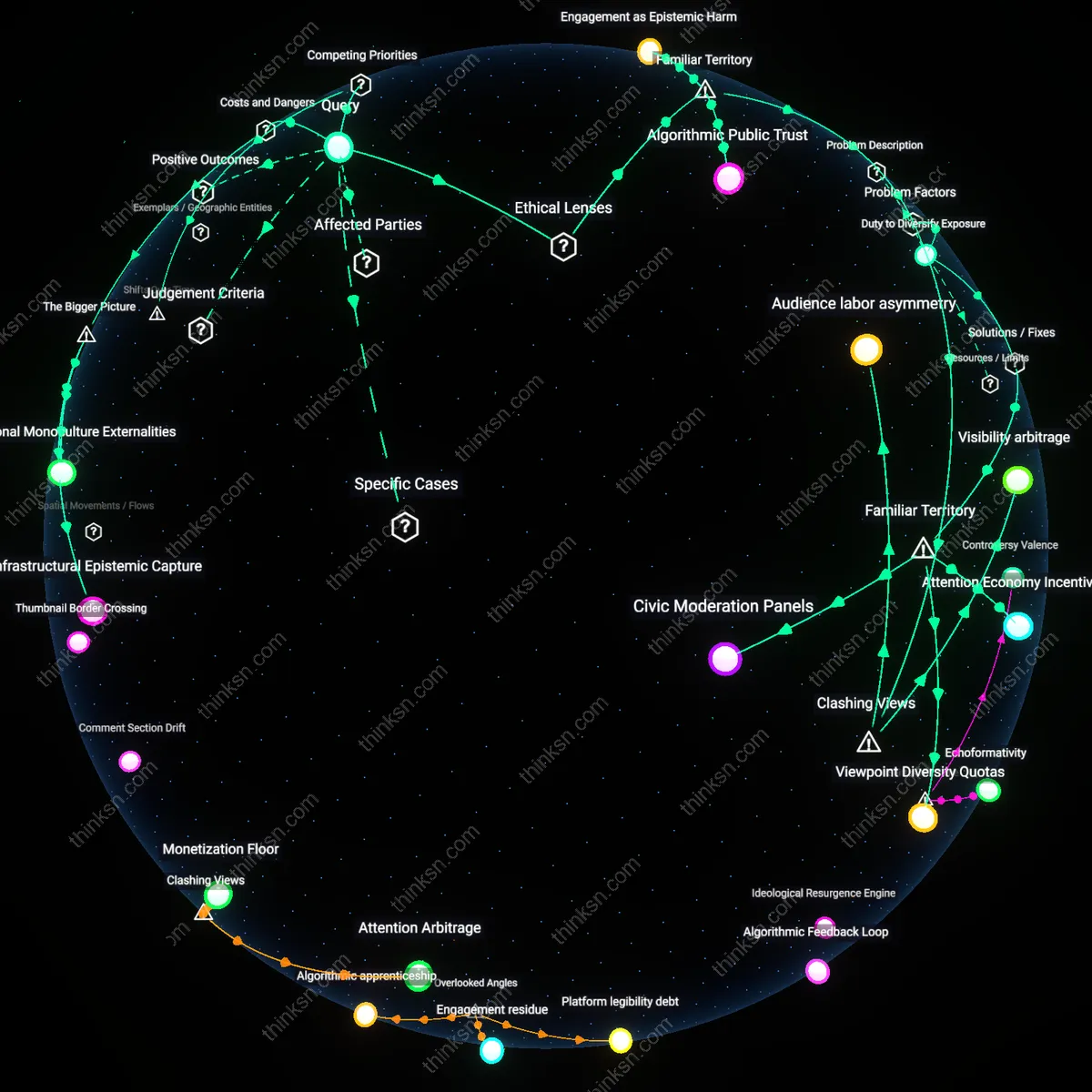

Attention Erosion

AI-enhanced market-research tools will erode critical thinking in consumer-insights analysts by reducing the cognitive friction required to identify patterns. As platforms like Qualtrics and Sprinklr deploy predictive analytics that auto-generate consumer segments and sentiment summaries, analysts increasingly accept algorithmic outputs without interrogating data quality or methodological assumptions. This shift transfers epistemic authority from human judgment to black-box models, weakening the practiced skepticism needed to challenge spurious correlations—particularly among junior analysts in digital-native agencies where speed trumps rigor. The underappreciated consequence is not outright skill loss but the gradual atrophy of deliberate, slow-thinking habits in environments culturally conditioned to trust automation.

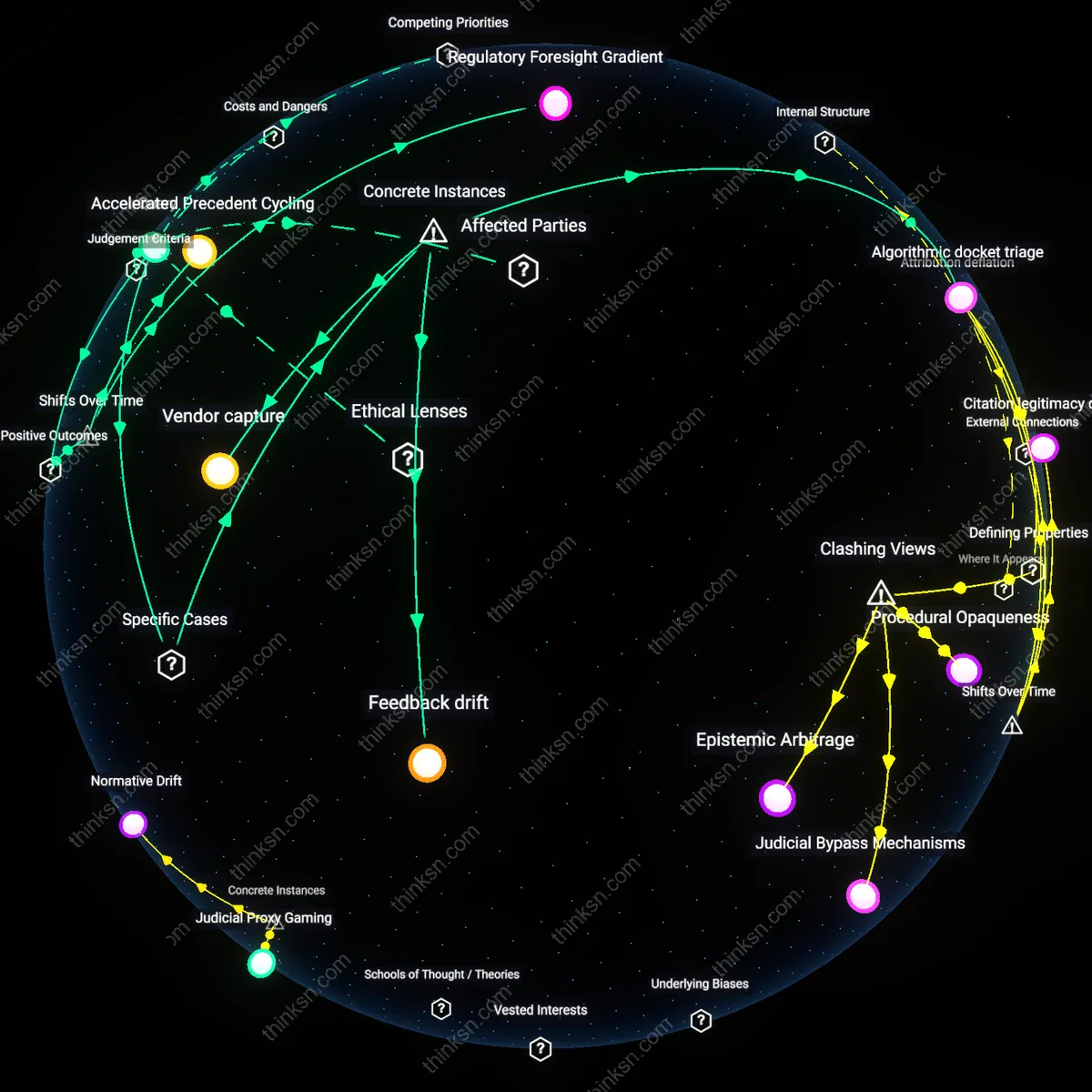

Role Redefinition

The adoption of AI in market research will transform analysts from interpreters into prompt managers, subordinating critical thinking to system orchestration. In teams at firms like Nielsen and Kantar, analysts spend growing portions of their time curating training data, refining queries for natural language processing tools, and validating AI-generated narratives rather than developing independent insights. This redefinition privileges procedural compliance over intellectual autonomy, as performance metrics track output velocity and tool utilization, not originality or depth of interpretation. The non-obvious reality is that critical thinking persists but becomes instrumental—channeled into optimizing AI inputs rather than questioning consumer behavior directly.

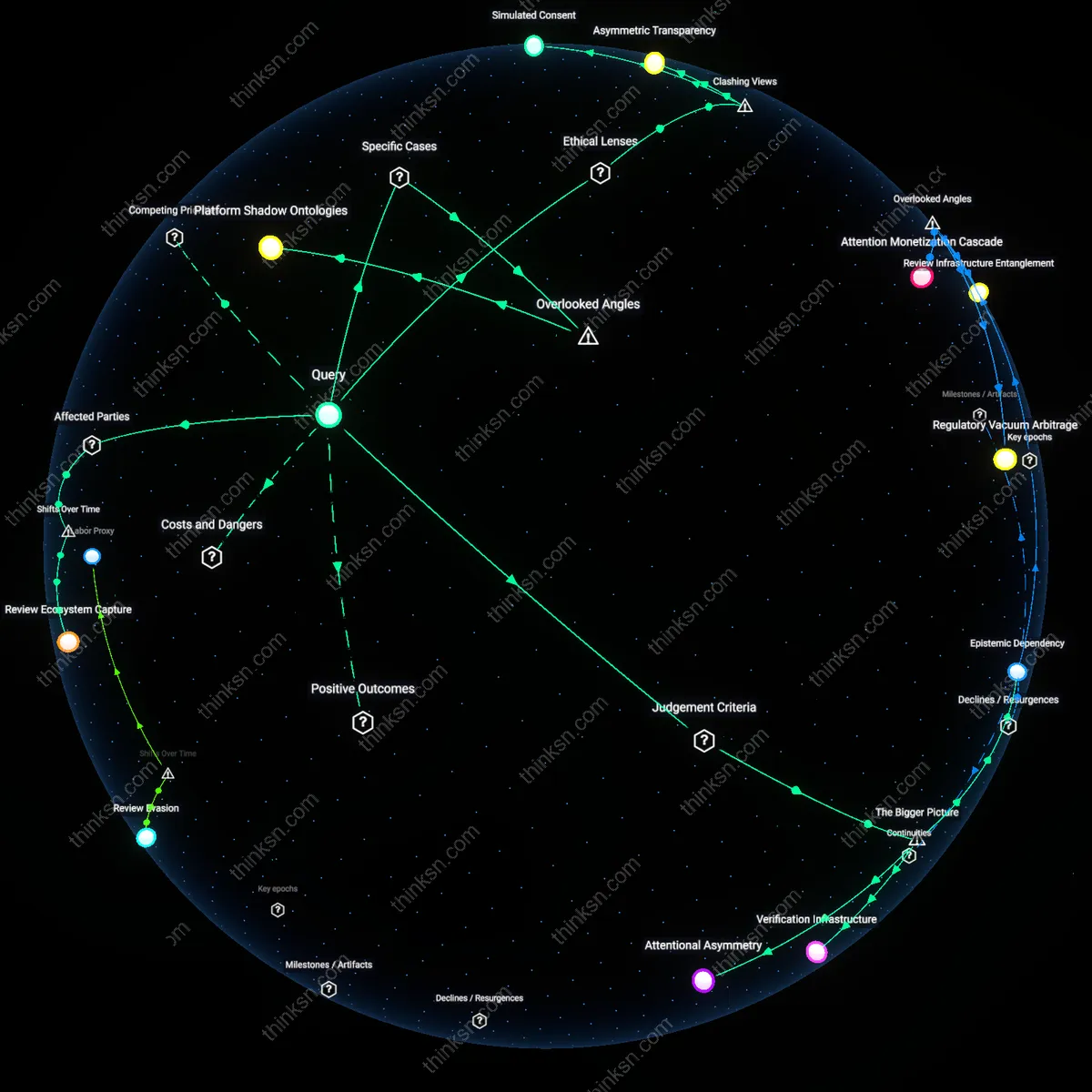

Insight Commodification

AI-enhanced tools will accelerate the commodification of consumer insights, displacing critical analysis with just-in-time deliverables tuned to executive preferences. Corporate procurement systems at Fortune 500 companies now prioritize platforms like Brandwatch and Talkwalker that promise real-time dashboards, shifting analyst roles toward maintaining stakeholder satisfaction with polished, rapid outputs. In this context, critical thinking that introduces uncertainty or challenges strategic narratives is structurally disincentivized, as AI-generated consensus views become embedded in quarterly reporting cycles. What’s rarely acknowledged is that the threat isn’t diminished cognition per se, but the systemic exclusion of dissenting interpretation from decision pipelines.

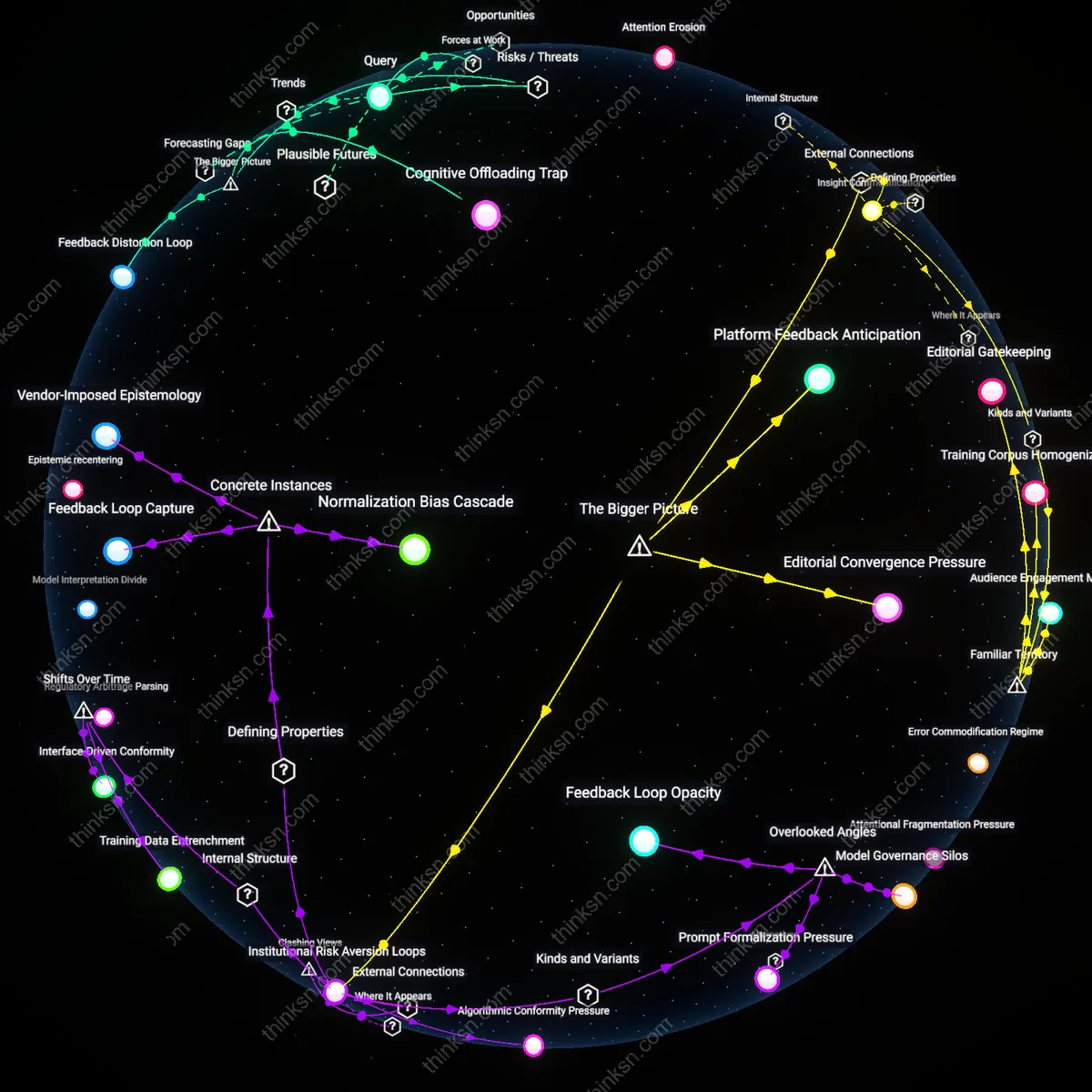

Cognitive Offloading Trap

Widespread reliance on AI-generated consumer insights will erode analysts’ capacity to independently evaluate assumptions, as repetitive delegation of pattern recognition to algorithms weakens metacognitive vigilance. The mechanism operates through organizational efficiency pressures in firms like Nielsen and Kantar, where time-to-insight metrics incentivize tool dependency over methodological scrutiny. This dynamic is non-obvious because productivity gains mask atrophy in foundational reasoning skills until novel market disruptions expose analytical fragility.

Epistemic Homogenization

Standardized AI models deployed across competing research firms will converge on similar consumer archetypes, suppressing outlier interpretations and narrowing strategic imagination. This occurs because a few underlying large language models, such as those offered through cloud platforms like Microsoft Azure and Google Cloud, are applied uniformly to qualitative data, which effectively centralizes inference logic. The systemic risk is that shared algorithmic priors reduce the diversity of interpretations across the market, leaving entire sectors vulnerable to making the same misjudgment at the same time when consumer behavior shifts in ways the shared models did not anticipate.
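The correlated-error risk in this section can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not a model of any real research firm: the function `sector_error` and its `shared_weight` parameter are hypothetical, and each firm's error is assumed to be a simple blend of one shared component (the common model's prior) and one independent component (firm-specific judgment).

```python
import random
import statistics


def sector_error(n_firms, shared_weight, trials=2000, seed=0):
    """Return the spread (std dev) of the sector-wide average error.

    shared_weight is an illustrative knob in [0, 1]: the share of each
    firm's error that comes from the common model rather than from
    independent analyst judgment. It is an assumption, not a measurement.
    """
    rng = random.Random(seed)
    averages = []
    for _ in range(trials):
        shared = rng.gauss(0, 1)  # one draw reused by every firm
        errors = [
            shared_weight * shared + (1 - shared_weight) * rng.gauss(0, 1)
            for _ in range(n_firms)
        ]
        averages.append(statistics.fmean(errors))
    return statistics.pstdev(averages)


if __name__ == "__main__":
    for w in (0.1, 0.5, 0.9):
        print(f"shared_weight={w}: sector error spread = {sector_error(10, w):.2f}")
```

Under these assumptions, `sector_error(10, 0.9)` comes out well above `sector_error(10, 0.1)`. When most of each firm's error is shared, averaging across ten firms no longer cancels it, which is the sense in which a sector can misjudge together.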

Feedback Distortion Loop

AI tools trained on historical research success metrics will amplify past biases by rewarding familiar, easily quantifiable insights while filtering out ambiguous or contradictory signals. This is driven by corporate procurement systems in Fortune 500 analytics departments that prioritize consistency and low variance in reporting, selecting for tools that minimize surprise rather than maximize insight. The unappreciated consequence is a recursive degradation of insight quality, where the very mechanisms designed to improve accuracy instead insulate decision-makers from necessary cognitive dissonance.
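The recursive narrowing described here can be sketched as a small simulation. It is a hedged illustration only: `run_loop`, `keep_fraction`, and the choice to score insights on a single numeric axis are all hypothetical simplifications. Each round, a tool proposes candidate insights, the half closest to the familiar center is kept, and the next round is "trained" on what survived.

```python
import random
import statistics


def run_loop(rounds=5, pool=1000, keep_fraction=0.5, seed=1):
    """Return the spread of retained insights after each round.

    Assumption: an insight is a single number, and 'familiar' means close
    to the current center. Real insight data is not one-dimensional.
    """
    rng = random.Random(seed)
    center, spread = 0.0, 1.0
    history = []
    for _ in range(rounds):
        # Generate candidates from what the tool currently considers normal.
        candidates = [rng.gauss(center, spread) for _ in range(pool)]
        # Low-variance selection: keep the candidates nearest the center.
        candidates.sort(key=lambda x: abs(x - center))
        kept = candidates[: int(pool * keep_fraction)]
        # Retrain on the survivors only.
        center = statistics.fmean(kept)
        spread = statistics.pstdev(kept)
        history.append(spread)
    return history


if __name__ == "__main__":
    for i, s in enumerate(run_loop(), start=1):
        print(f"round {i}: spread of retained insights = {s:.4f}")
```

With these illustrative defaults the spread shrinks every round, so later rounds never generate the ambiguous or contradictory signals that earlier rounds filtered out. The specific numbers carry no empirical weight; only the direction of the effect is the point.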