How Selective Deplatforming Reveals Power Imbalances Online

Analysis reveals 11 key thematic connections.

Key Findings

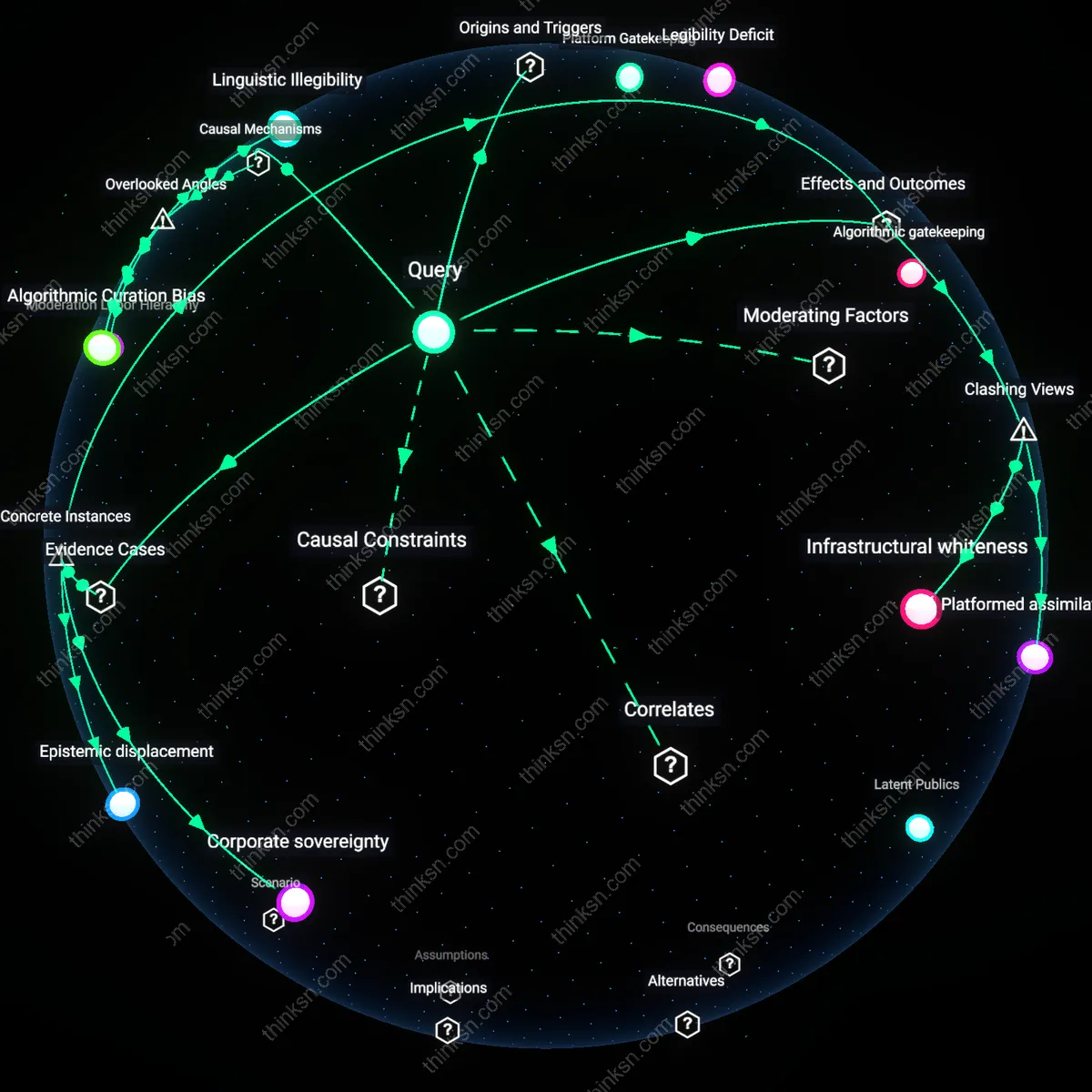

Platform Gatekeeping

Private platforms selectively remove minority voices because corporate content policies are shaped by dominant cultural and legal norms that define what counts as acceptable speech, thereby reinforcing existing hierarchies. This occurs through moderation teams and algorithmic filtering systems trained on mainstream linguistic and behavioral standards, which systematically devalue or misrecognize expressions common in marginalized communities. The non-obvious insight is that enforcement appears neutral but operates through historically codified biases embedded in seemingly technical systems—revealing how private governance mimics state power under the guise of user safety.

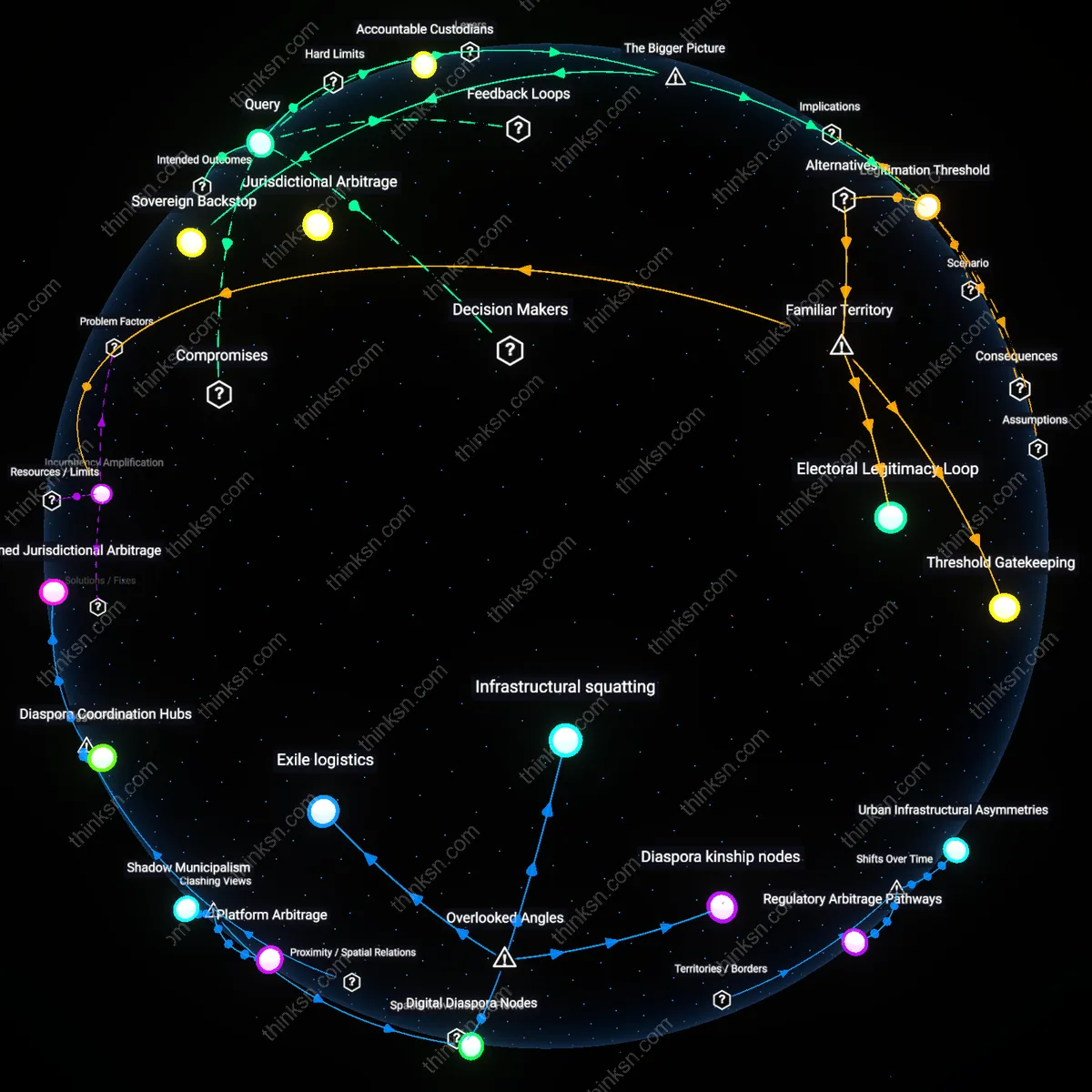

Visibility Economy

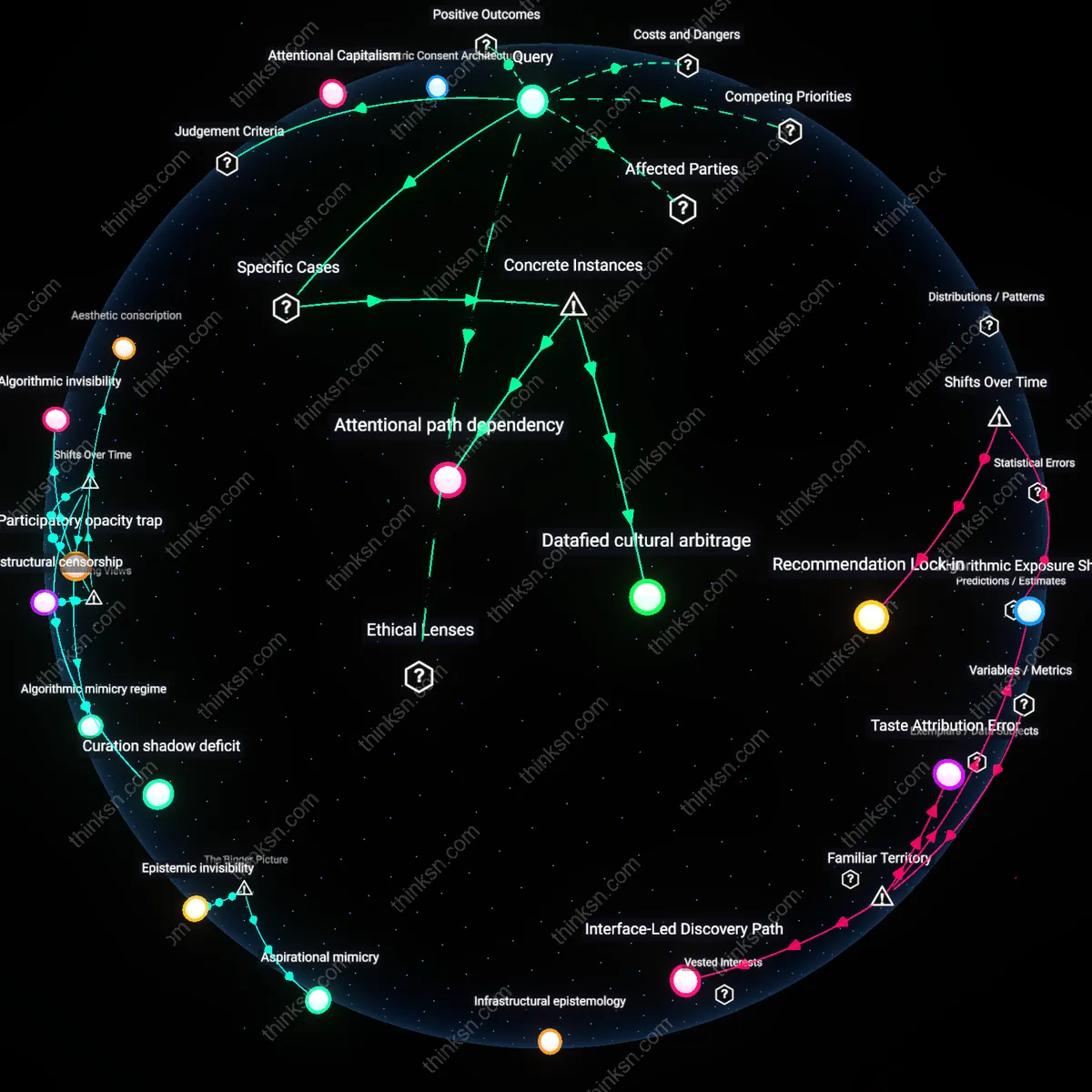

The erasure of minority voices from private platforms reflects a marketplace logic in which attention is monetized and outlier perspectives are deprioritized to maintain advertiser-friendly environments. Platforms optimize engagement through recommendation engines that favor consensus-aligned or emotionally amplified content, starving dissenting or culturally specific narratives of distribution. Most people intuitively associate censorship with overt suppression, but the deeper mechanism is economic filtering—where speech survives not by right, but by its ability to generate predictable, scalable attention.

Legibility Deficit

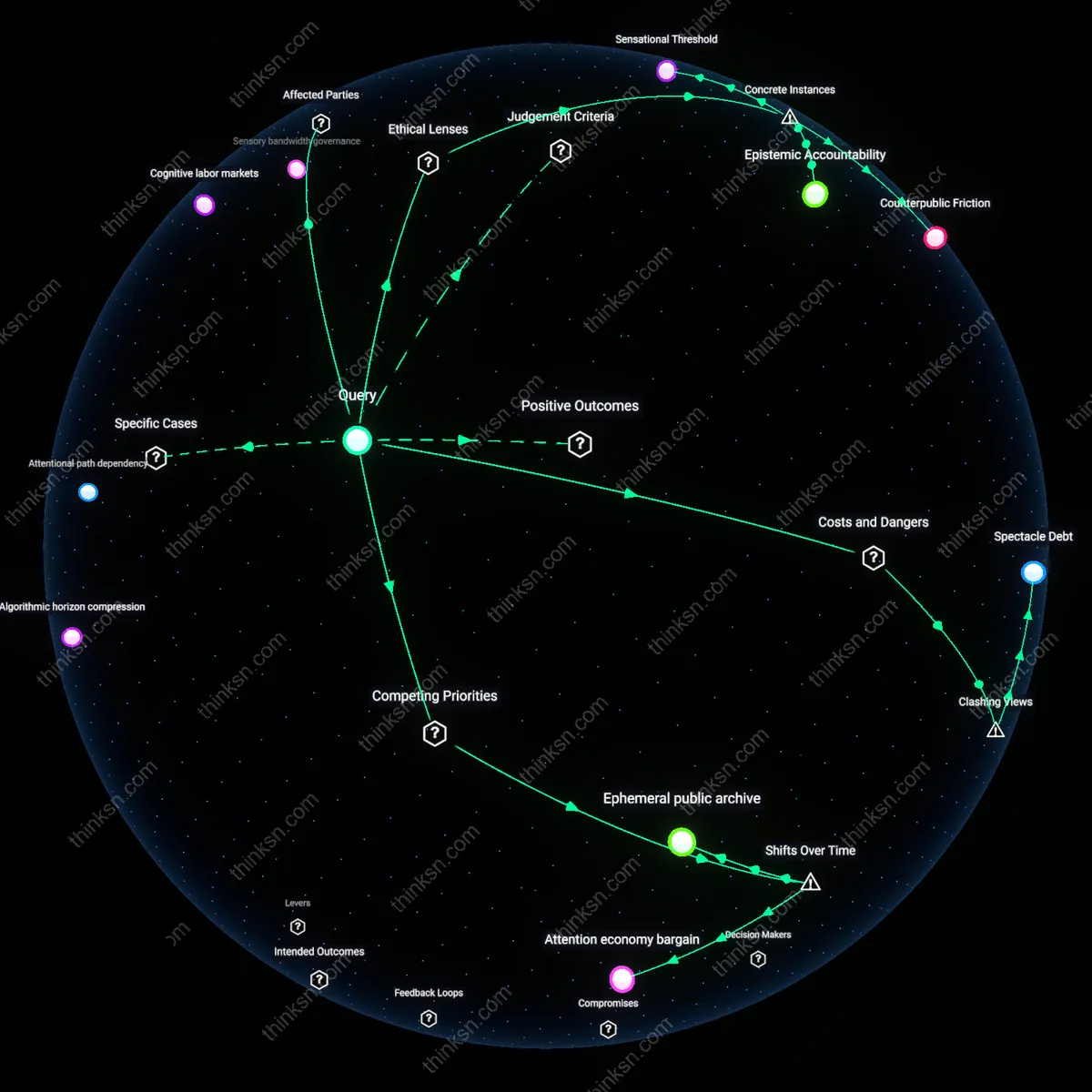

Minority voices are removed not because they are inherently disruptive but because they operate outside the structured formats platforms are engineered to recognize and manage, such as standardized language, verified identities, or documented behavior patterns. Automated moderation systems flag deviations from expected norms—like code-switching, collective authorship, or oral storytelling styles—as suspicious or harmful. While public discourse assumes moderation targets dangerous content, the underlying issue is a technological inability to parse culturally unfamiliar signals, which renders minority expression illegible and therefore disposable.

Algorithmic Curation Bias

The selective removal of minority voices on private platforms systematically reinforces dominant cultural narratives by privileging content that aligns with advertiser-friendly norms. Platform algorithms, trained on engagement metrics that reflect majority behavior, disproportionately flag and suppress minority speech as 'risky' or 'polarizing,' not because it violates policy uniformly, but because its deviation from mainstream discourse reduces monetizable attention. This mechanism is rarely exposed in public moderation debates, which focus on explicit censorship rather than the statistical logic of recommendation systems, thereby obscuring how commercial optimization silently enforces ideological homogeneity. The overlooked dynamic is that content suppression often precedes moderation decisions—minority voices are downranked before they’re even seen, making removal appear reactive rather than structurally preordained.
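
The ordering effect described above can be illustrated with a toy ranking function. This is a minimal sketch under stated assumptions, not any platform's actual system: the `Post` fields, the `risk_weight` parameter, and every numeric value are hypothetical, chosen only to show how a risk discount applied at ranking time can keep a post out of the feed before any moderation decision is made.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    predicted_engagement: float  # hypothetical model output in [0, 1]
    risk_score: float            # hypothetical "advertiser risk" score in [0, 1]


def rank_feed(posts, risk_weight=0.8, feed_size=2):
    """Return the ids of the top `feed_size` posts by risk-discounted engagement."""
    def score(post):
        # Engagement is scaled down in proportion to the risk score.
        return post.predicted_engagement * (1.0 - risk_weight * post.risk_score)

    ranked = sorted(posts, key=score, reverse=True)
    return [post.post_id for post in ranked[:feed_size]]


# Illustrative values only: the third post has the highest predicted
# engagement but also the highest (assumed) risk score.
posts = [
    Post("mainstream_a", 0.60, 0.05),
    Post("mainstream_b", 0.55, 0.10),
    Post("minority_c", 0.70, 0.60),
]

print(rank_feed(posts))                   # ranking with the risk discount
print(rank_feed(posts, risk_weight=0.0))  # ranking on engagement alone
```

In this toy setup the risk-discounted feed contains only the two mainstream posts, while ranking on engagement alone places `minority_c` first. Nothing is removed in either case; the post simply never reaches the visible feed.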

Moderation Labor Hierarchy

The erosion of minority voices on private platforms is partially driven by the geographic and socioeconomic stratification of content moderation labor, where outsourced contractors in low-wage regions apply ambiguous policies to culturally specific expressions they lack context to interpret. These workers, facing high turnover and strict productivity quotas, default to conservative takedowns to avoid penalties, disproportionately affecting marginalized speech that appears ambiguous without cultural fluency. Most analyses assume policy bias or corporate intent as the causal root, but the actual decision point is mediated by an invisible global workforce whose conditions produce systemic over-enforcement—this hidden layer of labor discipline becomes a silent filter shaping platform ideology. The non-obvious insight is that power imbalance is not just between user and corporation, but embedded in the cross-cultural operational chain that executes moderation.
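
The quota logic can be made concrete with a simple expected-penalty model. The sketch below is an illustrative assumption, not a description of any real contractor's rules: `penalty_missed` and `penalty_wrongful` are hypothetical costs a reviewer faces for leaving up violating content and for removing benign content, and `p_violation` is the reviewer's own estimate that a post violates policy.

```python
def removal_threshold(penalty_missed, penalty_wrongful):
    """Probability of violation above which removing has the lower expected penalty.

    Removing costs (1 - p) * penalty_wrongful in expectation; leaving the post up
    costs p * penalty_missed. Setting the two equal and solving for p gives the
    threshold returned here.
    """
    return penalty_wrongful / (penalty_missed + penalty_wrongful)


def should_remove(p_violation, penalty_missed, penalty_wrongful):
    """True when removal minimizes the reviewer's expected penalty."""
    return p_violation > removal_threshold(penalty_missed, penalty_wrongful)


# A reviewer without cultural context is unsure about a post (assumed p = 0.3).
ambiguous_post = 0.3

print(should_remove(ambiguous_post, penalty_missed=1, penalty_wrongful=1))
print(should_remove(ambiguous_post, penalty_missed=5, penalty_wrongful=1))
```

With symmetric penalties the threshold is 0.5 and the ambiguous post stays up. When a missed violation is penalized five times as heavily as a wrongful takedown, the threshold falls to 1/6 and the same post is removed, even though the reviewer's reading of it has not changed.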

Linguistic Illegibility

Minority voices are more vulnerable to removal because their linguistic innovations (slang, dialect, or code-switching) are misread by automated systems trained on standardized language forms, which renders their speech structurally 'unintelligible' to platform classifiers. These systems mistake semantic richness for noise, flagging such content as spam or toxicity because of pattern divergence rather than intent, and so effectively penalize linguistic authenticity. This technical limitation is rarely acknowledged in equity debates, which assume moderation is ideologically motivated rather than constrained by narrow computational linguistics; yet it creates a covert assimilation pressure where only culturally legible speech survives. The overlooked dependency is that algorithmic fairness fails not only by design but by linguistic ontology: what counts as coherent discourse is already defined by dominant language norms.
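
Flagging by pattern divergence can be illustrated with a deliberately crude filter. This is a minimal sketch, assuming a hypothetical classifier that knows only the vocabulary of a small "standard" corpus and flags any text whose share of unknown tokens exceeds a threshold; real moderation models are far more complex, and the corpus, threshold, and function names here are invented for illustration.

```python
def build_vocabulary(corpus):
    """Collect the set of lowercase whitespace-separated tokens seen in the corpus."""
    return {token for text in corpus for token in text.lower().split()}


def oov_rate(text, vocabulary):
    """Fraction of tokens in `text` that are absent from `vocabulary`."""
    tokens = text.lower().split()
    if not tokens:
        return 0.0
    unknown = sum(1 for token in tokens if token not in vocabulary)
    return unknown / len(tokens)


def is_flagged(text, vocabulary, threshold=0.5):
    """Flag text purely on how much of it falls outside the known vocabulary."""
    return oov_rate(text, vocabulary) > threshold


# Hypothetical training data drawn only from standardized English.
standard_corpus = [
    "i am going to the store",
    "we are not going to be late",
    "they are going to talk about it",
]
vocabulary = build_vocabulary(standard_corpus)

for sentence in ["we are going to the store", "vamos a la store ahorita"]:
    print(sentence, oov_rate(sentence, vocabulary), is_flagged(sentence, vocabulary))
```

Both sentences express the same harmless intent, but the code-switched one is flagged because four of its five tokens are unknown to the filter. The decision depends on distance from the training vocabulary, not on meaning.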

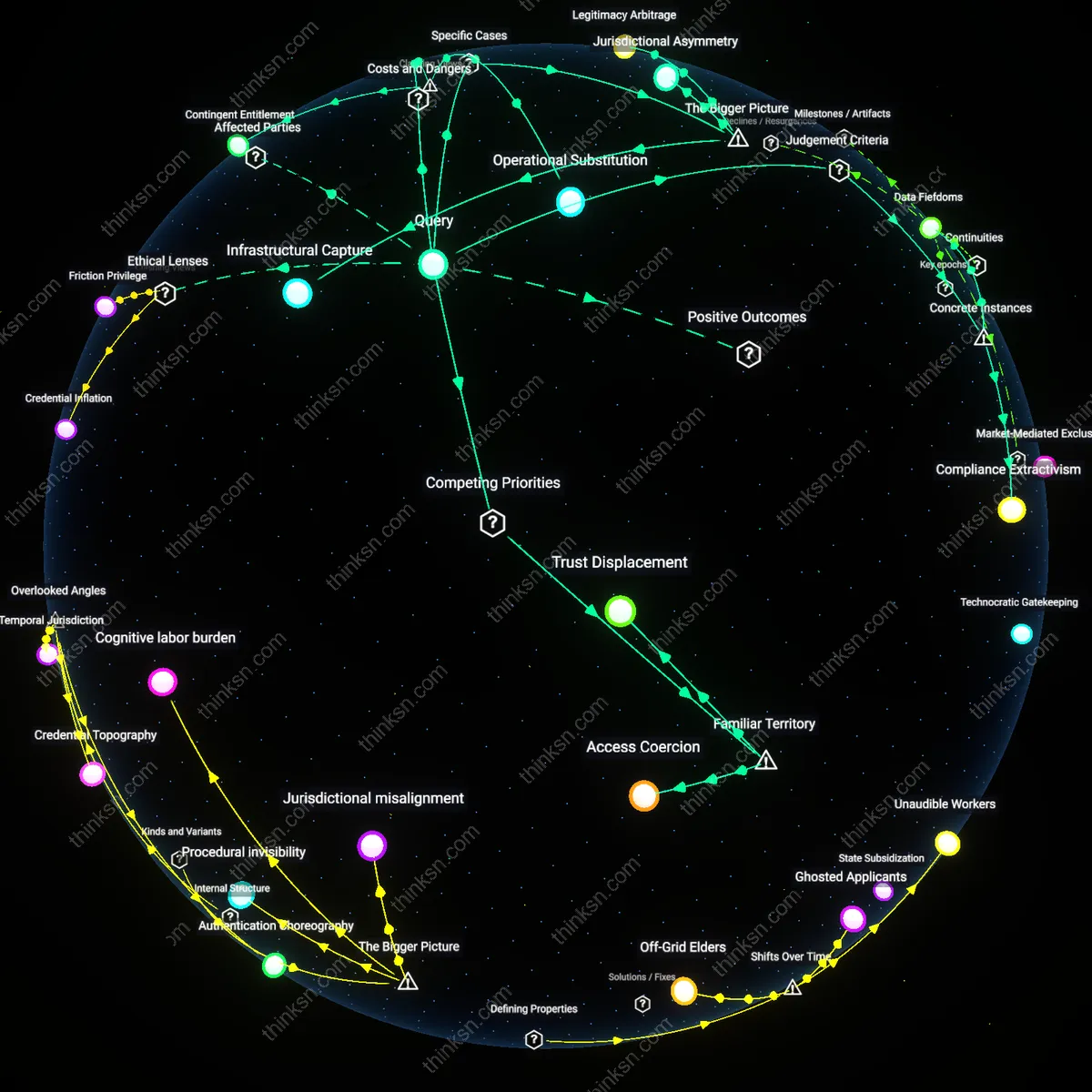

Platformed Assimilation

The selective removal of minority voices from private platforms enforces assimilation by conditioning access to digital publics on adherence to dominant cultural norms rather than on neutral policy enforcement. Major platforms like Facebook and Twitter enforce community standards shaped by Western legal precedents and corporate risk models, which disproportionately label expressions from marginalized communities, such as AAVE syntax, Indigenous land claims, or Palestinian grief narratives, as 'harmful' or 'inauthentic.' This mechanism functions through automated content moderation systems trained on majority-usage datasets, making marginality itself a signal for removal. The non-obvious consequence is not just suppression but the incentivization of linguistic and cultural self-modification to gain visibility: a systemic shift in which participation requires internalizing hegemonic expression.

Infrastructural Whiteness

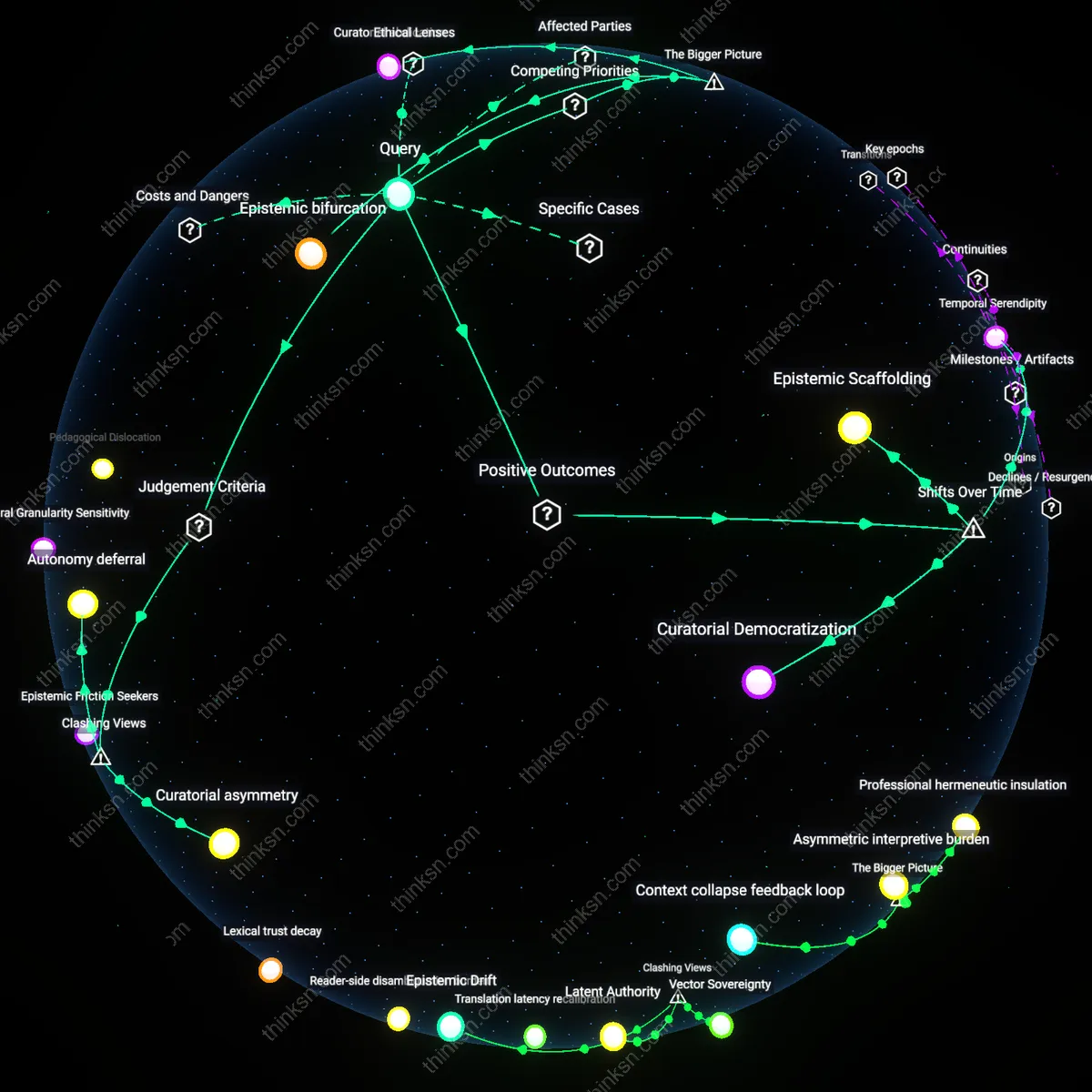

The removal of minority voices reveals infrastructural whiteness—the way technical architectures embed racial hierarchies by presenting Eurocentric governance logics as universal platform defaults. Content moderation protocols on platforms such as YouTube and Reddit rely on reporting mechanisms and trust-and-safety teams that replicate real-world racialized response biases, where Black organizers are flagged as 'violent' for discussing police brutality, while white supremacists invoking identical terminology evade detection. This operates through decentralized user-reporting systems that outsource enforcement to communities already shaped by racial surveillance norms. The dissonance lies in reframing these outcomes not as bugs or neglect, but as expected outputs of systems designed to reflect, rather than challenge, existing power geometries.

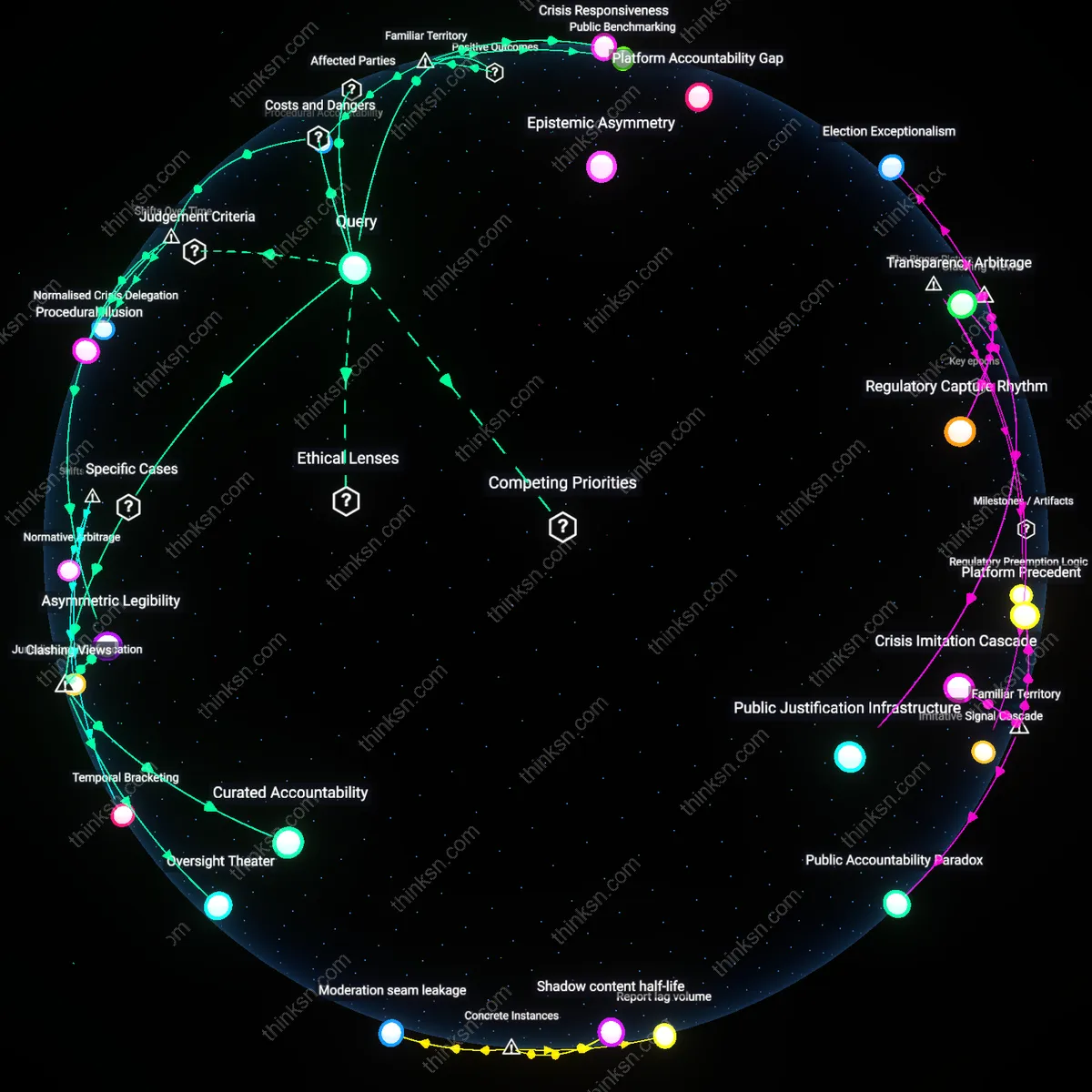

Algorithmic Gatekeeping

The deplatforming of Black Lives Matter activists on Facebook in 2020, during peak protest activity, demonstrates how content moderation systems selectively suppress minority voices. Automated flagging tools, trained on mainstream linguistic norms, tagged posts using African American Vernacular English or protest-specific vernacular as 'aggressive' or 'spam' at disproportionate rates, which in turn led to more removals. This reveals that seemingly neutral algorithms act as gatekeepers not through intent but through embedded training biases, making systemic exclusion invisible under technical rationality.

Corporate Sovereignty

The removal of Rohingya advocacy content from YouTube, despite no violation of community guidelines, exemplifies how private platforms make sovereignty claims over speech. In 2018, Google prioritized access to Myanmar’s market by aligning takedowns with local regimes’ sensitivities, effectively delegitimizing atrocity documentation. This shows that platform governance often reflects geopolitical trade-offs rather than rights-based principles, where minority expression becomes collateral in corporate-state negotiations.

Epistemic Displacement

The consistent shadow-banning of Indigenous water protectors’ hashtags related to pipeline resistance on Instagram illustrates how platform visibility controls displace marginalized knowledge systems. In the case of #NoDAPL, posts were systematically down-ranked, limiting reach without notification, thereby disrupting grassroots coordination and narrative control. This reveals that selective de-amplification, not just deletion, functions as a structural mechanism to displace non-dominant epistemologies from public discourse.