Is 'Always Correct' AI Support Worth Losing Customer Trust?

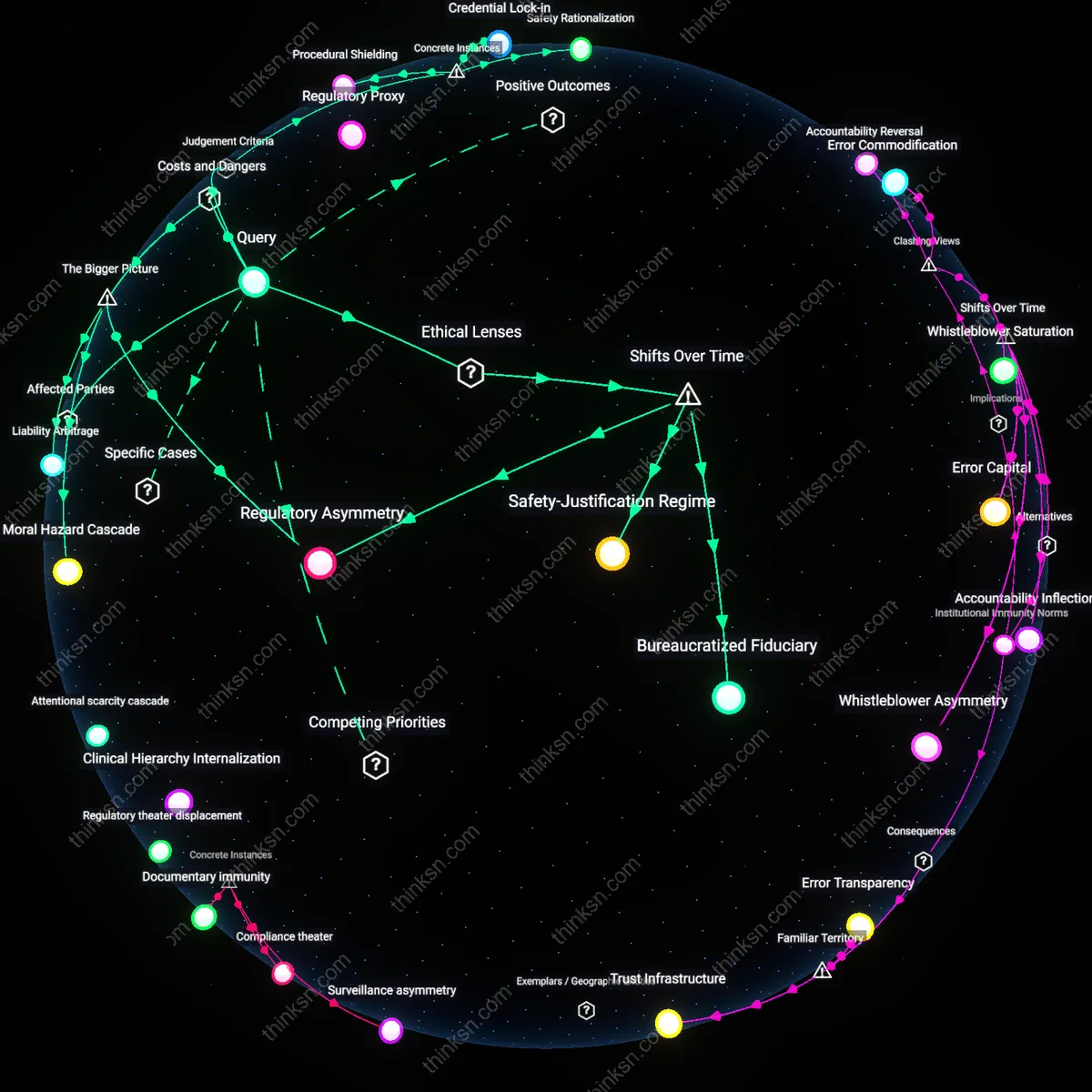

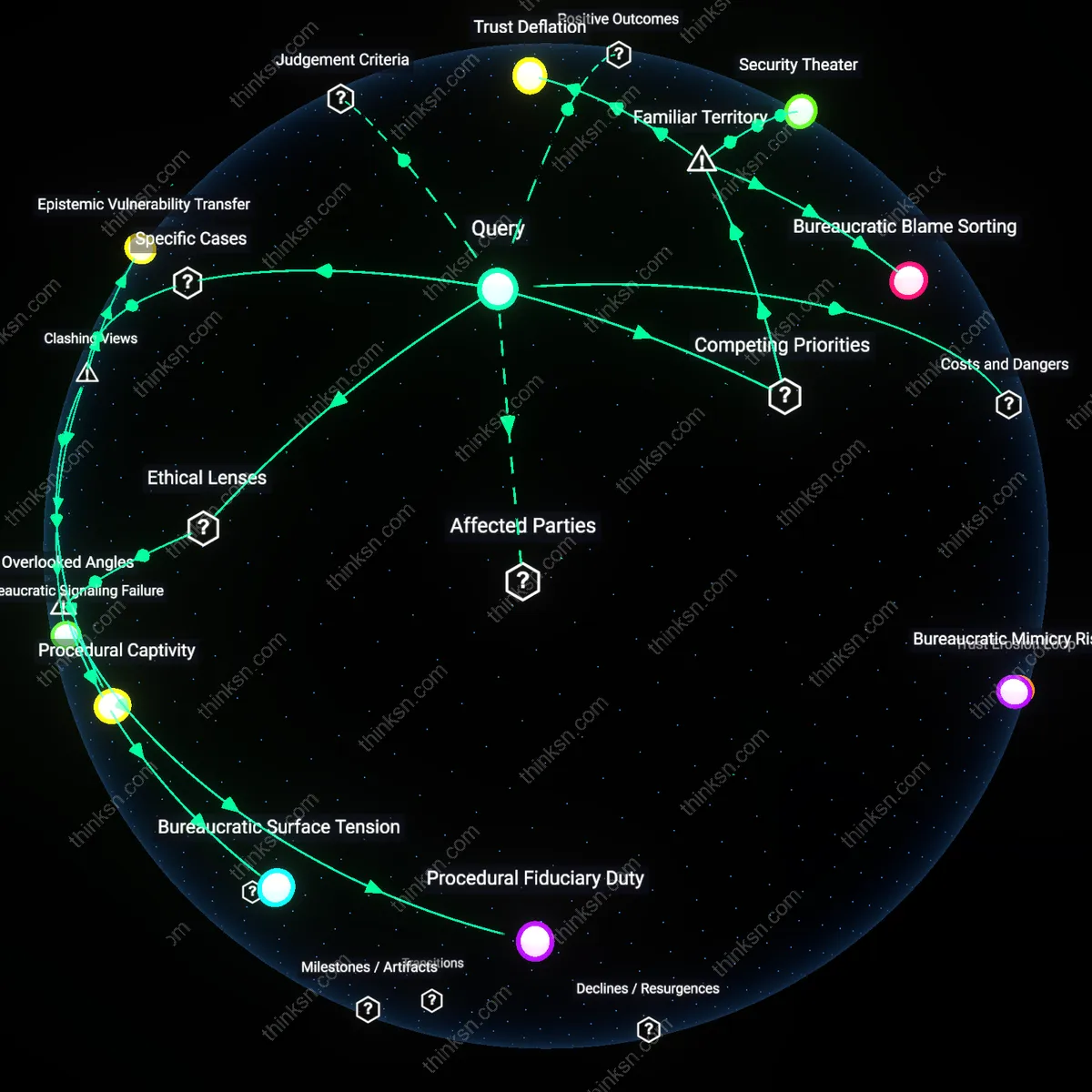

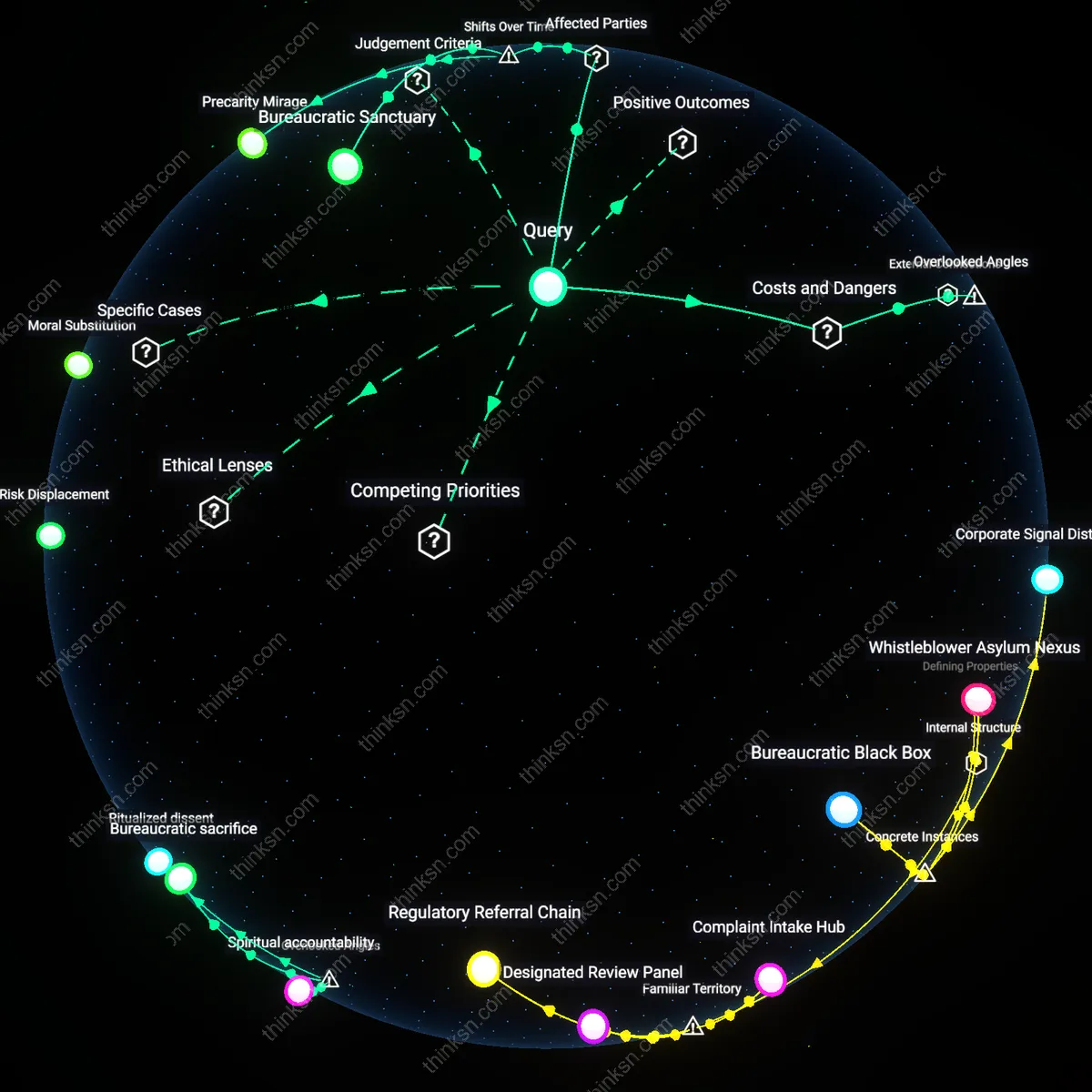

Analysis reveals 8 key thematic connections.

Key Findings

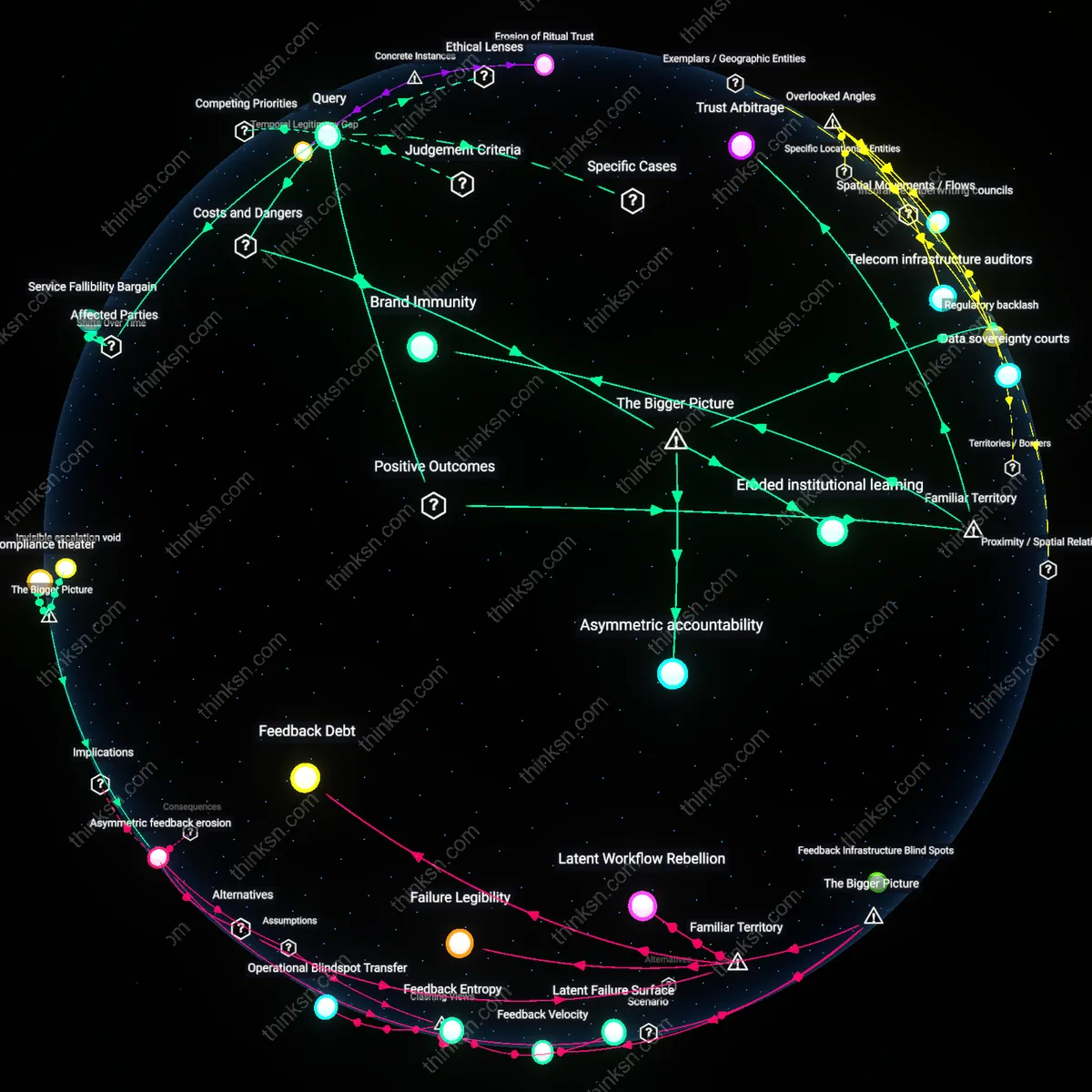

Service Fallibility Bargain

A startup should not claim that AI-generated customer support is infallible, because such claims dismantle the historically evolved 'service fallibility bargain': an implicit contract between consumers and corporations, emerging in the wake of industrial regulation, under which customers accept occasional service errors in exchange for accountability, redress, and human remediation. This bargain solidified in the late 20th century with the rise of formal customer service departments, regulatory ombudsman systems, and corporate liability norms, all of which assumed that errors were inevitable but manageable through institutional response. Today’s AI deployments threaten this balance by masking error frequency behind claims of automation superiority, shifting risk entirely onto customers without compensatory recourse. The non-obvious insight is that infallibility claims do more than exaggerate performance: they dissolve a decades-old equilibrium between corporate responsibility and consumer trust, showing how digital automation can erode hard-won norms of service accountability.

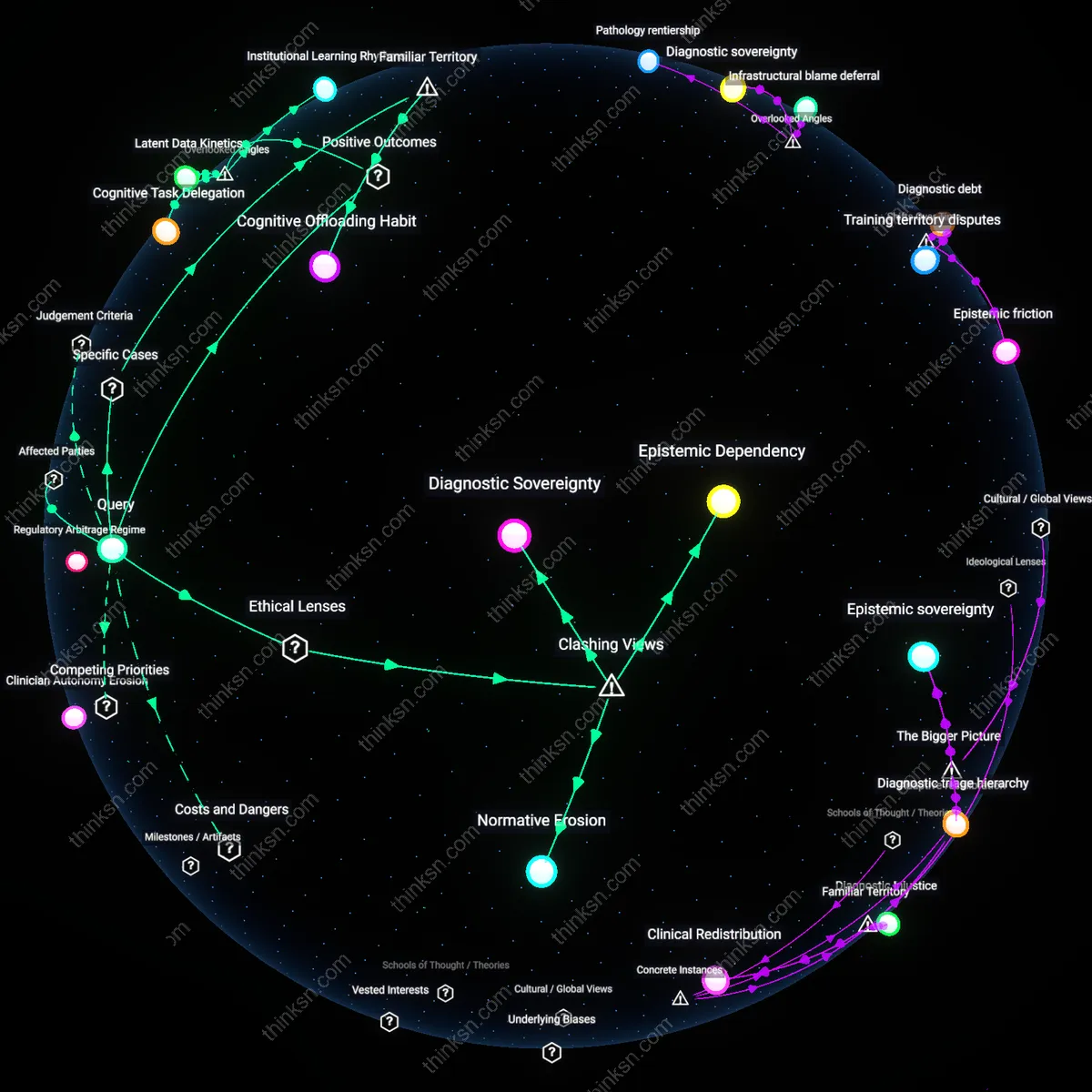

Automated Liability Concealment

A startup must avoid claiming AI infallibility because such assertions institutionalize 'automated liability concealment': a legal and operational strategy that crystallized after 2016, when major platform companies began classifying AI errors as 'system limitations' rather than service failures, thereby evading the consumer protection liabilities that once attached to human-operated support. Before AI was widely deployed in customer-facing roles, service errors created clear chains of responsibility under consumer laws (e.g., the EU Consumer Rights Directive, U.S. FTC guidelines). The shift to autonomous systems has produced a regulatory grey zone in which no individual or entity is directly accountable, even as error rates remain significant. The underappreciated consequence is that startups now exploit this rupture not just to avoid blame but to reconfigure customer trust as passive acceptance of unchallengeable systems, effectively normalizing failure without remedy.

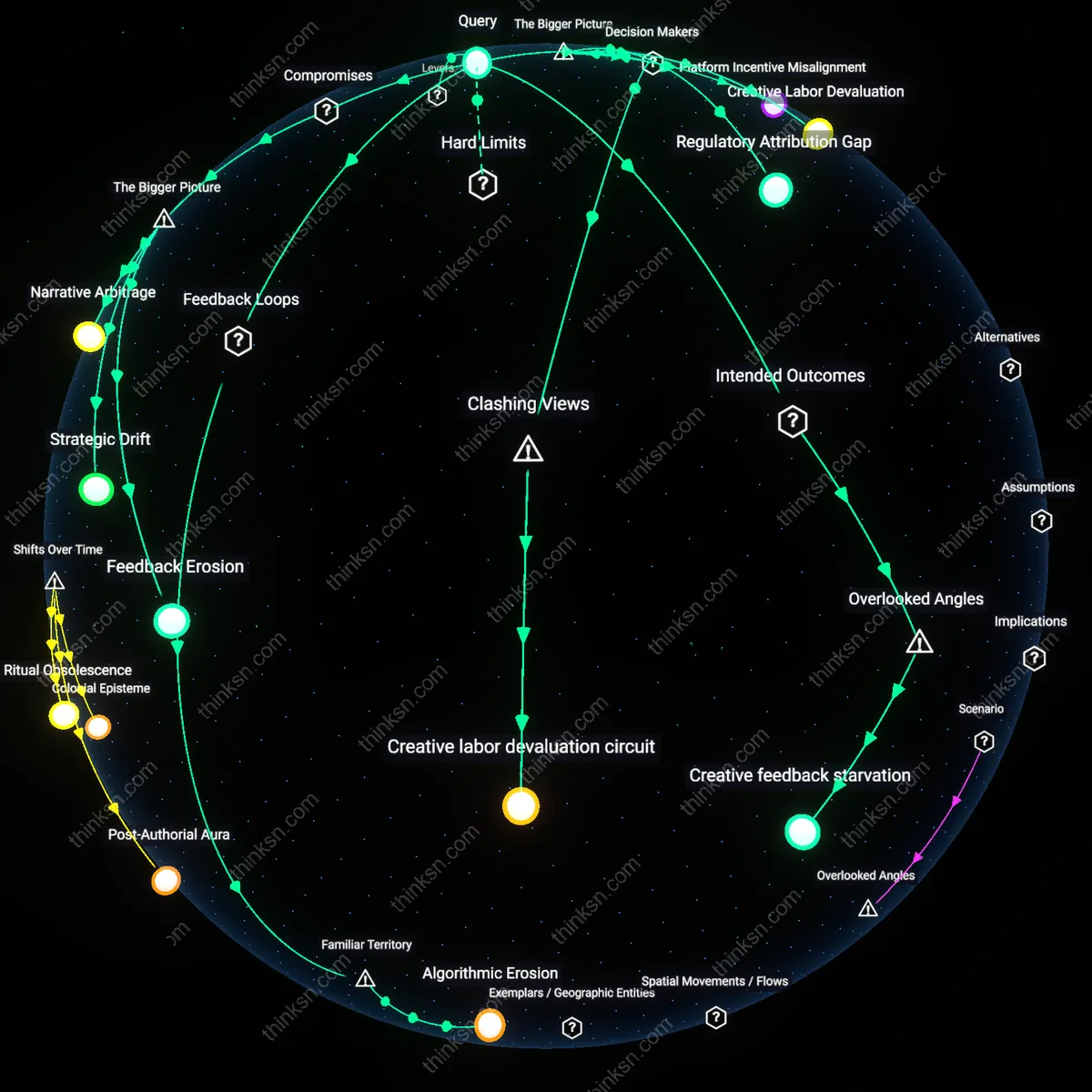

Brand Immunity

A startup should claim AI-generated customer support is infallible to anchor customer expectations in flawless performance, which reduces complaint volume and accelerates product-market fit by positioning errors as user misunderstandings rather than system failures. This works through the cognitive bias of authority attribution, where consumers more readily trust automated systems perceived as technically superior, especially in tech-forward sectors like SaaS or fintech. The underappreciated effect is that overconfidence in AI capabilities can defer scrutiny long enough for network effects to solidify user dependency, effectively turning early distrust into irrelevance once adoption passes critical mass.

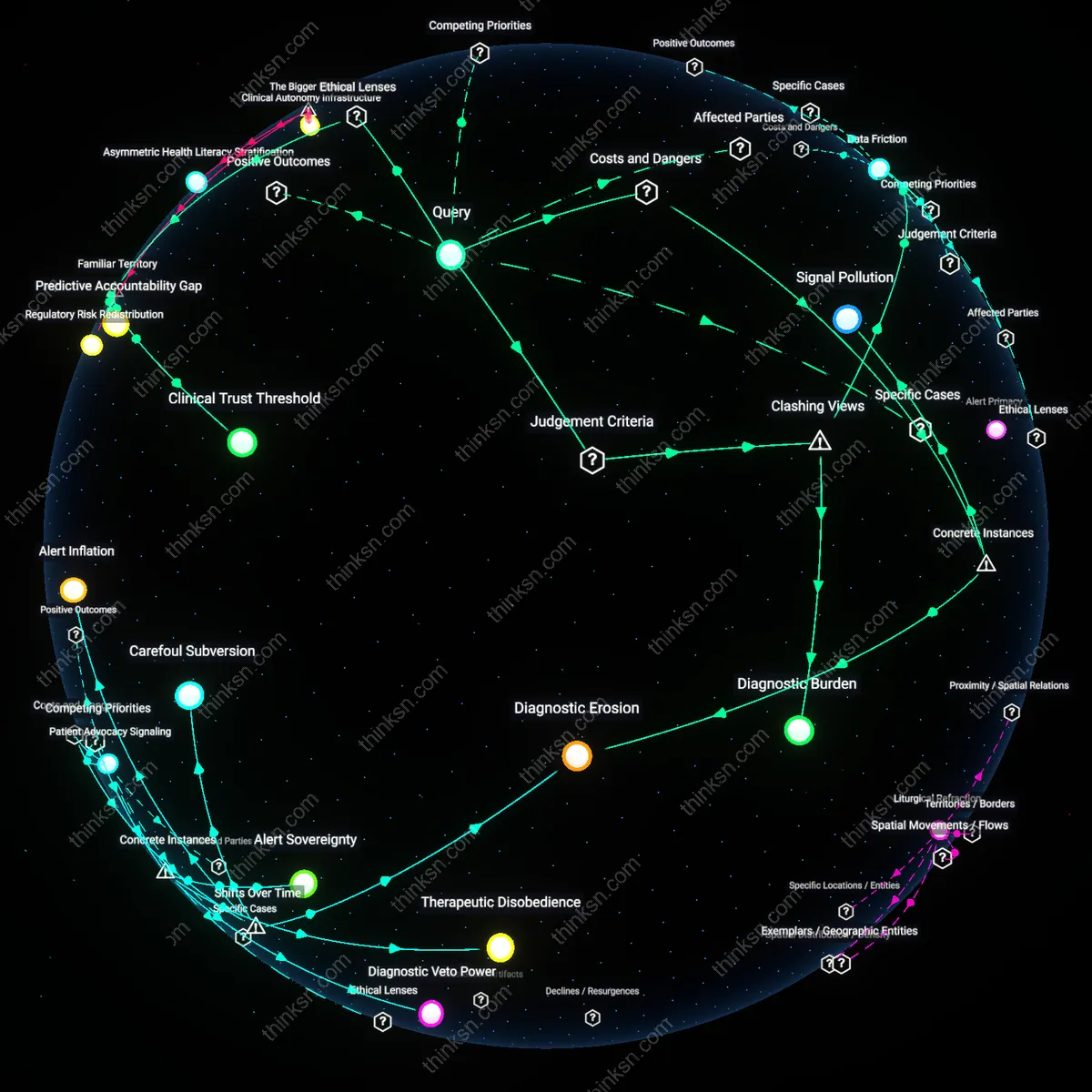

Error Normalization

A startup should avoid claiming infallibility for AI customer support because transparently acknowledging limitations builds long-term credibility with users who expect technology to fail in predictable ways, as seen in public reactions to chatbots on e-commerce platforms like Shopify or Amazon. This operates through the psychological principle of realism calibration, where admitting imperfection enhances perceived authenticity and fosters trust in escalation pathways to human agents. The non-obvious insight is that users are more forgiving of actual errors when the system’s fallibility has been acknowledged in advance, which reduces churn more effectively than illusory perfection.

Trust Arbitrage

A startup should temporarily claim that its AI customer support is infallible in order to exploit a trust surplus among early adopters who equate automation with innovation, particularly in emerging markets such as Asian fintech or Latin American edtech, where digital natives associate AI with modernity and national progress. This functions via cultural momentum: technological prestige compensates for functional gaps until operational maturity catches up. What’s overlooked is that this strategy deliberately treats trust as a liquid asset, borrowed early and repaid later, leveraging faith in global tech trends to subsidize local implementation flaws.

Regulatory Backlash

A startup that claims its AI-generated customer support is infallible invites regulatory scrutiny once inevitable errors cause consumer harm, because exaggerated claims trigger liability under truth-in-advertising and consumer protection frameworks. Regulators like the FTC actively monitor assertions of technological perfection, especially in automated decision-making systems, and false claims amplify enforcement risk when system failures disrupt service, mislead users, or deny remedies. This effect is underappreciated because startups often treat compliance as a future concern rather than an immediate consequence of positioning AI as flawless. Yet the claim itself becomes admissible evidence of misconduct when harms occur, escalating fines and operational constraints.

Eroded Institutional Learning

Pretending that AI customer support is infallible suppresses organizational feedback loops: it discourages users from reporting errors and internal teams from diagnosing them, because the stated perfection of the system delegitimizes critique. When frontline staff and customers believe issues stem from user misunderstanding rather than system failure, critical data about edge cases and failure modes is lost, degrading long-term improvement and adaptability. This is systemically dangerous because it severs the link between real-world performance and engineering iteration, especially in dynamic markets where customer behavior evolves, and so turns what appears to be brand confidence into a structural blind spot.

Asymmetric Accountability

Asserting infallibility shifts blame for AI errors onto customers or support agents, creating an accountability gap that undermines trust in both the product and the company, because end users have no recourse when mistakes are denied or minimized. This dynamic lets the startup avoid responsibility while depending on human labor to clean up automated failures, which strains employee morale and escalates churn. The broader danger lies in how this imbalance reinforces extractive tech governance models, in which algorithmic authority is protected at the expense of user agency. It normalizes a pattern already seen in gig platforms and automated moderation systems, where opacity shields corporate actors from consequence.