AI Outshines Human Copy: Double Down on Data or Storytelling?

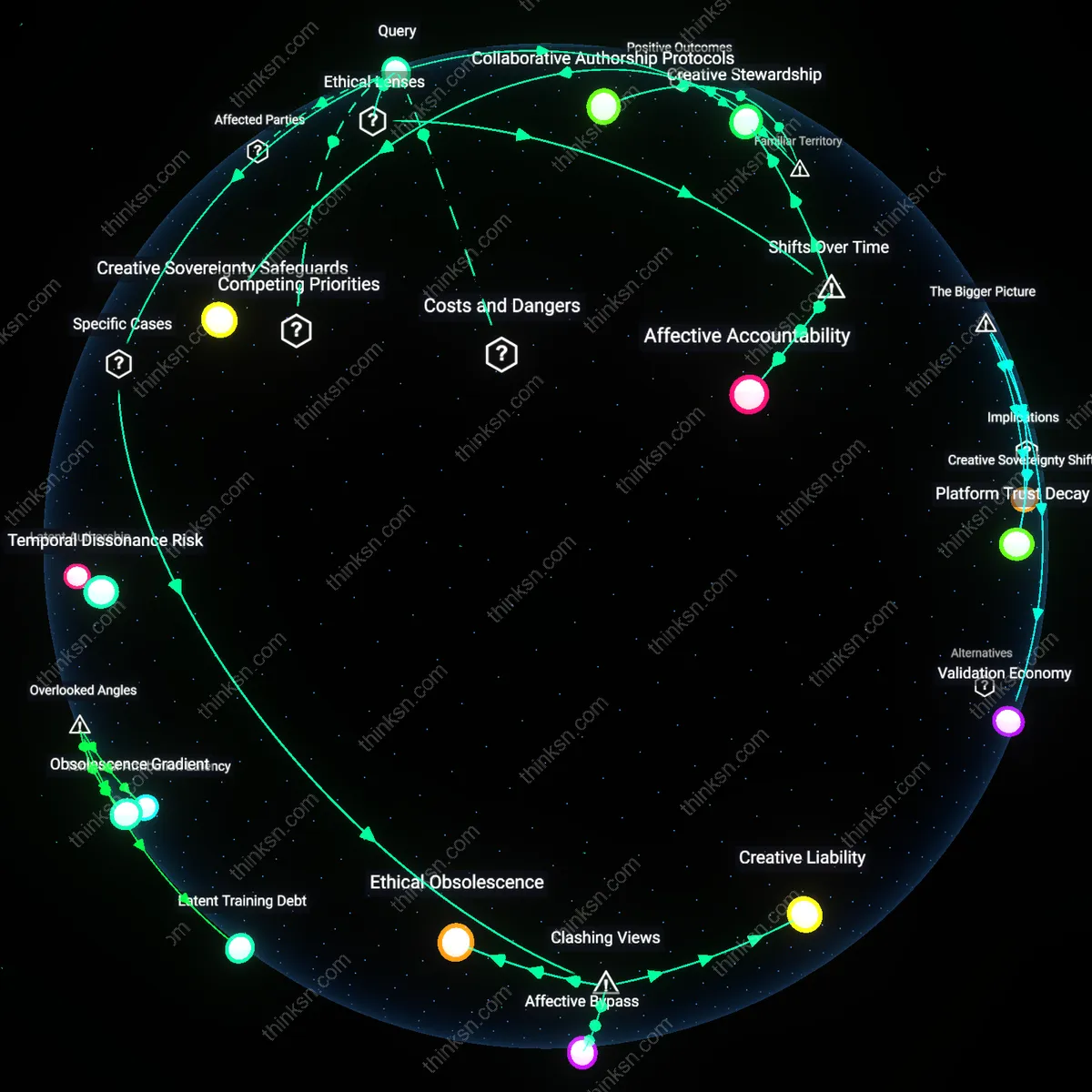

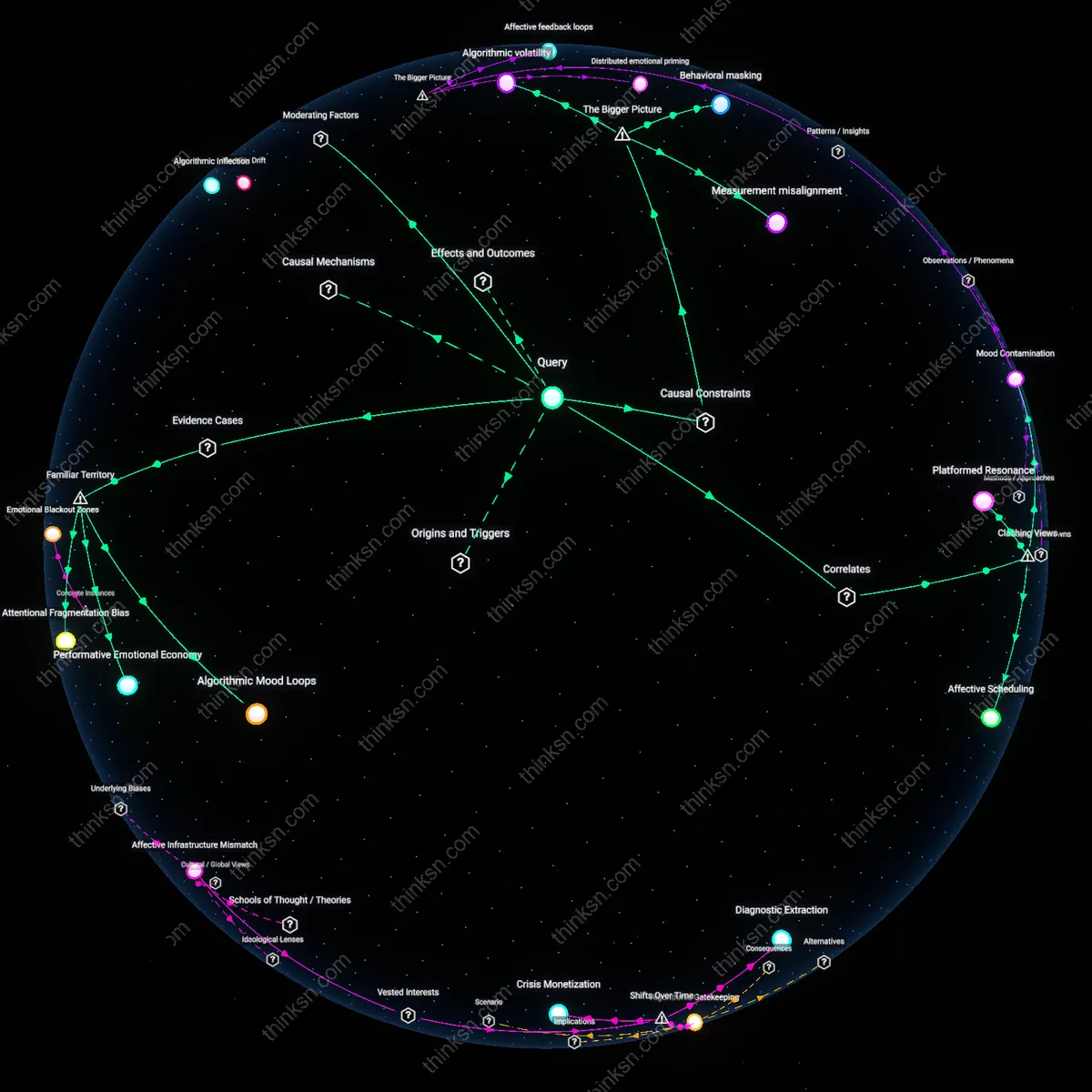

Analysis reveals 13 key thematic connections.

Key Findings

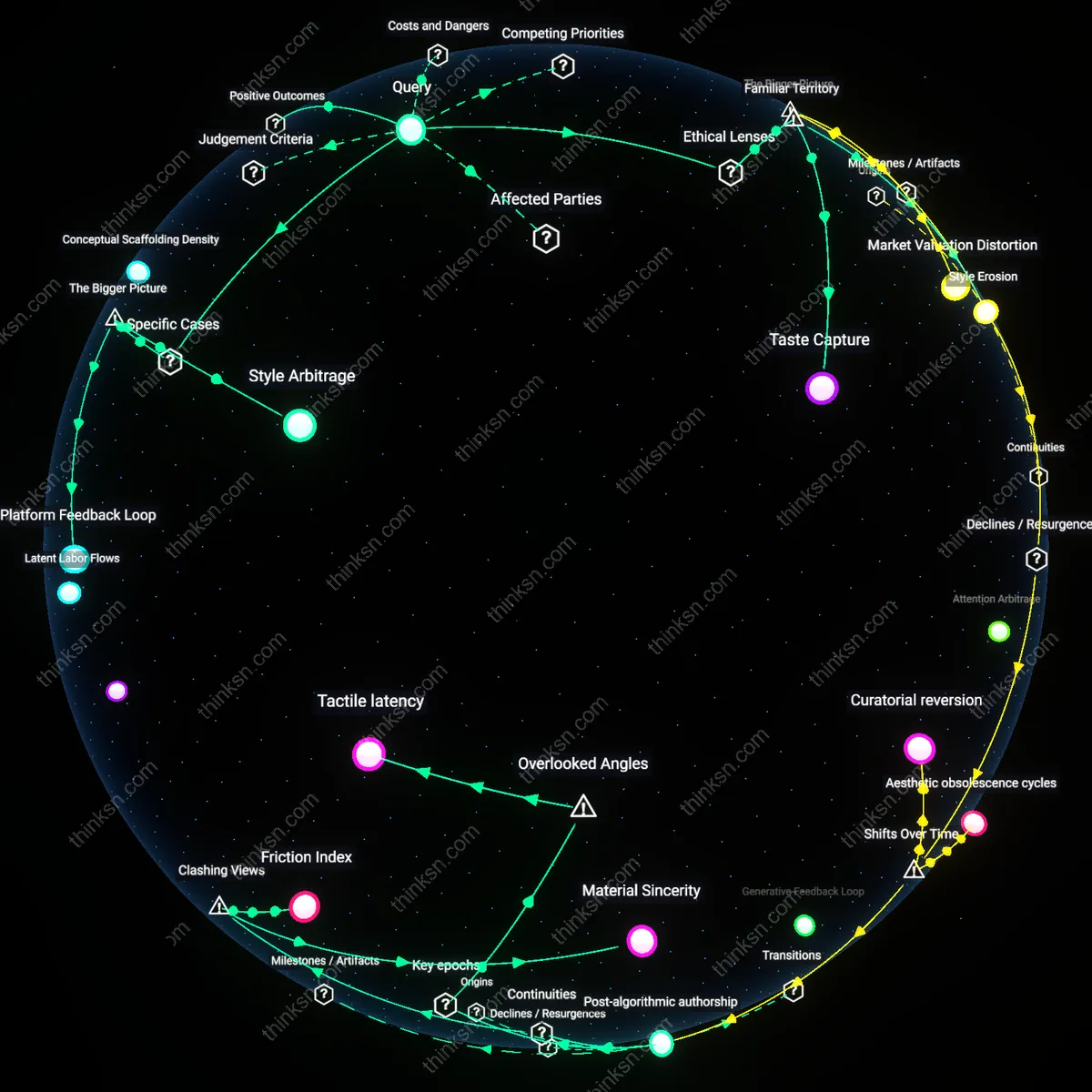

Creative Override Protocol

A marketing strategist should institutionalize human-led brand storytelling by giving creative directors editorial veto rights over AI-generated copy. One example is Nike’s 2018 'Just What We Need' campaign, which was pulled from algorithmic optimization pipelines after sentiment analysis favored safer messaging that diluted its athlete-centric narratives. The brand preserved voice consistency by mandating human curation at the final approval stage. The case suggests that automated performance metrics often optimize for engagement at the expense of symbolic authenticity.

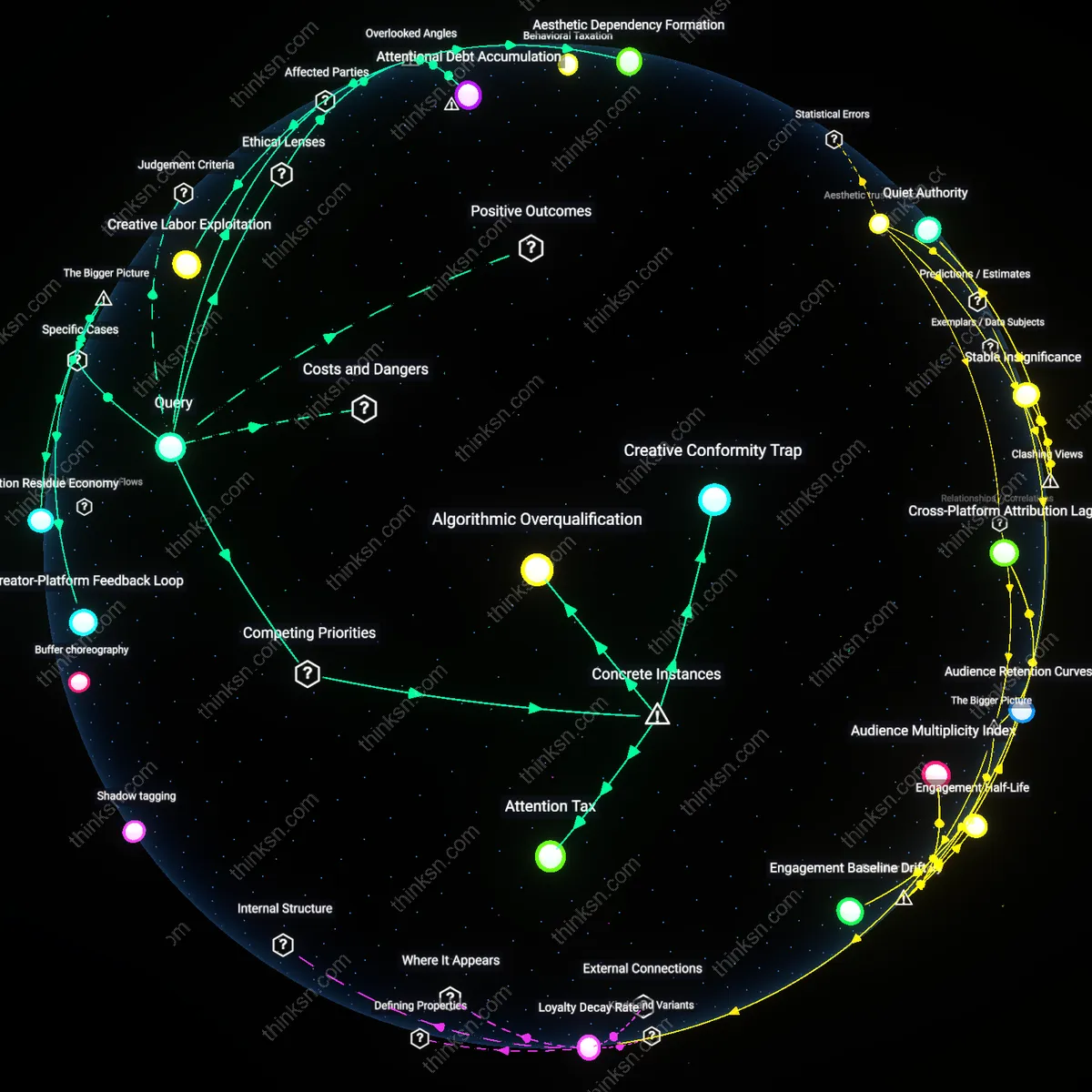

Performance Feedback Loop

A marketing strategist should prioritize data-driven personalization by deploying dynamic content modules tuned to real-time conversion metrics. Spotify’s 2016 'Wrapped' campaign exemplifies this: it used individual user data to generate hyper-personalized, shareable summaries that outperformed centrally crafted narratives in both reach and virality. The campaign demonstrated that AI-optimized outputs can exploit behavioral feedback loops at scale, making personalization not just tactical but structurally embedded in platform-mediated brand interactions.
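
As a rough illustration of tuning content modules to live conversion data, the sketch below uses an epsilon-greedy rule, one common way to balance testing variants against serving the current best performer. It is not a description of Spotify’s system; the variant names, the conversion rates, and the `choose_variant`, `record`, and `simulate` helpers are all hypothetical.

```python
import random


def choose_variant(stats, epsilon, rng):
    """Pick a content variant name from stats: {name: (conversions, impressions)}."""
    names = sorted(stats)
    if rng.random() < epsilon:
        return rng.choice(names)  # explore: pick any variant at random

    def rate(name):
        conversions, impressions = stats[name]
        # Variants with no impressions yet are treated as converting at 0.
        return conversions / impressions if impressions else 0.0

    return max(names, key=rate)  # exploit: best observed conversion rate so far


def record(stats, name, converted):
    """Update the running counts after one impression."""
    conversions, impressions = stats[name]
    stats[name] = (conversions + int(converted), impressions + 1)


def simulate(true_rates, rounds=5000, epsilon=0.1, seed=0):
    """Serve `rounds` impressions against assumed true conversion rates."""
    rng = random.Random(seed)
    stats = {name: (0, 0) for name in true_rates}
    for _ in range(rounds):
        name = choose_variant(stats, epsilon, rng)
        record(stats, name, rng.random() < true_rates[name])
    return stats
```

In this toy setup, calling `simulate({"generic": 0.02, "personalized": 0.08})` with made-up rates spends most impressions on whichever variant has converted best so far, while the `epsilon` share of random picks keeps testing the alternatives.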

Brand Syntax Preservation

A marketing strategist should embed brand voice constraints directly into AI training datasets. The New York Times’ 2022 internal AI editorial tools illustrate the approach: they were fine-tuned on decades of Pulitzer-winning prose to maintain tonal coherence across automated summaries. This institutionalized storytelling integrity through controlled data inputs rather than human intervention, showing that brand consistency can be systematized as a linguistic invariant instead of relying on ad hoc creative oversight.

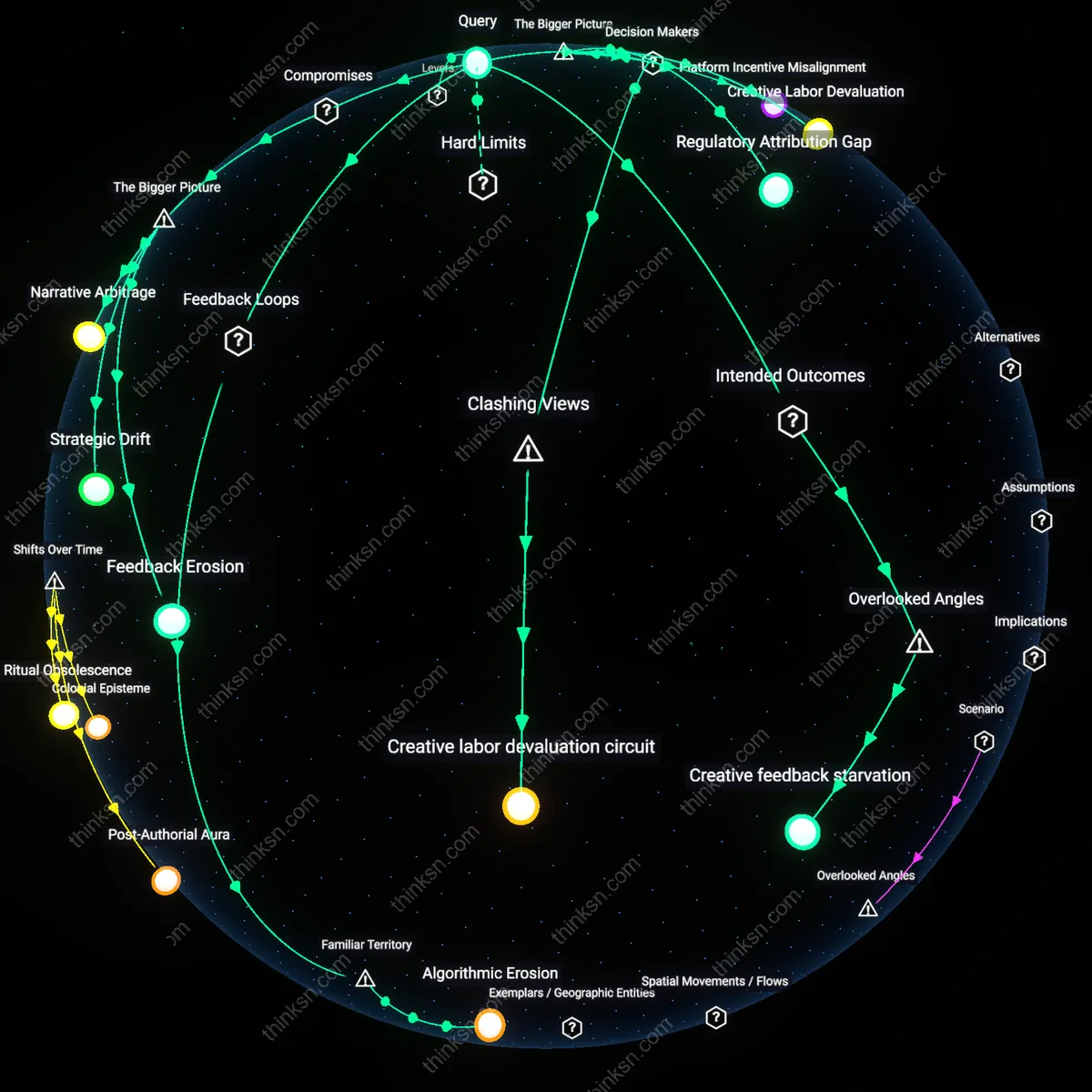

Algorithmic Erosion

A marketing strategist should prioritize human-led brand storytelling because overreliance on AI-generated copy creates a reinforcing feedback loop: data inputs become homogenized by past algorithmic outputs, degrading narrative originality over time. As brands increasingly adopt high-performing AI copy, the training data for future models skews toward statistically optimal but semantically shallow patterns, eroding linguistic diversity and brand distinctiveness. The loop is driven by optimization pressure on platforms like Meta Ads and Google, where top-performing content is amplified and crowds out experimental or emotionally resonant messaging that doesn’t immediately convert. The non-obvious insight, set against the familiar assumption that AI wins because it 'converts better', is that performance metrics themselves become distorted by the very success of AI, making authenticity a casualty of efficiency.
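
The reinforcing loop described here can be sketched as a toy simulation. It assumes, purely for illustration, that each piece of copy has a one-dimensional "style" value, that the platform rewards closeness to a single target style, and that each new model generation is fit only to the previous generation's top performers. The function name and every parameter are hypothetical.

```python
import random
import statistics


def simulate_homogenization(generations=5, population=200, keep_fraction=0.2, seed=0):
    """Return the spread (population std dev) of copy style for each generation."""
    rng = random.Random(seed)
    target = 0.0  # assumed style value that the platform metric happens to reward
    styles = [rng.gauss(0.0, 1.0) for _ in range(population)]
    spreads = [statistics.pstdev(styles)]
    keep = max(2, int(population * keep_fraction))
    for _ in range(generations):
        # Amplify only the top performers: the copy closest to the rewarded style.
        winners = sorted(styles, key=lambda s: abs(s - target))[:keep]
        mean = statistics.fmean(winners)
        spread = statistics.pstdev(winners)
        # The next "model" is fit to the winners and generates the next round of copy.
        styles = [rng.gauss(mean, spread) for _ in range(population)]
        spreads.append(statistics.pstdev(styles))
    return spreads
```

Under these assumptions the spread of styles shrinks sharply with each generation, which mirrors the loss of linguistic diversity the paragraph describes. Real platforms and models are far messier, so this is a sketch of the mechanism rather than evidence for it.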

Emotional Half-Life

A marketing strategist should prioritize human-led brand storytelling because emotional resonance acts as a balancing feedback loop that sustains brand relevance across shifting cultural contexts. Unlike AI-generated copy, which optimizes for immediate engagement metrics based on historical data, human storytellers can anticipate and reflect emerging cultural sentiments, such as societal fatigue with hyper-optimization or nostalgia for authenticity, allowing brands to maintain trust during periods of disruption. This dynamic operates through consumer psychology: familiarity breeds not just recognition but deeper loyalty when tied to perceived integrity. The overlooked point, set against the familiar assumption that AI wins because it 'performs better', is that human stories decay more slowly emotionally; their half-life exceeds that of algorithmically tuned messages, which quickly feel generic or manipulative.
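
The half-life metaphor can be made concrete with a simple exponential-decay model. This is an illustrative assumption, not a measured property of any campaign: resonance is taken to halve every `half_life` time units, and the function names and sample numbers are hypothetical.

```python
import math


def resonance(initial, half_life, t):
    """Remaining resonance after time t under exponential (half-life) decay."""
    return initial * 0.5 ** (t / half_life)


def crossover_time(ai_initial, ai_half_life, human_initial, human_half_life):
    """Time at which a slower-decaying human story overtakes an AI message.

    Solves ai_initial * 2**(-t / ai_half_life)
        == human_initial * 2**(-t / human_half_life) for t.
    """
    if not (ai_initial > human_initial > 0 and human_half_life > ai_half_life > 0):
        raise ValueError("expects a stronger-starting, faster-decaying AI message")
    return math.log2(ai_initial / human_initial) / (
        1 / ai_half_life - 1 / human_half_life
    )
```

For example, if an AI message starts with twice the resonance but halves every 2 weeks while the human story halves every 6 weeks, `crossover_time(2.0, 2.0, 1.0, 6.0)` puts the crossover at about 3 weeks. After that point the human story retains more resonance despite its weaker start.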

Creative Labor Devaluation Circuit

A marketing strategist should prioritize data-driven personalization because platform executives and AI product managers at firms like Meta and Google design feedback loops that systematically downgrade the perceived value of human copy in performance dashboards. AI output appears superior through KPIs tailored to machine-produced content, such as speed, volume, and A/B test wins, while metrics that favor human authorship, like cultural resonance or long-term loyalty, are excluded. This mechanism functions through an incentive architecture that rewards quantitative velocity over qualitative depth, quietly eroding investment in human creatives. The dissonance lies in recognizing that AI 'performance' is not intrinsic but engineered by decision-makers who benefit from justifying automation and reducing reliance on unpredictable, union-adjacent creative labor.

Platform Incentive Misalignment

A marketing strategist should prioritize data-driven personalization because platform algorithms, controlled by tech giants like Meta, Google, and Amazon, privilege engagement-maximizing content, which AI-generated copy is increasingly optimized to exploit. These platforms reward granular behavioral targeting and real-time A/B testing at scale, creating a self-reinforcing loop in which human-centric narratives become secondary unless they conform to algorithmically validated patterns. The underappreciated dynamic is that brand storytelling is no longer an editorial choice but a matter of compliance with invisible platform rules that favor personalization metrics over narrative coherence, shifting creative agency to infrastructure-level actors.

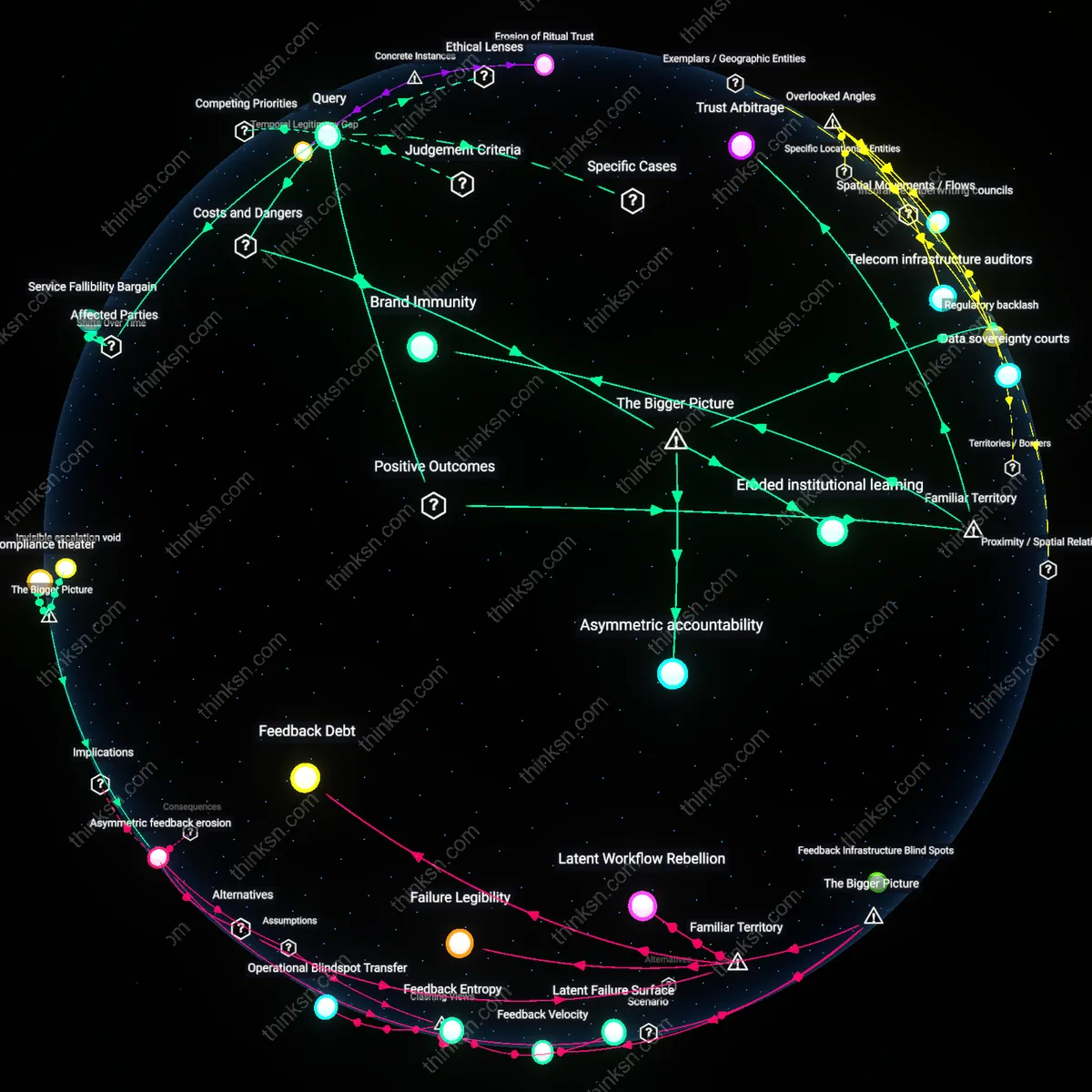

Creative Labor Devaluation

A marketing strategist should prioritize human-centric brand storytelling to resist the systemic devaluation of creative labor through AI commodification, which erodes long-term brand equity in favor of short-term conversion gains. When decision-makers at major agencies and in-house marketing teams accept AI copy as the default, they reinforce a cost-efficiency logic led by CFOs and procurement officers rather than brand stewards, weakening the role of writers, strategists, and ethnographers. The non-obvious consequence is that storytelling becomes a defensive practice that preserves human insight against automation pressure. It matters not because it outperforms now, but because its erosion removes the capacity to interpret cultural meaning in future data.

Regulatory Attribution Gap

A marketing strategist should prioritize data-driven personalization because emerging AI governance frameworks, such as the EU AI Act and Federal Trade Commission guidelines, are creating a liability shield for firms that can demonstrate algorithmic accountability, making personalized, traceable AI copy safer than ambiguous human narratives. Compliance officers and legal teams, not brand directors, are increasingly the final arbiters of marketing content, and they favor systems that log decision pathways and avoid reputational risk from 'unauthorized' emotional appeals. The overlooked systemic shift is that verifiability, not resonance, is becoming the dominant regulatory constraint, privileging personalization architectures that can map inputs to outcomes even if they lack soul.

Feedback Erosion

A marketing strategist should prioritize human-led brand storytelling because overreliance on high-performing AI-generated personalization erodes the data feedback loop that sustains its own effectiveness. As AI homogenizes messaging across competitors, the behavioral data it learns from becomes statistically redundant, reducing differentiation and response rates over time. This dynamic is driven by platform economies, especially social media and programmatic advertising, where rapid A/B testing privileges short-term conversion metrics and incentivizes brands to flood channels with AI variants until audience signals saturate. The non-obvious consequence is not diminished creativity but the degradation of the very data AI relies on: once mass mimicry collapses variation, the system’s learning foundation vanishes. This reveals a self-defeating mechanism embedded in automated optimization at scale.

Strategic Drift

A marketing strategist must give ground on data-driven personalization in favor of brand storytelling when operating in markets where regulatory and cultural backlash against algorithmic manipulation is gaining institutional force, as seen in EU AI Act compliance demands and the erosion of consumer trust in hyper-targeted advertising. In these contexts, legal liability and reputational risk become material constraints, turning high-performing AI copy into a liability if it bypasses human ethical thresholds or amplifies bias. The strategic misstep lies in treating performance as exogenous to governance regimes, when in fact algorithms operate within legal and normative ecosystems that can retract permission to deploy. The underappreciated reality is that superior AI performance may accelerate regulatory intervention, shifting the cost-benefit equation in favor of legible, accountable human authorship.

Narrative Arbitrage

A marketing strategist should prioritize human-led brand storytelling because, in saturated digital environments, cultural resonance built through authentic narrative grants access to offline amplification channels (e.g., press coverage, word-of-mouth, policy discussion) that extend reach well beyond algorithmically gated platforms. While AI copy outperforms on click-through rates, its narrow optimization misses the systemic role of third-party validators, such as journalists, influencers, and educators, who legitimize brand meaning outside paid media ecosystems. The overlooked mechanism is that human-authored stories circulate in networks where trust is currency, enabling brands to shift from media buying to ecosystem influence. This creates a leverage point where lower initial performance can yield higher systemic impact through narrative diffusion.

Creative Feedback Starvation

A marketing strategist should prioritize human-centric brand storytelling because overdependence on AI-generated copy disrupts the feedback loop between audience emotional response and creative evolution, a mechanism critical for brand longevity but invisible in A/B testing outcomes. Human writers internalize subtle audience reactions (comments, cultural reinterpretations, emotional echoes) across campaigns, refining narrative intuition over time, whereas AI systems train on surface-level engagement metrics and replicate what converts now, not what resonates next. The result is a gradual depletion of narrative risk-taking and emergent insight, observable in certain Gen Z-targeted fashion labels that saw rising short-term CTRs but declining cultural relevance within 18 months of AI adoption. The overlooked dynamic is that human creativity functions as a recursive sense-making system, which reliance on AI silently starves even as it boosts efficiency.