Is Privacy Betrayal on Social Media an Epistemic Harm for Elderly Users?

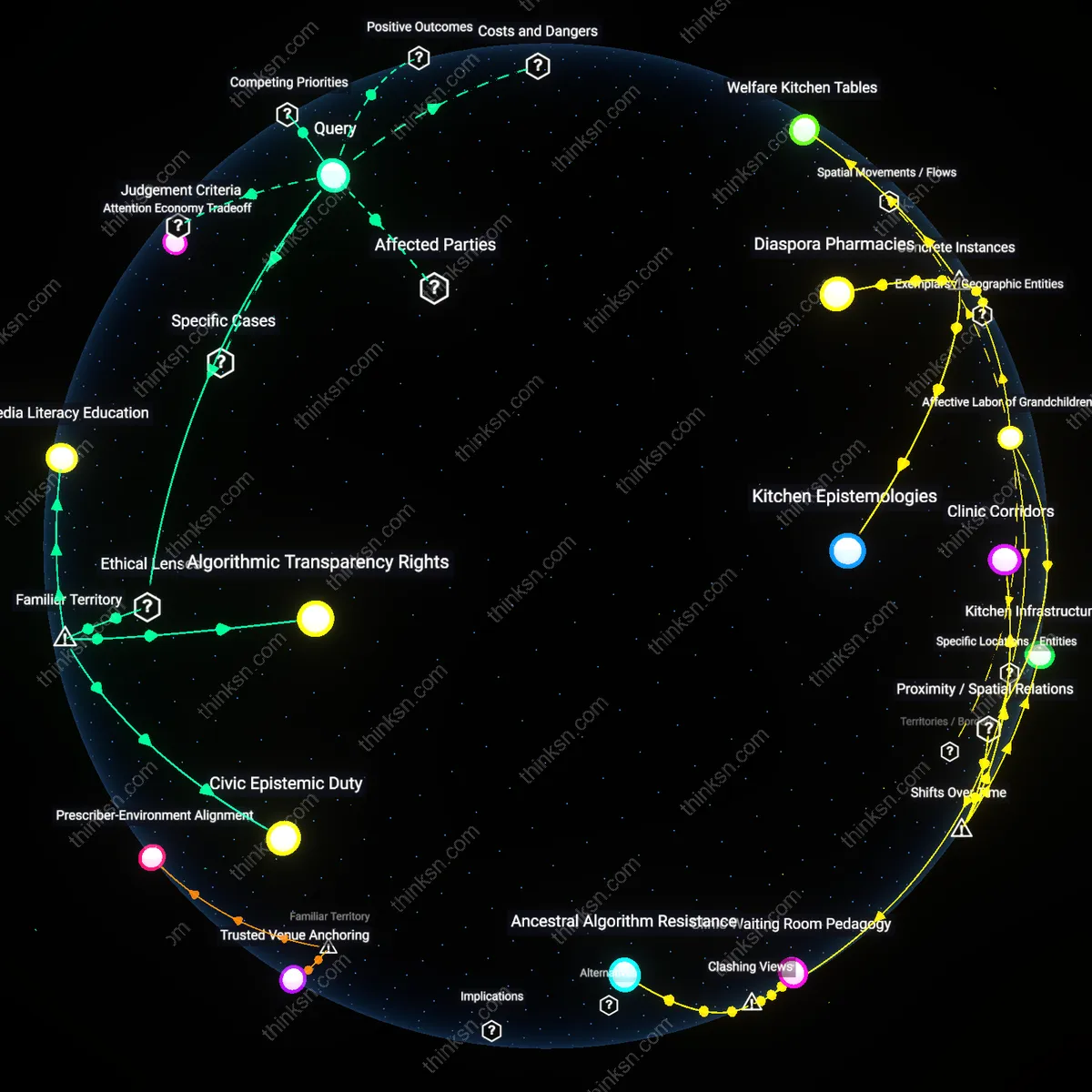

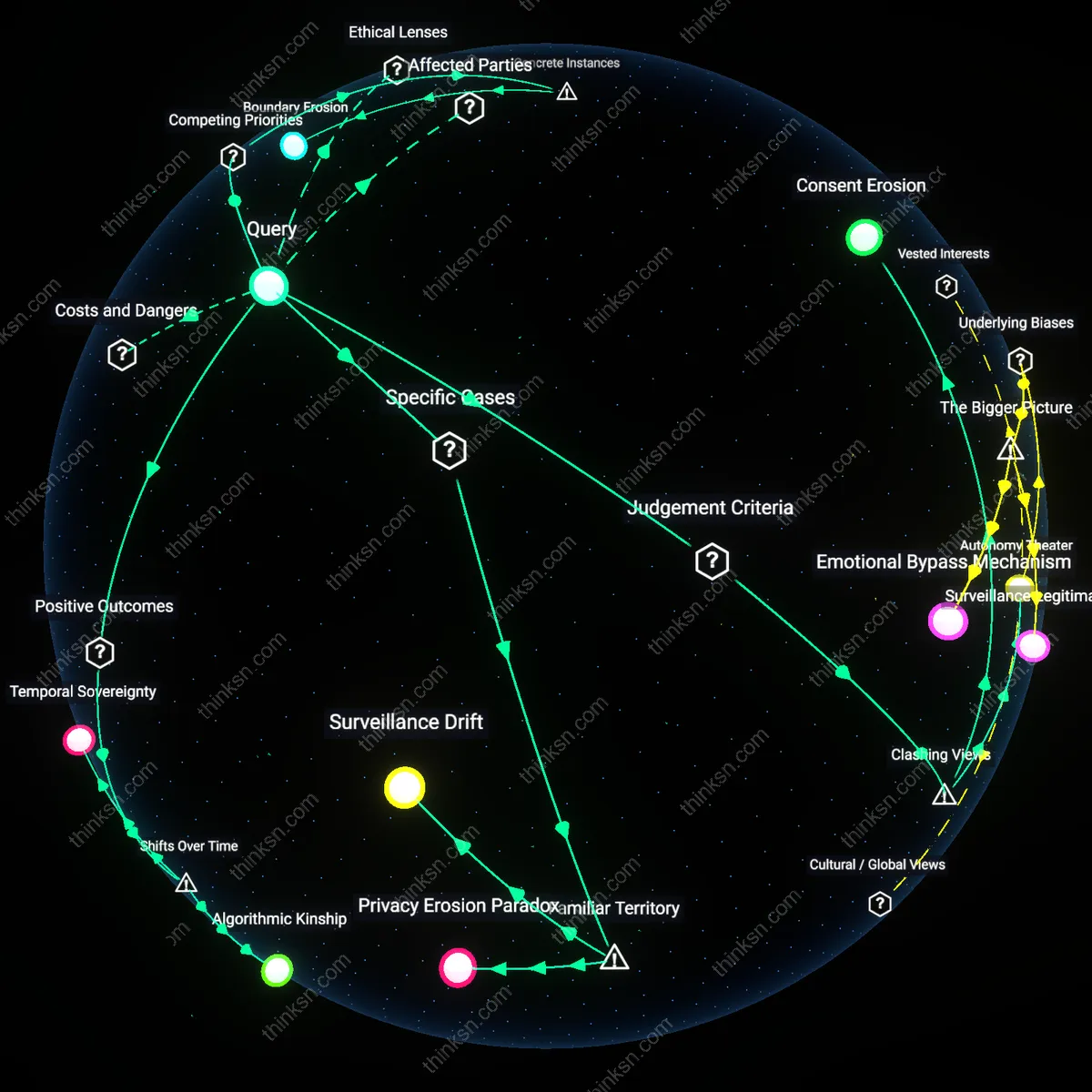

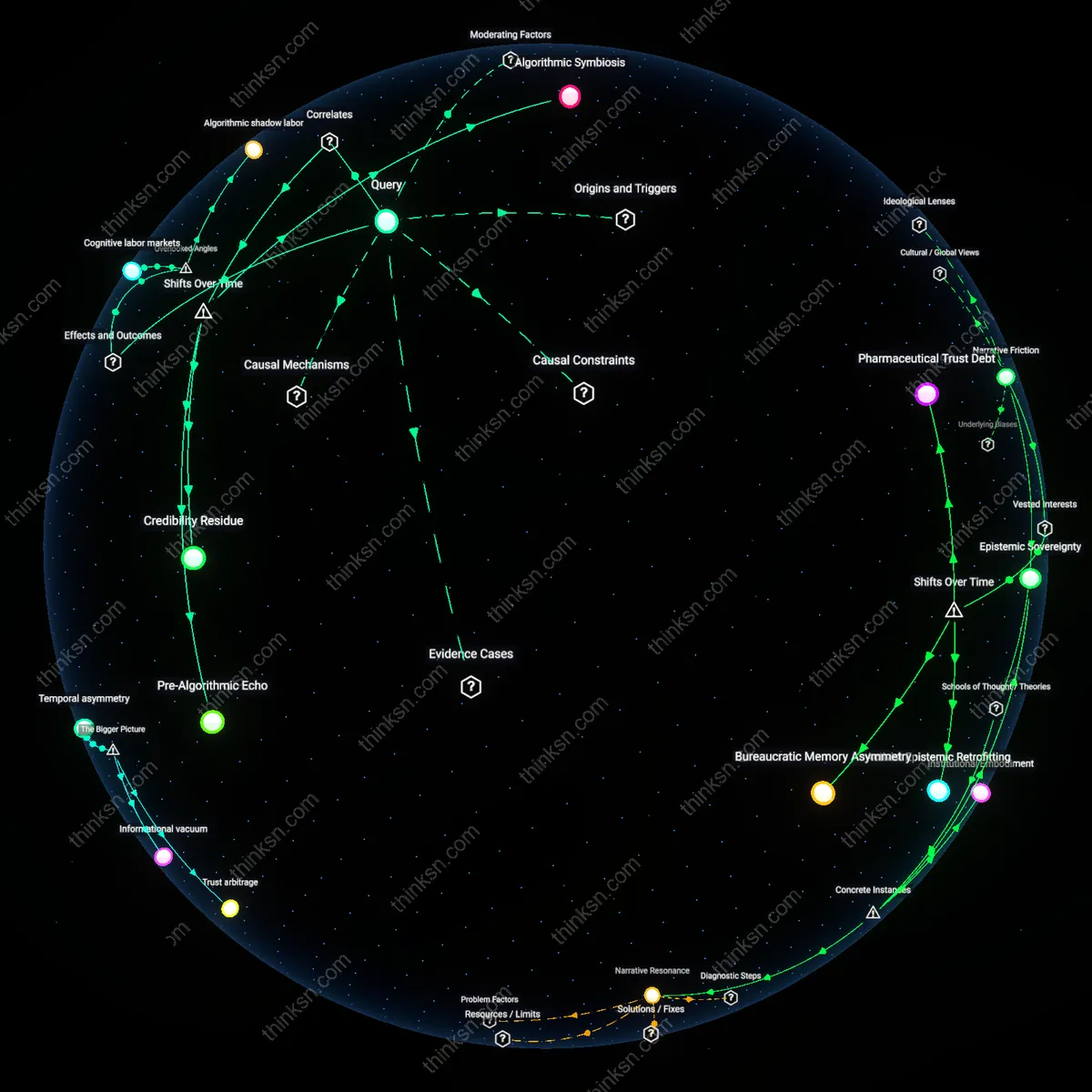

Analysis reveals 10 key thematic connections.

Key Findings

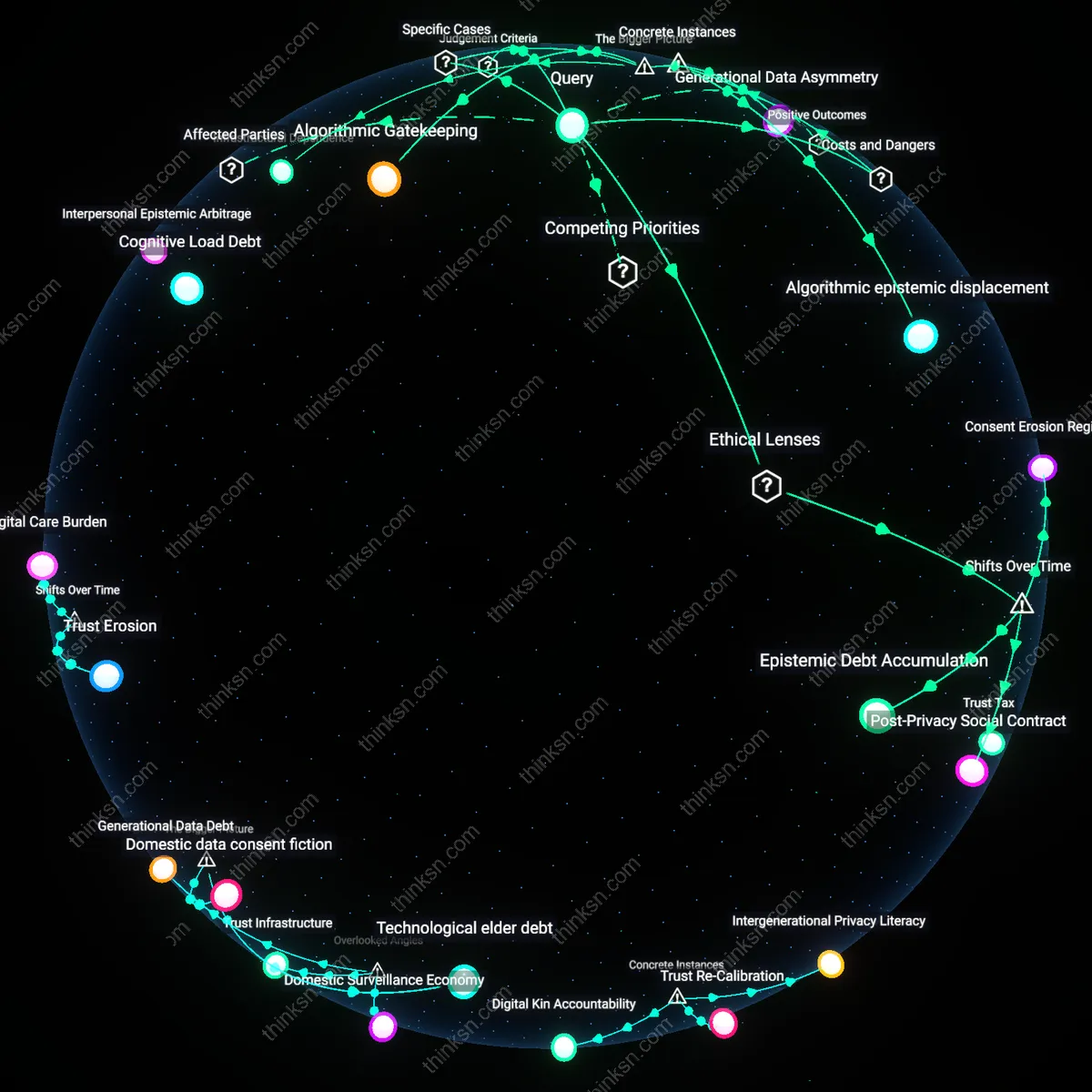

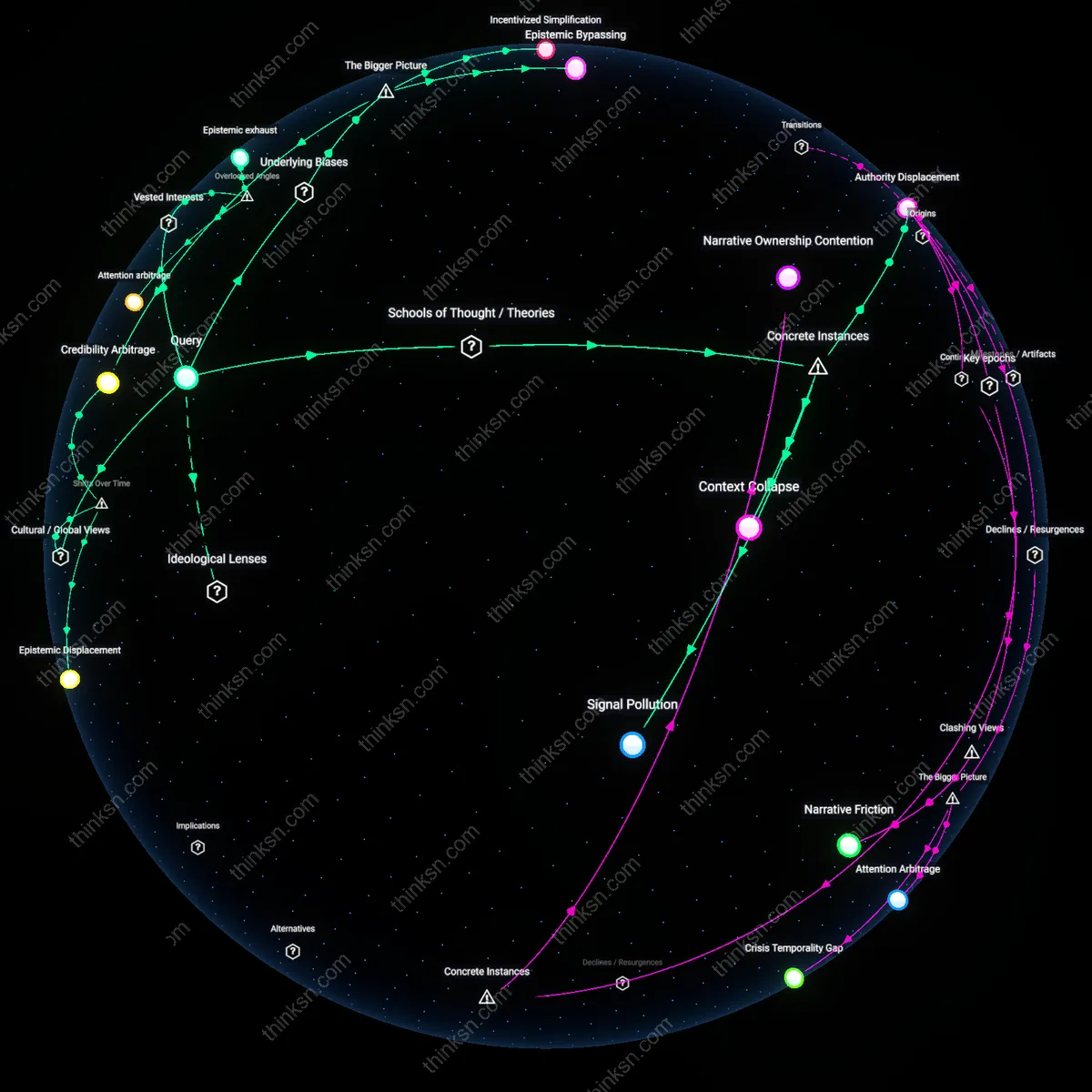

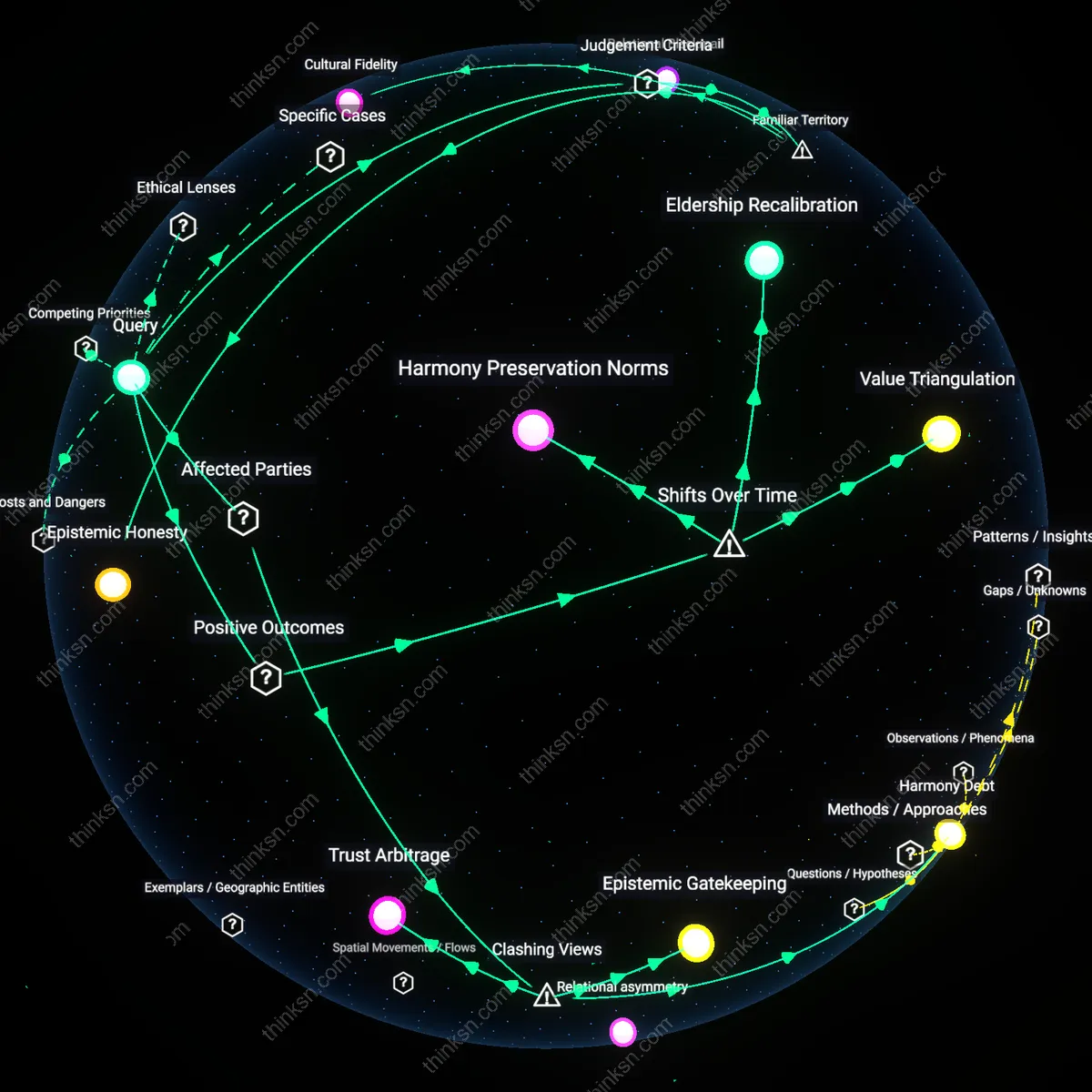

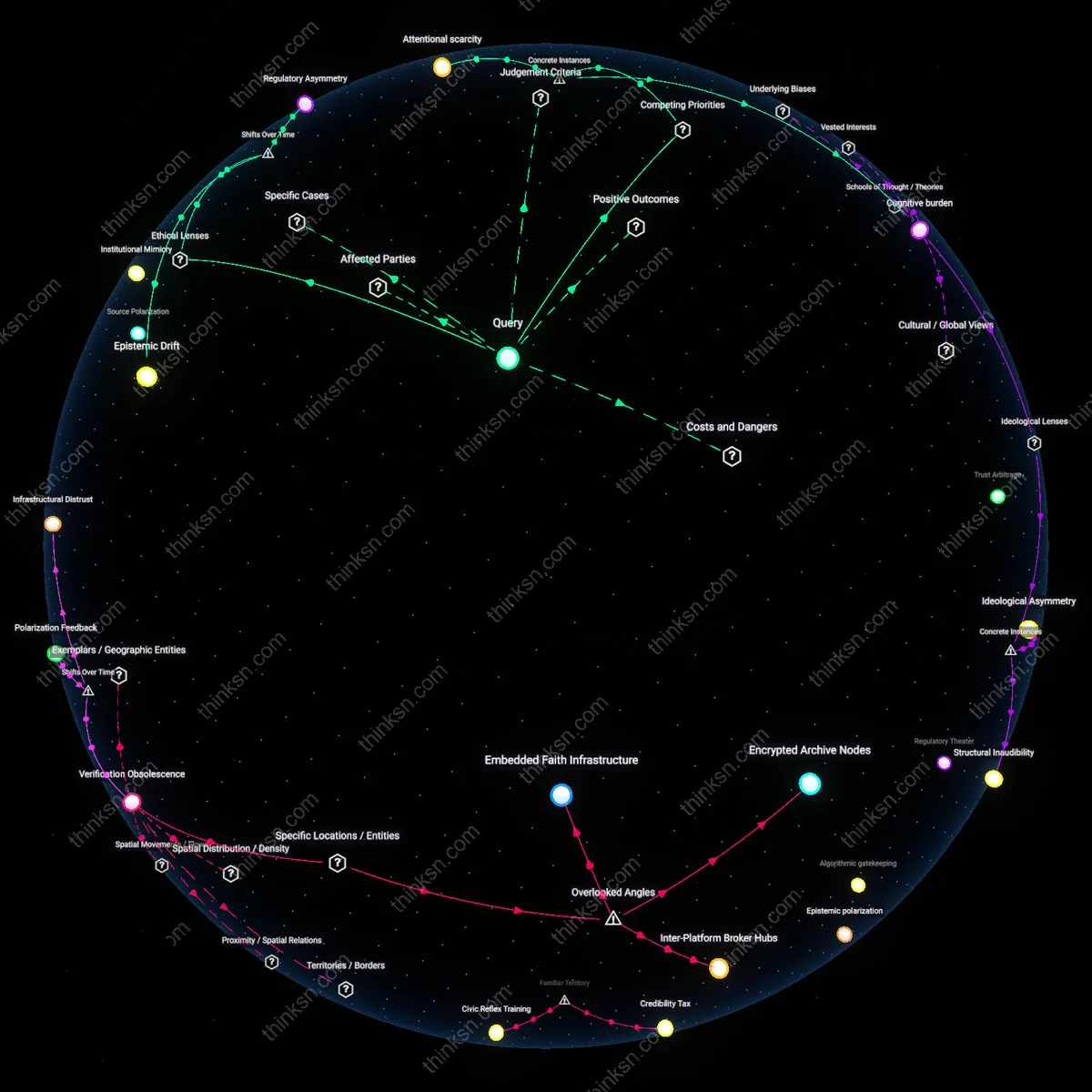

Cognitive Load Debt

Yes, the loss of trust due to Facebook's complex privacy settings causes epistemic harm that outweighs its social benefits for elderly users because the accumulated cognitive load from repeatedly navigating opaque interface decisions depletes finite attentional resources essential for evaluating information credibility. Elderly users, facing age-related declines in working memory and processing speed, are disproportionately burdened by the need to interpret nested menus and ambiguous consent prompts. This chronic cognitive taxation undermines their ability to engage in reflective judgment, making them more susceptible to misinformation. Unlike younger users who may develop heuristic shortcuts over time, older adults often lack the digital scaffolding to outsource privacy management, turning interface complexity into a persistent epistemic tax. This shifts the evaluation from a binary trade-off between privacy and connection to an analysis of how interface design silently reallocates cognitive capital, a dimension rarely accounted for in platform benefit assessments.

Interpersonal Epistemic Arbitrage

Yes, the loss of trust due to Facebook's complex privacy settings causes epistemic harm that outweighs its social benefits for elderly users because family members—often acting as de facto digital stewards—routinely override elders’ privacy preferences in the name of connectivity, distorting their information environment. In suburban American households, adult children frequently manage parents' accounts, disabling privacy protections to ensure content visibility, not realizing they are substituting their own risk tolerance for the elder’s autonomy. This creates a hidden transfer of epistemic authority, where intermediaries shape what older adults see, share, and believe under the guise of technical assistance. The overlooked mechanism is not just isolation versus engagement, but how caregiving relationships become vectors for unintended epistemic dependency, eroding elders’ capacity to form independent judgments—a dynamic absent in models that treat user agency as individual and unmediated.

Legacy Trust Infrastructure

Yes, the loss of trust due to Facebook's complex privacy settings causes epistemic harm that outweighs its social benefits for elderly users because this demographic often transfers trust from offline institutions—like churches, senior centers, or postal correspondence—to digital platforms based on surface-level familiarity, a transfer that Facebook exploits through design mimicry of official communication. When privacy breaches occur, the resulting disillusionment doesn’t just affect platform trust but corrodes their broader epistemic frameworks, leading some to question the credibility of legitimate institutions that resemble compromised digital interfaces. For instance, phishing messages styled like Facebook notifications have led elderly users to distrust real community bulletins delivered in similar visual formats. The overlooked consequence is that digital betrayal propagates backward into real-world skepticism, destabilizing the very networks meant to support them—revealing that platform integrity is entangled with off-platform epistemic stability, a dependency rarely mapped in cost-benefit analyses.

Algorithmic Epistemic Displacement

In 2021, after Facebook had altered its News Feed algorithm to prioritize 'meaningful interactions' (a change announced in 2018), users in retirement communities across Sarasota County, Florida, began receiving amplified conspiracy content about pension reforms and Social Security cancellations. This content outranked their trusted family updates because engagement-based ranking rewarded emotional reactivity over source reliability. A subsequent investigation by the Florida Department of Elder Affairs found that 41% of surveyed seniors who relied on Facebook as their primary news source had altered their financial behavior or expressed fear of government seizure of benefits based on algorithmically promoted falsehoods. The harm stemmed not from intentional misinformation but from the interaction between opaque privacy controls, which hid how data shaped content delivery, and algorithmic prioritization of retention over truth, which effectively displaced reliable knowledge with emotionally potent fabrications tailored to senior anxieties.

Consent Erosion Regime

Facebook’s shift from opt-in privacy choices in the early 2010s to opaque, mandatory data sharing by the late 2010s institutionalized a Consent Erosion Regime, in which elderly users’ diminished digital fluency prevents meaningful negotiation of terms. Under a Kantian deontological framework that demands informed, autonomous consent, their participation is therefore epistemically exploitative. What was once a voluntary social experiment is now a systemic condition in which cognitive vulnerability is structurally leveraged. The erosion was not accidental; it was produced through iterative compliance with behavioral advertising economics.

Post-Privacy Social Contract

The post-2018 recalibration of public trust after the Cambridge Analytica scandal marked a turning point: elder users, unlike younger cohorts, largely remained embedded in Facebook despite documented risks. They stayed not out of ignorance but because of a socially enforced Post-Privacy Social Contract, rooted in communitarian ethics, in which continued access to kinship networks outweighs individual epistemic autonomy. Within relational ethics, familial obligation thus becomes a moral override, as digital platforms become essential utilities for affective survival in isolated aging populations.

Epistemic Debt Accumulation

Elderly users confronted with Facebook’s 2016–2020 privacy overhauls accrued epistemic debt as successive interface changes outpaced their capacity to relearn governance mechanisms. The process was accelerated by design choices, aligned with Silicon Valley’s growth ideology, that treat user adaptation as inevitable. Analyzed through a Rawlsian justice lens, this divergence between platform velocity and elder learning curves exposes how progressive disenfranchisement is built into the temporal architecture of platform governance: trust loss operates not as a single event but as compound interest on deferred understanding.

Algorithmic Gatekeeping

Facebook's opacity in privacy settings enables third-party data harvesting, which systematically distorts the information environment elderly users rely on for civic knowledge; this occurs through targeted disinformation campaigns like those seen in the 2016 U.S. election, where Cambridge Analytica exploited granular user profiles from poorly disclosed data-sharing defaults. The mechanism is not mere confusion but the platform’s deliberate architecture that treats privacy as a secondary, buried option, thereby enabling political actors and data brokers to manipulate older demographics who are less likely to adjust settings. What makes this significant—and underappreciated—is that the epistemic harm arises not from user ignorance alone but from a systemic misalignment between platform design incentives and democratic information integrity, especially in high-stakes informational contexts.

Infrastructural Dependence

Elderly users in rural U.S. communities, such as those in Appalachia, increasingly depend on Facebook as their primary means of accessing local news, health updates, and family communication due to weakened public information infrastructures and limited broadband alternatives. The loss of trust in the platform’s privacy controls thus induces a withdrawal from digital engagement altogether, as seen in documented cases from senior centers in West Virginia where users deactivated accounts en masse after the 2018 privacy scandals. This retreat is not just personal but socially regressive, cutting off older adults from emergency alerts and telehealth coordination. The underappreciated systemic dynamic is that epistemic harm is amplified not by misinformation per se but by the collapse of substitute channels, which makes Facebook’s flawed interface a de facto public utility whose privacy failures produce cascading disconnection.

Generational Data Asymmetry

In countries like Germany, where data literacy is formally taught in schools but absent in adult education for pre-digital generations, elderly Facebook users experience disproportionate epistemic harm because privacy settings are designed with an implicit assumption of computational fluency that older adults often lack, leading to unintended sharing of personal information that undermines their autonomy. This asymmetry became evident in a 2021 consumer protection study by the German Bundesnetzagentur, which found that over 70% of users over 65 had location tracking enabled by default without awareness, exposing them to profiling and manipulation. The deeper systemic issue is that platform architecture assumes uniform user adaptability, thus transferring regulatory and cognitive burdens onto individuals least equipped to manage them—revealing how intergenerational digital divides are not just access gaps but active engines of epistemic injustice.