Why Do Expert Opinions Backfire on Social Media?

Analysis reveals 11 key thematic connections.

Key Findings

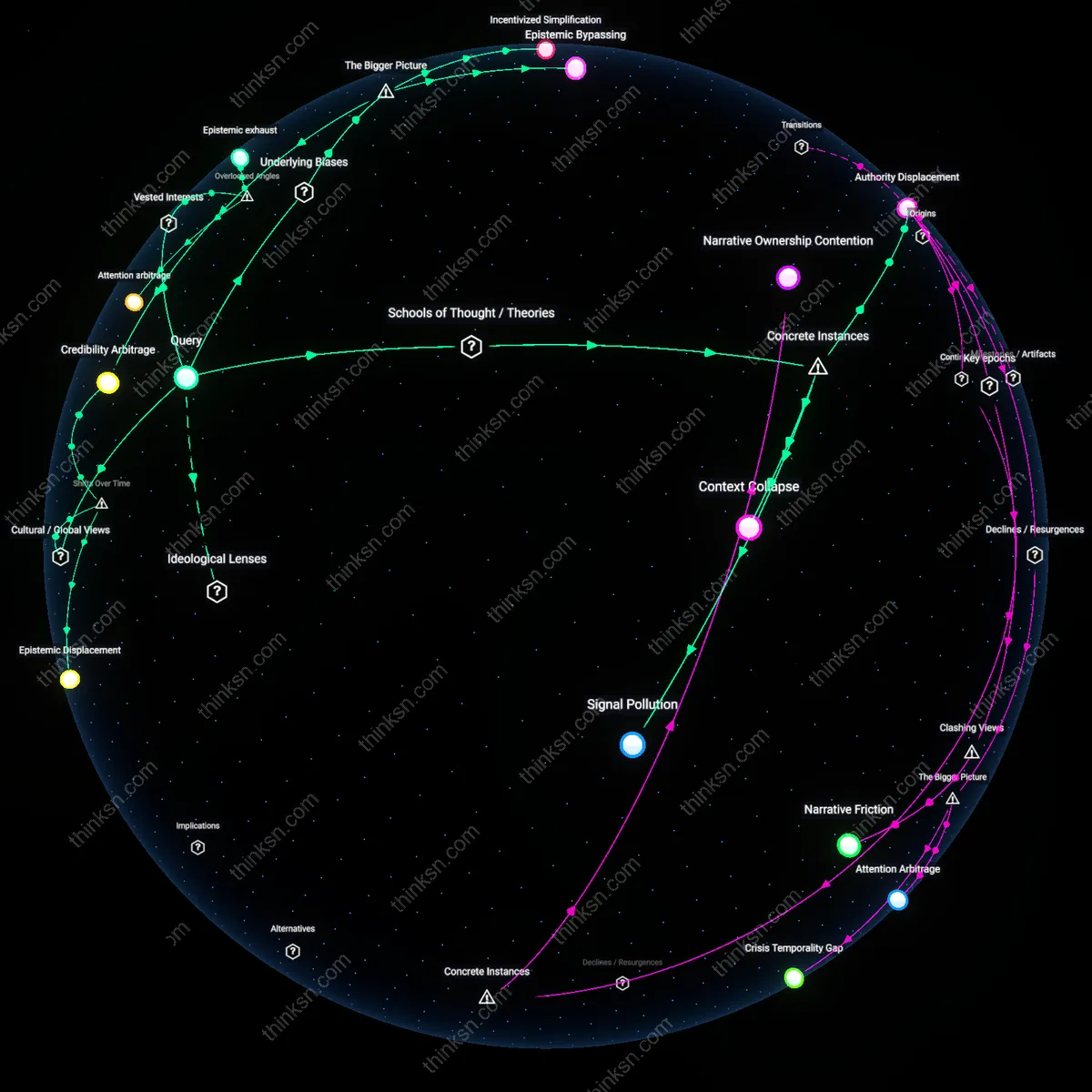

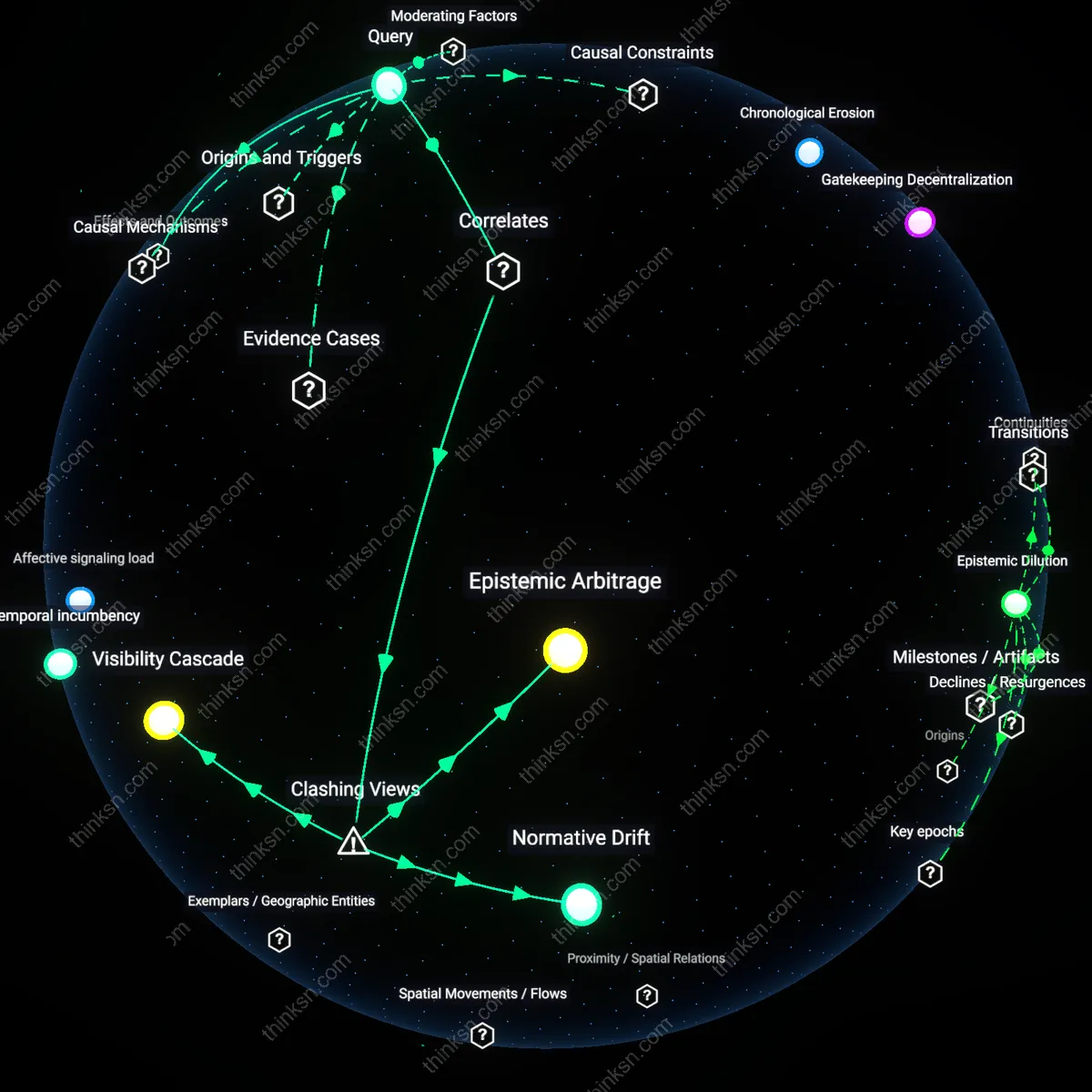

Authority Displacement

Expert commentary on social media can reduce data credibility when peer-recognized experts publicly challenge established institutional authorities on informal platforms. In early 2020, for example, CDC epidemiologists’ pandemic data lost public traction after commentators such as the epidemiologist Dr. Eric Feigl-Ding reinterpreted the same statistics on Twitter, framing them as evidence of uncontrolled spread. The effect was to transfer epistemic authority from institutional bodies to individuals, exposing a vulnerability in institutional credibility when narrative velocity exceeds bureaucratic communication speed. This shift reveals that credibility no longer resides solely in data or institutions but also in the agility of personal brands to narrativize risk in real time.

Signal Pollution

In the 2016 Zika virus outbreak, the WHO's epidemiological reports were drowned out by social media discourse in which qualified tropical medicine specialists debated transmission risks using conflicting models: one group emphasized mosquito vector dominance, while another warned of sustained sexual transmission. As a result, public health messaging appeared irresolute despite consistent data. The episode illustrates how a plurality of expert opinion in open digital forums creates interpretive noise that mimics data unreliability even when the raw figures are stable. The mechanism is not misinformation but an overproduction of legitimate interpretation, which non-specialist audiences cannot distinguish from confusion.

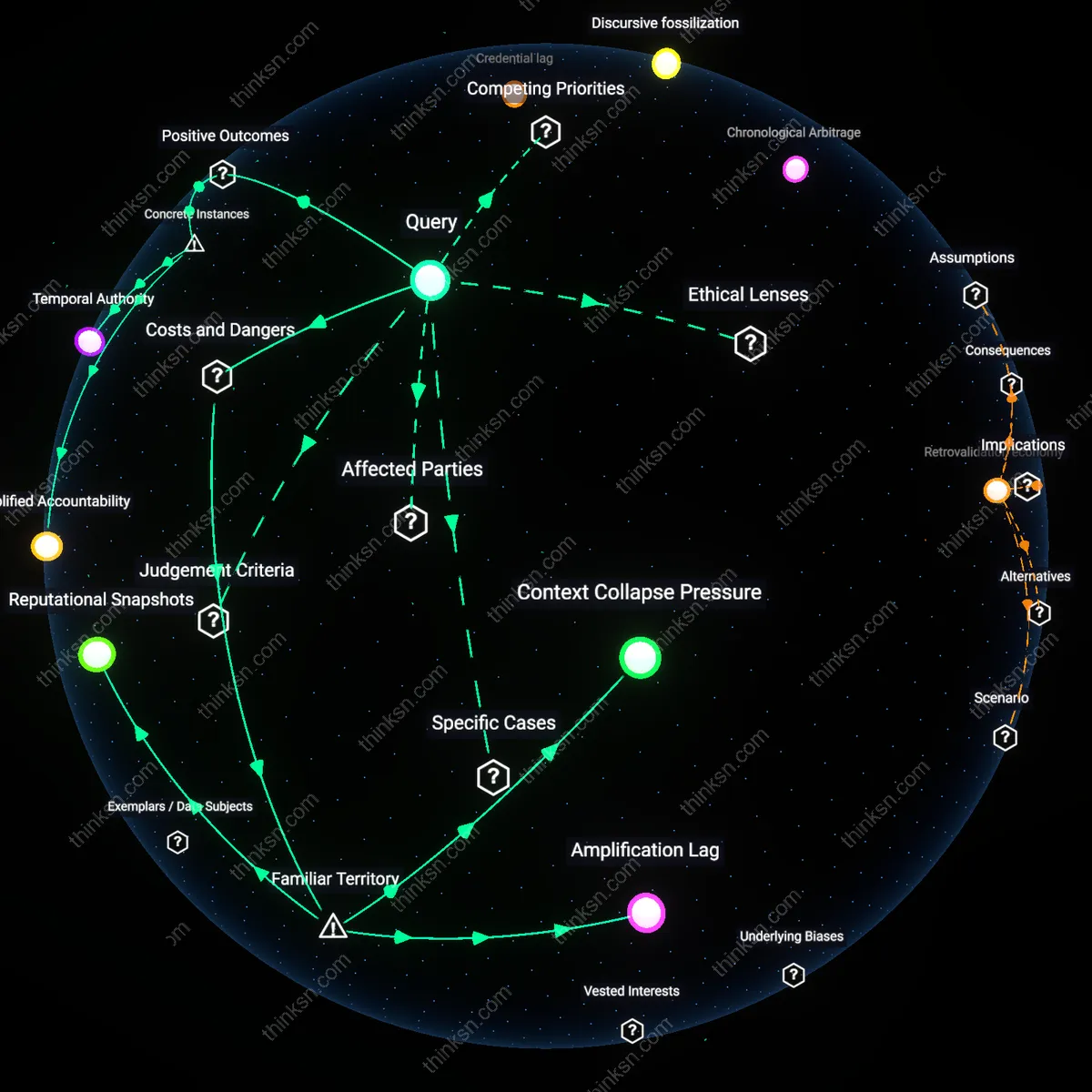

Context Collapse

During the 2022 IPCC climate report rollout, climatologist Michael E. Mann’s Twitter commentary explaining probabilistic sea-level projections was repurposed in right-wing media memes that juxtaposed his statements with unrelated flood footage. This eroded the original data’s perceived rigor not through falsification but through the destruction of analytical context, which occurs when expert narrative detaches from its source constraints in decentralized networks. The case demonstrates how social media collapses temporal, evidential, and rhetorical contexts, turning qualified interpretation into free-floating soundbites that undermine the precision on which credibility depends.

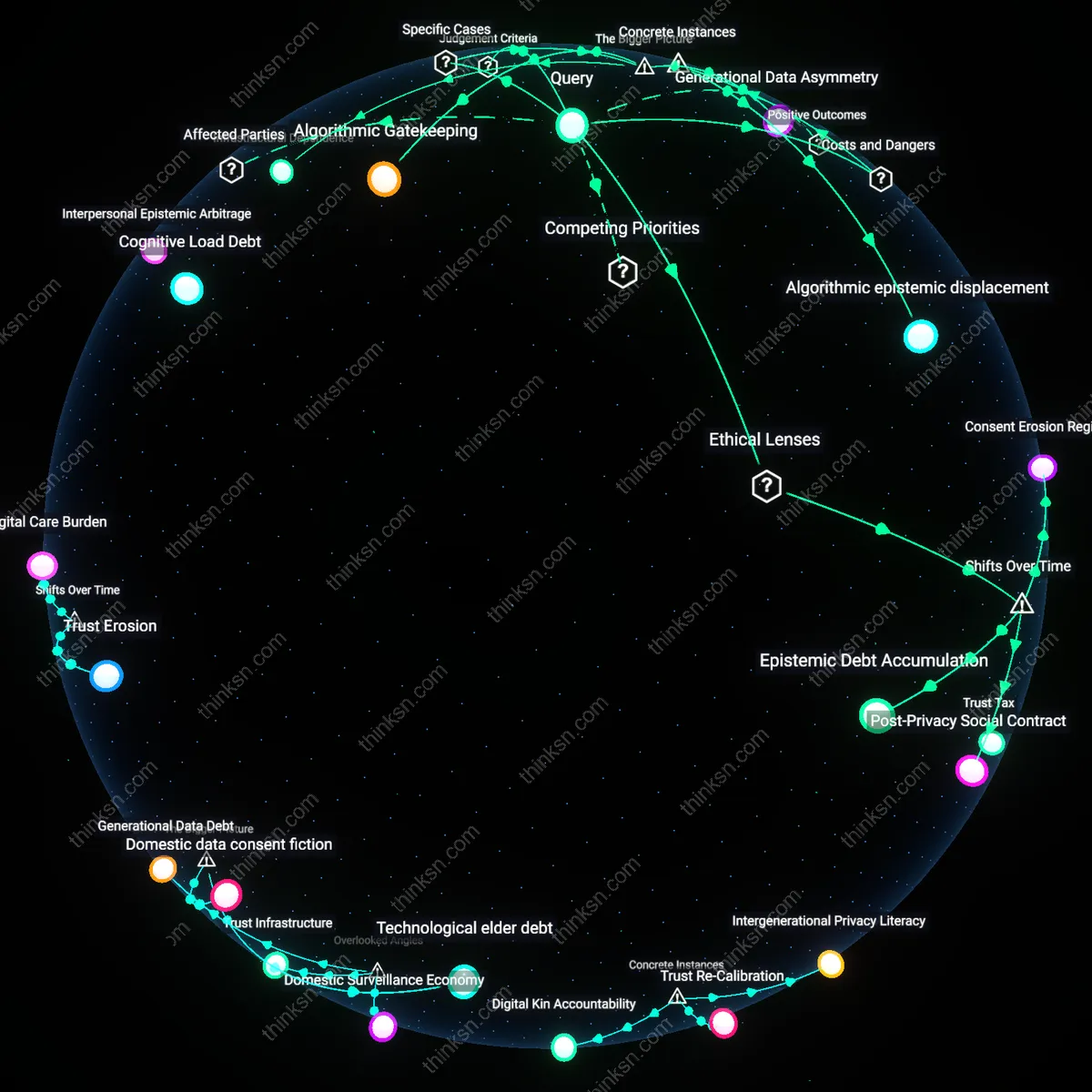

Epistemic Displacement

Expert commentary on social media reduces data credibility in collectivist cultures because, after the 2008 financial crisis, algorithmic amplification disrupted traditional knowledge hierarchies in which elders or religious scholars, such as Indonesia’s kyai or West Africa’s marabouts, held interpretive authority. This shift led publics to suspect that Western-style experts present data as neutral when it actually serves neoliberal agendas. It reveals how digital platforms have displaced community-embedded epistemologies with transient, credential-based claims.

Temporal Legibility Gap

In East Asian contexts, particularly after the rise of Weibo and WeChat in the 2010s, expert commentary eroded data trust because rapid dissemination bypassed Confucian-aligned validation rituals, under which knowledge required deliberative consensus among scholar-officials. Audiences came to perceive even qualified experts as skipping socially expected phases of humility and review, so their data appeared prematurely asserted and culturally illegible. The result exposes a rupture between digital temporality and long-standing epistemic sequencing.

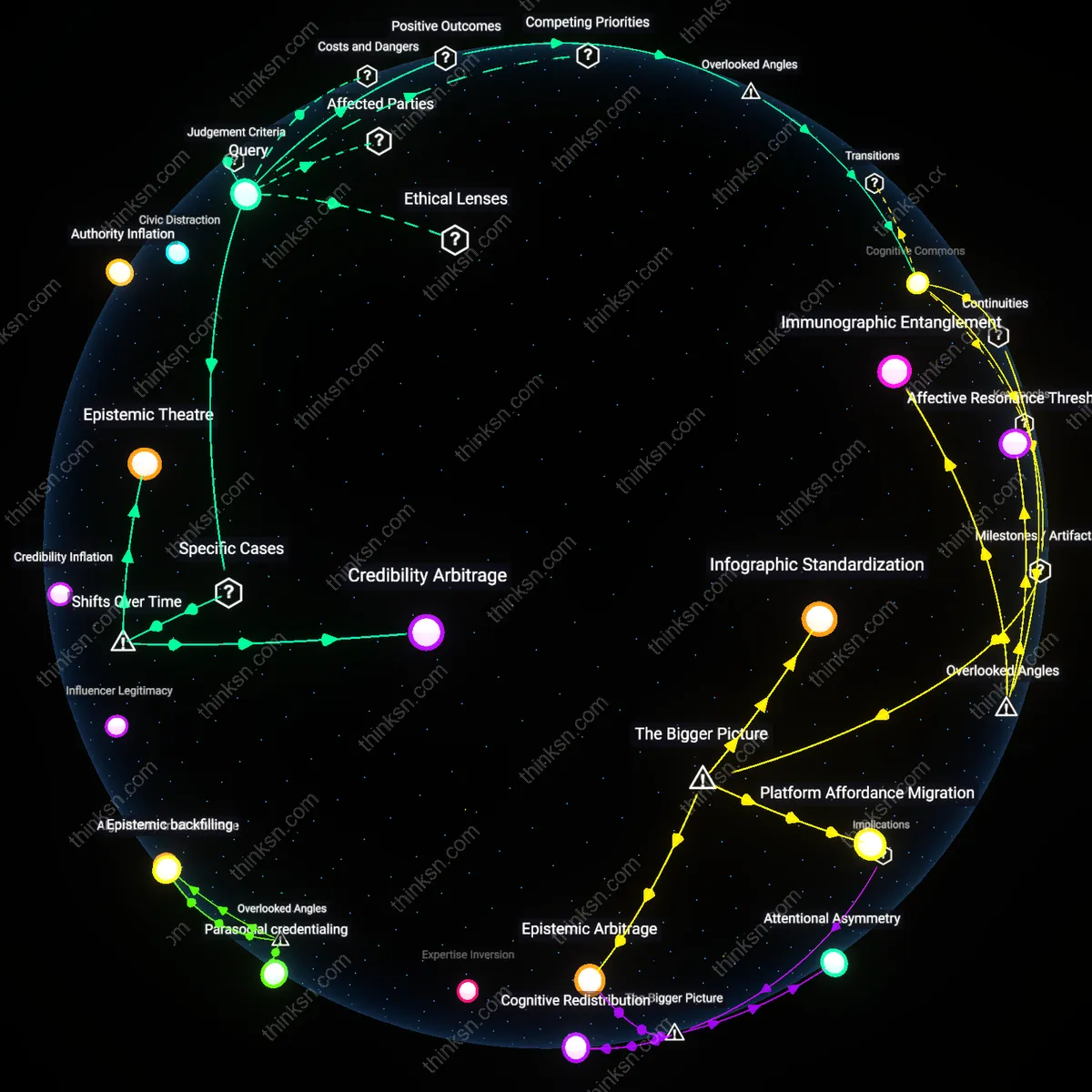

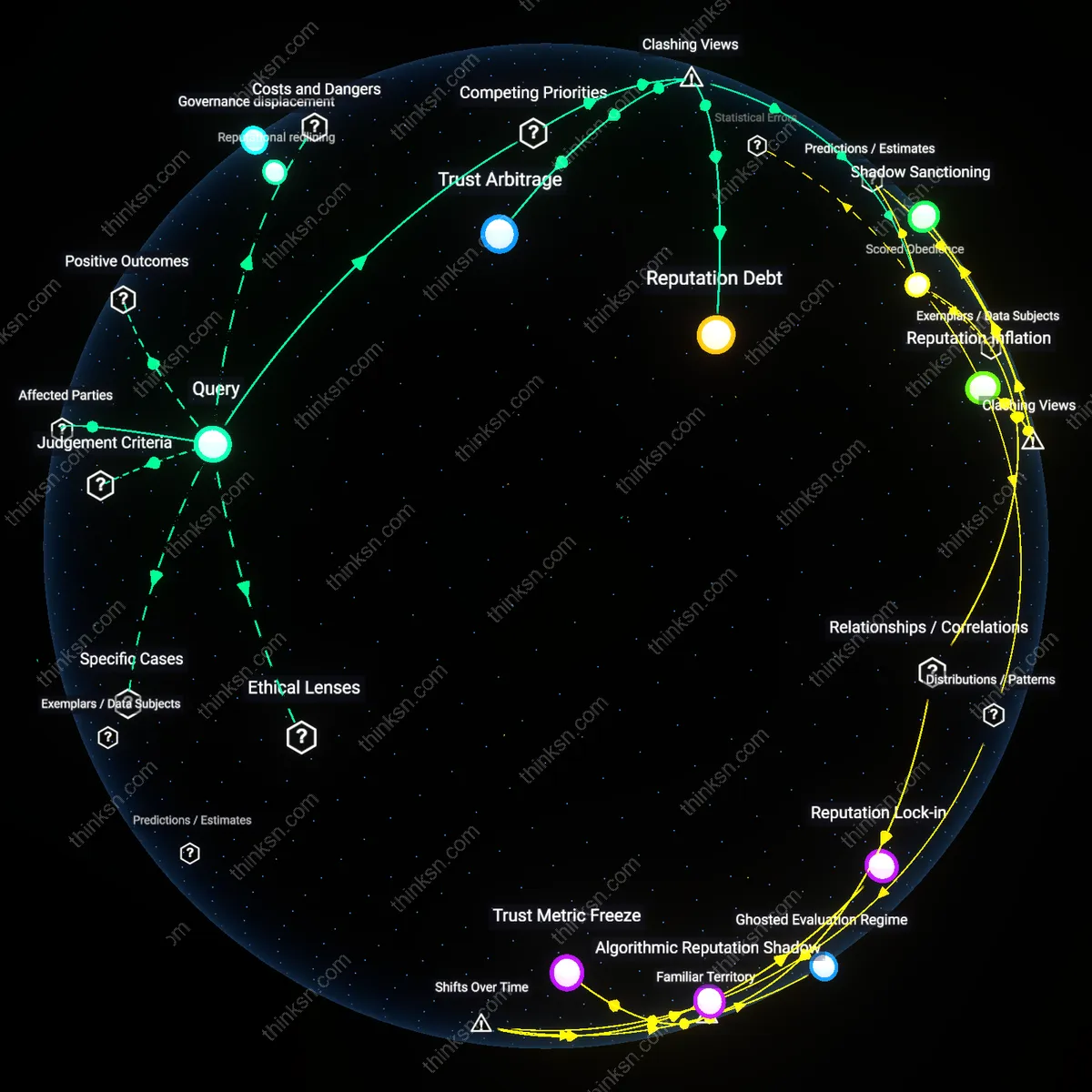

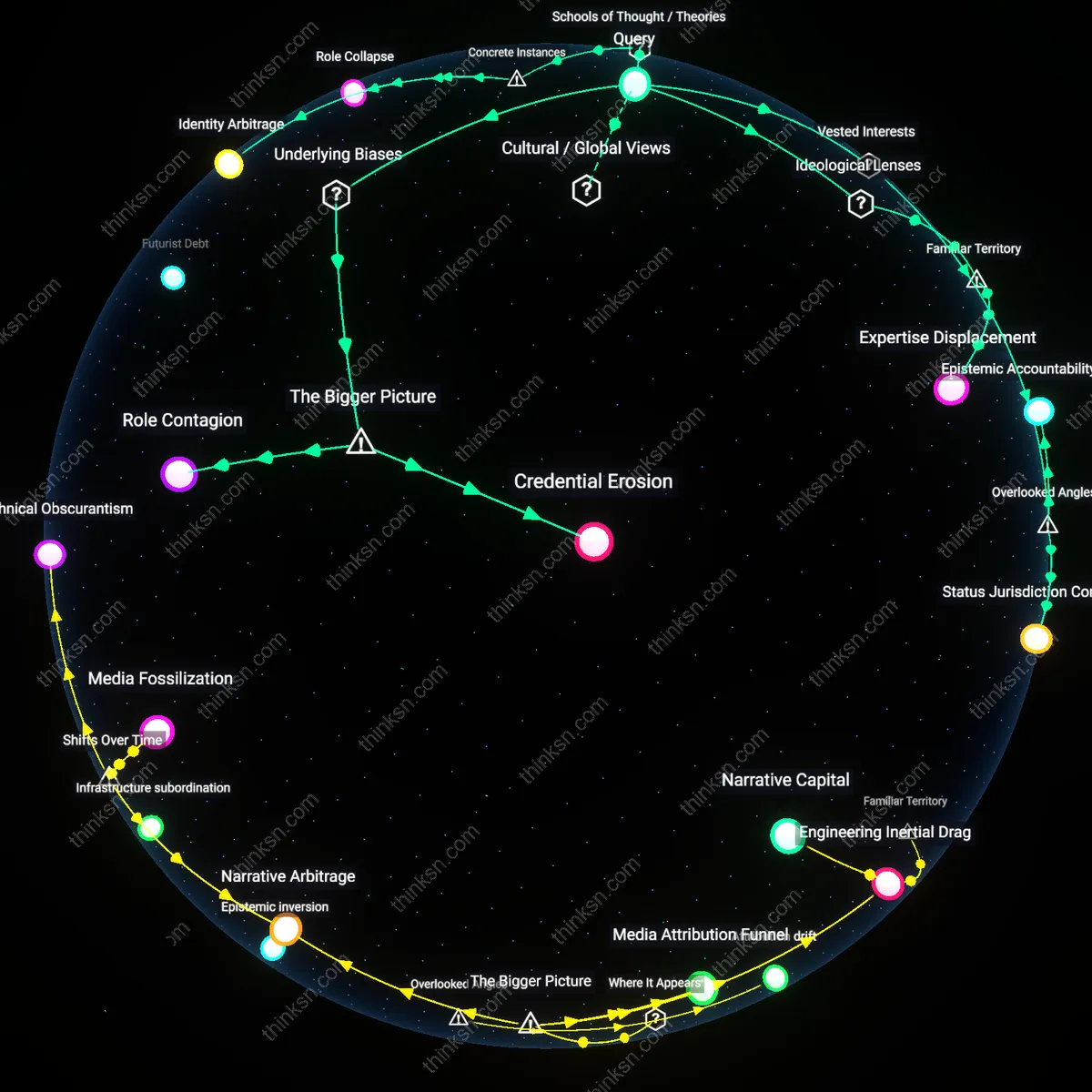

Credibility Arbitrage

Since the 2016 global disinformation turn, Western publics have begun treating expert social media commentary as credibility arbitrage, in which specialists use their credentials to inflate the weight of contested data in real-time discourse. The practice was unseen in pre-social-media eras, when peer-reviewed publication created a buffer between expertise and public claim-making. It is particularly visible in climate and vaccine debates on Twitter/X, where experts' immediacy mimics punditry, revealing how temporal compression has monetized or politicized the very performance of expertise.

Incentivized Simplification

Expert commentary on social media reduces data credibility because platforms reward engagement over accuracy, compelling experts to distort or oversimplify findings to compete for attention. Algorithmic curation on sites like Twitter or YouTube prioritizes emotionally resonant or controversial takes, pressuring credentialed individuals to align their communication with viral norms rather than methodological rigor. This dynamic is driven by platform owners and advertisers whose revenue depends on user retention, not informational fidelity, making distortion an enforced performance. The non-obvious consequence is that expertise becomes performative, where the expert’s survival in the visibility economy depends on sacrificing nuance, thereby eroding trust in the underlying data itself.

Epistemic Bypassing

Expert commentary on social media undermines data credibility by relocating epistemic authority from institutional processes to individual personalities, enabling partisan actors to selectively amplify or discredit experts based on ideological alignment. When credentialed voices enter decentralized networks, their statements are detached from peer review and embedded scientific contexts, making them vulnerable to co-option by communities invested in specific narratives—such as anti-vaccine groups citing rogue epidemiologists. This shift is enabled by the collapse of gatekeeping institutions (e.g., journals, universities) as arbiters of credibility, allowing political operatives, influencers, and algorithmic aggregators to reframe expertise as opinion. The underappreciated outcome is that data inherits the polarized fate of the commentator, not the strength of its evidence base.

Attention Arbitrage

Expert commentary on social media reduces data credibility when vested corporate actors exploit timing and platform algorithms to flood discussions with supportive yet tangential expert voices during moments of public uncertainty. These actors deploy qualified but niche specialists to emphasize minor uncertainties in robust datasets, not to correct misinformation but to create an artificial impression of scientific contestation—particularly observed in climate and pharmaceutical discourse on platforms like X and Facebook. This tactic works not by discrediting the data directly but by shifting attention toward procedural minutiae, which platforms then amplify as engagement magnets, subtly eroding trust in consensus without overt denial. What is overlooked is that credibility is not undermined by the message’s content but by the strategic surplus of expert-like attention, which restructures public epistemology around distraction rather than dissent.

Epistemic Exhaust

Activist networks, despite opposing corporate influence, inadvertently reduce data credibility by demanding that expert commentators on social media continuously validate their moral framing, forcing experts to append ethical imperatives to statistical findings even when such extensions exceed the data’s scope. For example, public health researchers amplifying pandemic risk data on Instagram are pressured by aligned advocacy groups to link statistics explicitly to policy demands, merging empirical claims with calls for action that, when contested, retroactively taint the data’s perceived neutrality. The mechanism operates through audience expectations shaped by the repeated fusion of data and directive in activist discourse, where the very act of expert commentary comes to be read as political performance. The overlooked dynamic is that repeated moralization of data through well-intentioned expert advocacy generates epistemic fatigue: a dismissal not of facts, but of facts as vehicles for prescribed behavior.

Credential Shadowing

Government-affiliated experts commenting on social media trigger a reduction in data credibility among distrustful publics not because of the content they share, but because their affiliations activate latent skepticism toward institutional knowledge systems. This is particularly so in marginalized communities with histories of state misinformation, such as Indigenous populations in North America or Black communities in urban U.S. centers. These audiences interpret the mere presence of credentialed individuals as symbolic stand-ins for larger bureaucratic regimes, projecting past harms onto current statements regardless of data validity, a dynamic visible in resistance to federally backed vaccination data on TikTok. The mechanism functions through associative memory rather than logical evaluation, where the credential becomes a shadow proxy for institutional power. This reveals the overlooked truth that expertise in visible form can reactivate historical epistemic violence, making data appear less credible not due to its merits but due to its bearer’s institutional silhouette.