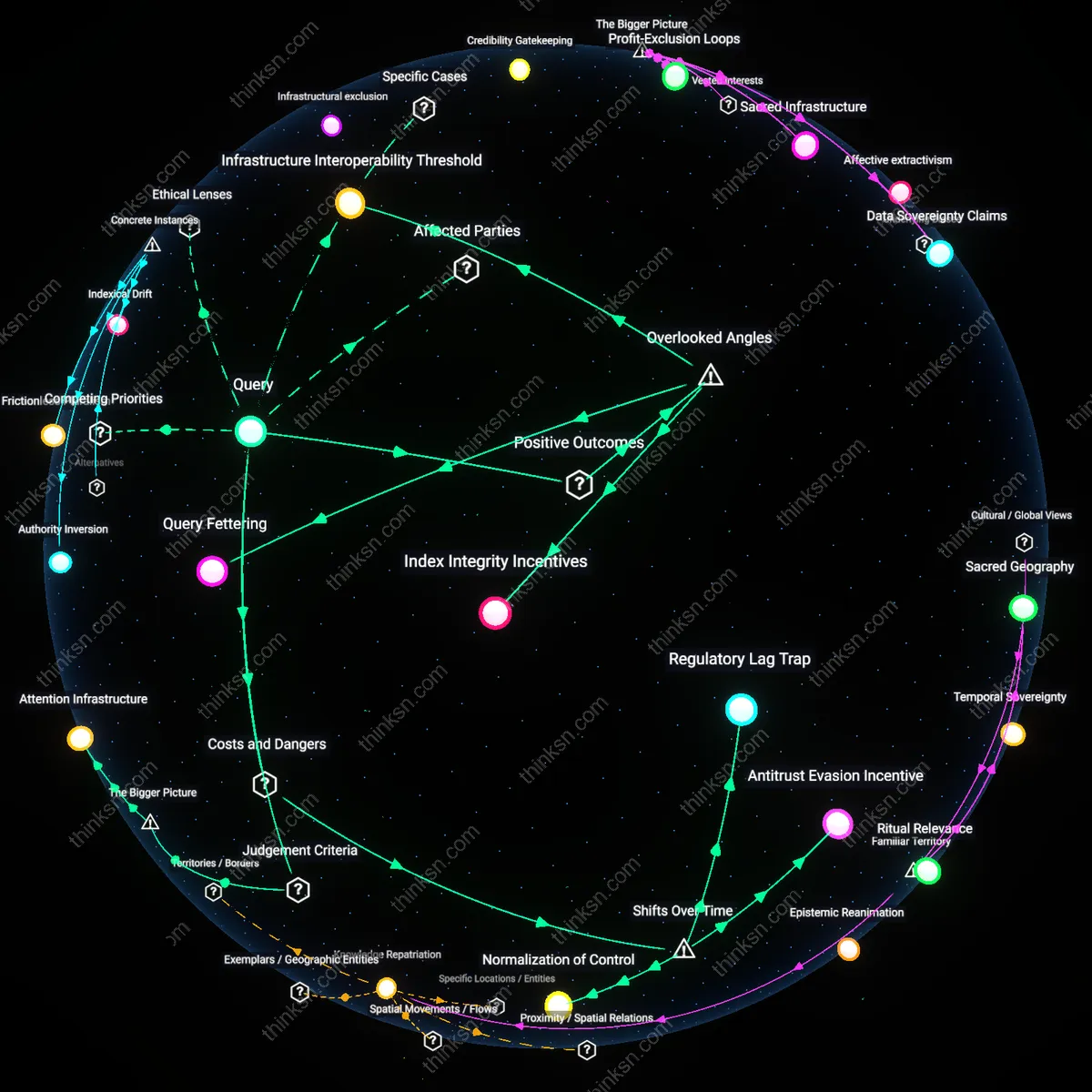

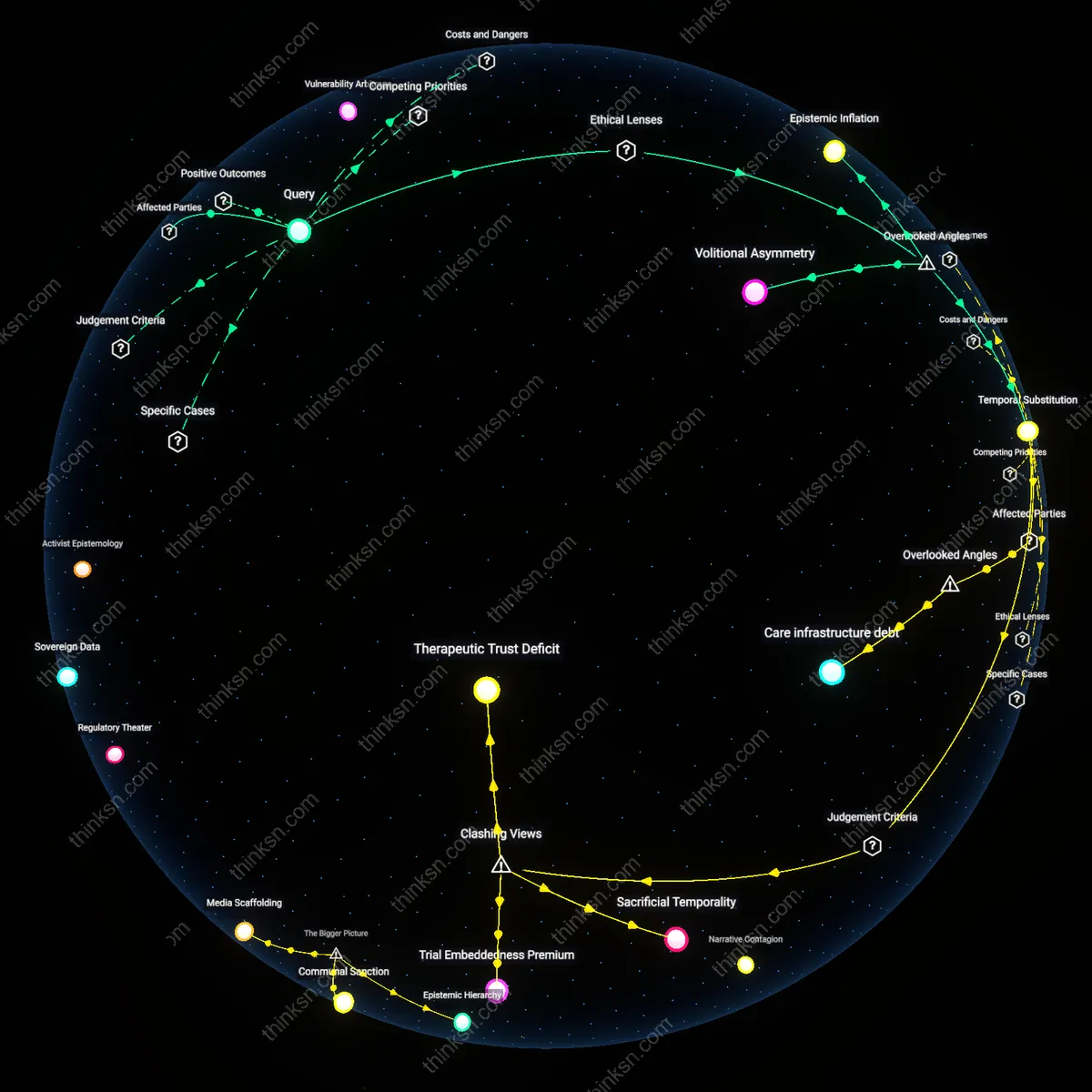

Do Algorithms Amplify Misinformation More Than User Intent?

Analysis reveals 6 key thematic connections.

Key Findings

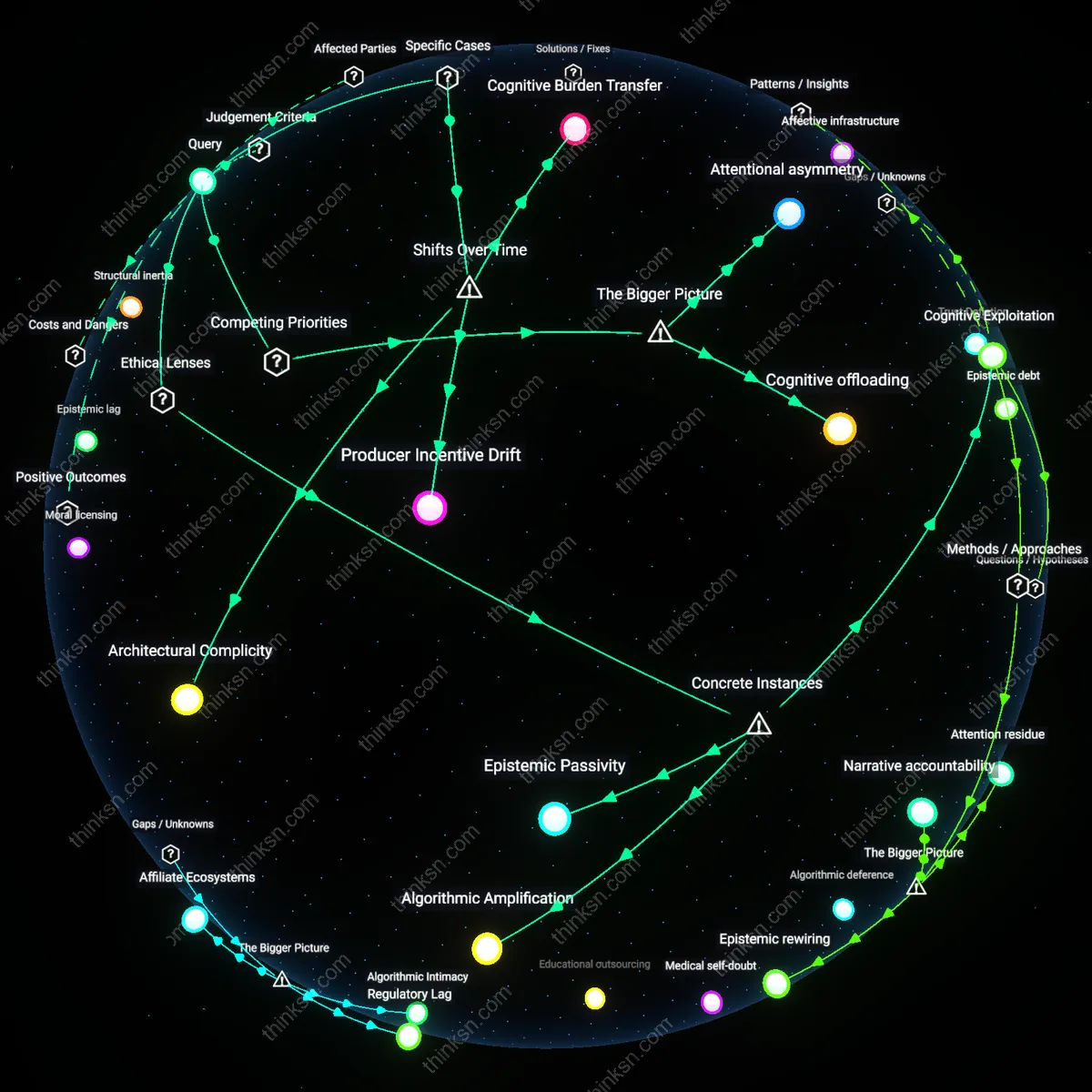

Cognitive labor markets

Users' motivated reasoning plays a larger role than platform algorithms in spreading misinformation because people in low-resource information environments actively repurpose ambiguous content as symbolic capital in social bargaining. In communities where trust in institutions has eroded, such as rural vaccine-hesitant networks in the U.S. Midwest, users selectively share misinformation not because an algorithm exposed them to it but to assert epistemic autonomy and strengthen communal identity, treating disputed claims as tools for negotiating status. Misinformation thus functions as a form of cognitive labor: individuals invest effort in curating and validating alternative narratives to fulfill social roles, a mechanism that is overlooked when analysis focuses on algorithmic amplification. The non-obvious insight is that misinformation spreads through user-driven economies of credibility, not only through passive consumption or recommendation systems.

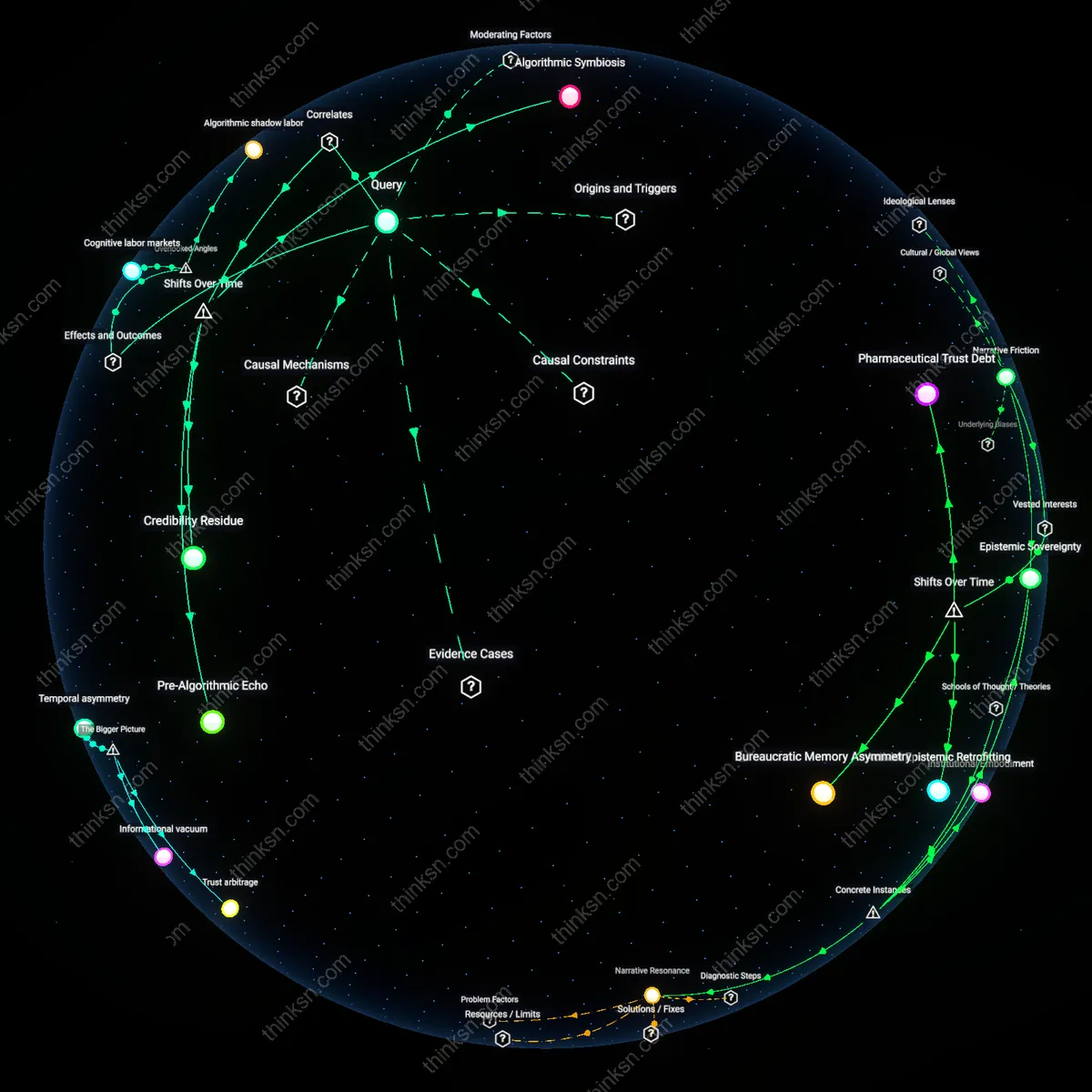

Algorithmic shadow labor

Platform algorithms play a larger role than users' motivated reasoning in spreading misinformation because algorithmic curation invisibly shapes the temporal structure of attention, making emotional or divisive content recurrently salient even to users with no preexisting bias. On platforms like YouTube, optimizing for watch time creates feedback loops in which users, regardless of intent, are incrementally funneled toward extreme content: not because they seek it, but because each small viewing choice feeds the next round of recommendations, so that micro-decisions compound into macro-level risks through infrastructural inertia. What is underappreciated is that algorithms perform 'shadow labor' by pre-selecting the cognitive menu, defining the boundaries of motivated reasoning before it activates. This reframes user agency as secondary to the computational choreography of exposure cycles.
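The funneling dynamic can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not a model of any real platform: the extremity scale, the `predicted_watch_time` function, and the rule that the recommender only considers items close to what the user last watched are all hypothetical. The simulated user has no preference of their own and simply watches whatever is recommended.

```python
LEVELS = 10  # hypothetical extremity scale: 0 (mild) to 10 (most extreme)


def predicted_watch_time(level: int) -> float:
    """Assumed engagement model: more extreme items hold attention longer."""
    return 1.0 + 0.5 * level


def recommend(position: int, window: int = 1) -> int:
    """Among items near the user's last watch, pick the one with the
    highest predicted watch time."""
    low = max(0, position - window)
    high = min(LEVELS, position + window)
    return max(range(low, high + 1), key=predicted_watch_time)


def simulate(steps: int, start: int = 0) -> list[int]:
    """Follow a user with no bias who accepts every recommendation."""
    history = [start]
    for _ in range(steps):
        history.append(recommend(history[-1]))
    return history


if __name__ == "__main__":
    print(simulate(15))
```

No single step moves the user more than one level, yet the trajectory ends at the most extreme level and stays there. Under these assumptions the drift comes entirely from the optimization target, since the simulated user contributes no intent.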

Affective infrastructure

Users' motivated reasoning plays a larger role than platform algorithms in spreading misinformation because emotional scripts embedded in local cultural practices, such as distrust of urban elites in Brazilian favelas, prime users to reinterpret algorithmically neutral content as confirmation of systemic betrayal. In these settings, misinformation spreads fastest through voice-note chains on WhatsApp not because of any ranking logic but because affective norms reward expressive loyalty over factual accuracy, turning private messaging into a ritualized space of solidarity. The overlooked dynamic is that sociotechnical systems rest on affective infrastructures, the habitual emotional cues that regulate sharing, which makes motivation a structuring force operating beneath algorithmic visibility. This shifts the focus from content amplification to emotional resonance as the hidden engine of diffusion.

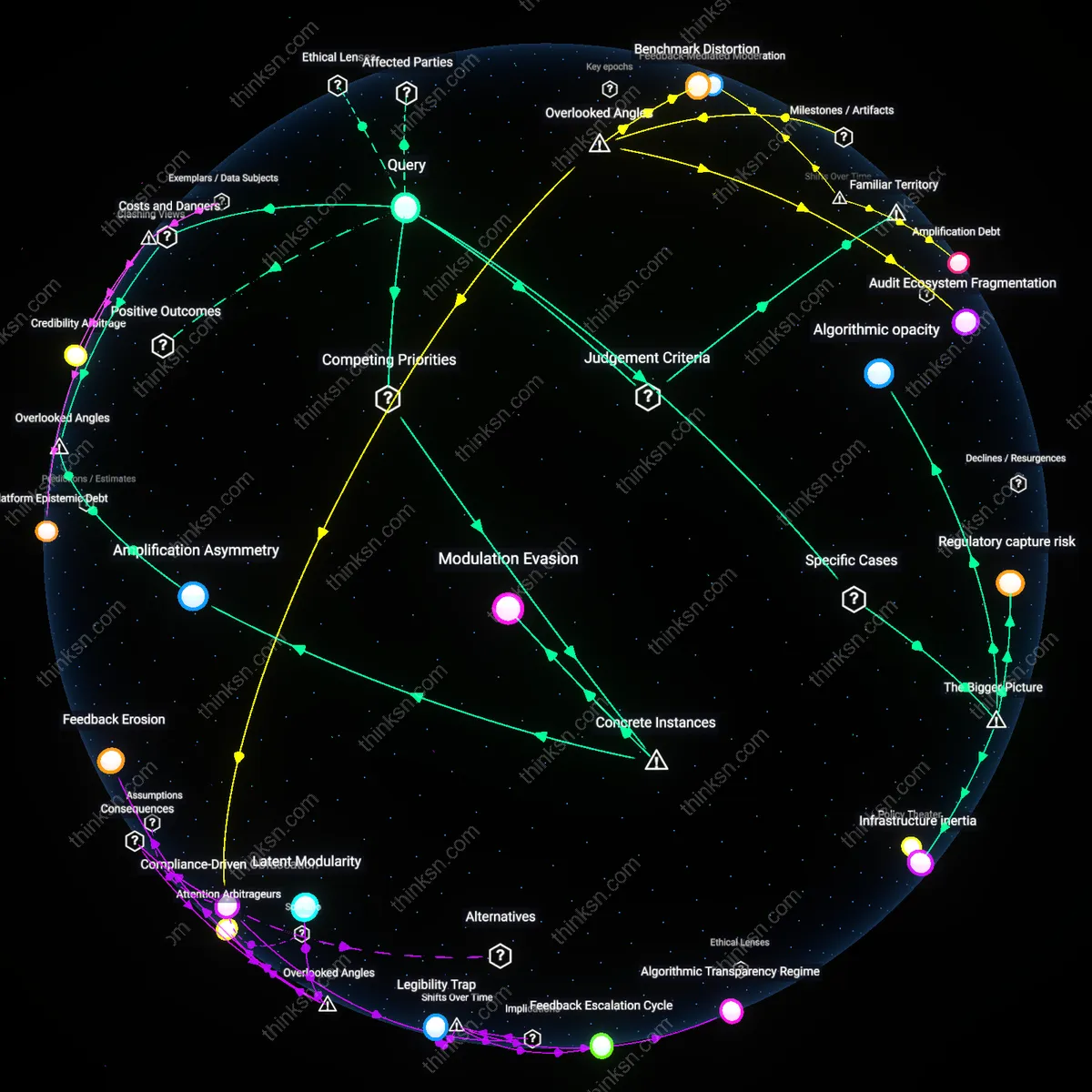

Algorithmic Symbiosis

Users' motivated reasoning has become a structurally embedded resource for platform algorithms, which turn selective engagement into scalable distribution. In the post-2016 social media environment, machine learning systems began treating user affect, particularly outrage and identity-affirming reactions, as a training signal, so that reasoning biased toward emotional resonance became a direct input to recommendation logic, especially on platforms like Facebook and YouTube. This feedback loop, operationalized through metric-driven inference engines, converts users' apophenic pattern detection (the tendency to see meaningful connections in unrelated information) into a data commodity and renders individual cognition invisible within automated amplification infrastructures. The non-obvious consequence is that what appears to be user-driven spread is often an epiphenomenon of algorithmic learning routines that have retroactively validated motivated reasoning as 'engagement gold'.
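The loop in which engagement becomes a training signal can be sketched in a few lines. This is an illustrative toy, not a description of any platform's actual system: the item names, the engagement rates, and the update rule in `run_loop` are all hypothetical, and the calculation uses expected values rather than random draws so the result is deterministic.

```python
def run_loop(rounds: int, engage_rate: dict[str, float]) -> dict[str, float]:
    """Return each item's share of exposure after `rounds` of learning.

    Assumed rule: exposure is proportional to a learned score, and the
    score grows by the expected engagement that exposure produces.
    """
    scores = {item: 1.0 for item in engage_rate}  # start with no preference
    for _ in range(rounds):
        total = sum(scores.values())
        for item, rate in engage_rate.items():
            exposure = scores[item] / total    # share of feed slots
            scores[item] += exposure * rate    # engagement feeds back in
    total = sum(scores.values())
    return {item: score / total for item, score in scores.items()}


if __name__ == "__main__":
    # Hypothetical rates: users react to outrage three times as often.
    rates = {"neutral": 0.1, "outrage": 0.3}
    for rounds in (0, 5, 50):
        print(rounds, run_loop(rounds, rates))
```

Both items start with equal exposure, and the system never inspects content. It only counts reactions, yet the share of the item that provokes more reactions rises every round, which is the sense in which biased engagement becomes an input to distribution.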

Pre-Algorithmic Echo

Treating users' motivated reasoning as the dominant driver of misinformation today projects the present backward onto pre-algorithmic cultures of belief and obscures how propagation worked in mid-20th-century media ecosystems. Before real-time personalization, misinformation circulated through geographically bounded networks, such as local talk radio audiences or church newsletters, where motivated reasoning shaped reception but not reach, which was constrained by print distribution and broadcast licensing. Digital scalability did not introduce bias; it decoupled bias from physical limits, revealing that motivated reasoning was always culturally potent but previously bottlenecked by logistical inertia. Platform architecture, on this view, did not create ideologically receptive audiences; it dissolved the friction that once limited their aggregation.

Credibility Residue

As platforms shifted from chronological to engagement-optimized feeds in the mid-2010s, user reasoning began to function less as a causal driver and more as a retrospective justification for algorithmic exposure. Users increasingly interpret algorithmically surfaced content as socially validated and attribute their acceptance of misinformation to personal judgment, without noticing the recommendation model, such as TikTok’s For You page (FYP) or Instagram’s Explore, that shaped what they saw in the first place. This reversal, in which reasoning follows rather than leads exposure, masks the systemic primacy of algorithmic curation and makes individual cognition appear agentic when it operates downstream of computational filtering. The residue is a misattribution dynamic: users consolidate belief after algorithmic suggestion, mistaking algorithmic choreography for autonomous discovery.