Does Bias Concern Drive Nurses to Complex Care in AI Triage?

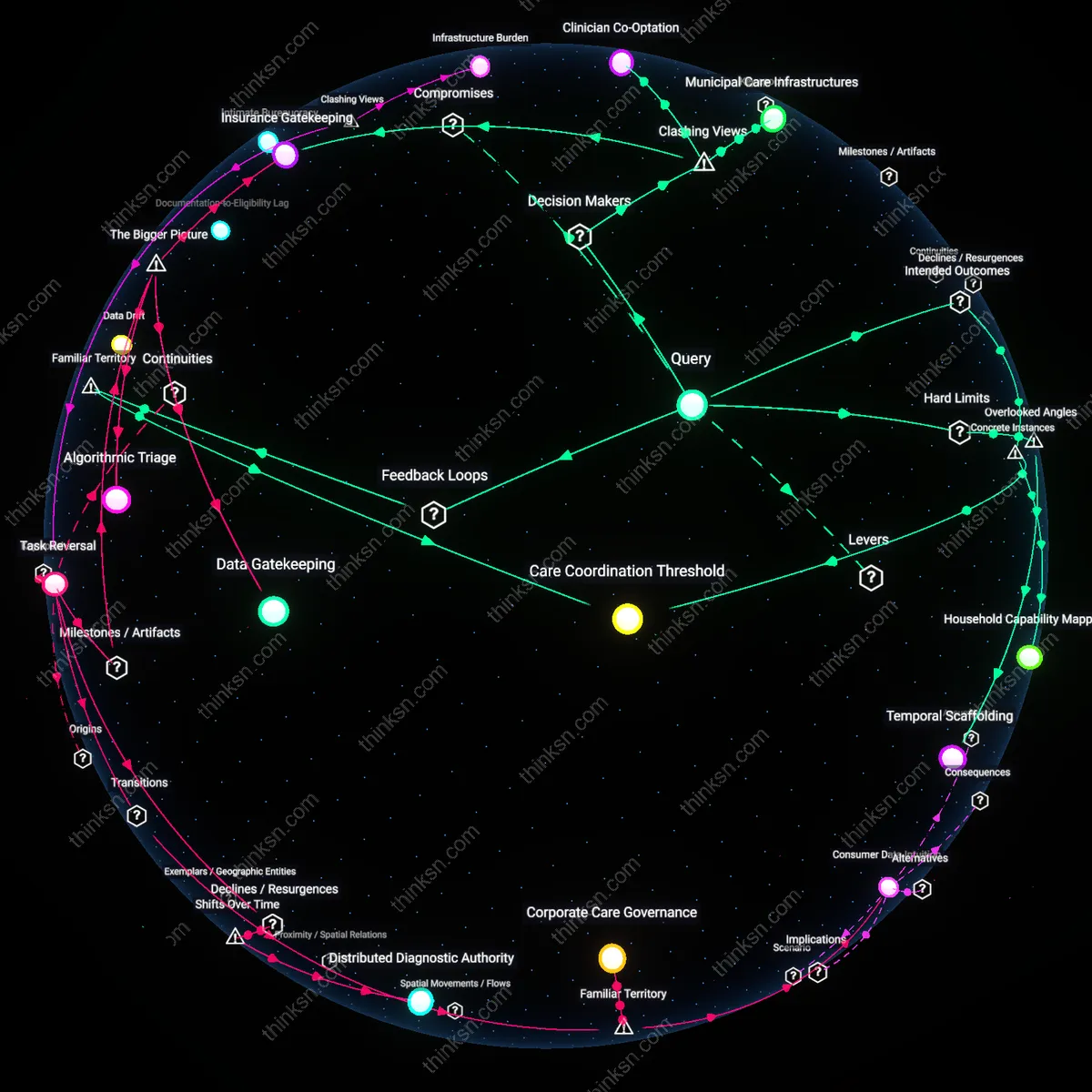

Analysis reveals 9 key thematic connections.

Key Findings

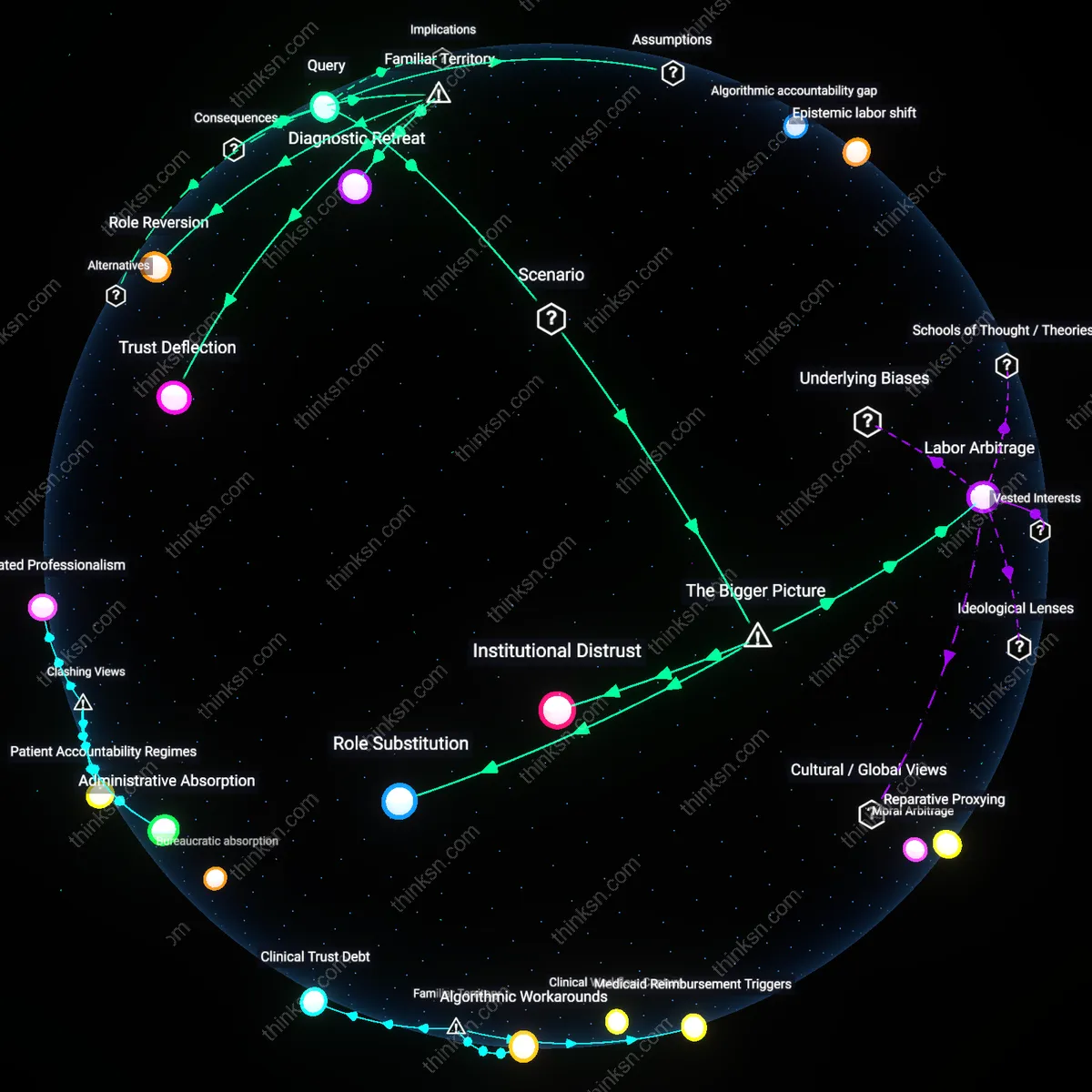

Institutional Distrust

Uncertainty about algorithmic bias in AI-driven patient triage causes frontline nurses to increasingly question the legitimacy of automated decision support systems in high-stakes environments. Nurses in urban academic medical centers, where AI triage is most prevalent, observe recurrent misclassification of vulnerable patients—especially non-English speakers and the elderly—and begin to associate algorithmic tools with hidden inequity, reducing their reliance on such systems. Because nurses operate at the intersection of clinical data and patient advocacy, this erosion of trust becomes a systematic push toward roles where human judgment is paramount, such as complex care coordination. The non-obvious insight is that distrust functions as a professional repulsion mechanism, not mere skepticism—it redirects career trajectories even when AI outputs are statistically accurate on average.

Role Substitution

Persistent ambiguity about algorithmic fairness incentivizes hospital administrators to reconfigure nursing roles, strategically offloading ethically ambiguous triage responsibilities from AI to clinically experienced nurses. Faced with public scrutiny and liability risks—especially after a high-profile miscalibration in a rural telehealth hub—health systems begin to treat experienced nurses as de facto algorithmic auditors, embedding them in teams that manage polypharmacy, geriatric transitions, or behavioral health comorbidities. This shift repurposes nursing expertise as a corrective to opaque systems rather than a subordinate to them. The non-obvious outcome is that algorithmic uncertainty does not reduce nursing influence but instead fuels a new zone of professional authority in multi-condition care, where unpredictability is managed through human integration rather than computational sorting.

Labor Arbitrage

As health systems deploy AI for routine triage to cut staffing costs, uncertainty around algorithmic bias paradoxically increases demand for expert nurses in complex care coordination, creating a structural pull toward higher-acuity case management. Investor-owned hospital networks, seeking to justify AI implementation despite inconsistent performance across socioeconomic strata, use specialized nurse teams as a risk-mitigation overlay, particularly in Medicaid-dense regions like Louisiana and upstate New York. There, the clinical need to reconcile AI errors (e.g., incorrect acuity scores for patients with limited health literacy) generates budget allocations for nurse coordinators who would otherwise be deemed non-essential. The overlooked dynamic is that algorithmic fallibility becomes a justification for investing in skilled labor, turning bias from a flaw to fix into a systemic rationale for redistributing care labor toward human intermediaries.

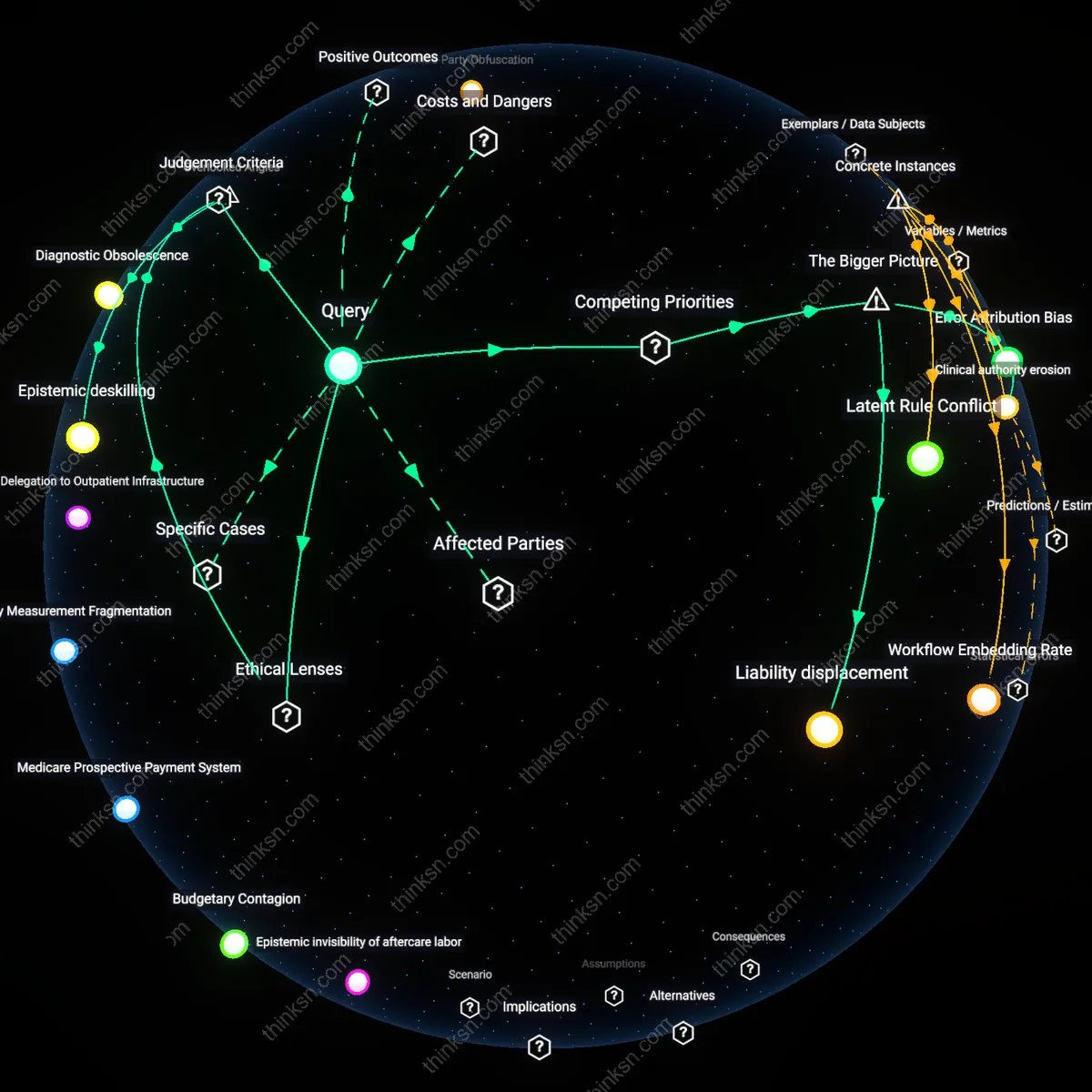

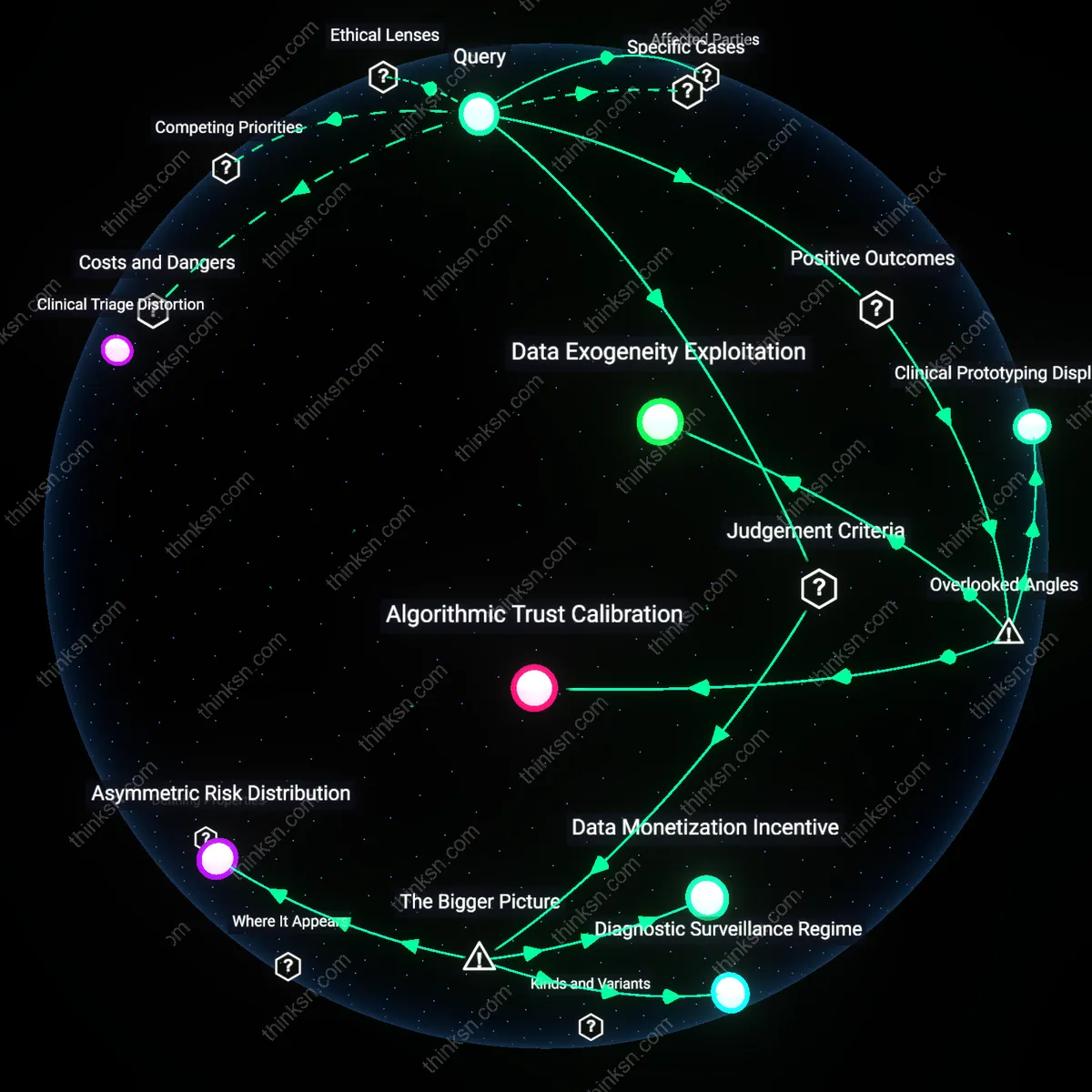

Algorithmic Accountability Gap

Uncertainty about algorithmic bias in AI-driven patient triage can push nurses toward complex care coordination. A 2019 US study of an algorithm that hospitals used to guide care management for high-risk patients found that Black patients were systematically under-prioritized because of biased cost-proxy logic: the algorithm treated health care cost as a stand-in for health need. Findings like this can lead frontline nurses to distrust automated risk scores and to take on more responsibility for advocacy and case-level adjustments. The result is a structural drift from routine assessment toward intensive mediation when algorithmic legitimacy fails.
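The cost-proxy logic mentioned above can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the patient records, the group labels `A` and `B`, the field names, and the use of a chronic-condition count as a stand-in for health need are all hypothetical and are not data or code from the study. The sketch only shows the general mechanism: if one group generates lower costs at the same level of need, ranking by cost passes over its sicker members.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Patient:
    patient_id: str
    group: str               # hypothetical group label
    chronic_conditions: int  # illustrative stand-in for health need
    annual_cost: float       # illustrative stand-in for past spending


# Synthetic records (assumption): at equal need, group B has lower spending,
# for example because of reduced access to care.
PATIENTS = [
    Patient("a1", "A", 5, 10000.0),
    Patient("a2", "A", 3, 6000.0),
    Patient("a3", "A", 1, 2000.0),
    Patient("b1", "B", 5, 5500.0),
    Patient("b2", "B", 3, 3300.0),
    Patient("b3", "B", 1, 1100.0),
]


def top_k(patients, key, k):
    """Return the ids of the k highest-ranked patients under the given key."""
    ranked = sorted(patients, key=key, reverse=True)
    return [p.patient_id for p in ranked[:k]]


# Two program slots, filled by two different ranking rules.
by_cost = top_k(PATIENTS, key=lambda p: p.annual_cost, k=2)
by_need = top_k(PATIENTS, key=lambda p: p.chronic_conditions, k=2)

print("selected by cost proxy:", by_cost)
print("selected by need:", by_need)
```

In this synthetic data, ranking by cost selects `a1` and `a2`, while ranking by need selects `a1` and `b1`. Patient `b1`, with five chronic conditions, loses a slot to `a2`, with three, solely because `b1` generated less spending. This is the kind of case-level discrepancy a nurse reviewing the scores would have to catch and correct by hand.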

Epistemic Labor Shift

In the rollout of Babylon Health’s AI triage tool in NHS Scotland in 2020, nurses reported spending more cognitive effort interpreting and contesting AI-generated recommendations than performing initial assessments. Because the tool was not transparent about its clinical weightings, practitioners had to reconstruct its decision logic manually. Routine tasks became sites of diagnostic skepticism, which shows how uncertainty about algorithmic validity redistributes professional effort toward hidden interpretive work rather than streamlined delegation.

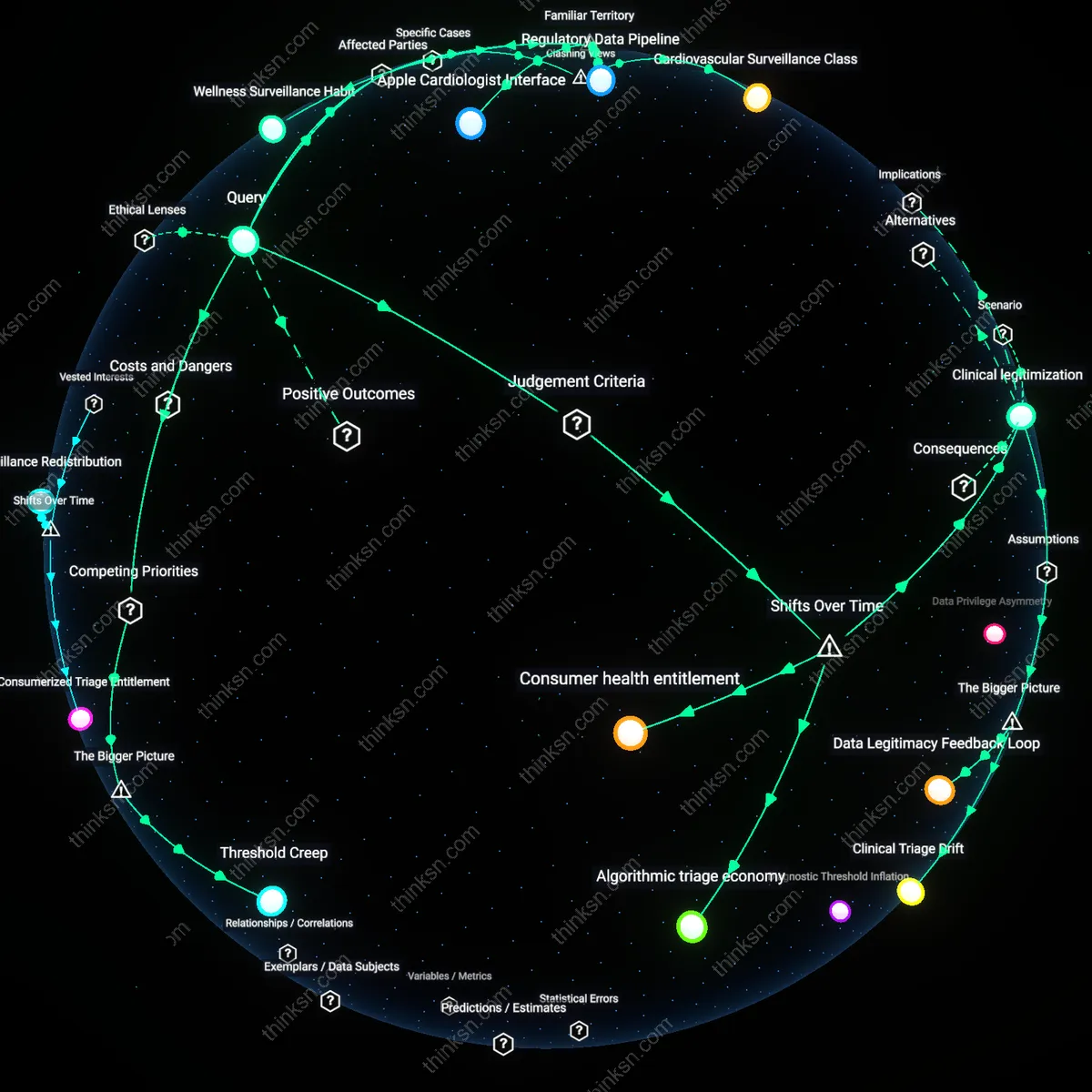

Clinical Sovereignty Rebound

During the 2021 implementation of an AI triage dashboard at Johns Hopkins Hospital, emergency nurses deliberately reasserted judgment-based patient routing by forming informal triage councils that bypassed the algorithm’s default assignments. Repeated incidents in which the tool misprioritized elderly patients with atypical symptom presentation had eroded confidence in machine logic. The episode shows that perceived algorithmic unreliability can catalyze collective professional resistance, in which nurses reconstitute authority through socially coordinated handling of complex cases rather than individualized routine action.

Trust Deflection

Uncertainty about algorithmic bias in AI-driven patient triage pushes nurses to prefer roles in complex care coordination because direct oversight of high-stakes decisions restores professional agency. In hospital systems where risk-averse institutions deploy opaque algorithms for initial patient risk stratification, nurses experience diminished confidence in frontline triage accuracy, especially for marginalized populations, leading experienced clinicians to migrate toward care coordination roles where relational judgment and advocacy remain central. The non-obvious insight beneath familiar concerns about 'bias' is that the real shift isn’t just about technology’s flaws but about how professionals redistribute themselves toward domains where trust can still be personally enacted, not delegated.

Role Reversion

Nurses may increasingly specialize in complex care coordination over routine assessment when algorithmic uncertainty undermines the perceived value of their clinical intuition in early triage. As AI tools assume gateway decisions in emergency and primary care settings, the routinized aspects of nursing assessment are devalued institutionally, prompting skilled nurses to seek roles where holistic, longitudinal patient management resists full automation. What’s underappreciated beneath common talk of 'AI replacing jobs' is that professionals aren’t just resisting displacement—they’re reverting to traditional, pre-technical nursing identities centered on coordination and moral labor, effectively reasserting historical professional boundaries.

Diagnostic Retreat

Familiar anxieties about biased algorithms in patient triage lead nurses to exit routine assessment roles because diagnostic authority becomes institutionally centralized in data systems they cannot interpret or challenge. In urban medical centers using proprietary triage platforms, nurses report feeling like data entry operatives rather than clinical assessors, driving them toward complex care coordination where they can exercise discretion in treatment sequencing and family liaison without algorithmic interference. The overlooked consequence is not just role migration but a quiet retreat from diagnostic participation altogether—a professional withdrawal from judgment spaces contaminated by unaccountable technology.