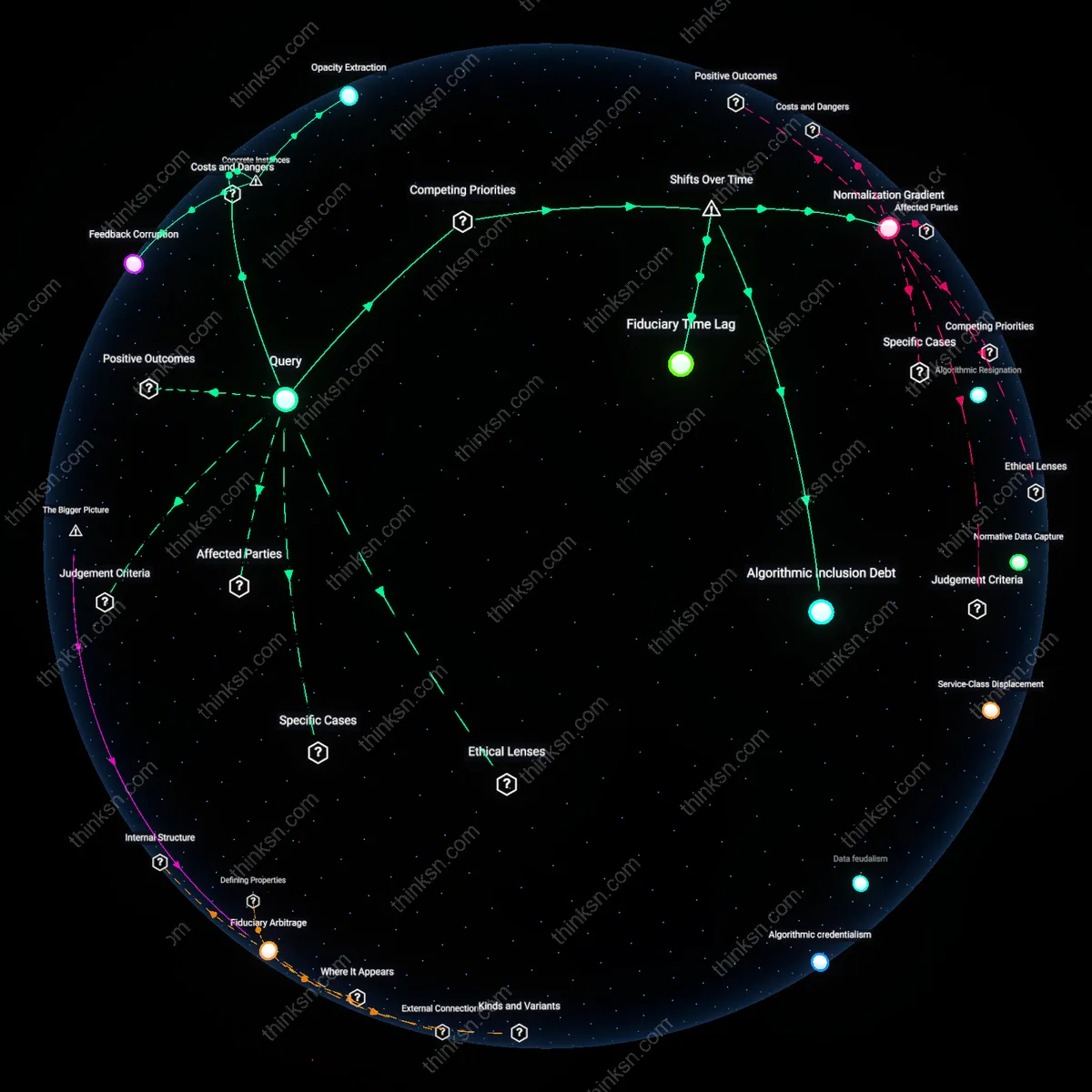

Algorithmic Resignation

Middle-class clients using Betterment’s automated financial planning tools report compliance without conviction. They accept portfolio allocations that prioritize low-cost indexing over upward mobility because the platform’s algorithms offer no pathway to outperform market averages, a shift from active financial aspiration to passive risk containment shaped by computational pragmatism. This dynamic reveals how algorithmic standardization quietly suppresses ambition by framing financial health as behavioral compliance rather than economic advancement, a mechanism underwritten by fintech’s privileging of scale over customization. The non-obvious insight is that automation does not merely limit access; it redefines success for the middle class as avoidance of loss, not accumulation of wealth.

Normative Data Capture

When the UK’s Nationwide Building Society integrated AI-driven advice engines into its online mortgage advisory service, middle-income applicants found their borrowing capacity benchmarked against regionalized, income-weighted norms derived from historical lending patterns—effectively encoding past class stratifications into present-day risk assessments. The system, designed to reduce cognitive load for users, automated recommendations that discouraged upwardly mobile borrowing outside actuarial peer clusters, framing deviation as irrational. This illustrates how AI standardization legitimizes class position through the scientization of financial norms, a process where advice transforms from aspirational guidance into behavioral policing masked as personalization.

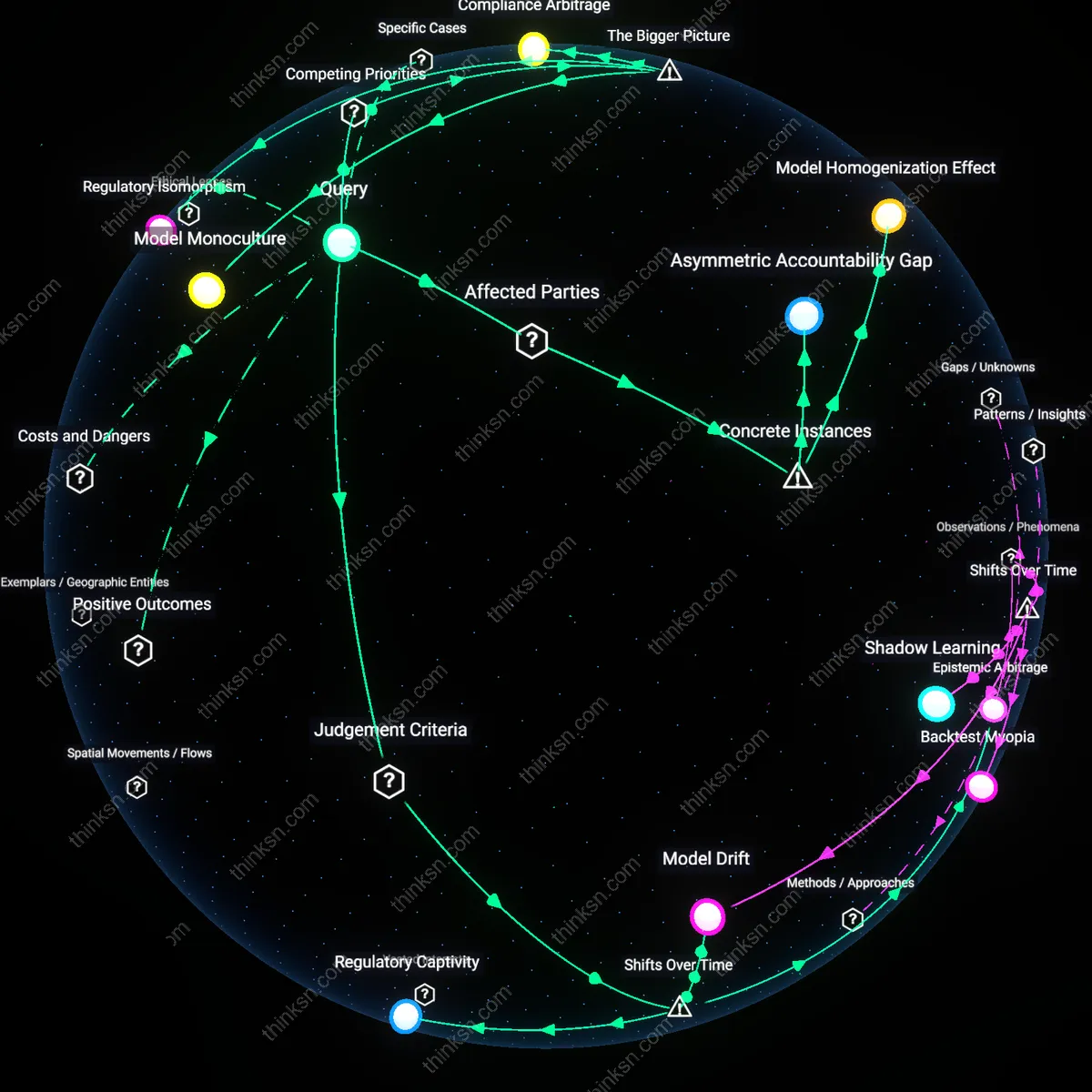

Service-Class Displacement

In 2018, Vanguard’s Personal Advisor Services expanded hybrid human-AI advising to clients with portfolios of at least $50,000, leaving fully automated offerings for those below that threshold. The result was a visible service hierarchy in which middle-class users on the automated tier all received the same rules-based tax-loss harvesting and asset allocation, with no strategic deviation. The disparity became evident when high-net-worth clients received tailored estate and philanthropy modules absent from standard interfaces, signaling that automation serves as a gatekeeping mechanism camouflaged as democratization. The overlooked consequence is that standardization doesn’t just reflect class divides; it operationalizes them through feature segmentation in software architecture.

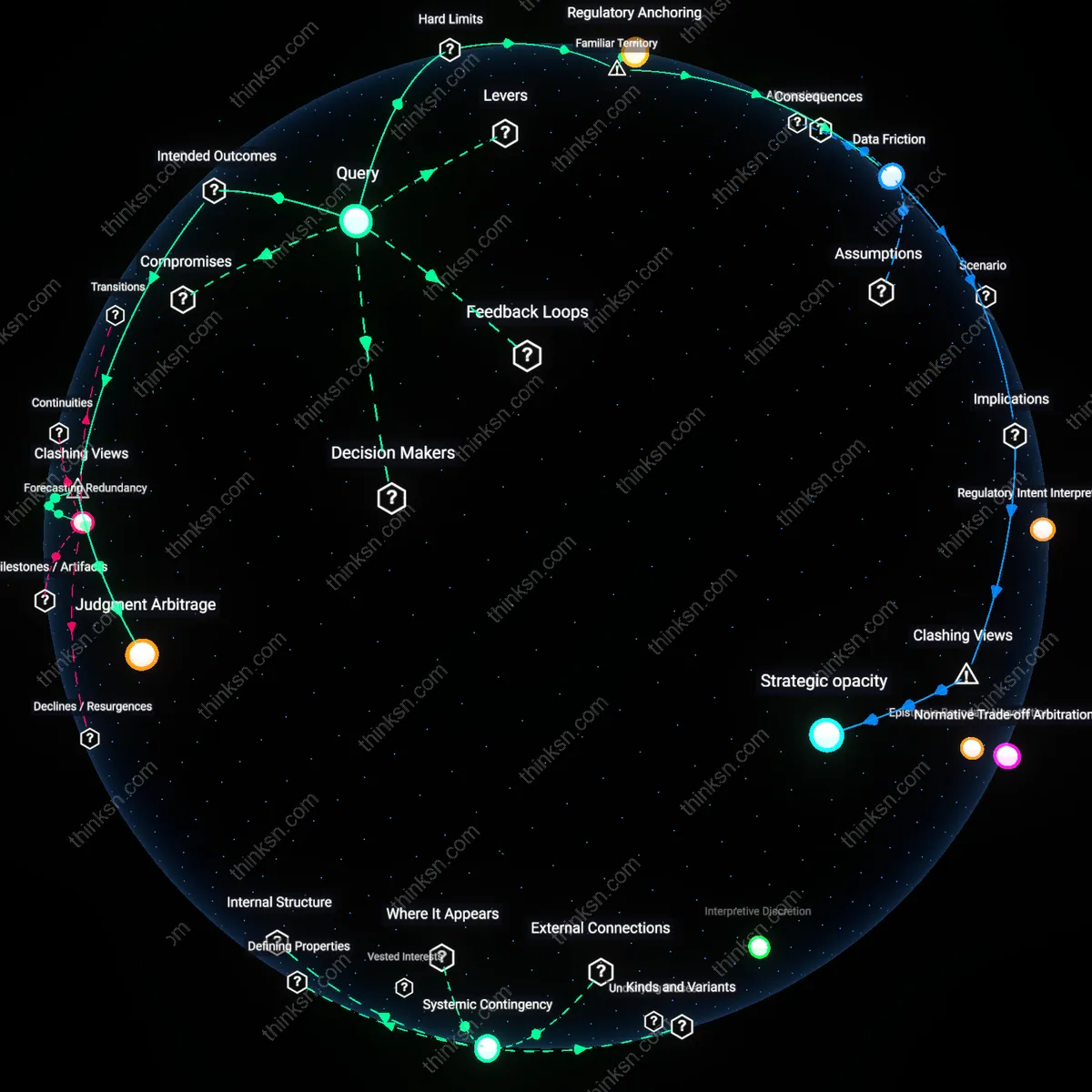

Technological Enclosure

Middle-class clients experience standardized AI financial advice as a loss of personalized agency, which deepens their sense of exclusion from wealth-building mechanisms. This occurs because financial institutions deploy AI to reduce advisory costs at scale, prioritizing high-net-worth client customization while offering middle-class users templated, algorithm-driven pathways—reinforcing existing capital access hierarchies. The overlooked dynamic is that automation does not democratize expertise but instead codifies it into gated architectures, where data-driven models reflect historical wealth distributions and thus reproduce them. The key actors are fintech platforms and asset managers who face institutional pressure to maximize shareholder returns, making cost-efficient scalability more valuable than equitable outcomes.

Meritocratic Disillusionment

Middle-class clients interpret automated financial guidance as evidence that their economic mobility depends on compliance with impersonal systems rather than individual effort, undermining faith in the meritocratic promise. This perception emerges under liberal governance models that frame financial technology as a neutral tool for empowerment, even as AI systems embed risk-aversion and conservative investment templates that prioritize capital preservation over transformative growth, strategies aligned with maintaining, not challenging, class position. The non-obvious mechanism is that standardization is ideologically marketed as fairness but in practice flattens financial ambition according to risk profiles derived from aggregate middle-class data, thus discouraging upward mobility. The enabling condition is regulatory tolerance for algorithmic standardization in fiduciary design, driven by public-private partnerships promoting 'inclusive' fintech.

Financial Precarity Cascade

Middle-class clients internalize automated advice as confirmation that their financial futures are structurally constrained, not personally solvable, accelerating a shift from planning to passive acceptance. This occurs within a neoliberal political economy where public welfare retrenchment has made household balance sheets the primary buffer against systemic risk, forcing middle-class families to rely on commercial AI tools that reflect profit-driven design logic rather than social stability goals. The critical, underappreciated dynamic is that automated advice normalizes stagnation—such as recommending conservative savings rates and market-matching index funds—while omitting structural levers like wage growth or tax reform, effectively depoliticizing inequality. The consequence is a feedback loop where algorithmic neutrality masks embedded conservatism, legitimizing widening class divides as the outcome of rational, data-based choice.

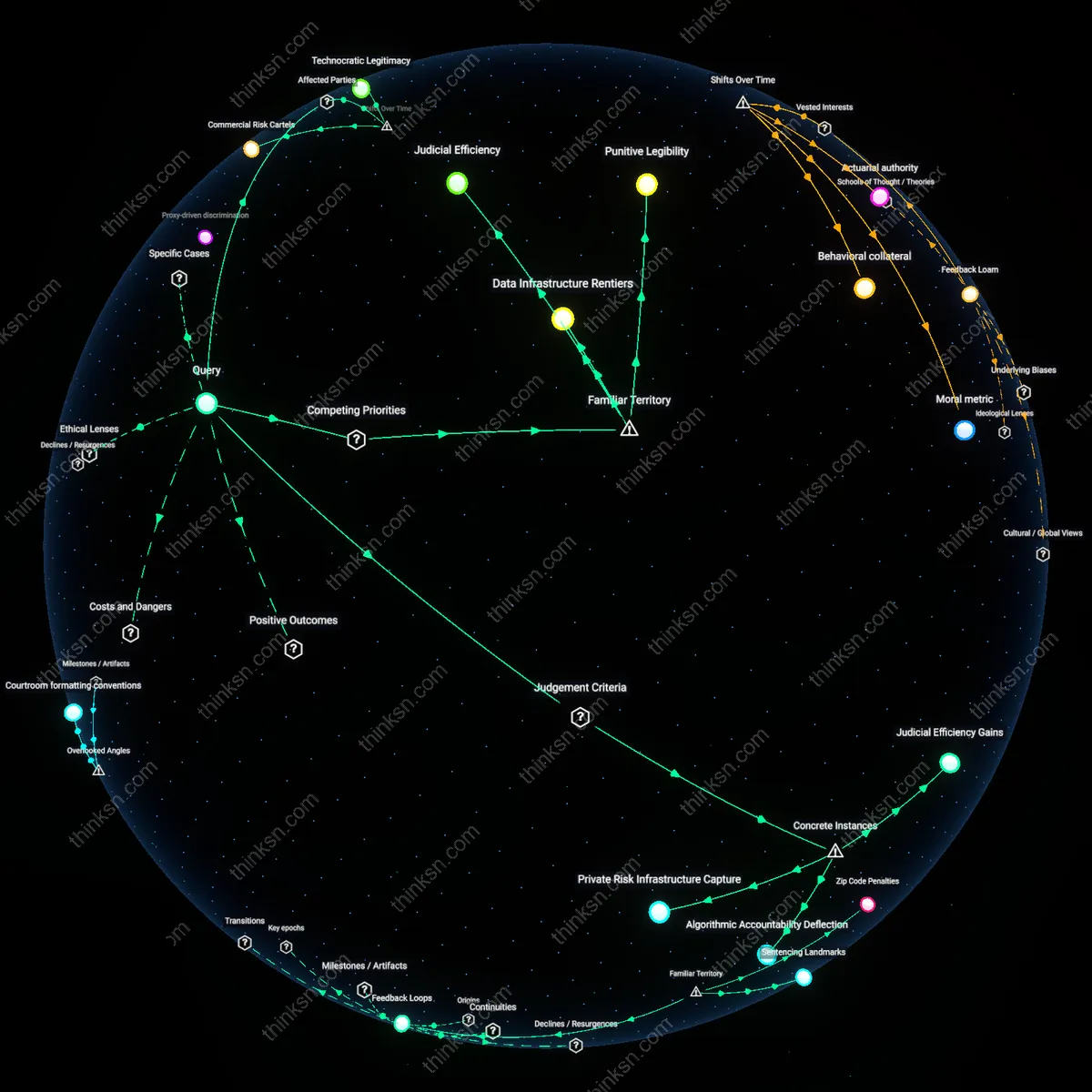

Digital Dharma Divide

Middle-class populations in South and Southeast Asia interpret standardized AI financial advice as a modern violation of dharma-based reciprocal duty, in which personalized guidance from elders or community figures has long been the norm. When automation replaces human counsel, it disrupts expectations of context-sensitive moral stewardship, particularly among Hindu and Buddhist communities who associate ethical wealth management with spiritual obligation. This creates a quiet resistance to AI tools that feel culturally tone-deaf, even if technically accurate, revealing how algorithmic neutrality can clash with relational moral economies. The non-obvious insight is that automation’s fairness is not universally prized: familiarity with communal judgment makes depersonalization feel less like progress and more like abandonment.

Meritocratic Mirage

Western middle-class users, especially in the U.S. and U.K., react to automated financial advice with anxious resignation. The advice reinforces their existing belief in meritocracy (that personal data input should yield proportionate returns) yet exposes how structural advantage shapes outcomes more than individual effort. When AI delivers identical advice to all income brackets, it appears to equalize access while silently amplifying advantages for those who already possess capital, financial literacy, or stable employment, conditions baked into the training data. The familiar framing of ‘democratized finance’ masks this skew, making the system feel fair on its face. The underappreciated truth is that standardization does not level the playing field; it codifies the norms of the dominant economic class as universal rationality.

Kinship Algorithm Gap

In many African and diasporic communities, financial guidance is traditionally embedded in kinship networks and rotating credit associations like *susu* or *stokvels*, where trust and social collateral matter more than data accuracy. AI-driven planning therefore feels alien and untrustworthy when it ignores communal obligation and interdependency. Middle-class members who migrate toward these tools often do so reluctantly, perceiving them as imposed by global fintech standards that privilege Northern individualism over Southern relational models. The habitual association of ‘smart technology’ with progress conceals the erosion of socially embedded risk-sharing. What’s underappreciated is that automated advice doesn’t just fail to include these systems; it implicitly delegitimizes them by offering a solitary, data-optimized path as the only rational choice.

Data Feudalism

Middle-class clients distrust standardized AI financial advice because corporate actors—particularly big tech-finance hybrids like BlackRock’s AI-driven robo-advisory platforms—design these systems to prioritize scalability over personalization, thereby embedding invisible class biases in risk profiling algorithms. These firms justify their one-size-fits-all models as democratic access tools, but the underlying data architectures assume middle-class financial behaviors mimic wealthier users, systematically underweighting irregular incomes or asset-poor liquidity needs. What's overlooked is that the data hierarchy itself—how transaction histories from premium clients shape training sets—reproduces class divides structurally, not just in outcome, rendering middle-class financial lives 'noisier' and less legible to the system. This changes the standard critique by showing that inequity isn’t a flaw in deployment but baked into the epistemic foundation of the models.

Algorithmic Credentialism

Middle-class users resent automated financial advice when credentialing institutions—like state-licensed financial advisory boards or fintech accreditation bodies—legitimize AI systems based on compliance efficiency rather than equity of outcome, treating algorithmic standardization as professional objectivity. These groups frame automation as a guard against human advisor bias, but in doing so, they equate regulatory adherence with fairness, erasing how standardized risk frameworks penalize non-conforming life paths such as gig work or intergenerational co-dependence. The overlooked dynamic is that professional legitimacy, not just profit motive, drives the erosion of personalized guidance—transforming class difference into technical 'noise' rather than structural context. This shifts focus from transparency debates to how gatekeeping institutions outsource moral responsibility to coded neutrality.