Niche Reddit Belonging vs Extremist Risks

Analysis reveals 7 key thematic connections.

Key Findings

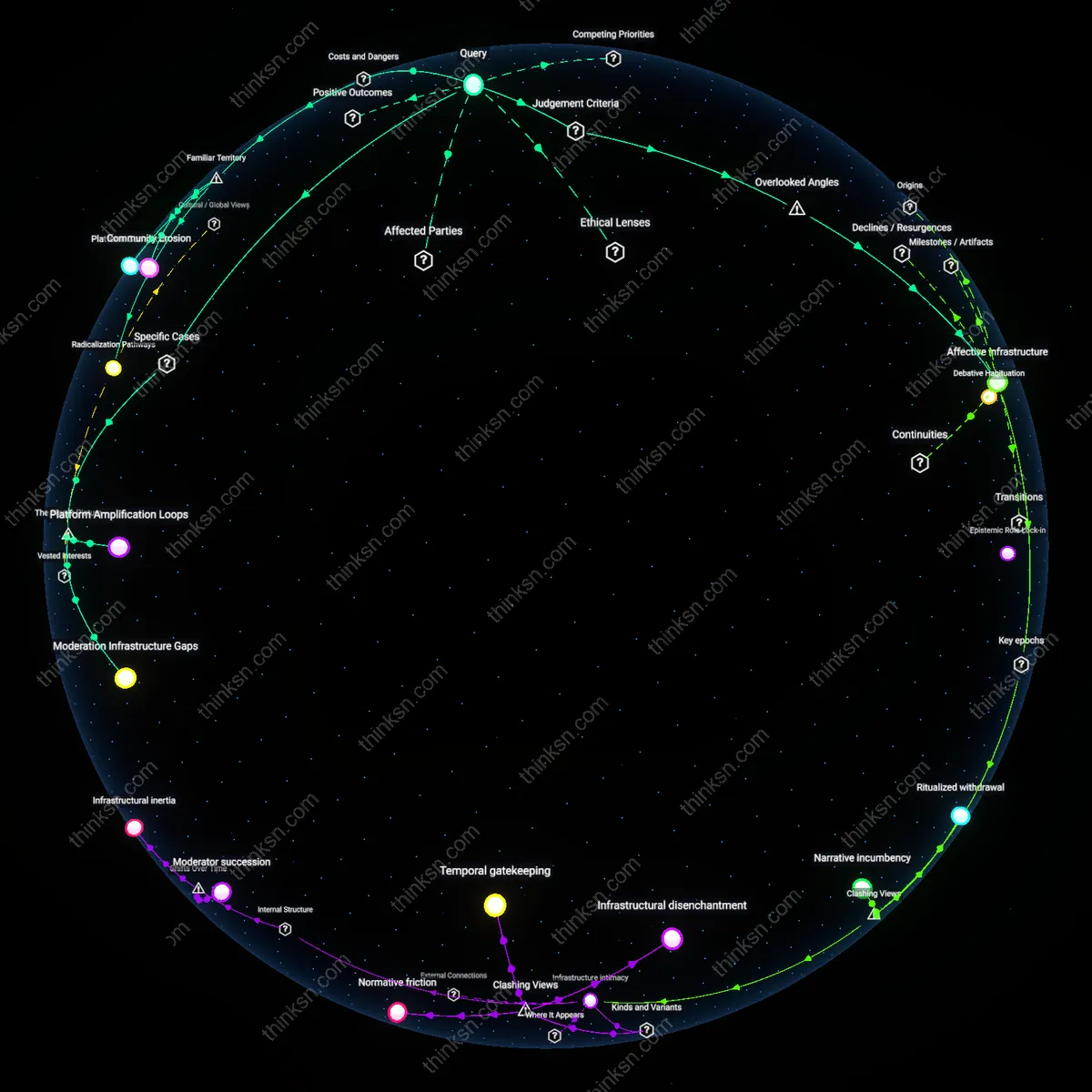

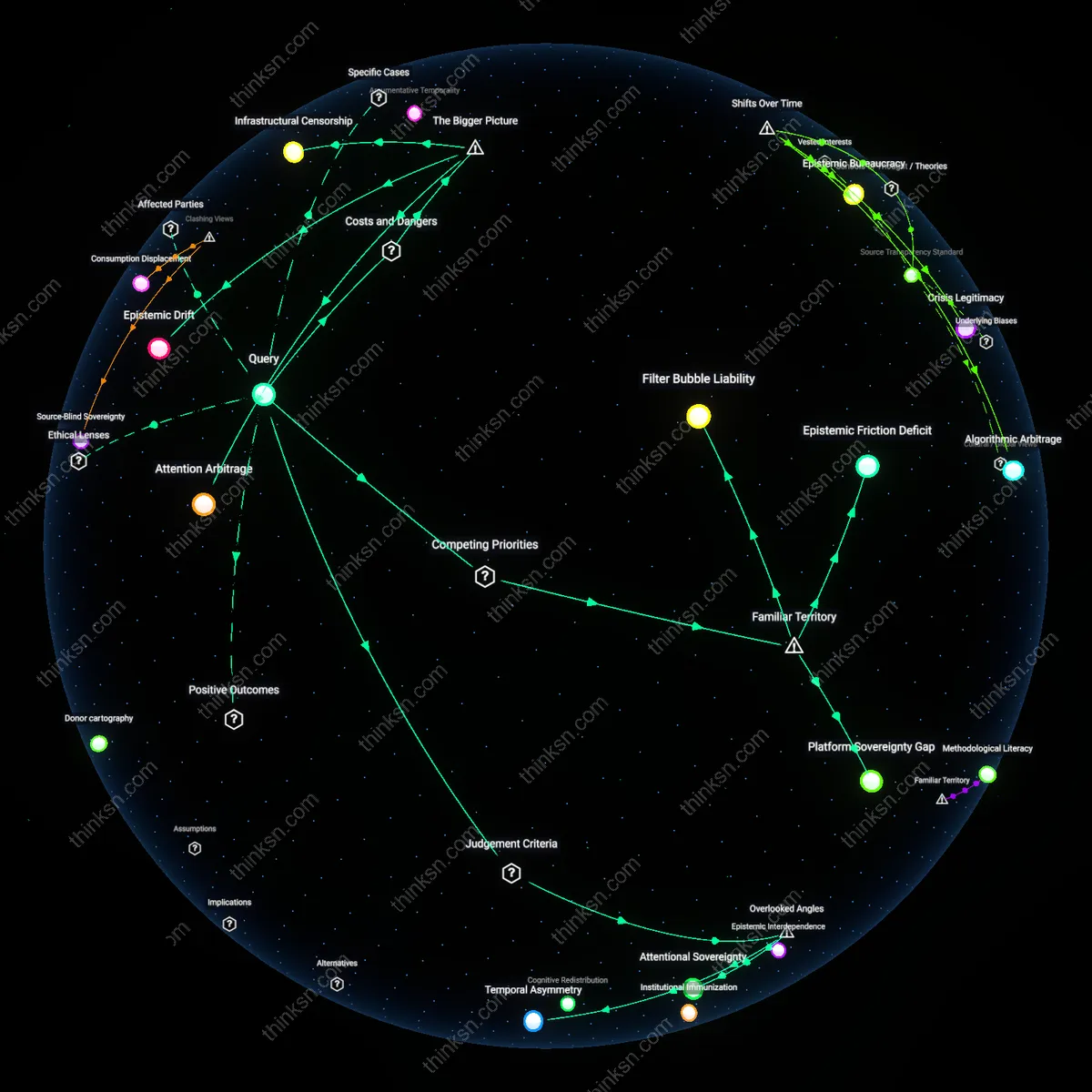

Affective infrastructure

The sense of belonging in niche Reddit communities can outweigh the platform's role in enabling extremist recruitment. The affective infrastructure of subreddits, such as private messaging, ritualized posting behaviors, and micro-validation loops, creates a more durable psychological anchor than the mere availability of extremist content. This infrastructure operates through granular, everyday interactions that accumulate emotional debt, making withdrawal socially and emotionally costly even when ideological alignment wanes. Analyses focused on content moderation or radicalization pipelines often miss this cost. It shifts the moral yardstick from justice (fair exposure to ideas) toward psychological autonomy, emphasizing the coerciveness of belonging rather than belief. The platform's real power lies not in radicalization per se, but in engineering dependency through mundane, emotionally reinforcing routines that precede and enable ideological capture.

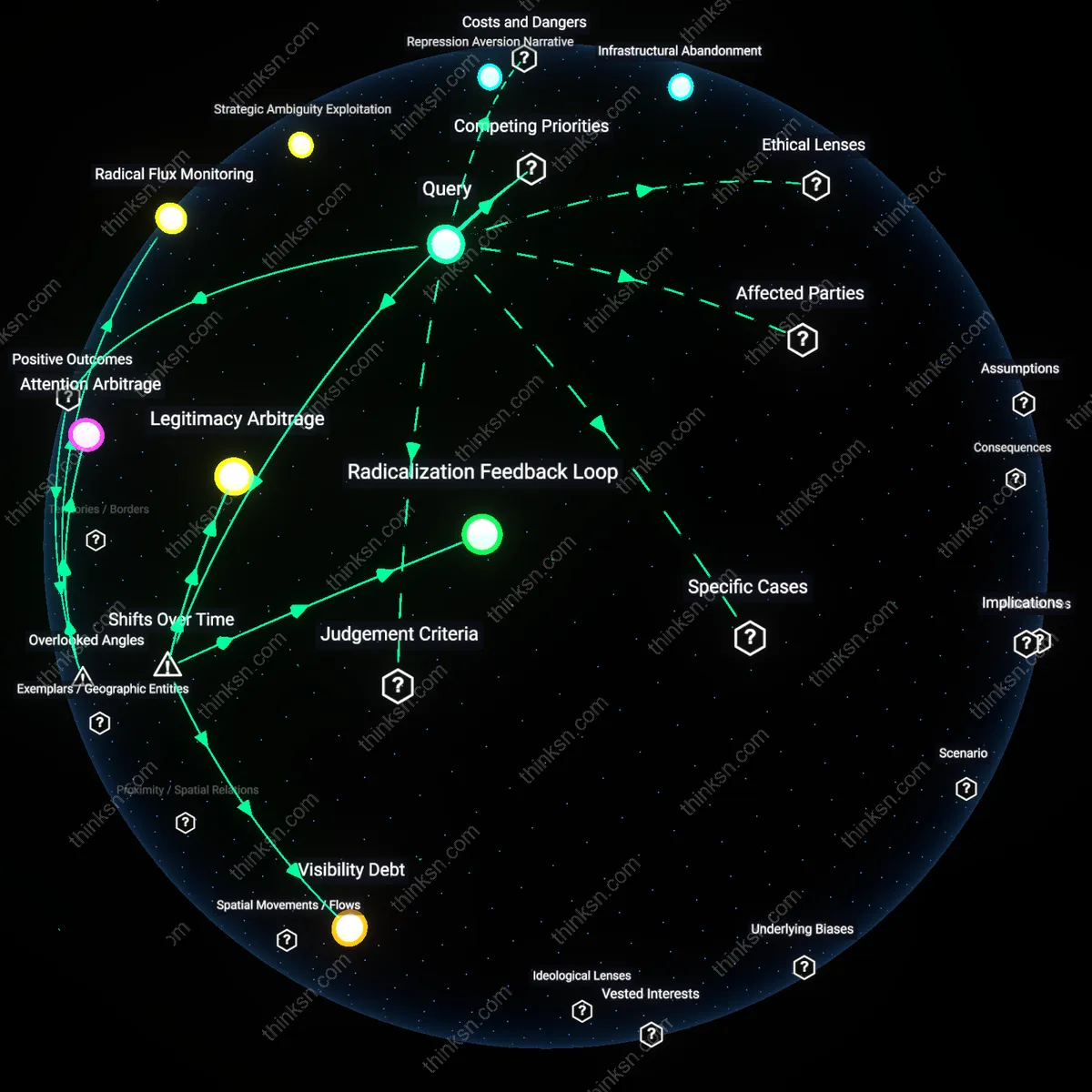

Radicalization Pathways

Niche Reddit communities can act as on-ramps to extremist ideologies by normalizing radical content through gradual escalation. Users seeking belonging in politically charged or identity-focused subreddits are exposed to increasingly extreme views under the guise of in-group loyalty, with moderators and influential posters shaping the discourse. The platform’s algorithmic leniency and decentralized structure allow fringe communities to operate without consistent oversight, turning social validation into a vector for ideological capture. What’s underappreciated is how the sense of inclusion itself becomes the mechanism of recruitment, masking radicalization as personal affirmation.

Community Erosion

The emotional investment users develop in niche Reddit communities often displaces broader civic or pluralistic engagement, weakening collective resilience to extremism. When belonging is contingent on ideological purity, users self-police dissent and internalize group norms that prioritize in-group cohesion over factual accuracy or ethical boundaries. This dynamic mirrors real-world sectarian fragmentation, where digital enclaves replicate the social costs of cult-like conformity. Most people recognize echo chambers as problematic, but fail to see how the psychological reward of belonging actively degrades the user’s capacity for independent judgment.

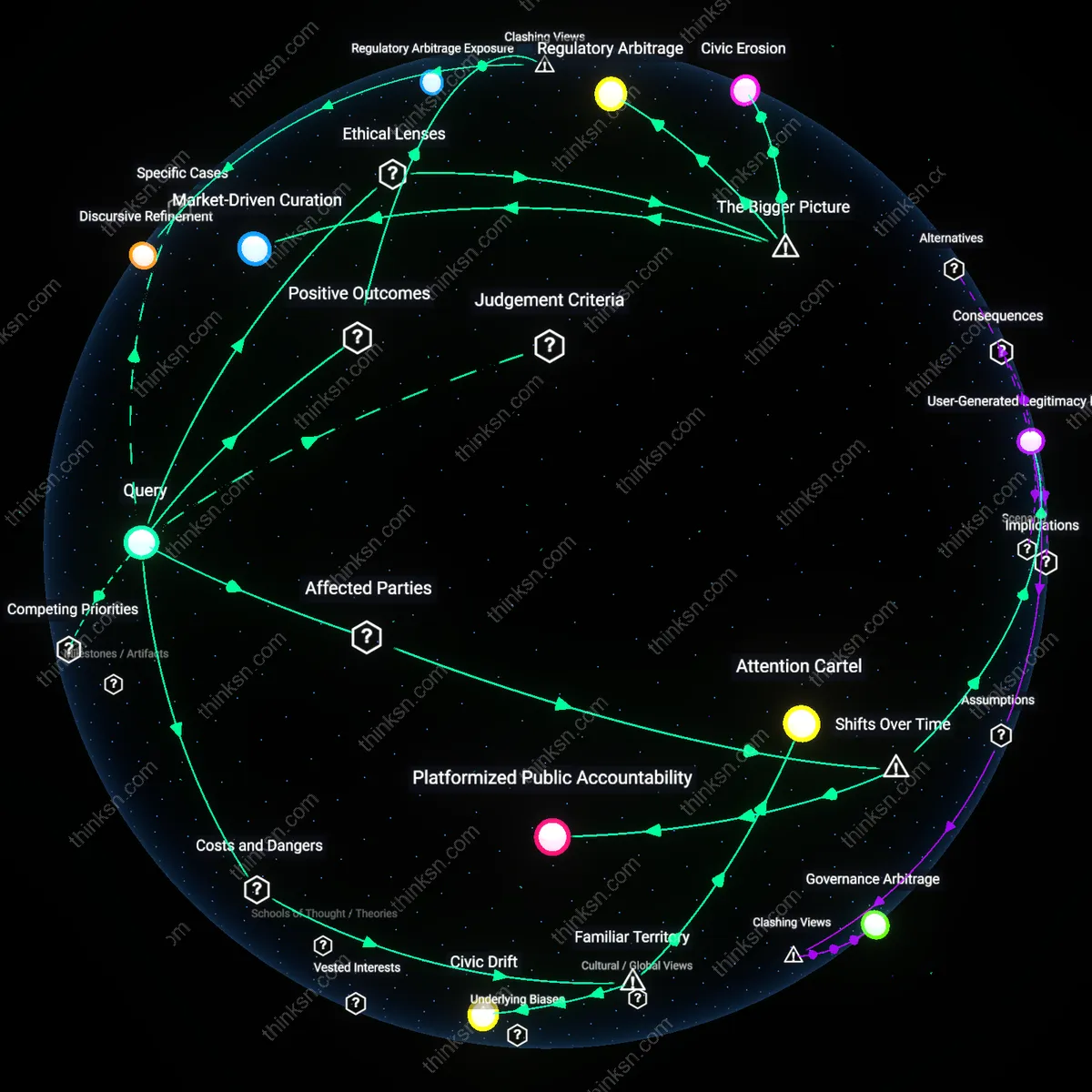

Platform Complicity

Reddit’s business model implicitly incentivizes engagement in extreme communities by tolerating high-activity niches that drive traffic and ad revenue. Moderation policies are inconsistently enforced, allowing extremist recruitment to persist beneath the surface of seemingly benign subcultures focused on humor, nationalism, or anti-establishment rhetoric. Administrators and moderators in these spaces often operate with de facto autonomy, creating parallel governance systems that evade accountability. The overlooked reality is that Reddit’s hands-off approach is not neutrality but systemic enablement, in which profitability depends on the emotional dependencies formed in toxic communities.

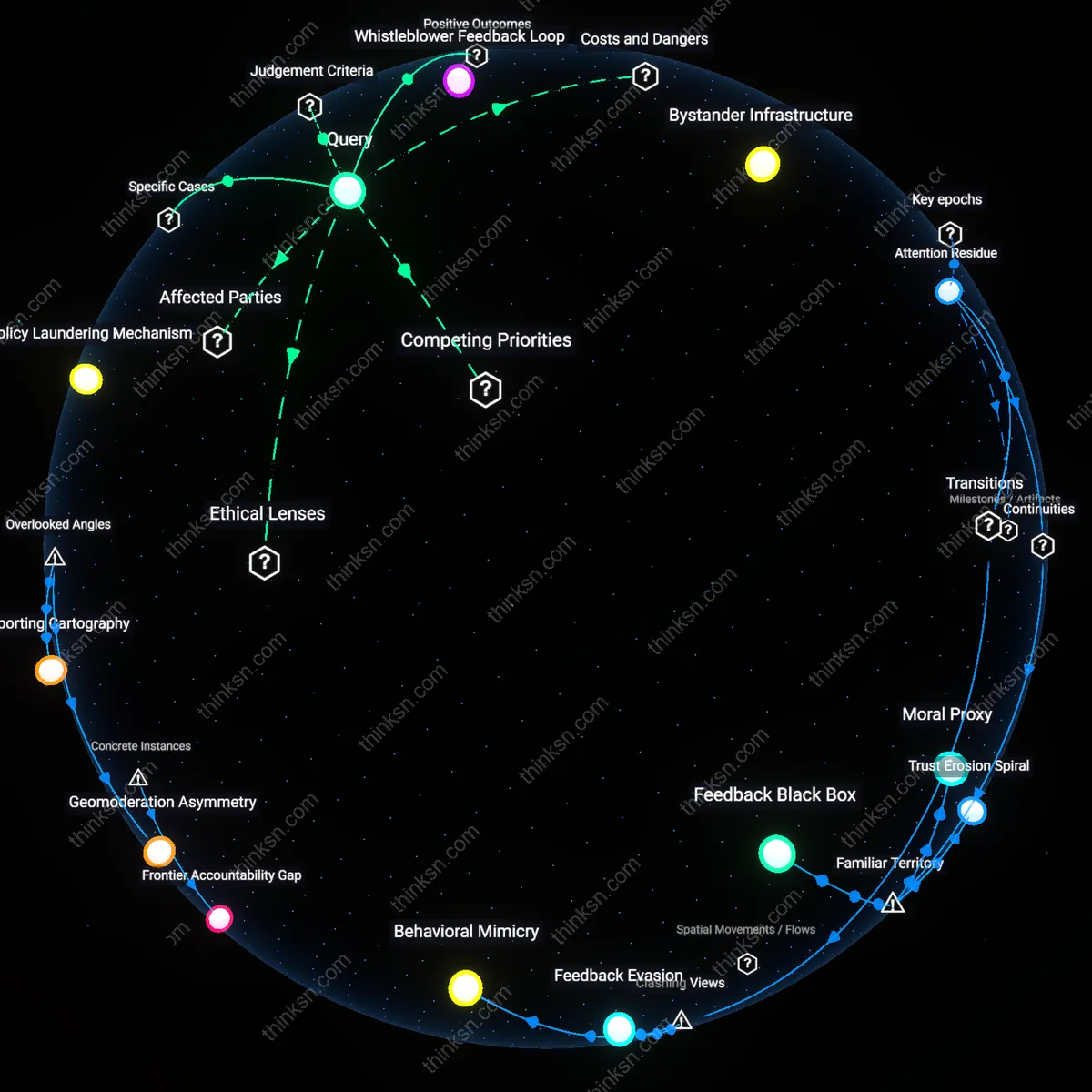

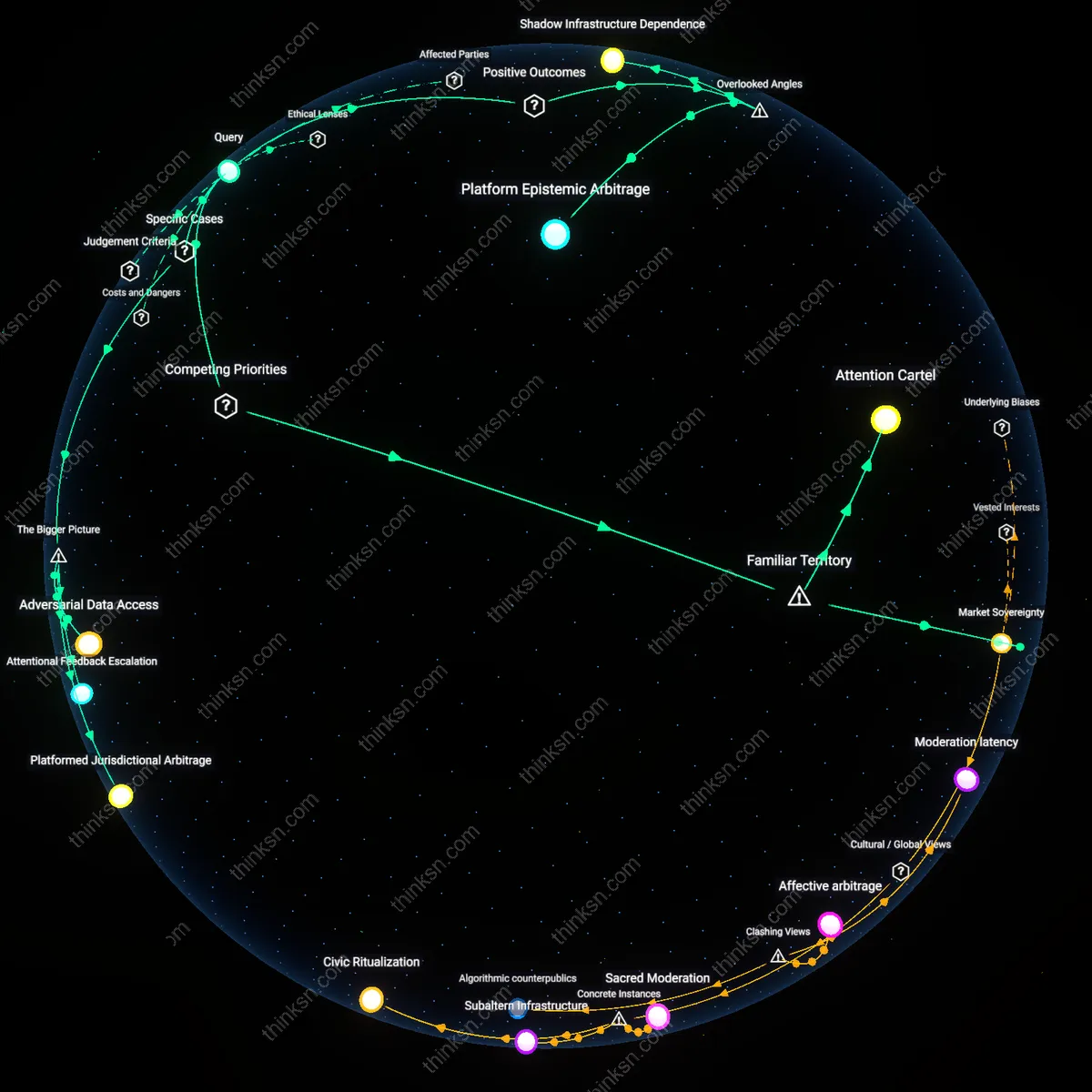

Platform Amplification Loops

Niche Reddit communities like r/The_Donald intensified political radicalization by repurposing humor and irony as plausible deniability for extremist recruitment, while users leveraged algorithmic visibility to push conspiratorial narratives into the mainstream. This mechanism operated through Reddit’s upvote-driven ranking system, which privileged engagement over intent, allowing extremist-adjacent content to spread under the guise of satire. The non-obvious consequence is that belonging, framed as resistance to censorship, became a recruitment tool, subordinating ideological extremism to community identity while evading moderation. This reveals how platform architecture converts social cohesion into ideological propulsion under permissive visibility rules.

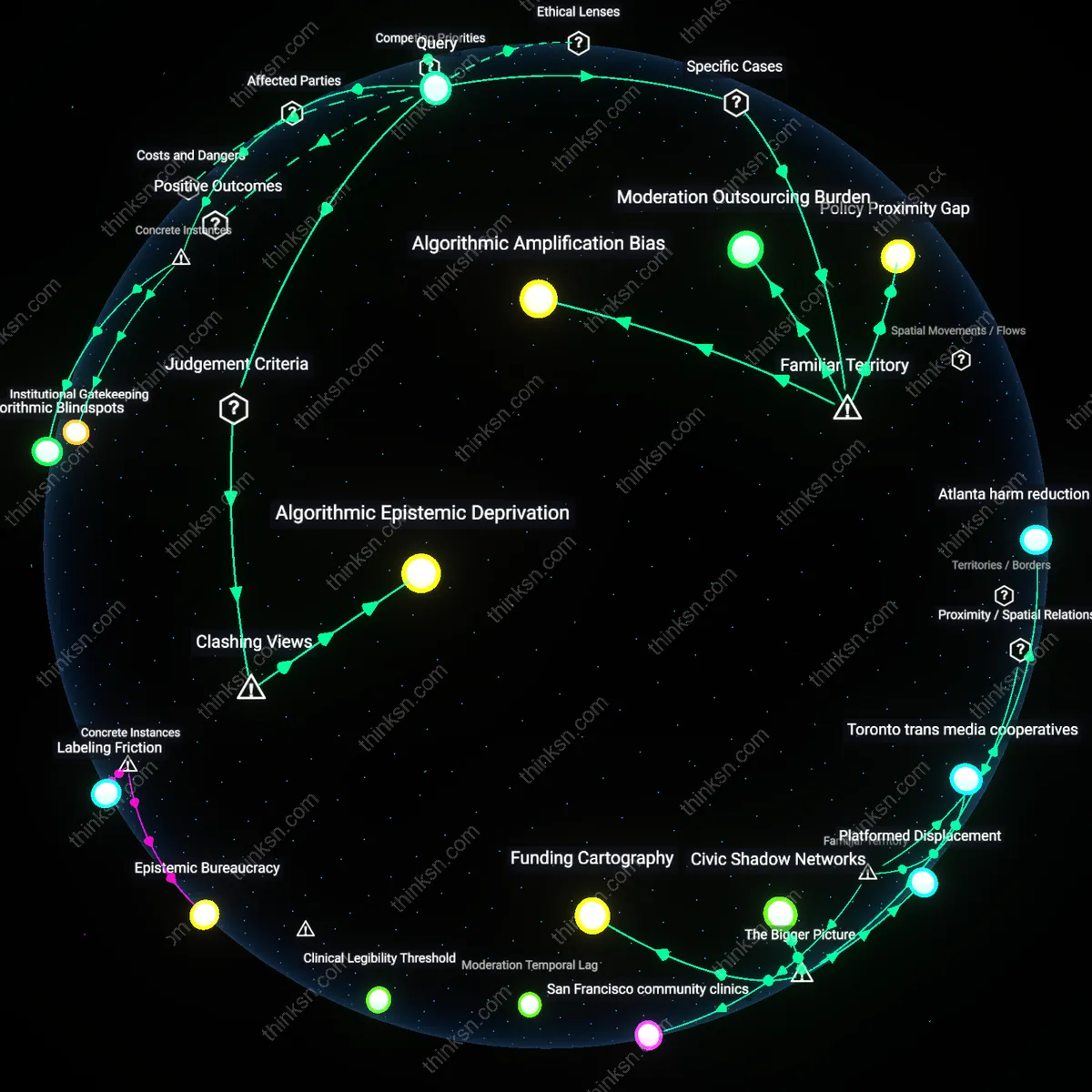

Moderation Infrastructure Gaps

The persistence of communities such as r/Incels, which Reddit banned in 2017, exposed how decentralized moderation policies enabled extremist ideologies to embed within niche support forums presented as mental health spaces. Volunteer moderators lacked consistent enforcement capacity, while Reddit’s reliance on user reports delayed intervention until harm was widespread. The systemic flaw lies not in individual communities but in the governance model, where local belonging is structurally decoupled from platform-wide safety thresholds, allowing identity-based radicalization to take hold before it is formally defined as extremism.

Ideological Conversion Pipelines

Users migrating from since-banned subreddits like r/Chodi to off-platform venues such as Telegram channels demonstrate how Reddit functions as an onboarding layer rather than an endpoint for extremism. The sense of belonging in these communities normalizes progressively extreme worldviews through curated information diets and social validation, creating psychological dependency before external platforms complete radicalization. This pipeline effect depends on Reddit’s role as a legitimate, accessible internet space where ideological grooming is indistinguishable from community bonding, enabling recruitment by stealth rather than overt proselytizing.