Is Self-Regulation Enough to Safeguard Democratic Debate?

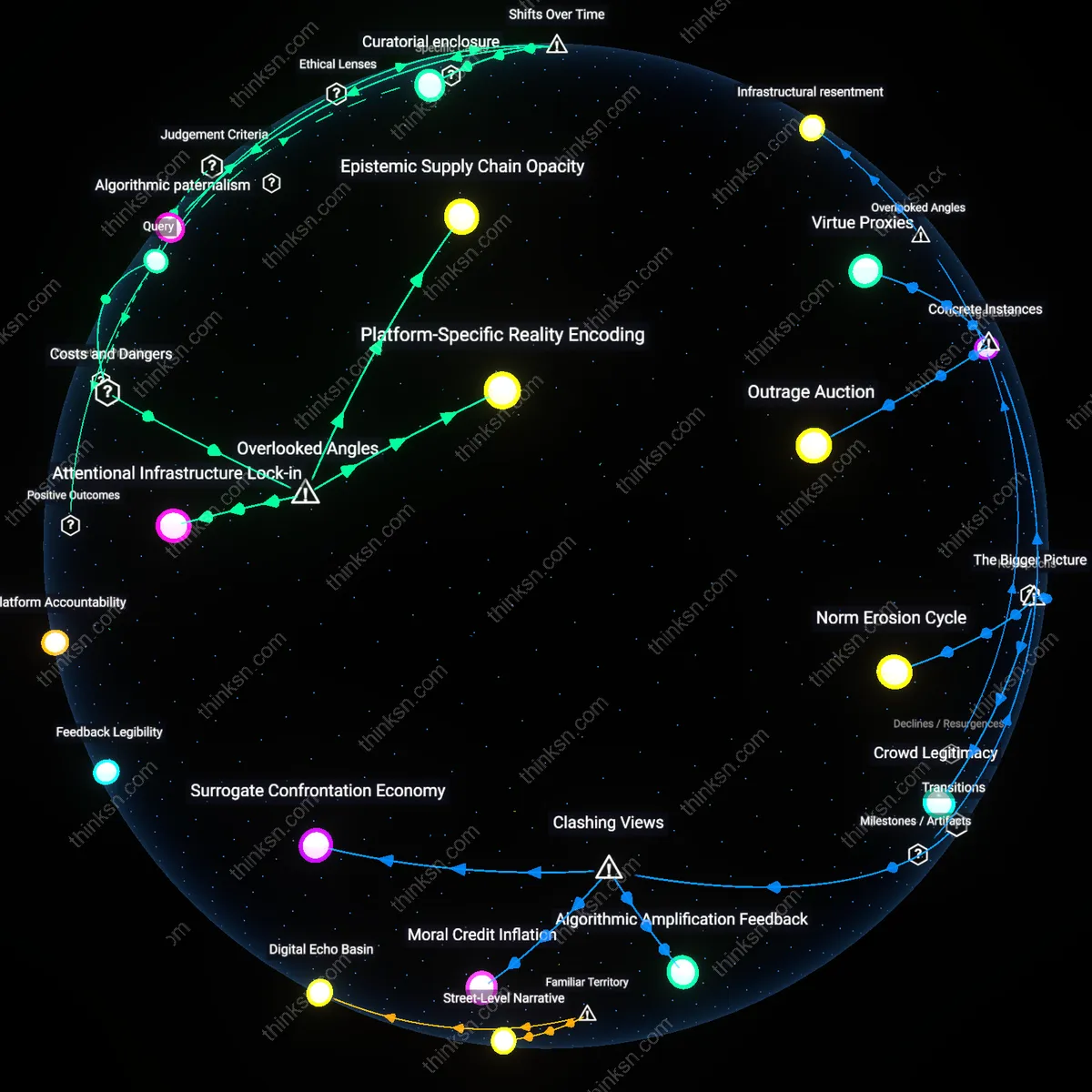

The analysis identifies eight key thematic connections.

Key Findings

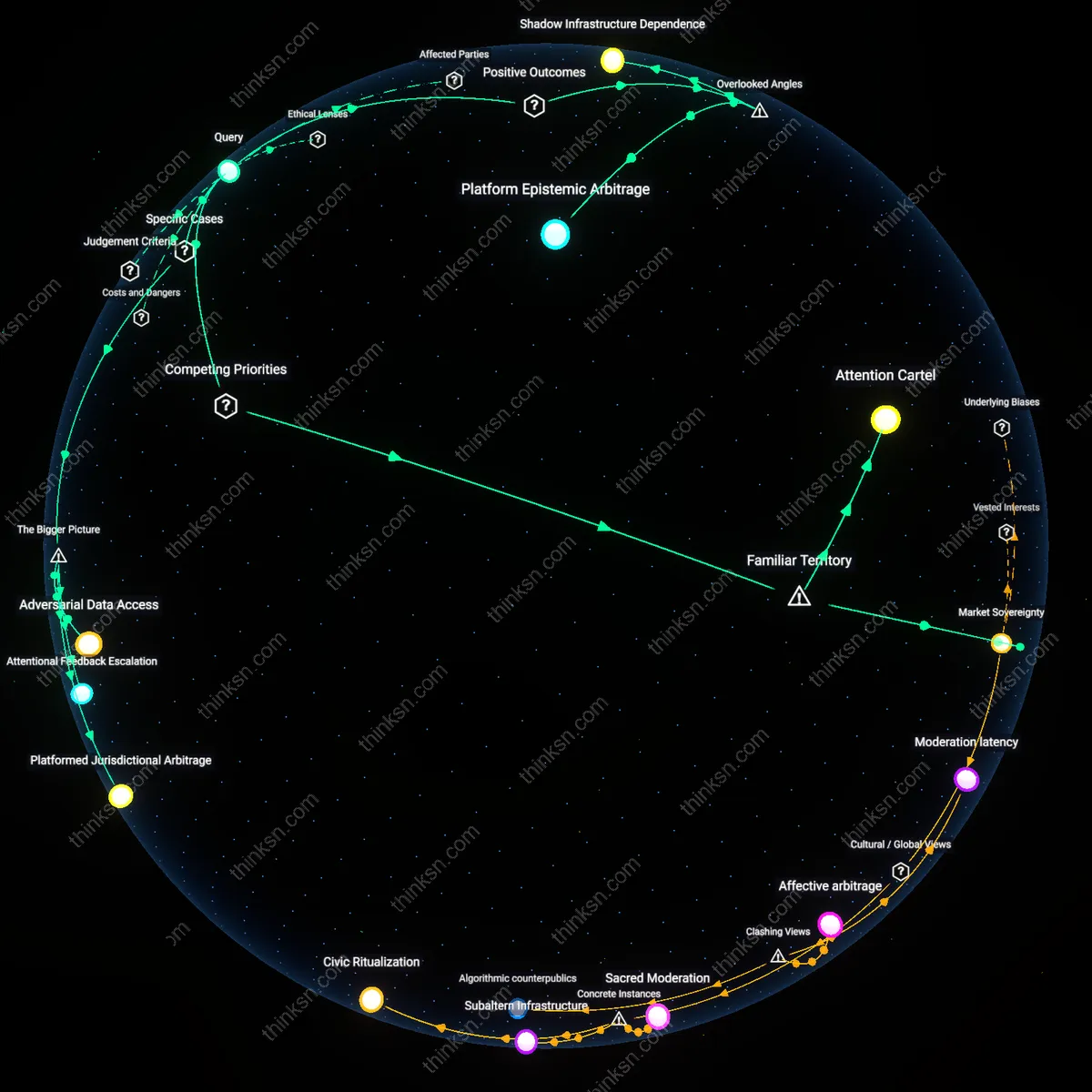

Platform Epistemic Arbitrage

Self-regulation by private platforms enhances democratic discourse by enabling cross-jurisdictional experimentation in content governance, which external oversight would homogenize. Platforms like Meta and TikTok operate under differing national speech norms, such as Germany’s restrictions on hate speech and India’s controls on insurgency-related content, and use those differences to test and refine moderation models that can later be adapted in politically fragile democracies. The result is a de facto laboratory for speech rules that democratic policymakers rarely acknowledge. This dynamic is overlooked because most analyses assume that regulatory divergence undermines democracy, when in fact it allows platforms to incubate nuanced responses to local democratic stressors without the policy rigidity that centralized oversight would impose.

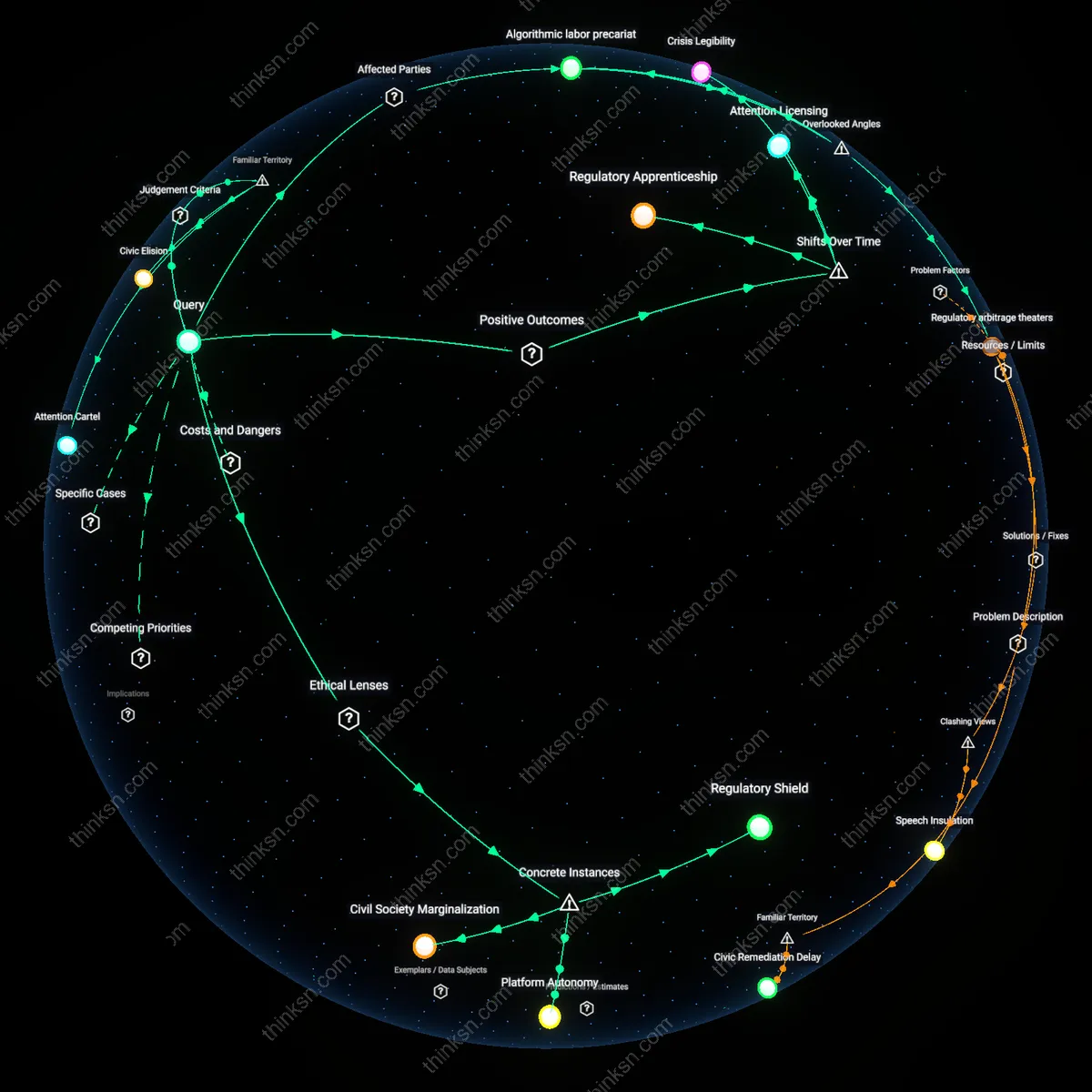

Modulation of Political Timeframes

Private platform self-regulation improves democratic resilience by lengthening the feedback loops of political accountability, often as an unintended side effect, which counters the standard critique that algorithms accelerate polarization. By intermittently suppressing or demoting certain types of viral content, for example during electoral periods, platforms insert artificial latency into the outrage cycle. That delay gives civil society and institutional actors time to organize counter-narratives or fact-checking efforts, as seen in Facebook’s temporary ranking adjustments during Brazil’s 2022 elections. This buffering role is rarely recognized because debate focuses on content removal rather than on slowing dissemination, which in weak institutional environments can protect the democratic process better than immediate takedowns or top-down regulation.
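The latency argument above can be made concrete with a toy model. The sketch below is purely illustrative and does not describe any platform's actual ranking system: `steps_to_reach`, `growth_per_step`, and `visibility` are hypothetical names, and the compounding-growth assumption is a deliberate simplification. It counts how many sharing rounds an item needs to reach a given audience, with and without demotion.

```python
def steps_to_reach(threshold, growth_per_step, visibility=1.0, start=100.0):
    """Count sharing rounds until reach meets `threshold` (hypothetical toy model).

    Reach compounds each round by (1 + growth_per_step * visibility).
    `visibility` below 1.0 stands in for demotion; 1.0 means no intervention.
    """
    if growth_per_step * visibility <= 0:
        raise ValueError("effective growth must be positive or reach never grows")
    reach = start
    steps = 0
    while reach < threshold:
        reach *= 1.0 + growth_per_step * visibility
        steps += 1
    return steps


# Assumed illustrative numbers: 50% growth per round, demotion halves visibility.
baseline = steps_to_reach(1_000_000, growth_per_step=0.5)
demoted = steps_to_reach(1_000_000, growth_per_step=0.5, visibility=0.5)
print(f"rounds without demotion: {baseline}, with demotion: {demoted}")
```

In this model the demoted item still reaches the same audience, only later. That is the sense in which demotion buys time for counter-narratives rather than removing content outright.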

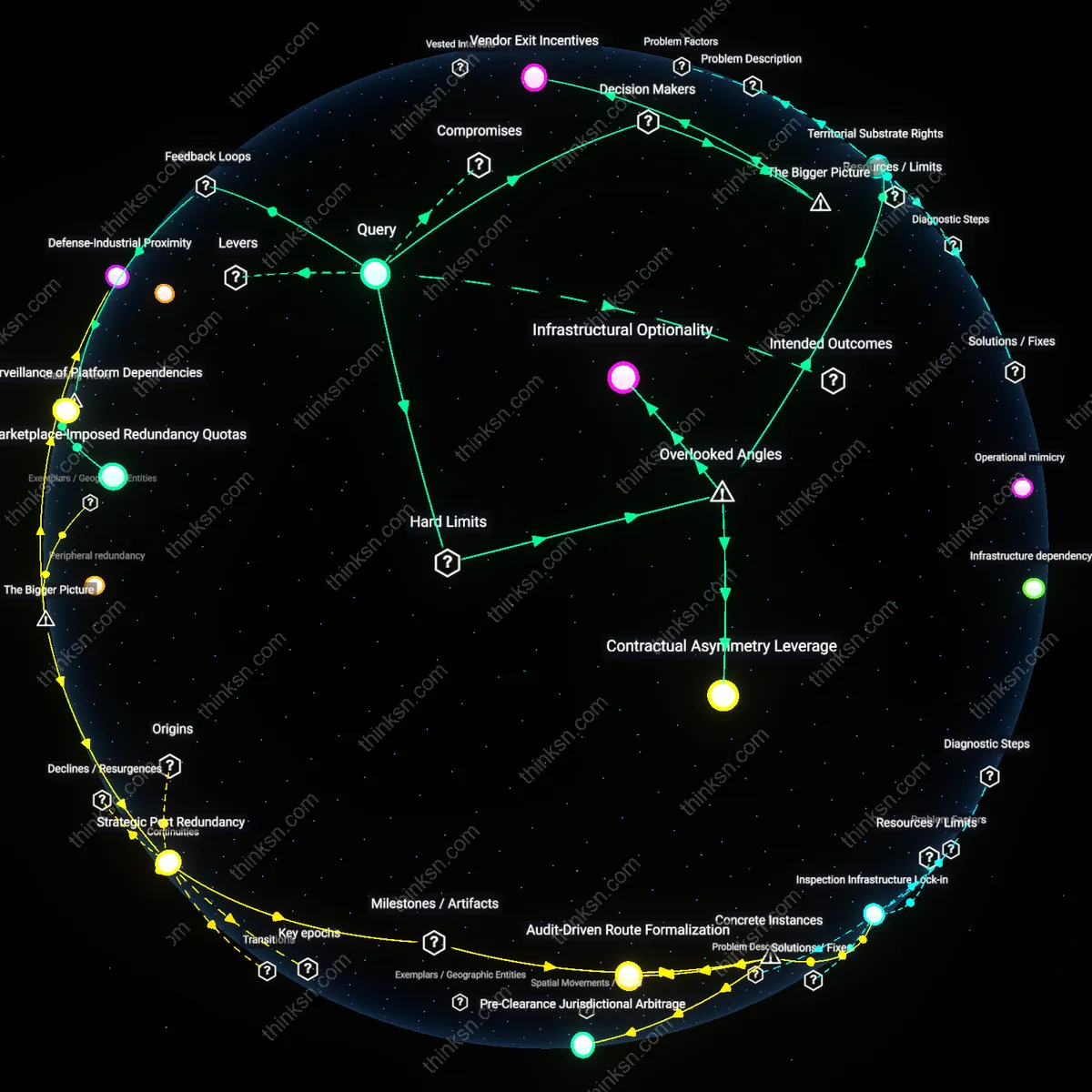

Shadow Infrastructure Dependence

External oversight becomes structurally necessary not because platforms are unaccountable but because their self-regulation depends on non-platform actors whose work goes largely unseen, such as local fact-checkers in Kenya or volunteer moderator collectives in the Philippines. These actors absorb regulatory labor without institutional recognition, so platform governance becomes fragile when their networks dissolve. The hidden reliance creates a systemic vulnerability: democratic safeguards fail not from malice or algorithmic bias but from the collapse of informal, underfunded ecosystems, which external oversight could stabilize without imposing direct control. This dimension is overlooked because most regulation debates fixate on platforms’ internal policies and ignore the distributed cognitive labor that actually adjudicates content at scale.

Market Sovereignty

Private platforms prioritize profit-driven engagement over civic integrity because their algorithms reward outrage and polarization, which increase user retention and ad revenue. This mechanism, embedded in the core architecture of platforms like Meta and X, systematically undermines democratic discourse: because engagement metrics govern resource allocation, divisive content is amplified regardless of superficial content moderation policies. The non-obvious insight is that self-regulation fails by design rather than through incompetence. Platform sovereignty aligns with market logic, not the public interest, so democratic safeguards remain secondary to growth imperatives.

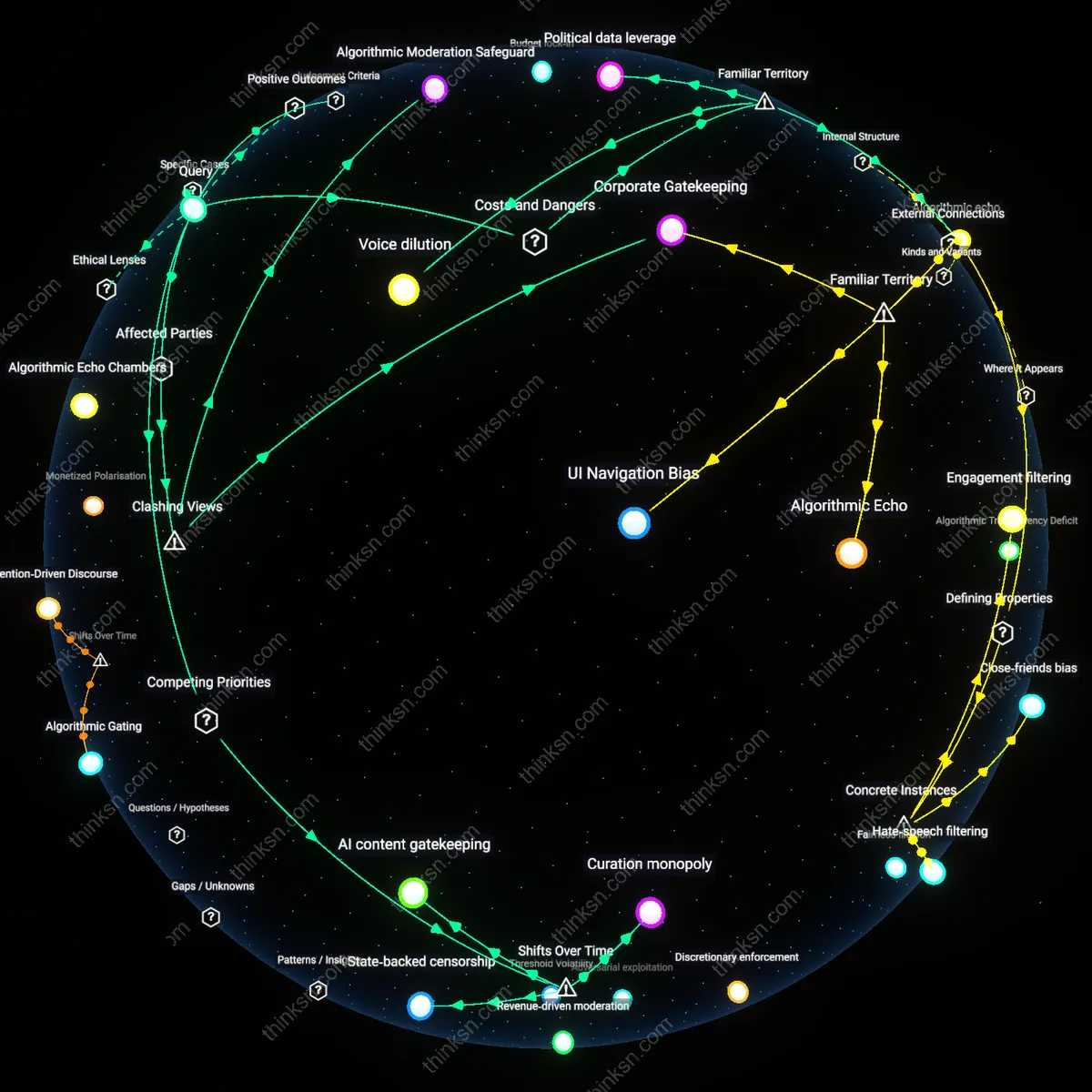

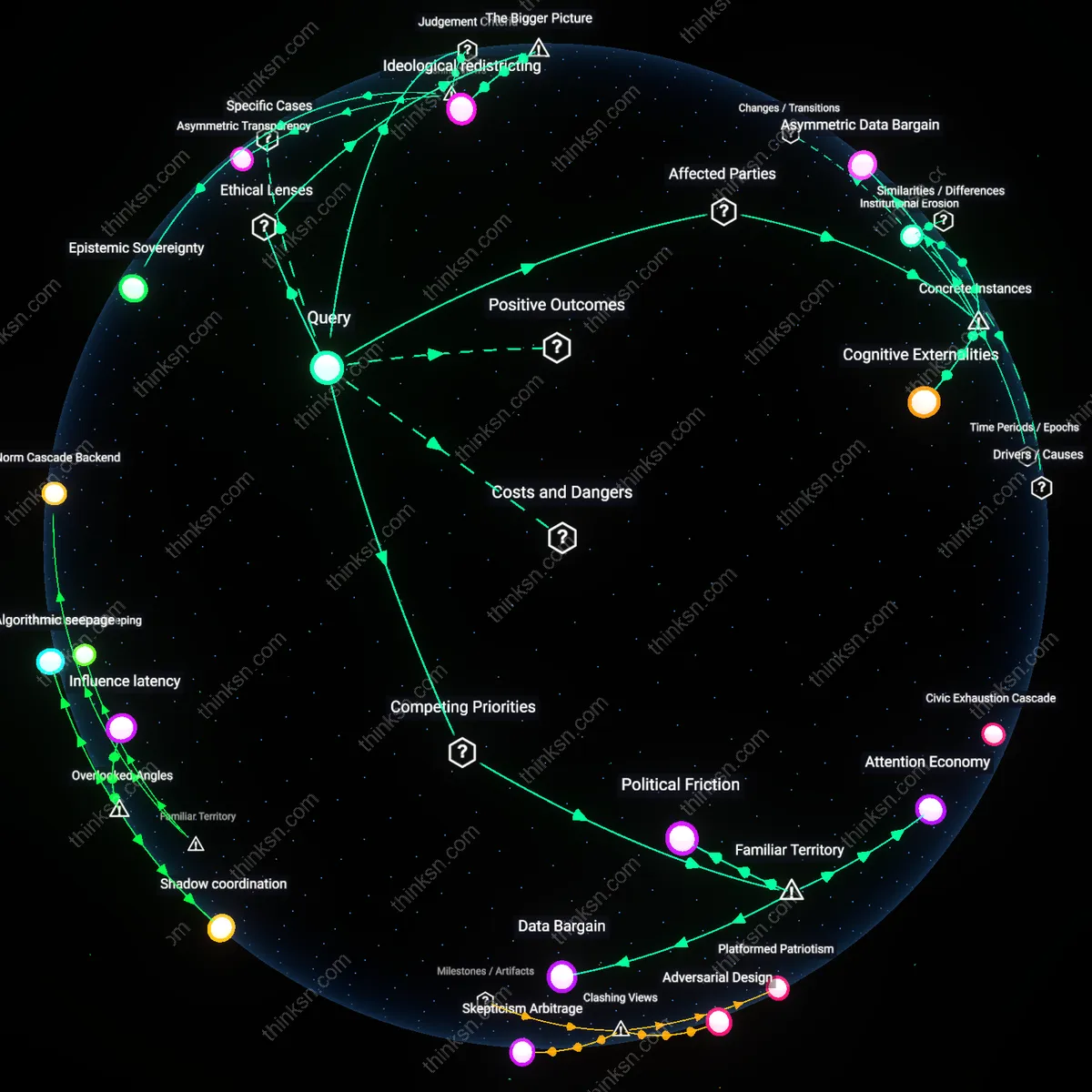

Attention Cartel

A handful of platforms monopolize public attention, allowing them to set the terms of political visibility and deliberation without civic accountability. Platforms like YouTube, Facebook, and TikTok control not just speech but the conditions under which speech becomes visible, through recommendation systems, search rankings, and notification loops, so they effectively act as unauditable gatekeepers of discourse. Platform power is widely recognized, yet the underappreciated reality is that users’ perceived freedom of choice masks systemic control over what is seen and when. External oversight is therefore necessary to counteract an algorithmic centralization that mimics state-like authority without democratic checks.

Adversarial Data Access

External oversight is necessary because private platforms like Meta and X (formerly Twitter) systematically restrict independent researchers’ access to the data needed to audit political disinformation campaigns, such as the interference in the 2016 U.S. election by accounts linked to the Internet Research Agency (IRA). The result is content moderation at societal scale that no outside party can hold to account. The mechanism operates through centralized control of platform APIs and through legal and technical barriers that prevent transparency despite the public interest in democratic integrity, so data gatekeeping functions as a structural substitute for governance. The non-obvious point is that accountability fails not for lack of will among regulators but because the evidence required to produce it has been deliberately enclosed.

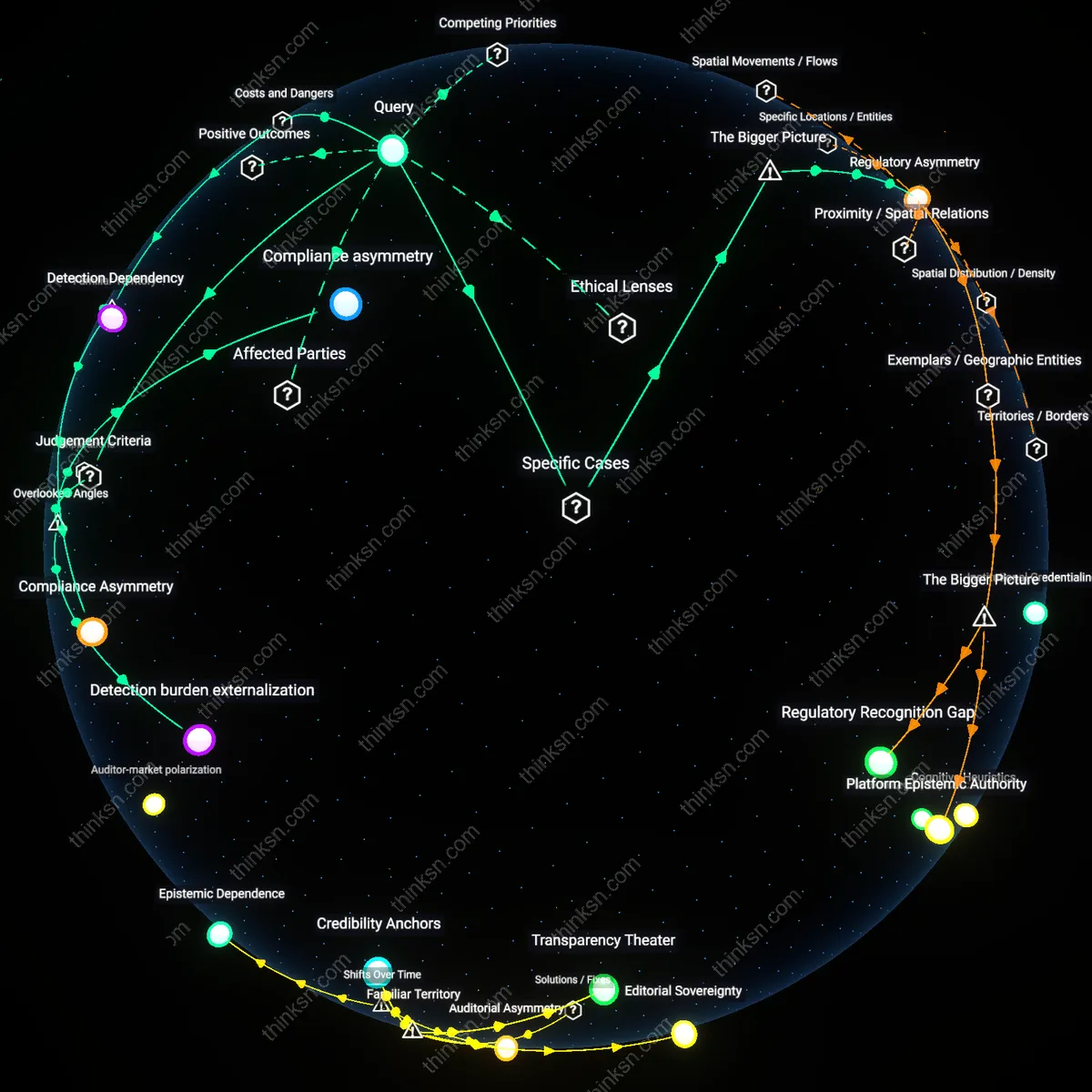

Platformed Jurisdictional Arbitrage

Self-regulation fails because global platforms such as YouTube and Facebook apply inconsistent enforcement standards across regions. Myanmar is the clearest case: hate speech proliferated on Facebook ahead of the Rohingya genocide, while internal warnings were subordinated to growth-focused algorithms. This occurs through a systemic misalignment between localized democratic harms and centralized, profit-driven governance structures that treat speech risks as operational trade-offs rather than ethical ones. The underappreciated dynamic is that platforms function as de facto states without citizenship or accountability, exploiting jurisdictional fragmentation to delay or dilute the costs of regulation.

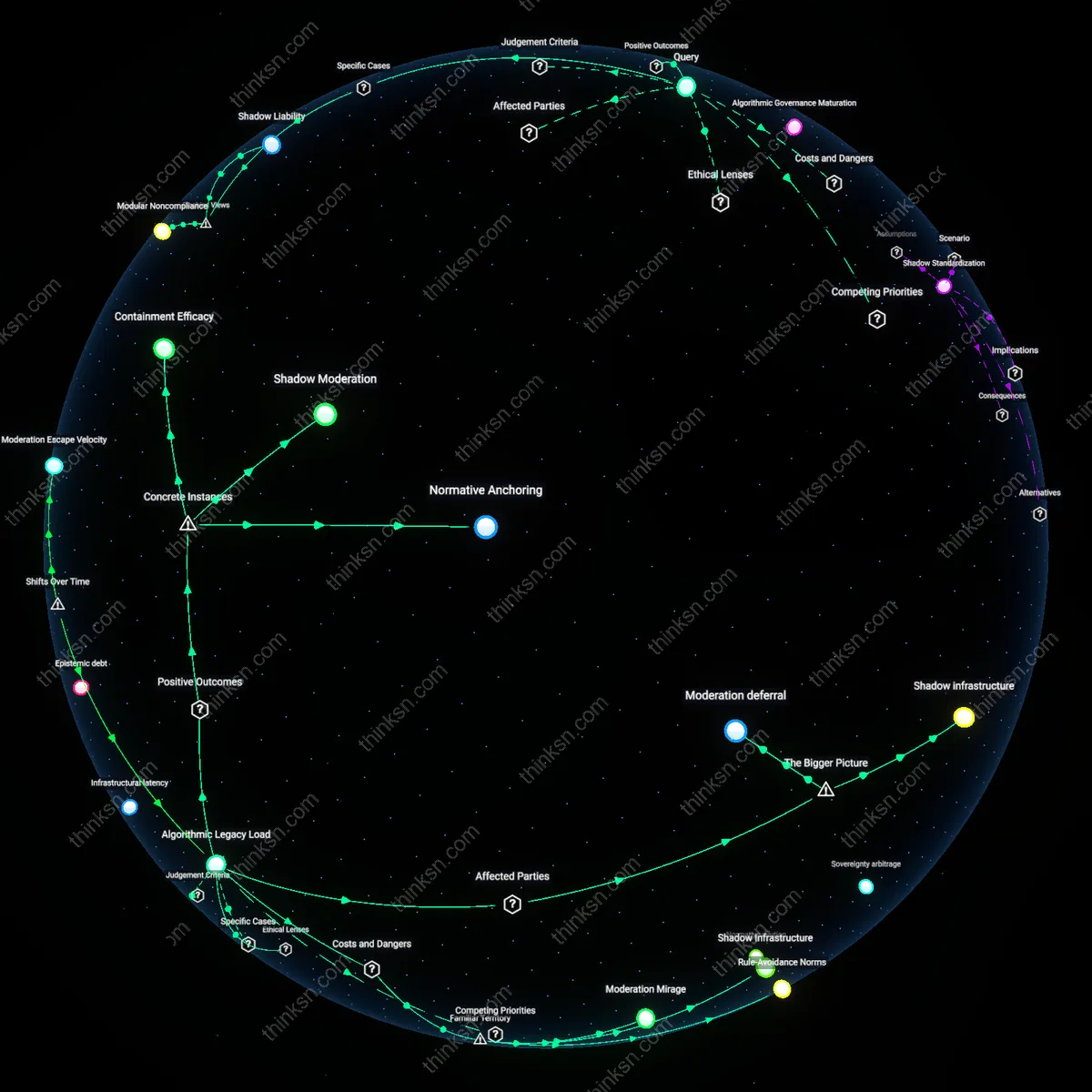

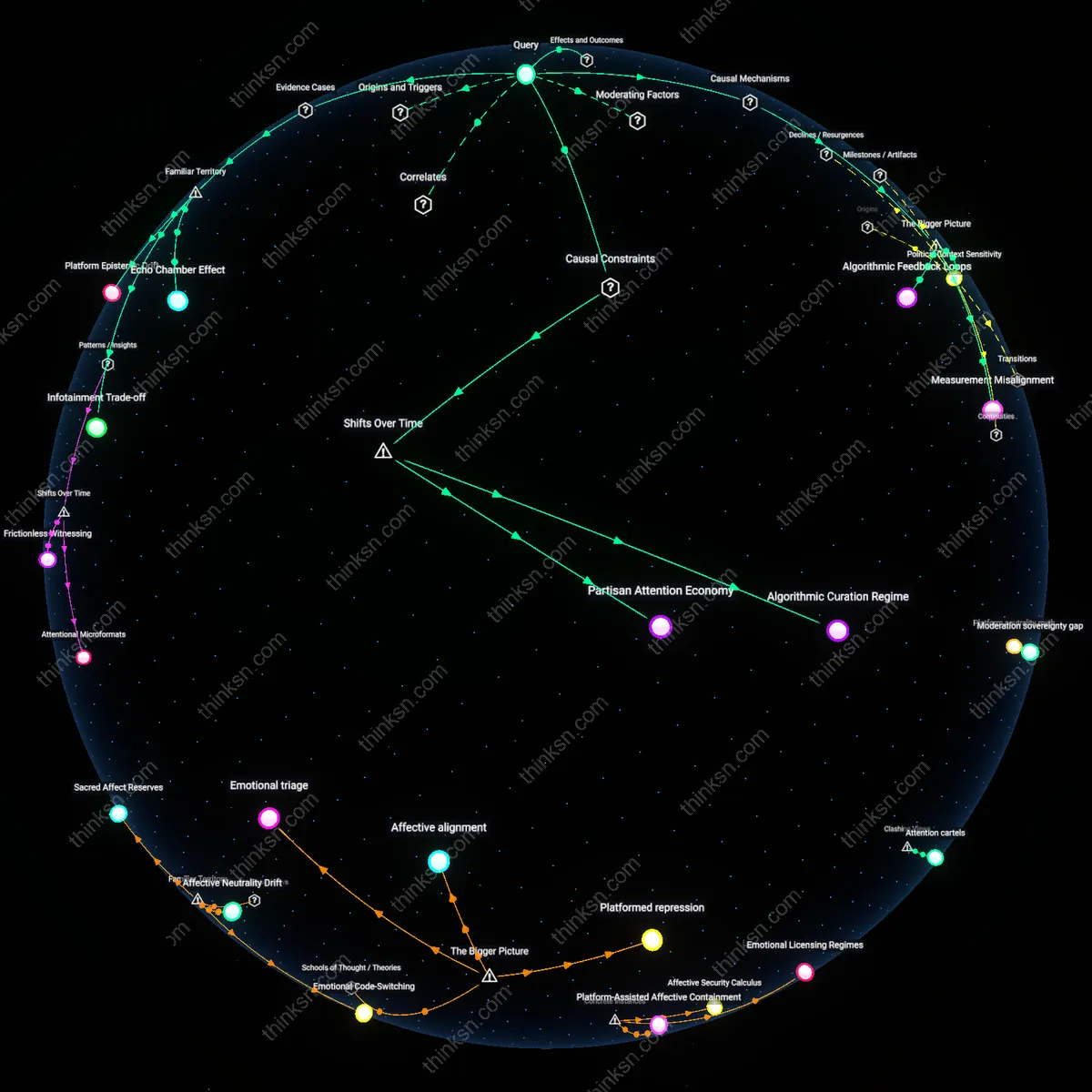

Attentional Feedback Escalation

External oversight is necessary because algorithmic recommendation systems at firms like TikTok and Google amplify polarizing content to maximize engagement, as observed in Brazil’s 2022 election cycle, where misinformation-laden videos reached wider audiences than verified sources because of engagement-based ranking. The mechanism is a closed feedback loop between user behavior and content promotion: democratic discourse is eroded not by deliberate censorship but by invisible reward structures embedded in machine learning models. The non-obvious insight is that harm arises not from content per se but from the platform’s structural incentive to convert civic participation into behavioral surplus.
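The closed loop described above can be illustrated with a small deterministic simulation. This is a sketch under stated assumptions, not a model of any real recommender: the function `simulate_feedback`, the proportional allocation rule, and the engagement rates are all hypothetical. Each round, impressions are split in proportion to accumulated engagement, and the engagement earned in that round feeds back into the next allocation.

```python
def simulate_feedback(engagement_rates, rounds=20, impressions_per_round=1000.0):
    """Toy engagement-based ranking loop (hypothetical, for illustration only).

    engagement_rates[i] is the assumed fraction of impressions on item i that
    turn into engagement. Returns the impression share of every item at the
    start of each round.
    """
    scores = [1.0] * len(engagement_rates)  # all items start with equal scores
    history = []
    for _ in range(rounds):
        total = sum(scores)
        shares = [s / total for s in scores]
        history.append(shares)
        # Engagement earned this round raises next round's score (the loop).
        for i, rate in enumerate(engagement_rates):
            scores[i] += impressions_per_round * shares[i] * rate
    return history


# Assumed rates: item 0 is "polarizing" and engages twice as often as item 1.
history = simulate_feedback([0.10, 0.05])
print(f"share of item 0, first round: {history[0][0]:.2f}")
print(f"share of item 0, last round:  {history[-1][0]:.2f}")
```

Under these assumptions the more engaging item's share of impressions grows every round even though nothing is removed or censored, which is the erosion-by-reward-structure dynamic the paragraph describes.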