Surveillance Theater

Fringe groups in authoritarian-leaning regions interpret online non-intervention as a calculated setup because periods of inaction tend to precede targeted crackdowns that draw on accumulated data, as in China’s tolerance of dissent in niche forums before mass arrests during political transitions. On this reading, apparent neglect is a data-harvesting mechanism embedded in long-term state surveillance infrastructure, not passive tolerance. The non-obvious element is that government inaction functions as an active intelligence-gathering phase: users are allowed to self-identify as dissidents under a false premise of anonymity. Most people overlook this because they associate surveillance with constant visibility, not strategic absence.

Neglect Spiral

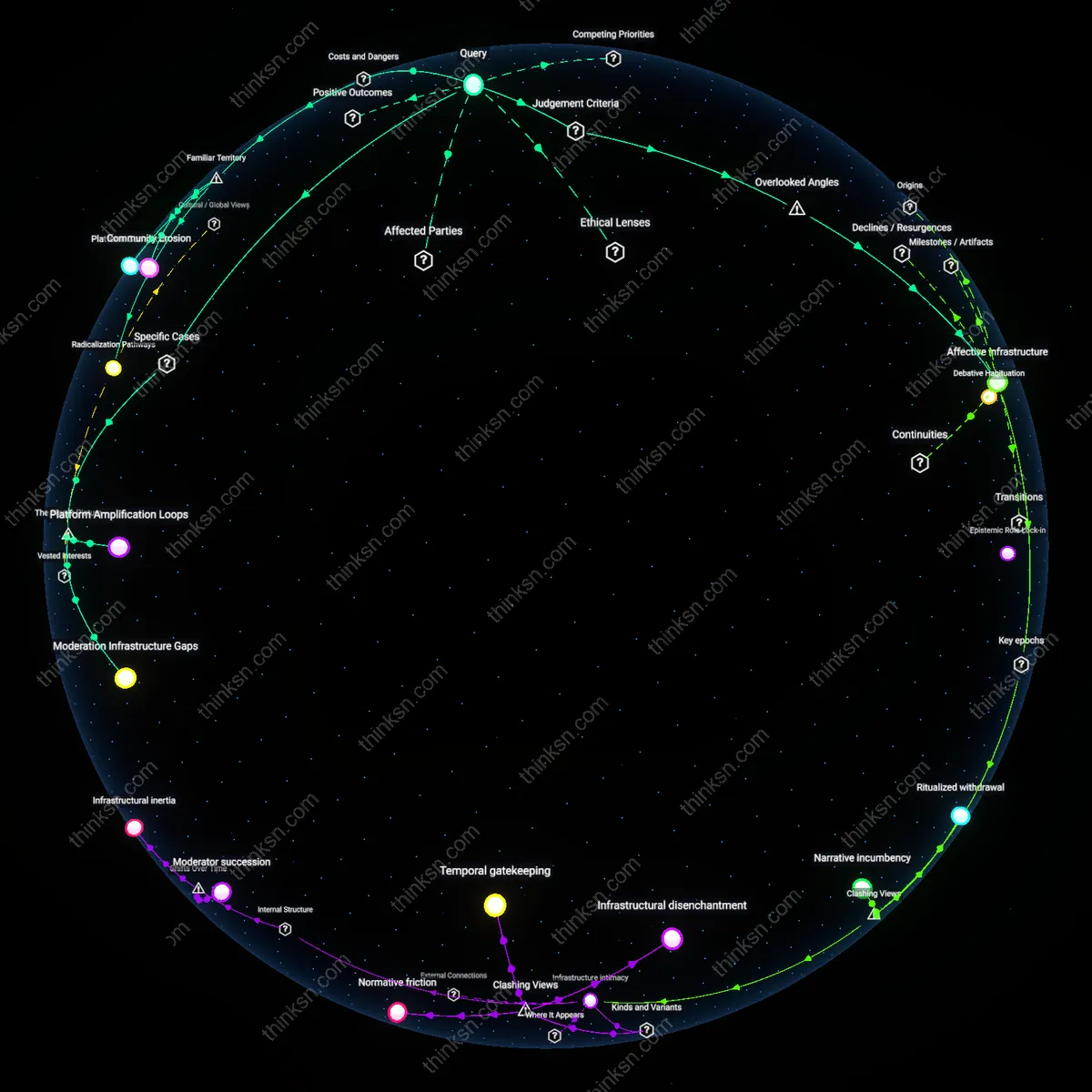

In economically marginalized regions such as post-industrial parts of the U.S. Rust Belt, fringe groups interpret online non-intervention as governmental neglect. Extremist content circulating in these areas goes unmoderated because law enforcement is under-resourced and digital policy is largely absent, a condition perpetuated by federal disinvestment in rural broadband governance. The underappreciated reality is that this neglect reinforces group cohesion through shared resentment, turning digital abandonment into a catalyst for ideological radicalization. Public discourse obscures this outcome by treating online regulation as a matter of urban-centered disinformation rather than structural disengagement.

Tolerance Mirage

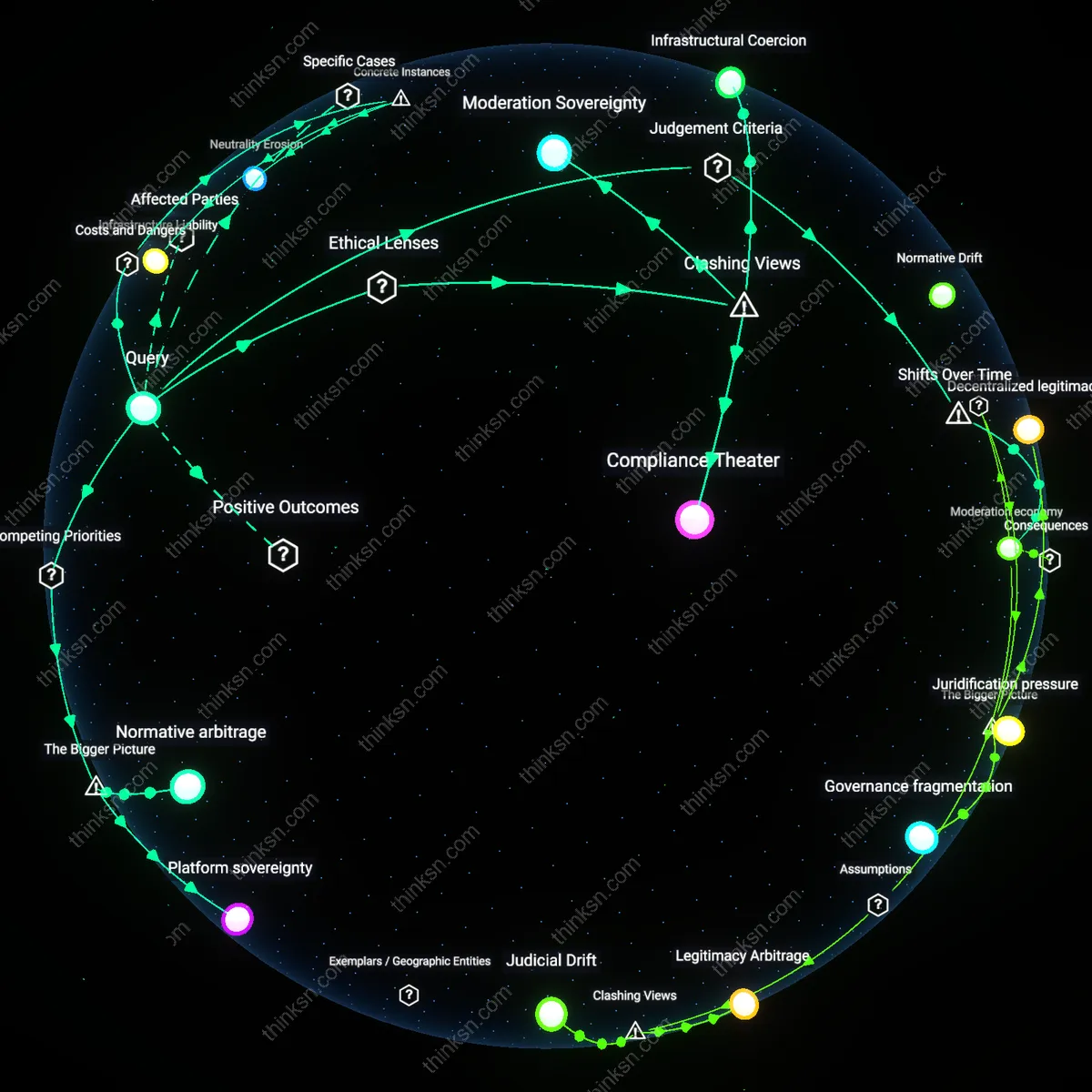

In liberal democracies such as Germany, far-right groups interpret lax enforcement against their online activity as implicit tolerance, even though legal frameworks like NetzDG mandate removal of hate speech. Inconsistent platform-level enforcement creates the perception of state complicity, particularly when counter-messaging is absent during peak recruitment periods. The overlooked mechanism is that policy precision (laws on the books) and operational ambiguity (uneven application) diverge, producing a false signal of acceptance. This gap is rarely acknowledged in public debate, which tends to frame government action in binary terms, either full censorship or full freedom, and so misses the psychological impact of erratic enforcement.

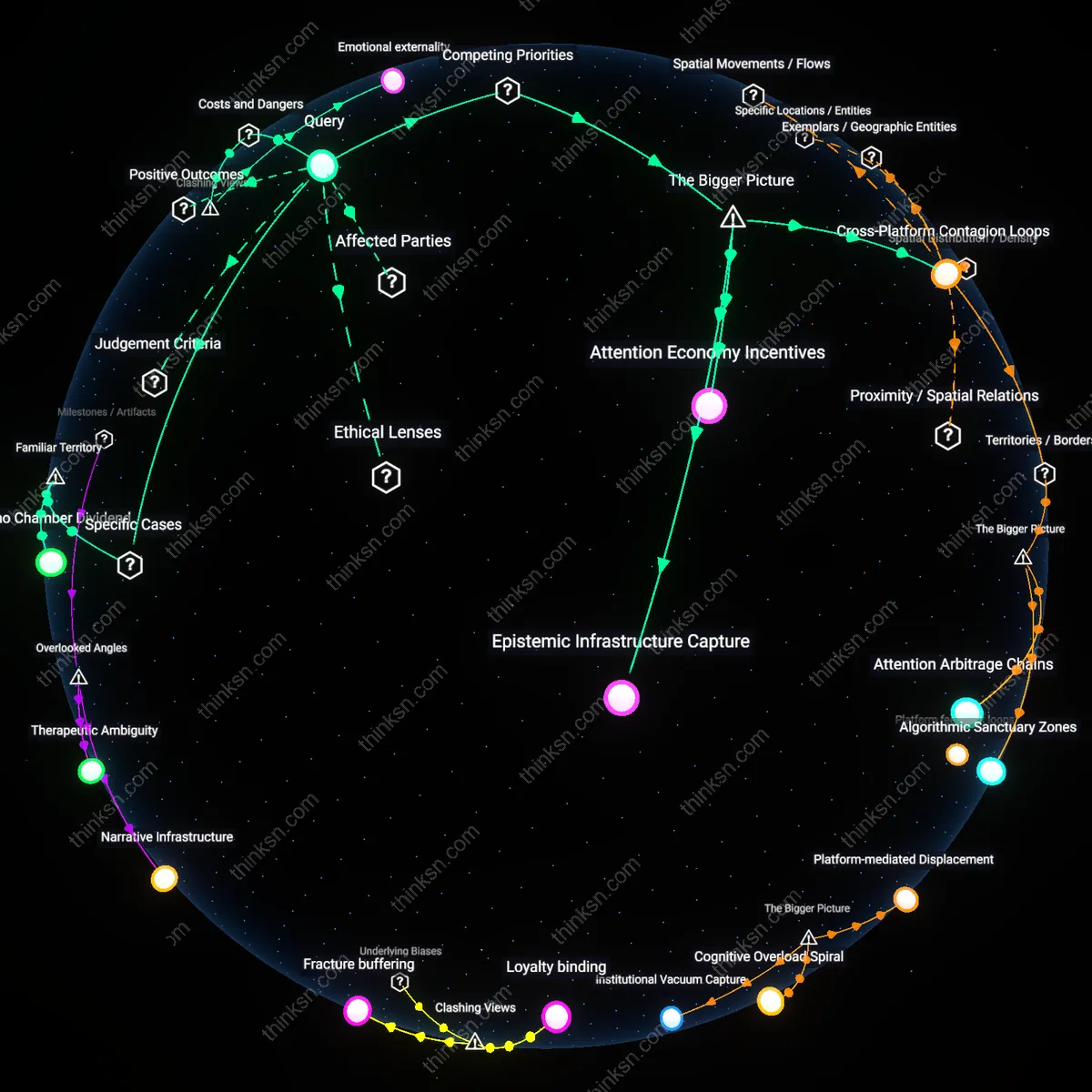

Epistemic Asymmetry Regime

Fringe groups in authoritarian-leaning regions interpret government non-intervention online as an epistemic asymmetry regime, where state silence functions as implicit authorization to develop alternative information ecosystems while remaining deniably unacknowledged. This mechanism operates through the strategic ambiguity of official inaction, allowing groups to propagate conspiratorial or extremist narratives under the protection of non-sanction rather than endorsement, as seen in Russian-speaking far-right Telegram communities that thrive due to delayed or absent regulatory response. The non-obvious reality is that absence of suppression can be a tool of epistemic control—permitting distortion to accumulate until it undermines institutional credibility, a dynamic overlooked because most analyses focus on overt censorship rather than calculated permissiveness.

Civic Abandonment Feedback Loop

Marginalized left-wing collectives in economically distressed Western regions, such as rural Pacific Northwest anarchist networks, interpret government non-intervention as civic abandonment—a withdrawal so total that even digital oversight is absent, reinforcing their framing of the state as functionally defunct. This perception fuels autonomous organizing but also paradoxically entrenches disengagement, as the lack of regulatory presence is read not as freedom but as confirmation of systemic neglect, breeding a feedback loop where communities cease expecting state accountability altogether. The overlooked dimension is that digital non-intervention can erode civic imagination itself, making restoration of trust structurally harder than in contexts of visible repression, which at least confirm the state’s presence.

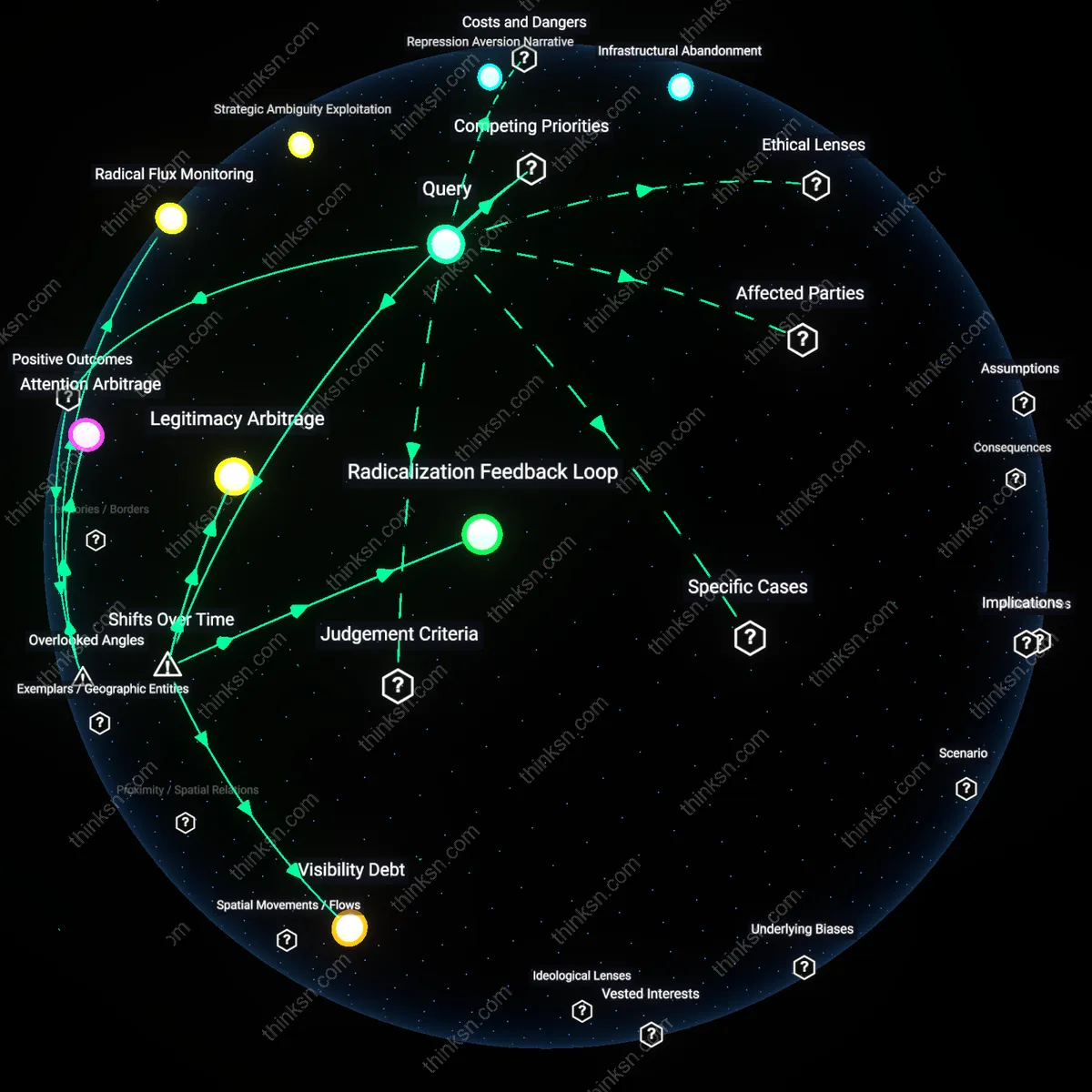

Legitimacy Arbitrage Mechanism

Right-libertarian encryption communities in decentralized internet enclaves like the Dark Web interpret non-intervention as a legitimacy arbitrage mechanism, wherein state forbearance is exploited to build parallel legal epistemologies—such as blockchain-based 'private law' systems—that mimic jurisdictional authority while avoiding state recognition. Operating through the residual legal vacuum, these groups convert silence into sovereign pretense, exemplified by autonomous digital courts on decentralized forums that enforce 'binding' rulings without state enforcement. The underappreciated dynamic is that non-intervention enables jurisdictional mimicry, where fringe actors don't just resist the state but replicate its functions in a way that destabilizes the monopoly on adjudication—something most analyses miss by focusing on dissent rather than substitution.

Digital Sovereignty Legacy

After the 2013 Snowden revelations, non-Western states like China and Iran reframed government non-intervention online as a facade for Western digital imperialism, shifting away from earlier post-Cold War perceptions of the internet as a neutral public good. This reinterpretation activated longstanding anticolonial media policies, repurposing Cold War-era suspicions of foreign information dominance into algorithmic governance models that treat silence as sabotage. The mechanism, in which state-controlled media institutions redefine 'non-intervention' as asymmetric warfare, shows how digital policy is filtered through inherited geopolitical distrust: in postcolonial cyber doctrines, disengagement is read not as absence but as strategic positioning.

Neglect as Secular Sacrament

In post-Soviet Eastern Orthodox communities after the 2000s, fringe religious groups began interpreting state non-intervention in online blasphemy and sacrilege as a metaphysical withdrawal of moral guardianship, in sharp contrast to the pre-1991 Soviet hyper-regulation of religious expression. This shift transformed state silence from a sign of liberalization into a sign of eschatological decay, in which online chaos became evidence of spiritual abandonment by both church and state. The mechanism, parish networks using social media to document state inaction on digital desecration, shows how digital governance gaps are sacralized when secular transitions fail to deliver moral order, and points to a latent theology of governance in digital passivity.

Laissez-Faire Fundamentalism

Following the 1996 Telecommunications Act in the U.S., far-right libertarian and militia groups reinterpreted federal non-intervention in early commercial internet spaces as a restoration of constitutional originalism, reversing their 1980s-era suspicion of federal technological overreach. This pivot turned regulatory restraint into dogma: the absence of moderation was seen not as neglect but as an affirmation of natural market sovereignty, mirroring frontier homesteading logic in digital space. The mechanism, crypto-forum moderation policies that mimic constitutional absolutism, shows how American exceptionalist narratives have been reverse-engineered to sacralize inaction, producing a fundamentalist ideology in which governance voids become sites of ideological purity.

Strategic Abandonment

Fringe anti-vaccination networks in Quebec during the 2022 Canadian convoy protests interpreted Health Canada’s limited online moderation as strategic abandonment, in which deliberate inaction allowed dissent to amplify beyond the reach of public health messaging. This perception was sustained by Public Safety Canada’s delayed response to coordinated disinformation on platforms like Gettr and Telegram. Non-intervention thus functioned not as neutrality but as an enabling condition for movement cohesion, benefiting far-right influencers who gained authority by framing themselves as resistance leaders amid institutional silence.

Absorptive Illegitimacy

In 2016, Macedonian teen webmasters in Veles exploited U.S. social media platforms’ non-intervention in foreign political content to mass-produce fake news aimed at the American election. Local actors came to view American regulatory passivity not as tolerance but as absorptive illegitimacy: a system in which democratic erosion in one jurisdiction becomes a resource for economic gain in another. The episode shows how digital peripheries can weaponize the core’s hands-off stance, with ad-revenue incentives aligning with political chaos abroad and normalizing state inaction as a form of extractive opportunity.

Opacity Premium

Uyghur diaspora groups that interpreted China’s apparent lack of WeChat censorship outside its borders as a calculated setup discovered that encrypted messages were still being monitored through cross-border data leaks involving Hong Kong-based servers. This exposed a dual-state logic in which visible non-intervention masks a submerged surveillance infrastructure. The opacity premium, the value derived from the ambiguous threshold between intervention and neglect, enables authoritarian overreach while preserving plausible deniability, benefiting state security apparatuses that leverage perceived leniency to entrap dissidents operating abroad.

Strategic Ambiguity Exploitation

Fringe anti-regulatory tech lobbies in the U.S. interpret online non-intervention as a green light to expand surveillance infrastructure, justifying inaction as ideological alignment with libertarian digital governance. This interpretation is sustained not by formal policy but by deliberate regulatory delay from telecommunications regulators and by the revolving door between FCC leadership and Silicon Valley compliance teams. Together these create a permissive environment in which de facto tolerance becomes a tool for profit-driven data extraction under the guise of non-interference. The non-obvious mechanism is that governmental 'inaction' is actively produced and maintained by actors who benefit from ambiguous oversight, transforming neglect into a predictable operational advantage.

Repression Aversion Narrative

In Hong Kong, pro-democracy digital activists perceive Beijing’s selective non-intervention in certain cross-border forums as tactical withdrawal, not tolerance. Because the state demonstrated its capacity for real-time censorship during the 2019 protests, current lapses are interpreted as intelligence-gathering opportunities: allowing limited discourse enables the identification and subsequent neutralization of dissident networks, a pattern reinforced by the PRC’s broader strategy of calibrated repression in semi-autonomous zones. The underappreciated dynamic is that perceived government absence functions as a signal within authoritarian systems precisely because it violates established patterns of control, making non-intervention itself a detectable instrument of statecraft rather than mere omission.

Infrastructural Abandonment

Indigenous digital collectives in the Amazon Basin view the Brazilian federal government’s non-intervention in rural connectivity as structural neglect rooted in colonial logics of resource extraction. In their account, the absence of broadband regulation correlates with unregulated mining and agribusiness expansion enabled by weakened environmental oversight, a causal chain visible in how satellite internet blackouts coincide with incursions by illegal logging syndicates protected by local political patrons. The systemic insight is that digital invisibility is not passive: it aligns with extractive economies that depend on isolating communities from legal recourse and global scrutiny, turning online absence into a precondition for physical exploitation.