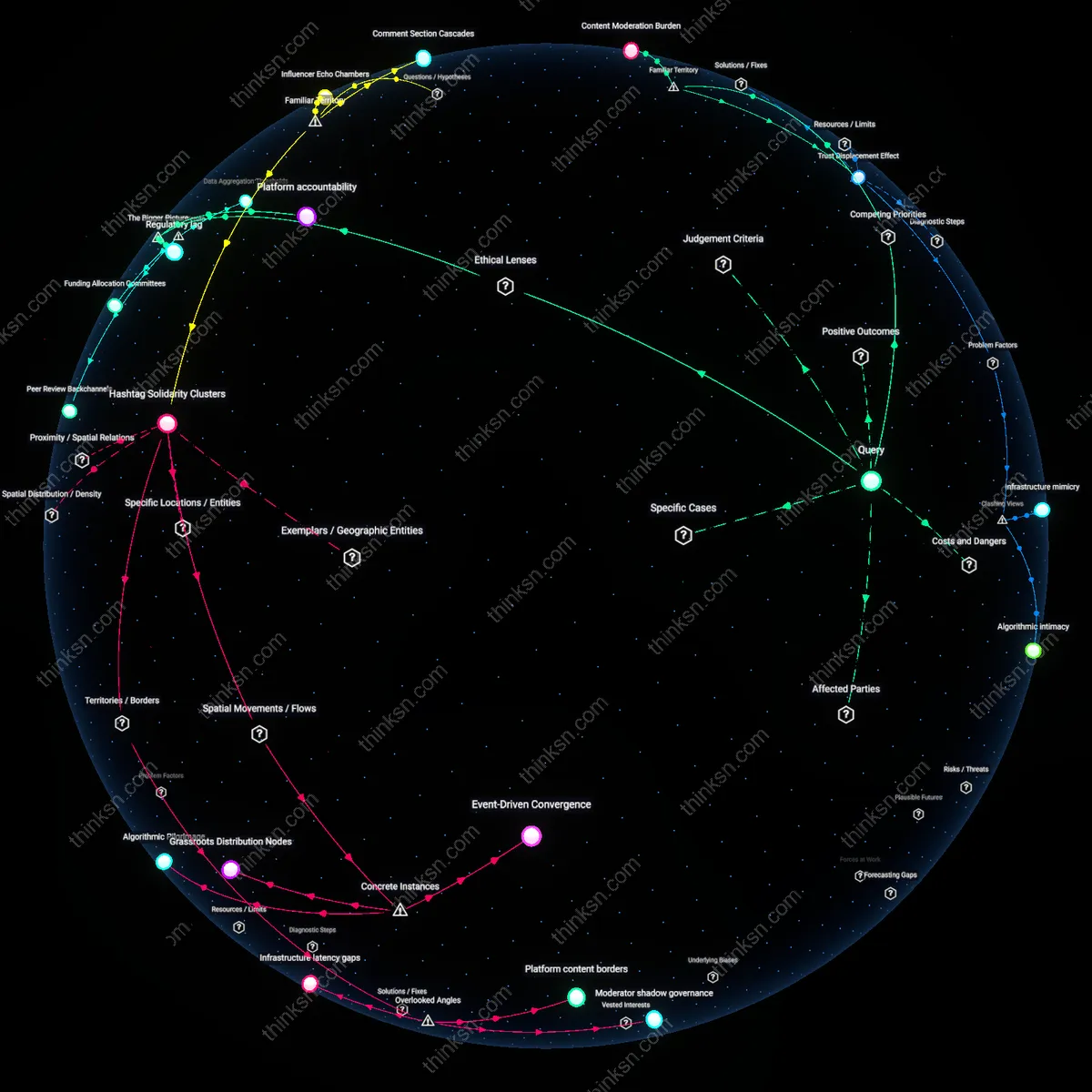

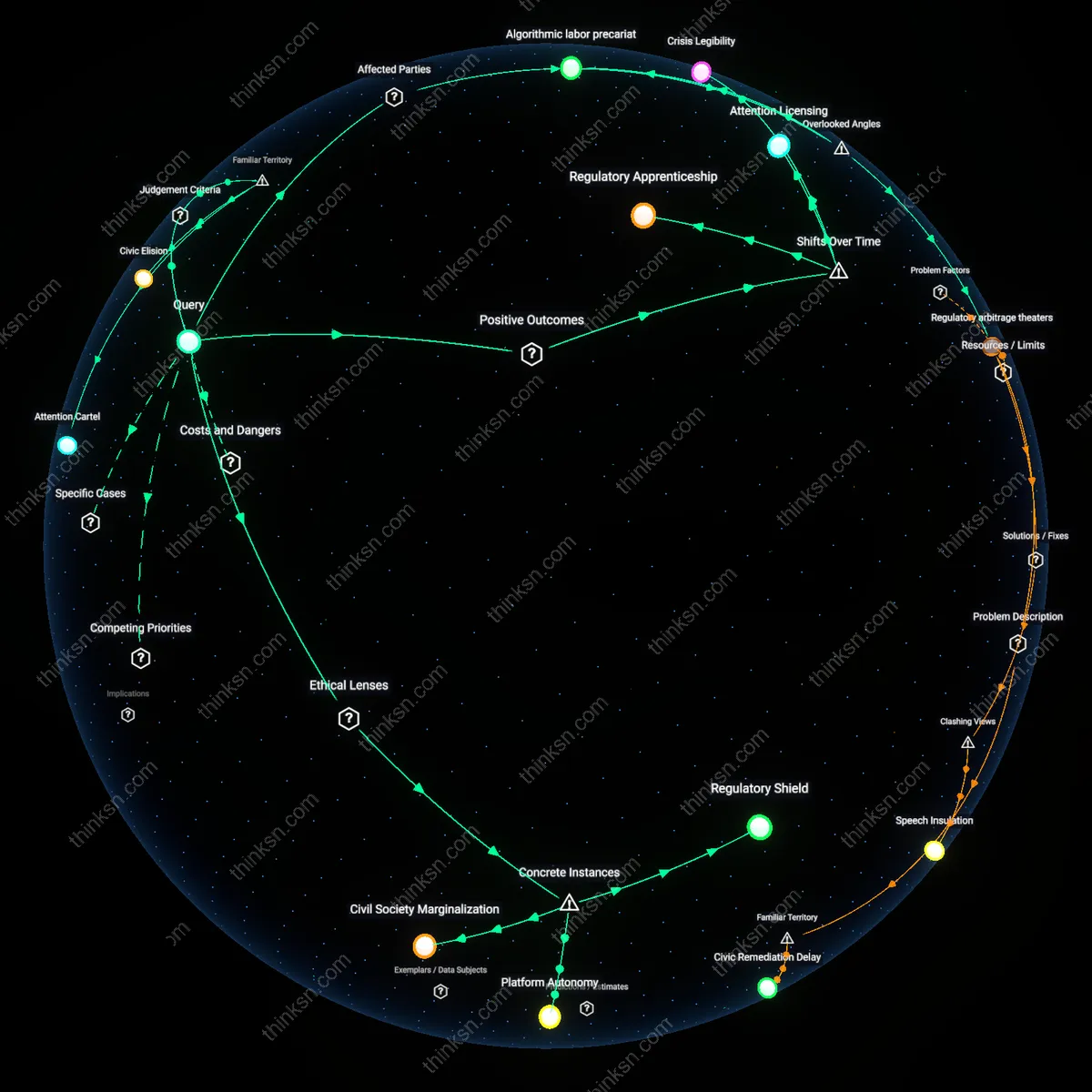

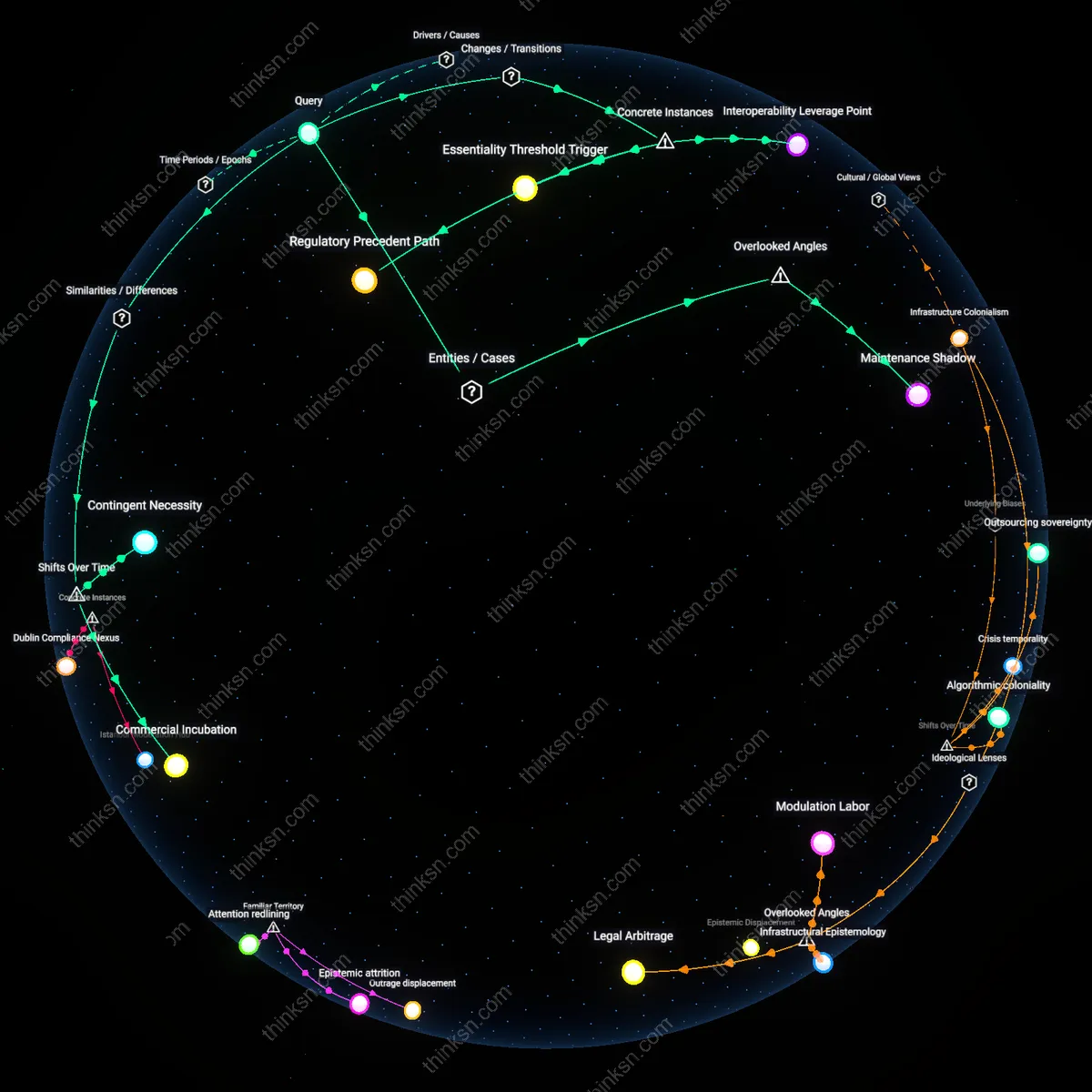

Does More Moderation Stifle Marginalized Voices?

Analysis reveals 7 key thematic connections.

Key Findings

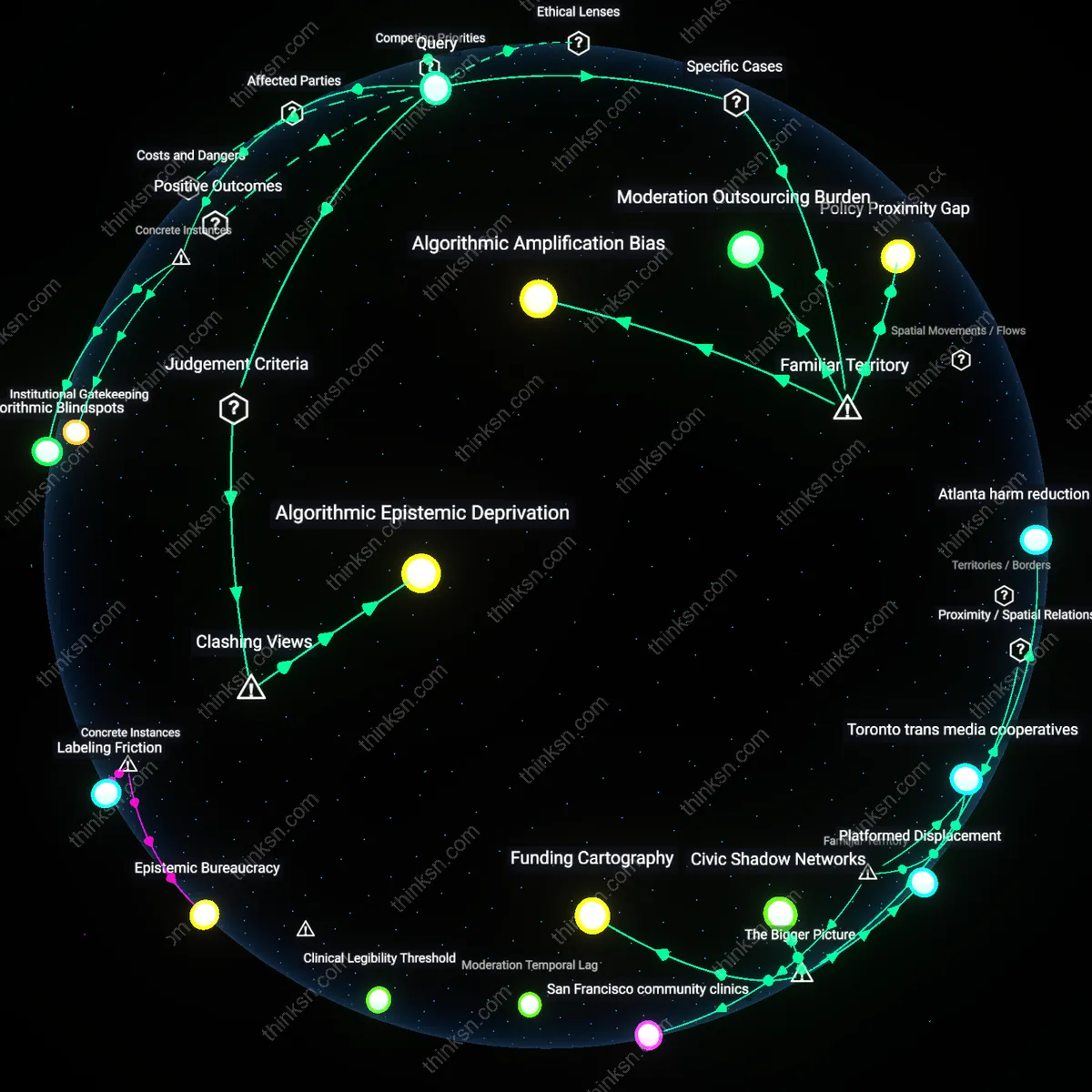

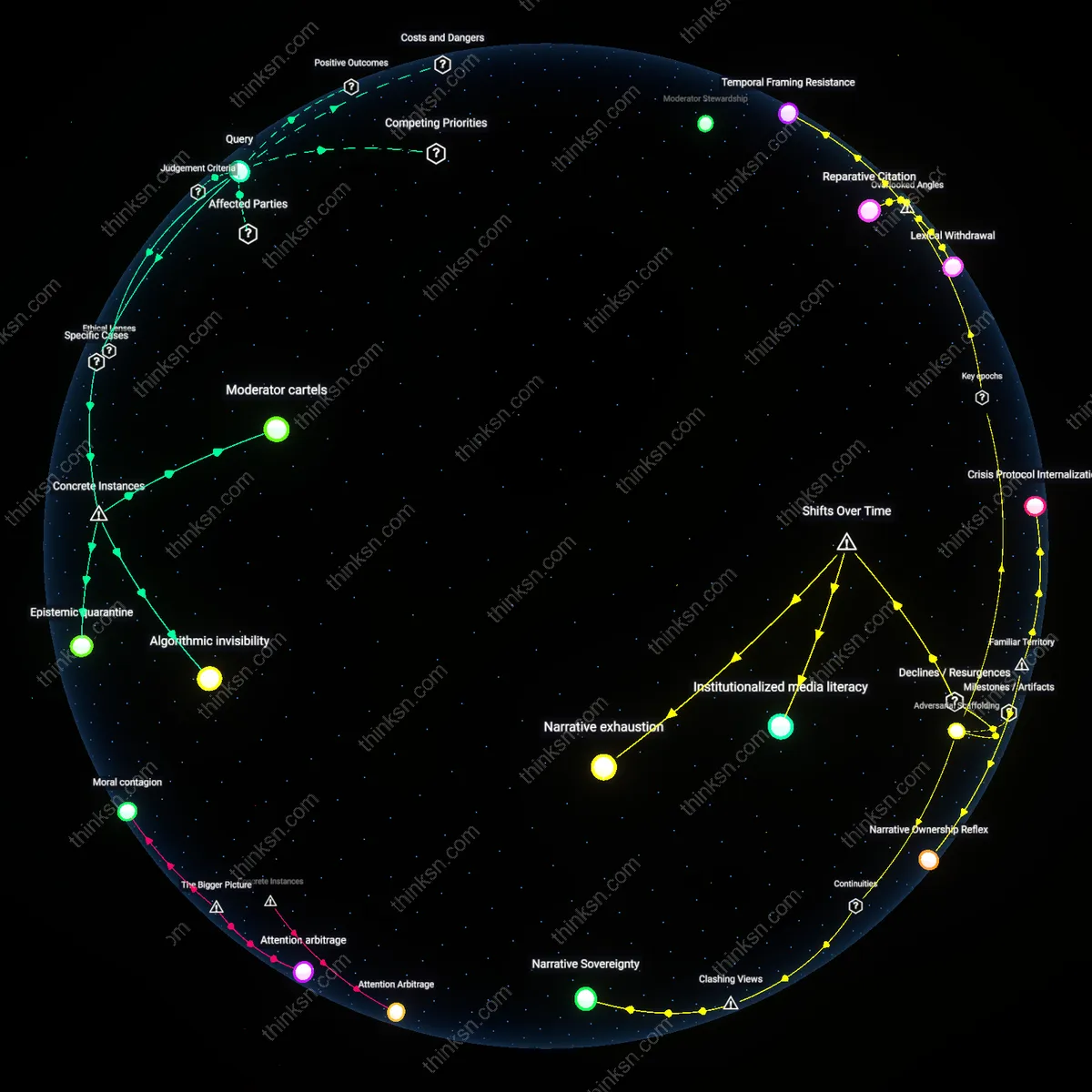

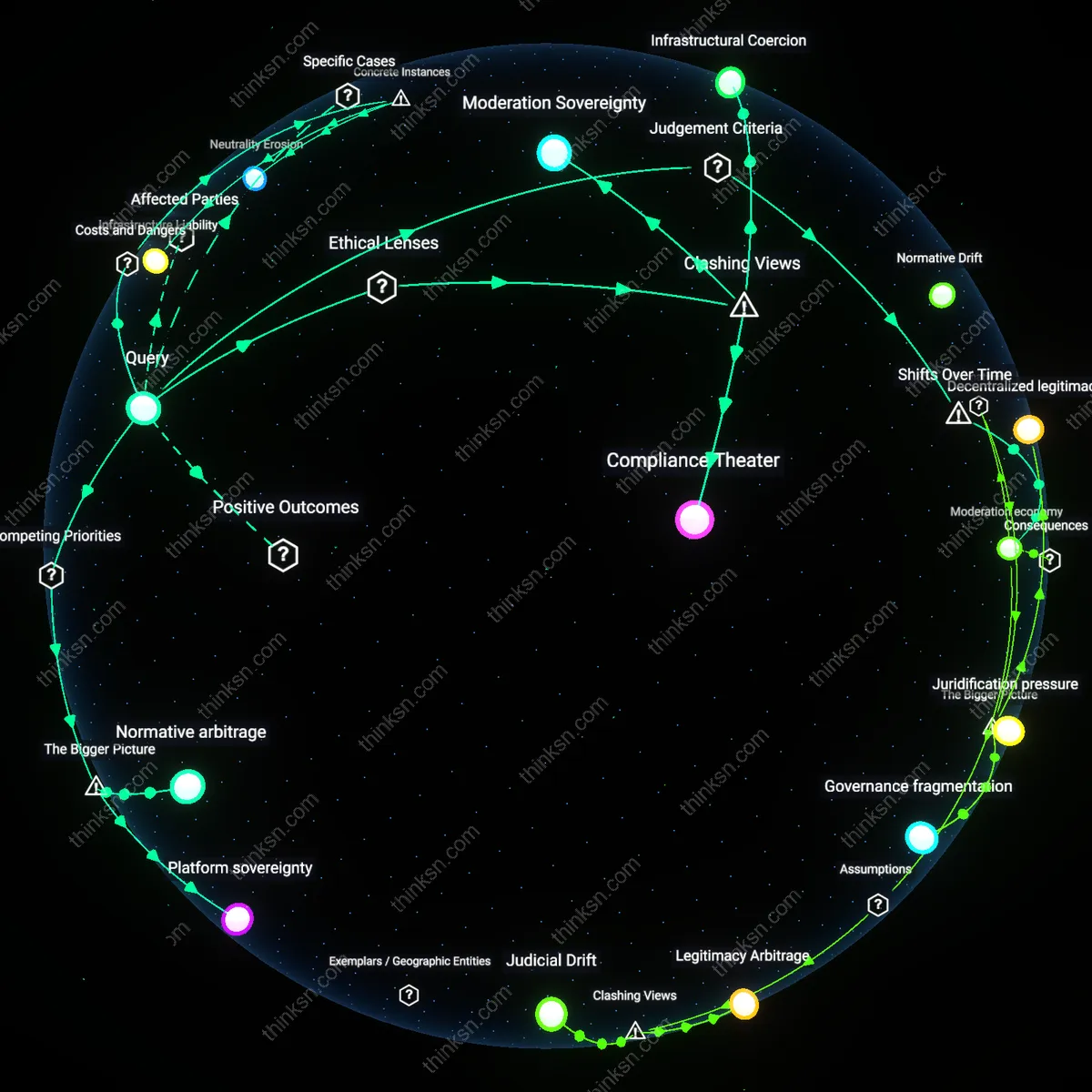

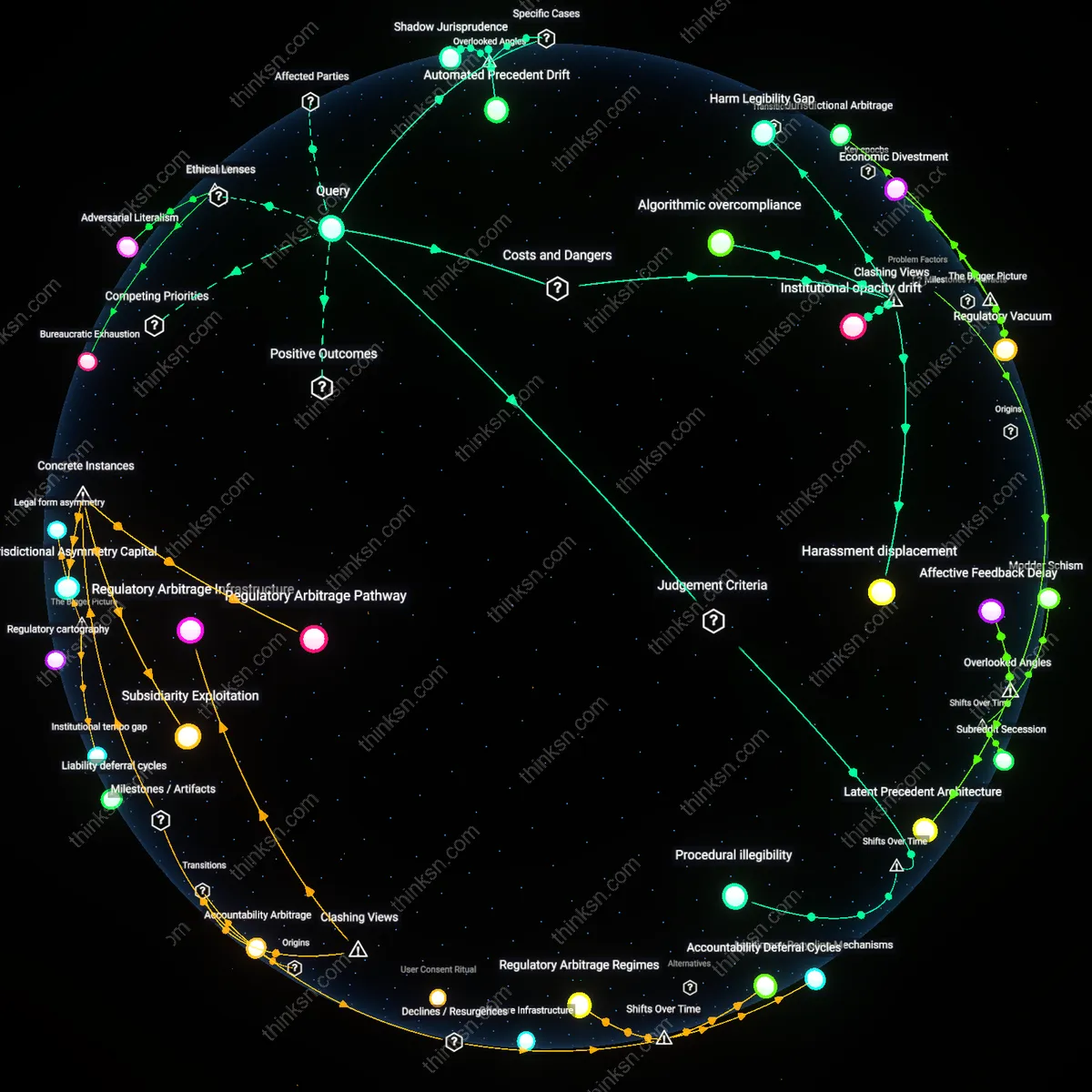

Algorithmic Blindspots

During the 2020 U.S. election, Facebook’s automated anti-misinformation filters systematically deprioritized posts by Black civic organizers, mistaking dialectal and culturally specific language for inauthentic behavior. This occurred because engagement patterns and linguistic markers common in Black American communities, such as repetition for emphasis or the use of irony, triggered AI systems trained predominantly on white, middle-class norms, which reduced the visibility of grassroots voter mobilization. The non-obvious insight is that the harm arises not from overt censorship but from procedural neutrality in systems blind to sociolinguistic variation, which privileges dominant communication styles under the guise of fairness.

Collateral Deplatforming

In 2018, YouTube’s broad demonetization and downranking of 'sexually suggestive' content disproportionately affected videos by trans creators and sex worker educators discussing harm reduction, consent, and health, even when those videos complied with community guidelines. Because enforcement relied on keyword scanning and automated classifiers tuned to detect pornography, nuanced advocacy content lost reach and revenue, effectively silencing marginalized experts in public health discourse. The underappreciated mechanism is that risk-averse platform governance produces overbroad suppression, which falls hardest on creators who belong to several vulnerable categories at once.
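The over-blocking behavior of keyword scanning can be illustrated with a minimal sketch. The blocklist, the `keyword_flag` helper, and the sample posts are all hypothetical and written only for illustration; they do not represent YouTube's actual classifier or policy terms.

```python
import re

# Hypothetical blocklist; NOT taken from any real platform's policy.
BLOCKLIST = {"sex", "nude", "explicit"}

def keyword_flag(text: str) -> bool:
    """Flag text if any blocklisted term appears as a whole word."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return bool(words & BLOCKLIST)

# Toy posts written for illustration only: (text, kind of content).
posts = [
    ("Safer sex basics: how to talk about consent and testing", "education"),
    ("Explicit clips, click now", "spam"),
    ("Weekly gardening tips", "other"),
]

# A context-free keyword match cannot tell the health-education post
# apart from the spam post, so both end up flagged.
flagged = [(text, kind) for text, kind in posts if keyword_flag(text)]

for text, kind in flagged:
    print(f"flagged ({kind}): {text}")
```

In this toy setup the educational post is flagged alongside the spam post because the filter sees only the matched term, not the purpose of the content.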

Institutional Gatekeeping

Kenyan civil society groups reported in 2022 that Meta’s reliance on outsourced moderation firms based in Nairobi led to inconsistent enforcement, where Swahili-language political speech criticizing local officials was frequently flagged as hate speech while identical sentiments in English were ignored. This stemmed from uneven training and performance metrics for contractors, who faced pressure to meet quotas without contextual fluency, resulting in disproportionate removal of dissent from rural and low-income populations. The critical but overlooked dynamic is that decentralization of moderation labor does not ensure local understanding, and may instead embed new hierarchies that silence subnational political expression.

Algorithmic Epistemic Deprivation

Excessive content moderation systematically excludes marginalized voices by privileging institutionalized forms of speech that conform to dominant linguistic and procedural norms. Automated moderation systems, particularly those used by major platforms like Facebook and YouTube, rely on standardized language patterns, verified identities, and historical engagement metrics—criteria that disproportionately disadvantage communities with oral traditions, non-Western syntax, or limited digital infrastructure, such as Indigenous groups in the Global South or Black American vernacular speakers. These systems do not merely filter harmful content but restructure epistemic access by defining what counts as legible or credible speech, thereby enacting a form of digital gatekeeping that mirrors historical patterns of cultural exclusion. The non-obvious reality is that neutrality in moderation policy often amplifies existing hierarchies, not because of malicious intent but because the technical architecture of 'fairness' implicitly centers normative communication as the baseline for legitimacy.

Algorithmic Amplification Bias

Automated content moderation systems on major social media platforms like Facebook and YouTube disproportionately flag posts from Black activist groups as toxic or spam due to training data skewed toward mainstream vernacular. This occurs because machine learning models interpret culturally specific speech patterns, slang, or emotionally charged discourse—common in marginalized communities advocating for justice—as violations of community standards. The mechanism operates through opaque recommendation and suppression algorithms that prioritize perceived platform safety over contextual nuance, systematically reducing the visibility of voices already underrepresented in media ecosystems. What’s underappreciated is that the very tools designed to filter hate speech often replicate its silencing effects by treating marginalized expression as noise.
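One way an auditor might quantify this kind of disparity is to compare false positive rates across speaker groups: the share of benign posts that the system wrongly flags. The sketch below assumes a hypothetical audit log of human-labelled posts; the `false_positive_rates` function, the group names, and the records are synthetic and are not drawn from any platform's data.

```python
from collections import defaultdict

def false_positive_rates(records):
    """Return {group: FPR}, where FPR = wrongly flagged benign posts / all benign posts."""
    benign = defaultdict(int)
    wrongly_flagged = defaultdict(int)
    for group, is_violation, was_flagged in records:
        if not is_violation:
            benign[group] += 1
            if was_flagged:
                wrongly_flagged[group] += 1
    return {g: wrongly_flagged[g] / benign[g] for g in benign}

# Synthetic audit records: (group, human-labelled violation?, flagged by model?)
records = [
    ("A", False, False), ("A", False, False), ("A", False, True), ("A", True, True),
    ("B", False, True), ("B", False, True), ("B", False, False), ("B", True, True),
]

rates = false_positive_rates(records)
for group, rate in sorted(rates.items()):
    print(f"group {group}: false positive rate = {rate:.2f}")
```

A gap between groups on this metric would suggest that benign speech from one community is being treated as a violation more often, even when the overall accuracy of the system looks acceptable.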

Moderation Outsourcing Burden

Outsourced moderation centers in low-wage countries like Kenya and the Philippines, contracted by companies such as Meta and Google, require their staff to review vast volumes of graphic and traumatic content under strict throughput quotas. Under these conditions, moderators apply rigid, rule-based interpretations when flagging content, which leads to over-enforcement on borderline cases, especially those involving LGBTQ+ advocacy or Indigenous resistance imagery that may include nudity or confrontational language. The system prioritizes legal compliance and corporate liability reduction over cultural sensitivity, resulting in disproportionate takedowns of material central to marginalized identities. The non-obvious consequence is that geographic and economic distance from the lived realities of users enables systemic misclassification under the guise of neutral enforcement.

Policy Proximity Gap

Content policies on platforms like Twitter (now X) and TikTok are primarily shaped by legal standards and advocacy norms centered in the Global North, especially the U.S. and EU, leading to enforcement regimes that fail to recognize context-specific expressions of dissent in regions like Palestine, Ethiopia, or Hong Kong. When local activists use terms or symbols tied to historical resistance, such as the phrase 'From the river to the sea' or Amharic protest chants, moderation systems built around Western liberal frameworks misclassify them as extremist or violent incitement. The causal dynamic lies in the structural exclusion of frontline communities from policy design, where safety is defined by dominant geopolitical interests rather than on-the-ground harm. The overlooked reality is that moderation often guards against reputational risk rather than human risk, especially when marginalized speech challenges state or corporate power.