At What Point Does News Personalization Undermine Democracy?

Analysis reveals 9 key thematic connections.

Key Findings

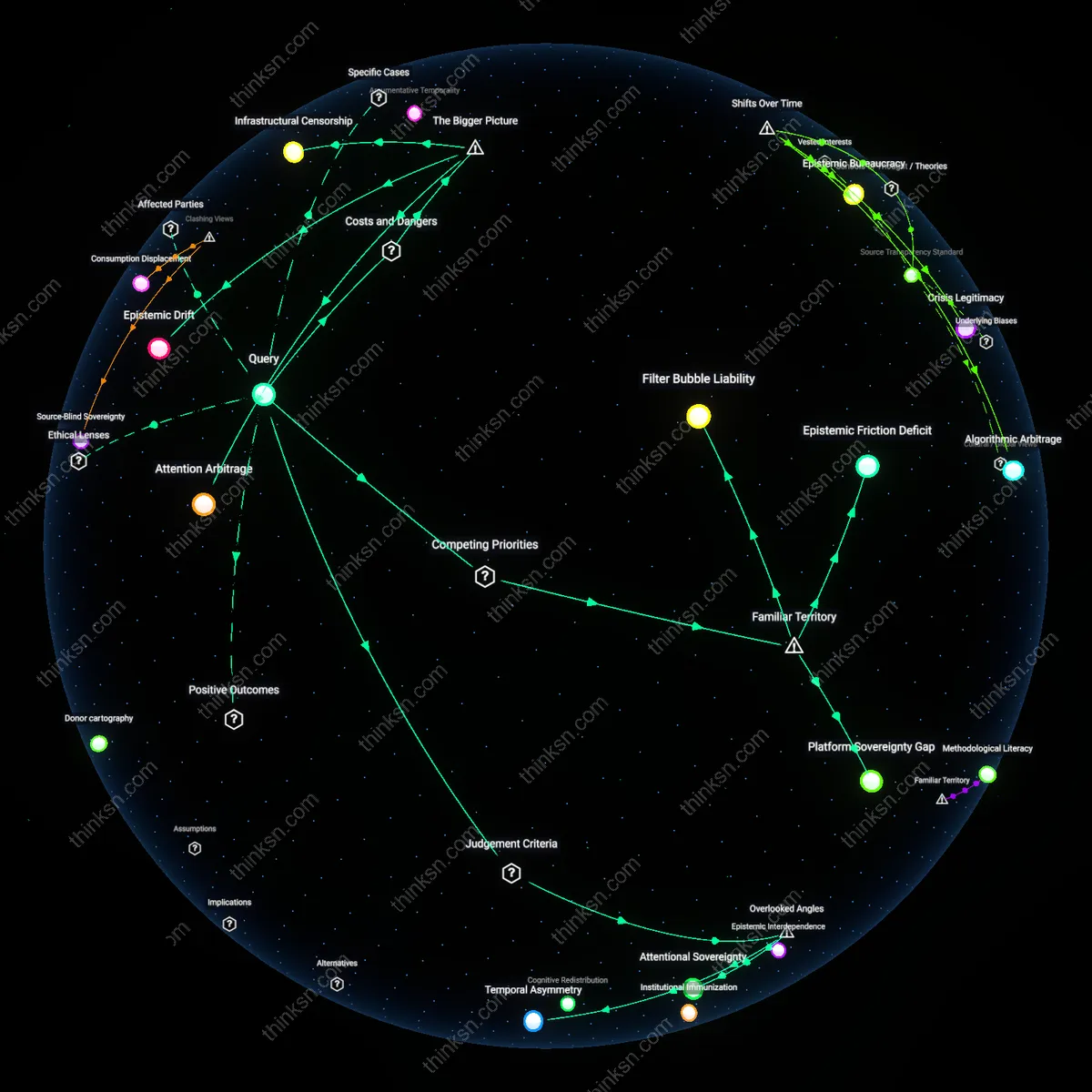

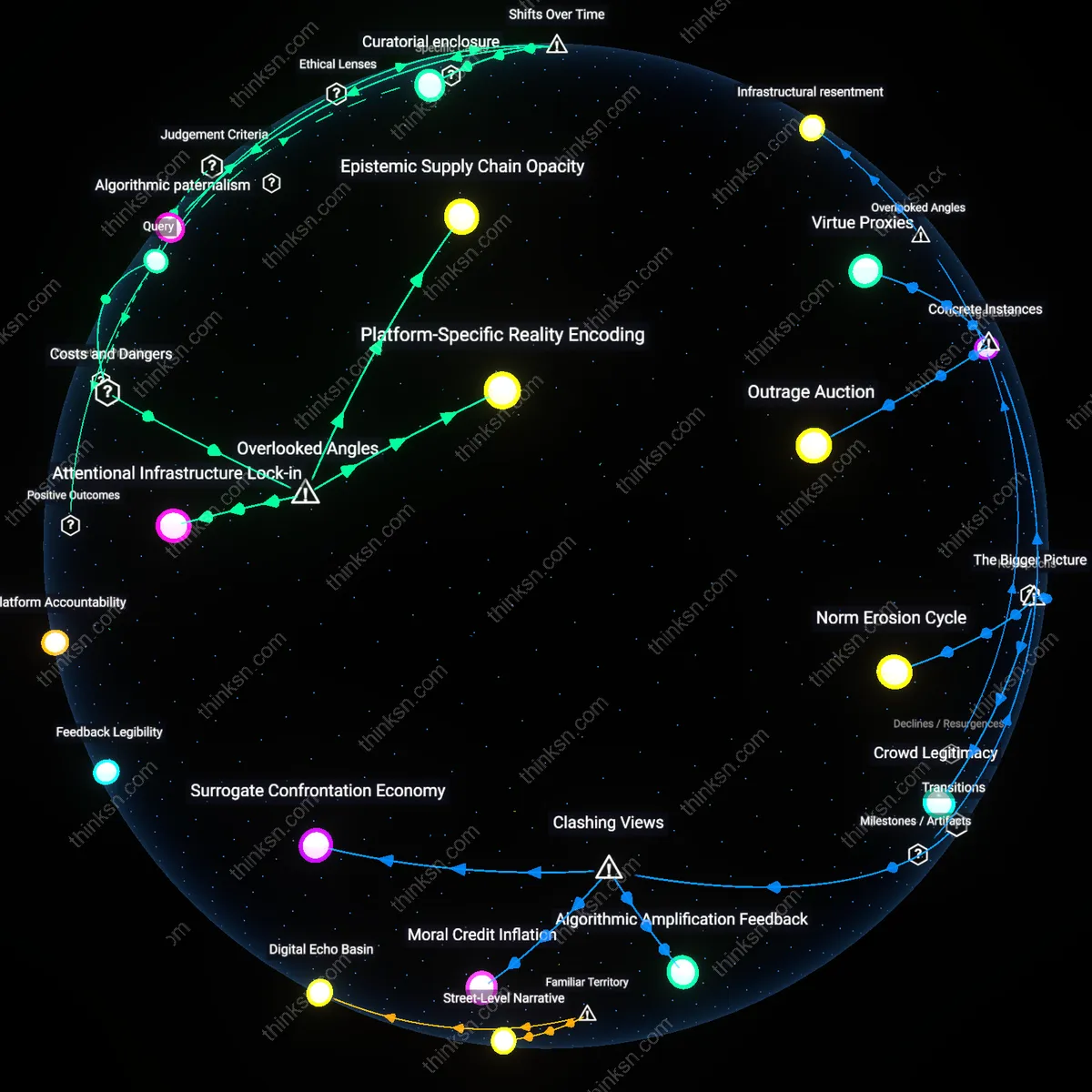

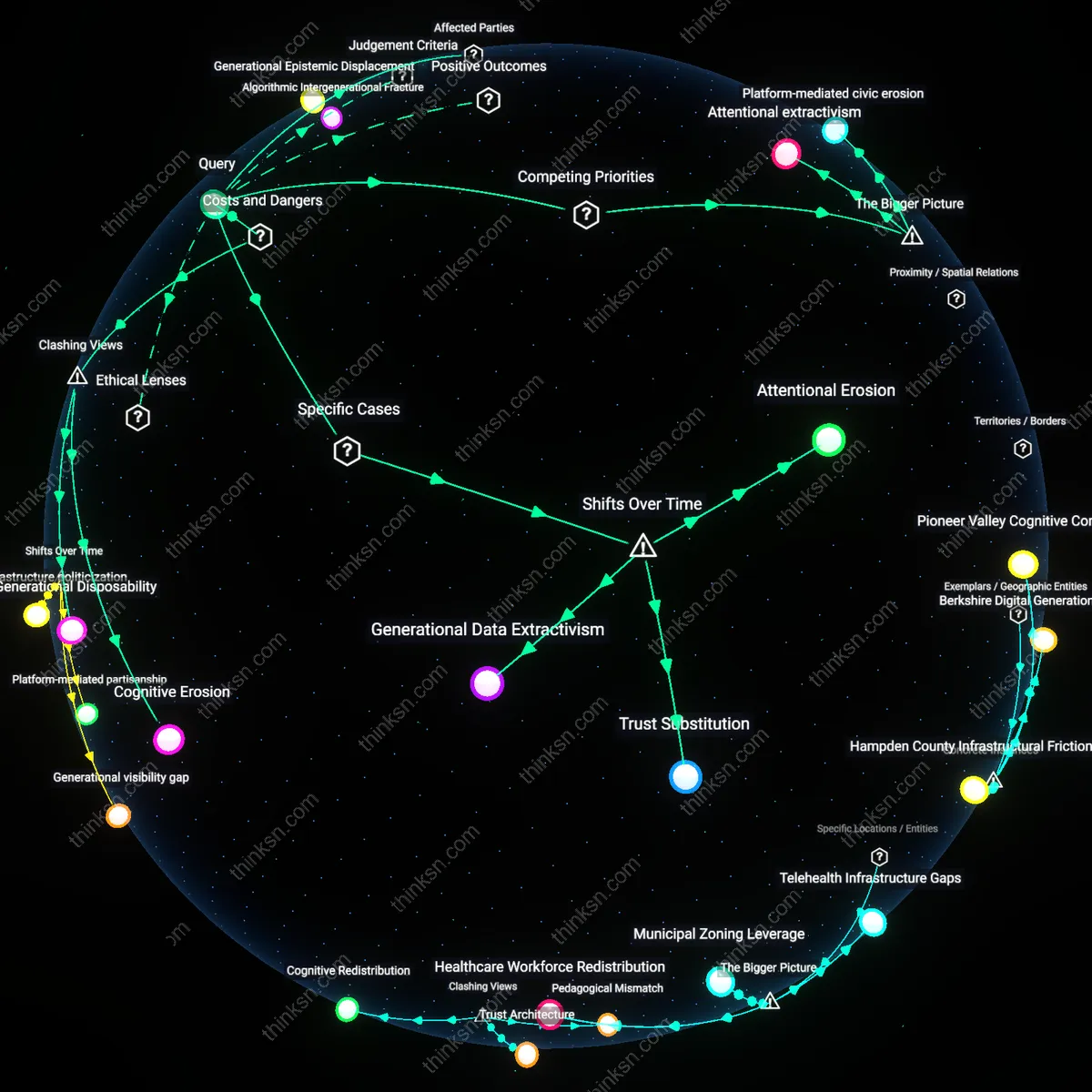

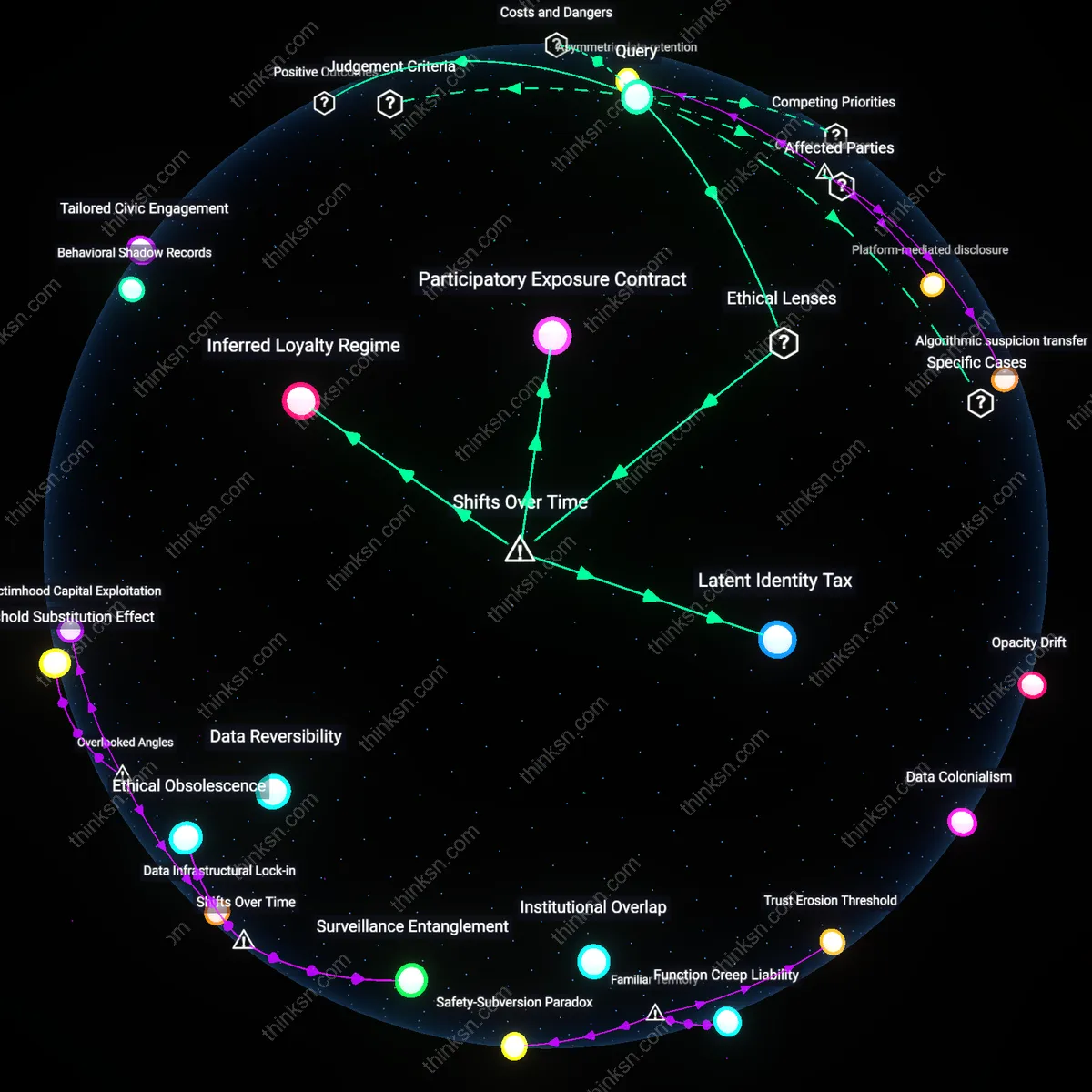

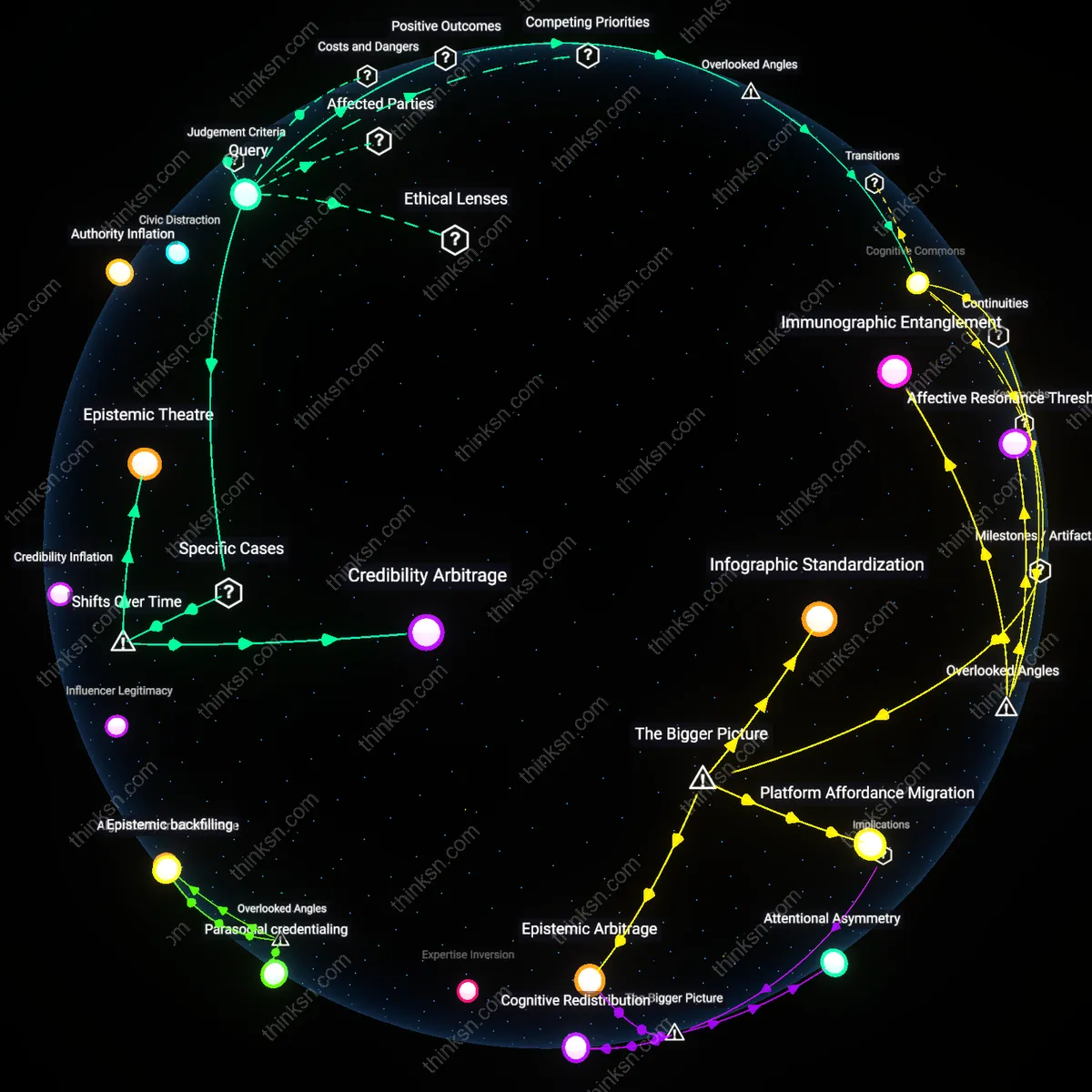

Attentional Sovereignty

Algorithmic personalization erodes democracy’s factual foundation when it systematically undermines users’ capacity to notice competing frames of reality. Social media platforms optimize for engagement through micro-targeted content loops that train attention on emotionally resonant but epistemically isolated information diets; this reshapes not just what users believe but what they are cognitively disposed to register as relevant. What is eroded is attentional sovereignty, the ability to voluntarily orient toward divergent perspectives. The mechanism is overlooked because most analyses treat belief formation as the endpoint and ignore the prior erosion of perceptual openness. This shift matters because democracy presupposes not just disagreement but mutual visibility: when citizens no longer see the same issues as salient, shared deliberation collapses at the level of perception rather than at the level of belief.

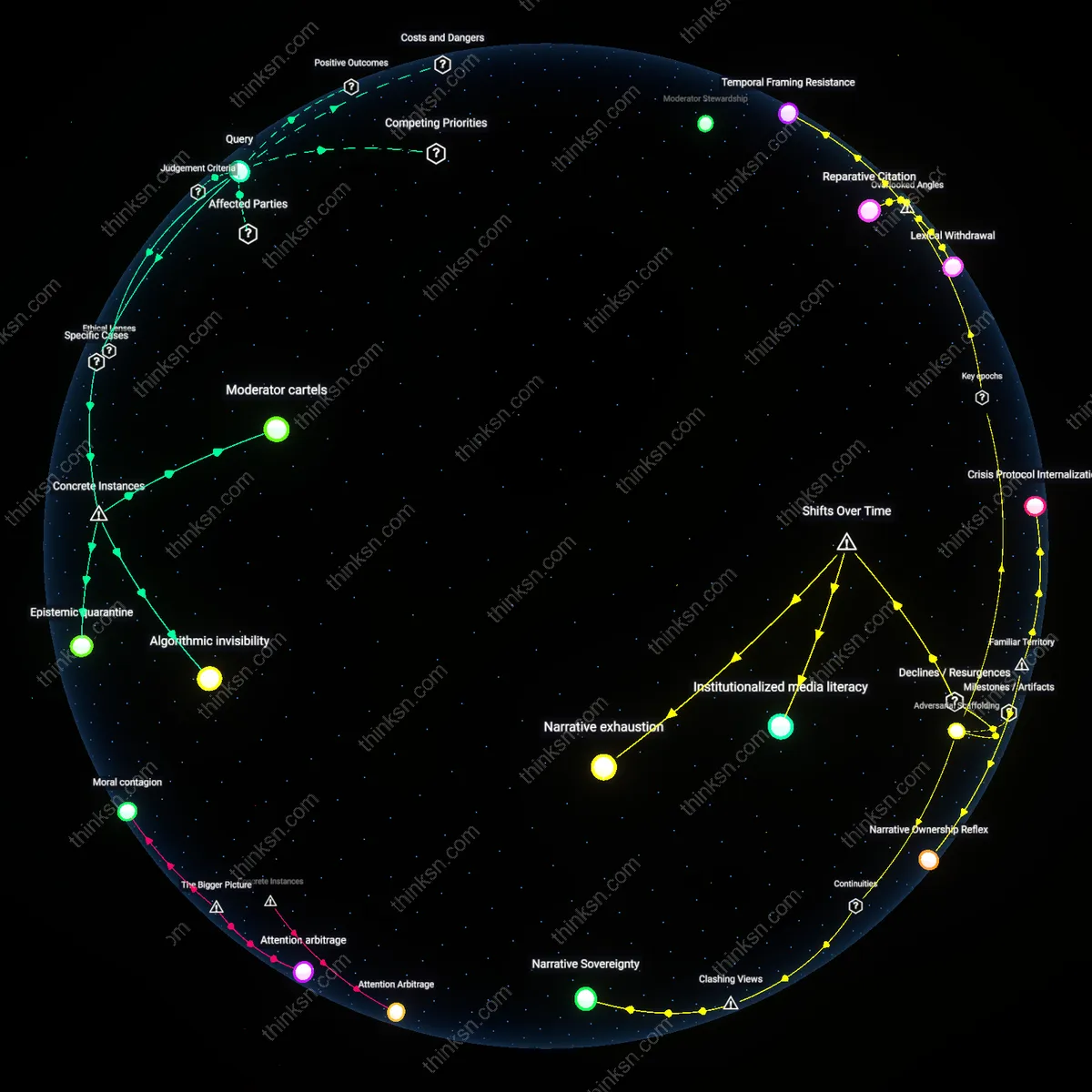

Infrastructure of Disputability

Algorithmic personalization undermines democracy when it hollows out the infrastructure of disputability—the shared platforms and procedures through which claims are contested. In legacy media, even partisan outlets operated within overlapping editorial norms and factual reference points, enabling public disagreement over interpretations of established facts. But personalized feeds fragment not only content but evidentiary standards, so that the very criteria for what counts as a valid source or coherent argument diverge across audiences. This hidden dependency on procedural commonality, rather than mere fact exposure, is missed in debates focused on misinformation; the deeper harm is the loss of a joint arena where disputes can be staged and resolved, making democratic accountability technically inoperative even without active deception.

Temporal Asymmetry

Algorithmic personalization threatens democracy when it enforces temporal asymmetry in news consumption, privileging real-time reactivity over retrospective coherence. Platforms amplify personalized content based on immediate engagement patterns, which compresses users’ temporal horizons and disrupts the slow integration of facts into stable narratives. The overlooked dynamic is that democratic consensus requires not just shared facts, but shared pacing of understanding—time to reconcile dissonance, verify claims, and revise beliefs collectively. When personalization locks users into divergent temporal rhythms, some communities remain stuck in outdated or emotionally charged versions of events while others move on, fracturing the synchronized temporality necessary for coordinated civic action.

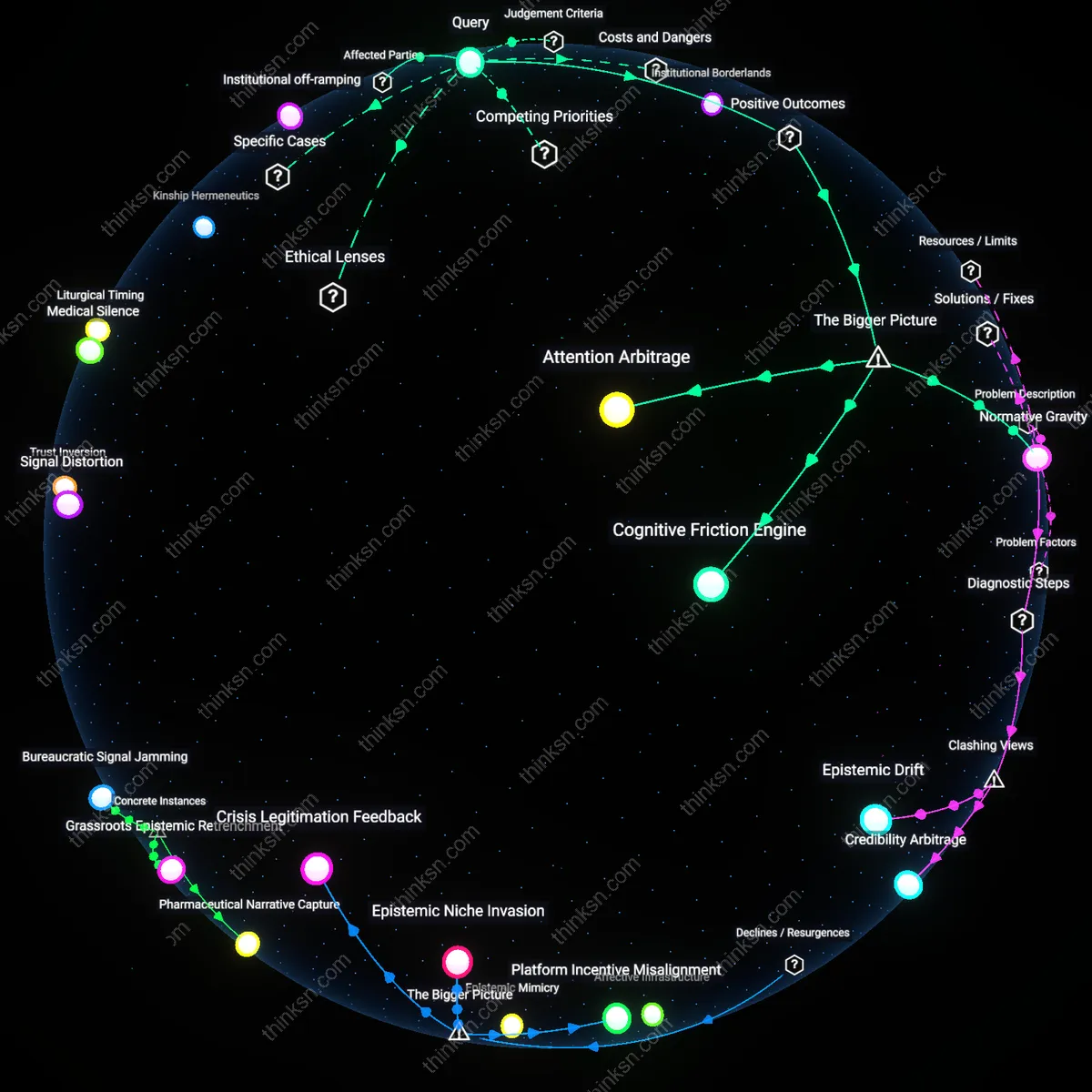

Attention Arbitrage

Algorithmic personalization erodes the shared factual foundation of democracy when platforms systematically prioritize engagement metrics over information accuracy, rewarding emotionally charged or ideologically reinforcing content. Platform companies such as Meta and Google optimize for user retention through machine learning models that amplify divisive narratives, because these generate higher click-through rates and longer sessions, which creates a structural incentive to fragment public discourse. The mechanism rests on an economic model in which attention is commodified and political polarization is treated as a profitable externality rather than a societal risk, undermining epistemic common ground in ways that are invisible to individual users but systemic in effect.

Epistemic Drift

Algorithmic personalization undermines democracy when repeated exposure to divergent, systemically curated information streams causes user beliefs to cohere around non-overlapping realities over time. As individuals in geographically adjacent communities, such as voters in the same congressional district, consume news shaped by opaque recommendation algorithms on platforms like YouTube or TikTok, their understanding of basic facts (e.g., election integrity, pandemic risks) gradually diverges, not through deliberate deception but through differential reinforcement. The drift is driven by decentralized feedback loops between user behavior and algorithmic adjustment: each interaction tunes the feed, and the tuned feed shapes the next interaction. Without shared informational baselines, collective problem-solving stalls, and democratic deliberation becomes functionally impossible even when institutions remain formally intact.
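
The feedback loop described above can be illustrated with a toy model. The sketch below is not any platform's actual recommender: the function name `simulate_feed_share`, the `gain` parameter, and the update rule are all assumptions chosen for simplicity. It supposes a feed with only two competing frames and a recommender that nudges the feed toward whichever frame drew more engagement in the previous round.

```python
def simulate_feed_share(initial_share, rounds=30, gain=0.5):
    """Track the share of one user's feed devoted to frame A over time.

    Toy assumptions (not drawn from any real system):
    - the feed shows only two competing frames, A and B;
    - engagement with a frame is proportional to its share of the feed;
    - after each round the recommender shifts the feed toward whichever
      frame drew more engagement, scaled by `gain` (0 < gain <= 1).
    """
    share = initial_share
    history = [share]
    for _ in range(rounds):
        engagement_gap = share - (1.0 - share)  # > 0 when frame A leads
        # The share * (1 - share) factor keeps the value inside [0, 1].
        share += gain * engagement_gap * share * (1.0 - share)
        history.append(share)
    return history


if __name__ == "__main__":
    # Two hypothetical neighbors whose feeds start only ten points apart.
    leaning_a = simulate_feed_share(0.55)
    leaning_b = simulate_feed_share(0.45)
    for round_number in (0, 10, 20, 30):
        print(round_number,
              round(leaning_a[round_number], 3),
              round(leaning_b[round_number], 3))
```

Under these assumptions, a user who starts at exactly 0.5 stays there, while any small initial lean compounds round after round. No single step involves deception; the divergence comes entirely from reinforcement, which is the point the paragraph makes.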

Infrastructural Censorship

Algorithmic personalization erodes democracy when private technology firms, acting as unaccountable gatekeepers, suppress or deprioritize content that fails to conform to engagement-driven distribution rules, effectively censoring factually accurate but less viral information. Unlike state censorship, this form of suppression is not based on political ideology per se but on algorithmic assessments of shareability, which favor simplification, outrage, and confirmation bias—all of which marginalize nuanced, evidence-based reporting from outlets like Reuters or AP News in favor of sensational alternatives. This creates a structural asymmetry where truthfulness does not guarantee visibility, and the public sphere becomes skewed not by overt lies alone but by the invisible downgrading of reliability within technical architectures that are shielded from public oversight.
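
The claim that truthfulness does not guarantee visibility can be made concrete with a small ranking sketch. Everything here is hypothetical: the `Item` fields, the scores, the titles, and the `reliability_weight` parameter are illustrative assumptions, not a description of how any real platform ranks content. The sketch only shows that when the ranking score ignores reliability, a reliable item can sink without anyone deciding to suppress it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    title: str
    predicted_engagement: float  # hypothetical model output in [0, 1]
    reliability: float           # hypothetical fact-check score in [0, 1]


def rank_feed(items, reliability_weight=0.0):
    """Sort items by a blend of engagement and reliability.

    reliability_weight=0.0 reproduces pure engagement ranking; higher
    values give the (assumed) reliability score more influence.
    """
    def score(item):
        return ((1.0 - reliability_weight) * item.predicted_engagement
                + reliability_weight * item.reliability)

    return sorted(items, key=score, reverse=True)


if __name__ == "__main__":
    # Invented example items; the numbers are not measurements.
    feed = [
        Item("Outrage clip", 0.90, 0.30),
        Item("Wire report", 0.30, 0.95),
        Item("Explainer", 0.45, 0.85),
    ]
    print([item.title for item in rank_feed(feed)])
    print([item.title for item in rank_feed(feed, reliability_weight=0.7)])
```

With the weight at zero, the most reliable item in this invented feed ranks last; raising the weight reverses the order. The downgrading is a property of the scoring function, which is why it stays invisible to users who see only the resulting feed.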

Filter Bubble Liability

Algorithmic personalization erodes democracy’s shared factual foundation when news platforms prioritize user engagement over informational diversity, causing individuals to encounter only content reinforcing their existing beliefs. Social media platforms like Facebook and YouTube use machine learning models that amplify emotionally resonant or confirmation-biased content because it increases time-on-platform, effectively isolating users from competing perspectives. This mechanism transforms the public sphere into fragmented epistemic domains where consensus on basic facts becomes structurally improbable. What is underappreciated is that this fragmentation is not merely a side effect but a direct output of optimization for attention, making the filter bubble not an anomaly but a liability inherent to the business model.

Epistemic Friction Deficit

Democracy loses its common ground when personalized algorithms reduce exposure to disconfirming evidence, weakening citizens’ capacity to negotiate disagreement. Mainstream digital news outlets such as The New York Times or CNN now design their in-app recommendation engines to mimic social media logic, pushing trending or behaviorally predicted content and thereby displacing editorial judgment with engagement metrics. This shift systematically removes the low-intensity exposure to opposing viewpoints that once occurred through shared front-page headlines or scheduled broadcasts. The underappreciated consequence is that mild, routine cognitive dissonance, which once supplied democratically valuable epistemic friction, is now treated as a UX flaw to be optimized away.

Platform Sovereignty Gap

The factual foundation of democracy erodes when private algorithmic systems acquire final authority over news visibility without public accountability, as seen in Meta’s opaque content ranking policies during election cycles. These platforms function as de facto information gatekeepers, yet operate under commercial rather than civic imperatives, creating a sovereignty gap where no elected institution can enforce transparency or corrective action. Unlike traditional media regulators such as the FCC, which governed broadcast fairness within a public interest framework, current oversight mechanisms cannot compel algorithmic justification or equitable reach. The overlooked reality is that this gap isn’t a temporary regulatory lag but a structural misalignment between digital power and democratic legitimacy.