Do Liability Reforms Favor Big Tech Over Marginalized Users?

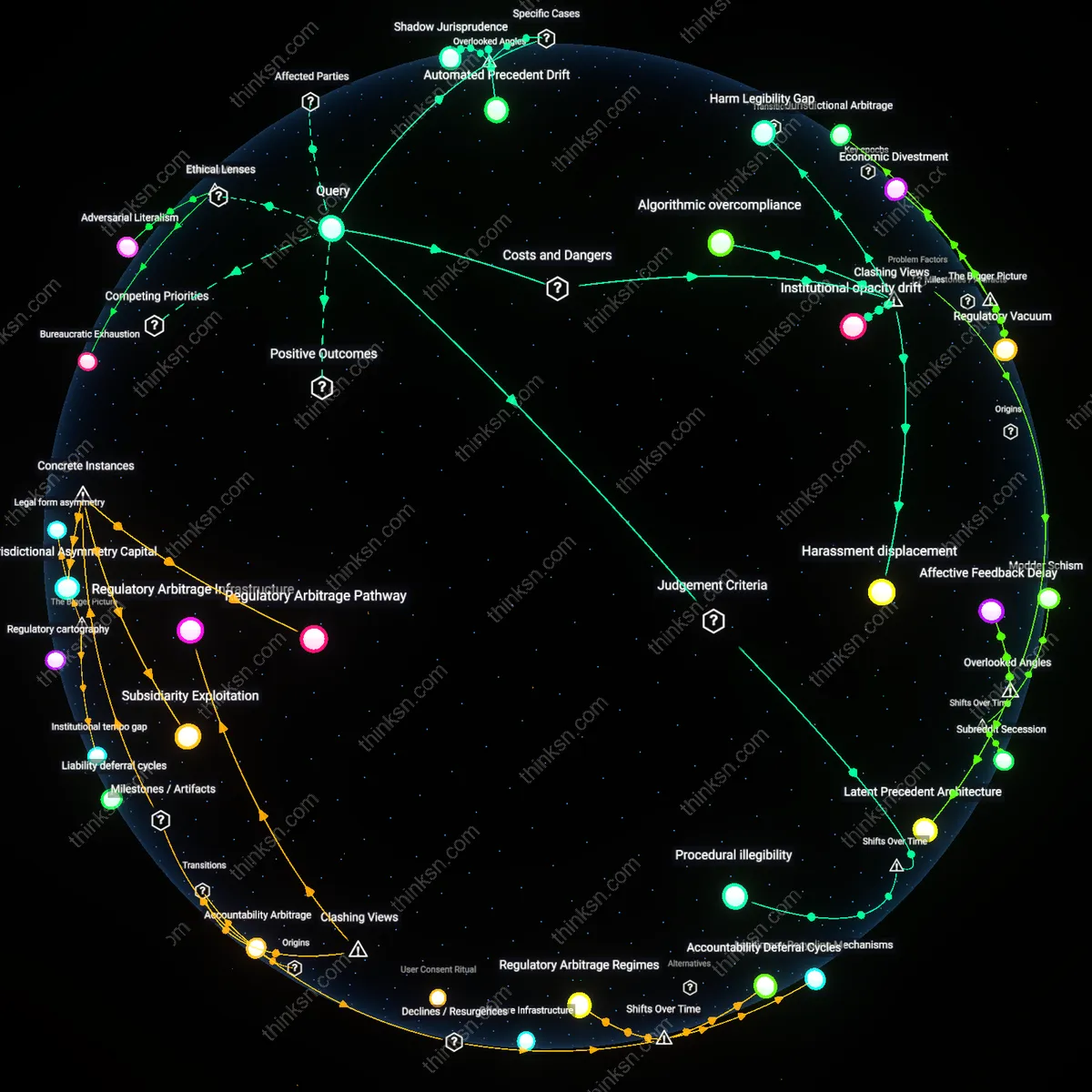

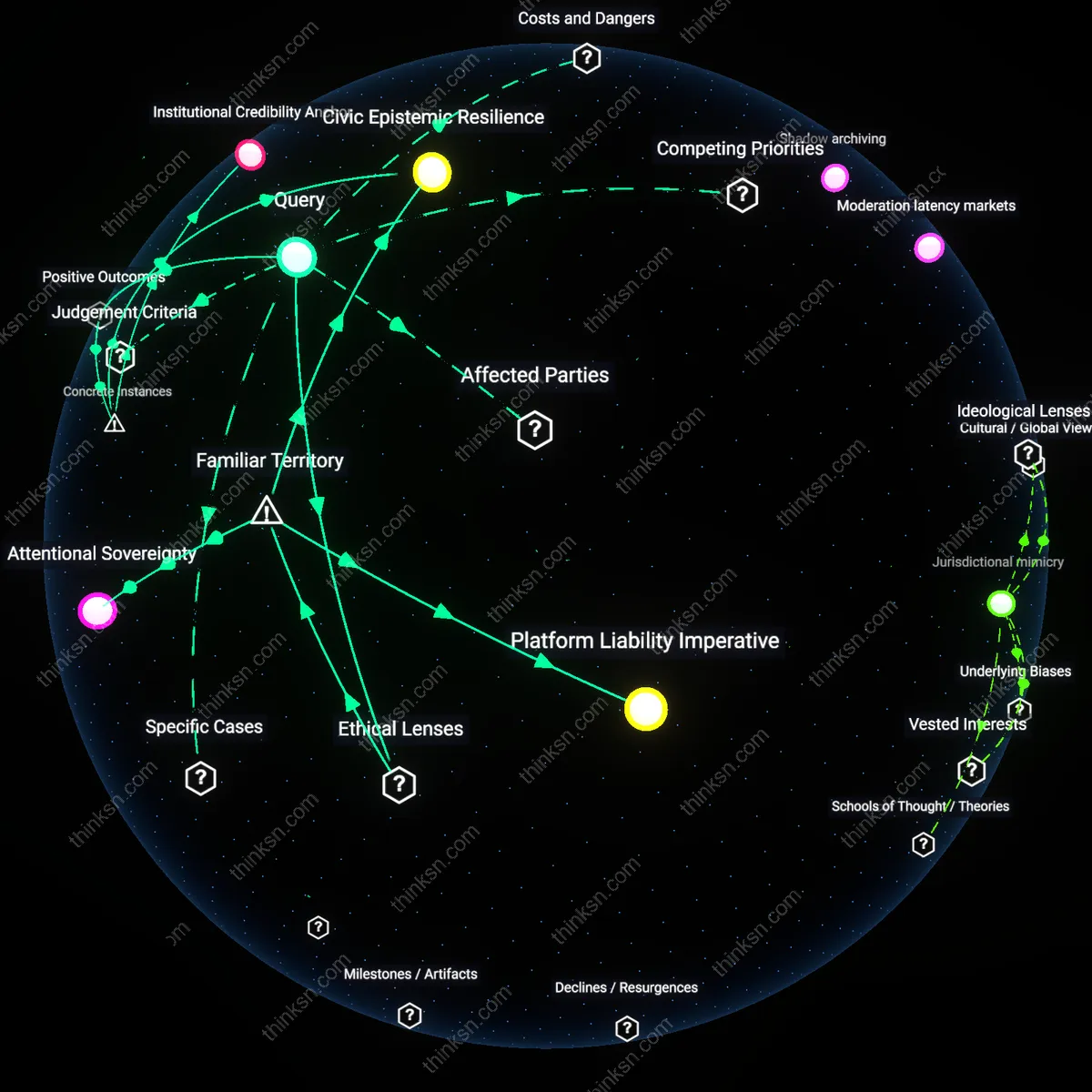

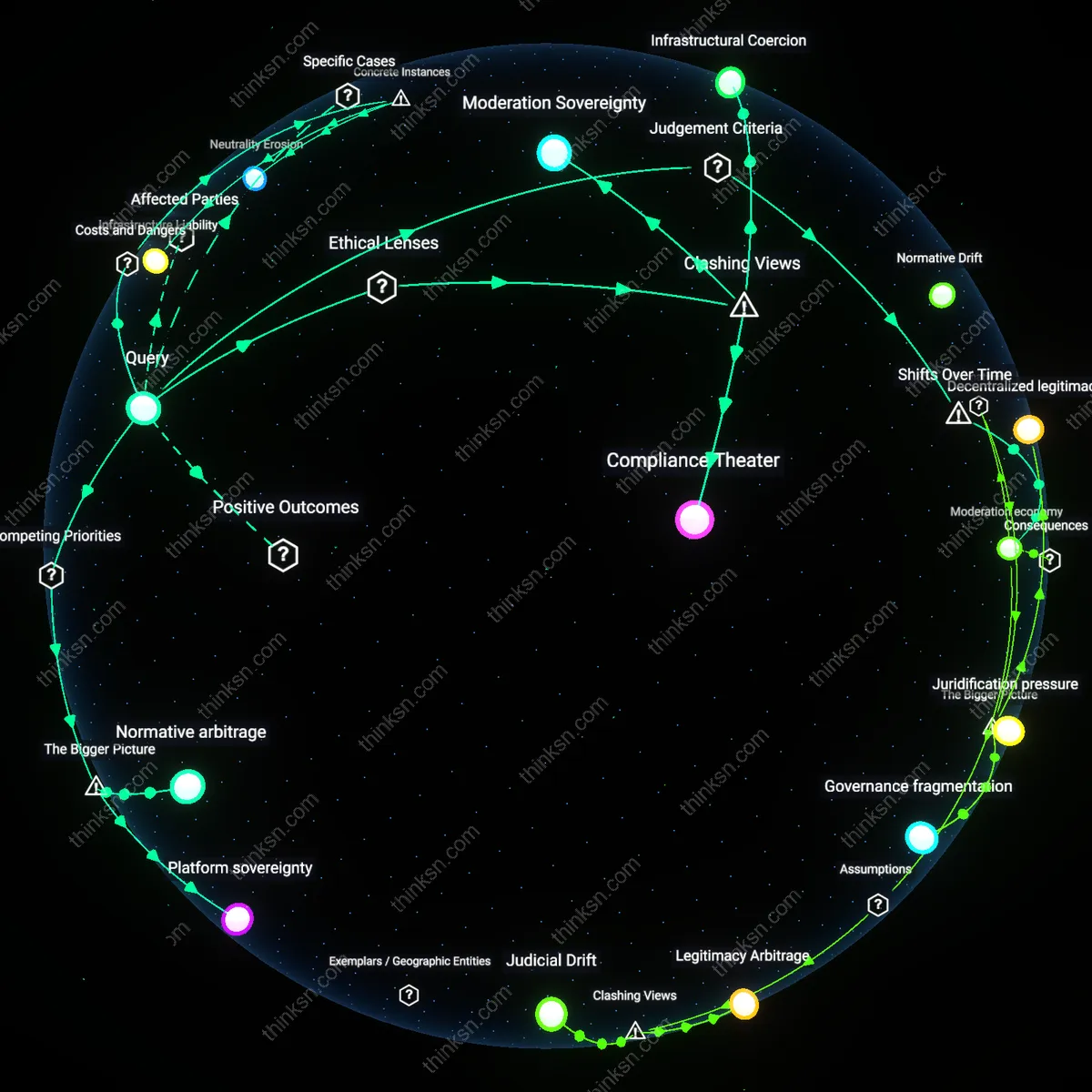

Analysis reveals 18 key thematic connections.

Key Findings

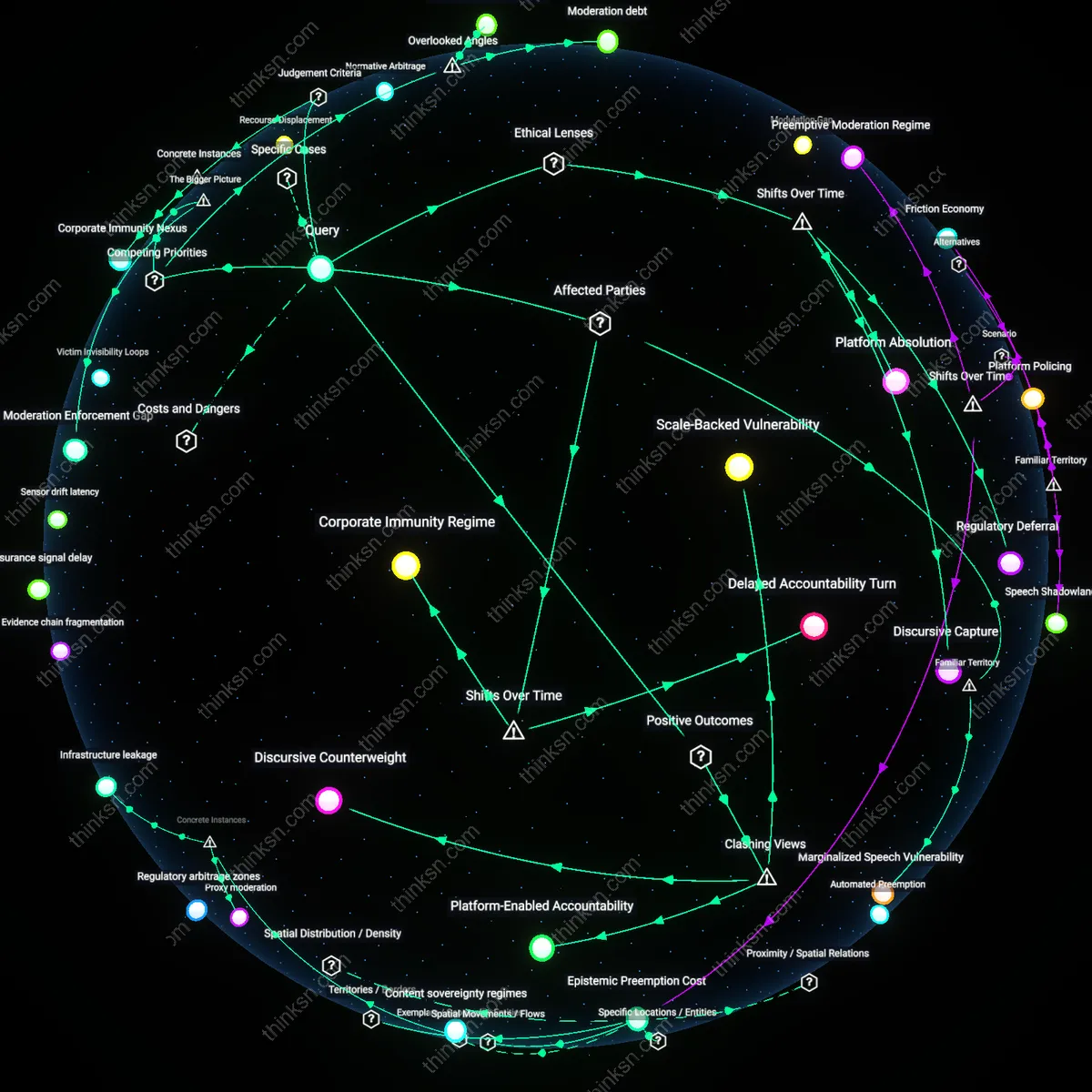

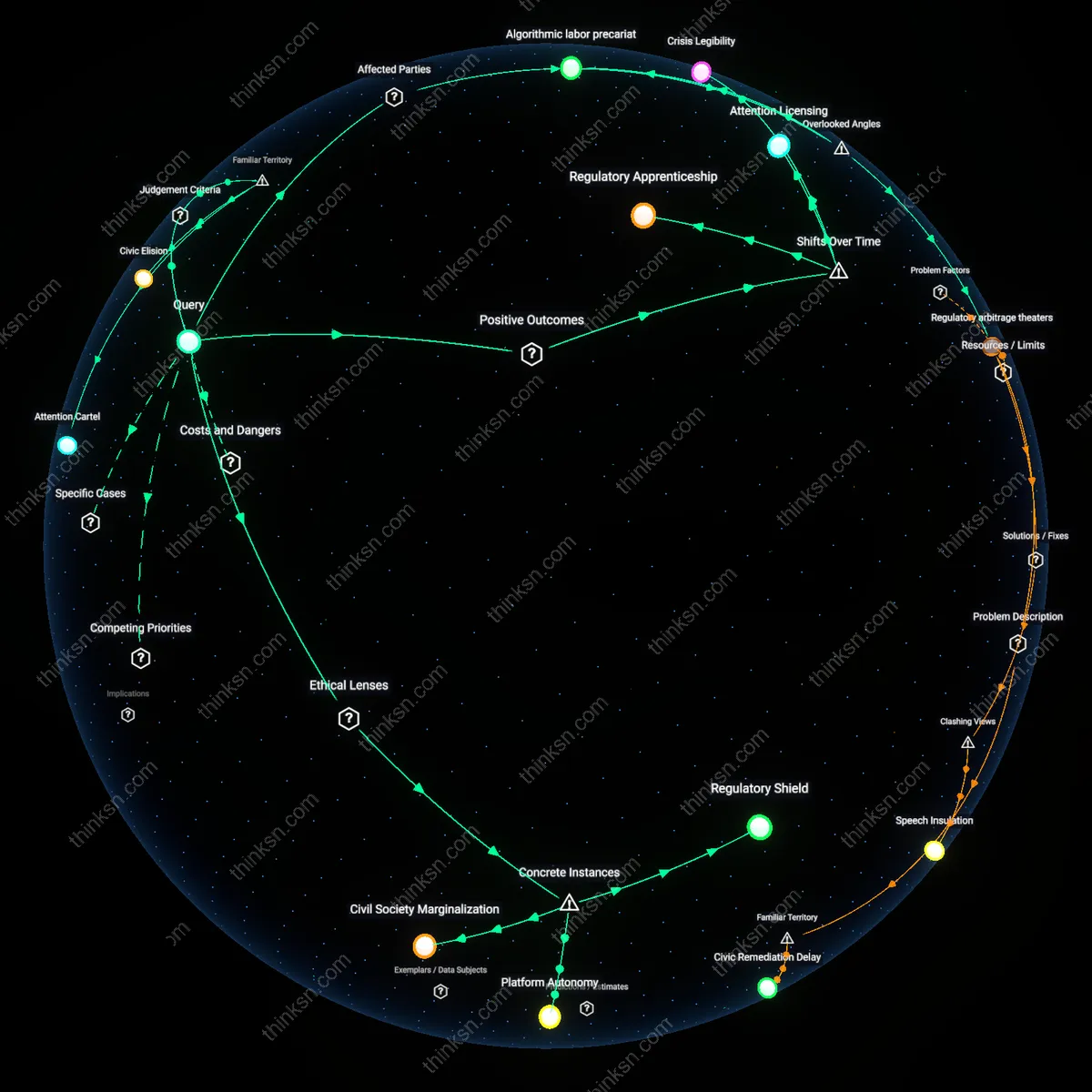

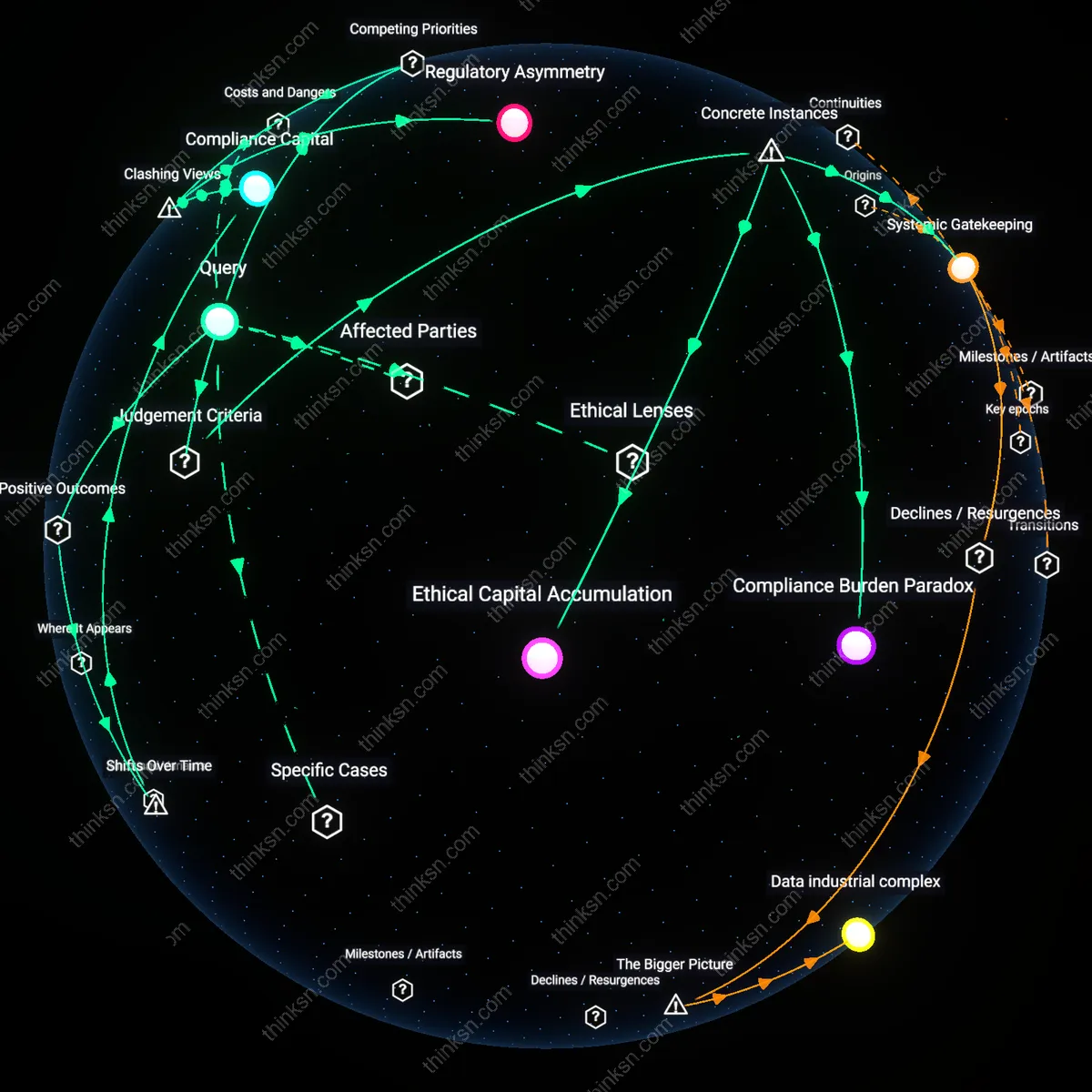

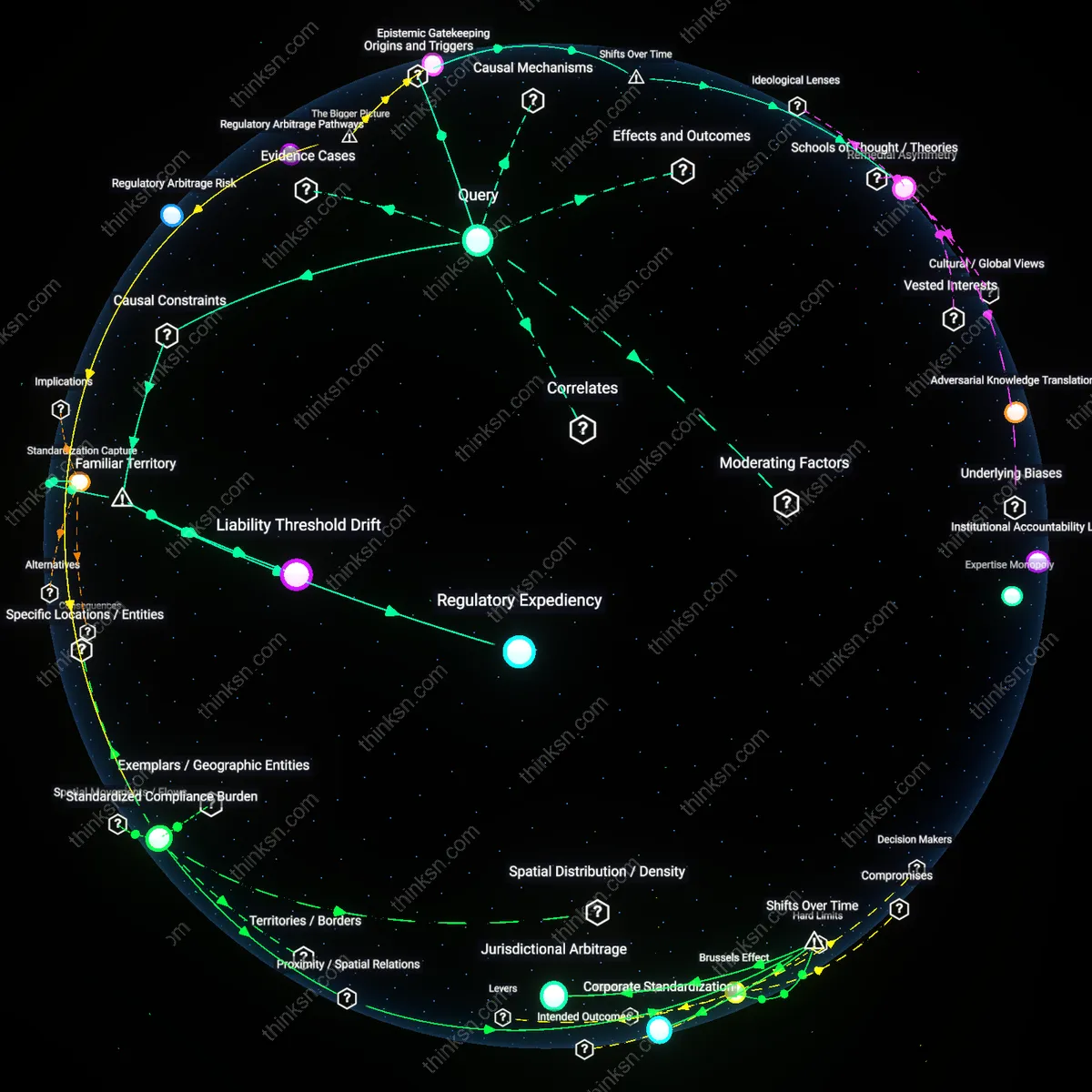

Platform Immunity Regime

Social media companies benefit most directly from liability protections because these shields insulate them from legal accountability for user-generated content, allowing platforms like Facebook, YouTube, and X to scale rapidly without bearing the cost of moderating billions of posts. This legal framework, exemplified by Section 230 of the Communications Decency Act, operates through a legislative bargain that treats platforms as intermediaries rather than publishers, thus preventing courts from treating them as responsible for defamation, hate speech, or misinformation posted by users. What’s underappreciated in public discourse is that this immunity isn’t just a legal detail—it’s the foundational infrastructure enabling the entire platform economy, making possible the explosive growth of digital intermediaries at the expense of those harmed by unmoderated content.

Marginalized Speech Vulnerability

Marginalized groups are disproportionately harmed by liability protections because those same shields that protect platforms also block survivors of online abuse—especially women, LGBTQ+ individuals, and racial minorities—from seeking legal remedies when targeted with harassment, doxxing, or incitement to violence. Since platforms cannot be treated as legally responsible for content under current doctrine, victims face near-insurmountable barriers in holding either the poster or the platform accountable, even in cases of extreme harm. The intuitive assumption that 'the internet should be free' obscures how this freedom is asymmetric—protected expression often weaponizes visibility against the vulnerable, turning free speech into a structural liability for those already at societal margins.

Legal Recourse Asymmetry

Large technology corporations benefit from liability protections not just through cost avoidance, but by shaping the very conditions under which justice is available. The result is effectively a two-tiered system in which powerful entities can enforce their rights (e.g., copyright takedowns, which fall outside Section 230 and run through the DMCA's notice-and-takedown process) while marginalized users struggle to gain redress for personal harm. Automated enforcement systems like YouTube's Content ID or AI moderation are designed to honor property claims efficiently, yet lack equivalent mechanisms for non-consensual imagery, threats, or stalking, revealing a systemic bias in how legal recourse is operationalized. The unspoken norm, deeply embedded in public understanding of internet law, is that defending corporate scalability is more urgent than enabling individual justice, which makes the absence of remedy feel 'natural' rather than engineered.

Corporate Immunity Regime

Social media platforms gained expansive legal immunity after the 1996 enactment of Section 230, which insulates them from liability for user-generated content, thereby privileging platform scalability over victims’ access to justice. This legal shield emerged when Congress prioritized fostering digital innovation over regulating speech intermediaries, embedding a pro-industry bias into internet governance that solidified during the dial-up-to-broadband transition (1996–2005). The non-obvious consequence is that marginalized groups—particularly targets of gender-based harassment, racial defamation, or disinformation campaigns—were systematically disadvantaged at the moment platform architecture and content moderation norms were being codified, locking in a structural indifference to their harms.

Delayed Accountability Turn

Civil rights organizations and survivor advocacy networks only began coalescing around legal reform after 2015, once viral episodes of online abuse (the coordinated attacks of Gamergate, which began in 2014, and later the rise of non-consensual deepfake pornography) had exposed how liability protections enabled repeat harm without recourse. This shift marks a departure from the early 2000s, when digital free speech absolutism dominated advocacy, to a post-2015 era where marginalized users reframed platform immunity as a civil rights issue rather than a technicality. The underappreciated dynamic is that legal redress became imaginable only after a generation of harm accumulation revealed immunity not as neutrality but as active disenfranchisement.

Platform Immunity Ratchet

Large technology platforms benefit most from liability protections because Section 230 enables them to scale user-generated content with minimal legal exposure, allowing dominant firms like Meta and YouTube to externalize the costs of moderation failures onto vulnerable users—particularly marginalized groups who face disinformation, harassment, or incitement that courts rarely remediate due to high evidentiary and procedural barriers. This dynamic is reinforced by venture capital incentives and infrastructure lock-in, which make de-platforming or structural reform economically unviable, thereby entrenching a system where liability shields function not as neutral safeguards but as cumulative advantages for incumbents. The underappreciated consequence is that the law doesn’t just protect speech—it systematically privileges platforms capable of absorbing regulatory risk, while excluding smaller or community-led platforms that lack the resources to navigate legal gray areas even under the same statutory protection.

Recourse Displacement

Marginalized groups experience diminished legal recourse not because liability protections directly strip their rights but because the practical effect of Section 230 is to shift dispute resolution from judicial accountability to opaque moderation systems controlled by private companies, which lack transparency, appeal mechanisms, or due process guarantees. This displacement arises from the collapse of viable litigation pathways, pushing victims of online harm into platforms’ internal grievance regimes—where decisions are algorithmically mediated, culturally tone-deaf, and shielded from public scrutiny—thus substituting one form of power (courts) with another (corporate content governance). The overlooked reality is that the erosion of legal standing doesn’t merely limit access to justice; it reconfigures harm management into a privatized, unaccountable bureaucracy that replicates offline inequities under the guise of neutral policy enforcement.

Normative Arbitrage

Advertiser-dependent platforms benefit from liability protections by leveraging them to host controversial content that drives engagement—especially radical or polarizing material targeting marginalized communities—while deflecting responsibility through claims of neutrality, a strategy made possible by the mismatch between legal immunity and monetization rules that are enforced selectively based on brand safety concerns rather than ethical consistency. This creates a system where platforms profit from amplifying harmful speech (e.g., anti-LGBTQ+ content on YouTube) without legal liability, yet still respond to public pressure by demonetizing or limiting reach only when advertiser backlash occurs, revealing a dual standard enforced by market optics rather than moral principle. The critical but hidden mechanism is that liability shields enable platforms to act as both passive conduits (in court) and active curators (to advertisers), allowing them to arbitrage between legal and reputational risks while leaving marginalized users exposed to both algorithmic amplification and institutional indifference.

Corporate Immunity Nexus

Section 230 protections primarily benefit dominant platform operators like Facebook by insulating them from liability for algorithmic amplification of user-generated hate speech. In the 2017 Rohingya genocide in Myanmar, Facebook's failure to moderate content was linked to offline violence yet remained shielded from legal accountability, revealing how legal immunity intersects with infrastructural power to entrench corporate impunity. This dynamic operates through the delegation of speech governance to private platforms while denying victims enforceable rights, making visible that the legal shield does not merely reduce regulatory burden but actively configures a jurisdictional void where systemic harm can scale without consequence. The non-obvious insight is that the protection functions not as a passive safeguard but as an enabling condition for platform-led governance in high-risk sociopolitical environments.

Platform-Enabled Accountability

Marginalized groups benefit from liability protections because those safeguards enable social media platforms to rapidly amplify evidence of systemic harm without fear of legal reprisal for hosting user content. Activists in countries like Nigeria and India have leveraged platform infrastructures to document police violence and caste-based discrimination, turning otherwise ephemeral or suppressed incidents into globally witnessed events that pressure state and institutional actors. This dynamic flips the intuition that liability shields inherently disempower victims—instead, the ability of platforms to host contested content unchecked in real time creates a de facto evidentiary commons. The non-obvious outcome is that legal insulation for companies becomes an indirect scaffold for grassroots legal mobilization.

Discursive Counterweight

Liability protections benefit marginalized communities by allowing niche platforms—such as Muslim Pro or Disability Lead—to operate without the financial burden of constant litigation, enabling them to moderate content in culturally specific ways that mainstream legal systems ignore. These platforms become sites of norm-setting where users can define what constitutes harm within their own epistemic frameworks, rather than having definitions imposed through litigious, adversarial legal processes. The protection from liability thus preserves discursive autonomy, challenging the assumption that legal redress is the primary or most legitimate path to justice. The obscured insight is that avoiding legal exposure can be more empowering than accessing courts when those courts are structurally alienating.

Scale-Backed Vulnerability

Social media companies’ liability shields benefit dominant institutions—not marginalized groups—by allowing platforms to scale enforcement inconsistently, where content moderation appears responsive to crisis but systematically favors the visibility of well-resourced actors. When marginalized users report abuse, their appeals are routed through opaque, AI-driven systems trained on majority-group norms, causing disproportionate suppression of their speech under the guise of neutrality—yet the same platforms rapidly elevate user-generated content that aligns with state or corporate interests, as seen during the 2020 U.S. election or anti-LGBTQ+ campaigns in Uganda. This reveals that liability protections don’t just protect platforms—they entrench a hierarchy of vulnerability where legal redress remains inaccessible precisely because harm is algorithmically erased before it can be litigated.

Jurisdictional Deterrence

Platform defendants benefit from liability protections by strategically siting data storage and incident response functions in jurisdictions with the weakest enforcement traditions for civil rights claims, thereby deepening the asymmetry between corporate legal mobility and marginalized users’ immobility. For example, when TikTok routes content disputes through arbitration clauses tied to Cayman Islands servers, it leverages liability shields not just to avoid liability but to weaponize legal geography against users whose claims already face procedural hurdles. This is rarely framed as a structural tactic because most analyses treat jurisdiction as background rather than a manipulable variable; yet the deliberate decoupling of user location from dispute venue exploits gaps in transnational civil rights enforcement, especially for diasporic or displaced communities. The overlooked dynamic is that immunity doesn’t merely protect speech—it licenses forum engineering, where the locus of harm bears no relation to the locus of adjudication, silently raising the cost of justice for mobile but politically precarious populations.

Moderation Debt

Civil rights organizations incur moderation debt when platform liability shields delay or dilute enforcement against coordinated hate campaigns, forcing NGOs to absorb investigative and evidentiary labor that platforms would otherwise perform under stricter liability regimes. Groups like the Southern Poverty Law Center or the Asian American Legal Defense and Education Fund increasingly function as de facto forensic units, documenting harassment patterns, preserving screenshots, and reconstructing timelines, because platforms shielded by laws like Section 230 lack mandatory reporting duties or evidentiary retention requirements for hate-based targeting. This hidden transfer of investigative burden undermines the sustainability of advocacy, especially for small, underfunded groups focused on niche marginalized identities. The underappreciated reality is that liability protections don’t just limit corporate exposure: they actively redistribute the cost of accountability onto the weakest actors in the ecosystem, turning grassroots organizations into unpaid risk-monitoring infrastructure for profit-driven platforms.

Moderation Enforcement Gap

Content moderation systems, enabled by liability protections, create a false equivalence between free expression and harmful speech that systematically disempowers marginalized users in practice while protecting corporate operational flexibility. Because platforms face no legal obligation to remove harmful content, their moderation policies emerge from public relations pressures rather than legal necessity, leading to inconsistent enforcement that favors visible de-escalation over structural accountability. This condition thrives on the asymmetry between user vulnerability—concentrated and personal—and corporate exposure—diffuse and reputational—allowing platforms to appease stakeholders without altering underlying risk allocation. The overlooked dynamic is how legal immunity sustains a permissive environment for algorithmic amplification of hostility, where marginalized users bear the cost of being both targets and de facto moderators through reporting labor.

Platform Absolution

Social media companies' liability protections primarily benefit corporate platform operators by insulating them from legal responsibility for user-generated content, a shift solidified by the 1996 Communications Decency Act’s Section 230, which displaced earlier case law, notably Stratton Oakmont v. Prodigy (1995), under which an online service that moderated user posts could be treated as a publisher. This legal shield emerged during the dot-com boom as policymakers prioritized innovation over accountability, embedding neoliberal assumptions into internet governance. The non-obvious effect is that marginalized users seeking redress for harassment or defamation must now navigate a system designed to protect infrastructure, not individuals, thereby institutionalizing platform sovereignty over speech justice.

Regulatory Deferral

Civil rights advocates and marginalized communities were initially beneficiaries of liability protections because early 2000s moderation practices suppressed hate speech and organized extremism under goodwill-based platform policies, a phase preceding algorithmic scaling and datafication. The shift occurred post-2010 as platforms automated content governance to handle volume, weakening enforcement equity and converting legal immunity into technical inertia; the underappreciated consequence is that marginalized groups now face structural disbelief, where harms are dismissed not due to malice but systemic deferral to automated neutrality shaped by post-9/11 surveillance trade-offs.

Discursive Capture

Political actors leveraging free speech absolutism have redefined liability protections since 2017 as a civil liberties issue, transforming Section 230 from a pragmatic innovation safeguard into a battleground framed by libertarian constitutionalism, particularly after allegations of anti-conservative bias on major platforms. This reframing, amplified through federal judiciary appointments and FCC reinterpretations, exploits the tension between equality-based remedy and negative liberty, producing a discursive shift that delegitimizes marginalized claims by equating moderation with censorship—an outcome unanticipated in the 1990s consensus that viewed immunity as neutral infrastructure.