Sharing Budget Data: Risk of Financial Discrimination?

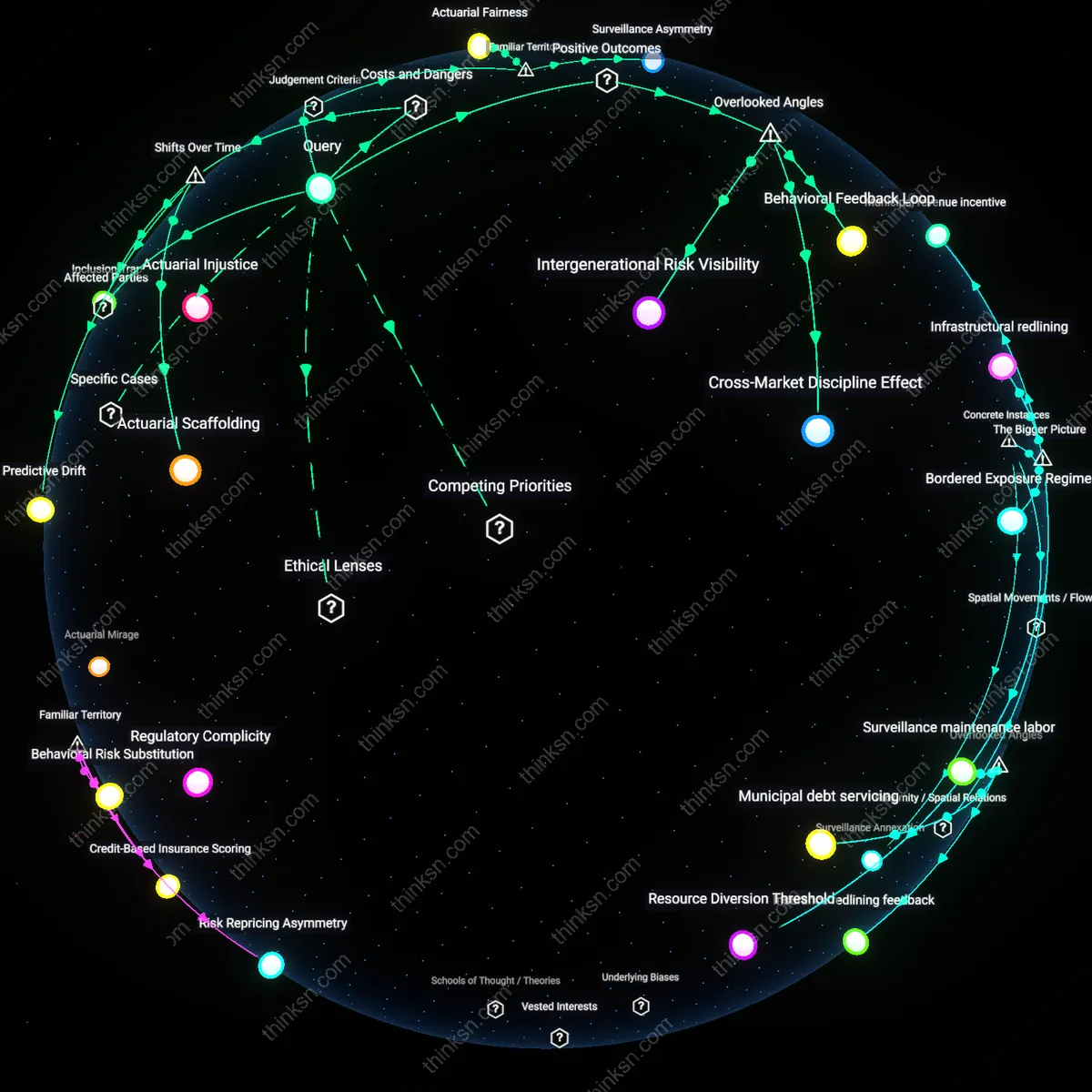

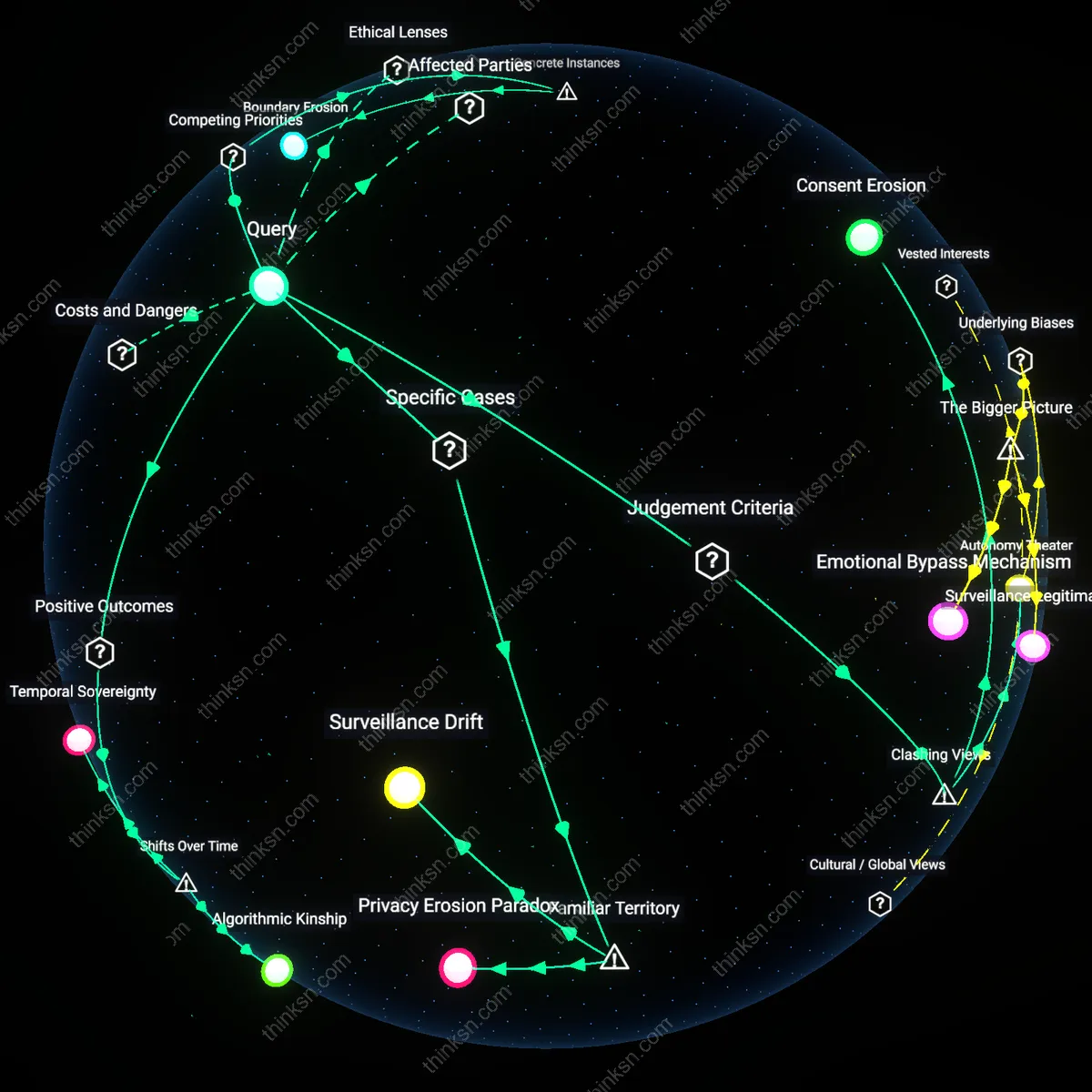

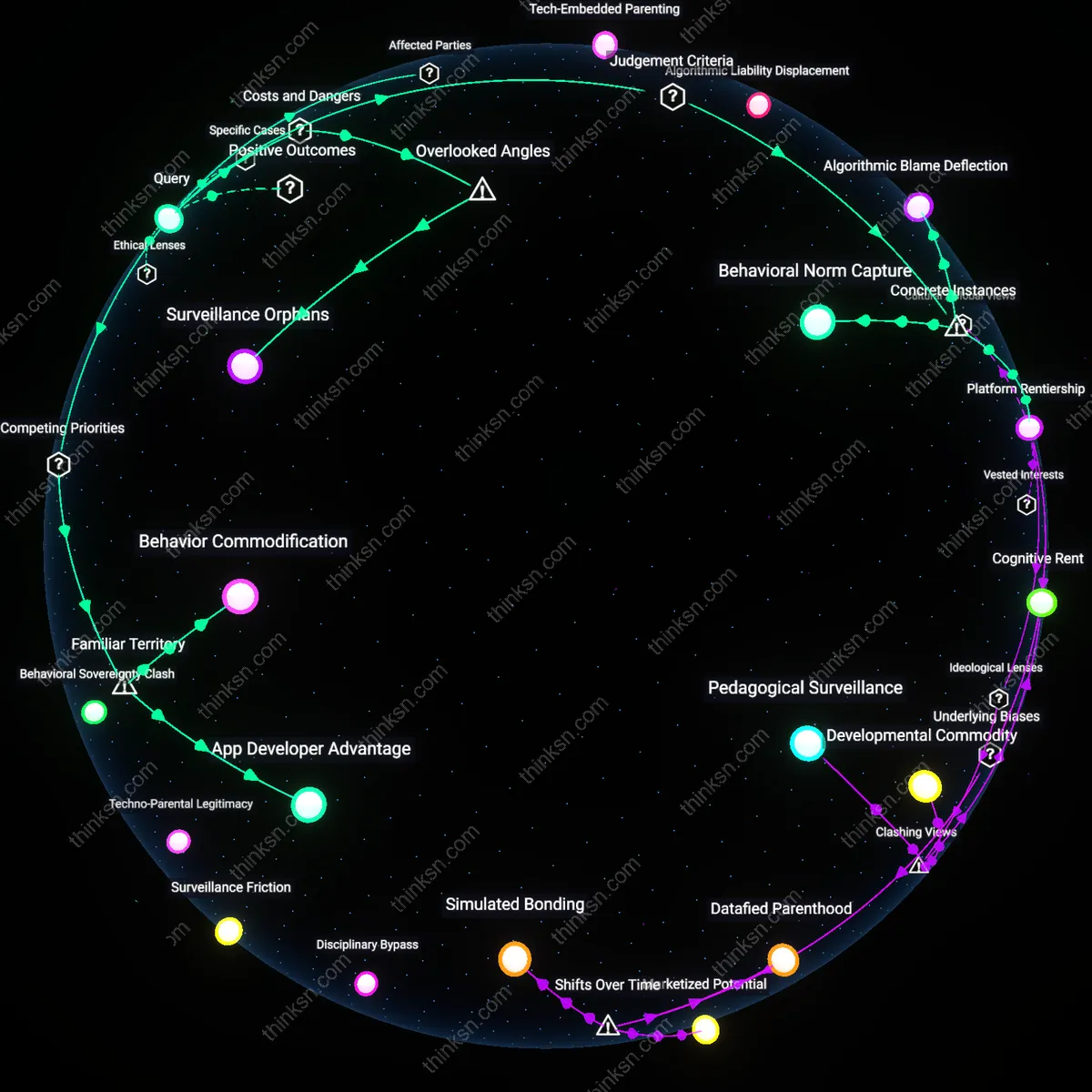

Analysis reveals 6 key thematic connections.

Key Findings

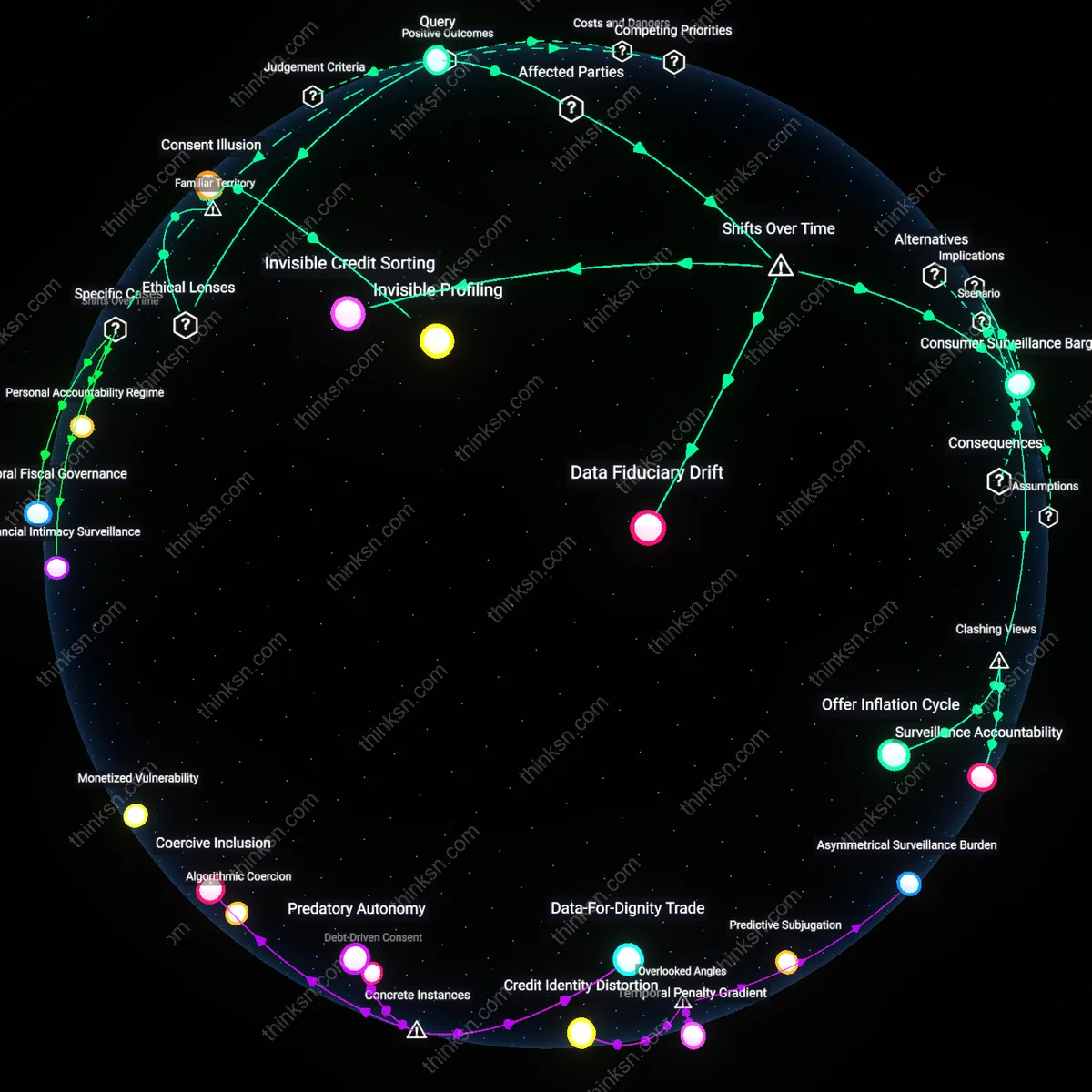

Data Fiduciary Drift

The risk of financial discrimination escalates when budgeting tool providers, historically positioned as consumer advocates, shift toward data brokerage amid lax regulatory enforcement in the years after 2010, redirecting their fiduciary loyalty from users to marketers through opaque data licensing agreements. This transformation exploits the erosion of financial privacy norms following the partial rollback of Dodd-Frank in 2018, enabling third parties to infer economic vulnerability from aggregated spending patterns under the guise of 'personalized advertising.' The non-obvious consequence is that institutions once trusted to protect financial well-being now enable discriminatory targeting by design rather than through breach, which marks a systemic inversion of stewardship.

Invisible Credit Sorting

Financial discrimination risk materializes when third-party marketers use historical spending aggregates to reconstruct proxies for creditworthiness. After the 2008 crisis, traditional scoring models became politically contested and harder to access, which pushed exclusionary practices into unregulated behavioral analytics. Firms now circumvent Equal Credit Opportunity Act safeguards by targeting 'low financial resilience' cohorts identified through transaction timing, category leakage, and cash-flow volatility: patterns that are invisible in credit reports but evident in budgeting app data. What is underappreciated is that this is not a flaw in data sharing but a re-formation of redlining, relocated from geography to the temporal rhythms of consumption.
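To make concrete how little data such a proxy needs, here is a minimal sketch of one possible cash-flow volatility measure. The function names, the `(iso_date, amount)` transaction shape, and the choice of metric (standard deviation of monthly net flow, scaled by mean absolute monthly flow) are all assumptions made for illustration; nothing here is drawn from an actual marketer's model.

```python
from collections import defaultdict
from statistics import mean, pstdev


def monthly_net_flow(transactions):
    """Sum signed amounts per month.

    `transactions` is an iterable of (iso_date, amount) pairs, where
    iso_date looks like "2024-03-17" and income is positive, spending negative.
    (Hypothetical input shape, chosen for this sketch.)
    """
    totals = defaultdict(float)
    for iso_date, amount in transactions:
        totals[iso_date[:7]] += amount  # key on "YYYY-MM"
    return dict(totals)


def cash_flow_volatility(transactions):
    """Std dev of monthly net flow divided by mean absolute monthly flow.

    Returns 0.0 when there is no data or every month nets to zero.
    """
    flows = list(monthly_net_flow(transactions).values())
    if not flows:
        return 0.0
    scale = mean(abs(f) for f in flows)
    return pstdev(flows) / scale if scale else 0.0
```

A household whose months all net to the same amount scores 0.0, while one that swings between surplus and deficit scores higher. The point of the sketch is that a handful of dated amounts suffices to rank users by financial stability without touching a credit report.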

Consumer Surveillance Bargain

The danger of financial discrimination emerges from a post-2016 behavioral shift where users, disillusioned by stagnant wages and rising living costs, trade spending transparency for budgeting tools they perceive as essential financial therapy, unaware that aggregated data feeds an ecosystem of predation masked as convenience. This exchange consolidates power among digital platforms that treat financial vulnerability as a scalable market segment, normalizing surveillance as the price of financial agency. The overlooked insight is that this bargain repackages economic precarity as user consent, transforming austerity into a data-generating condition rather than a policy failure.

Consent Illusion

Ensure users provide informed consent before sharing their data with marketers. This involves presenting clear, jargon-free disclosures about how spending data will be used, who will access it, and what risks arise from re-identification or profiling. The mechanism operates through digital consent interfaces governed by frameworks like GDPR or CCPA, which mandate transparency and user control. What’s underappreciated is that even compliant interfaces often exploit cognitive overload and habituation—users routinely accept terms they don’t read, making consent ritualistic rather than meaningful, especially when the tool is free and perceived as low-risk.
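One way to keep consent from becoming a one-time ritual is to record it per recipient and per purpose, with an expiry date, and to check that record at the moment of sharing. The following is a minimal sketch under those assumptions; `ConsentRecord`, its fields, and `may_share` are hypothetical names, not part of GDPR, CCPA, or any particular tool.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ConsentRecord:
    """A single, purpose-specific grant (hypothetical schema)."""
    user_id: str
    recipient: str      # who may receive the data
    purpose: str        # what it may be used for
    granted_on: date
    expires_on: date    # consent lapses and must be renewed
    revoked: bool = False


def may_share(records, user_id, recipient, purpose, today):
    """True only if a live, unrevoked grant matches this exact use."""
    return any(
        r.user_id == user_id
        and r.recipient == recipient
        and r.purpose == purpose
        and not r.revoked
        and r.granted_on <= today < r.expires_on
        for r in records
    )
```

Because the check matches on both recipient and purpose, a grant for one use does not silently cover another, and an expired or revoked grant blocks sharing by default. This does not solve habituation, but it narrows what a single unread click can authorize.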

Invisible Profiling

Audit third-party marketing algorithms for discriminatory targeting patterns using real-world transaction data. Marketers use aggregated spending behavior to model consumer propensity, often inferring race, gender, or class through proxies like grocery choices or pharmacy purchases, a practice that U.S. fair lending and civil rights law treats as analogous to redlining. The non-obvious risk is that aggregated data, presumed neutral, can still produce disparate impact when algorithms allocate goods, credit, or opportunities based on spending clusters mapped to disadvantaged neighborhoods, effectively automating exclusion without explicit identifiers.
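A basic audit of this kind can start by comparing how often each group is targeted. The sketch below computes per-group targeting rates and their ratio, then flags ratios outside the range 0.8 to 1.25. That threshold borrows the "four-fifths" heuristic from U.S. employment selection guidelines; applying it to marketing is an assumption of this example, not an established legal test. The function names and the `(group, targeted)` record shape are likewise hypothetical.

```python
def selection_rates(records):
    """Share of each group that was targeted.

    `records` is an iterable of (group, targeted) pairs, targeted being a bool.
    """
    counts = {}
    for group, targeted in records:
        seen, hits = counts.get(group, (0, 0))
        counts[group] = (seen + 1, hits + int(targeted))
    return {g: hits / seen for g, (seen, hits) in counts.items()}


def disparate_impact_ratio(records, protected, reference):
    """Targeting rate of the protected group divided by the reference group's."""
    rates = selection_rates(records)
    if rates[reference] == 0:
        raise ValueError("reference group was never targeted; ratio undefined")
    return rates[protected] / rates[reference]


def outside_four_fifths(ratio):
    """Flag ratios below 0.8 or above 1.25 (assumed heuristic threshold)."""
    return ratio < 0.8 or ratio > 1.25
```

The two-sided check matters because the harmful direction depends on the offer: being shown a favorable product less often and being shown a high-interest loan more often are both forms of disparate treatment. A flagged ratio is a prompt for closer review, not proof of discrimination, since the auditor still needs group labels or a defensible proxy for them.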

Value Extraction

Restrict data-sharing to parties that compensate users for their financial behavior insights. Free budgeting tools function as digital labor platforms where users generate valuable behavioral data through routine logging, a process aligned with Marxian critiques of immaterial labor under platform capitalism. The underappreciated reality is that while marketers profit from hyper-targeted campaigns based on categorized spending—such as targeting 'low-income frugal households' for high-interest loans—the users who produce this data see no return, reinforcing economic hierarchies under the guise of financial empowerment.