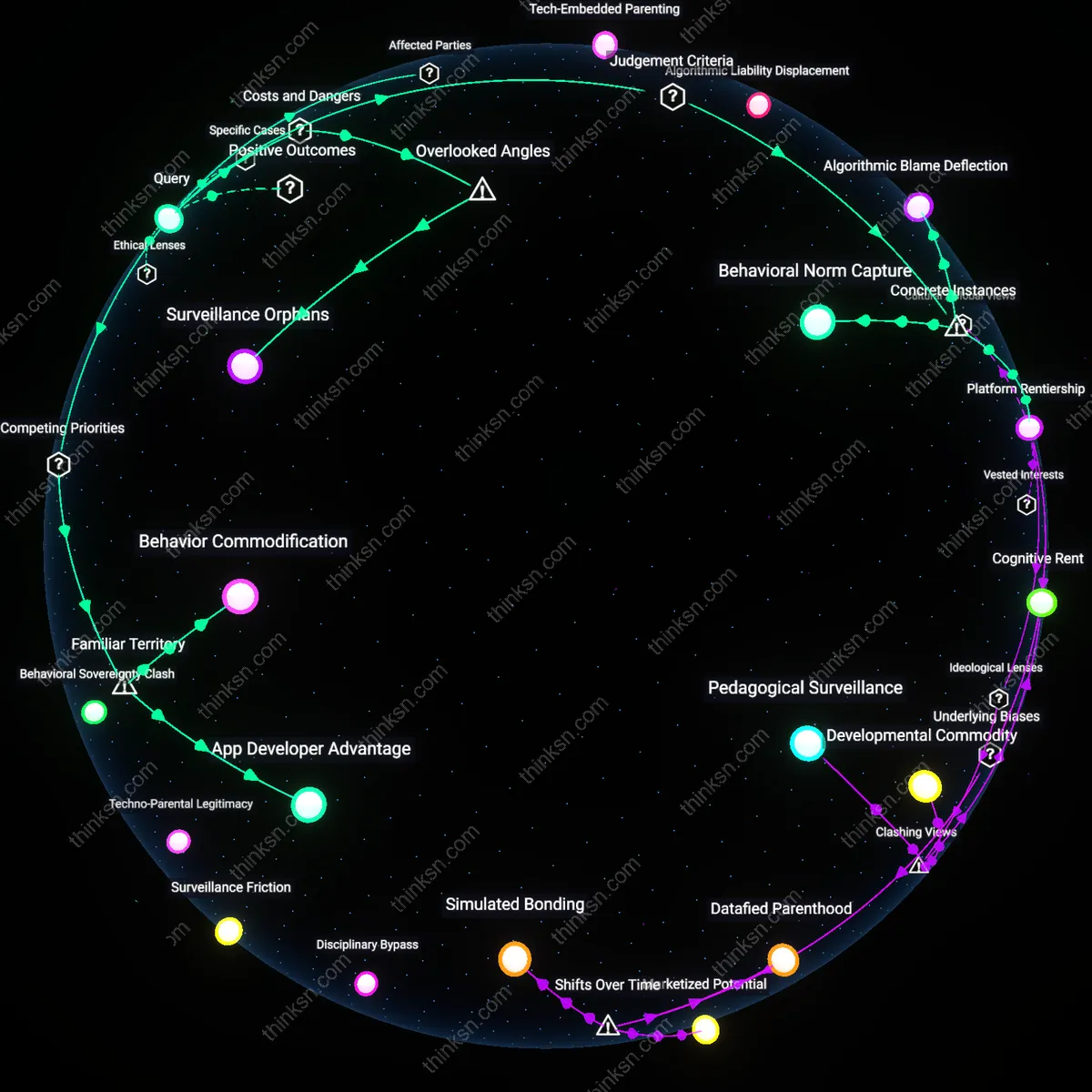

Are Parenting Apps Hurting More Than They Help?

Analysis reveals 9 key thematic connections.

Key Findings

Tech-Embedded Parenting

Venture-funded app developers benefit by monetizing parental anxiety through subscription models that reframe developmental variability as a data problem requiring constant tracking and correction. These platforms thrive not on proven behavioral outcomes but on the creation of perceived vigilance gaps—parents are incentivized to monitor and modify toddler behavior through gamified dashboards and AI-generated 'insights' that lack clinical validation, effectively turning everyday developmental stages into marketable moments of crisis. This shifts responsibility from pediatric and educational institutions to consumer technology, normalizing algorithmic intervention in early childhood without oversight. The non-obvious consequence is not that parents are misled, but that parenting itself is being redefined as a performance metric governed by Silicon Valley design logic rather than developmental science.

Disciplinary Surveillance Drift

Early childhood educators in underfunded public systems benefit indirectly when app-mediated discipline strategies absorb behavioral expectations previously managed through inclusive pedagogy, allowing schools to offload developmental support to homes equipped with tracking tools. As certain families arrive with 'optimized' children—conditioned by reward-punishment algorithms calibrated through app data—classroom norms shift toward behavioral predictability, privileging algorithmic compliance over socioemotional diversity. This creates a silent alignment between ed-tech aspirations and data-driven parenting, where the absence of scientific consensus becomes an asset, enabling experimental behavior-shaping to occur in private homes before institutional adoption. The dissonance lies in viewing apps not as substitutes for professional guidance but as pilot programs for scalable social control masked as parental choice.

Algorithmic Liability Displacement

Insurance and risk-assessment firms benefit by exploiting the unregulated data flows generated by parenting apps to model long-term behavioral risk profiles of children before any clinical diagnosis could ethically or legally exist. By aggregating anonymized behavioral logs—sleep patterns, tantrum frequency, obedience scores—these apps create shadow datasets that reinsurers and private education providers can use to anticipate future claims or exclude high-risk demographics preemptively. The lack of scientific consensus shields these actors from liability, as the data is framed as 'personalized insights' rather than medical assessment, yet it still informs actuarial logic downstream. The underappreciated mechanism is how regulatory ambiguity enables covert actuarial colonization of early childhood, where discipline becomes a data source for future exclusion.

Platform Rentiership

Tech companies benefit by monetizing parental anxiety through subscription-based access to unverified behavior analytics. The app developer Ovia Parenting, for example, repurposed fertility tracking infrastructure to sell premium 'development milestone' insights without clinical validation. The case reveals how digital health platforms extract revenue by positioning themselves as indispensable intermediaries in caregiving decisions, despite the absence of regulatory scrutiny or peer-reviewed evidence of efficacy. Through datafication and recurring billing cycles, developmental uncertainty becomes a profit stream.

Algorithmic Blame Deflection

Parents benefit psychologically by outsourcing disciplinary accountability to app-generated recommendations. Users of the Australian-designed app 'Baby Connect', for example, reported reduced guilt when enforcing screen-time limits that were justified by the app’s seemingly neutral metrics. The case illustrates how data interfaces redistribute moral responsibility from caregiver to algorithm. They offer an illusory shield against accusations of inconsistent parenting in socially surveilled environments such as school pickup circles or family group chats, where technologically mediated decisions appear more objective than personal judgment.

Behavioral Norm Capture

Educational consultants and early childhood coaching franchises benefit by aligning private app data with norm-referenced developmental frameworks. Bright Horizons, a U.S.-based childcare provider, demonstrated this when it integrated third-party app data into individualized learning plans to justify pedagogical interventions. The case exposes how aggregated, non-consensual toddler behavior data feeds professional ecosystems that profit from defining 'atypical' development. Commercial actors can thereby shape behavioral standards while cloaking subjectivity in algorithmic authority, and scale their influence without clinical oversight.

Surveillance Orphans

Tech investors benefit from the erosion of intergenerational caregiving networks by monetizing data harvested from isolated parents who distrust traditional childcare wisdom. As extended family structures dissolve or become geographically dispersed, first-time parents turn to apps for guidance, unaware that their inputs are being commodified into proprietary behavior prediction models; this dynamic turns developmental milestones into surveillance artifacts, effectively converting intimate family moments into training data for profit-driven algorithms. The non-obvious cost is how the dismantling of communal knowledge systems creates a dependency class—'surveillance orphans'—who inherit no ancestral care practices and thus submit more readily to technocratic parenting solutions that deepen data extraction.

App Developer Advantage

Venture-backed parenting app companies benefit most by monetizing parents' anxiety through algorithmic behavior tracking. These firms convert ambiguous toddler behaviors into sellable data insights, leveraging parental fears of developmental delays to secure recurring subscriptions despite the absence of clinical validation. The non-obvious reality is that the familiar framing of 'supportive tech' masks a structural incentive to pathologize normal behavior, turning everyday childhood expressions into market opportunities.

Behavior Commodification

Data brokers benefit by harvesting behavioral metrics from toddler interactions, embedding surveillance norms early through parenting routines. The system operates via opt-in consent buried in app terms, where emotional appeals to child wellbeing obscure long-term data harvesting. What feels like familiar, benign support software becomes an on-ramp to lifelong behavioral tracking—normalizing extraction under the guise of developmental care.