Why Does the Senate Fund AI Research Over Oversight?

Analysis reveals 6 key thematic connections.

Key Findings

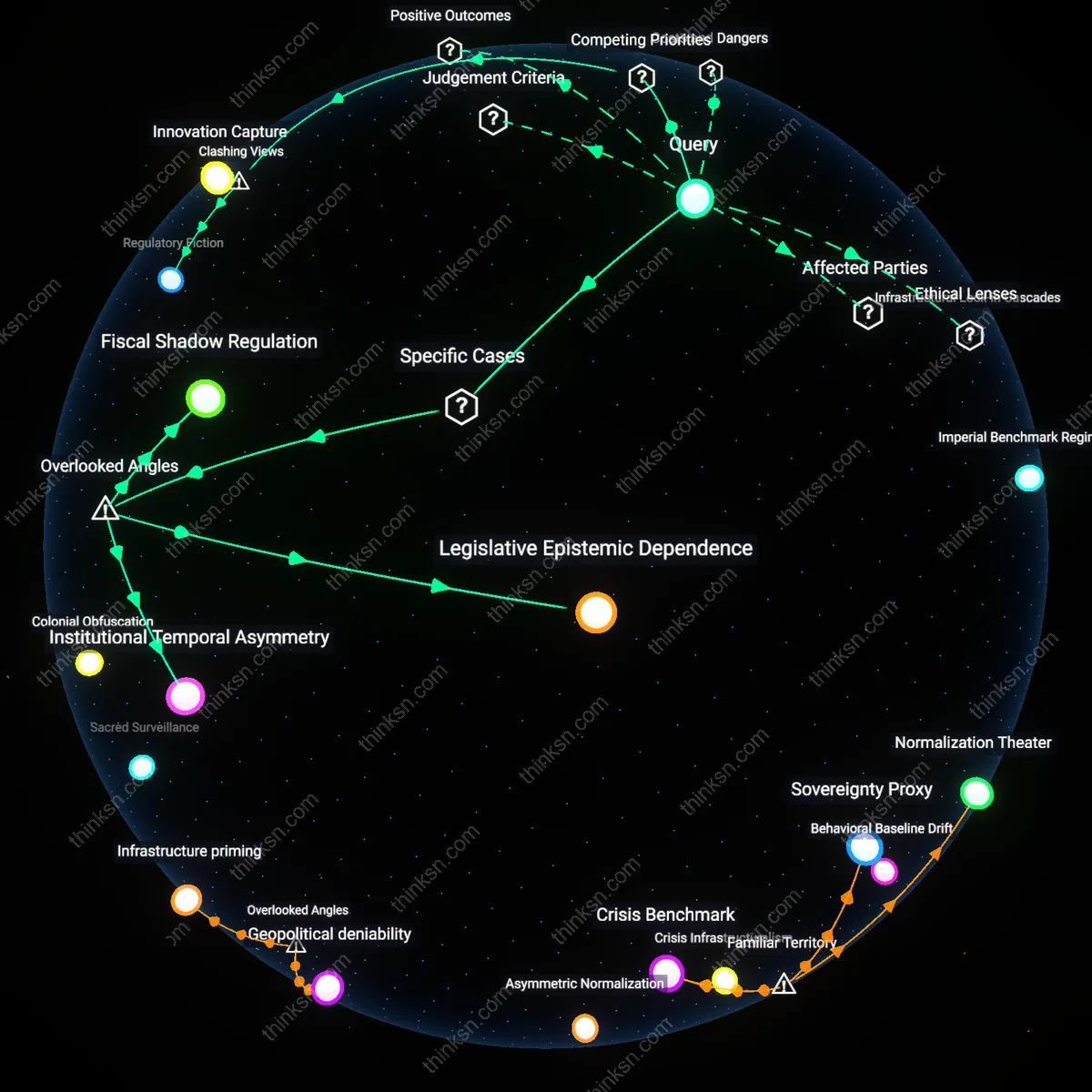

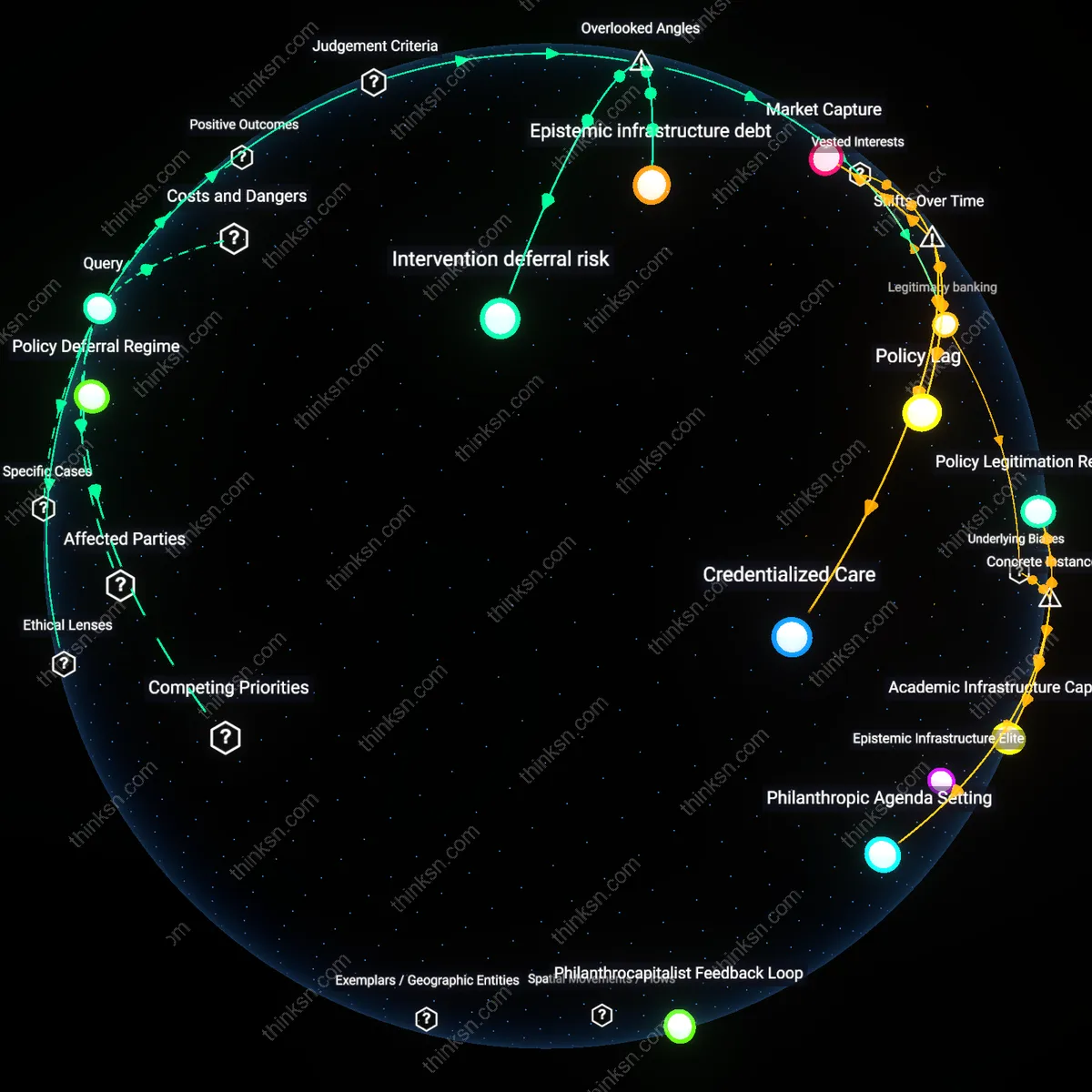

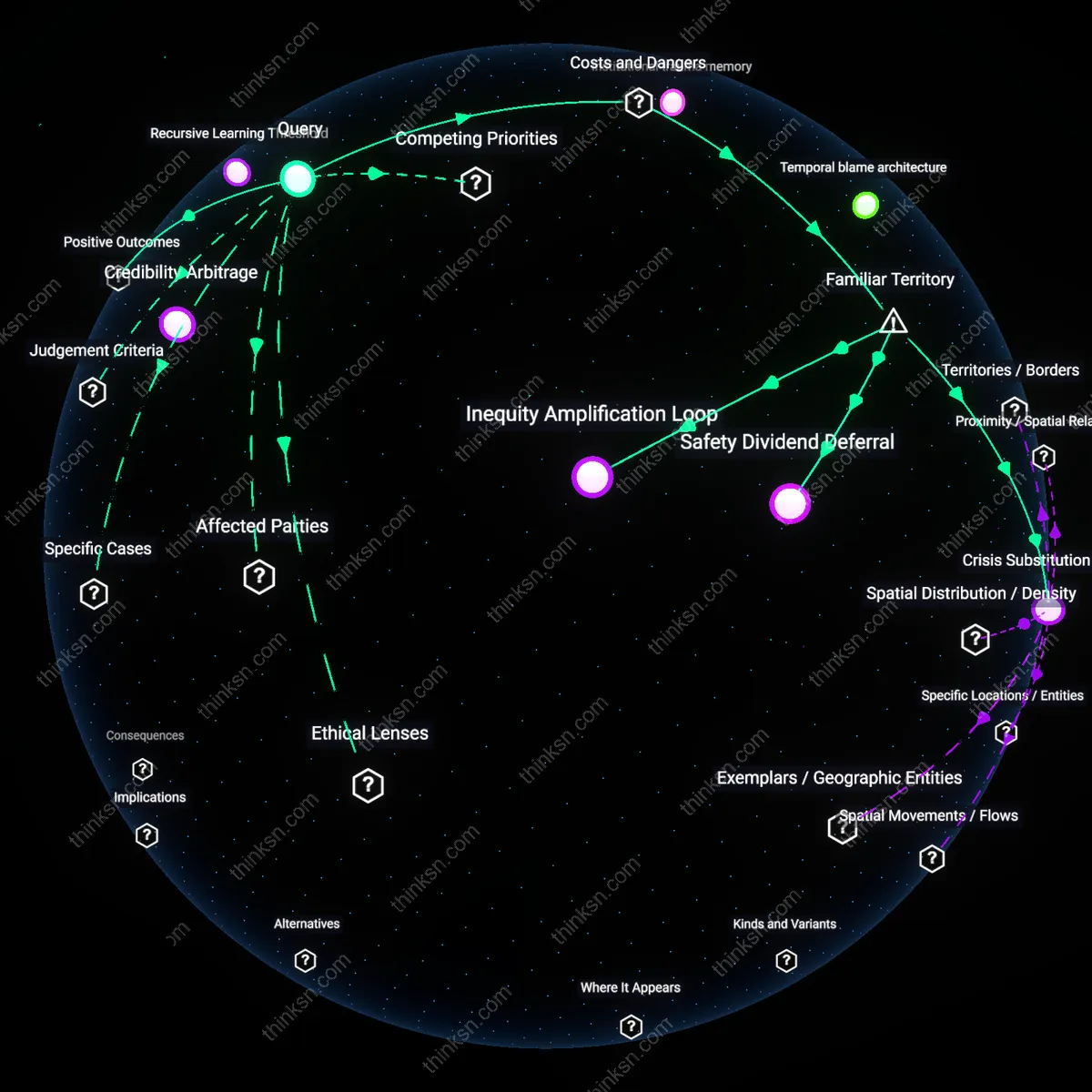

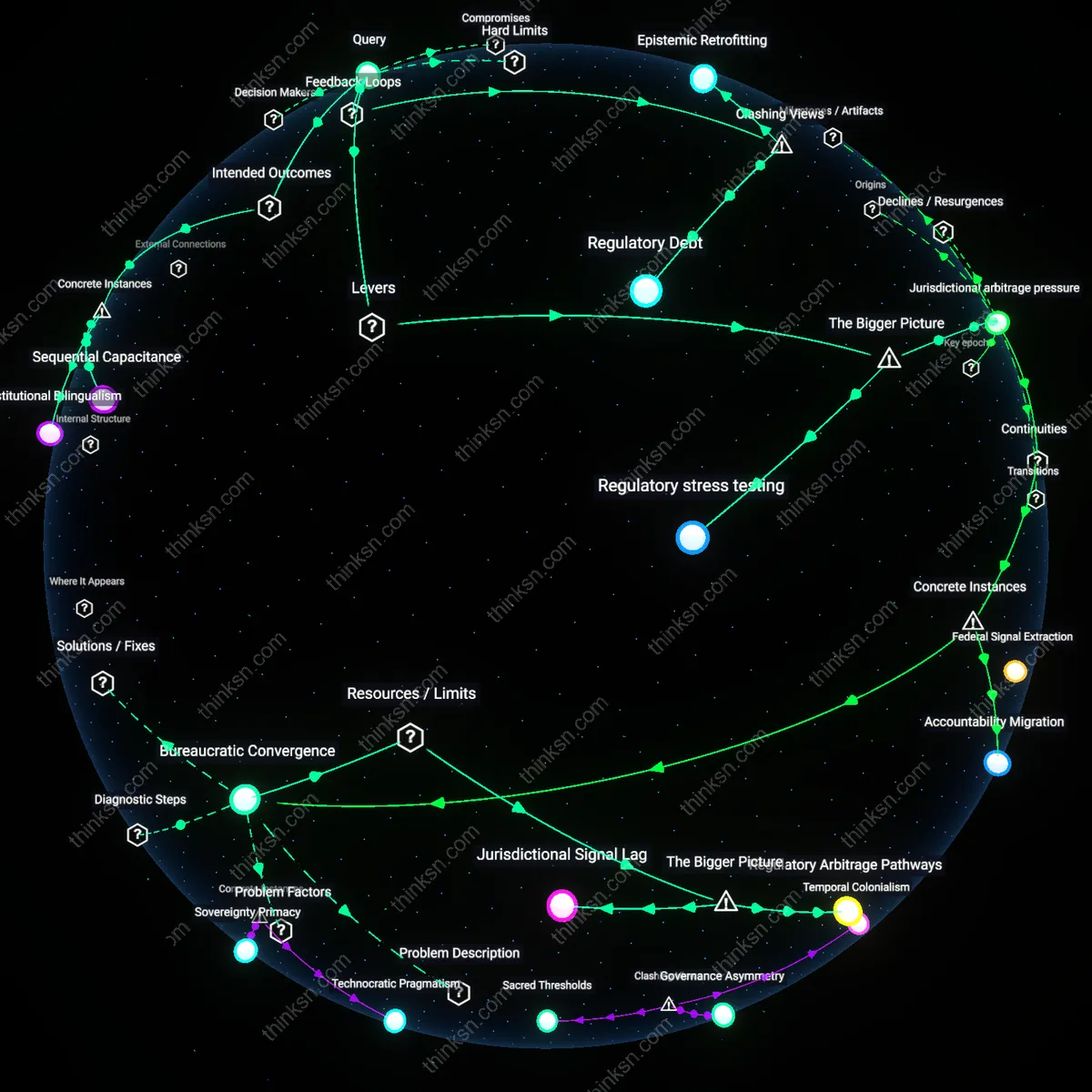

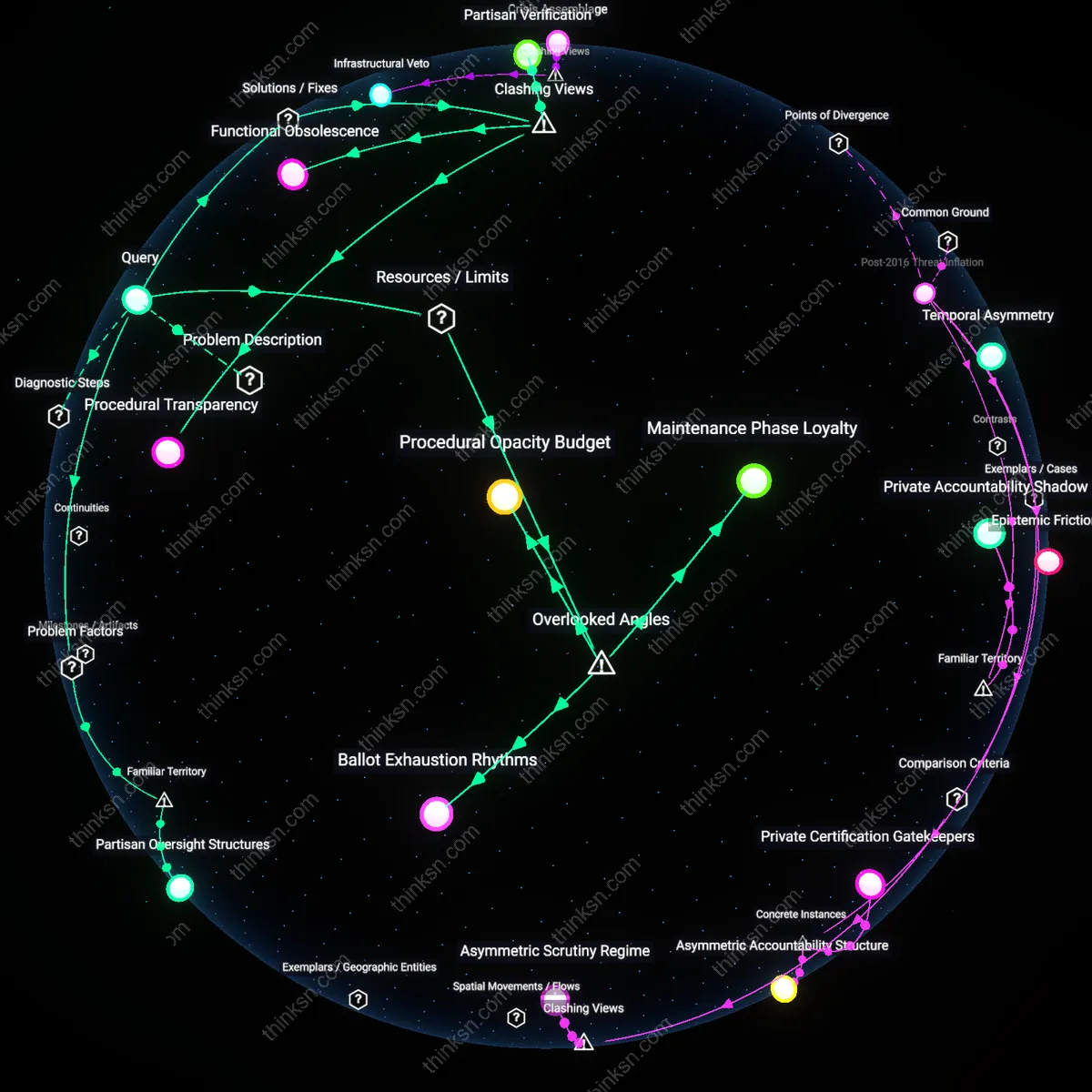

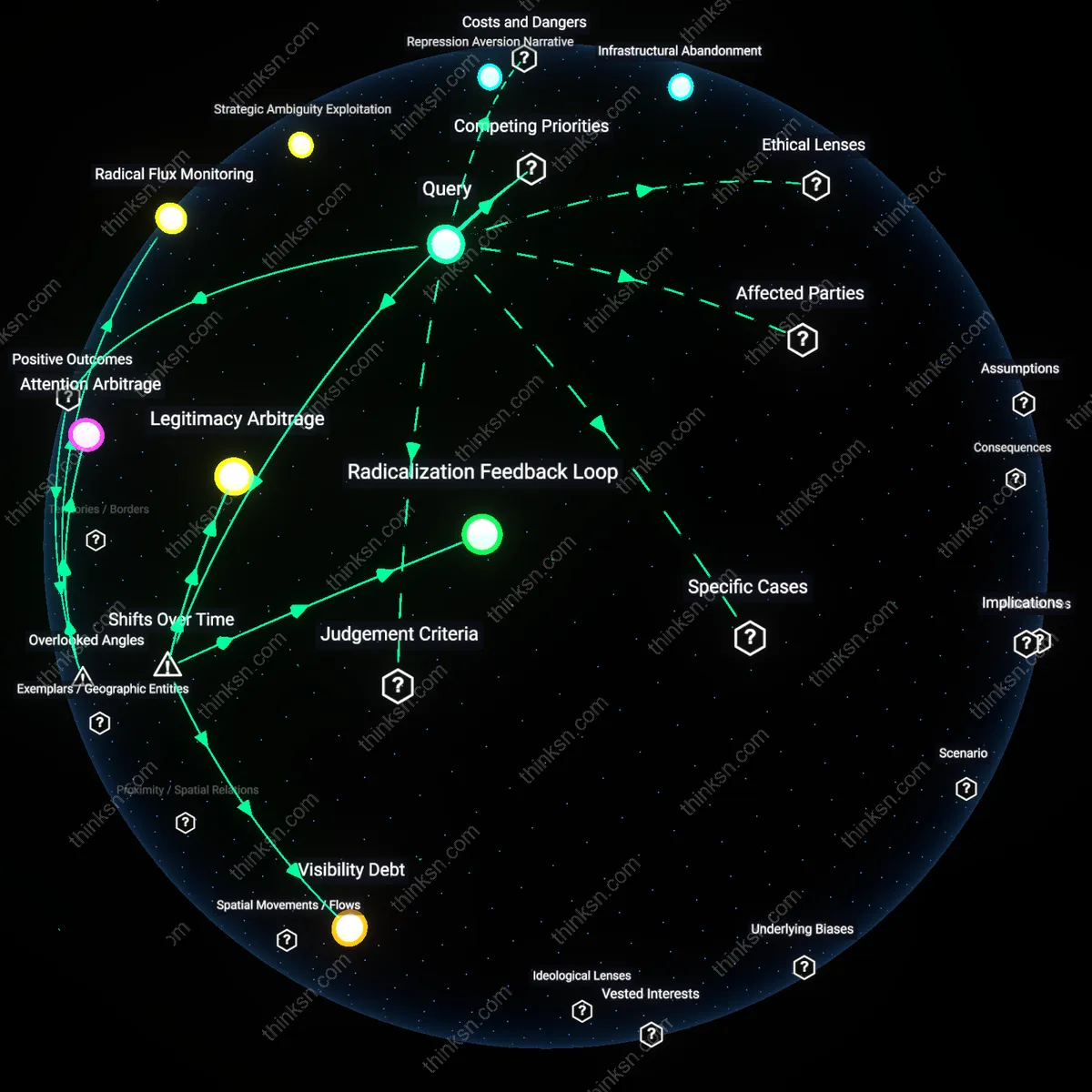

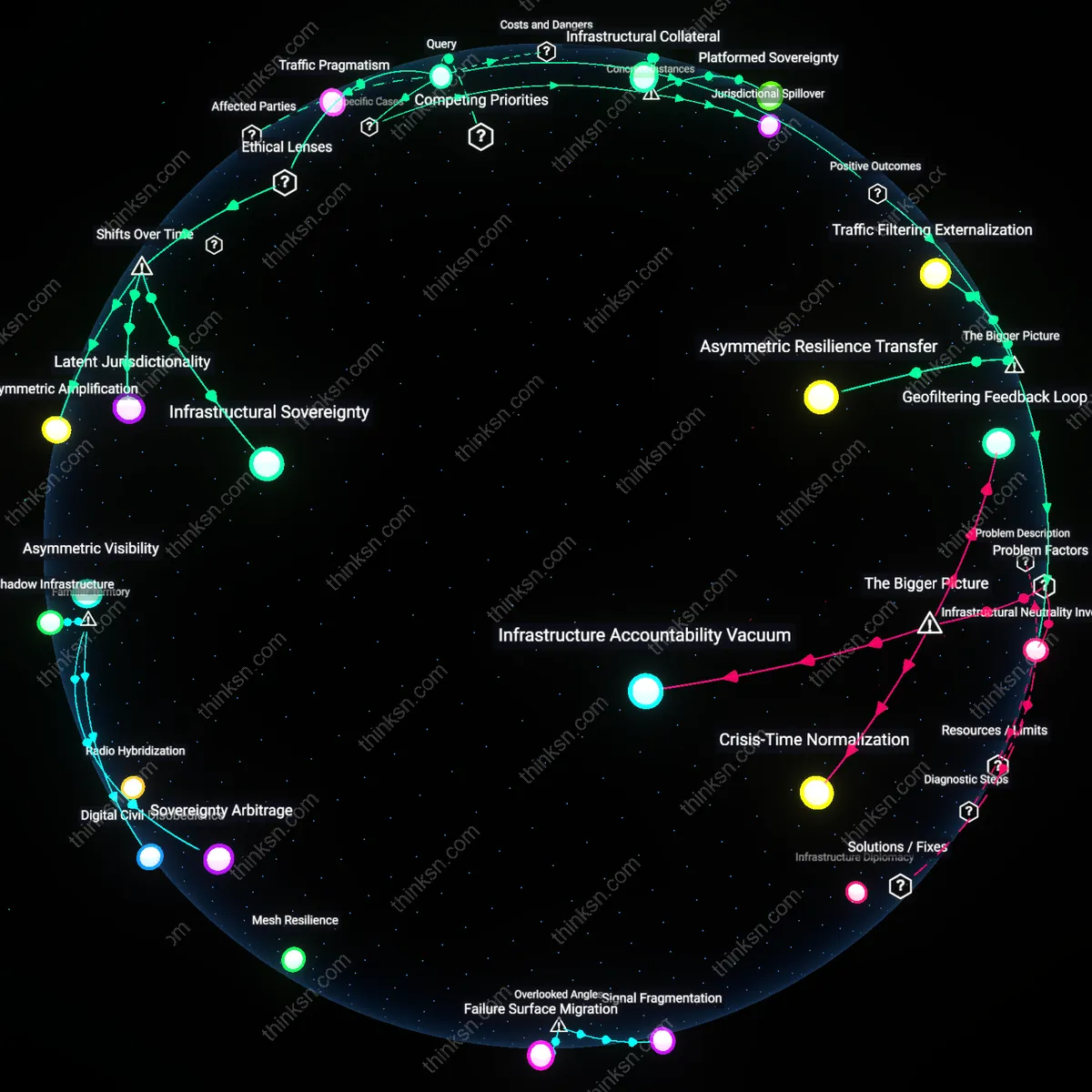

Oversight Evasion

Prioritizing AI research funding over regulatory infrastructure enables agencies like NIST and the NSF to expand technical capabilities without binding accountability mechanisms, allowing AI systems to enter consumer markets ahead of safety validation. This dynamic privileges developers and federal innovation agendas over public welfare safeguards, shifting risk onto individual users rather than institutional oversight bodies. The non-obvious consequence is not merely delayed regulation but the active construction of legal and technological pathways that leave future oversight technically obstructed and politically resisted.

Regulatory Fiction

By framing oversight as a secondary phase contingent on research maturity, the Senate’s bill constructs a temporal alibi that postpones enforceable consumer protections indefinitely, treating them as emergent byproducts rather than coequal policy objectives. This sequencing assumes that knowledge generation will naturally yield protective frameworks, ignoring how research institutions like DARPA and corporate labs are structurally incentivized to suppress risk data. The underappreciated mechanism is the transformation of oversight from a procedural requirement into a rhetorical promise, which allows policymakers to claim responsibility while avoiding confrontation with powerful industry stakeholders.

Innovation Capture

The concentration of funds in research channels controlled by elite universities and defense-adjacent tech firms entrenches a narrow conception of innovation that excludes public-interest design and grassroots accountability testing, effectively locking out alternative AI development paradigms. Oversight demands distributed monitoring and participatory standards-setting, functions at odds with the centralized, proprietary models favored by grantees like those in the CHIPS Initiative. As a result, consumer protection becomes functionally incompatible with the innovation model being resourced. The overlooked reality is that the trade-off isn’t between speed and safety, but between two competing visions of technological governance, one of which is being silently institutionalized through budgetary default.

Legislative Epistemic Dependence

The U.S. Senate’s prioritization of research funding over oversight in the Artificial Intelligence Research, Innovation, and Accountability Act of 2023 entrenches legislative reliance on federally funded R&D institutions, such as NIST and NSF, to define what constitutes 'safe' or 'ethical' AI, thereby deferring regulatory standards to the same entities driving technical advancement. This creates a feedback loop in which oversight frameworks are shaped by research paradigms that inherently favor innovation velocity, normalizing risk tolerance in consumer protection outcomes. The overlooked mechanism is that by delegating epistemic authority to research bodies without mandating independent red-teaming or adversarial testing, the Senate inadvertently institutionalizes a developmental bias that treats safety as an emergent byproduct rather than a preemptive requirement. What mainstream discourse misses is that the structure of knowledge production itself, rather than just funding levels, becomes a regulatory determinant.

Fiscal Shadow Regulation

The exclusion of mandatory audit rights or enforcement triggers in the Senate’s bill enables private AI developers, such as major cloud providers hosting dual-use foundation models, to treat federal research grants as de facto compliance signals, reducing the perceived need for external scrutiny. Because firms can advertise government-funded R&D partnerships as proof of legitimacy, the market for AI services interprets research investment as a proxy for regulatory adherence, even in the absence of enforceable consumer protections. This dynamic illustrates how fiscal instruments (grants, contracts, subsidies) function as stealth regulatory tools by shaping industry behavior without legislative mandates. The overlooked dimension is that funding allocations, when asymmetrically distributed, exert regulatory influence not through compliance burdens but through reputational capital, altering firm incentives in ways that formal rulemaking cannot easily reverse.

Institutional Temporal Asymmetry

The Senate’s focus on long-term AI research through agencies like DARPA and the Army Research Laboratory (ARL) creates a temporal misalignment between innovation cycles and consumer harm remediation, as seen in the delayed response to algorithmic discrimination in federally funded natural language processing tools adopted by commercial credit scoring platforms. Research timelines operate on decade-scale horizons, while consumer risks, such as biased loan denials, materialize within months of deployment, leaving victims without recourse during the oversight gap. The underappreciated factor is temporal structure: the differing speeds at which knowledge is produced and harm is experienced create a systemic vulnerability in which policy appears forward-looking but is functionally reactive. This asymmetry erodes public trust not through explicit failure, but because institutional rhythms are misaligned with the lived temporalities of risk.