Balancing AI Expertise with Traditional Skills in Uncertain Policy Times?

Analysis reveals 8 key thematic connections.

Key Findings

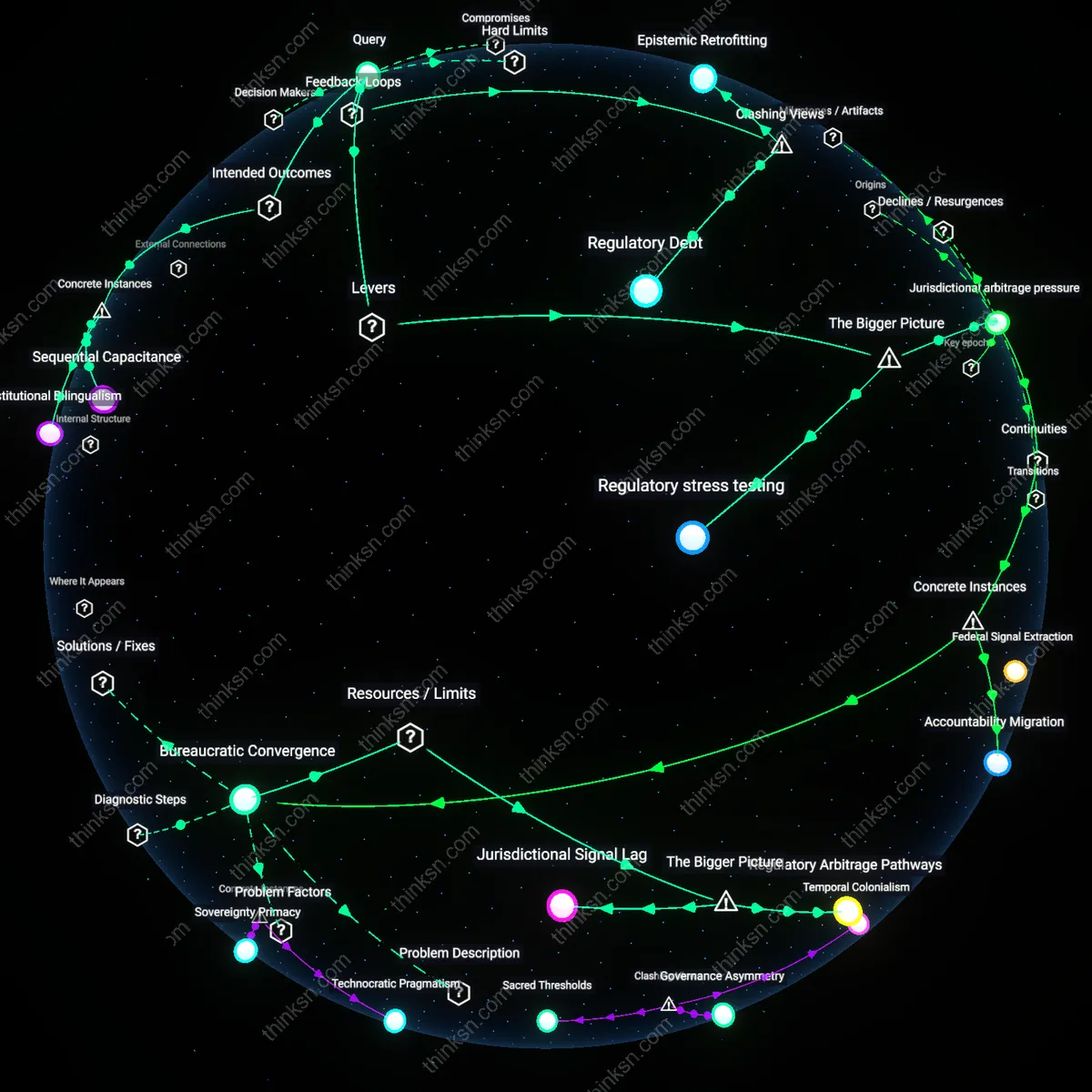

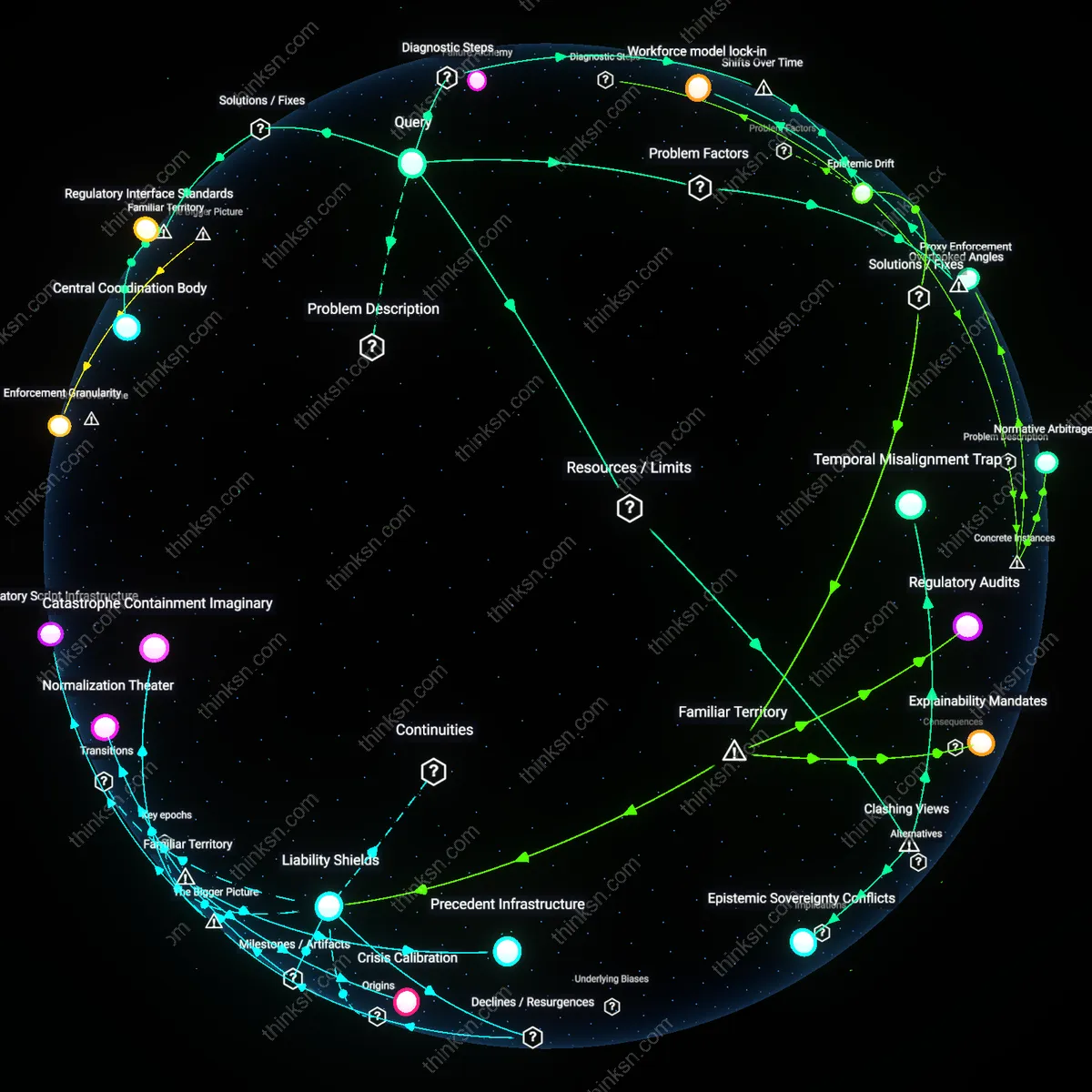

Regulatory Stress Testing

Design cross-sector pilot regulations that simulate high-impact AI deployment scenarios under varying legal futures, such as an executive order banning autonomous decision-making in social services. Stress-testing both AI impact assessments and traditional cost-benefit analyses against these scenarios lets senior analysts expose the incompatibilities, skill gaps, and latent assumptions in each approach. The work runs through interagency working groups, like those convened under the National Artificial Intelligence Initiative, that align technical AI evaluation with statutory compliance planning. The underappreciated dynamic is that scenario-based exercises build tacit knowledge and institutional alignment not through binding policy but through shared interpretive frames for uncertainty, and those frames persist beyond any single administration’s agenda.

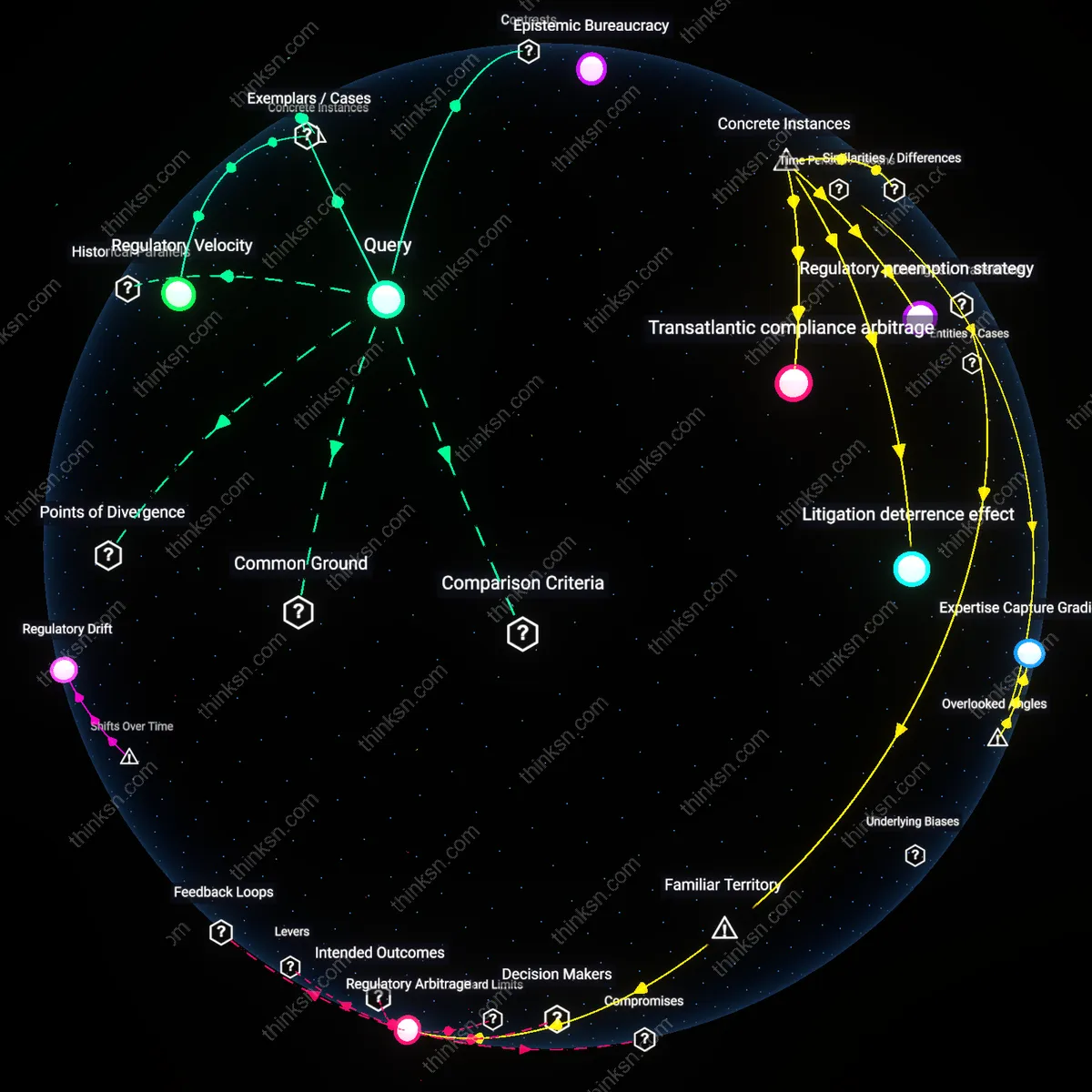

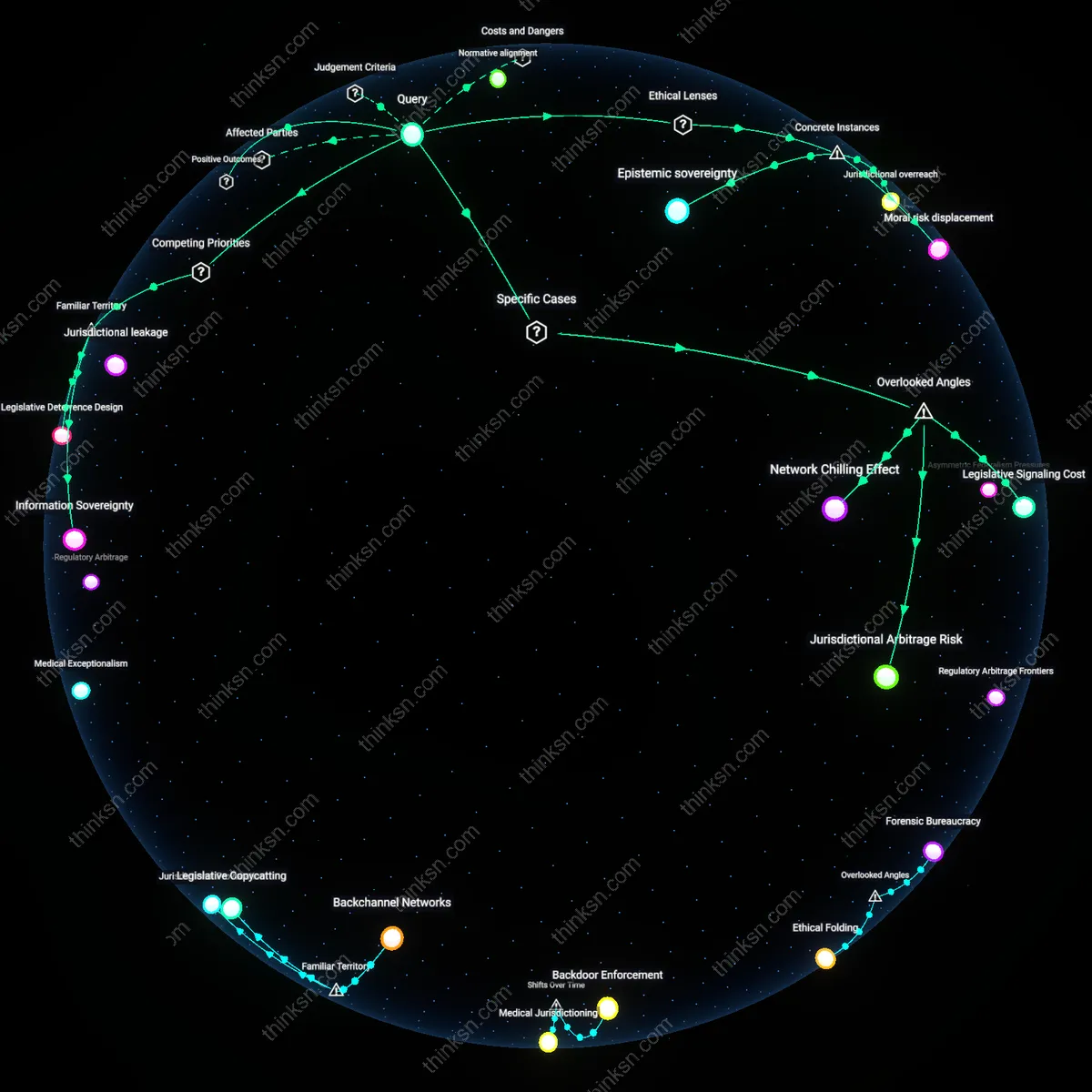

Jurisdictional Arbitrage Pressure

Leverage state-level AI governance experiments, such as California’s automated decision system audits or Colorado’s algorithmic accountability law, as live testing grounds for hybrid assessment models that merge qualitative equity analysis with quantitative regulatory impact metrics. Senior federal analysts can selectively adopt validated components from these divergent frameworks, building a portfolio of modular assessment tools that keep working as federal mandates shift. This works because federal policy vacuums incentivize subnational innovation, and the resulting regulatory heterogeneity forces federal analysts to develop translation mechanisms between regimes. The key insight is that legislative unpredictability at the national level amplifies the strategic value of subfederal policy variation, turning jurisdictional fragmentation into a methodological resource rather than a compliance burden.

Regulatory Debt

Prioritize documenting outdated regulatory assumptions in AI-adjacent domains so that traditional analysis becomes a source of system correction rather than obstruction. Doing so puts legacy frameworks into active feedback with emerging AI impact assessments by exposing where old cost-benefit logics fail under computational scale and opacity, as seen in environmental impact reviews now strained by the AI-driven energy demands of data centers. The mechanism, archiving regulatory assumptions as auditable liabilities, creates a balancing loop that slows AI integration only where precedent is most fragile, countering the assumption that speed requires discarding old methods. What is non-obvious is that regulatory inertia is not a flaw to overcome but a diagnostic signal marking where AI disrupts foundational policy premises.

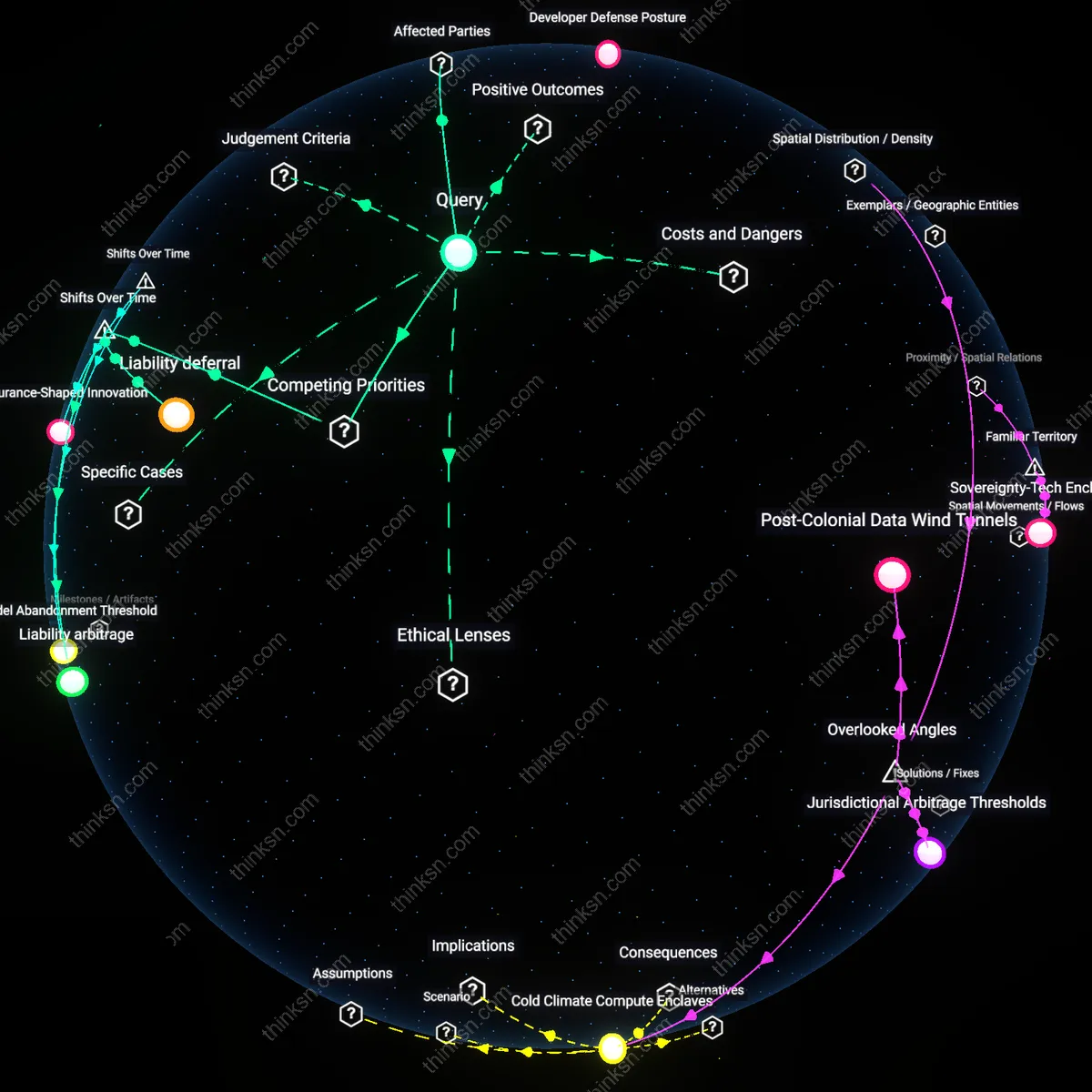

Assessment Arbitrage

Exploit jurisdictional mismatches in AI governance to preserve traditional analysis by positioning it as a competitive advantage in regulatory credibility. When states like California enforce rigorous environmental review under CEQA while federal AI guidelines remain voluntary, senior analysts can use the rigor of traditional methods as a counterweight to federally driven AI acceleration, creating a reinforcing loop in which methodological conservatism gains legitimacy through legal durability. This reverses the dominant narrative that agility is the sole metric of effective policy analysis and shows that strategic sluggishness can be weaponized to force higher evidentiary standards on AI deployment. The underappreciated dynamic is that fragmentation in legislative outcomes does not hinder regulation; it enables the niche survival of traditional tools through differential enforcement risk.

Epistemic Retrofitting

Reframe traditional regulatory analysis as infrastructure for AI accountability by repurposing cost-benefit templates to quantify AI’s externalities in established fiscal terms, thereby embedding legacy systems into AI assessment rather than replacing them. In the U.S. Office of Management and Budget’s guidance on AI rulemaking, translating algorithmic bias into monetized social costs forces agencies to use decades-old regulatory impact analysis (RIA) formats to justify AI governance, creating a balancing loop that resists full methodological displacement. This challenges the idea that new tools are inherently more adaptive and shows instead that institutional survival often depends on making the new dependent on the old. The overlooked reality is that path dependency is not a constraint on innovation; it is a leverage point for maintaining analytical continuity amid political flux.
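To make the retrofitting idea concrete, the following is a minimal sketch of how a monetized bias cost could sit inside an ordinary discounted cost-benefit calculation. Everything here is an assumption for illustration: the `AnnualFlow` type, the `net_present_value` helper, the dollar figures, and the 3% discount rate are hypothetical and are not taken from any OMB template.

```python
from dataclasses import dataclass


@dataclass
class AnnualFlow:
    """Monetized effects of an AI deployment for one year (hypothetical)."""
    year: int           # years from the present; 0 means now
    benefits: float     # e.g. administrative savings
    costs: float        # direct compliance and operating costs
    bias_harm: float    # monetized social cost of algorithmic bias


def net_present_value(flows, discount_rate):
    """Discount each year's net benefit to the present and sum the results.

    The bias harm is treated as one more cost line, which is the
    'retrofit': the legacy format stays the same, only the inputs change.
    """
    return sum(
        (f.benefits - f.costs - f.bias_harm) / (1 + discount_rate) ** f.year
        for f in flows
    )


# Illustrative figures only (arbitrary currency units).
flows = [
    AnnualFlow(year=0, benefits=0.0, costs=500.0, bias_harm=0.0),
    AnnualFlow(year=1, benefits=400.0, costs=50.0, bias_harm=80.0),
    AnnualFlow(year=2, benefits=400.0, costs=50.0, bias_harm=80.0),
]

# The discount rate is an assumption; analysts would use whatever rate
# their governing guidance prescribes.
print(round(net_present_value(flows, discount_rate=0.03), 2))
```

Keeping the bias harm as a separate field, rather than folding it into `costs`, lets a reviewer see how much of the result depends on the monetization assumption.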

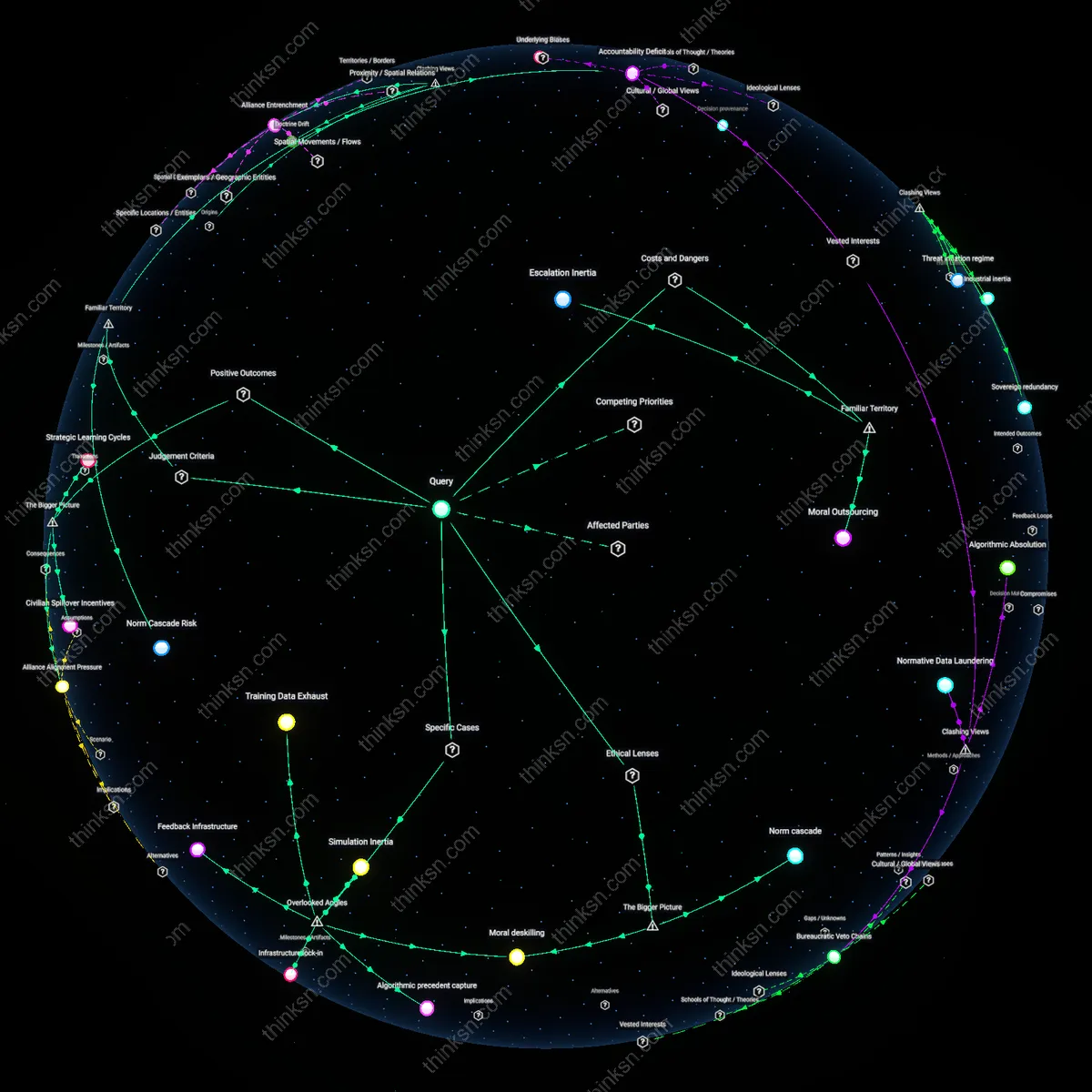

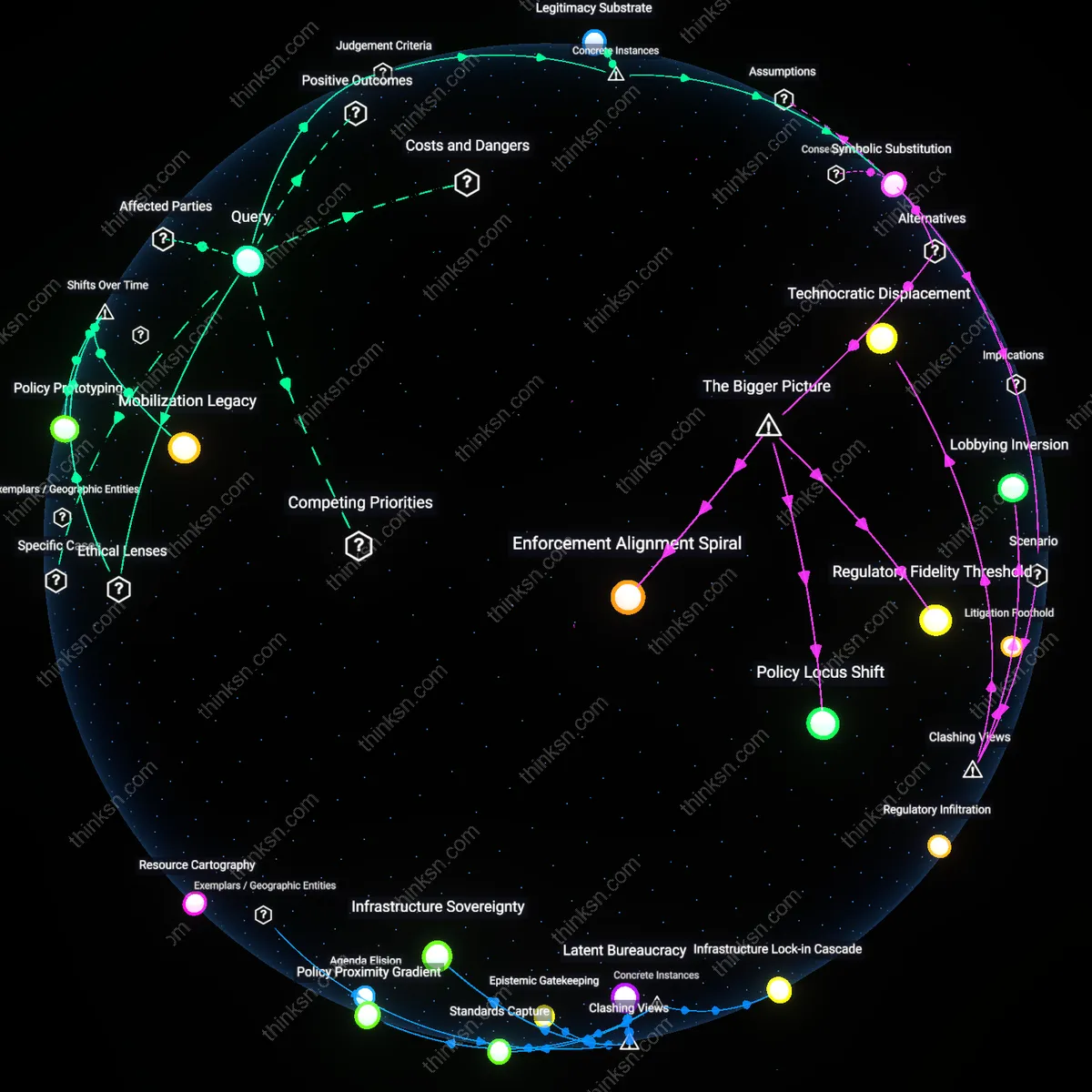

Sequential Capacitance

A senior policy analyst can balance AI impact assessment and traditional regulatory analysis by activating distinct methodological frameworks in sequence, depending on legislative clarity. The U.S. Office of Management and Budget illustrates this: it used Circular A-4 during stable periods and ad hoc AI review panels during the regulatory uncertainty of the Algorithmic Accountability Act debates in 2019–2020. This mechanism separates but preserves core analytic competencies by deploying them only when institutional signals authorize their use. It shows that temporal staggering of tools, not integration, can prevent skill atrophy under volatility.

Institutional Bilingualism

By institutionalizing dual-track training programs within the UK Government Digital Service after 2017, senior analysts maintained fluency in cost-benefit analysis while building AI impact assessment skills. They used live regulatory impact assessments (RIAs) for Brexit-related statutes alongside experimental AI equity audits for automated benefits systems. This parallel skill maintenance through co-located practice communities shows that organizational design, not individual upskilling, sustains technical pluralism when legislative futures are contested.

Legislative Anticipation Threshold

In 2020 the Impact Assessment Agency of Canada embedded a trigger-based protocol that requires AI-specific assessments only when automated decision-making exceeds a threshold of public harm risk, as defined in the Treasury Board’s Directive on Automated Decision-Making, and retains standard regulatory analysis for all other cases. This rule-bound bifurcation, activated during the pharmacare legislation debates, shows that analytically separable assessment regimes can be preserved through legally calibrated thresholds that insulate routine analysis from speculative technical overreach.
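The trigger logic described above can be sketched as a small routing rule. This is a hedged illustration, not the protocol itself: the `Track` names, the `route_assessment` function, the 0 to 1 harm-risk scale, and the 0.5 cutoff are all hypothetical, and a real implementation would take its risk levels from the governing directive.

```python
from enum import Enum


class Track(Enum):
    """The two assessment regimes kept separate by the threshold."""
    AI_IMPACT_ASSESSMENT = "ai_impact_assessment"
    STANDARD_REGULATORY_ANALYSIS = "standard_regulatory_analysis"


# Hypothetical cutoff on a hypothetical 0-1 public-harm-risk scale.
HARM_RISK_THRESHOLD = 0.5


def route_assessment(uses_automated_decision: bool,
                     harm_risk: float,
                     threshold: float = HARM_RISK_THRESHOLD) -> Track:
    """Pick an assessment track for a proposal.

    The AI-specific track applies only when the proposal involves
    automated decision-making AND its harm risk strictly exceeds the
    threshold; everything else stays on the standard track.
    """
    if not 0.0 <= harm_risk <= 1.0:
        raise ValueError("harm_risk must be between 0 and 1")
    if uses_automated_decision and harm_risk > threshold:
        return Track.AI_IMPACT_ASSESSMENT
    return Track.STANDARD_REGULATORY_ANALYSIS


# Example: an automated benefits-eligibility system scored as high risk.
print(route_assessment(uses_automated_decision=True, harm_risk=0.8).value)
```

Because the rule defaults to the standard track, routine proposals never enter the AI-specific regime unless both conditions are met, which mirrors the insulation effect the paragraph describes.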