Are Your Login Habits Selling You Short to Advertisers?

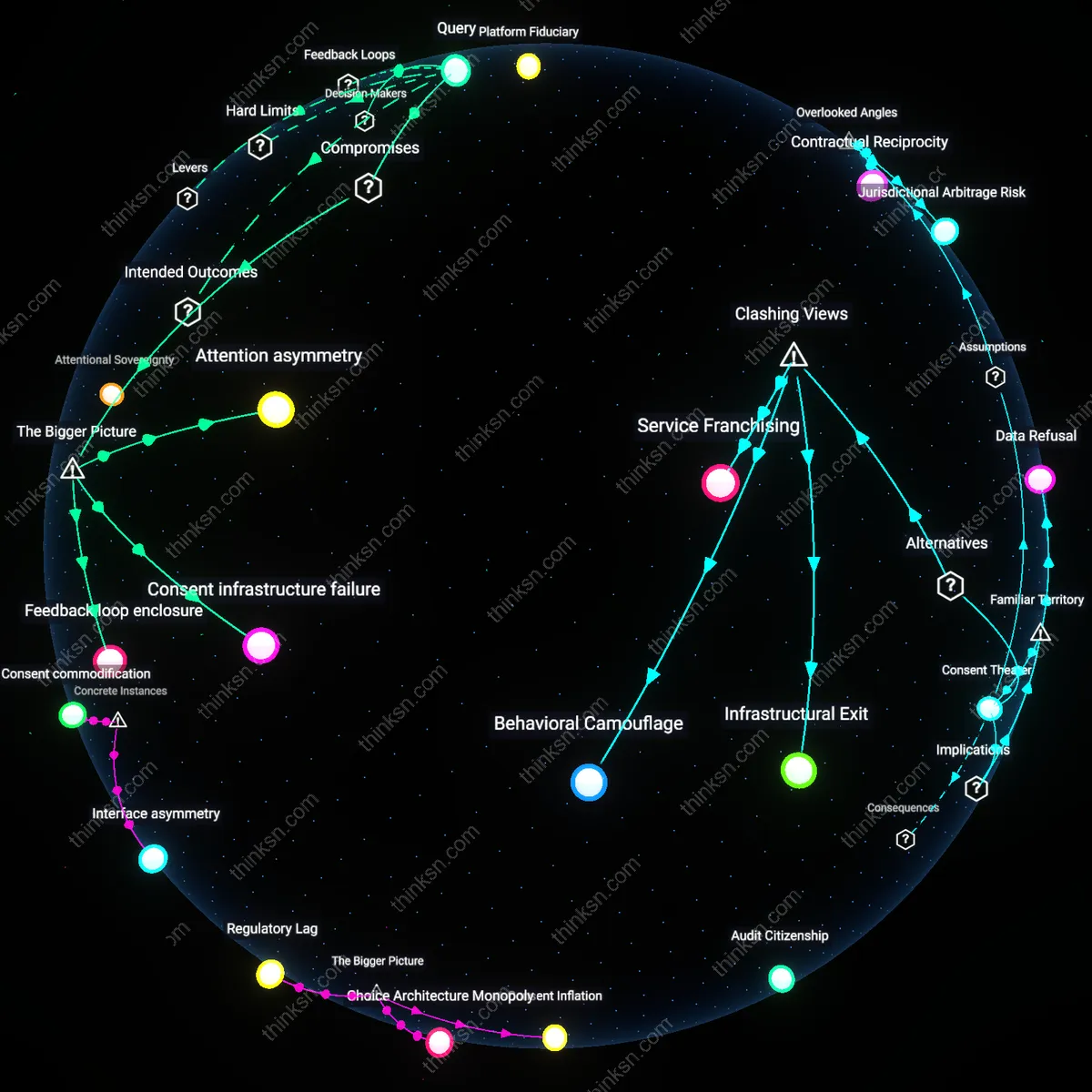

Analysis reveals 6 key thematic connections.

Key Findings

Consent Infrastructure

The Court of Justice of the European Union’s October 2019 decision in *Planet49* held that a pre-checked box cannot constitute valid consent to cookies. It established that valid consent to behavioral tracking requires active, informed user action. The ruling redefined the legal architecture of user autonomy in digital advertising and pushed platforms and publishers across Europe to redesign their consent and onboarding flows. It also revealed that the right to informational self-determination is most compromised not at the point of data use but at the moment of onboarding design, where default settings systematically override deliberative choice.

Attentional Sovereignty

In 2019, Mozilla’s Firefox browser began blocking known third-party tracking cookies by default through its Enhanced Tracking Protection, disrupting covert cross-site behavioral profiling. (Apple’s Safari had introduced a comparable feature, Intelligent Tracking Prevention, in 2017.) The move shifted agency from ad tech intermediaries to end users by treating browsing activity not as exploitable data but as an extension of cognitive privacy. It underscores that the right to mental integrity erodes when algorithms infer behavioral patterns in real time. The Cambridge Analytica scandal is an example: data harvested from Facebook users fed psychographic microtargeting. Such practices work not through overt coercion but through the silent colonization of attentional pathways.

Platform Fiduciary

The Norwegian Data Protection Authority fined the dating app Grindr NOK 65 million in 2021, a fine upheld on appeal in 2023. The fine punished Grindr for sharing users’ GPS location, advertising IDs, and the fact that they used Grindr with advertising partners without valid consent. The case illustrates that personal rights to digital integrity are most compromised when platforms act as unaccountable data brokers rather than custodians of user trust. It exposes a structural imbalance: business models that rely on behavioral login patterns nullify the right to data self-determination unless platforms are held to fiduciary obligations. Litigation inspired by the Norwegian precedent is reportedly testing this principle in Austria and Canada.

Attention Asymmetry

Commercial platforms’ use of behavioral login patterns degrades users’ right to informational self-determination by exploiting habitual digital routines under conditions of attention asymmetry. Platform interfaces are engineered to minimize awareness of data collection during routine access. Login flows optimized for frictionless engagement lean on cognitive offloading and interface monotony, so users neither notice nor contest the surveillance embedded in them. Product teams working toward growth-centric KPIs sustain this dynamic, because slowing logins for consent transparency would reduce session volume and ad revenue. The non-obvious consequence is that rights erode not through overt surveillance but through the strategic suppression of deliberative attention at scale.

Consent Infrastructure Failure

The right to meaningful consent is structurally undermined because behavioral login tracking runs on a failed consent infrastructure. Regulatory frameworks like the GDPR presume discrete, informed opt-ins that can be withdrawn at any time. Platforms, by contrast, deploy tracking as a continuous, ambient function of authentication. Engineering teams integrate login telemetry directly into identity management systems, which makes opting out technically disjointed or penalizes it with worse performance. Meanwhile, legal teams design compliance wrappers that treat consent as a one-time event rather than an ongoing process. This misalignment between legal intent and technical implementation lets platforms comply in form while subverting compliance in function. It shows that systemic incentives favor symbolic rather than operational adherence to user rights.
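The difference between consent as a one-time event and consent as an ongoing process can be sketched in code. This is a minimal sketch built on a hypothetical `ConsentLedger`. Every grant and withdrawal is appended to the ledger, and the most recent decision for each purpose governs which login telemetry is kept. The purpose name `login_analytics` and the telemetry field names are illustrative assumptions, not any platform's actual schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Optional, Tuple

@dataclass
class ConsentLedger:
    """Hypothetical consent record kept as an ongoing process, not a one-time event.

    Every grant or withdrawal is appended; the latest decision per purpose wins.
    """
    events: List[Tuple[datetime, str, bool]] = field(default_factory=list)

    def record(self, purpose: str, granted: bool, at: Optional[datetime] = None) -> None:
        self.events.append((at or datetime.now(timezone.utc), purpose, granted))

    def allows(self, purpose: str) -> bool:
        state = False  # no consent until an explicit grant is on record
        for _, p, granted in sorted(self.events, key=lambda e: e[0]):
            if p == purpose:
                state = granted
        return state

def log_login(ledger: ConsentLedger, telemetry: Dict[str, str]) -> Dict[str, str]:
    """Keep behavioral login telemetry only while consent is currently in force."""
    essential = {k: v for k, v in telemetry.items() if k in {"user_id", "auth_result"}}
    return telemetry if ledger.allows("login_analytics") else essential

ledger = ConsentLedger()
ledger.record("login_analytics", True)
ledger.record("login_analytics", False)  # the user later withdraws
print(log_login(ledger, {"user_id": "u1", "auth_result": "ok", "typing_cadence": "fast"}))
# {'user_id': 'u1', 'auth_result': 'ok'}
```

The design choice is that every check reads the current state of the ledger. A withdrawal therefore takes effect on the next login, instead of being overridden by a consent captured once at sign-up.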

Feedback Loop Enclosure

Individuals struggle to challenge behavioral tracking because feedback loop enclosure neutralizes redress mechanisms. Platforms can convert user behavior, including privacy complaints and opt-out actions, into additional data points that refine targeting models. Customer support interactions, privacy setting changes, and even account deletion attempts may be logged and fed into machine learning systems that segment users by resistance profile. This enables differential treatment that isolates and disempowers privacy-conscious individuals. The result is a control system in which resistance is not blocked but absorbed. That makes the practice resilient to ordinary forms of user agency and turns the assertion of rights into fuel for the very system it opposes.

Deeper Analysis

When platforms make you click through sign-up screens, how often does that extra step actually stop them from tracking your behavior?

Consent Fatigue

Clicking through sign-up screens does little to prevent tracking because users develop consent fatigue, a psychological state in which repeated permission requests erode intentional decision-making. Faced with sequential pop-ups on platforms like Facebook or Google, users often acquiesce automatically, out of cognitive depletion rather than informed consent. This renders the opt-in ritual functionally inert. Privacy policy debates routinely ignore this dynamic and assume users make deliberate trade-offs, when in reality the repeated interruptions themselves undermine the legitimacy of consent. The non-obvious insight is that the very design meant to uphold autonomy systematically exhausts it.

Proxy Compliance

The sign-up screen rarely impedes tracking because companies treat user consent as a legal proxy rather than a behavioral barrier. They rely on a narrow reading of regulatory frameworks like the GDPR that equates compliance with process fulfillment. Firms such as Meta or TikTok can design these flows not to reduce data collection but to produce audit-ready records showing that users ‘clicked accept’. Those records shield them from penalties regardless of whether users were actually aware of what they agreed to. The sign-up screen thus shifts from user protection to institutional risk transfer. Public discourse overlooks this because it focuses on individual choice rather than corporate proceduralism. The real mechanism is regulatory appeasement, not behavioral restraint.

Data Shadowing

Behavioral tracking persists beyond sign-up screens because platforms like YouTube or Instagram can capture interaction data in pre-consent states, such as mouse movements, hover patterns, and partial form inputs, before any permission is granted. These signals can be logged and used to profile users even if they abandon the process. Default data pipelines that treat all user contact as feedstock make this possible. The result is a ‘data shadow’ that forms before formal enrollment and subverts the assumption that tracking begins at consent. The overlooked reality is that even the moment of non-consent generates value, making the sign-up screen a backfill rather than a gate.

Consent Infrastructure

Sign-up screens became pervasive tracking gateways as real-time bidding (RTB), which spread through digital advertising from around 2009, turned user-facing interfaces into standardized on-ramps for data broker networks. Before then, opt-in flows were designed primarily for service access, not behavioral surveillance. The decisive shift came after the GDPR took effect in 2018, when ad tech firms integrated consent management platforms (CMPs) directly into sign-up workflows and made data sharing the default condition of entry. This transformation reveals how regulatory compliance mechanisms like the GDPR were co-opted to systematize tracking rather than restrict it, turning privacy safeguards into operational components of data extraction pipelines.

Behavioral Taxation

The proliferation of sign-up screens as tracking funnels accelerated as the mobile app ecosystem matured after 2012. Platform dominance shifted from web browsers to the more closed environments of iOS and Android, where tracking was built into the operating system itself. Identifiers such as Apple’s IDFA (introduced in 2012) and Google’s Android Advertising ID (2013) made this possible. In this regime, sign-up steps no longer function as barriers but as calibration points for probabilistic identity models that operate regardless of user input. Device telemetry and app usage patterns generate behavioral metadata before consent is given. Tracking thus shifts from active data collection to passive systemic monitoring, leaving in-interface opt-outs that are technically redundant but legally necessary.

Interface Commodification

Sign-up screens evolved into tracking enablers less through user deception than through the financialization of user attention between 2008 and 2013. In that period, venture capital metrics demanded rapid monetization of user bases and pressured platforms to treat every UI interaction as a data event. Before this period, registration flows were largely isolated from analytics. Afterward, they were instrumented by default with session replay, heatmapping, and funnel analytics, so that even abandoned sign-ups fed machine learning models. The transition shows how interface elements became financial assets, valued not for usability but for their capacity to generate predictive behavioral data regardless of user intent.

Consent Theater

Click-through sign-up screens fail to prevent tracking because they simulate user control without altering data collection architectures. The enforcement gap under the EU ePrivacy Directive after the GDPR took effect in 2018 illustrates this. Sites relied on pre-checked boxes and asymmetric designs that made refusing non-essential cookies harder than accepting them. That asymmetry led France’s CNIL to fine Google €150 million and Facebook €60 million in decisions issued at the end of 2021. Designing opt-outs as procedurally burdensome while normalizing opt-ins makes regulatory compliance performative rather than operational. The screen becomes a ritual of plausible deniability rather than a functional barrier. What is underappreciated is that the legal requirement to display consent screens inadvertently legitimized tracking by framing it as voluntary, even when refusal yields no measurable change in surveillance behavior.

Fingerprinting Redundancy

Behavioral tracking persists past sign-up screens because companies can deploy device fingerprinting techniques that bypass consent entirely. These techniques collect device-specific attributes such as screen resolution, installed fonts, and battery status to reconstruct user identities without storing cookies or requesting permission. This backend mechanism operates independently of user interface choices. Combinations of system configurations are often unique, so they can re-identify users across sessions, and even a complete refusal at the sign-up screen cannot prevent linkage. The non-obvious insight is that this tracking infrastructure does not depend on user action at all; it exploits system-level signals that exist outside the consent paradigm.
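A minimal sketch can illustrate the core idea of fingerprinting. It uses a hypothetical `device_fingerprint` helper that hashes a handful of attribute values into an identifier. Real fingerprinting scripts gather many more signals and typically use probabilistic matching rather than an exact hash. The attribute names and values here are invented for illustration.

```python
import hashlib
import json
from typing import Dict

def device_fingerprint(attributes: Dict[str, object]) -> str:
    """Derive a cookie-free identifier from device attributes (illustrative only).

    Identical attribute values always produce the same ID, so a returning
    device can be recognized across sessions without storing anything on it.
    """
    # Sorted keys make the hash independent of the order attributes were collected in.
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]

# Volatile signals such as battery level are left out here because they change
# between sessions; an exact hash only works on attributes that stay stable.
session_1 = {"screen": "2560x1440", "fonts": ["Arial", "Fira Code", "Noto Sans"], "timezone": "Europe/Oslo"}
session_2 = {"timezone": "Europe/Oslo", "screen": "2560x1440", "fonts": ["Arial", "Fira Code", "Noto Sans"]}
print(device_fingerprint(session_1) == device_fingerprint(session_2))  # True
```

No cookie or consent prompt appears anywhere in this flow. The identifier is recomputed from the device on every visit, which is why refusing at the sign-up screen does not break the linkage.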

Ecosystem Lock-in

Sign-up friction fails to curtail tracking because dominant platforms like Meta extend their identity systems across third-party websites and apps through tools like Facebook Login, the Meta Pixel, and the Facebook SDK. These tools can log behavior even when users never sign up. Privacy International reported in 2018 that many Android apps it tested sent data to Facebook through the SDK as soon as they were opened, before any login. The Pixel’s standard installation also records a page view as soon as a tagged page loads. This networked surveillance relies on pre-consent data harvesting: mere exposure to a Pixel-tagged site sends telemetry into Meta’s data infrastructure, making the sign-up step irrelevant to data capture. The overlooked reality is that user attention at the interface layer matters less than the silent data pipelines established by platform-mandated integration standards.

Friction Illusion

The sign-up click-through rarely impedes tracking because the delay it introduces is designed to feel insignificant to users. Meanwhile, it still gives backend systems time to set cookies, run fingerprinting scripts, and start event loggers before consent is given. Sites that embed tags from companies like Google and Meta can fire tracking calls during pre-consent page loads, harvesting data in the milliseconds between screens. Behavioral capture therefore begins before the choice is made, making the extra step functionally decorative. Most people assume the pause grants control; in reality, it masks preemptive data capture built into platform architecture.
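The timing problem can be sketched as follows, using a hypothetical `EventDispatcher` and invented event names. An ungated dispatcher sends events from the moment the page loads. A gated one holds them until the user decides and discards them on refusal. This is a simplified model, not a description of how any particular vendor's tag library works.

```python
from enum import Enum
from typing import Dict, List

class Consent(Enum):
    PENDING = "pending"
    GRANTED = "granted"
    DENIED = "denied"

class EventDispatcher:
    """Illustrative tag dispatcher contrasting pre-consent firing with consent gating."""

    def __init__(self, gate_on_consent: bool) -> None:
        self.gate_on_consent = gate_on_consent
        self.consent = Consent.PENDING
        self.sent: List[Dict[str, str]] = []    # what reaches the tracking endpoint
        self._queue: List[Dict[str, str]] = []  # held back while consent is pending

    def track(self, event: Dict[str, str]) -> None:
        if not self.gate_on_consent or self.consent is Consent.GRANTED:
            self.sent.append(event)             # fires immediately
        elif self.consent is Consent.PENDING:
            self._queue.append(event)           # wait for the user's decision
        # Consent.DENIED with gating: the event is dropped

    def set_consent(self, decision: Consent) -> None:
        self.consent = decision
        if decision is Consent.GRANTED:
            self.sent.extend(self._queue)       # release held events only on a grant
        self._queue.clear()

for gated in (False, True):
    d = EventDispatcher(gate_on_consent=gated)
    d.track({"event": "PageView"})
    d.track({"event": "SignupFormFocus"})
    d.set_consent(Consent.DENIED)               # the user refuses
    print(gated, len(d.sent))
# False 2
# True 0
```

In the ungated case, the refusal arrives after both events have already been sent. That is the sense in which the pause before the choice is decorative.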

Compliance Budget

Platforms absorb the cost of extra sign-up steps mainly where jurisdiction-specific regulations require them. This suggests that tracking reduction depends less on user interaction than on how intensively regulators enforce. In the EU, GDPR fines can reach 4% of a company’s worldwide annual turnover. There, companies implement opt-in screens not as privacy features but as cost-minimizing adaptations to avoid penalties. These implementations are scoped to the minimum necessary, preserving cross-site tracking through loopholes such as claims of ‘legitimate interest’ or third-party partnerships. The underappreciated point is that the click-through exists because legal risk, not user preference, sets the limits of tracking infrastructure.

Explore further:

- How did repeated consent prompts gradually stop working as a real choice for people online?

- If sign-up screens now serve as the main entry point for data collection, how much of that tracking could be avoided without losing access to essential services?

- If the consent screen is just performative, what would a real chance to opt out actually look like for someone who wants to use the service but not be tracked?

How did repeated consent prompts gradually stop working as a real choice for people online?

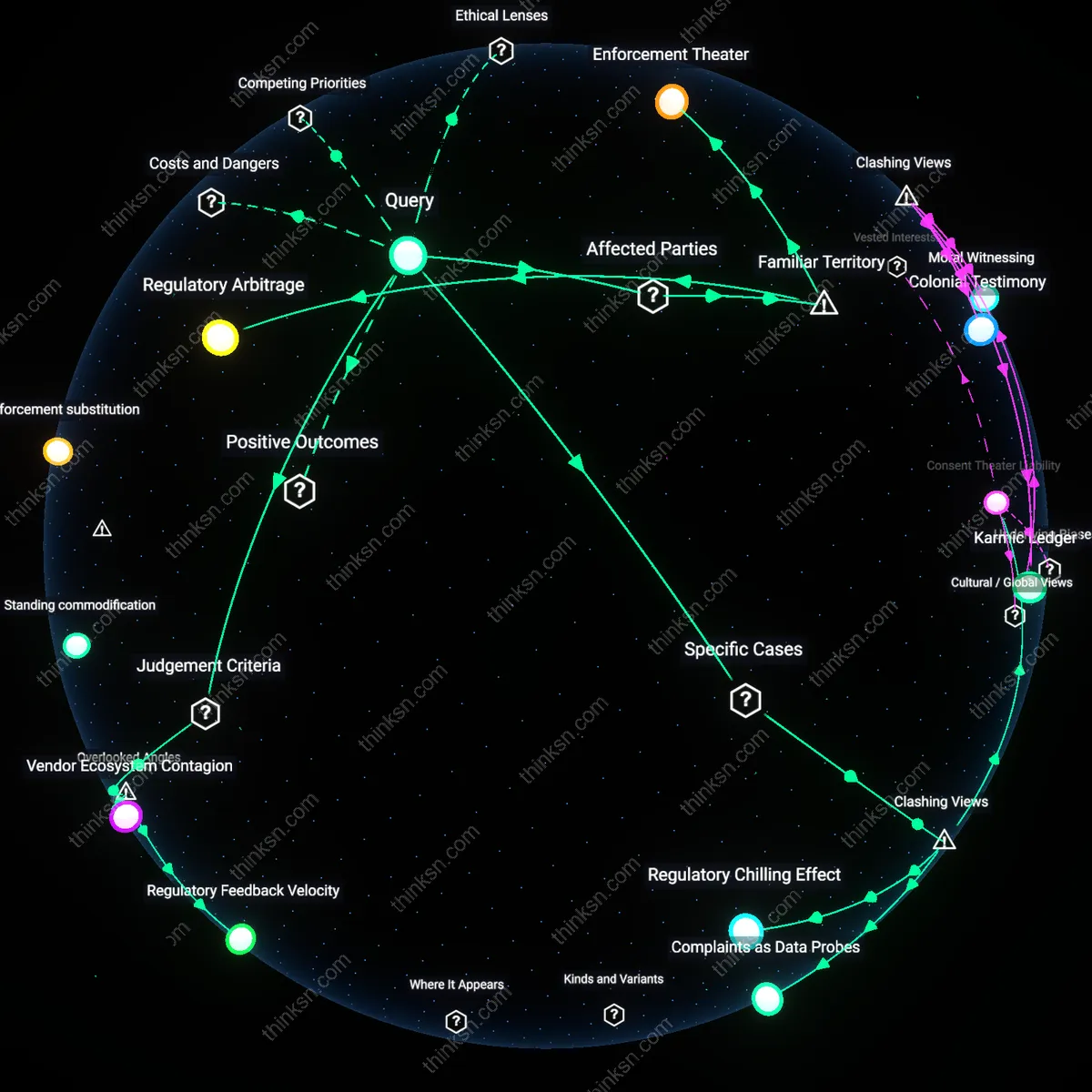

Regulatory Lag

Standardized consent banners rolled out after the GDPR on the assumption that users would exercise stable, static control. Regulators, however, were slow to act against evolving design abuses. Platforms exploited the gap by iteratively optimizing dark patterns, such as nested menus and deceptive button hierarchies, that made withdrawing consent harder than granting it. The GDPR requires withdrawal to be as easy as giving consent, yet these patterns effectively neutralized user choice. Because enforcement moved on fixed timelines while interface design evolved in real time, compliance became performative rather than functional. Rule-based governance, in other words, struggles to keep pace with behavioral engineering at scale.

Choice Architecture Monopoly

Dominant platforms folded consent into onboarding flows so thoroughly that refusal began to block core functionality, turning permission into a tollgate for access. They centralized digital identity and service dependencies, as with Facebook Login or the Google Account ecosystem. As a result, rejecting tracking meant rejecting participation, not just protecting privacy. The non-obvious consequence is that consent stopped being about data use and became about admission to social and economic life, making refusal existentially costly rather than merely inconvenient.

Consent Inflation

As third-party tracking libraries became modular and interchangeable, websites deployed consent managers that requested assent not just per site but per vendor, sometimes listing dozens of trackers individually. This fragmentation turned privacy decisions into a cognitive burden so heavy that users defaulted to acceptance out of exhaustion rather than desire. The systemic shift was from informed consent to desensitization: the very repetition meant to ensure choice became the mechanism that eroded it.
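A back-of-the-envelope model shows how quickly per-vendor consent multiplies the decisions asked of a user. The function and the figures below (20 sites, 40 vendors per site, 5 purposes per vendor) are hypothetical assumptions chosen for illustration, not measurements.

```python
def consent_decisions(sites: int, vendors_per_site: int, purposes_per_vendor: int) -> int:
    """Number of individual toggles a user would face to decide everything granularly.

    Illustrative model: assumes every site asks separately about every vendor
    and every purpose, and that no choice carries over between sites.
    """
    return sites * vendors_per_site * purposes_per_vendor

# Hypothetical browsing week: 20 sites, 40 vendors each, 5 purposes per vendor.
print(consent_decisions(20, 40, 5))  # 4000
# The same week if consent were a single choice per site.
print(consent_decisions(20, 1, 1))   # 20
```

Under these assumed numbers, granular consent asks for 4,000 decisions where a single per-site choice would ask for 20. The gap grows multiplicatively with each added vendor or purpose.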

Consent Fatigue

The GDPR took effect in the European Union in 2018 and raised the bar for valid consent. Combined with the ePrivacy Directive’s rules on cookies, it prompted the deployment of nearly identical pop-up banners across thousands of websites, such as those of The Guardian, Deutsche Bahn, and Le Monde. Users came to treat consent as a routine gatekeeping hurdle rather than a meaningful decision. The repetition of functionally identical prompts across unrelated domains conditioned them to click ‘accept’ reflexively, turning consent into a performative ritual. This systemic desensitization shows how structural ubiquity, rather than individual design flaws, eroded agency. It undermined the regulation’s democratic intent through cognitive overload and behavioral automation.

Interface Asymmetry

Google’s ‘Privacy Checkup’ tool, introduced in the mid-2010s, presented users with a streamlined dashboard of limited data-sharing toggles. Meanwhile, collection through background settings such as Location History and Web & App Activity continued by default unless users disabled it through nested menus. The interface offered the appearance of control but embedded defaults that preserved data extraction, privileging corporate operational continuity over user autonomy. A 2018 Associated Press investigation exposed the consequences of this design logic. It found that Google still stored location data through Web & App Activity even when users had paused Location History. The case demonstrates how interface architecture can hollow out consent by misaligning user effort with data consequences.

Consent Commodification

IAB Europe launched version 2.0 of its Transparency and Consent Framework (TCF) in 2020, with technical specifications maintained by IAB Tech Lab, and many major publishers adopted it. The framework enabled advertisers to treat user consent signals as transferable, machine-readable tokens within real-time bidding ecosystems. Consent was no longer a personal boundary but a standardized data element processed by ad tech companies like Quantcast and Criteo, reducing user choice to a binary input in automated markets. This shift was central to the Belgian Data Protection Authority’s February 2022 ruling that the TCF breached the GDPR. It reveals how consent became a logistical component of data supply chains, repurposed to certify compliance rather than reflect intention.
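A toy sketch shows what it means for consent to travel as a machine-readable token. It assumes a hypothetical format in which each purpose is a single bit and the bitfield is base64url-encoded. The TCF's actual TC string also packs consent into a base64url-encoded bitfield, but it carries many more fields (vendor lists, timestamps, CMP identifiers) than this illustration models.

```python
import base64
from typing import List

def encode_consent(purpose_consents: List[bool]) -> str:
    """Pack yes/no choices (one bit per purpose) into a base64url token. Toy format only."""
    bits = "".join("1" if c else "0" for c in purpose_consents)
    bits += "0" * (-len(bits) % 8)  # pad to whole bytes
    if not bits:
        return ""
    raw = int(bits, 2).to_bytes(len(bits) // 8, "big")
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")

def decode_consent(token: str, n_purposes: int) -> List[bool]:
    """Recover the first n_purposes choices from a token made by encode_consent."""
    raw = base64.urlsafe_b64decode(token + "=" * (-len(token) % 4))
    bits = "".join(f"{byte:08b}" for byte in raw)
    return [b == "1" for b in bits[:n_purposes]]

choices = [True, False, True, True, False, False, False, False, False, True]
token = encode_consent(choices)
print(token)                                           # sEA
print(decode_consent(token, len(choices)) == choices)  # True
```

Once encoded, the user's decision is just a short string that any party in the bidding chain can read and forward. This is the sense in which consent becomes a logistical component of the supply chain rather than a boundary the user controls.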

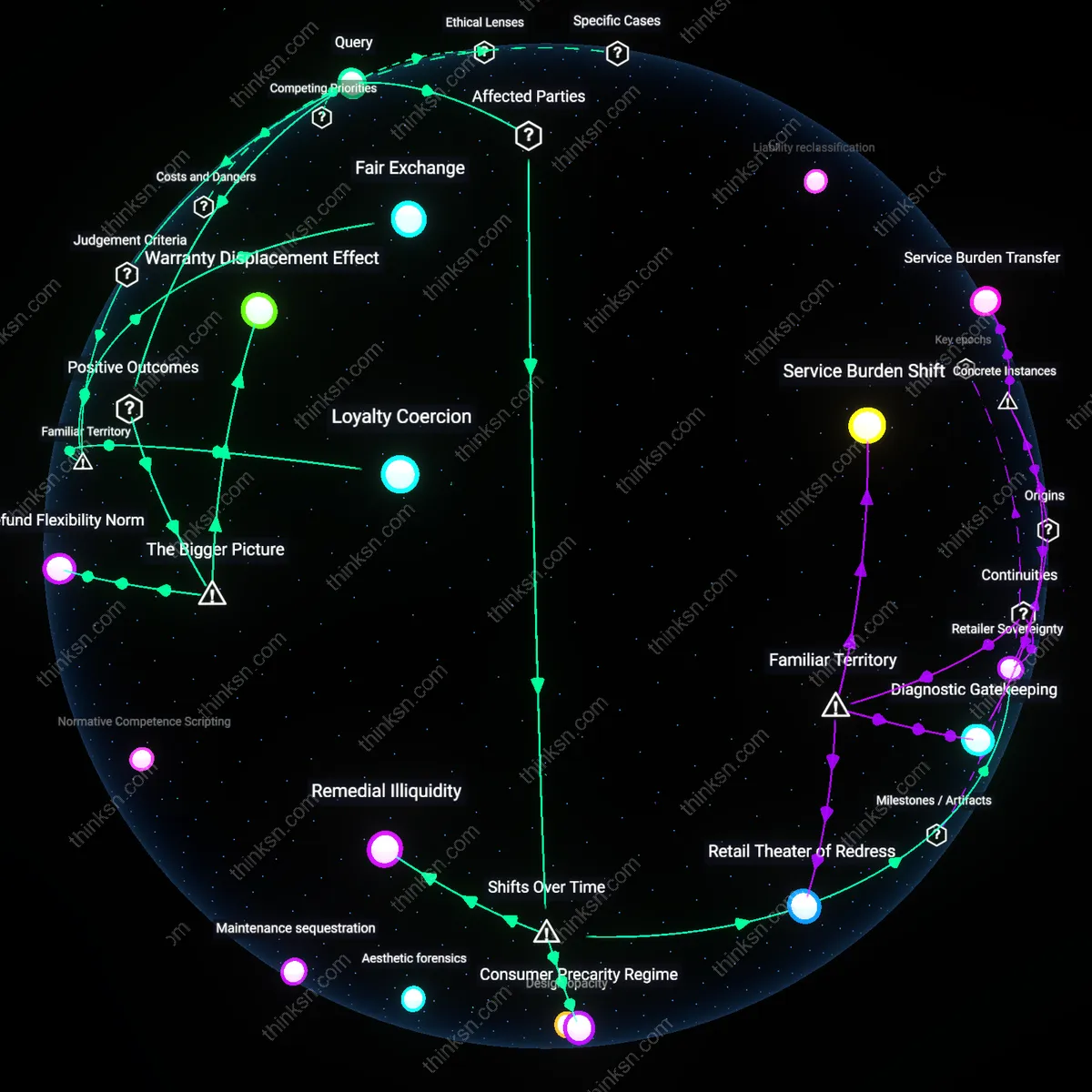

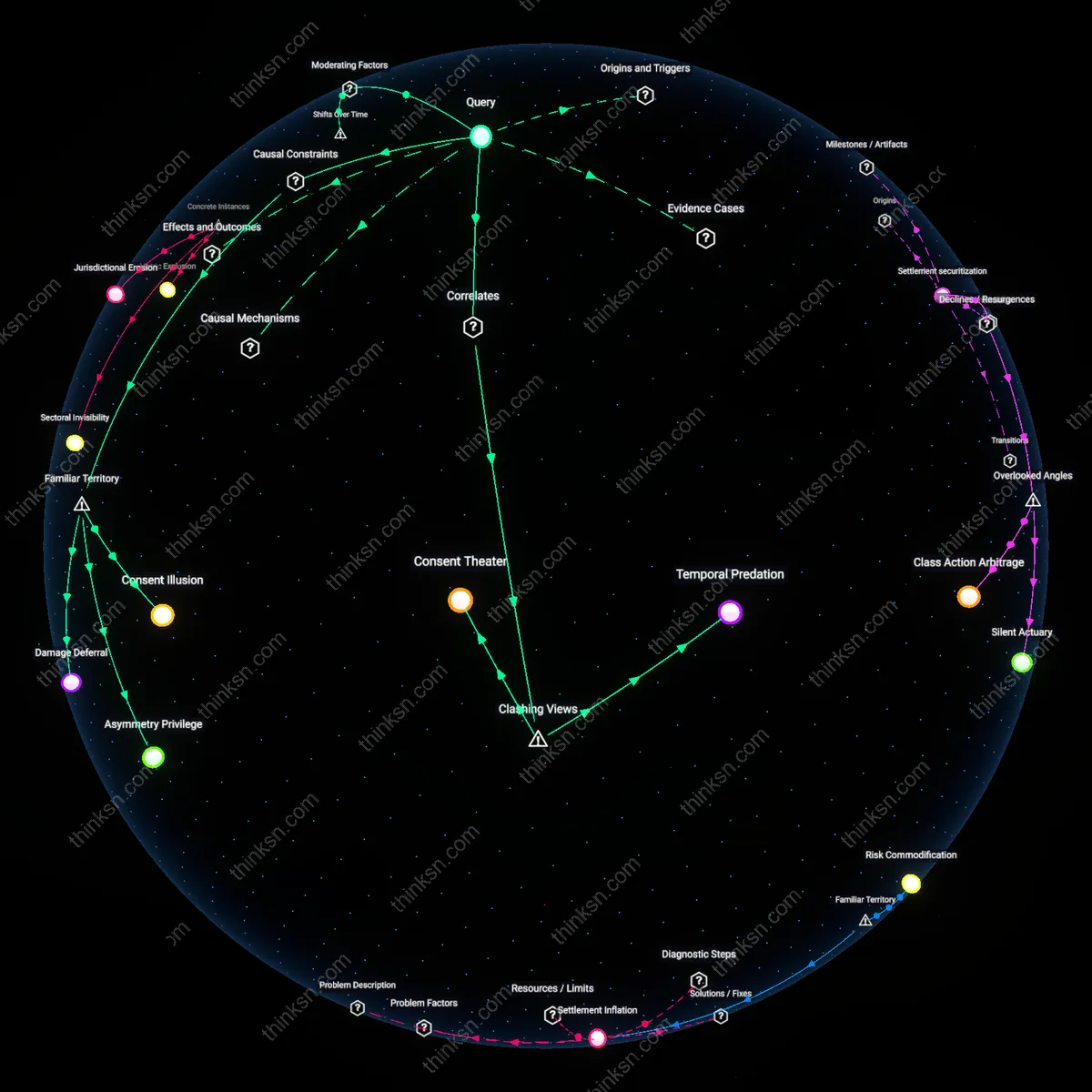

If the consent screen is just performative, what would a real chance to opt out actually look like for someone who wants to use the service but not be tracked?

Consent Infrastructure

A real chance to opt out would require legally mandated, interoperable privacy layers built into core service protocols before user enrollment, rather than at consent points. Regulatory pressure has pushed some movement in this direction, for example the expanded privacy controls Google built into Android by 2021. Such changes shift consent from a moment of choice toward a systemic design criterion and show how regulatory timelines can reconfigure technical possibility. The non-obvious shift is that opt-out viability depends less on individual interface design than on whether data pathways are structurally partitionable from the outset.

Behavioral Dividend

A genuine opt-out would give users tangible incentives, such as faster load times, monetary rebates, or premium features, in exchange for accepting reduced personalization. Today, users who opt out are instead downgraded to inferior service tiers. Prevailing ‘pay or okay’ models invert the incentive logic. Meta’s ad-free subscription for Facebook and Instagram, introduced in the EU in 2023, charges users to avoid advertising based on their data, framing privacy as a forfeiture rather than a reciprocal exchange. The overlooked point is that opt-out stops being performative only when disengagement generates measurable, immediate user benefit, transforming privacy from absence into accrual.

Audit Citizenship

Real opt-out power materializes when users gain standing to verify and challenge data use through public, algorithmic audits, not merely to reject tracking. Elements of this model are being written into law. The EU’s Digital Services Act requires very large online platforms to undergo independent audits and to give vetted researchers access to their data. This creates proxy accountability even for individuals who remain users. The critical, under-recognized evolution is that opting out becomes not a solitary, binary act but a collective right enacted through institutional verification. Privacy persists not through avoidance but through enforceable visibility.

Contractual Reciprocity

A genuine opt-out would exist only if users could negotiate a binding, auditable data exchange contract. The contract would specify what data is collected, how long it is retained, and what users receive in return, such as a discounted subscription or ad-free access. It would be enforceable through automated legal instruments like smart contracts on decentralized networks. This shifts the dynamic from unilateral corporate policy to bilateral agreement. The mechanism is familiar from procurement markets but absent in consumer tech, where enforcement relies on regulatory oversight that lacks timeliness and scalability. The overlooked dimension is that consent fails not because users don’t care but because there is no counter-performance. Real choice emerges only when users offer something (for example, data) and receive something specific in return, making privacy a transactional equity rather than an illusion of control.
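The ‘auditable’ part of such a contract can be sketched as a data structure plus a compliance check. The sketch assumes hypothetical `DataContract` and `StoredRecord` types and invented field names. It models the terms and their verification only. Enforcing them through smart contracts would require a specific platform that the paragraph does not name and this sketch does not attempt.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import FrozenSet, List

@dataclass(frozen=True)
class DataContract:
    """Hypothetical machine-checkable data exchange agreement between a user and a service."""
    permitted_fields: FrozenSet[str]  # what may be collected
    retention_days: int               # how long it may be kept
    consideration: str                # what the user receives in return

@dataclass(frozen=True)
class StoredRecord:
    field_name: str
    collected_on: date

def violations(contract: DataContract, records: List[StoredRecord], today: date) -> List[str]:
    """List every stored record that falls outside the contract's terms."""
    problems = []
    for r in records:
        if r.field_name not in contract.permitted_fields:
            problems.append(f"{r.field_name}: not covered by the contract")
        elif today - r.collected_on > timedelta(days=contract.retention_days):
            problems.append(f"{r.field_name}: retained past {contract.retention_days} days")
    return problems

contract = DataContract(frozenset({"email", "plan"}), retention_days=90,
                        consideration="20% subscription discount")
records = [
    StoredRecord("email", date(2024, 1, 10)),
    StoredRecord("plan", date(2023, 9, 1)),
    StoredRecord("login_times", date(2024, 3, 1)),
]
for problem in violations(contract, records, today=date(2024, 3, 15)):
    print(problem)
# plan: retained past 90 days
# login_times: not covered by the contract
```

Because the terms are explicit, an auditor or the user can mechanically list breaches instead of interpreting a privacy policy. The `consideration` field records the counter-performance that the paragraph argues is missing from today's consent.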

Jurisdictional Arbitrage Risk

A meaningful opt-out would let users route their access through a jurisdiction with enforceable privacy protections, creating a real exit option via digital geography. The service provider would then have to comply with local data minimization laws, such as the GDPR as enforced in Austria or China’s PIPL, even if the user resides elsewhere. Some services already exploit regulatory asymmetries this way. Proton, for example, bases its email and VPN services in Switzerland and markets Swiss privacy law as part of its privacy-by-design offering. It places data processing where legal penalties for noncompliance are high and adjudication is independent. The underappreciated reality is that privacy is not just a product feature but a legal spatial condition. True opt-out exists only when users can invoke the coercive power of foreign law, turning jurisdiction itself into a user-controlled variable that breaks platform impunity.

Infrastructural Exit

A real chance to opt out would take the form of user-controlled data conduits that route service requests through third-party privacy layers, such as decentralized identity relays operated by municipal broadband authorities. These conduits would give access to platform functionality without surrendering behavioral telemetry to corporate APIs. The mechanism shifts tracking power from platform operators to local network stewards who are legally bound to user privacy rather than engagement metrics. The result is an infrastructural rather than interface-based form of refusal. Its non-obviousness lies in rejecting the assumption that opt-out must occur within the platform’s own UI, locating agency instead in network architecture and public utility governance.
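At its simplest, the relay idea amounts to forwarding only what a request needs to be served. This is a minimal sketch with a hypothetical `relay` function and an invented allow-list of functional fields. A real relay would also have to handle network identifiers, transport, and authentication, which this does not model.

```python
from typing import Dict, FrozenSet

# Hypothetical allow-list of fields a service genuinely needs to fulfil a request.
FUNCTIONAL_FIELDS: FrozenSet[str] = frozenset({"endpoint", "query", "session_token"})

def relay(request: Dict[str, str], allowed: FrozenSet[str] = FUNCTIONAL_FIELDS) -> Dict[str, str]:
    """Forward only functional fields; behavioral telemetry never leaves the relay."""
    return {k: v for k, v in request.items() if k in allowed}

outgoing = relay({
    "endpoint": "/search",
    "query": "bike repair",
    "session_token": "abc123",
    "scroll_depth": "0.8",     # behavioral telemetry: dropped
    "device_model": "Pixel 7", # device attribute: dropped
})
print(outgoing)  # {'endpoint': '/search', 'query': 'bike repair', 'session_token': 'abc123'}
```

An allow-list is used rather than a block-list so that any new telemetry field a platform adds is dropped by default instead of slipping through. The relay cannot hide what the functional fields themselves reveal, such as the query text.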

Behavioral Camouflage

Effective opt-out would require users to generate synthetic behavioral noise indistinguishable from genuine engagement. Adversarial AI agents operating under regulated anonymity protocols would produce this noise, so that platforms receive data streams too polluted to yield meaningful profiles while still granting service access. This approach treats tracking not as a breach of consent but as a signal-detection problem, in which privacy emerges through statistical ambiguity rather than withdrawal. It is provocative because it refuses the premise that transparency or honesty underpins consent and instead turns deception into a legitimate defense against surveillance infrastructure.
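A toy sketch can make the signal-detection framing concrete. It uses a hypothetical `camouflage` function that mixes each genuine interaction with decoys drawn uniformly from a topic catalog. A crude `top_interest_share` metric stands in for a profiler. The sketch uses simple random sampling, not the adversarial AI agents the paragraph envisions, and the topics are invented.

```python
import random
from collections import Counter
from typing import List

def camouflage(genuine: List[str], catalog: List[str], decoys_per_event: int,
               rng: random.Random) -> List[str]:
    """Interleave each genuine interaction with decoys drawn uniformly from a catalog."""
    stream: List[str] = []
    for event in genuine:
        batch = [event] + [rng.choice(catalog) for _ in range(decoys_per_event)]
        rng.shuffle(batch)  # hide which position holds the genuine event
        stream.extend(batch)
    return stream

def top_interest_share(stream: List[str]) -> float:
    """Share of the stream taken by the most frequent topic (a crude profiling signal)."""
    counts = Counter(stream)
    return counts.most_common(1)[0][1] / len(stream)

rng = random.Random(7)
catalog = ["cooking", "cycling", "finance", "gardening", "gaming", "travel", "health", "music"]
genuine = ["health"] * 20  # a user whose real interest is sensitive
print(top_interest_share(genuine))  # 1.0
noisy = camouflage(genuine, catalog, decoys_per_event=9, rng=rng)
print(round(top_interest_share(noisy), 2))
```

In this setup, uniform decoys cut the dominant topic's expected share from 1.0 to about 0.21, yet the genuine interest usually remains the most frequent topic. Naive noise dilutes a profile without erasing it, which is why the requirement that noise be indistinguishable from genuine engagement is the demanding part of the proposal.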

Service Franchising

A genuine opt-out would exist only if regulators licensed competing proxy providers to deliver core digital services, such as search, social feeds, or messaging, without data extraction. These providers would maintain full API compatibility with dominant platforms, so users could reach Facebook or Google functions through privacy-preserving intermediaries certified by digital rights authorities. This reframes opt-out as a structural market alternative rather than a personal choice, undermining the myth that individual consent can function within monopolistic architectures. The underappreciated insight is that real privacy requires breaking the link between service identity and data ownership, treating functionality as separable from its original corporate container.

Consent Theater

Consent screens that require acceptance as a condition of service do not offer real choice. They mimic autonomy while embedding tracking in the functional core of platforms like Google or Facebook, making refusal a practical impossibility for most users. The non-obvious truth beneath the familiar complaint about ‘clickwrap’ agreements is that these interfaces are designed to absorb dissent without altering the outcome. They simulate ethical compliance while neutralizing it. This reveals that the dominant public understanding of ‘opting out’ was never about withdrawal but about ritualized permission-giving.

Data Refusal

A genuine opt-out would take the form of collective refusal enabled by accessible, standardized data withdrawal tools that persist across platforms. The GDPR’s right to erasure envisions such tools, but they are rarely implemented in practice. This shifts agency from the individual click to systemic infrastructure. Mechanisms like Apple’s App Tracking Transparency prompt would become baseline requirements across platforms rather than one vendor’s policy. Since iOS 14.5, that prompt has required apps to ask permission before tracking users across other companies’ apps and websites. The overlooked implication of widespread frustration over ‘dark patterns’ is that real refusal is not a one-time choice but a sustained, interoperable condition. That condition challenges the familiar narrative of personal responsibility by foregrounding enforceable communal standards over isolated consent.
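Global Privacy Control (GPC) is one existing example of a standardized, cross-site refusal signal. Browsers and extensions send it as the HTTP request header `Sec-GPC: 1`. The sketch below shows how a server might honor it, assuming a plain dictionary of request headers and hypothetical tag names. It is not tied to any web framework, and which legal obligations the signal triggers depends on jurisdiction.

```python
from typing import List, Mapping

def gpc_opt_out(headers: Mapping[str, str]) -> bool:
    """True if the request carries a Global Privacy Control signal (Sec-GPC: 1).

    HTTP header names are case-insensitive, so they are compared lowercased.
    """
    for name, value in headers.items():
        if name.lower() == "sec-gpc" and value.strip() == "1":
            return True
    return False

def tags_to_load(headers: Mapping[str, str]) -> List[str]:
    """Choose which page tags to load; tracking tags are skipped under GPC."""
    essential = ["session"]
    tracking = ["ad_pixel", "cross_site_analytics"]  # hypothetical tag names
    return essential if gpc_opt_out(headers) else essential + tracking

print(tags_to_load({"Sec-GPC": "1"}))  # ['session']
print(tags_to_load({}))                # ['session', 'ad_pixel', 'cross_site_analytics']
```

The signal is set once in the browser and sent with every request. Refusal therefore persists across sites instead of being renegotiated at each banner, which is the kind of sustained, interoperable condition the paragraph calls for.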