Is Individual Digital Responsibility Futile Against Data Brokers?

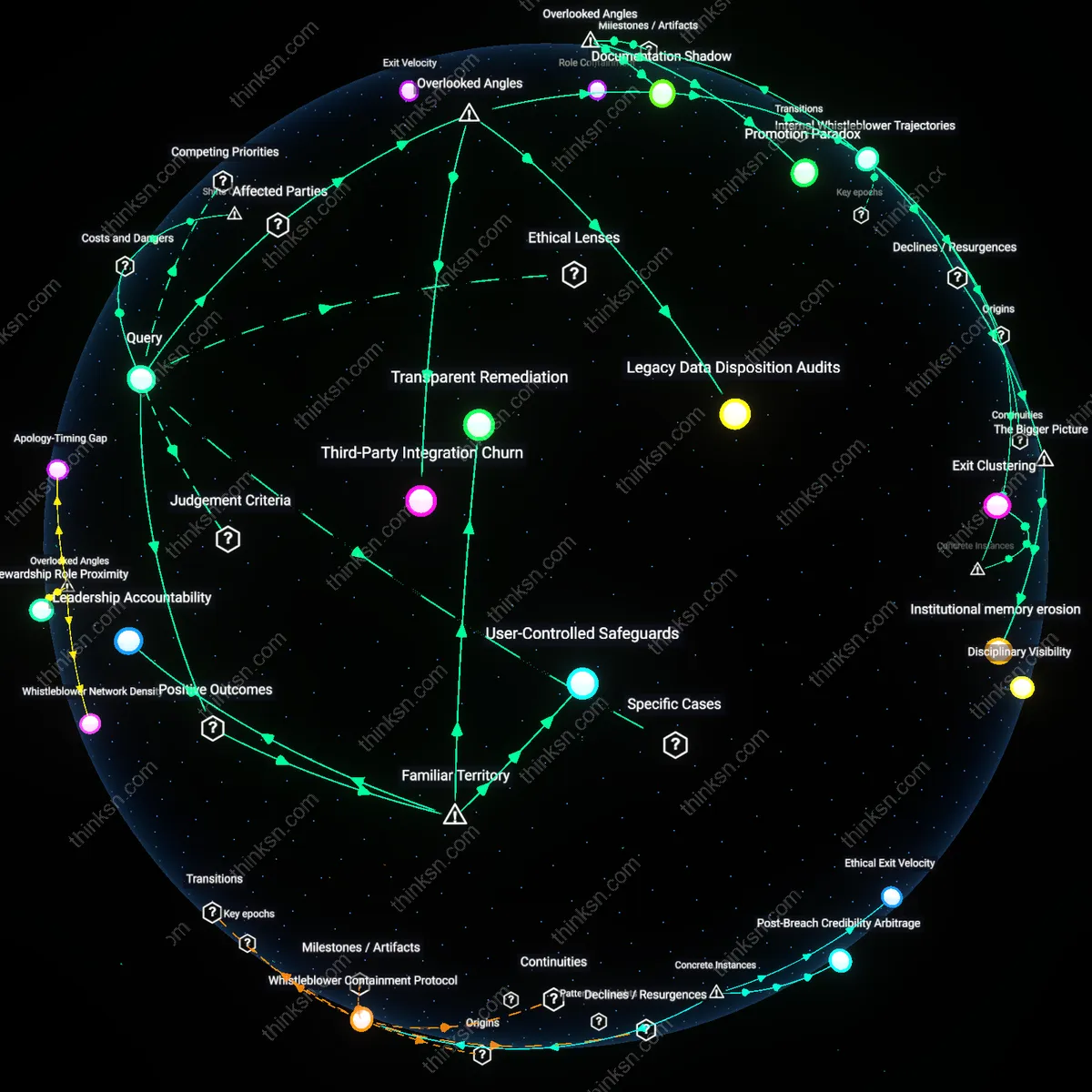

Analysis reveals 9 key thematic connections.

Key Findings

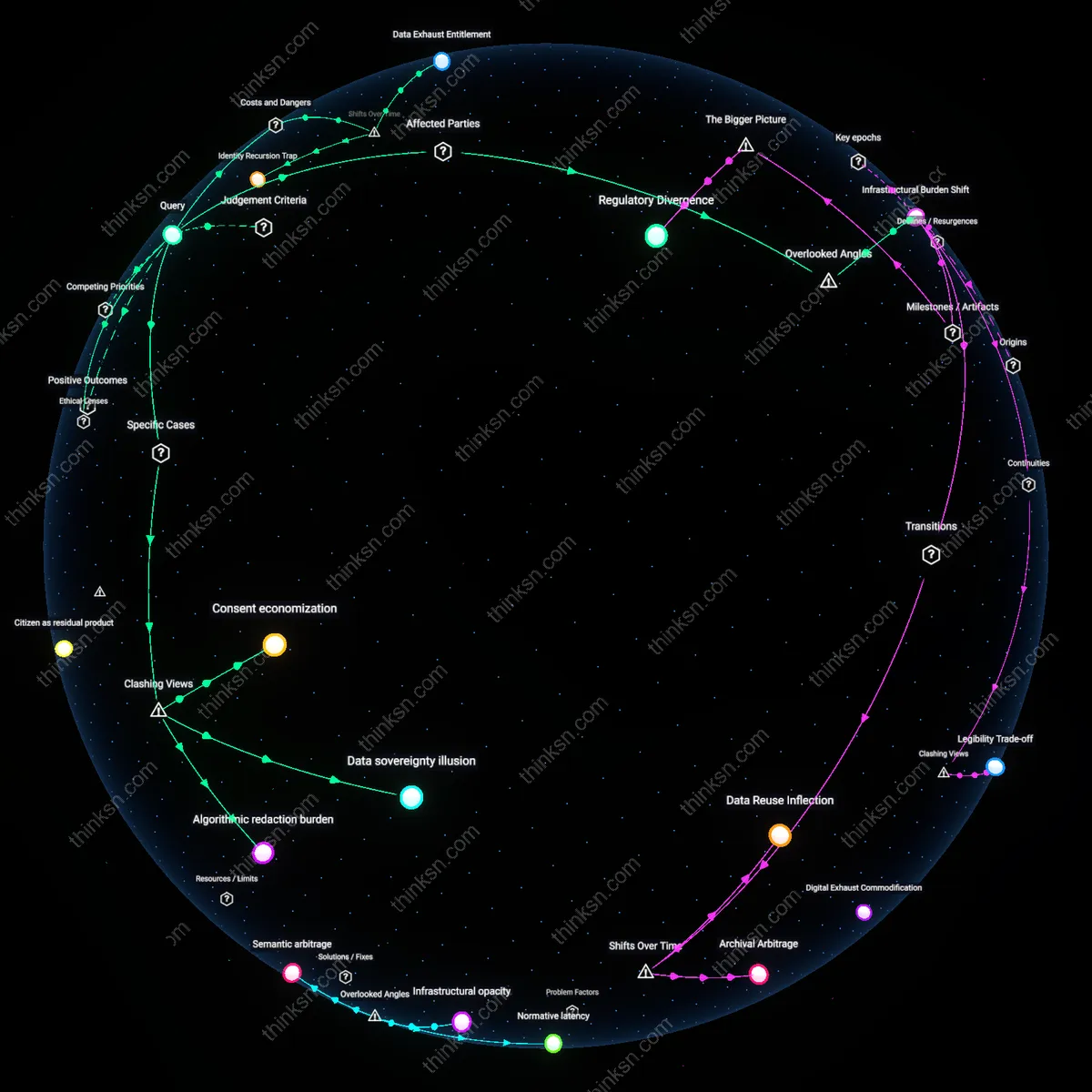

Infrastructural Burden Shift

Individuals cannot reasonably be held responsible for protecting their digital identity because data brokers exploit automated, cross-jurisdictional data ingestion pipelines that continuously harvest public data from civic infrastructure like property records, court filings, and business registrations. These systems were designed for public accountability, not personal data aggregation, yet they feed into commercial identity reconstruction with no individual consent or opt-out mechanism. The non-obvious reality is that responsibility is structurally displaced onto users while the actual data supply chain operates through legacy public institutions that neither control nor anticipate this reuse, obscuring liability and rendering personal vigilance futile.
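The cross-jurisdictional linkage described above can be sketched in a few lines. This is a minimal illustration that assumes exact matching on a normalized name. The record layouts, field names, and people below are hypothetical. Real broker pipelines are not public and likely use fuzzier matching on more attributes.

```python
import re
from collections import defaultdict

# Hypothetical public-record extracts from different jurisdictions.
# Every name, field, and value here is invented for illustration.
PROPERTY_RECORDS = [
    {"owner": "Smith, Jane A.", "address": "12 Oak St", "county": "Cook"},
    {"owner": "DOE, JOHN", "address": "400 Elm Ave", "county": "DuPage"},
]
COURT_FILINGS = [
    {"party": "Jane A Smith", "case": "2019-CV-0001"},
    {"party": "John Doe", "case": "2021-SC-0420"},
]
BUSINESS_REGISTRATIONS = [
    {"agent": "jane a. smith", "entity": "Oak Street Consulting LLC"},
]


def normalize_name(raw: str) -> str:
    """Canonicalize 'Last, First M.' and 'First M Last' into 'first m last'."""
    raw = raw.lower()
    if "," in raw:
        last, first = (part.strip() for part in raw.split(",", 1))
        raw = f"{first} {last}"
    raw = re.sub(r"[^a-z ]", "", raw)  # drop punctuation such as periods
    return " ".join(raw.split())       # collapse repeated whitespace


def link_records() -> dict:
    """Merge every source into one profile per normalized name (naive exact-key linkage)."""
    profiles = defaultdict(lambda: defaultdict(list))
    for rec in PROPERTY_RECORDS:
        profiles[normalize_name(rec["owner"])]["property"].append(
            f"{rec['address']} ({rec['county']} County)"
        )
    for rec in COURT_FILINGS:
        profiles[normalize_name(rec["party"])]["court"].append(rec["case"])
    for rec in BUSINESS_REGISTRATIONS:
        profiles[normalize_name(rec["agent"])]["business"].append(rec["entity"])
    # Convert nested defaultdicts to plain dicts for readable output.
    return {name: dict(sources) for name, sources in profiles.items()}


if __name__ == "__main__":
    for name, sources in link_records().items():
        print(name, sources)
```

Each source formats the same person's name differently. Normalizing before joining is enough to collapse the separately maintained property, court, and business records into one profile.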

Data Exhaust Entitlement

Individuals cannot reasonably be held responsible for protecting their digital identity because, since the late 2000s, the monetization of ambient data traces has turned discarded digital byproducts into valuable profile fragments that data brokers exploit without consent. The rise of real-time bidding ecosystems in online advertising shifted personal data from intentional disclosures to passive emissions such as clicks, scrolls, and dwell times. The result is a system in which identity leaks continuously through routine activity. This transition normalized data exhaust as raw material for identity reconstruction. It also obscured individual agency by making surveillance incidental rather than transactional. The underappreciated consequence is that responsibility is misallocated onto users for managing leakage they can neither perceive nor intercept.
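The sketch below shows how passive signals could accumulate into an interest profile without the user ever submitting anything. It assumes a hypothetical event schema (`PassiveEvent`, with `device_id`, `page_category`, `action`, and `dwell_seconds`) and an arbitrary 5-second dwell threshold. It does not reproduce any real bidding protocol or broker system.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PassiveEvent:
    """One hypothetical exhaust signal; the schema is invented for illustration."""
    device_id: str        # pseudonymous identifier, not a name
    page_category: str    # content category of the page being viewed
    action: str           # e.g. "view", "scroll", "click"
    dwell_seconds: float  # time spent before the next event


def build_profiles(events, min_dwell: float = 5.0) -> dict:
    """Sum dwell time per category for each device, ignoring very short visits."""
    profiles = defaultdict(Counter)
    for ev in events:
        if ev.dwell_seconds >= min_dwell:
            profiles[ev.device_id][ev.page_category] += ev.dwell_seconds
    return profiles


def top_interests(profile: Counter, k: int = 2) -> list:
    """Return the k categories with the most accumulated dwell time."""
    return [category for category, _ in profile.most_common(k)]


events = [
    PassiveEvent("dev-1", "health", "view", 120.0),
    PassiveEvent("dev-1", "sports", "scroll", 3.0),   # below threshold, dropped
    PassiveEvent("dev-1", "finance", "click", 45.0),
    PassiveEvent("dev-1", "health", "scroll", 60.0),
    PassiveEvent("dev-2", "travel", "view", 30.0),
]
profiles = build_profiles(events)
print(top_interests(profiles["dev-1"]))  # ['health', 'finance']
```

No event in this stream is a deliberate disclosure. Even so, the dominant category (`health` here) emerges from dwell time alone.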

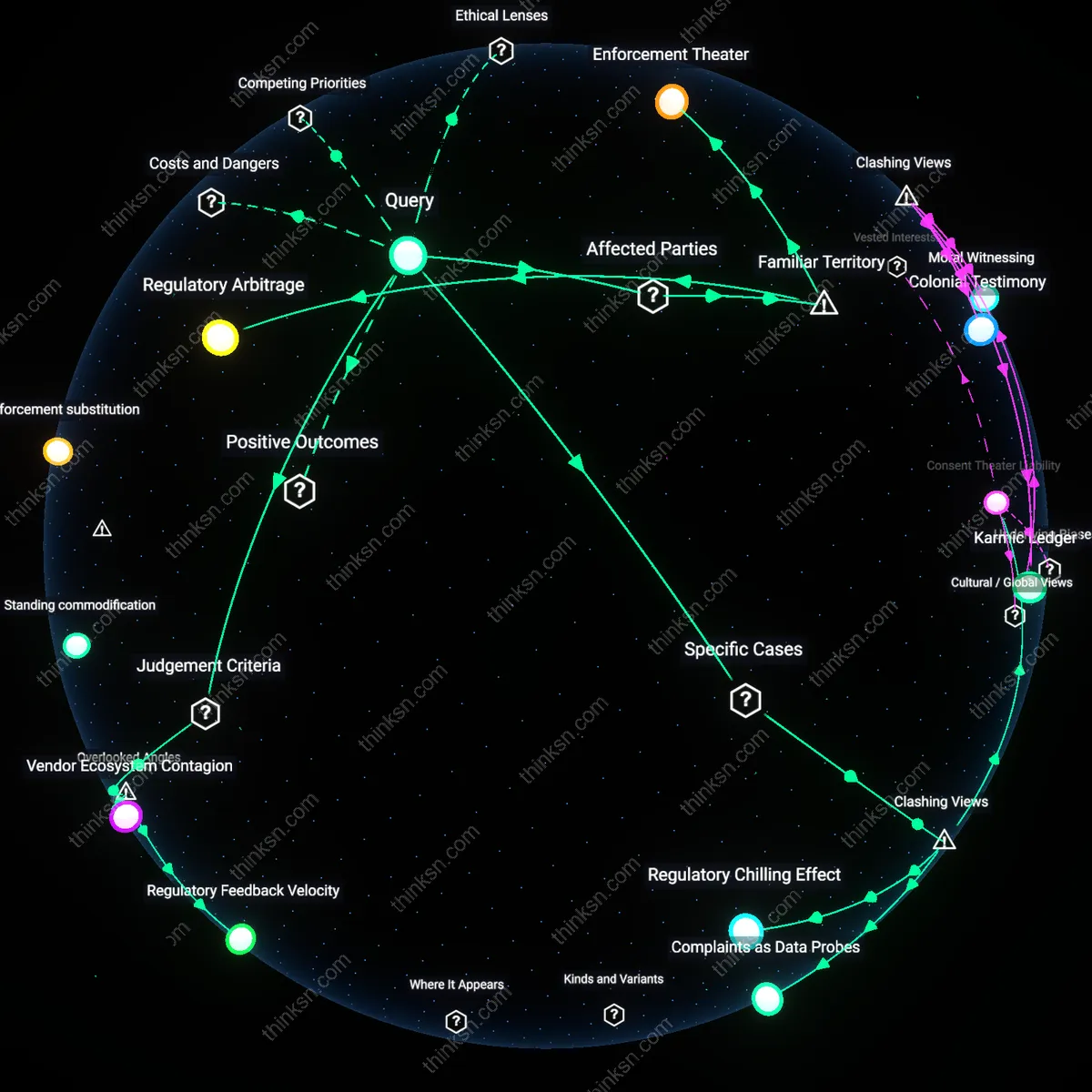

Regulatory Deflation Cycle

Holding individuals responsible for digital identity protection became unreasonable after the 1990s, when U.S. policy left the commercial data market largely to self-regulatory frameworks rather than extending privacy law beyond specific sectors. That choice systematically offloaded compliance risks onto users while enabling data broker expansion. The Clinton-era endorsement of 'market-based solutions' in the 1998 FTC report weakened enforcement infrastructure and legitimized industry-led data practices. Brokers were then free to aggregate public records, marketing data, and social media fragments with little accountability. This eroded the feasibility of personal oversight, as legal protections failed to scale alongside data integration technologies. The non-obvious outcome is that two decades of deflating regulatory ambition rendered individual vigilance a symbolic substitute for systemic control.

Identity Recursion Trap

Since the Court of Justice of the EU invalidated the EU–US Privacy Shield in 2020 (the Schrems II ruling), individuals have been unable to meaningfully protect their digital identity. Transnational data flows now rely on recursive reassembly, in which brokers use machine learning to infer and regenerate deleted or obscured information from peripheral data sources. The breakdown of cross-border enforcement mechanisms accelerated the use of predictive analytics to fill data gaps, turning privacy choices into temporary artifacts rather than permanent opt-outs. This created a feedback loop in which any disclosed fragment, even an anonymized or aggregated one, can be used to reconstruct identity through correlation with third-party datasets. The underappreciated shift is that identity is no longer a fixed attribute but a dynamically reconstituted target, making individual defenses obsolete against self-updating profiles.
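The correlation step can be illustrated with a classic linkage attack on quasi-identifiers. This is a simpler technique than the machine-learning inference the paragraph describes, swapped in here because it shows the core mechanism deterministically. All datasets below are hypothetical. The sketch joins an "anonymized" table to a public roster on ZIP code, birth date, and sex. A row counts as re-identified only when exactly one roster entry matches.

```python
from collections import defaultdict

# Hypothetical "anonymized" release: names removed, quasi-identifiers kept.
ANONYMIZED = [
    {"zip": "60614", "birth_date": "1985-03-02", "sex": "F", "diagnosis": "asthma"},
    {"zip": "60614", "birth_date": "1990-07-19", "sex": "M", "diagnosis": "diabetes"},
    {"zip": "60657", "birth_date": "1972-11-30", "sex": "F", "diagnosis": "migraine"},
]
# Hypothetical public roster (e.g. a voter-roll-style list) that carries names.
PUBLIC_ROSTER = [
    {"name": "A. Rivera", "zip": "60614", "birth_date": "1985-03-02", "sex": "F"},
    {"name": "B. Chen", "zip": "60614", "birth_date": "1990-07-19", "sex": "M"},
    {"name": "C. Patel", "zip": "60614", "birth_date": "1990-07-19", "sex": "M"},
    {"name": "D. Okafor", "zip": "60657", "birth_date": "1972-11-30", "sex": "F"},
]
QUASI_IDENTIFIERS = ("zip", "birth_date", "sex")


def qi_key(record: dict) -> tuple:
    """Project a record onto its quasi-identifier fields."""
    return tuple(record[field] for field in QUASI_IDENTIFIERS)


def reidentify(anonymized: list, roster: list) -> dict:
    """Return {name: sensitive value} for rows matching exactly one roster entry."""
    candidates = defaultdict(list)
    for person in roster:
        candidates[qi_key(person)].append(person["name"])
    matches = {}
    for row in anonymized:
        names = candidates.get(qi_key(row), [])
        if len(names) == 1:  # unique combination: the row is re-identified
            matches[names[0]] = row["diagnosis"]
    return matches


print(reidentify(ANONYMIZED, PUBLIC_ROSTER))
# {'A. Rivera': 'asthma', 'D. Okafor': 'migraine'}
```

B. Chen and C. Patel share the same quasi-identifiers, so their row stays ambiguous. This is the intuition behind k-anonymity. It also shows why adding attributes from further datasets tends to shrink such groups until individuals stand out.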

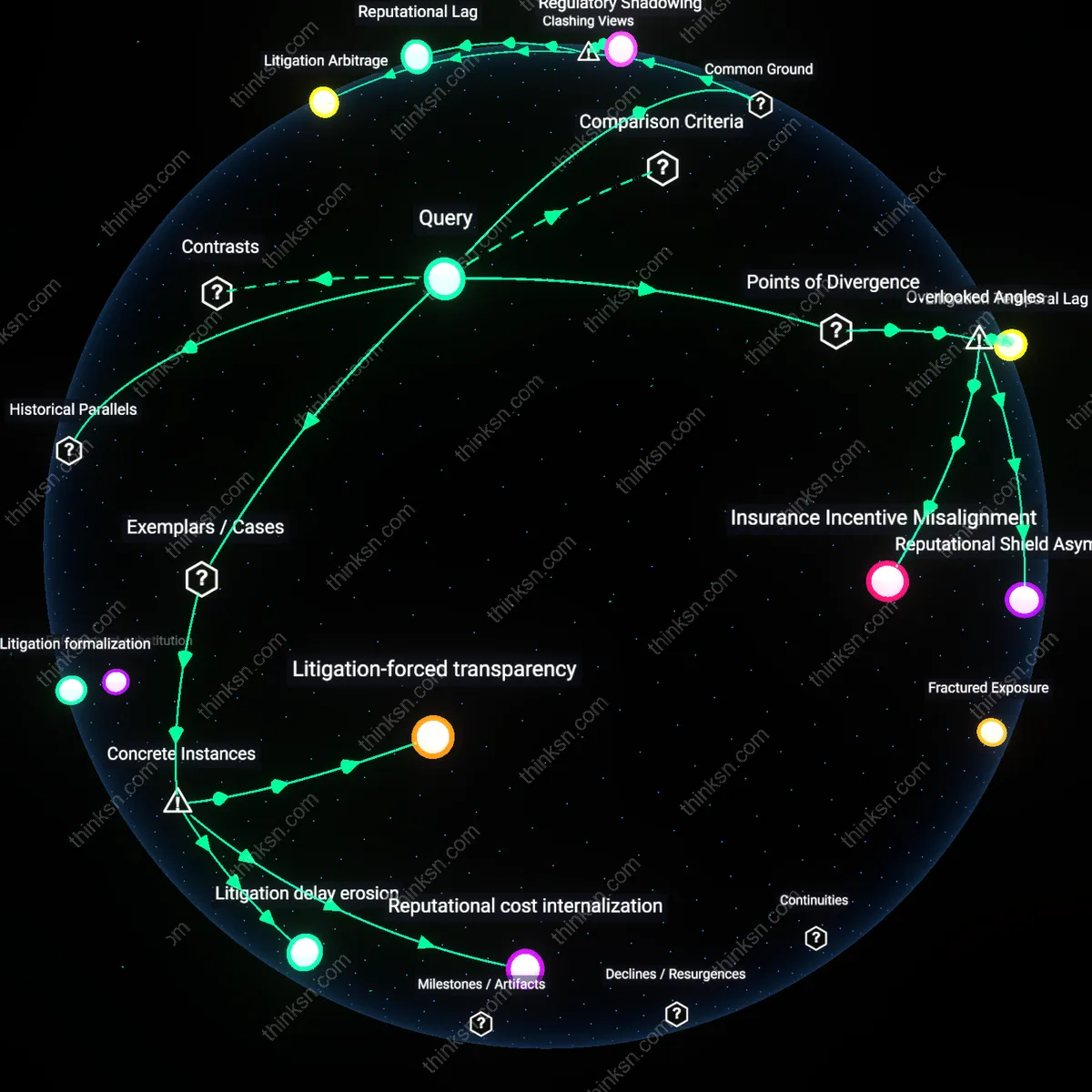

Asymmetric Accountability

Individuals cannot reasonably be held responsible for protecting their digital identity because legal frameworks like the EU’s GDPR place compliance obligations on data controllers, not data subjects. The complaints that the Austrian privacy organization noyb filed against Google and Facebook when the GDPR took effect in May 2018 illustrate this. They alleged that users were forced to consent to data processing as a condition of using the services, and they targeted the companies, not the users who clicked through. The system treats individuals as incapable of meaningful self-defense against infrastructural surveillance, shifting ethical responsibility to the institutional actors who design and profit from data synthesis. This reveals a normative separation between personal agency and systemic opacity, one in which individual vigilance is rendered performative against automated reidentification.

Citizen as Residual Product

Holding individuals responsible for digital identity protection is ethically incoherent under neoliberal data regimes. In these regimes, credit bureaus like Equifax monetize identity as a service while transferring risk downward. The 2017 Equifax breach exposed the names, Social Security numbers, birth dates, and addresses of roughly 147 million people whose data the company had compiled into credit files without their direct consent. Yet the legal system places little preventive burden on the bureau while expecting individuals to monitor their credit reports and dispute fraudulent activity. Citizens are thereby reframed as perpetual auditors of their own compromised identities. This redefines personhood not as a protected right but as an economically externalized byproduct of data infrastructure.

Data Sovereignty Illusion

Individuals cannot reasonably be held responsible for protecting their digital identity because data brokers like Acxiom and Oracle combine publicly available voter registration and property records with purchase data into comprehensive profiles that individuals never consented to have assembled. This assemblage occurs through automated inference systems in the commercial data supply chain. The volume and opacity of that recombination make personal responsibility a technical absurdity. The expectation of individual control thus masks systemic institutional power.

Algorithmic Redaction Burden

Holding individuals responsible for digital identity protection is indefensible when lawful public presence itself becomes raw material for reidentification. Clearview AI built its facial recognition database by scraping billions of publicly posted photos. Municipal transparency records, such as Illinois’ public property tax databases, can supply the names and home addresses that make such matches actionable. The burden shifts to citizens to suppress lawful public presence to evade surveillance, inverting accountability so that civic participation becomes a privacy risk. This reveals how compliance with transparency norms inadvertently fuels invasive reidentification ecosystems.

Consent Economization

People are unreasonably tasked with defending their digital identity when companies like SafeGraph monetize foot-traffic data. That data comes from app developers who disclose only 'aggregated' location use, even though it permits reidentification through spatiotemporal patterns anchored to home and work locations. Regulators have treated this as compliant with privacy norms, making individual vigilance a performative substitute for structural oversight. This shows how the legal validation of 'anonymized' data enables commercial exploitation under the guise of user consent.
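The home/work reidentification described above can be sketched as follows. The sketch assumes a hypothetical ping format (`Ping`) and hypothetical time windows. Night runs from 22:00 to 06:00, and office hours run from 09:00 to 17:00 on weekdays. It also assumes a hypothetical `DIRECTORY` that maps known home/work cells to a person. It does not describe SafeGraph's actual processing, which the source does not document. It only shows why a pseudonymous trace that was never labeled with a name can still point to one.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Ping:
    """One hypothetical location ping; the schema is invented for illustration."""
    device_id: str  # pseudonymous advertising-style ID, not a name
    hour: int       # local hour of day, 0-23
    weekday: bool
    lat: float
    lon: float


def cell(lat: float, lon: float, precision: int = 3) -> tuple:
    """Snap a coordinate to a grid cell (about 110 m north-south at 3 decimals)."""
    return (round(lat, precision), round(lon, precision))


def infer_anchor(pings, hours, weekday_only: bool = False):
    """Most frequent cell among pings in the given hours, or None if there are none."""
    counts = Counter(
        cell(p.lat, p.lon)
        for p in pings
        if p.hour in hours and (p.weekday or not weekday_only)
    )
    return counts.most_common(1)[0][0] if counts else None


NIGHT = set(range(22, 24)) | set(range(0, 6))  # assumed "at home" window
OFFICE = set(range(9, 17))                     # assumed "at work" window


def home_work_pair(pings) -> tuple:
    return infer_anchor(pings, NIGHT), infer_anchor(pings, OFFICE, weekday_only=True)


pings = [
    Ping("ad-7f3", 23, True, 41.92111, -87.65222),
    Ping("ad-7f3", 2, False, 41.92109, -87.65219),
    Ping("ad-7f3", 10, True, 41.88001, -87.63011),
    Ping("ad-7f3", 14, True, 41.88004, -87.63008),
    Ping("ad-7f3", 12, False, 41.95000, -87.70000),  # weekend outing, not work
]
# Hypothetical directory linking known home/work cells to a person.
DIRECTORY = {(cell(41.92111, -87.65222), cell(41.88001, -87.63011)): "J. Smith"}

print(DIRECTORY.get(home_work_pair(pings)))  # J. Smith
```

The pair of anchors, not any single ping, is what does the identifying. A label of "aggregated" or "anonymized" on the individual points does not remove that signal.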