Are AI Reviews Reliable or Just Smoke and Mirrors?

Analysis reveals 9 key thematic connections.

Key Findings

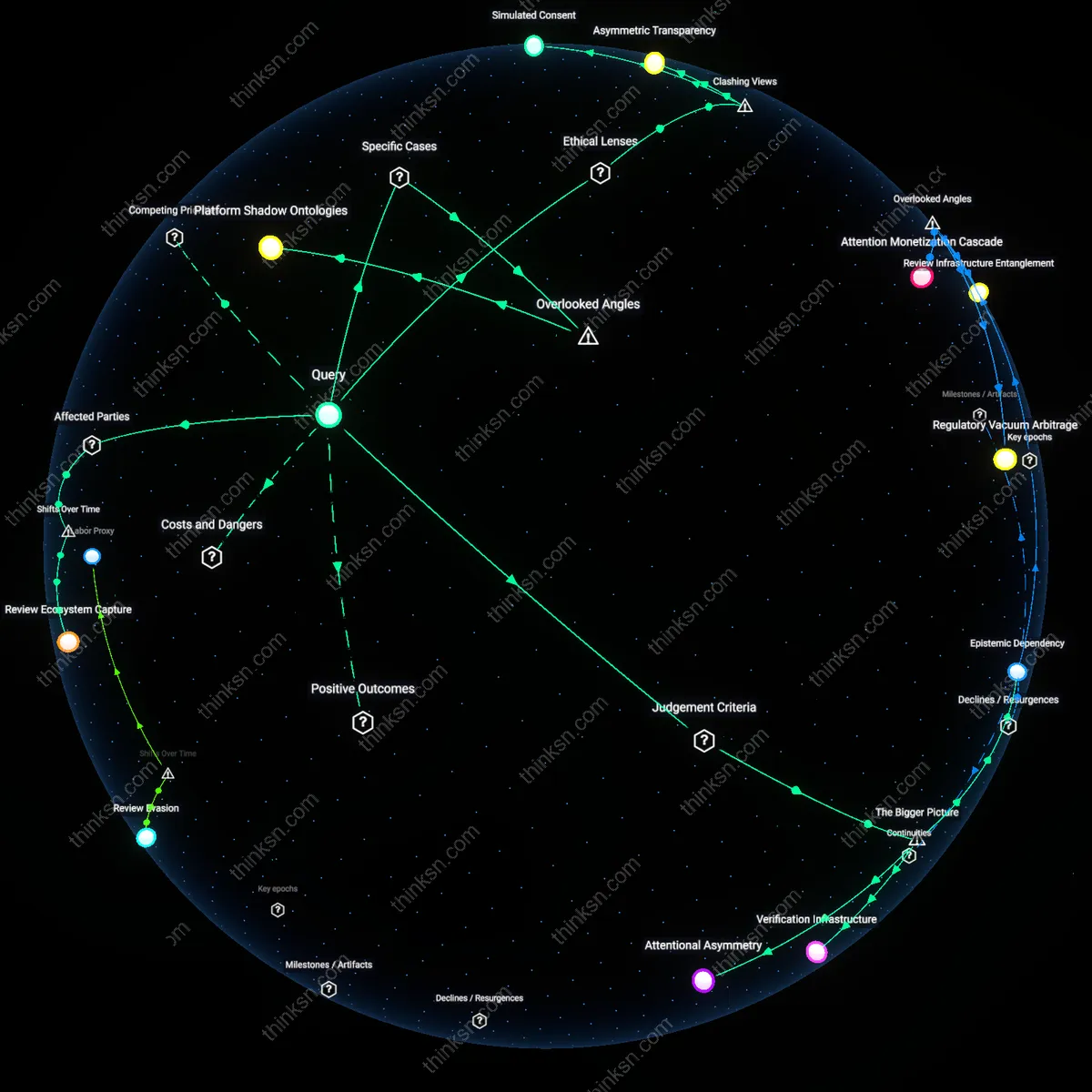

Review Ecosystem Capture

Consumers should check which review platforms have been absorbed through corporate consolidation, because consolidation centralizes control of review data. Post-2010 deals, such as Amazon’s acquisition of Goodreads and Bazaarvoice’s expansion, vertically integrated review hosting with e-commerce, which allows firms to algorithmically prioritize favorable AI-generated content. This shift from decentralized, organic user feedback to managed reputation systems means that platform ownership now shapes review credibility. The non-obvious dynamic is that trust is structurally compromised before a single fake review is written.

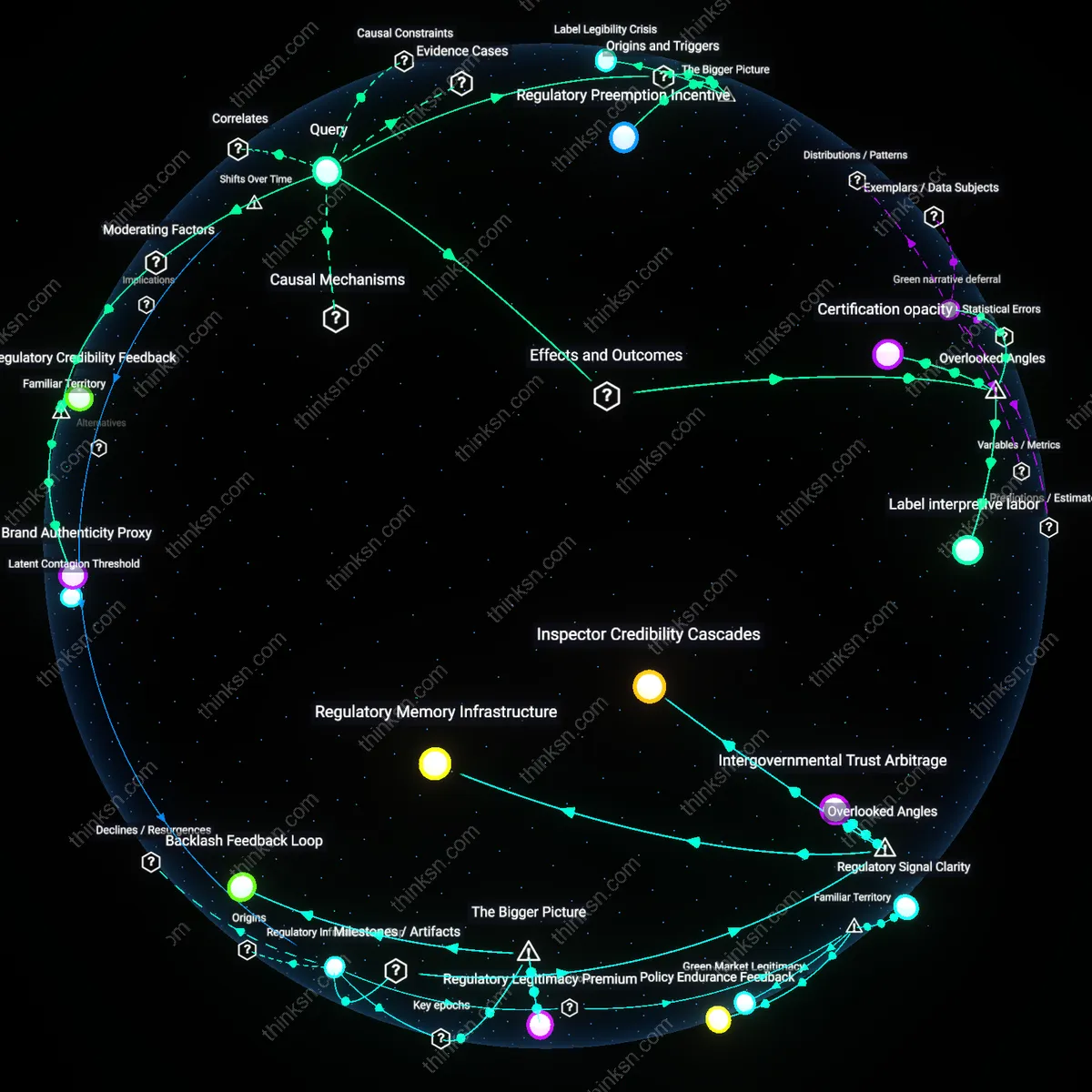

Regulatory Lag Effect

Consumers should treat AI-generated reviews as inherently suspect because of the post-2015 gap between the rapid deployment of generative AI by retail marketers and the slow evolution of enforcement by bodies like the FTC, which did not propose a dedicated rule on fake reviews until 2023 and finalized it only in 2024. This regulatory lag produced a de facto permission structure in which firms could publish AI content without disclosure and with little risk of enforcement, and it shows how the absence of real-time oversight normalized deceptive practices under the guise of innovation.

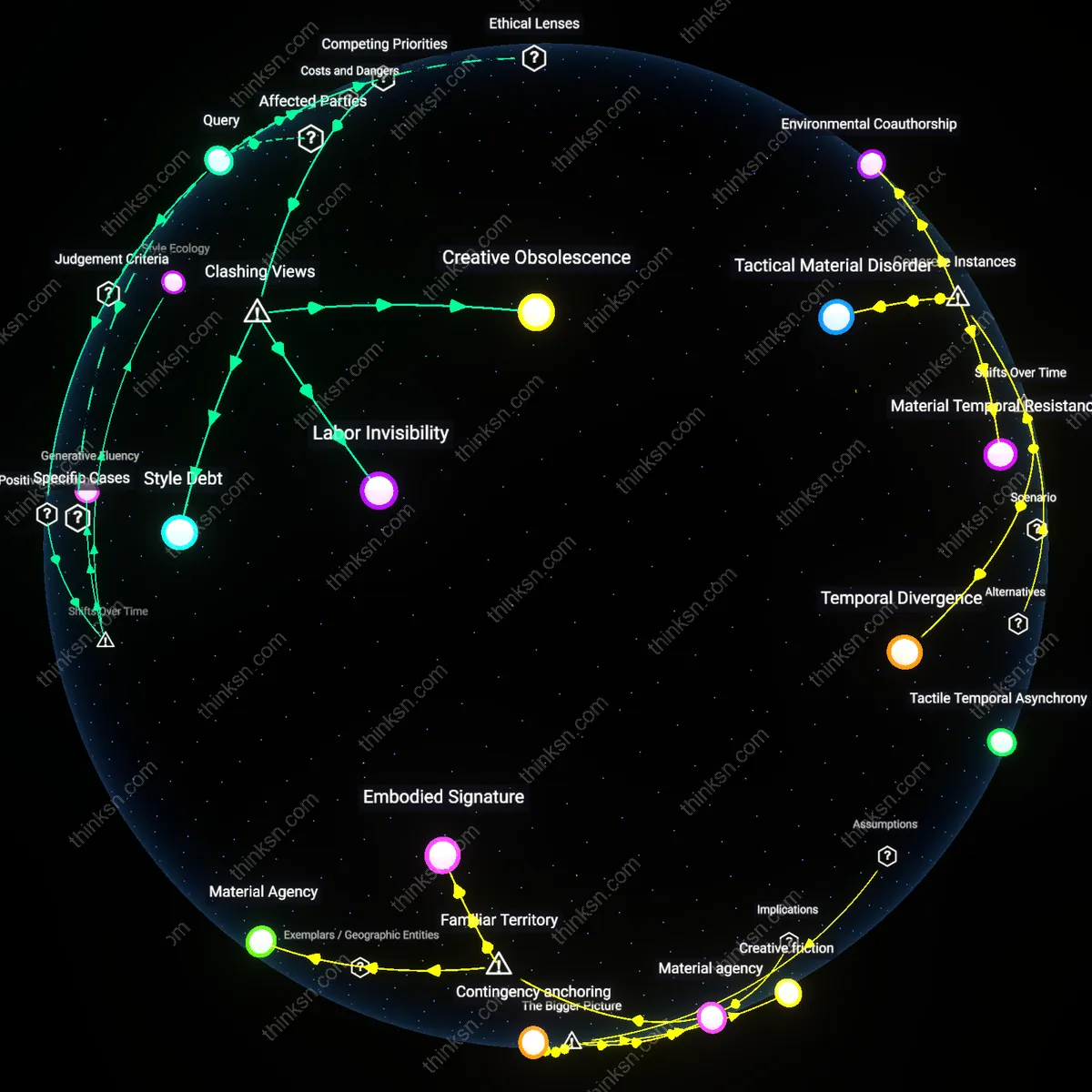

Verification Infrastructure

Consumers can trust AI-generated product reviews only when independent verification infrastructure, audited by public-interest technologists, confirms the provenance and integrity of review datasets. Without such infrastructure, platforms face no enforcement mechanism that deters synthetic content manipulation. The infrastructure relies on technical standards like cryptographic watermarking and decentralized ledgers, which regulatory pilots in the EU and California are reportedly beginning to adopt. Trust therefore hinges not on consumer vigilance but on systemic authentication capacities that shift accountability to data producers. The non-obvious insight is that individual judgment is structurally displaced by a new layer of technological gatekeeping that determines epistemic legitimacy before information reaches users.
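One way to picture a provenance and integrity check is a signed review record. The sketch below is only an illustration under stated assumptions: it uses an HMAC tag from Python's standard library as a simpler stand-in for cryptographic watermarking, and the key, field names, and sample review are all hypothetical rather than taken from any real platform.

```python
import hashlib
import hmac
import json

# Hypothetical key shared by the review host and an independent auditor (assumption).
AUDIT_KEY = b"demo-key-not-for-production"


def sign_review(review: dict, key: bytes = AUDIT_KEY) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON encoding of the review."""
    payload = json.dumps(review, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_review(review: dict, tag: str, key: bytes = AUDIT_KEY) -> bool:
    """Check that the review still matches its tag, using a constant-time comparison."""
    return hmac.compare_digest(sign_review(review, key), tag)


# Hypothetical review record; the field names are illustrative only.
review = {
    "review_id": "r-001",
    "source": "verified_purchase",
    "text": "Battery lasts two days.",
}
tag = sign_review(review)

# Any edit to the text after signing invalidates the tag.
tampered = dict(review, text="Best product ever!")

print(verify_review(review, tag))
print(verify_review(tampered, tag))
```

A shared-key tag only shows that a record has not changed since whoever holds the key signed it. A public audit scheme would more plausibly use asymmetric signatures so that third parties can verify records without being able to forge them.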

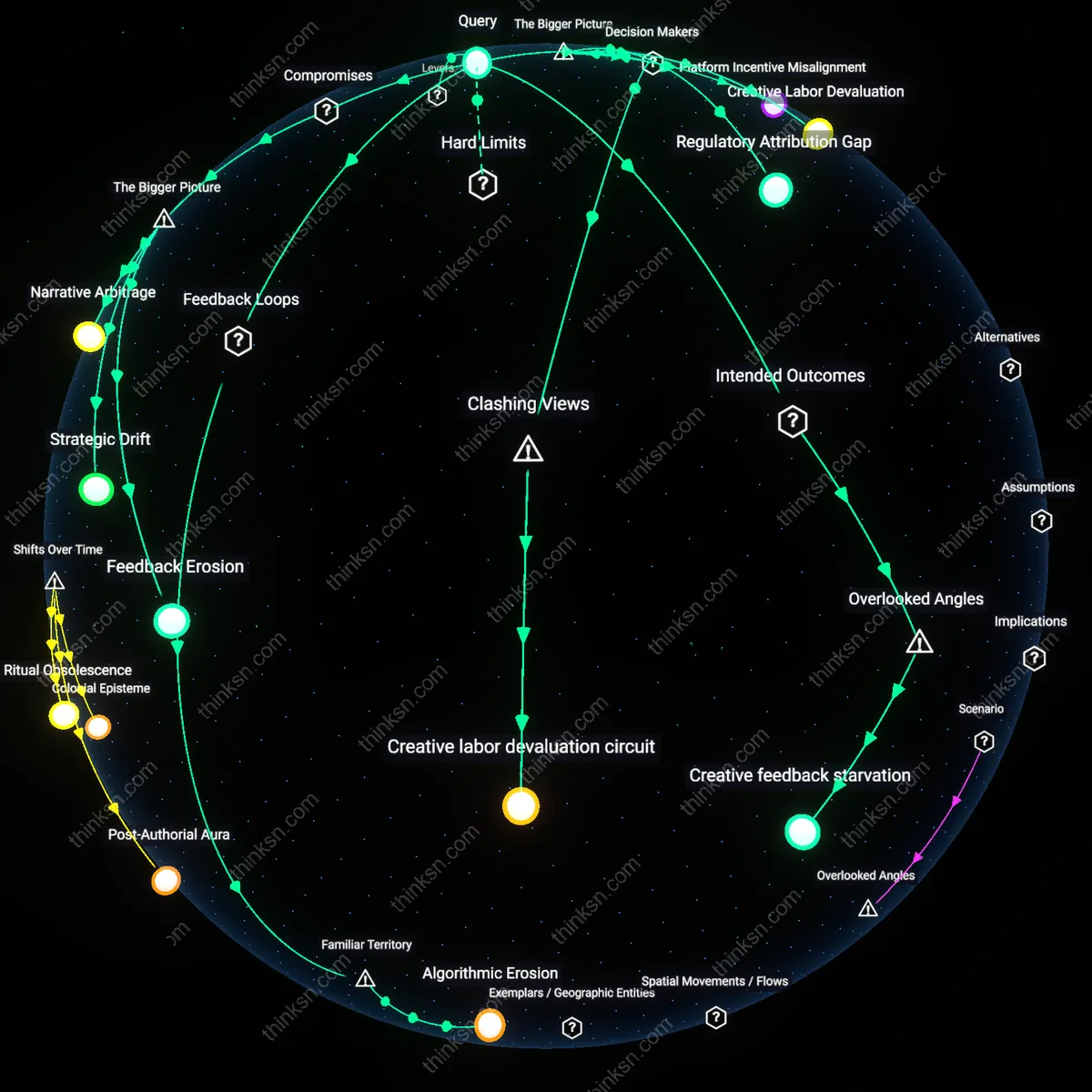

Attentional Asymmetry

Consumers should distrust AI-generated reviews by default because the economic design of digital marketplaces systematically rewards attention-grabbing content over factual accuracy, enabling bad-faith actors to exploit the speed and scalability of generative AI to flood platforms with persuasive but false endorsements. This asymmetry arises from algorithmic ranking systems used by Amazon, TikTok Shop, and Google that prioritize engagement metrics—time-on-page, click-through—over veracity checks, creating a self-reinforcing cycle where manipulated reviews gain visibility and displace genuine feedback. The underappreciated dynamic is that trust erosion stems not from the AI's quality but from how platform incentive structures selectively amplify deception, rendering consumer discernment irrelevant in practice.
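The ranking dynamic described above can be made concrete with a toy model. This is a minimal sketch, not any platform's actual algorithm: the weights, the `verified` flag, the penalty factor, and the sample numbers are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Review:
    review_id: str
    click_through: float  # fraction of impressions that led to a click (hypothetical)
    time_on_page: float   # seconds spent on the review (hypothetical)
    verified: bool        # whether an independent check confirmed the review


def engagement_score(r: Review) -> float:
    """Score a review on engagement alone; the weights are arbitrary assumptions."""
    return 0.7 * r.click_through + 0.3 * (r.time_on_page / 60.0)


def rank_by_engagement(reviews):
    """Order reviews the way an engagement-only ranker would."""
    return sorted(reviews, key=engagement_score, reverse=True)


def rank_with_veracity(reviews, penalty=0.5):
    """Same ranking, but unverified reviews have their score scaled down."""
    def score(r: Review) -> float:
        return engagement_score(r) * (1.0 if r.verified else penalty)
    return sorted(reviews, key=score, reverse=True)


reviews = [
    Review("genuine", click_through=0.12, time_on_page=40.0, verified=True),
    Review("synthetic", click_through=0.30, time_on_page=45.0, verified=False),
]

print([r.review_id for r in rank_by_engagement(reviews)])
print([r.review_id for r in rank_with_veracity(reviews)])
```

With these sample numbers the persuasive synthetic review ranks first under engagement alone, and drops below the genuine one only once the ranker takes verification into account. That is the incentive problem the section describes.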

Epistemic Dependency

Consumers cannot reliably assess the trustworthiness of AI-generated reviews because the evidence they would need sits inside opaque data supply chains. Private firms like Yotpo and Bazaarvoice assemble review datasets without public oversight, acting as unseen intermediaries that curate, weight, and sometimes fabricate social proof using proprietary machine learning models. As a result, consumers lack access to the foundational evidence needed to apply standard evaluative criteria like consistency or consensus. The overlooked reality is that judgment is outsourced upstream, which makes individual critical thinking a symbolic act rather than an effective safeguard: trust becomes a function of invisible data governance, not personal scrutiny.

Epistemic Accountability

AI-generated reviews should be treated as legally cognizable commercial speech, subject to producer liability under strict liability regimes for deceptive commercial communication. Platforms and brands that deploy AI reviews must be held legally responsible for their veracity, just as they are for human-authored false advertising, because automation does not absolve responsibility when the output functionally serves promotional ends. The non-obvious twist is that the burden shifts from consumer discernment to regulatory enforcement: trusting reviews becomes a matter of institutional deterrence, not personal vigilance, so trust is structurally enforced rather than individually negotiated.

Simulated Consent

Consumers cannot meaningfully detect manipulated AI reviews because digital marketplaces are designed around cognitive offloading to algorithms, which makes skepticism a maladaptive behavior in systems engineered for frictionless consumption. This erodes the liberal ethical premise of informed choice, since users are psychologically conditioned to treat algorithmic content as neutral infrastructure rather than persuasive rhetoric. The real issue is therefore not detection but the covert substitution of compliance for judgment. This view rejects the idea that better detection tools empower consumers and points to a deeper mechanism: automated review systems manufacture passive agreement rather than inform decision-making.

Asymmetric Transparency

Trust in AI-generated reviews should be withdrawn by default until auditability is enforced through adversarial interoperability laws that require open logging of review provenance, accessible to third-party validators like consumer advocacy NGOs or public interest tech collectives. This challenges the dominant market logic that positions transparency as a voluntary brand feature and reframes it as a political precondition for market legitimacy under civic republican theory, where fair commerce requires active oversight by countervailing public actors. The underappreciated reality is that transparency without enforceable access rights merely performs accountability while preserving corporate control, which turns the appearance of openness into a tool of dominance.
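An open provenance log of the kind this section calls for could, in principle, be made tamper-evident with a hash chain. The following is a minimal sketch under assumptions: the record fields, the `GENESIS` value, and the function names are hypothetical, and nothing here describes a scheme that any law or platform actually mandates.

```python
import copy
import hashlib
import json

# Placeholder "previous hash" for the first entry (assumption).
GENESIS = "0" * 64


def _entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with a canonical encoding of the record."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a provenance record, linking it to the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": _entry_hash(prev_hash, record)})


def verify_log(log: list) -> bool:
    """Recompute every link; an edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical provenance records; the field names are illustrative only.
log = []
append_entry(log, {"review_id": "r-001", "origin": "ai_generated", "model": "unspecified"})
append_entry(log, {"review_id": "r-002", "origin": "human", "model": None})

# A third-party validator would detect a quietly relabelled entry.
tampered = copy.deepcopy(log)
tampered[0]["record"]["origin"] = "human"

print(verify_log(log))
print(verify_log(tampered))
```

The chain only proves that the log is internally consistent. A validator still needs a trusted copy of the latest hash, which is where enforceable third-party access matters.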

Platform Shadow Ontologies

Trust in AI-generated reviews hinges on whether consumers recognize how platform-specific data hierarchies, like Google Shopping’s implicit product taxonomy, shape which reviews are surfaced and weighted. For example, Google’s algorithm prioritizes reviews tied to ‘top-of-funnel’ product clusters (e.g., ‘best wireless earbuds’), where AI-generated summaries are more likely to amalgamate commercially incentivized inputs from affiliate marketers using tracked purchase codes, subtly skewing sentiment. Most users assume editorial neutrality in these AI summaries, but the real determinant is an invisible classification schema built by Google’s product graph engineers, which decides what counts as a ‘relevant’ review based on engagement metrics, not credibility. This hidden structuring logic, an unacknowledged ontology, matters because it embeds commercial incentives into the very architecture of perceived objectivity, a dependency rarely visible to consumers or regulators.