Do Shadow Bans Work? Does Their Lack of Transparency Threaten Democracy?

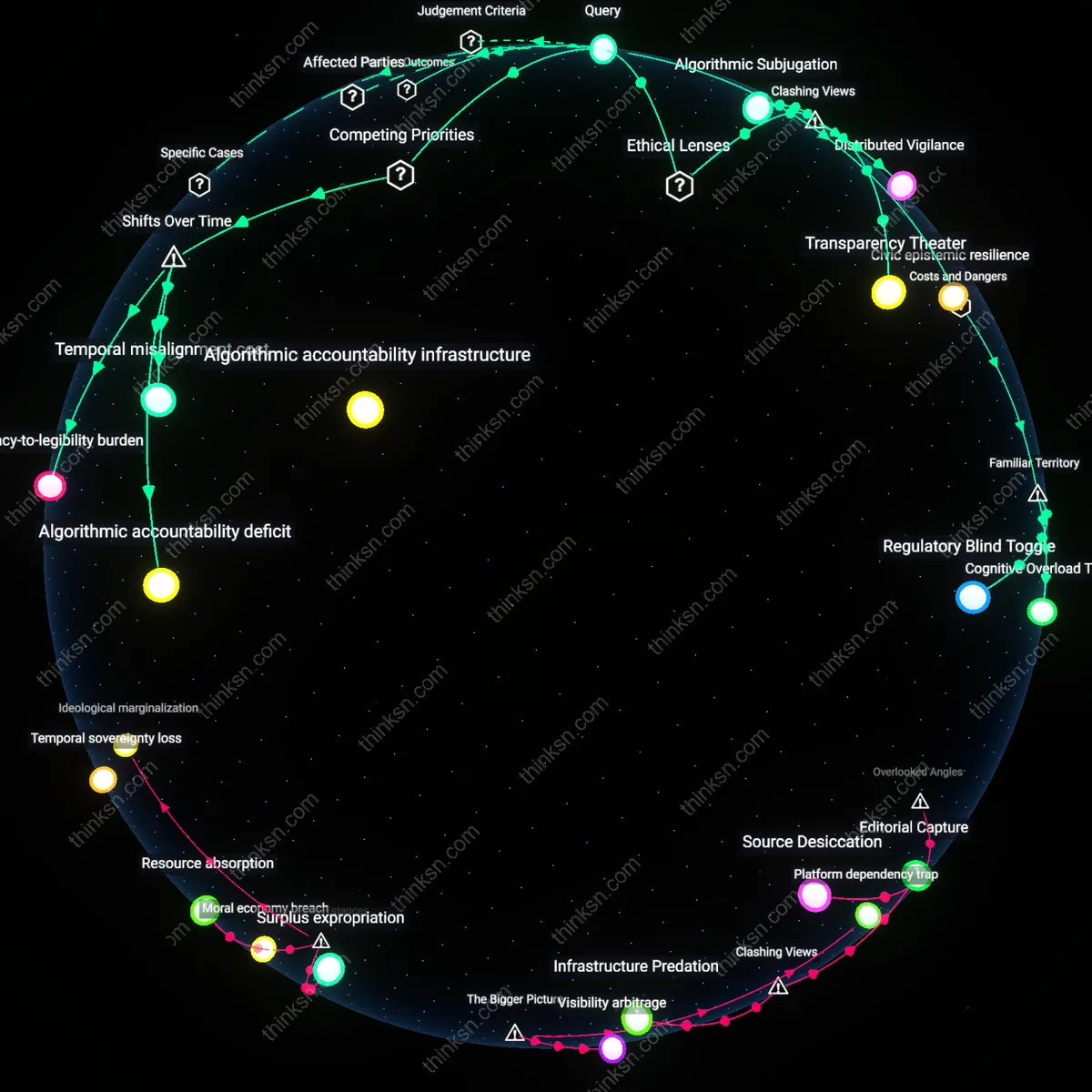

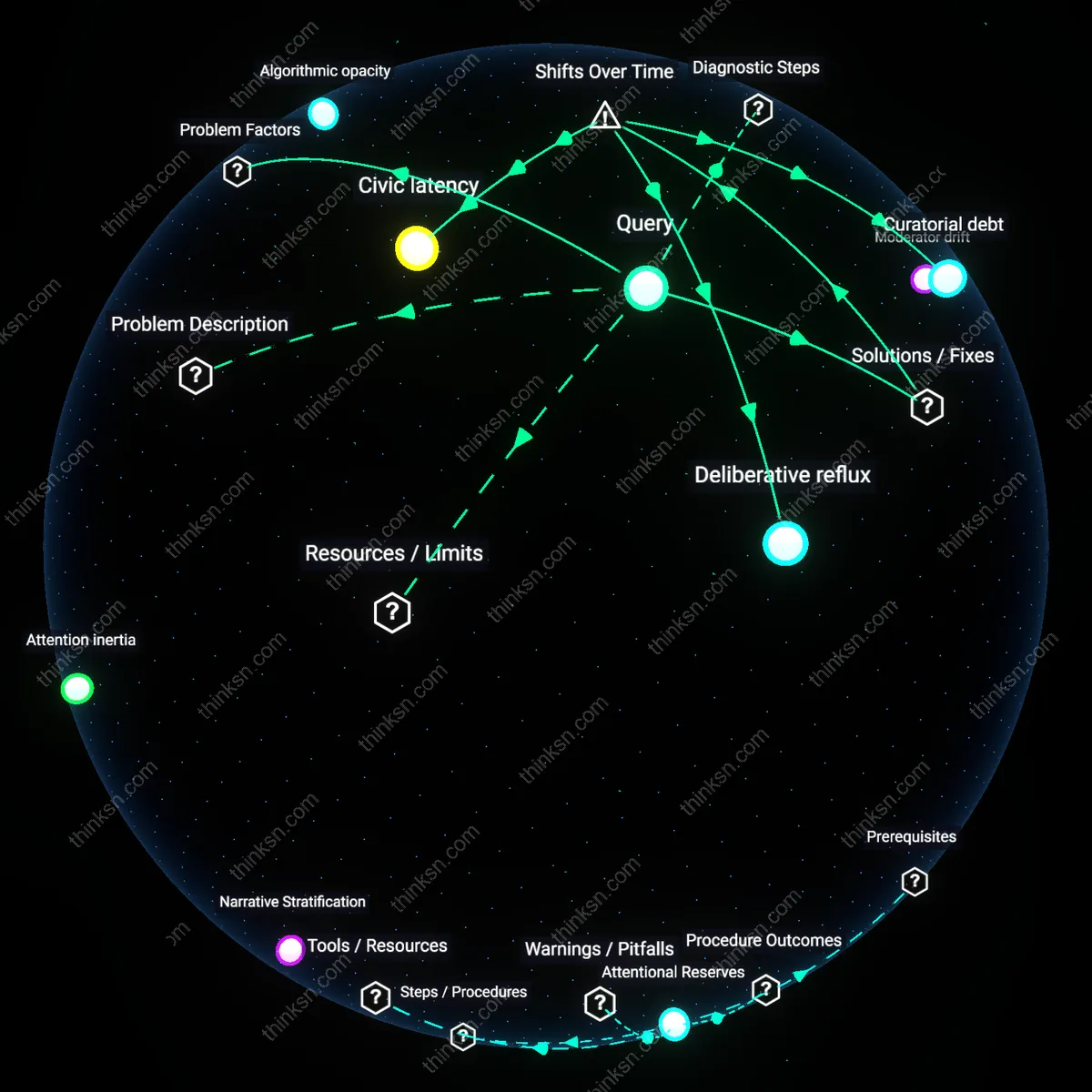

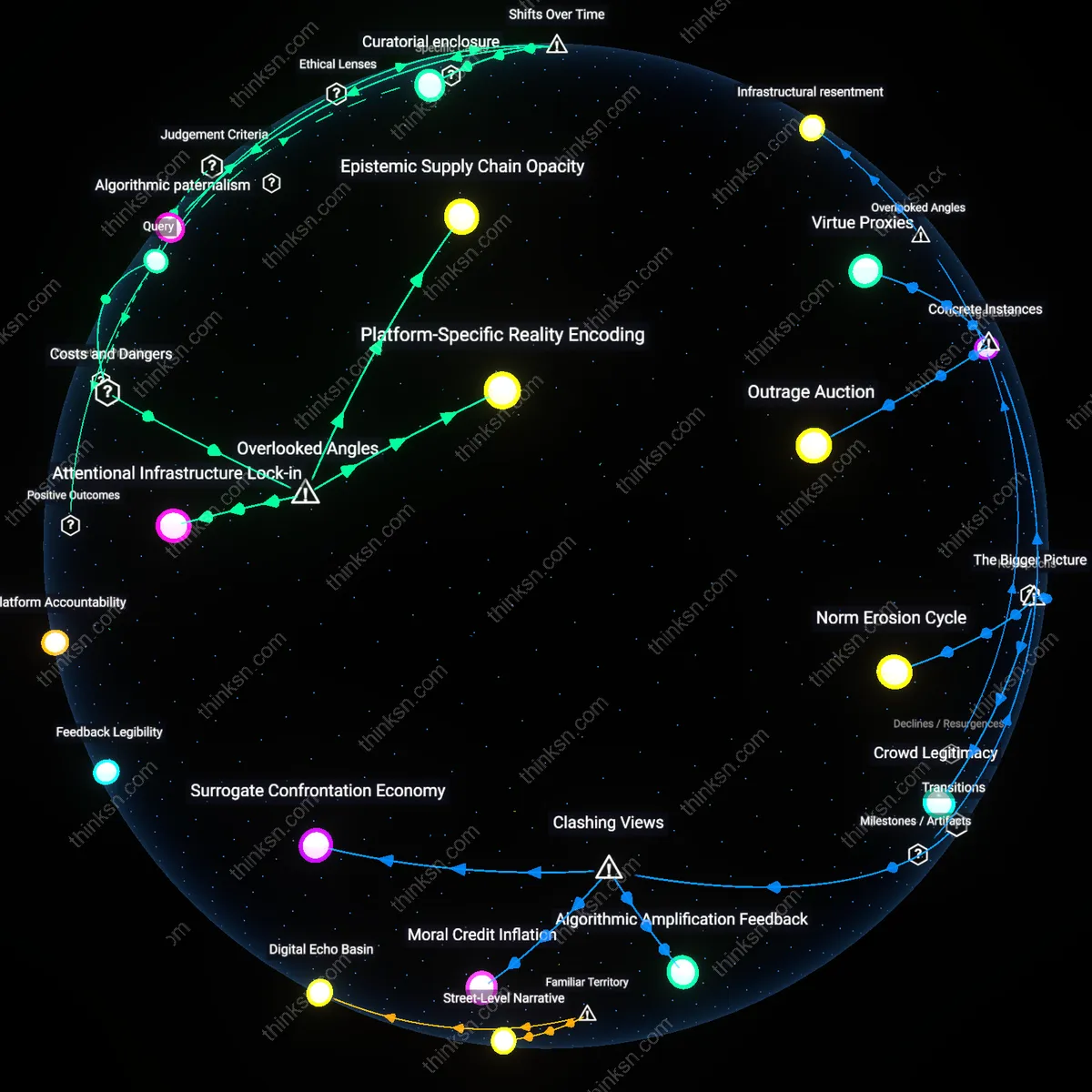

Analysis reveals 8 key thematic connections.

Key Findings

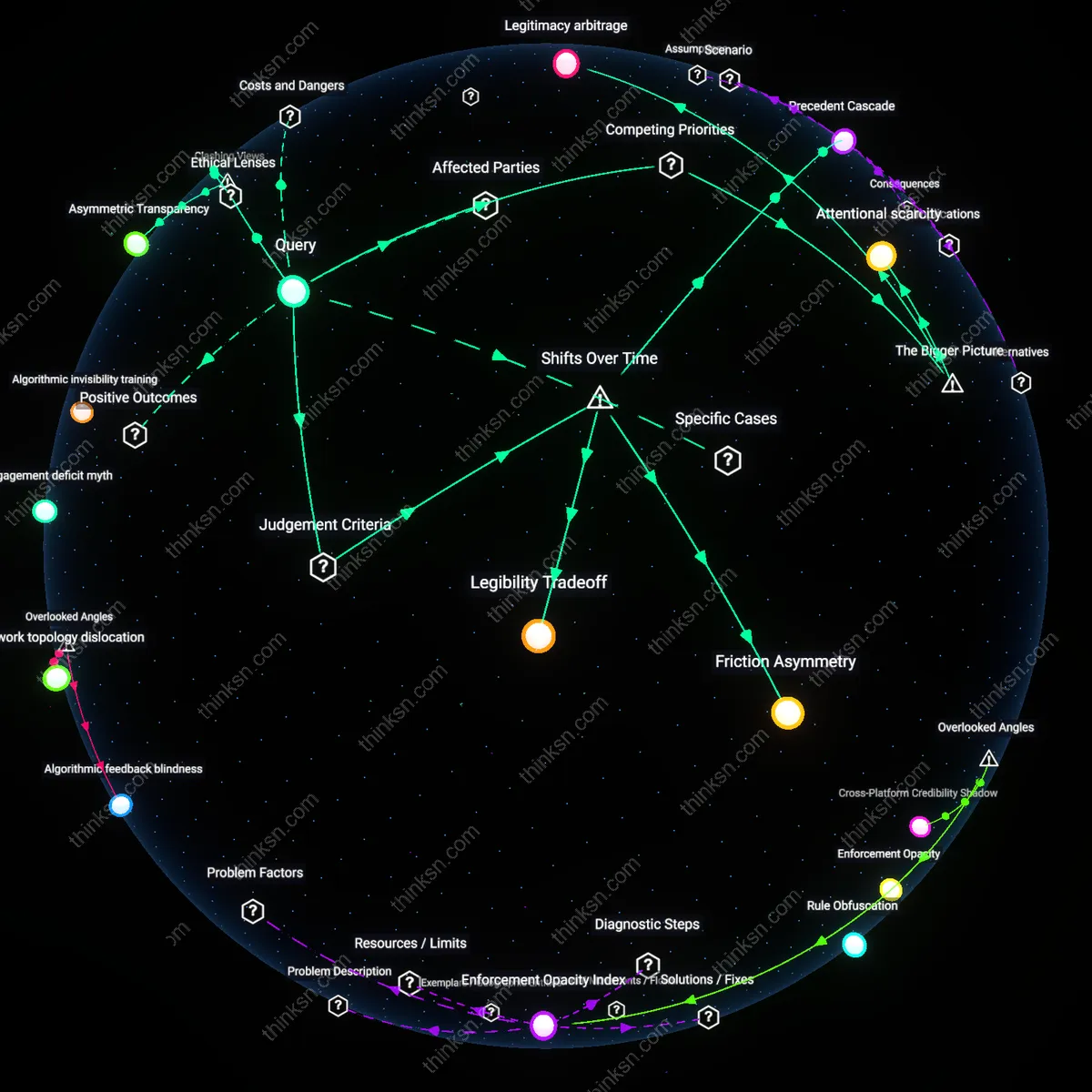

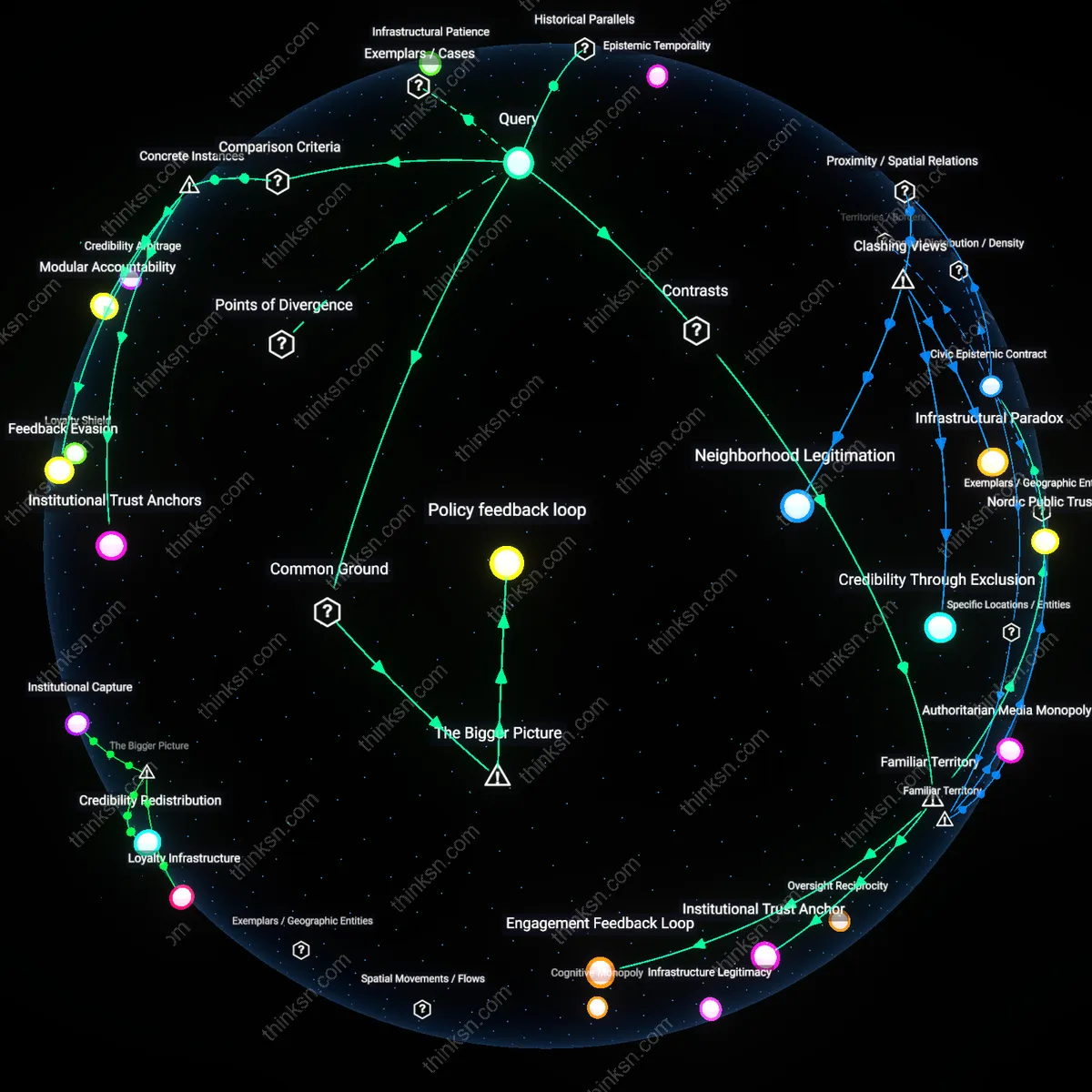

Legibility Tradeoff

Shadow banning fails the test of democratic accountability because platforms prioritize operational security over public scrutiny. The shift accelerated after 2016, when intelligence disclosures revealed foreign manipulation of social media and companies began concealing moderation tactics so that bad actors could not game them. Accountability was thus obscured by design rather than by accident, embedding a non-obvious tradeoff between a system's legibility to users and its resilience against coordinated abuse. The mechanism, algorithmic stealth enforced through an opaque user experience, emerged as a residual governance model once states proved unable to regulate disinformation at scale. Normative oversight passed to private technical teams, who judged user autonomy secondary to network integrity.

Precedent Cascade

Misinformation governance moved from content removal to shadow banning in the mid-2010s, not because of proven efficacy but because of legal pressure in democratic countries, where free speech norms constrained visible censorship and made invisible throttling the path of least resistance. The shift calcified into standard practice as major platforms faced regulatory fragmentation following GDPR and the Section 230 debates. Platforms came to privilege plausible deniability over transparency, creating a precedent cascade in which the lack of accountability was institutionalized as operational necessity. The non-obvious insight is that democratic backsliding was caused not by shadow banning alone but by its normalization as a workaround to legal constraints originally meant to protect speech.

Friction Asymmetry

Shadow banning gained traction after 2020 not because it reduced misinformation more effectively than the alternatives but because it redistributed friction: it places adaptive costs on malicious actors while insulating compliant users. This marked a shift from reactive takedowns to anticipatory network shaping guided by behavioral economics. The mechanism operates through differential visibility settings keyed to account reputation scores, a technique refined from early spam filters and repurposed under crisis conditions, when trust in public information collapsed. The underappreciated dynamic is that opacity here is not a democratic failure but a feature that enables tactical agility, revealing friction asymmetry as the hidden principle of modern information governance.
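To make the idea of visibility keyed to reputation concrete, here is a minimal sketch in Python. It is an illustration only, not any platform's actual method: the `Account` type, the score range of 0 to 1, the `threshold` and `floor` parameters, and the linear scaling are all assumptions chosen for clarity.

```python
from dataclasses import dataclass


@dataclass
class Account:
    name: str
    reputation: float  # hypothetical score in [0, 1]; higher means more trusted


def visibility_multiplier(reputation: float,
                          threshold: float = 0.5,
                          floor: float = 0.05) -> float:
    """Map a reputation score to a reach multiplier in [floor, 1].

    The threshold, floor, and linear ramp are illustrative assumptions.
    """
    if not 0.0 <= reputation <= 1.0:
        raise ValueError("reputation must be in [0, 1]")
    if reputation >= threshold:
        # Accounts at or above the threshold experience no added friction.
        return 1.0
    # Below the threshold, reach shrinks linearly toward the floor.
    return floor + (1.0 - floor) * (reputation / threshold)


def effective_reach(account: Account, base_audience: int) -> int:
    """Audience size after the reputation-based multiplier is applied."""
    return round(base_audience * visibility_multiplier(account.reputation))
```

In this sketch a trusted account keeps its full audience, while a low-reputation account is throttled without being removed. That is the asymmetry the paragraph describes: the cost of adapting falls on the throttled account, and nothing in the account's own view of the platform needs to change.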

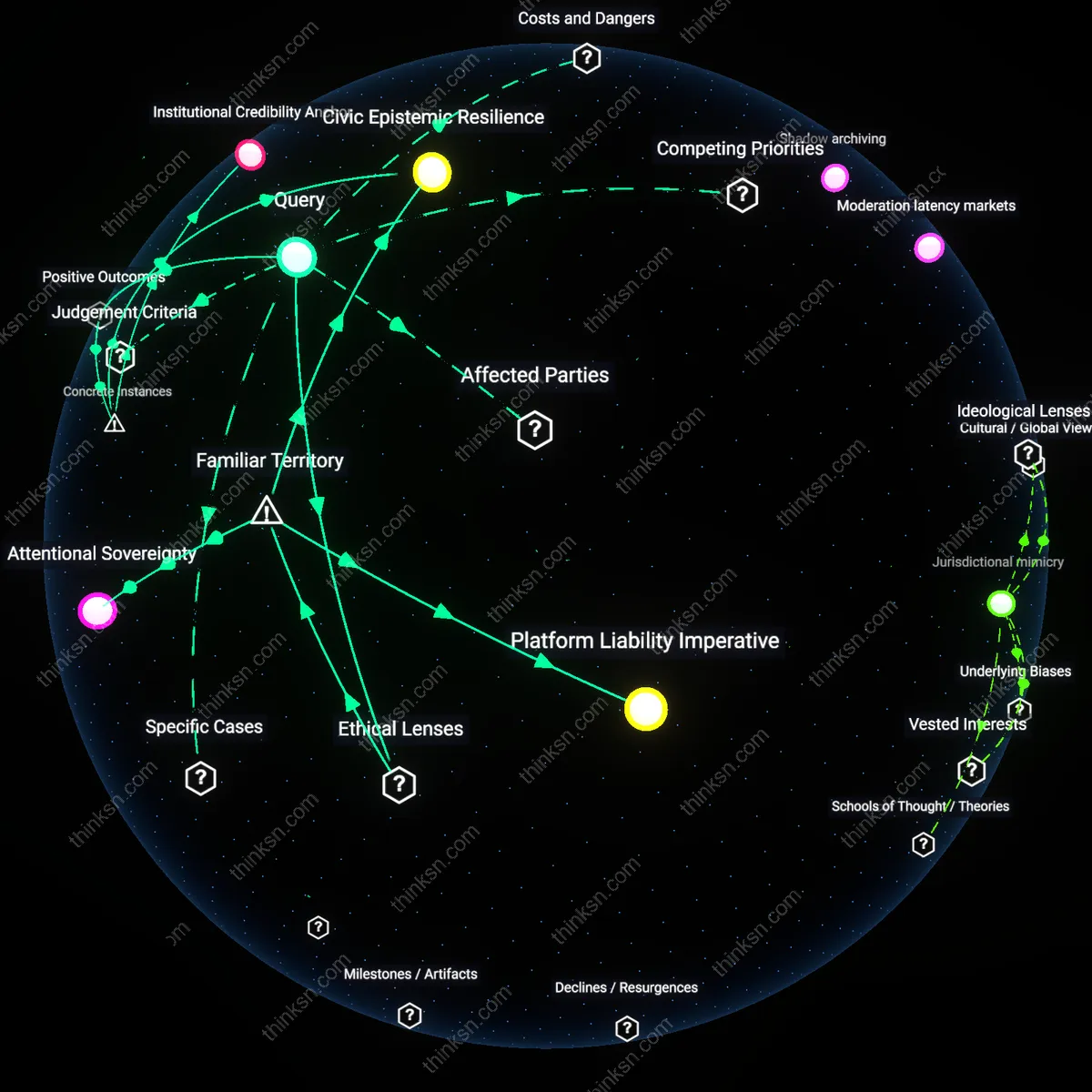

Algorithmic Obscurity

Shadow banning reduces the spread of misinformation by limiting the visibility of suspicious accounts, but its effectiveness depends on remaining undetectable to those targeted. When users cannot confirm suppression, they are less likely to adapt tactics or migrate to alternative platforms, preserving the intervention’s potency. This dynamic is sustained by platform-controlled algorithms whose criteria are shielded from public audit, enabling discretionary enforcement that avoids direct confrontation with free speech norms. The non-obvious consequence is that opacity itself becomes a functional requirement for efficacy, embedding a structural resistance to transparency within content moderation systems. What makes this connection hold is the interaction between adversarial user behavior and platform risk calculus, where disclosure would trigger evasion, thereby undermining the very mechanism designed to contain misinformation.

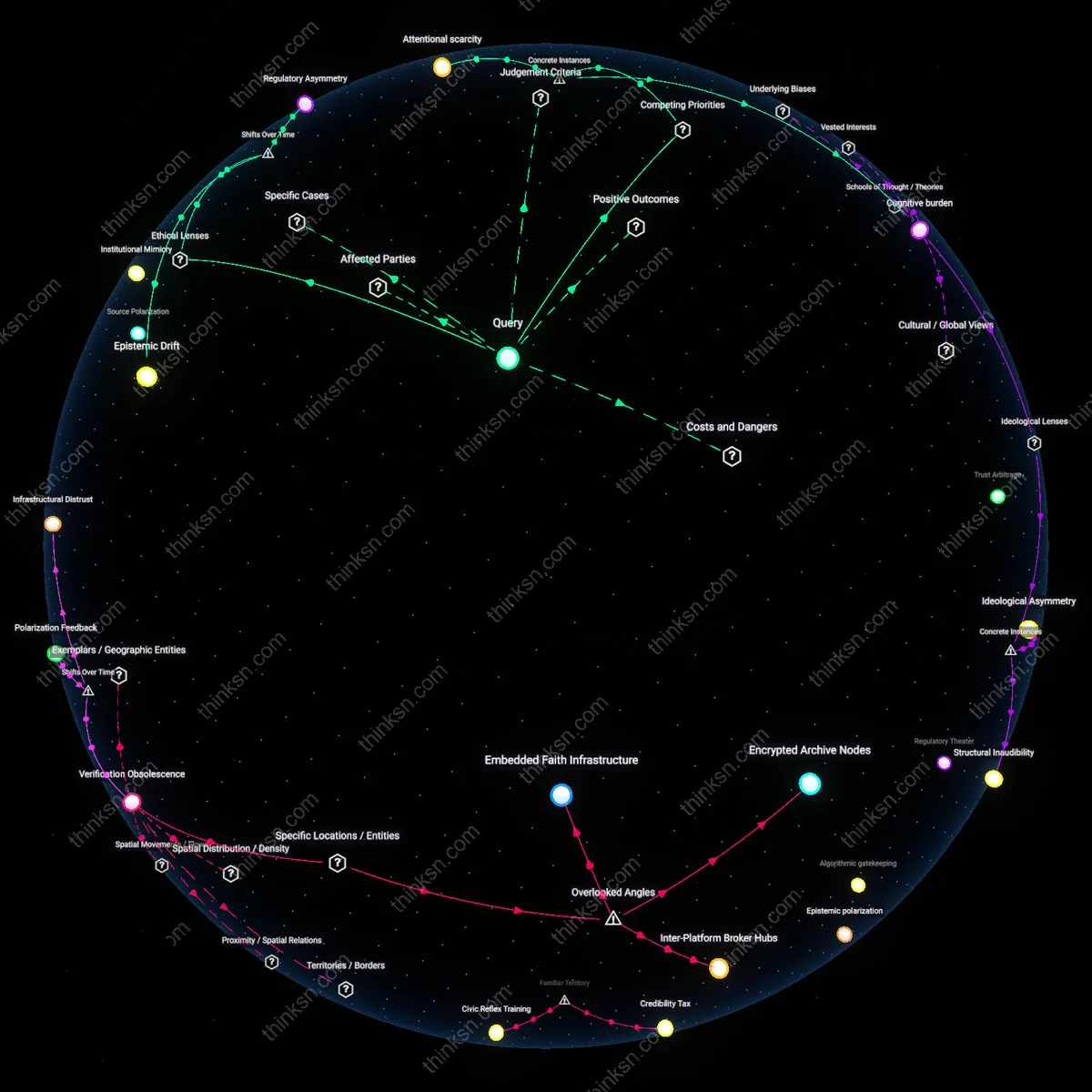

Legitimacy Arbitrage

Social media platforms deploy shadow banning as a compromise between regulatory pressure to remove harmful content and backlash against overt censorship, positioning themselves as both secure and permissive. By quietly demoting contested speech rather than deleting it, companies appease governments demanding action on disinformation while avoiding public accusations of political bias. This balancing act is made possible by the lack of standardized oversight across jurisdictions, allowing platforms to selectively apply invisible moderation in ways that align with local political climates. The underappreciated systemic effect is that platforms exploit jurisdictional fragmentation to outsource accountability decisions, turning inconsistent democratic norms into operational flexibility. The key actors are national regulators, platform policy teams, and algorithmic enforcement units operating in a patchwork governance environment.

Attentional Scarcity

Shadow banning functions effectively not because it erases misinformation but because it exploits the finite nature of user attention in algorithmically curated environments. Misinformation relies on virality, which in turn depends on platform-driven amplification; when the recommendation engine withholds its support, shadow-banned content fails to reach critical mass even though it remains technically accessible. This mechanism operates through the structural design of engagement-based ranking systems used by platforms like Facebook and X, where visibility is gatekept by predictive models optimizing for interaction. The non-obvious insight is that democratic accountability suffers not from deletion but from silent exclusion from public visibility, where speech is neither protected nor challenged but rendered irrelevant through engineered invisibility. The causal force lies in how attention economies prioritize algorithmic efficiency over deliberative inclusion.
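The distinction between content that is accessible and content that is amplified can be sketched in a few lines of Python. This is a toy model under stated assumptions, not a description of how Facebook or X rank content: the `Post` type, the `predicted_engagement` field, the `demoted` flag, and both functions are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Post:
    post_id: str
    predicted_engagement: float  # hypothetical output of a ranking model
    demoted: bool = False        # hypothetical shadow-ban flag


def recommend(posts: List[Post], k: int = 3) -> List[Post]:
    """Return the top-k posts by predicted engagement, skipping demoted ones."""
    eligible = [p for p in posts if not p.demoted]
    return sorted(eligible, key=lambda p: p.predicted_engagement, reverse=True)[:k]


def fetch_by_id(posts: List[Post], post_id: str) -> Optional[Post]:
    """Direct lookup ignores the demotion flag: the post still exists."""
    return next((p for p in posts if p.post_id == post_id), None)
```

In this model a demoted post never appears in recommendations, however high its predicted engagement, yet anyone holding its identifier can still retrieve it. The post is not deleted; it simply never competes for attention.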

Epistemic Sovereignty

Shadow banning curbs misinformation by exploiting algorithmic invisibility to reduce the reach of disinformation actors without triggering backlash. The mechanism is legitimized under utilitarian ethics, which prioritize collective epistemic welfare over individual expressive rights. It operates through platforms like Meta and X, which deploy non-transparent content throttling calibrated to user engagement patterns. The least contestable form of censorship, in other words, is the one its targets never know they are subject to, a dynamic that undermines liberal democratic norms of procedural accountability not through malice but by design. What is non-obvious is that the intervention most defensible under consequentialist frameworks becomes the most corrosive to democratic legitimacy precisely because it works silently, normalizing unchallengeable authority over public discourse.

Asymmetric Transparency

Far from threatening democratic accountability, the opacity of shadow banning is an essential safeguard against bad-faith actors who weaponize transparency to escalate harassment and coordinate platform manipulation. The justification draws on Rawlsian political liberalism, treating informational asymmetry as a necessary buffer that protects the integrity of digital public reason. This manifests in moderation systems at Reddit and TikTok, where adversarial communities actively probe for enforcement thresholds, making full disclosure a tactical liability. The non-obvious insight is that democratic accountability in contested epistemic environments requires functional redress rather than full visibility. Revealing the mechanics of shadow banning would not empower users but would instead optimize abuse vectors, which inverts the normative assumption that transparency is inherently democratizing.