Should Political Speech and Ads Have Different Rules on Social Media?

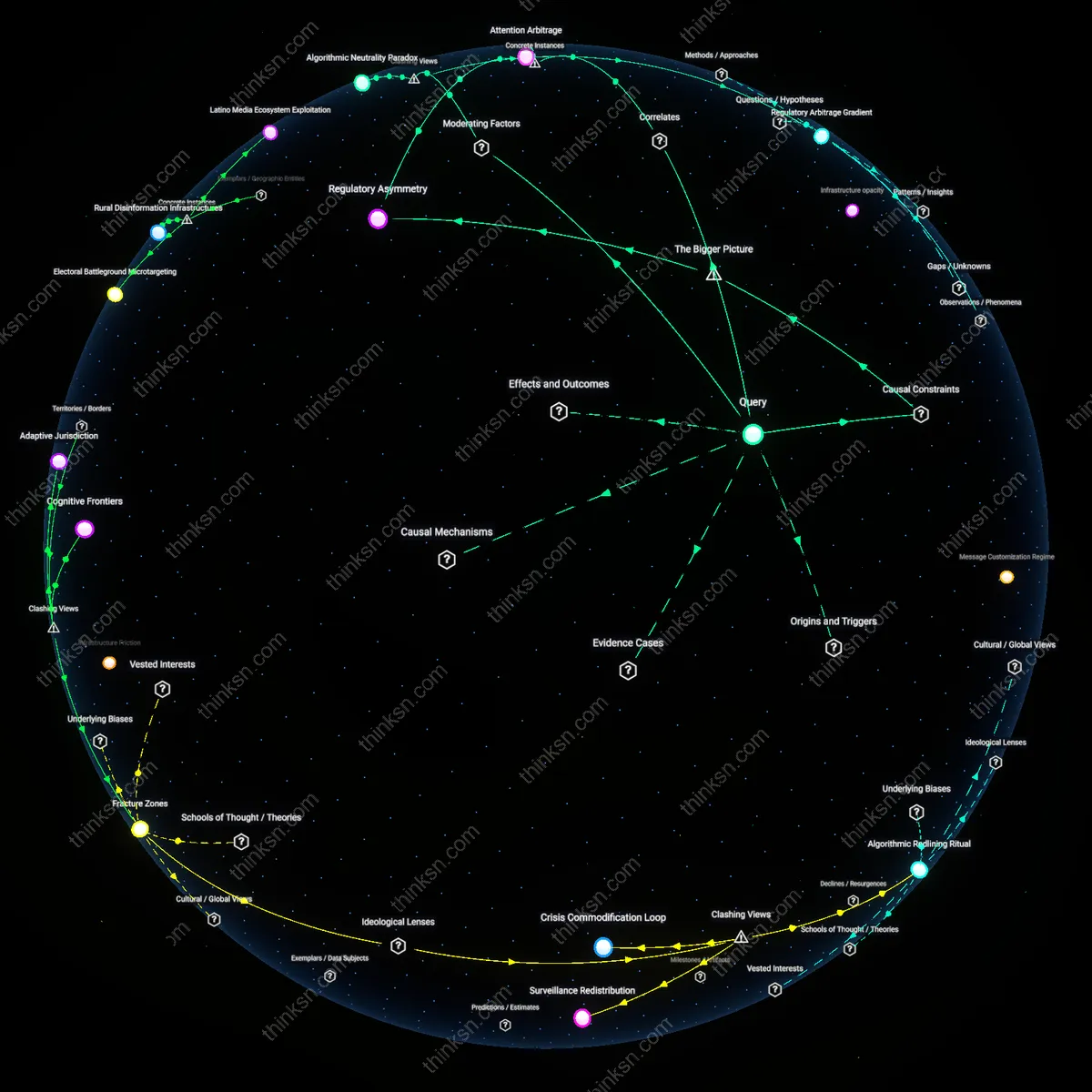

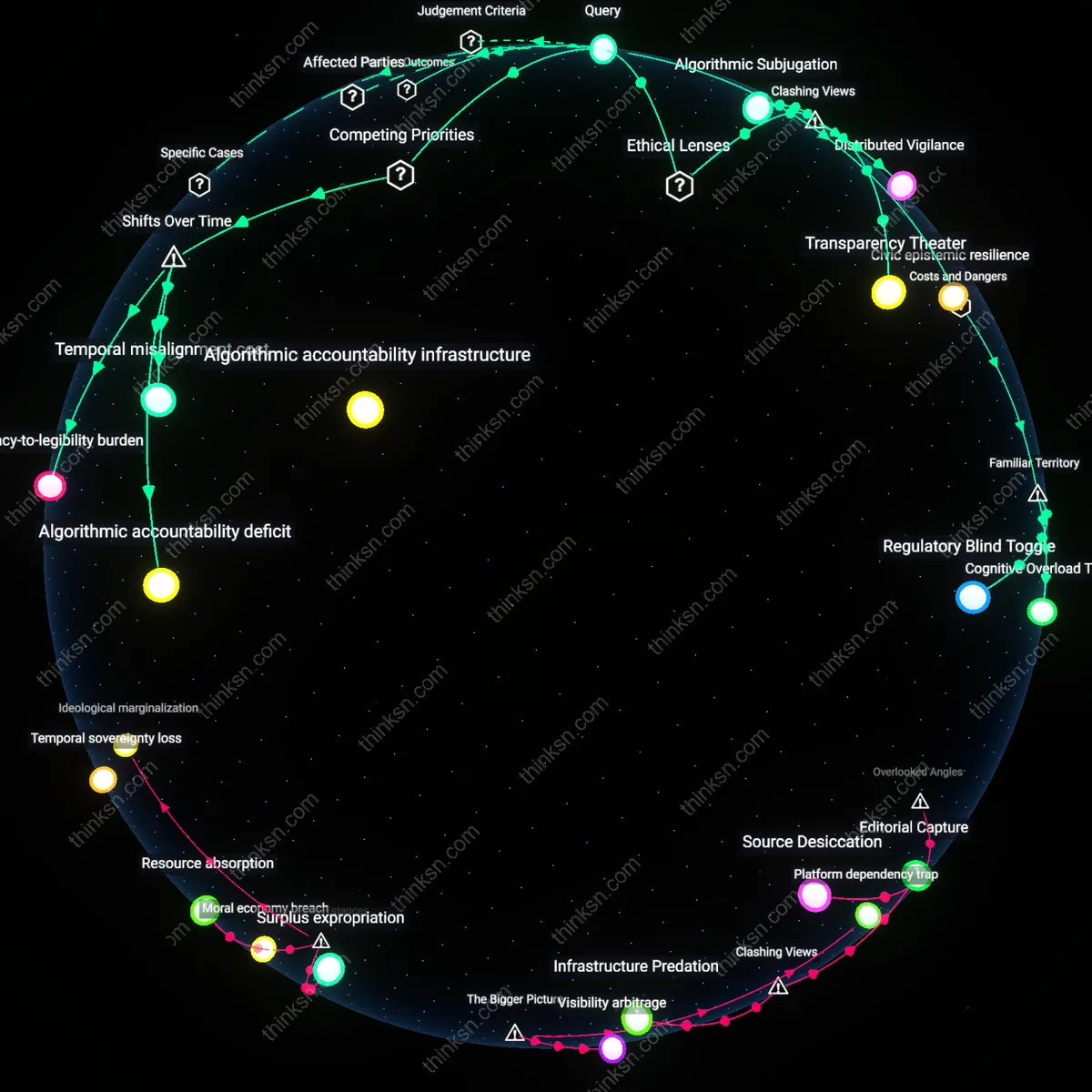

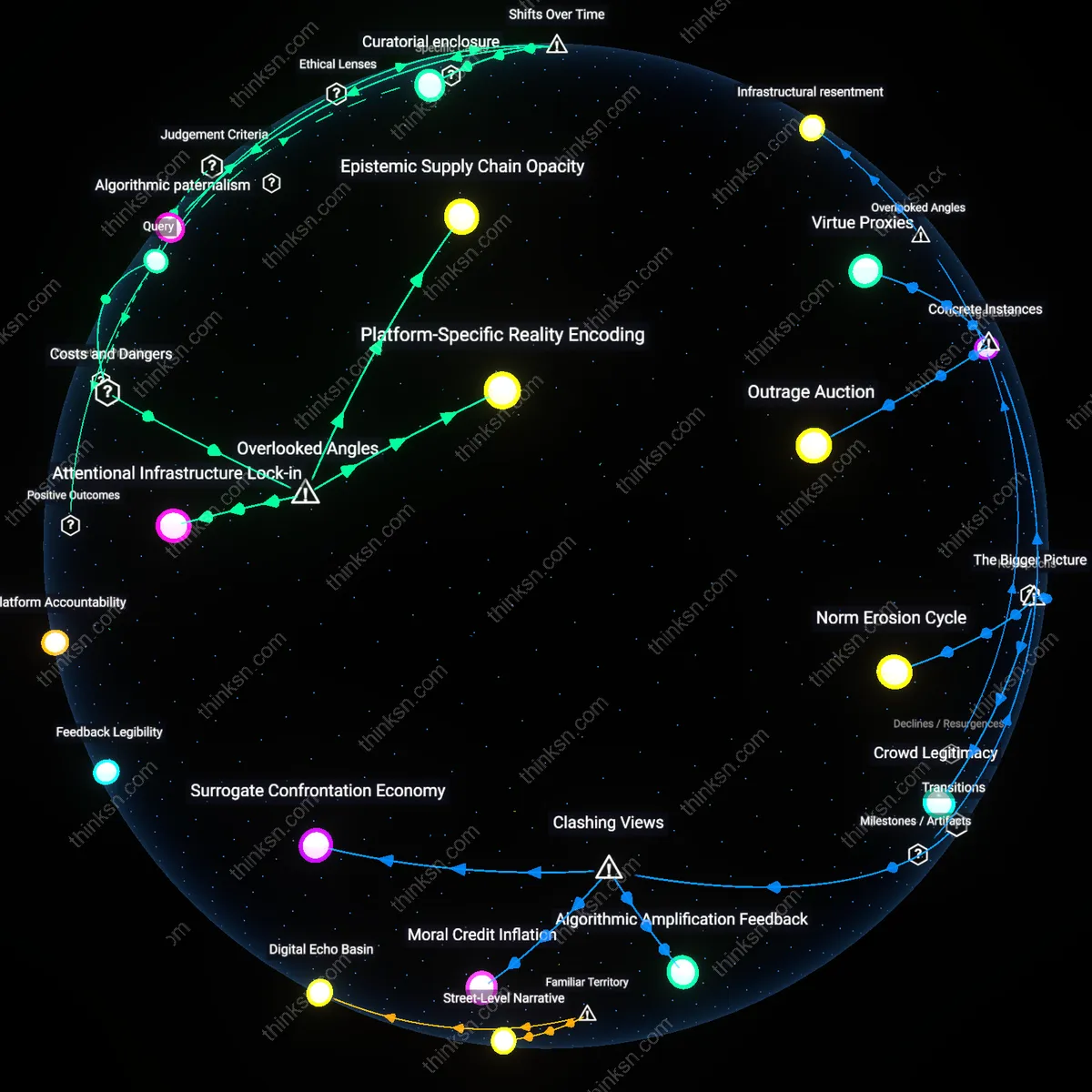

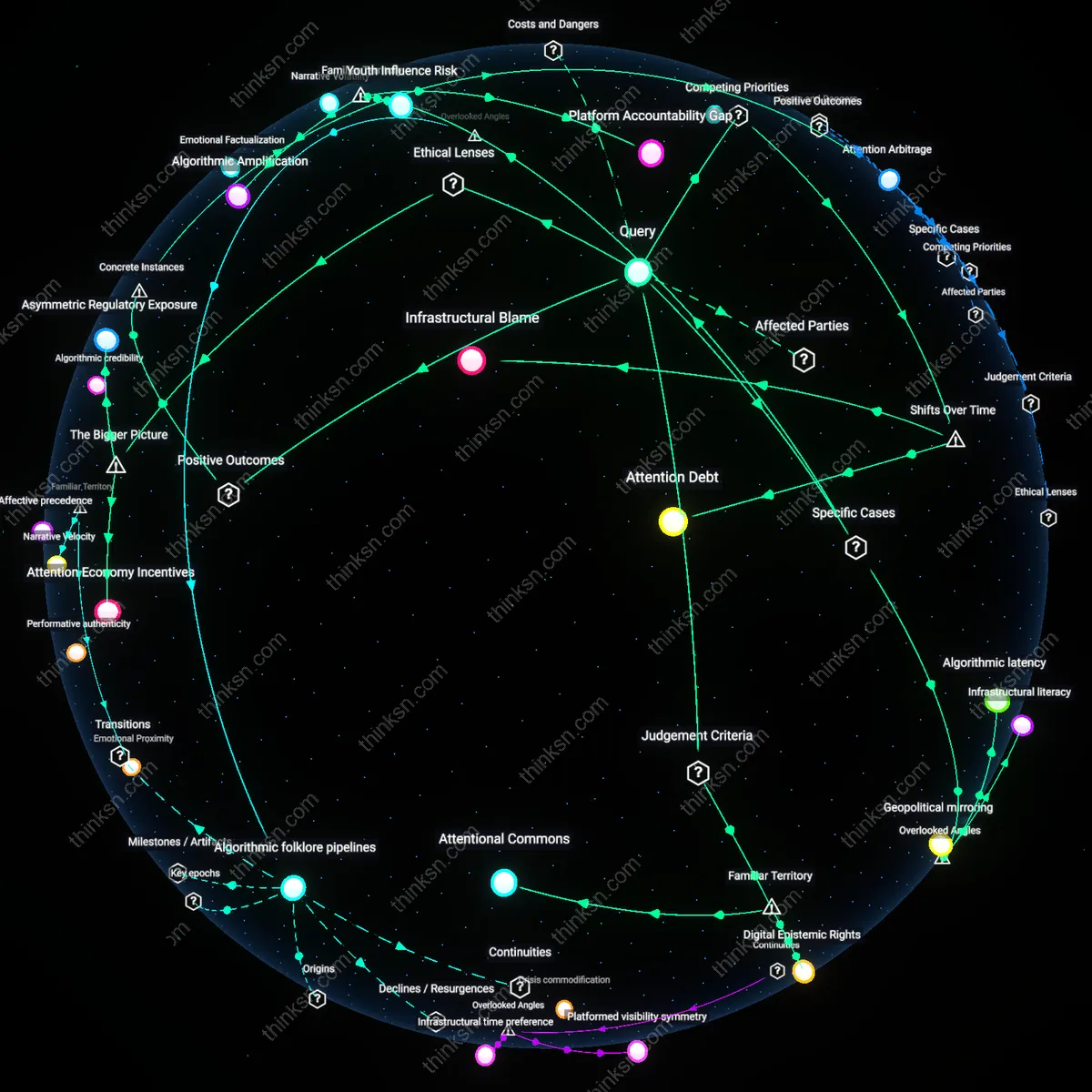

Analysis reveals 7 key thematic connections.

Key Findings

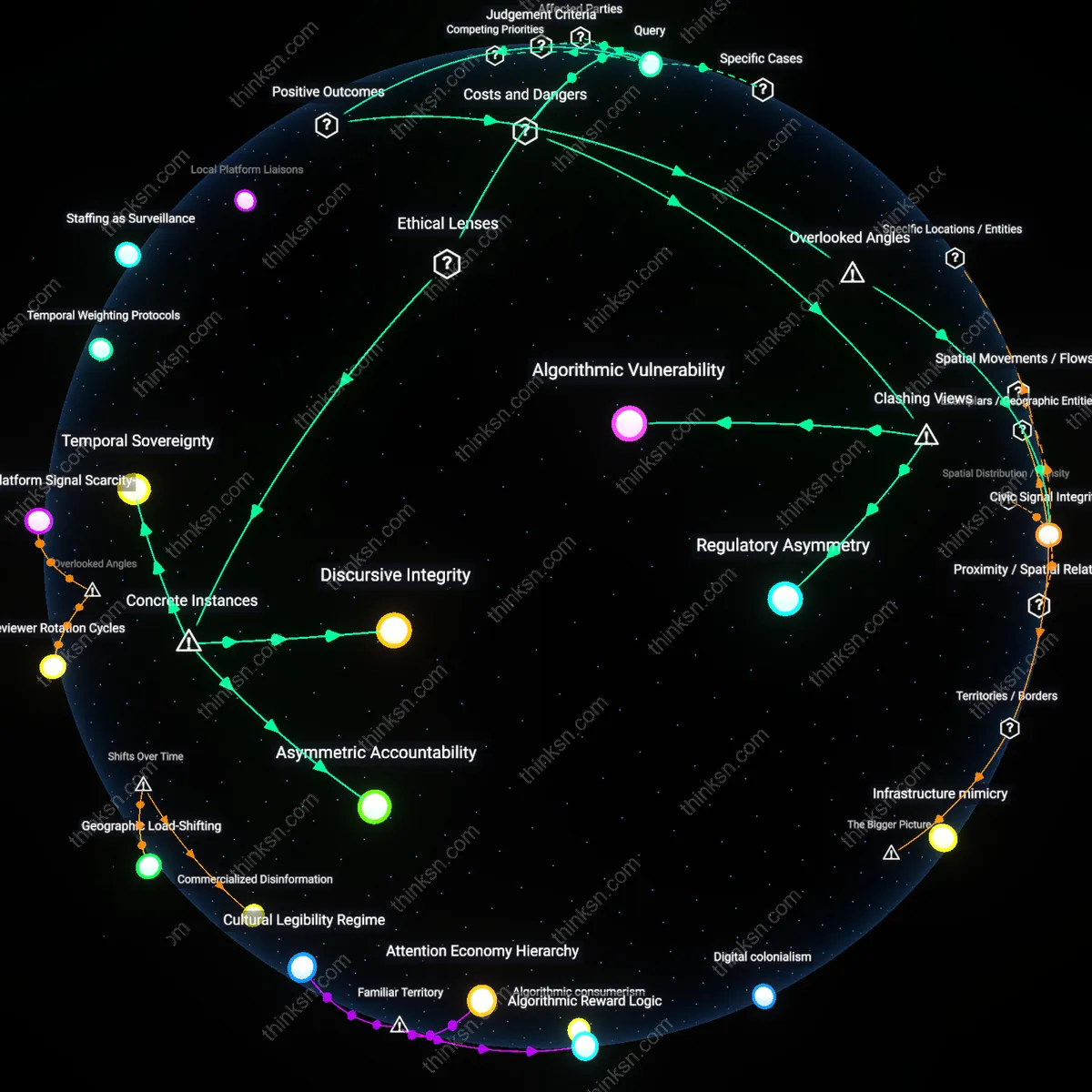

Civic Signal Integrity

Social media platforms should differentiate political speech from commercial advertising to preserve the integrity of civic signals in algorithmic environments. Political discourse relies on unmediated authenticity to function democratically, yet engagement-based algorithms optimized for commercial conversion disproportionately amplify emotionally charged or misleading political content when that content is treated like ads. The distortion of public perception comes not from volume but from signal-to-noise degradation in the civic information ecosystem. The overlooked dimension is how ad optimization logic, designed to drive purchases, inadvertently corrupts political deliberation by rewarding virality over veracity. This systemic interference is rarely acknowledged in content moderation debates, which focus on speaker rights rather than signal fidelity.

Algorithmic Vulnerability

Social media platforms should not treat political speech differently from commercial advertising, because doing so forces artificial distinctions that invite algorithmic manipulation. Content moderation systems operated by firms like Meta and X rely on scalable, automated enforcement that cannot reliably distinguish political messaging from stealth commercial influence operations, especially when state-linked actors co-opt ad infrastructure to spread disinformation under the guise of legitimate political expression. Because automated classifiers detect format rather than intent, a mandated distinction makes the system more fragile to exploitation through hybridized speech. The risk is most evident during electoral cycles in swing states like Wisconsin or Georgia, where microtargeted ads blur sponsorship and ideology. The non-obvious danger is not that platforms conflate speech types, but that mandated differentiation pressures them to develop classification rules that adversaries can reverse-engineer and exploit.

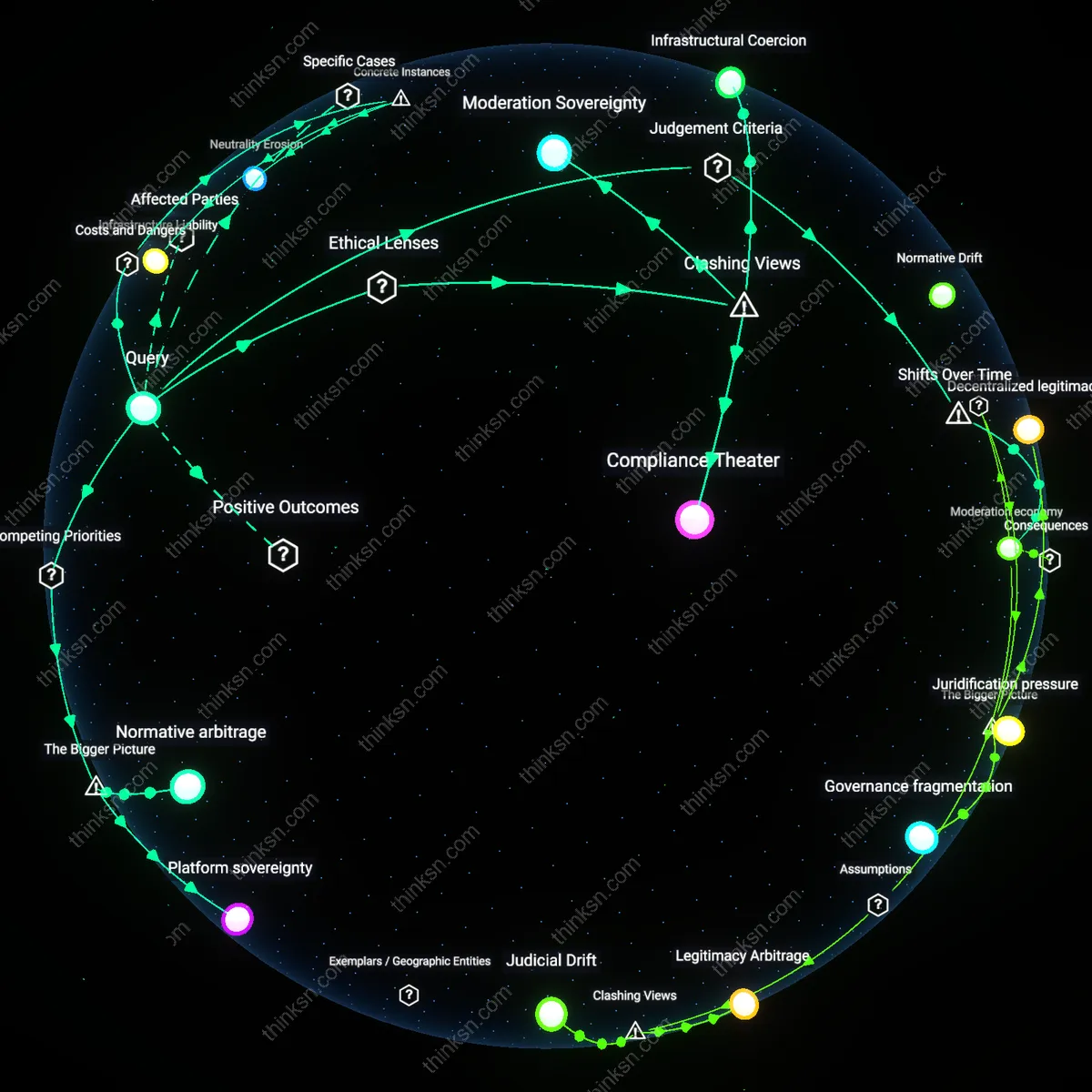

Regulatory Asymmetry

Requiring political speech to be governed differently than commercial advertising creates a legal and operational imbalance that advantages well-resourced political actors while disadvantaging grassroots movements. Federal rules such as the Bipartisan Campaign Reform Act impose disclosure requirements mainly on broadcast electioneering communications, while platforms such as YouTube or TikTok enforce their own political-content rules unevenly because verification of political entity status is inconsistent. Super PACs with legal counsel can navigate the exemptions, while community organizers face arbitrary takedowns. This divergent treatment doesn't correct market failures; it replicates them under a civic guise, incentivizing the formalization of political voice into monetizable, trackable formats indistinguishable from branded content. The underappreciated consequence is that regulatory intervention meant to protect democratic discourse instead entrenches institutional power by making political expression legible only when it conforms to commercial logics.

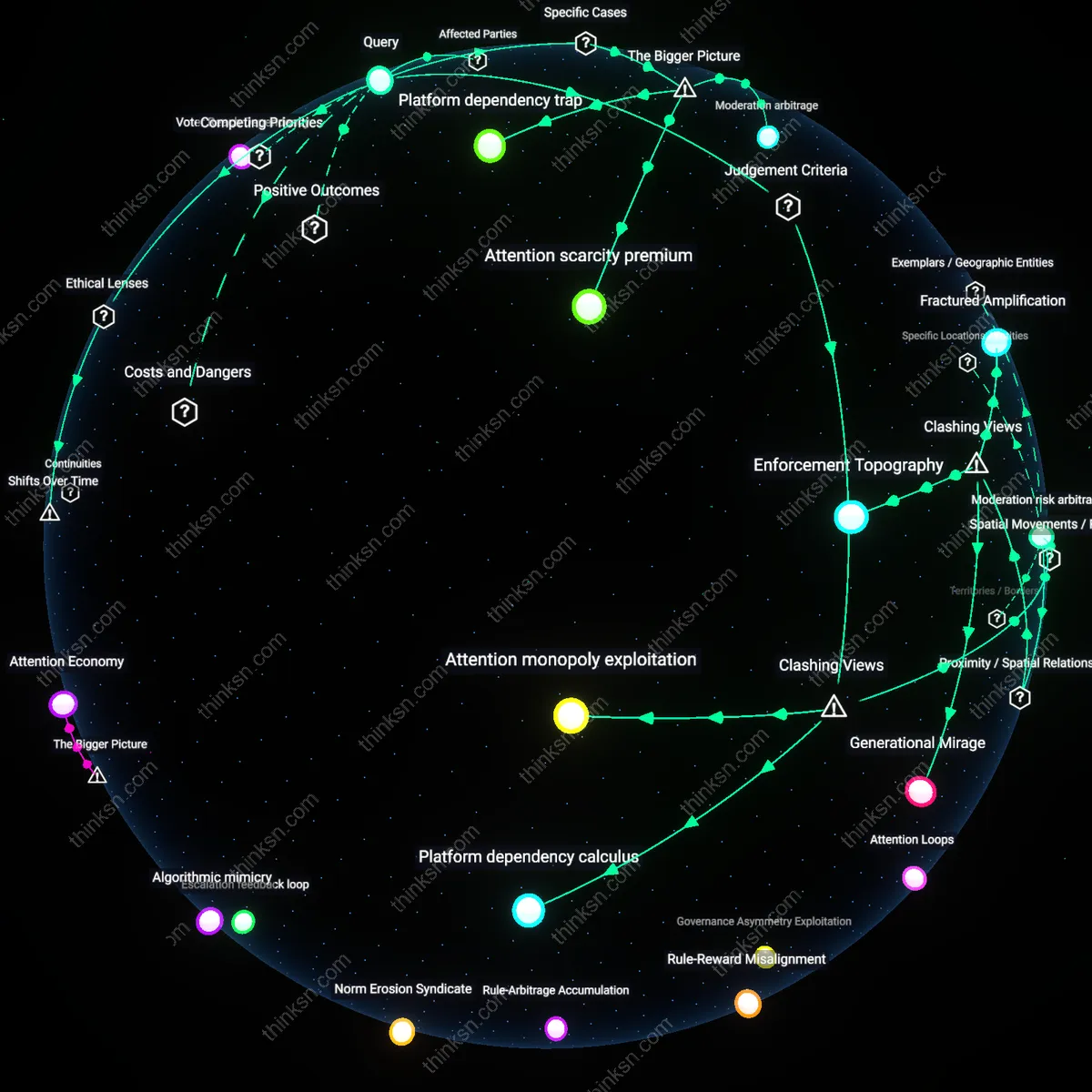

Attention Arbitrage

Treating political speech as categorically distinct from commercial content increases systemic risk by encouraging platforms to treat civic engagement as a premium attention commodity. Facebook's policy of exempting politicians' ads from third-party fact-checking, announced in 2019 and maintained into the 2020 election cycle, illustrates this: the company kept the exemption while continuing to sell political targeting tools to campaign consultants in battleground districts. The result is a perverse incentive in which political expression gains higher distribution priority not because of its public value but because its controversial nature generates more measurable user engagement, a dynamic that foreign and domestic actors alike exploit to drive algorithmic amplification at low cost. The overlooked hazard is not inconsistent moderation, but that formal differentiation licenses platforms to instrumentalize political speech as a privileged vector for attention extraction, effectively letting them monetize democracy without liability while offloading accountability to users.

Discursive Integrity

Social media platforms must differentiate political speech from commercial advertising because political discourse requires protection from manipulative behavioral design that undermines democratic deliberation. This happened during the 2016 U.S. presidential election, when microtargeted ads exploited cognitive biases to spread disinformation under the guise of political expression. The manipulation operated through Facebook’s opaque algorithmic amplification system, which treated politically charged content no differently than consumer-facing ads. The episode suggests that treating both forms of speech as equivalent erodes the epistemic conditions necessary for democratic legitimacy, a failure that utilitarian and deontological ethical frameworks alike condemn when public reasoning is distorted by profit-driven personalization engines.

Asymmetric Accountability

Political speech on social media should be governed by distinct rules because elected officials and state actors use platform infrastructure to perform governance functions. Then-President Donald Trump, for example, used Twitter to announce official decisions, and during the 2020 Black Lives Matter protests Twitter placed a public-interest notice on one of his tweets rather than removing it. In Knight First Amendment Institute v. Trump (2d Cir. 2019), a federal appeals court held that the interactive space of his account was a public forum, so blocking critics violated the First Amendment; that ruling bound the official rather than the platform, and it was later vacated as moot. Unlike commercial advertisers, such actors generate constitutionally significant speech acts that place public expectations on platforms. This exposes a legal asymmetry in which private content moderation systems must respond to state-like speech while lacking state-level accountability mechanisms, a tension that goes unrecognized when all speech is flattened into market equivalence.

Temporal Sovereignty

Platforms must treat political speech differently because electoral processes impose time-bound integrity demands that commercial cycles do not. In India’s 2019 general election, WhatsApp’s encrypted forwarding architecture enabled viral dissemination of party-aligned misinformation during the 48-hour election silence period, a window legally protected under the Representation of the People Act to ensure voters' reflective autonomy. Whereas commercial ads operate on perpetual engagement metrics, political speech is bound by democratic temporality: critical junctures where information integrity directly affects franchise legitimacy. Economic models of attention optimization are therefore ethically incommensurate with the sovereignty of electoral time.