Do Transparency Reports Mask Hidden Algorithmic Bias?

Analysis reveals 8 key thematic connections.

Key Findings

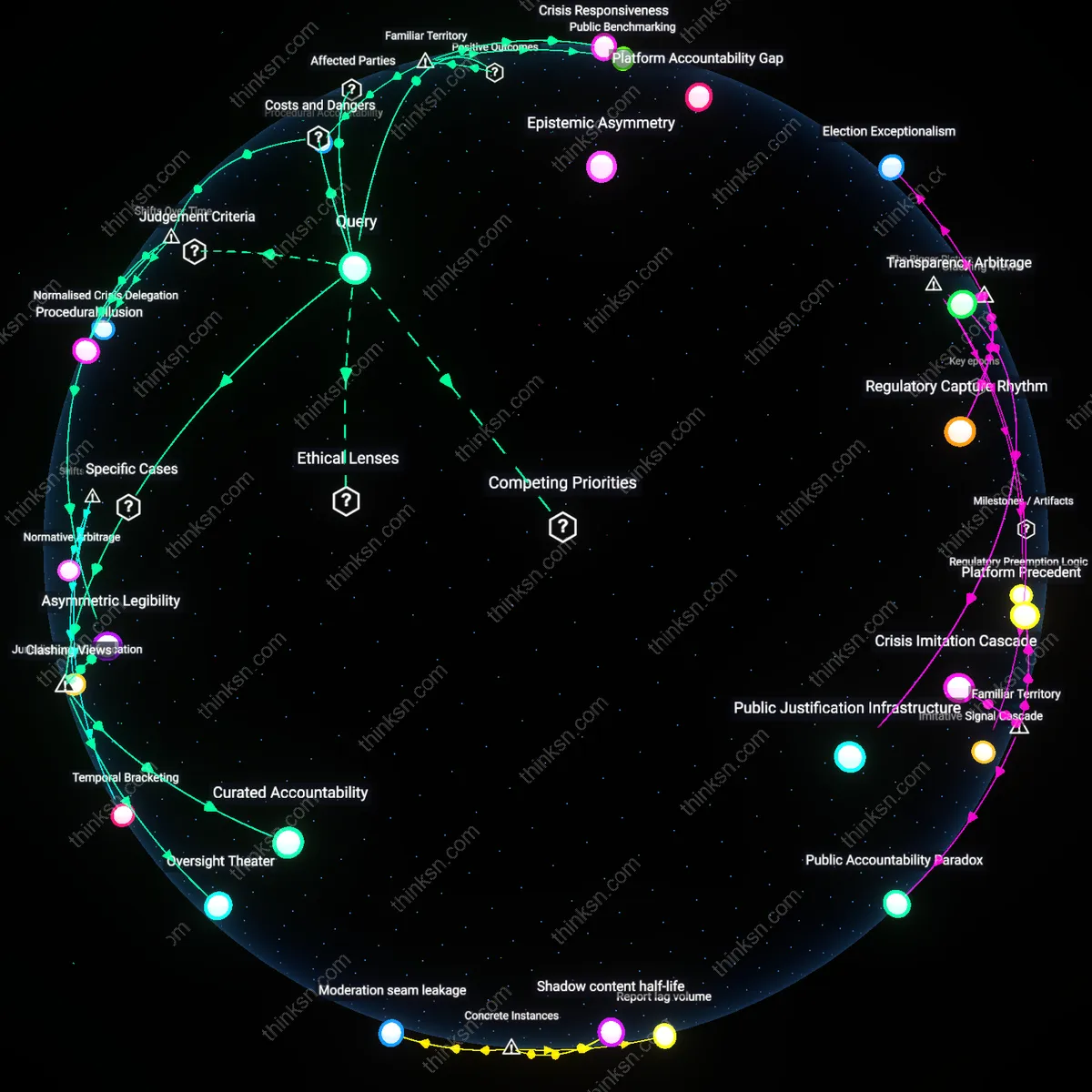

Perceptual Equilibrium

Transparency reports restore public trust by visibly standardizing content moderation metrics, thereby mitigating perceived platform bias. Governments, users, and civil society equate disclosure with accountability, assuming that auditable data on takedown rates or recommendation volumes ensures fair treatment across speech types. The mechanism operates through institutional signaling: platforms publish reports to meet democratic expectations of procedural justice, even when their underlying algorithms continue to amplify engagement-optimized content. What is underappreciated is that perceived legitimacy can move *inversely* to actual speech equity, because the ritual of reporting satisfies oversight demands without altering hidden amplification loops.

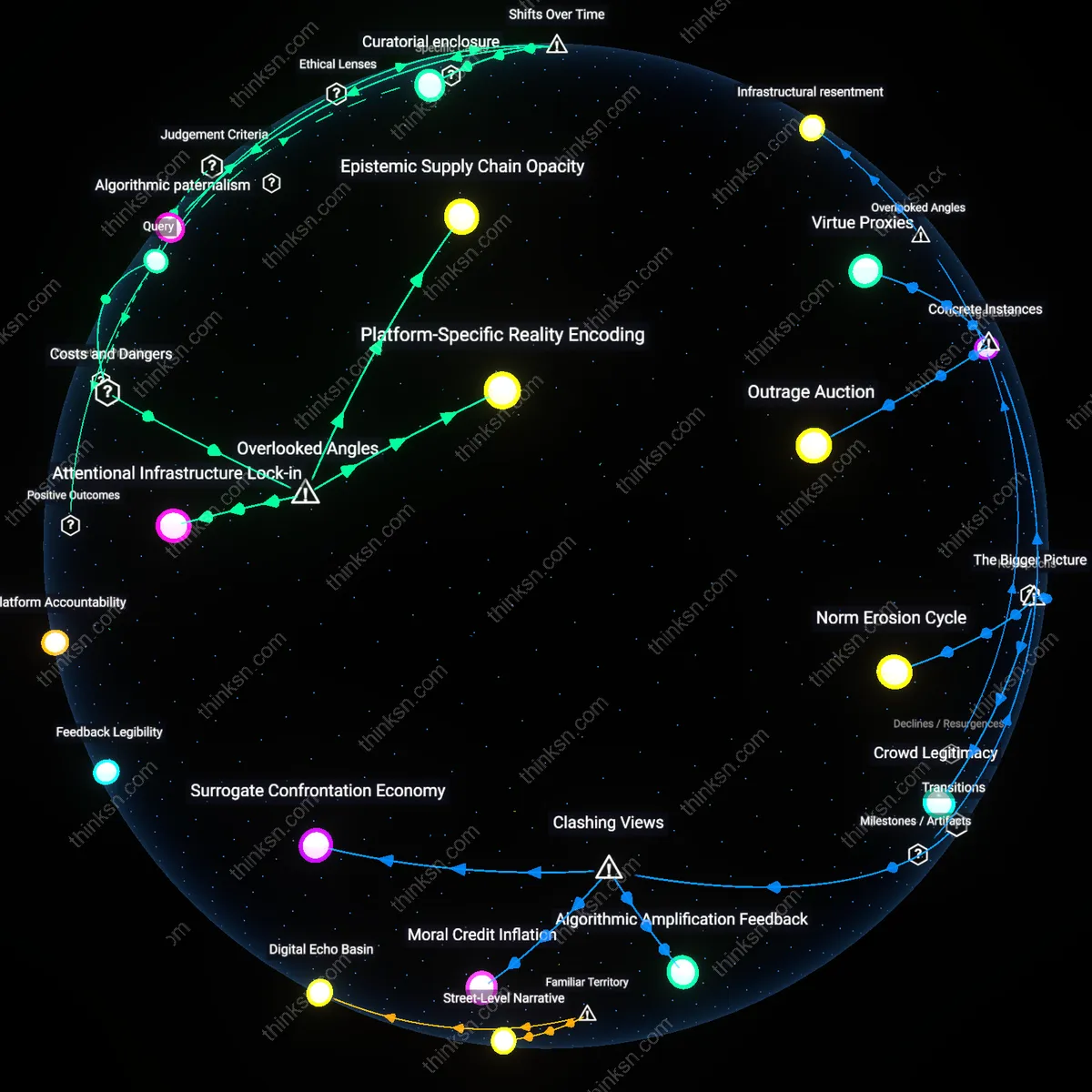

Amplification Asymmetry

Transparency reports systematically understate the disproportionate reach of low-volume extremist content because they aggregate amplification at the platform level rather than by ideological cluster or emotional valence. Moderators and algorithmic distributors treat 'equal' visibility metrics as neutral. Yet when far-right memes in German Telegram networks achieve 17x organic sharing velocity while representing only 3% of total content volume, uniformly reported distribution conceals how algorithms amplify specific affective registers (indignation, fear, betrayal) far beyond what their share of content volume would predict. This measurement gap goes unnoticed because audits typically compare headline moderation statistics across user groups rather than tracking how emotionally resonant content propagates. It lets platforms claim fairness while coordinated minorities capture outsized influence: the residual danger lies not in biased removal but in unrecognized emotional scalability hidden beneath equitable-looking volume distributions.
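
The aggregation problem can be made concrete with the paragraph's own figures. The sketch below is a back-of-the-envelope calculation, assuming that "17x sharing velocity" means 17 times the per-item sharing rate of all other content (normalized to 1.0) and that a cluster's total sharing is simply its volume times its velocity; the function names are hypothetical.

```python
def share_of_total_sharing(volume_share: float, velocity_ratio: float) -> float:
    """Fraction of all sharing produced by one content cluster.

    volume_share: the cluster's fraction of total content (0.03 for 3%).
    velocity_ratio: the cluster's per-item sharing velocity relative to all
        other content, whose velocity is assumed to be 1.0.
    """
    cluster = volume_share * velocity_ratio
    rest = (1.0 - volume_share) * 1.0
    return cluster / (cluster + rest)


def platform_mean_velocity(volume_share: float, velocity_ratio: float) -> float:
    """Volume-weighted mean sharing velocity: the single platform-level figure."""
    return volume_share * velocity_ratio + (1.0 - volume_share) * 1.0


share = share_of_total_sharing(0.03, 17.0)
mean = platform_mean_velocity(0.03, 17.0)
print(f"cluster share of all sharing: {share:.1%}")  # 34.5%
print(f"platform-level mean velocity: {mean:.2f}x")  # 1.48x
```

Under those assumptions, 3% of content would generate roughly a third of all sharing, while the platform-level mean velocity (about 1.48x the baseline) looks unremarkable. Only disaggregating by cluster exposes the skew.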

Feedback Obfuscation

Algorithmic amplification remains unchallenged because transparency reports omit the timing and directional flow of the human feedback loops that determine content resonance, particularly in high-velocity misinformation clusters. During Brazil’s 2022 election cycle, fact-checked posts were indeed restored at 'equitable' rates, but the delay between user appeals and reinstatement (averaging 54 hours for political content versus 3 hours for commercial fraud) meant that narrative dominance had already shifted through downstream resharing networks before moderation caught up. Standard audits ignore this temporal asymmetry in feedback integration, presuming that post-hoc correction offsets real-time distortion, when in fact the damage occurs in the lag interval, while engagement metrics are already locking content into resharing infrastructure. This temporal blind spot preserves systematic distortion despite surface-level reporting equity.
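
Why a 54-hour lag matters more than the eventual restoration rate can be sketched with a simple decay model. This assumes, purely for illustration, that resharing activity around a contested post decays exponentially with a hypothetical 6-hour half-life; neither the model nor the half-life comes from the Brazil data above, and `fraction_elapsed` is a hypothetical helper.

```python
def fraction_elapsed(lag_hours: float, half_life_hours: float) -> float:
    """Fraction of a post's total resharing that occurs within `lag_hours`.

    Assumes (hypothetically) that resharing per hour decays exponentially with
    the given half-life, so the cumulative fraction is 1 - 2 ** (-t / half_life).
    """
    if lag_hours < 0 or half_life_hours <= 0:
        raise ValueError("lag must be >= 0 and half-life must be > 0")
    return 1.0 - 2.0 ** (-lag_hours / half_life_hours)


HALF_LIFE_H = 6.0  # hypothetical half-life; not derived from any measured data

# Average appeal-to-reinstatement delays as stated in the text.
for label, lag in [("commercial fraud", 3.0), ("political content", 54.0)]:
    share = fraction_elapsed(lag, HALF_LIFE_H)
    print(f"{label}: {share:.1%} of the resharing window elapsed before reinstatement")
```

With a 6-hour half-life, about 29% of a comparable post's resharing window elapses within 3 hours, but more than 99.8% elapses within 54 hours, so a political post reinstated after the average delay returns to a conversation that has effectively ended. The result is sensitive to the assumed half-life; it illustrates the shape of the asymmetry, not its measured size.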

Policy-Performance Lag

YouTube’s 2019 transparency report claimed reduced recommendation of borderline content after policy changes. Yet internal studies confirmed that the platform’s algorithm continued surfacing conspiracy-adjacent videos through indirect semantic pathways, such as steering viewers from flat-earth claims to 'alternative science' channels. Because the system optimized for watch time rather than ideological neutrality, content rules could be circumvented without any change to the amplification logic. This persistence of indirect amplification emerged not from malicious intent but from the lag between policy enforcement and the structural re-engineering of recommendation objectives, which YouTube delayed to avoid losing user engagement. The underappreciated reality is that transparency reports often document inputs (e.g., removed videos) rather than system behavior (e.g., adaptive recommendation routing), masking how performance metrics undermine policy goals in real time.
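
The gap between documenting inputs and documenting system behavior can be illustrated with a toy recommender. This is a hypothetical sketch, not YouTube's system: the catalog, topic labels, watch-time predictions, and the "adjacent topics" set are all invented. Ranking uses predicted watch time alone, and the policy change is modeled as removing one blocked topic before ranking.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Video:
    title: str
    topic: str                  # hypothetical topic label
    predicted_watch_min: float  # the only ranking signal (hypothetical values)


CATALOG = [
    Video("Flat earth proof", "flat_earth", 14.0),
    Video("What scientists hide", "alternative_science", 13.5),
    Video("Moon landing questions", "alternative_science", 12.0),
    Video("Ancient tech mysteries", "alternative_science", 11.0),
    Video("How GPS works", "science", 8.0),
    Video("Cooking basics", "lifestyle", 6.0),
]

# The policy change, modeled as an input filter on a single topic.
BLOCKED_TOPICS = frozenset({"flat_earth"})
# Hypothetical set of topics counted as conspiracy-adjacent.
ADJACENT_TOPICS = frozenset({"flat_earth", "alternative_science"})


def recommend(catalog: list[Video], k: int,
              blocked: frozenset[str] = frozenset()) -> list[Video]:
    """Drop blocked topics, then rank purely by predicted watch time."""
    eligible = [v for v in catalog if v.topic not in blocked]
    return sorted(eligible, key=lambda v: v.predicted_watch_min, reverse=True)[:k]


def adjacent_share(recs: list[Video]) -> float:
    """Fraction of recommendations that fall in the adjacent-topic set."""
    return sum(v.topic in ADJACENT_TOPICS for v in recs) / len(recs)


before = recommend(CATALOG, k=3)
after = recommend(CATALOG, k=3, blocked=BLOCKED_TOPICS)
removed = [v.title for v in CATALOG if v.topic in BLOCKED_TOPICS]

print("removed (what an input-level report counts):", removed)
print(f"adjacent share of top 3 before policy: {adjacent_share(before):.0%}")
print(f"adjacent share of top 3 after policy:  {adjacent_share(after):.0%}")
```

In this toy setup, a report counting removals would show one video taken out of circulation, yet conspiracy-adjacent items still fill the top three recommendations, because the objective that selected them is untouched and the next-highest watch-time items are semantic neighbors of the blocked topic.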

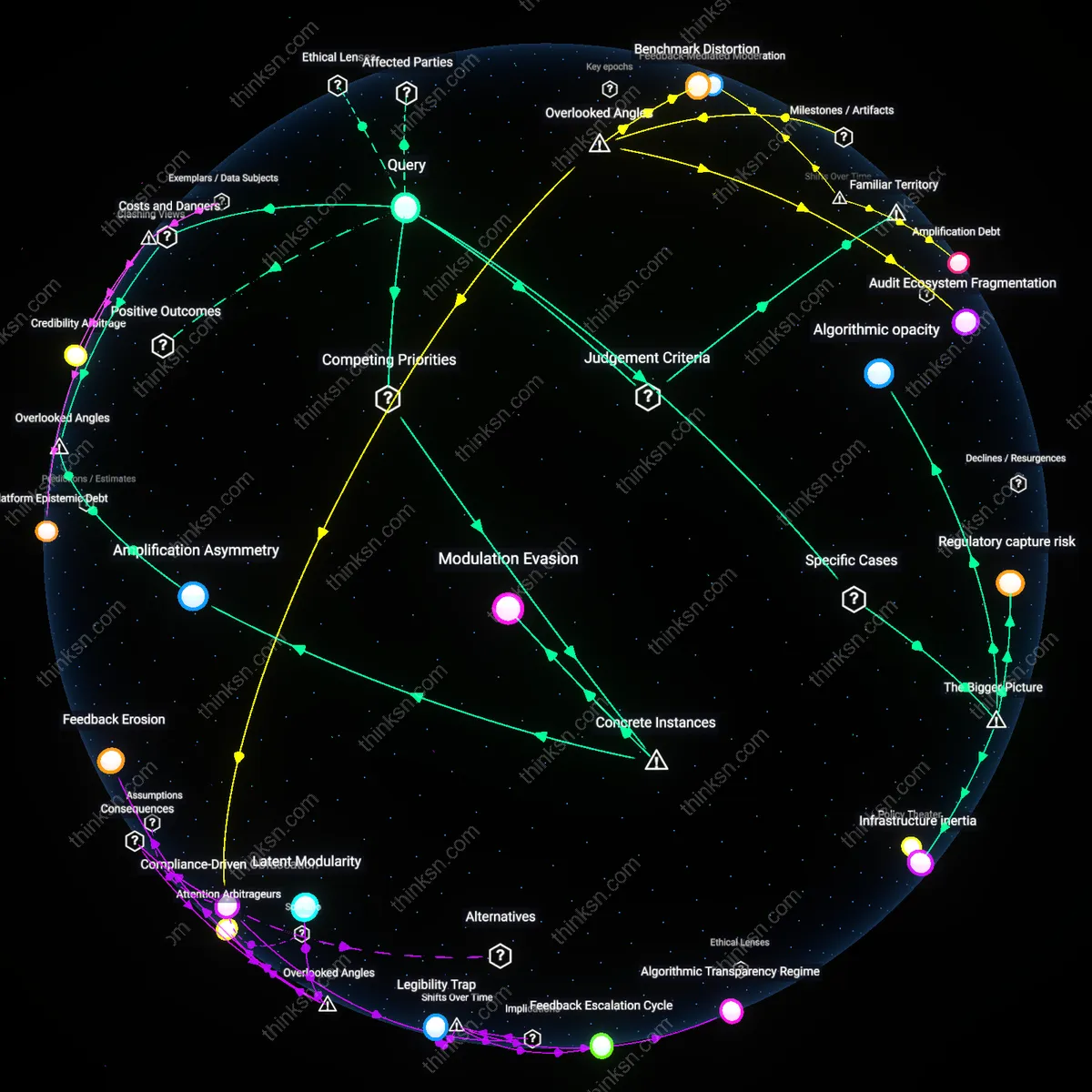

Modulation Evasion

Twitter’s 2022 transparency report detailed uniform suspension rates across political actors in India but failed to account for how its algorithm silently downranked tweets from opposition figures during the Delhi elections (for example, those affiliated with the Aam Aadmi Party) while giving government-affiliated accounts visibility boosts tied to 'trending topics' shaped by bot-inflated engagement. This hidden modulation operated through the opaque curation of trend algorithms that responded to coordinated amplification networks, which bypassed content policy by exploiting engagement loopholes rather than by violating speech rules. The key insight is that equitable speech distribution is compromised not by visible censorship but by algorithmic responsiveness to synthetic popularity, a mechanism transparency reports ignore because they measure only overt enforcement, not stealth ranking interventions.
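
The difference between measuring enforcement and measuring ranking outcomes can be shown with two hypothetical account groups that have identical suspension rates. The sketch assumes, as a simplification, that trend placement is allocated in proportion to raw engagement with no discount for inauthentic activity; the group names and all numbers are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Group:
    name: str
    accounts: int
    suspended: int
    organic_engagement: int  # genuine likes/reposts (hypothetical)
    bot_engagement: int      # coordinated inauthentic engagement (hypothetical)


def suspension_rate(g: Group) -> float:
    """The quantity an enforcement-only transparency report measures."""
    return g.suspended / g.accounts


def trend_shares(groups: list[Group]) -> dict[str, float]:
    """Share of trend placement if ranking responds to raw engagement,
    with no discount for inauthentic activity (a simplifying assumption)."""
    total = sum(g.organic_engagement + g.bot_engagement for g in groups)
    return {g.name: (g.organic_engagement + g.bot_engagement) / total for g in groups}


groups = [
    Group("group_a", accounts=500, suspended=10, organic_engagement=1_000, bot_engagement=0),
    Group("group_b", accounts=500, suspended=10, organic_engagement=1_000, bot_engagement=2_000),
]

shares = trend_shares(groups)
for g in groups:
    print(f"{g.name}: suspension rate {suspension_rate(g):.1%}, "
          f"trend share {shares[g.name]:.0%}")
```

Both groups show a 2.0% suspension rate, which is all an enforcement-only report would capture, while bot-inflated engagement hands group_b three quarters of trend placement despite equal organic support.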

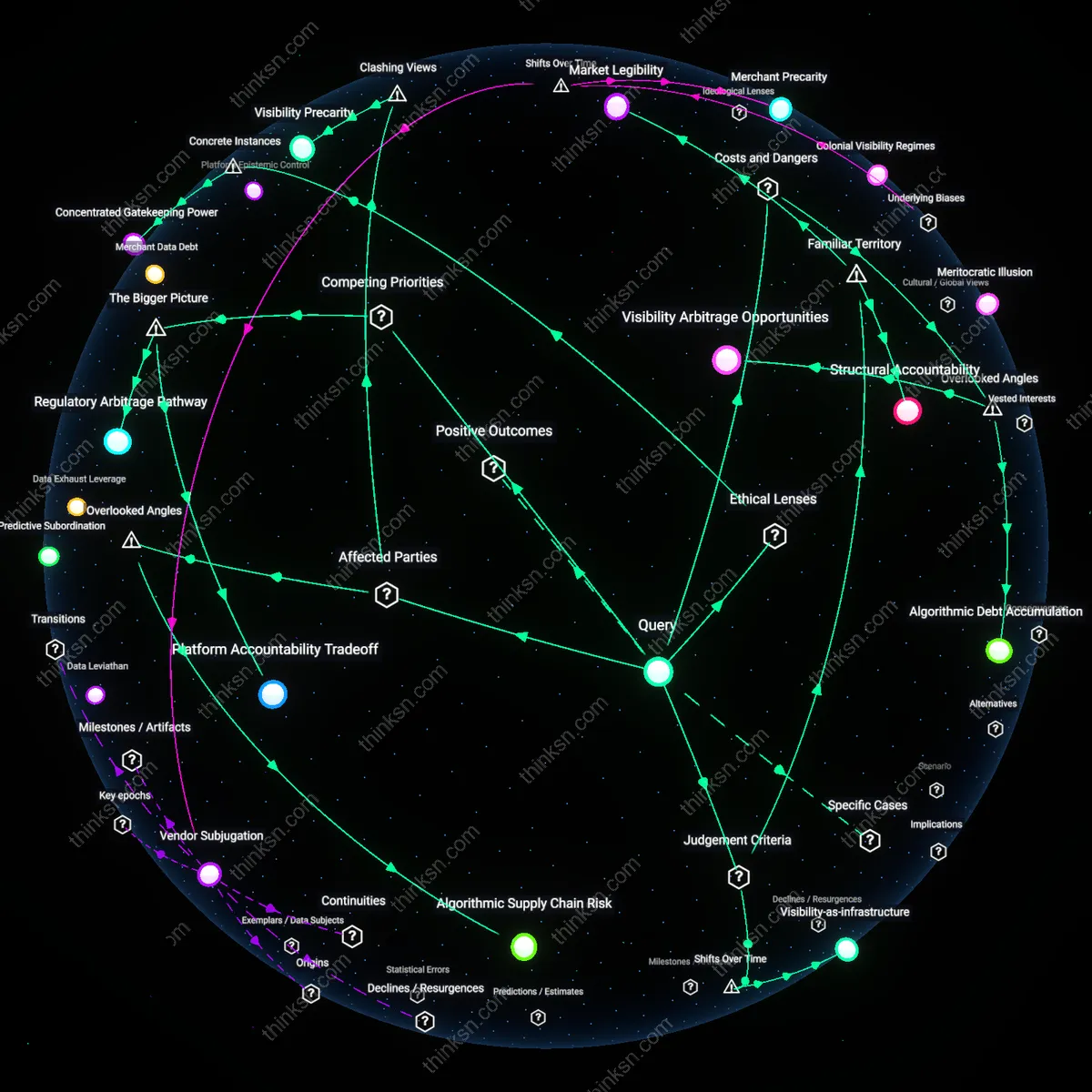

Algorithmic Opacity

Transparency reports fail to counter platform bias because they omit the specific parameters of the recommendation algorithms that amplify certain speech. Facebook’s reduced public data disclosures after 2020, for example, obscured how EdgeRank-like logic prioritizes emotionally charged content, enabling systemic skew in visibility despite surface-level openness. The gap persists because platforms exploit regulatory ambiguity around proprietary systems, exposing a critical flaw: disclosure becomes performative rather than corrective.
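
Facebook's early News Feed ranking, publicly described around 2010 as EdgeRank, scored a story by summing, over its interactions ("edges"), the product of viewer affinity, an edge-type weight, and a time decay. The sketch below implements that general form with invented parameters. The edge weights, the decay half-life, and the heavy weight on an "angry" reaction are hypothetical, chosen only to show how undisclosed weights decide which content wins; they are not Facebook's actual values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    kind: str         # edge type, e.g. "like" or "angry"
    affinity: float   # u_e: viewer's affinity with the interacting user, 0..1
    age_hours: float  # age of the interaction, used for time decay


# w_e: hypothetical edge-type weights, invented for illustration only.
EDGE_WEIGHTS = {"like": 1.0, "comment": 4.0, "angry": 5.0, "share": 6.0}
# Hypothetical half-life (hours) for the time-decay term d_e.
DECAY_HALF_LIFE_H = 12.0


def edgerank_style_score(interactions: list[Interaction]) -> float:
    """Sum over edges of affinity * edge weight * time decay."""
    score = 0.0
    for e in interactions:
        decay = 0.5 ** (e.age_hours / DECAY_HALF_LIFE_H)
        score += e.affinity * EDGE_WEIGHTS[e.kind] * decay
    return score


calm_post = [Interaction("like", 0.5, 1.0) for _ in range(20)]
charged_post = [Interaction("angry", 0.5, 1.0) for _ in range(5)]

ranked = sorted(
    {"calm_post": calm_post, "charged_post": charged_post}.items(),
    key=lambda item: edgerank_style_score(item[1]),
    reverse=True,
)
for name, edges in ranked:
    print(f"{name}: {len(edges)} interactions, score {edgerank_style_score(edges):.2f}")
```

Here five "angry" reactions outrank twenty likes (a score ratio of 1.25) purely because of the weight table. A report that discloses interaction volumes but not weights leaves that choice invisible.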

Regulatory Capture Risk

Twitter's selective amplification of mainstream political voices during the 2020 U.S. election, documented in internal audits later cited in Congressional hearings, shows how transparency reports can mask inequitable distributions when regulatory pressure shapes what gets reported, privileging visible metrics over structural accountability. The platform’s compliance with democratic norms inadvertently entrenches a narrow definition of equity, revealing how external governance demands can become enabling conditions for hidden bias.

Infrastructure Inertia

YouTube’s persistent over-amplification of right-leaning political content, even after the platform released detailed transparency data post-2019, illustrates how legacy recommendation infrastructures, trained on years of engagement-driven user behavior, continue to distribute speech unevenly despite policy shifts. Algorithmic retraining is constrained by the platform’s core engagement economics, and this technical lock-in shows that transparency does not reset systemic bias when the underlying architectures remain intact.