Do Self-regulation Pledges by Social Networks Truly Restrict Platform Power?

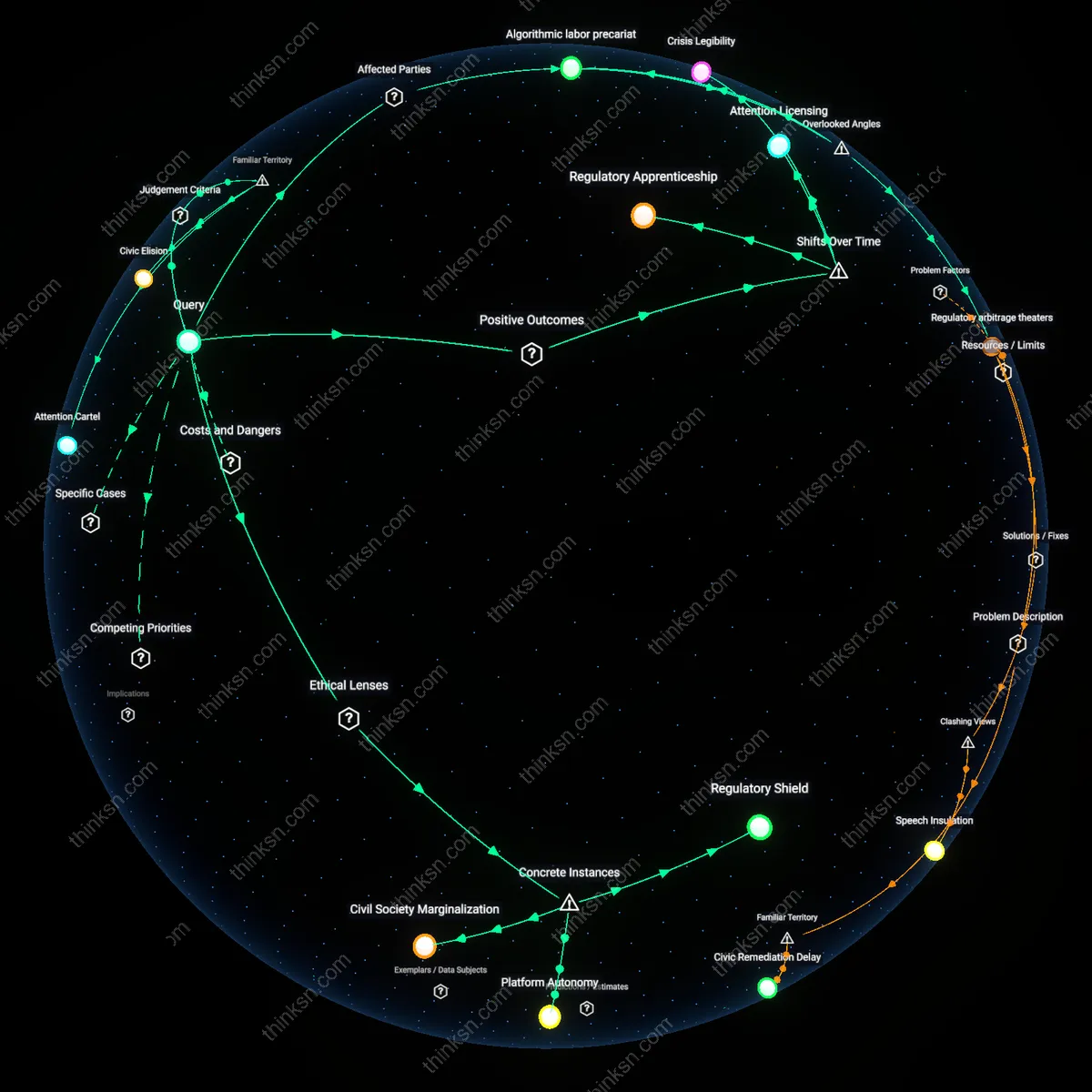

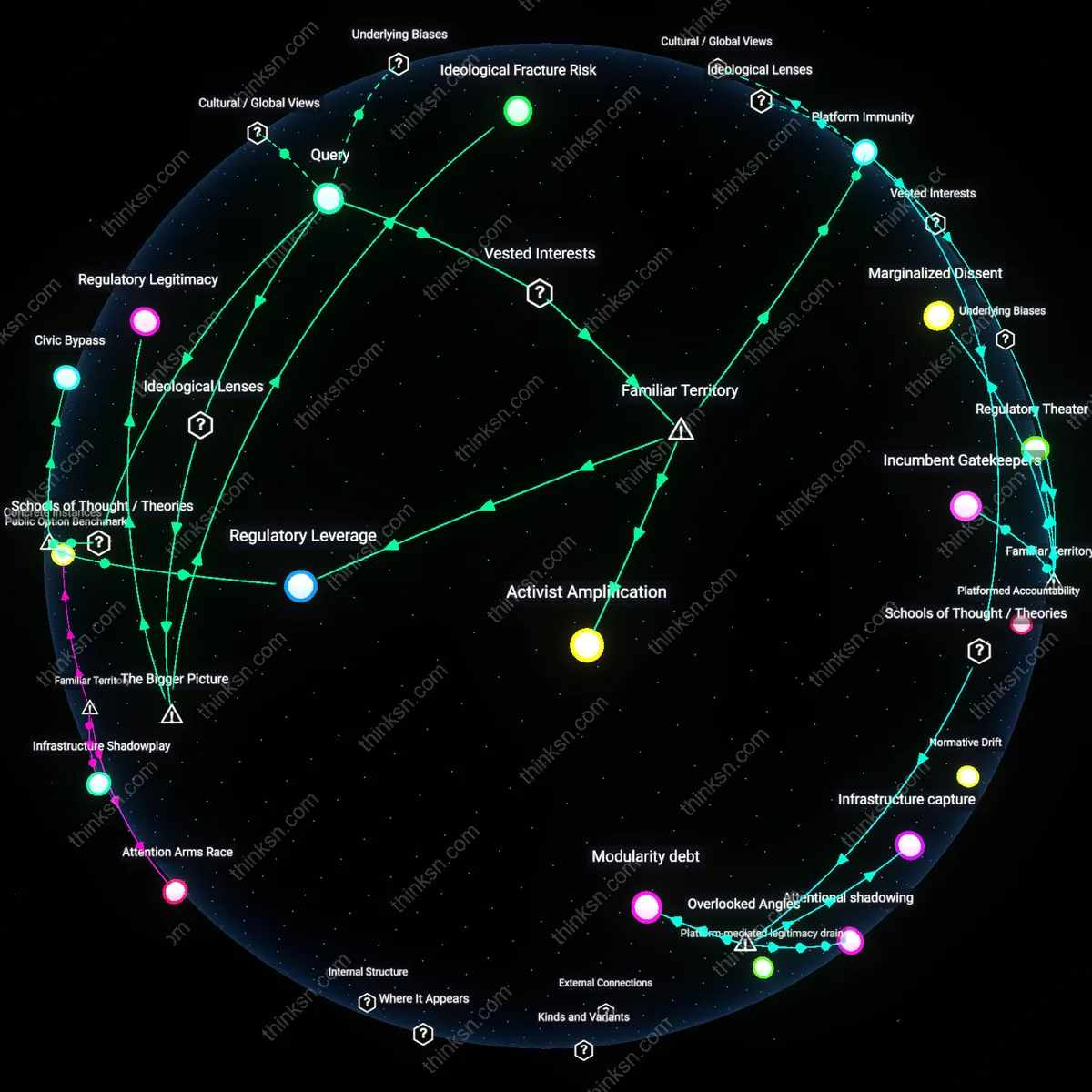

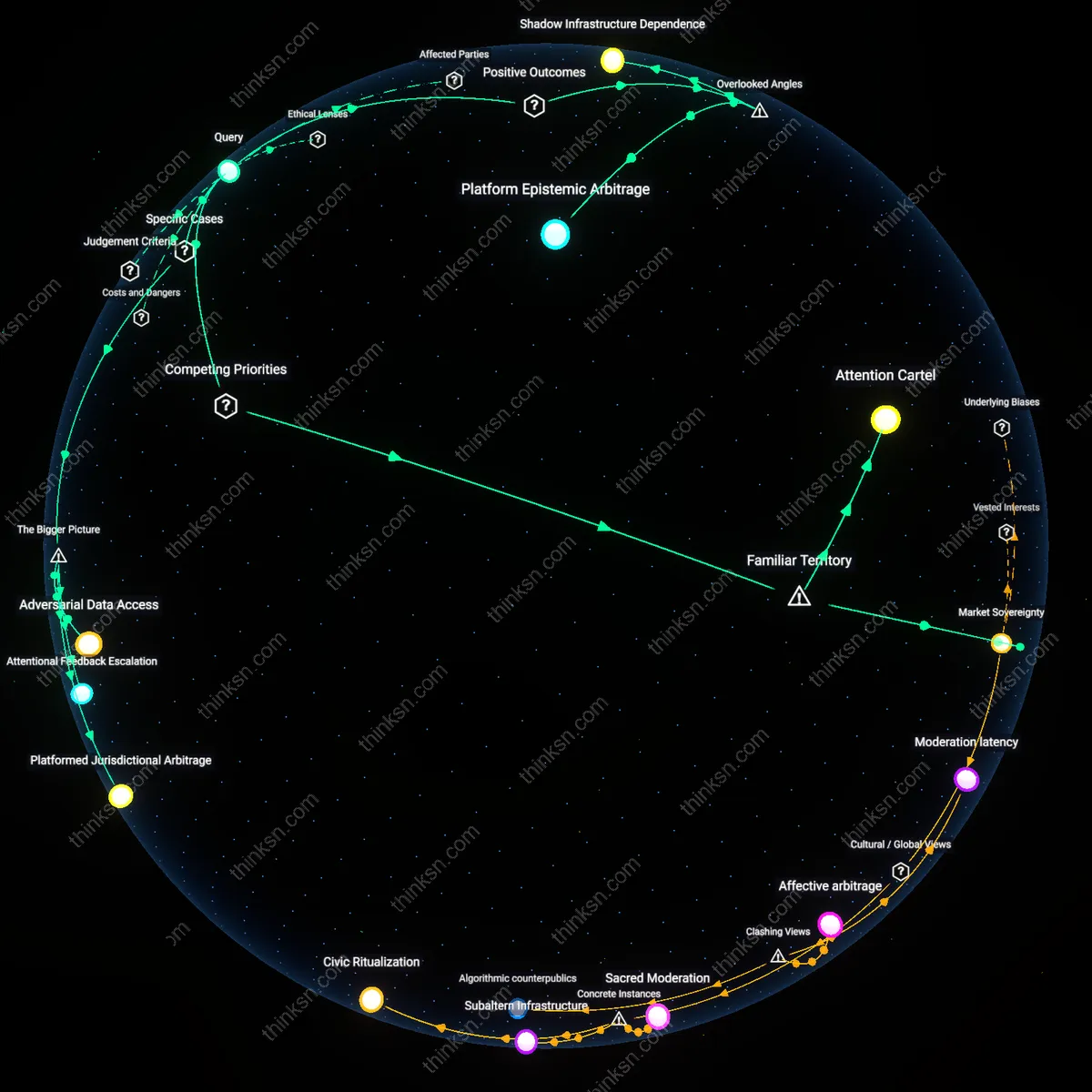

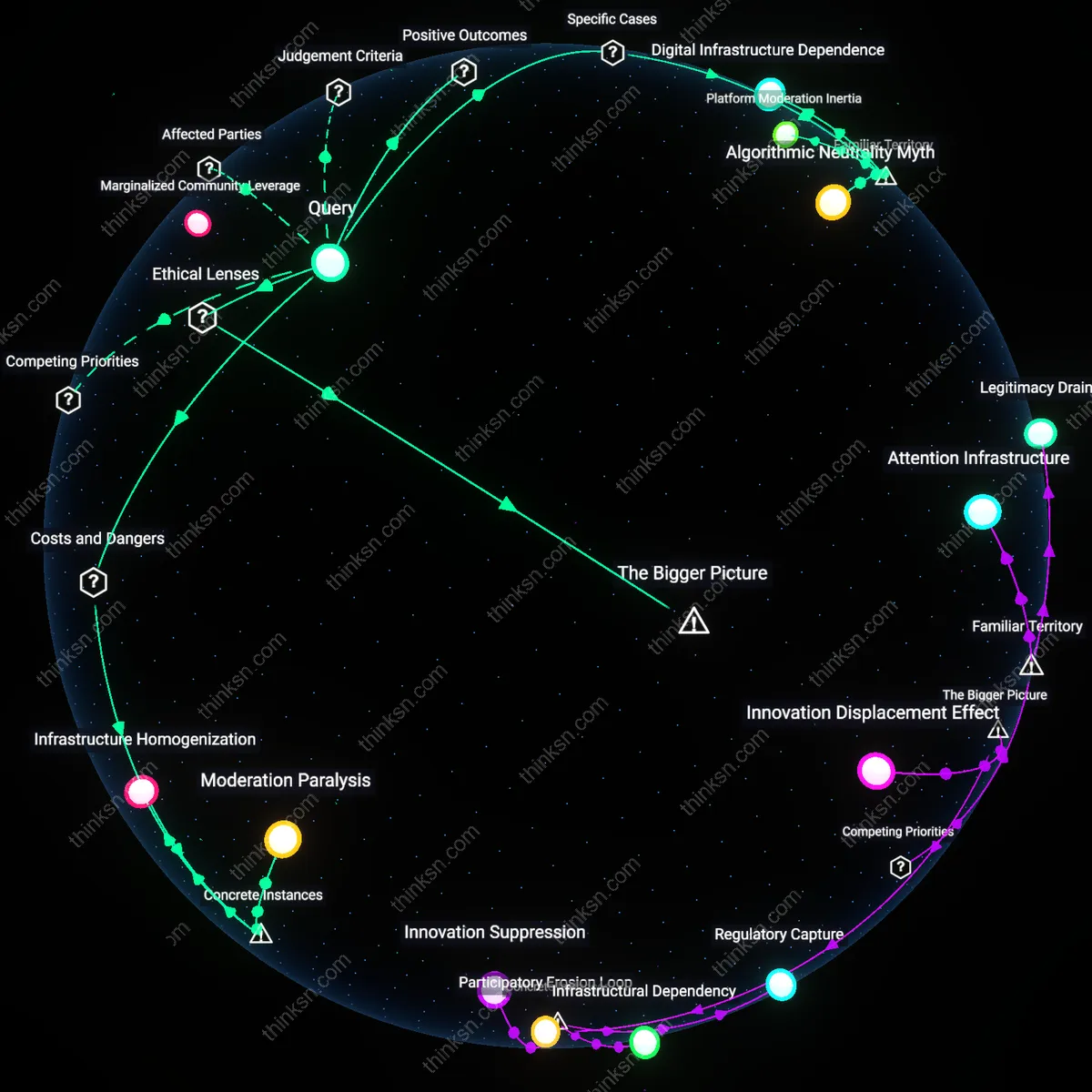

Analysis reveals 11 key thematic connections.

Key Findings

Algorithmic Labor Precariat

Platform moderation subcontractors in low-wage regions absorb the psychological toll of content adjudication, enabling tech firms to externalize moral injury while maintaining plausible deniability about enforcement outcomes. These outsourced workers—often employed through third-party firms in countries like the Philippines or Kenya—routinely review graphic, traumatic, or politically sensitive material without adequate mental health support, creating a hidden human infrastructure that privatizes the emotional costs of self-regulation pledges. This matters because it reveals that purported commitments to ethical speech governance function less as constraints on corporate power and more as risk-transfer mechanisms onto a globally distributed underclass whose labor sustains the facade of neutrality. The overlooked angle is not corporate overreach or user vulnerability, but the deliberate offshoring of moral decision-making to disposable workforces, which insulates platforms from accountability while deepening global digital labor hierarchies.

Regulatory Arbitrage Theaters

National governments with weak media oversight exploit platform self-regulation pledges to justify abdication of sovereign responsibility, treating corporate codes of conduct as functional substitutes for legislative frameworks. In countries like Brazil or India, regulators point to Meta’s or Google’s community standards as evidence of 'sufficient' content governance, thereby avoiding the political cost of passing controversial speech laws while extracting concessions through threat of intervention. This dynamic matters because it reframes voluntary pledges not as limitations on platform power, but as diplomatic instruments enabling states to outsource norm enforcement—especially during elections or civil unrest—while retaining plausible deniability. The underappreciated reality is that self-regulation functions less as corporate restraint than as a mutual delusion between platforms and regimes, where both benefit from ambiguous, unenforceable commitments that avoid binding obligations.

Corporate Sovereignty

Major social networks benefit most from their self-regulation pledges because these commitments function as strategic concessions that preempt stricter government oversight, allowing platforms like Meta and Twitter to maintain legislative breathing room while shaping the terms of acceptable speech within broadly defined community guidelines. This mechanism operates through repetitive cycles of public crisis followed by voluntary reform announcements, which satisfy immediate regulatory pressure without requiring structural accountability, thereby preserving the platforms’ unilateral power over content moderation at scale. The non-obvious insight here, against the familiar narrative of user protection and democratic integrity, is that self-regulation functions not as a check on control but as a legitimizing performance that enhances corporate jurisdiction over public discourse.

Attention Cartel

Advertisers and their associated data brokers benefit most from self-regulation pledges because these frameworks sustain a stable, predictable environment for targeted messaging by minimizing platform volatility and regulatory disruption, ensuring continuous access to user attention streams. This system functions through the mutual dependence of platforms and ad-tech firms on behavioral data flows: moderation pledges reduce the risk of sudden policy shifts that could fragment audience segmentation or trigger mass user migration, and so reinforce a de facto cartel in attention extraction. Despite common associations of self-regulation with user safety or civic health, the underappreciated reality is that the primary beneficiaries of this stability are not the public but the commercial ecosystem built around scalable influence operations.

Civic Elision

Elected officials and national governments benefit from social media self-regulation pledges because these commitments allow states to outsource politically sensitive content moderation decisions to private firms, thereby avoiding direct responsibility for censorship accusations while still achieving policy goals related to disinformation or extremism. This dynamic operates through deliberate jurisdictional ambiguity—where platforms become de facto speech regulators without democratic mandate, shielding governments from backlash over free expression concerns. The familiar discourse around platform power obscures the complicity of state actors who exploit self-regulation as a mechanism of plausible deniability, making civic accountability vanish into a gap no institution claims.

Regulatory Apprenticeship

Self-regulation pledges made by major social networks after 2016 created a proving ground in which state actors could assess corporate capacity to govern speech. Platforms demonstrated harm-reduction protocols in exchange for delayed legislative intervention. The underappreciated result was a transition from an adversarial posture to conditional co-regulation, which replaced immediate statutory oversight with monitored autonomy during a critical window of democratic vulnerability.

Crisis Legibility

After the 2020 U.S. election, self-regulation pledges ceased to function as voluntary assurances and became audit trails. They made previously opaque content moderation decisions legible to courts, journalists, and oversight bodies, turning ephemeral corporate promises into evidentiary records. Those records retrospectively enabled accountability at a moment when public trust in platform neutrality had collapsed.

Attention Licensing

After 2018, social networks moved from treating algorithmic amplification as the default to pledging restraint, particularly around viral misinformation. In doing so they inadvertently established a new economy in which user attention became conditionally licensed rather than freely harvested. This marked a departure from the engagement-maximization paradigm, yet it also revealed how temporary, self-imposed limits could function as reversible concessions during periods of regulatory threat.

Regulatory Shield

Governments benefit from social networks' self-regulation pledges because these commitments allow states to outsource content moderation while avoiding accountability for censorship. In 2016 the European Commission agreed the Code of Conduct on Countering Illegal Hate Speech Online with Facebook, Microsoft, Twitter, and YouTube, which enabled EU member states to pressure platforms to remove content without passing controversial legislation. This dynamic reveals how voluntary pledges function as a regulatory shield: they insulate democratic governments from the kind of free-expression scrutiny that the First Amendment imposes in the United States, while expanding de facto control over speech through private intermediaries.

Platform Autonomy

The platforms themselves benefit from self-regulation pledges because these agreements grant them discretion in enforcement while preempting stricter legal mandates. Twitter's adherence to the Christchurch Call after the 2019 mosque shootings is an example: the company publicly committed to faster removal of violent extremist content but retained ultimate authority over what constituted enforceable policy. This instance exposes platform autonomy as the core outcome, with public pledges serving as performative compliance that cedes no algorithmic or editorial sovereignty.

Civil Society Marginalization

Marginalized communities are systematically disadvantaged by self-regulation pledges. In the 2020 #StopHateForProfit campaign, civil rights groups including the NAACP and Color of Change exposed Facebook's failure to enforce its own hate speech policies against content targeting Black and Indigenous communities, revealing that voluntary frameworks prioritize brand safety over racial justice. This illustrates civil society marginalization: voluntary ethical commitments fail to redistribute power because enforcement remains unilaterally controlled by corporate actors indifferent to redistributive equity.