Public Funding vs Algorithms: Tackling Misinformation Effectively?

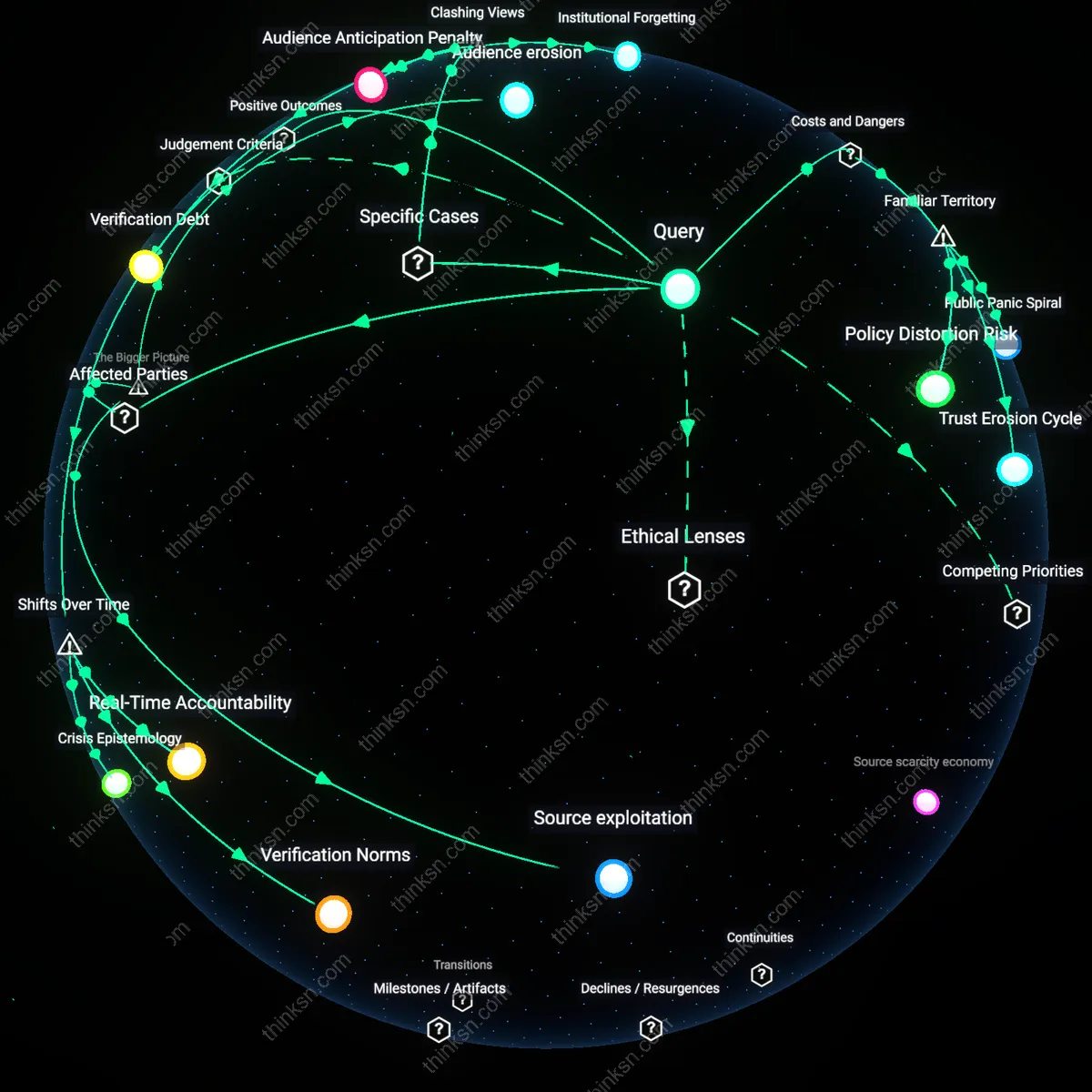

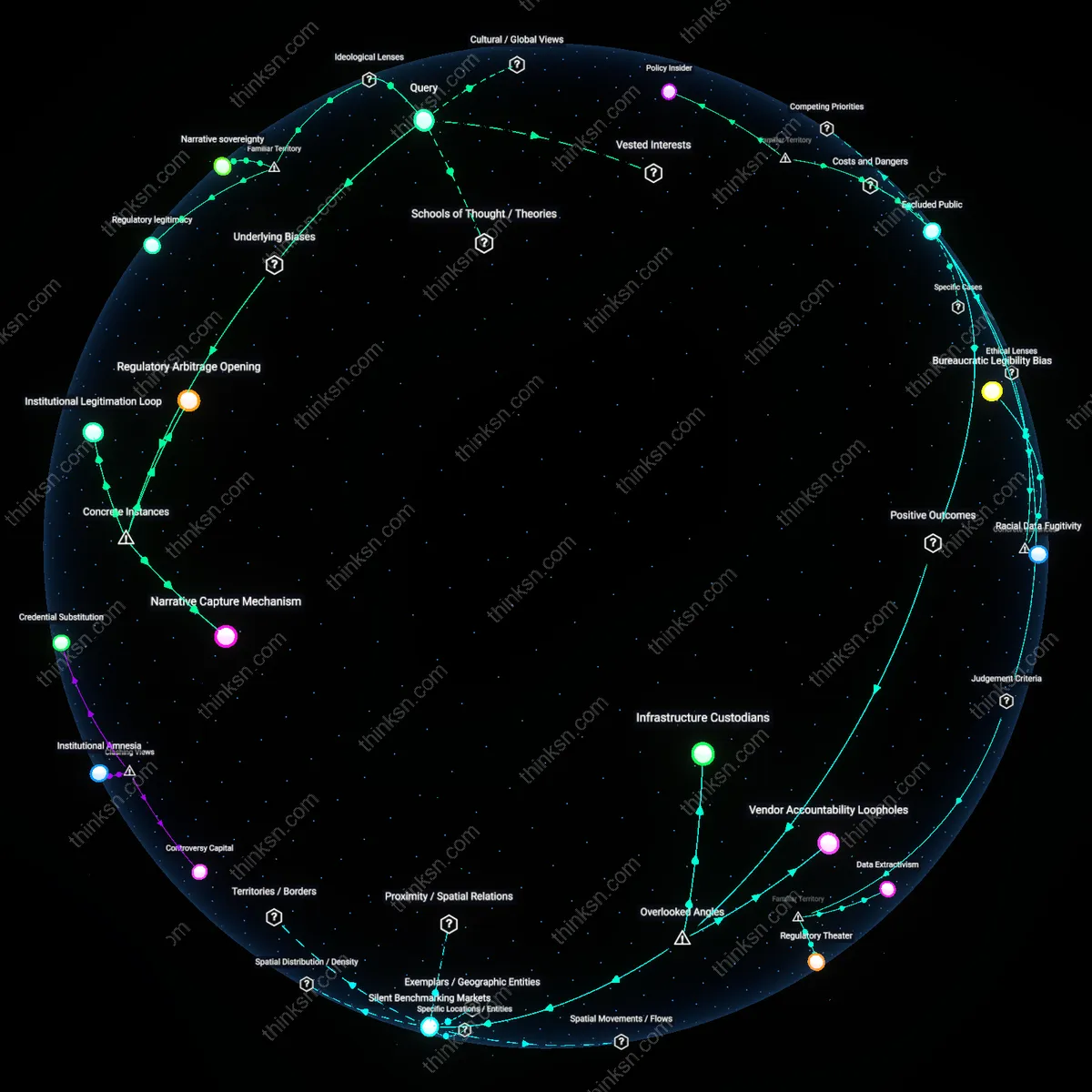

Analysis reveals 10 key thematic connections.

Key Findings

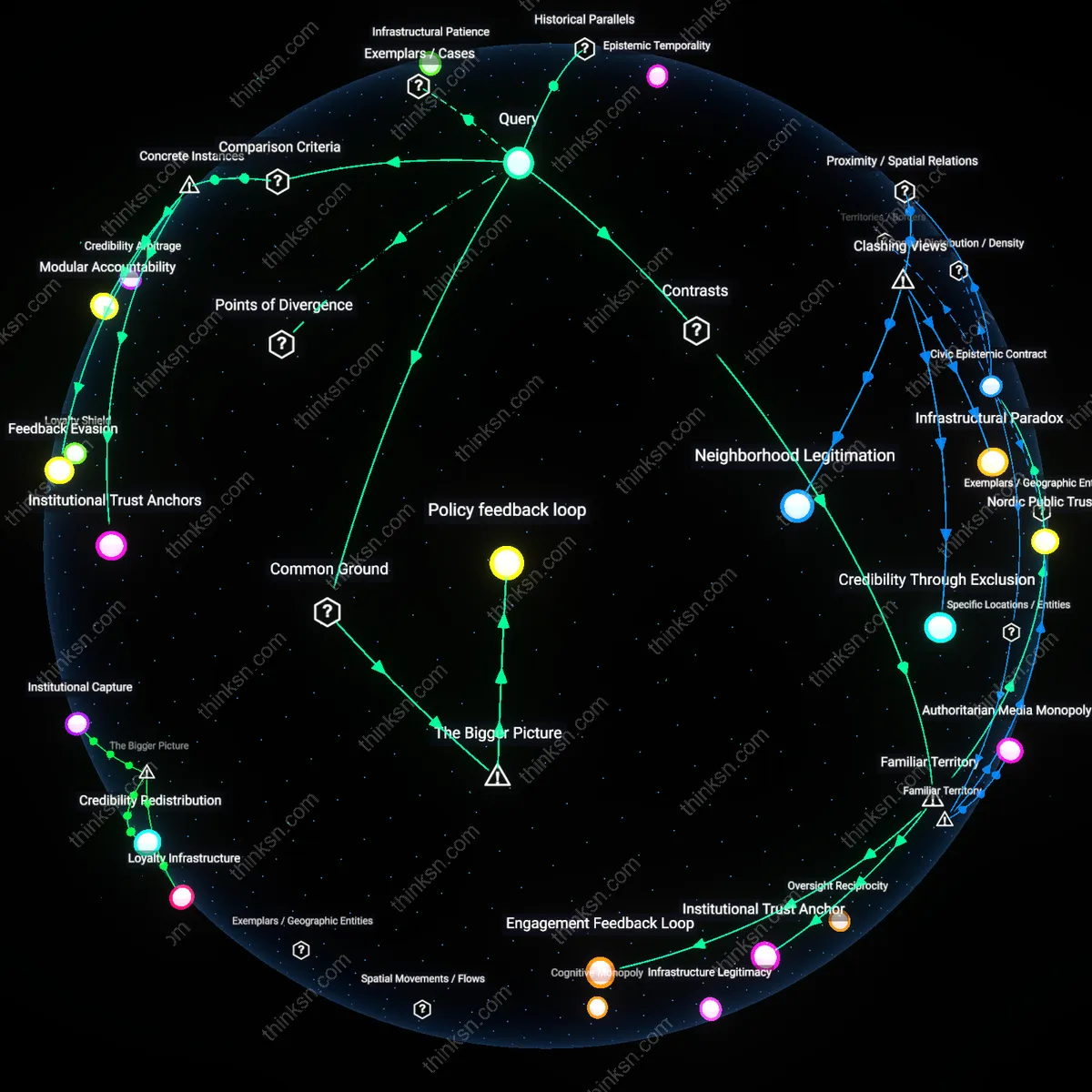

Infrastructural Patience

Public-broadcast funding models counter misinformation more effectively during transitional media eras because they insulate editorial institutions from real-time audience metrics. The postwar BBC, before commercial television expanded in Britain in the 1960s, is the model case: this buffer enabled long-horizon trust-building through consistent factual norms rather than reactive correction. The mechanism eroded when algorithmic systems began prioritizing engagement velocity after the 2008 shift to machine-curated digital platforms. The non-obvious insight is that the decline of public funding did not merely reduce resources; it dismantled a temporally extended feedback loop in which credibility was rewarded over years, not hours. Resilience to misinformation therefore depends less on content accuracy alone than on the time horizon encoded in distribution systems.

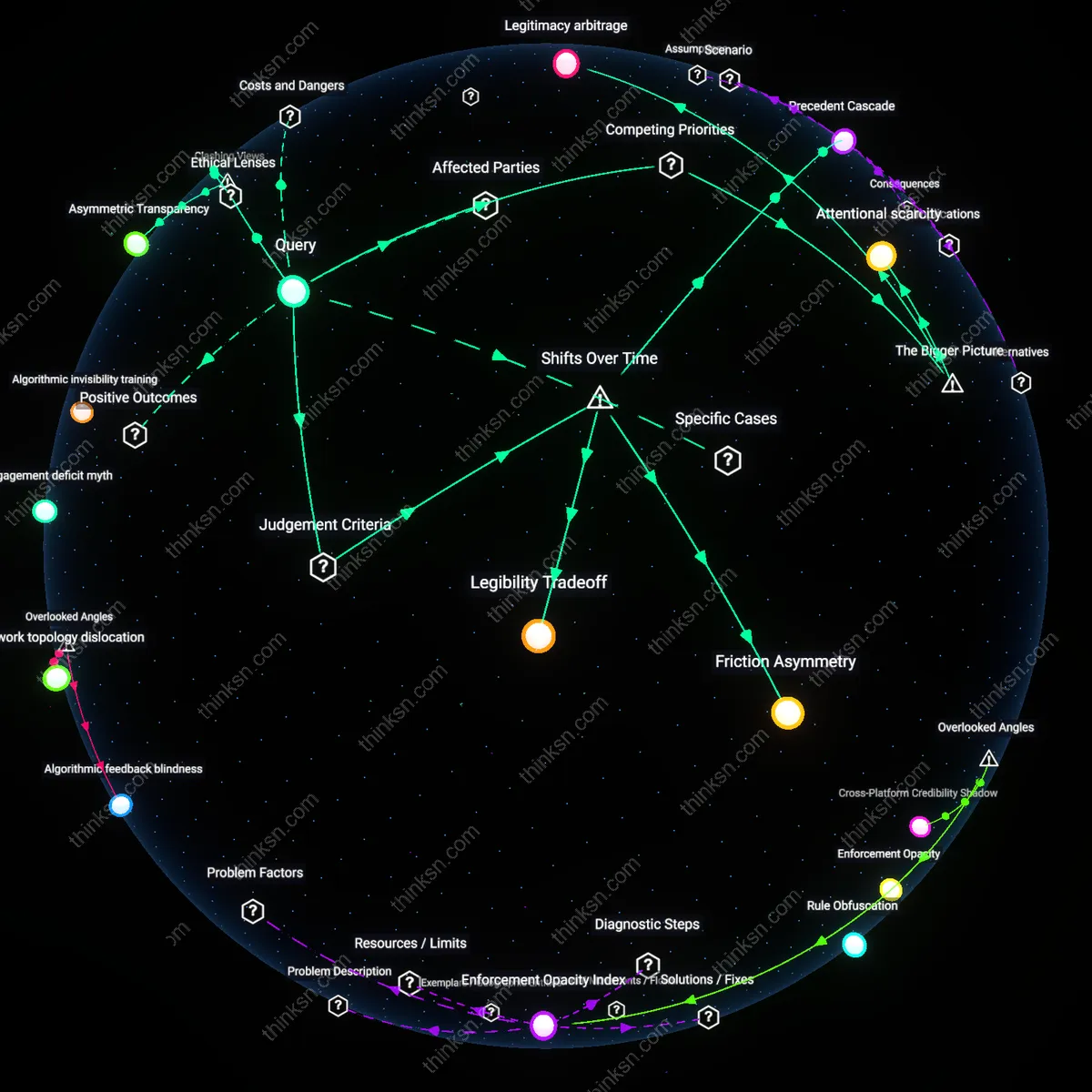

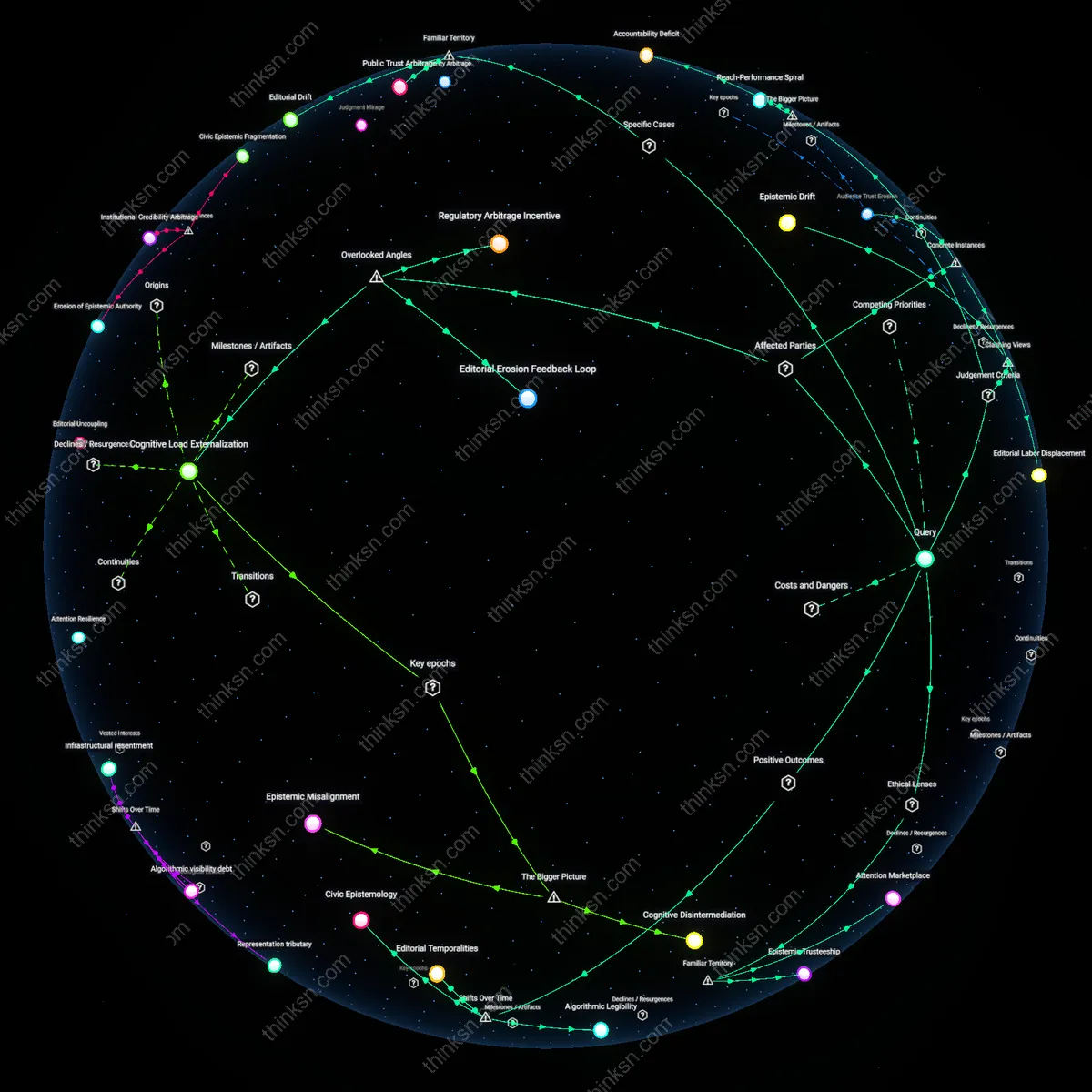

Governance Arbitrage

Algorithm-driven content moderation has displaced public-broadcast norms by exploiting the regulatory lag between national media standards and transnational platform operations. Facebook’s 2016 pivot to third-party fact-checking partnerships in response to EU pressure, made while it maintained hands-off policies in Global South contexts, exemplifies this. The result was a two-tier system in which misinformation governance is contingent on a state's capacity to impose compliance, not on universal editorial principles. The critical historical shift occurred around 2018–2020, when coordinated disinformation campaigns exposed how algorithmic systems could legally externalize harm to weaker regulatory environments. The erosion of public-broadcast universality was therefore not inevitable but strategically offshored.

Epistemic Temporality

The transition from public-broadcast primacy to algorithmic dominance redefined what counts as 'timely' validation of truth. The 1970s PBS editorial review cycle, averaging 72 hours for contested segments, gave way after 2019 to YouTube’s real-time moderation dashboards, which flag content within minutes but do so based on probabilistic similarity to known false clusters. This compressed the epistemic event horizon so drastically that plausibility is now assessed through pattern-matching rather than source verification. The underappreciated consequence is that misinformation is no longer countered through institutional authority but contained through statistical suppression, exposing a new temporal condition in which belief stabilizes faster than refutation can be credibly delivered.
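The difference between pattern-matching and source verification can be made concrete with a toy sketch. The example below is purely illustrative and does not describe how YouTube or any other platform works: the `KNOWN_FALSE` list, the word-overlap (Jaccard) score, and the `0.5` threshold are all assumptions chosen for brevity, and the claims are invented.

```python
def tokens(text):
    """Lowercase a string and split it into a set of words."""
    return set(text.lower().split())


def jaccard(a, b):
    """Share of words two token sets have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical cluster of claims already labeled false (invented examples).
KNOWN_FALSE = [
    "miracle tonic cures all illness overnight",
    "secret tower signals control the weather",
]


def flag(text, threshold=0.5):
    """Flag text whose wording resembles a known false claim.

    Nothing here checks who published the text or whether it is true;
    only surface similarity is measured.
    """
    candidate = tokens(text)
    best = max(jaccard(candidate, tokens(claim)) for claim in KNOWN_FALSE)
    return best >= threshold, best


rumor_variant = flag("miracle tonic cures all illness fast")
debunk = flag("no miracle tonic cures all illness overnight")
unrelated = flag("tower signals improve weather forecasts")
```

In this sketch the reworded rumor is flagged, but so is the debunking sentence, because it shares almost all of its words with the false claim. That is the sense in which statistical suppression contains content by resemblance rather than judging it by source.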

Institutional Trust Anchor

Public-broadcast funding models counter misinformation by sustaining editorial independence from both state and market pressures, as seen in entities like the BBC or NPR, which rely wholly or partly on license fees or public appropriations to maintain consistent, fact-checked reporting. This model operates through legally insulated editorial boards and public service mandates that prioritize accuracy over engagement, in contrast with algorithmic systems that optimize for attention. The non-obvious insight behind familiar associations with 'trusted news' is that the anchor of credibility lies not in content volume or speed but in the perception of stable, accountable institutions: what people implicitly recall when they think of 'the evening news' as a shared reference point.

Engagement Feedback Loop

Algorithm-driven content moderation amplifies or suppresses misinformation based on user engagement patterns, as deployed by platforms like YouTube or Facebook, where recommendation engines prioritize content that retains attention, regardless of accuracy. This system functions through real-time behavioral data loops that reward emotional resonance over factual integrity, making it structurally incapable of upholding truth standards in the way public broadcasters do. While most people associate 'viral misinformation' with algorithms, the underappreciated reality is that the mechanism is neither censorship nor bias but a nominally neutral incentive structure, designed to reflect user interest rather than correct it. Moderation thereby becomes a byproduct of engagement rather than a purposeful act.
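The loop described above can be illustrated with a deliberately simplified simulation. This is a sketch under stated assumptions, not a model of any real recommender: the `Item` fields, the `boost` and `decay` factors, and the two sample items are all hypothetical. The point it shows is that the `accuracy` field exists but is never read by the ranking step.

```python
from dataclasses import dataclass


@dataclass
class Item:
    title: str
    accuracy: float    # hypothetical fact-check score; the ranker never reads it
    engagement: float  # hypothetical running engagement score


def rank_by_engagement(items):
    """Order items purely by engagement, highest first."""
    return sorted(items, key=lambda it: it.engagement, reverse=True)


def simulate_round(items, top_k=1, boost=1.5, decay=0.9):
    """One feedback round: promoted items gain engagement, the rest lose some."""
    ranked = rank_by_engagement(items)
    promoted = {id(it) for it in ranked[:top_k]}
    for it in items:
        it.engagement *= boost if id(it) in promoted else decay
    return ranked


feed = [
    Item("Careful explainer", accuracy=0.95, engagement=1.0),
    Item("Outrage rumor", accuracy=0.10, engagement=1.2),
]

# A small initial edge in engagement compounds over repeated rounds.
for _ in range(5):
    simulate_round(feed)

top = rank_by_engagement(feed)[0]
```

Under these assumptions the less accurate item starts with a slight engagement lead and finishes far ahead, because each promotion feeds the next ranking. No step in the loop penalizes or rewards accuracy, which is the sense in which moderation becomes a byproduct of engagement.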

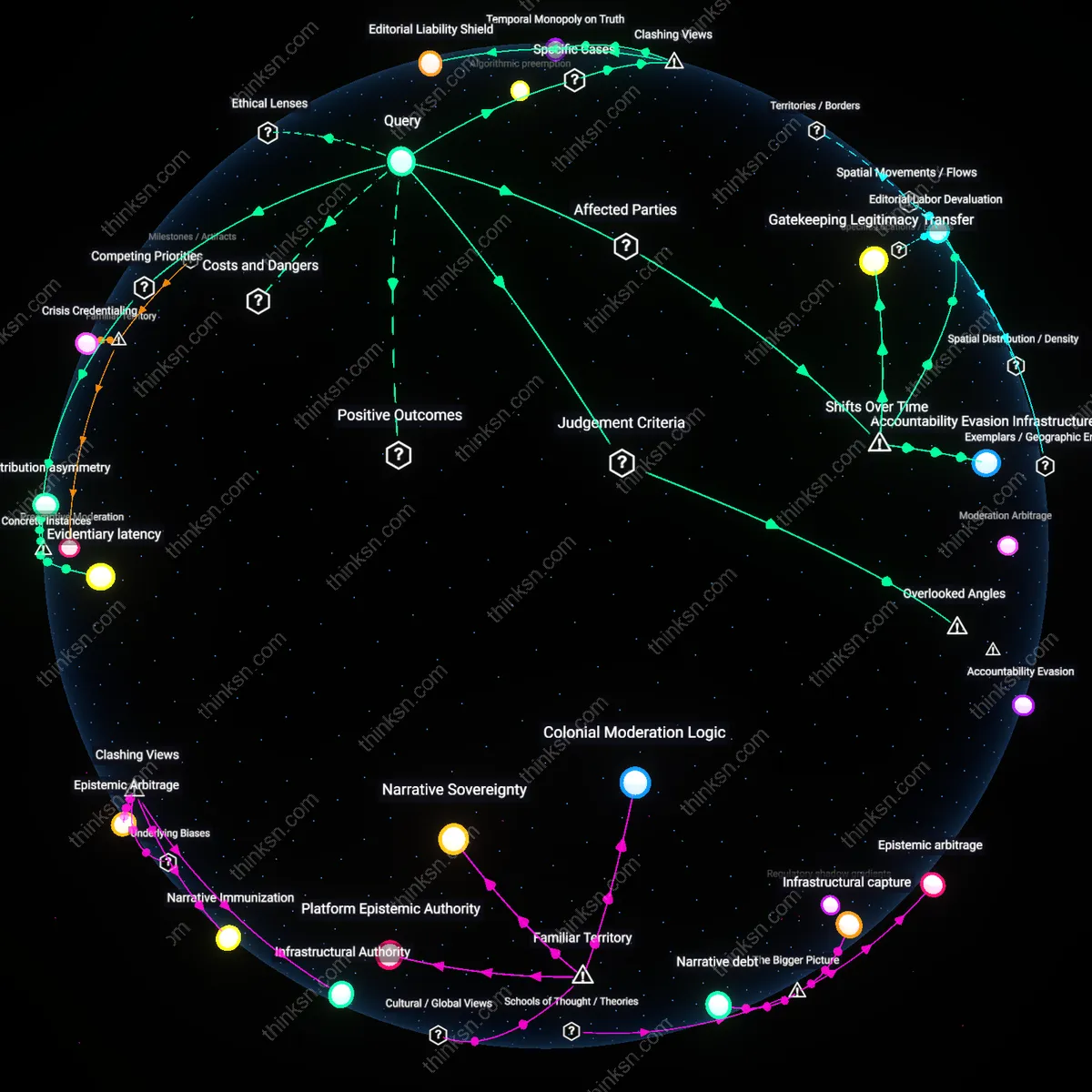

Civic Epistemic Contract

Public broadcasting counters misinformation by fulfilling an implicit social contract in which citizens accept funding mechanisms like TV license fees in exchange for reliable, universal information, as institutionalized in countries like Germany or Japan. This operates through a normative expectation that information essential to democracy should be shielded from both commercial distortion and algorithmic volatility. Unlike the familiar tech narrative of 'fixing platforms,' this model reflects a widely recognized but rarely articulated belief—rooted in postwar democratic reconstruction—that accurate public knowledge is a collective good, not a personalized feed, and that its governance belongs to civic institutions, not code.

Institutional Trust Anchors

Public-broadcast funding models counter misinformation more effectively than algorithm-driven moderation by anchoring content legitimacy in state-guaranteed institutional autonomy. Japan's NHK demonstrated this during the 2011 Fukushima disaster, when its publicly funded weather and crisis reporting maintained public compliance with evacuation orders amid viral rumors. Algorithmically moderated platforms such as YouTube, by contrast, amplified conflicting nuclear safety claims through engagement-based ranking. NHK’s funding insulated it from amplification pressures and let it prioritize authoritative coordination, which suggests that long-term public trust is sustained not by content removal but by consistent, predictable institutional presence.

Feedback Evasion

Algorithm-driven content moderation fails to counter misinformation under high cognitive load when users bypass recommended content through direct sharing. WhatsApp’s role in spreading false medical advice during India’s 2018 measles vaccination campaign is the clearest evidence: end-to-end encryption and private group dynamics rendered platform algorithms blind to viral misinformation. All India Radio’s state-funded rural broadcasts, despite limited interactivity, achieved higher correction reach by circumventing algorithmic dependency entirely. Systems that avoid user feedback loops can therefore outperform adaptive algorithms in low-digital-literacy environments.

Modular Accountability

Public-broadcast funding enables segmented accountability in misinformation response, as seen with Germany’s public broadcasters ARD and ZDF during the 2020 Querdenken protests, where staggered, regionally tailored fact-based programming diluted conspiracy narratives without centralized takedown power. Facebook’s algorithmic approach, by contrast, applied uniform rule enforcement and inadvertently amplified fringe visibility through backlash dynamics. The modular structure of decentralized public producers allowed geographically responsive narratives while preserving legitimacy, demonstrating that distributed editorial authority can reduce polarization more effectively than monolithic algorithmic governance.

Policy Feedback Loop

Public-broadcast funding models indirectly shape the effectiveness of algorithm-driven moderation by setting normative baselines for credible information that regulatory bodies and platforms then reference in crisis events. When the French government funded France Télévisions’ cross-platform fact-checking during elections, it established a publicly legitimized information anchor that social media companies were pressured to align with—evident in Facebook’s partnership with Le Monde’s Les Décodeurs during the 2022 presidential election. This creates a feedback mechanism where public media’s credibility becomes a regulatory asset, enabling states to coerce platform accountability through legitimacy arbitrage rather than direct control. The non-obvious dynamic is that public funding does not just produce trustworthy content but expands the state’s strategic capacity to govern private algorithms by pre-defining what counts as ‘true’ in contested information spaces.