Do Sensational Politics on Social Media Harm Public Discourse?

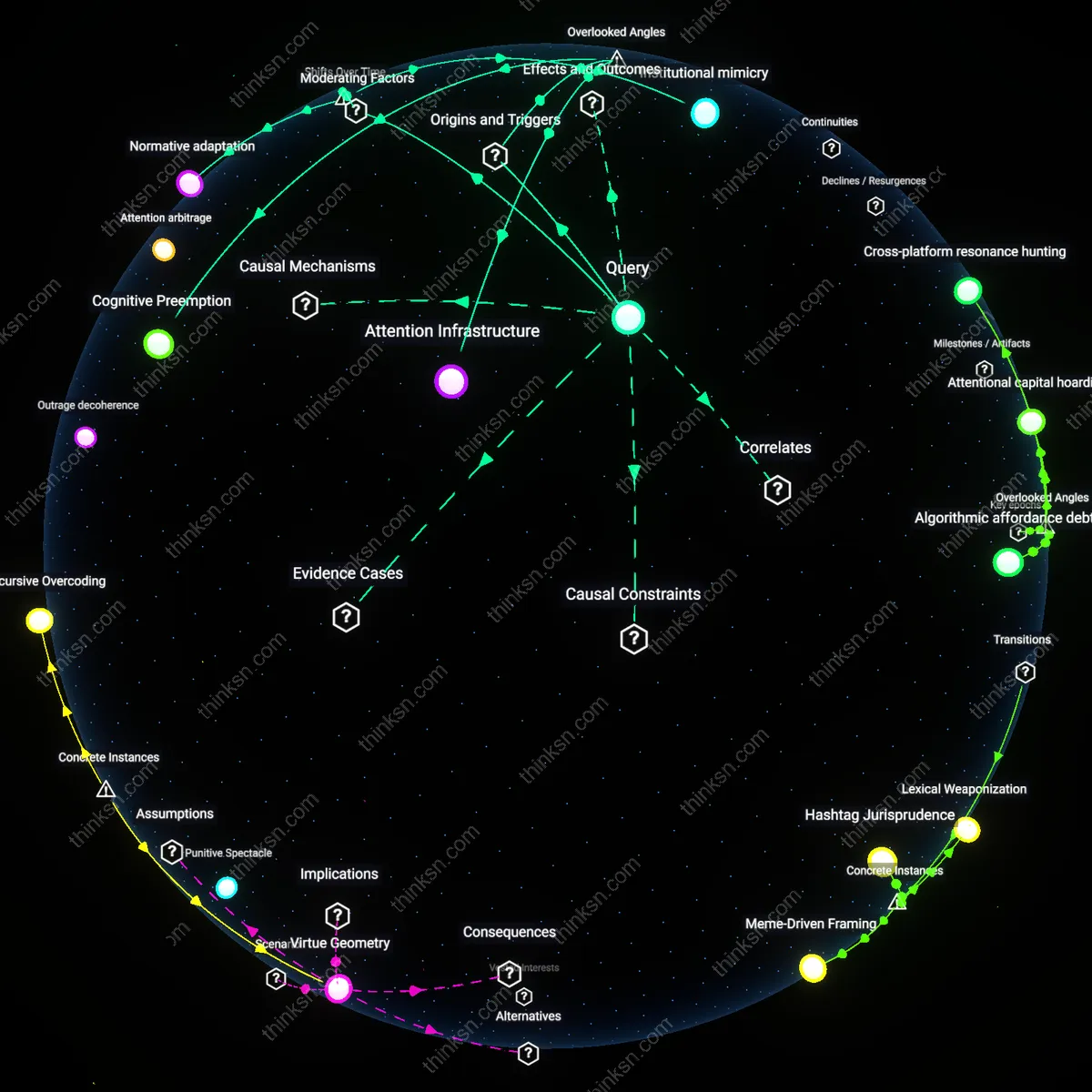

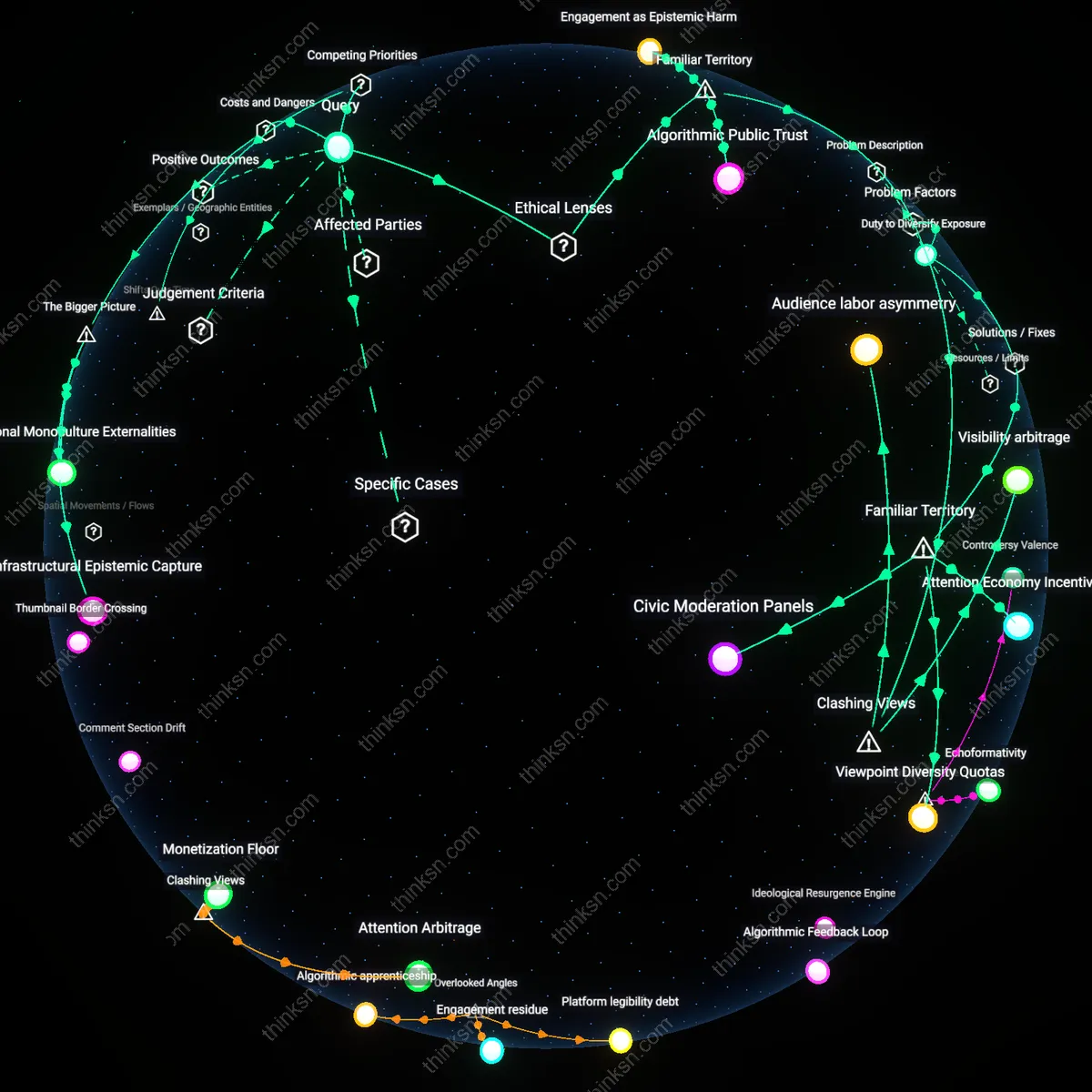

The analysis reveals five key thematic connections.

Key Findings

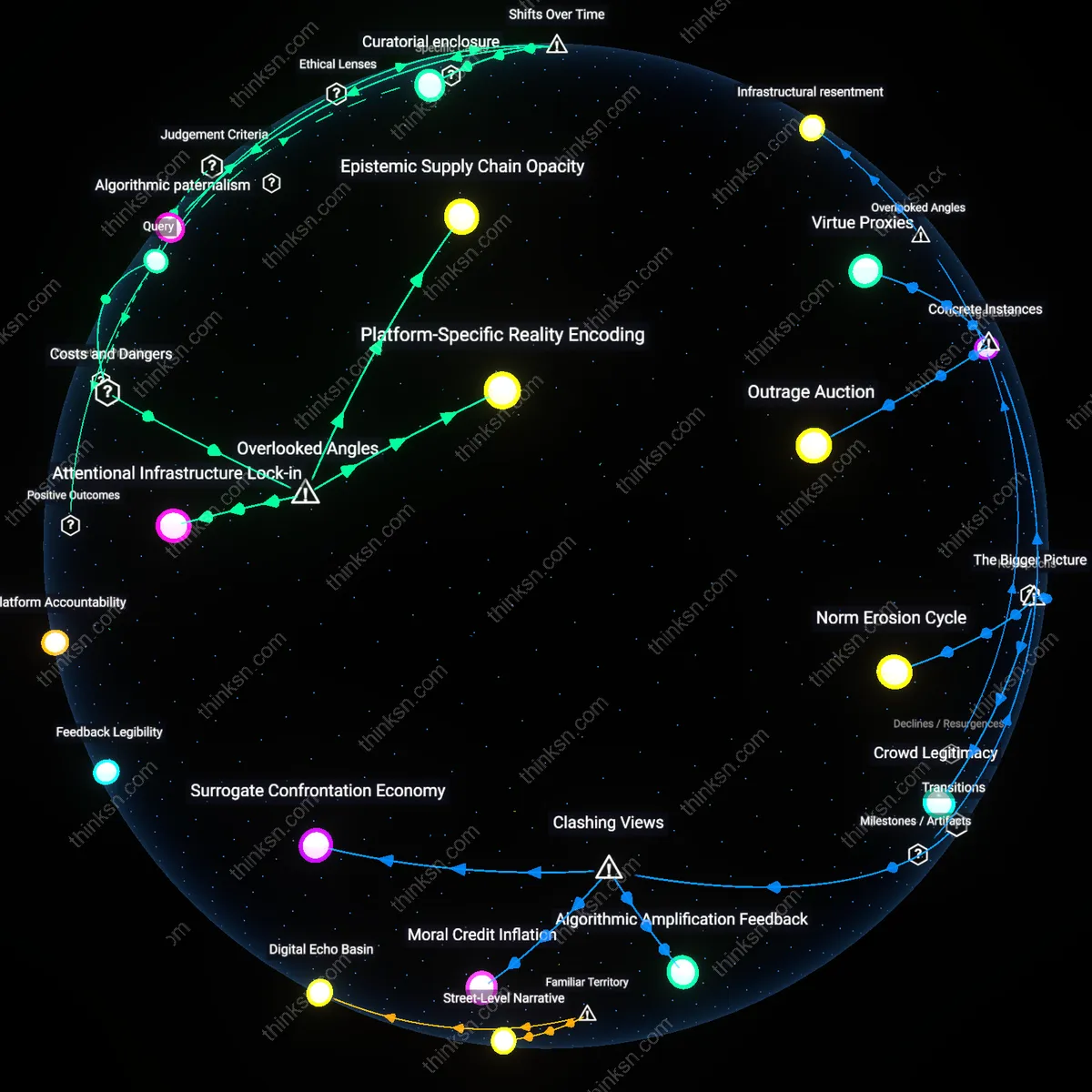

Attentional Externalities

Yes. The algorithmic amplification of sensational content harms public discourse more than any legitimate claim of neutrality can justify, because the economic design of social media platforms treats user attention as a tradable commodity. The result is an extractive attention economy in which political discourse is reduced to click-driven spectacle. The mechanism is real-time auction systems such as Meta’s ad marketplace, which monetize distraction and reward virality regardless of societal cost. Platforms therefore have a structural incentive to displace substantive debate with emotionally reactive content. The underappreciated reality is that these platforms do not merely reflect public discourse: they asymmetrically shape it through engineered interruptions and reward structures that most users do not perceive as coercive, despite their measurable influence on behavioral norms.

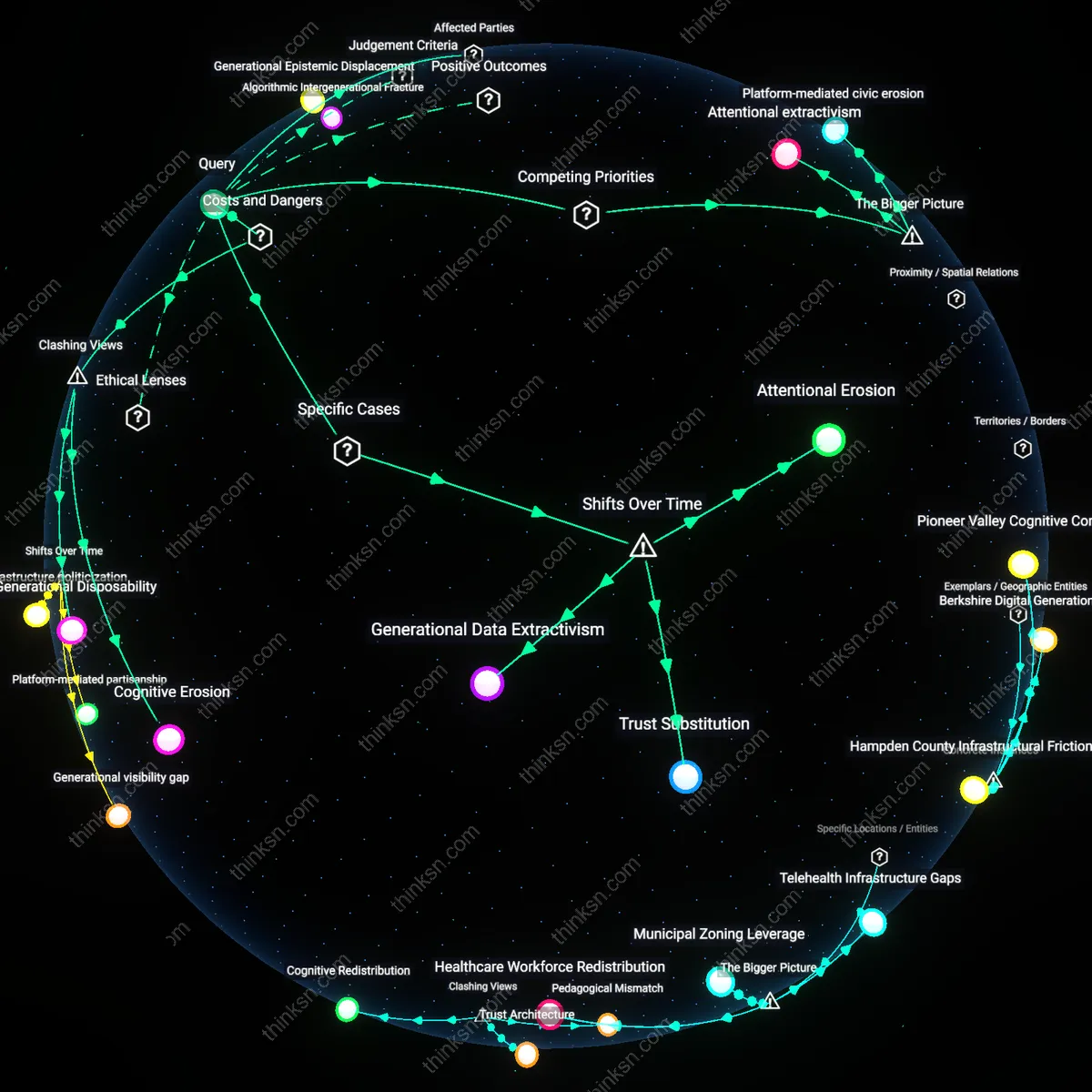

Platform Procedural Illegitimacy

Yes. The harm outweighs neutrality claims because the procedural opacity of algorithmic curation undermines democratic accountability, leaving platforms’ claims of neutrality indistinguishable from arbitrary rule by code. Editorial power is delegated to private engineering teams at companies like TikTok or X (formerly Twitter), who deploy recommendation logic without public oversight or appeal and thereby decide which voices gain visibility under the guise of technical optimization. The underappreciated point is that when algorithmic systems suppress or magnify political speech without transparent criteria, they replicate the functions of state-like authority without the corresponding duties of due process, eroding trust in both institutions and discourse.

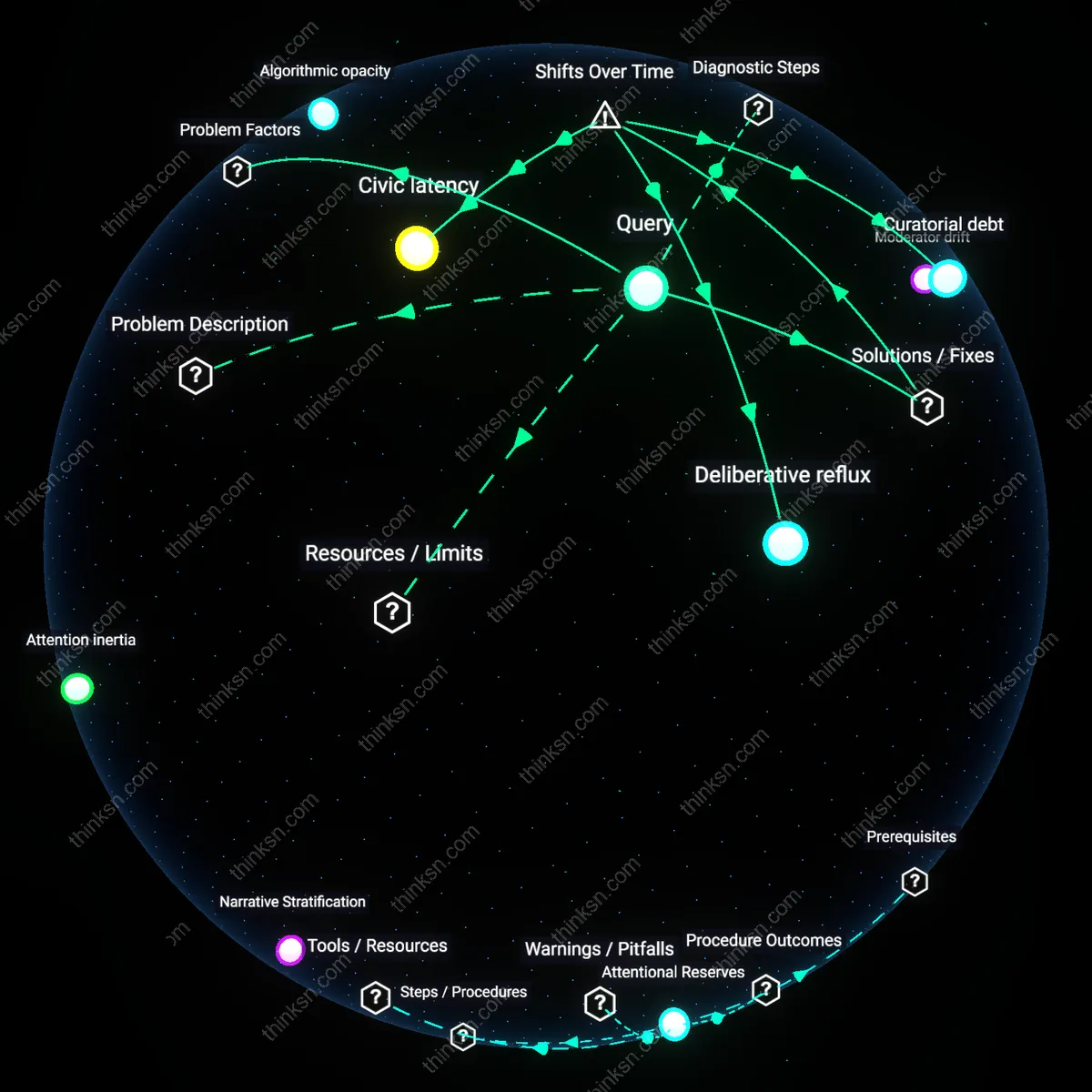

Attention Resilience

The 2020 U.S. Census public awareness campaign successfully leveraged algorithmic amplification on Facebook and Instagram to boost participation in undercounted communities. It demonstrates that sensational political content is not the only type that gets elevated: civic utility can be algorithmically prioritized when objectives are clearly aligned with public goods. Platform algorithms respond to engagement-shaped incentives, not to an inherent bias toward conflict. When institutions design messages to work *with* these mechanisms, using urgency, identity, and visual salience in ways that outcompete outrage, they reclaim attention for collective benefit. What is underappreciated is that algorithmic amplification does not necessarily degrade discourse if countervailing positive content is engineered to meet its logic.

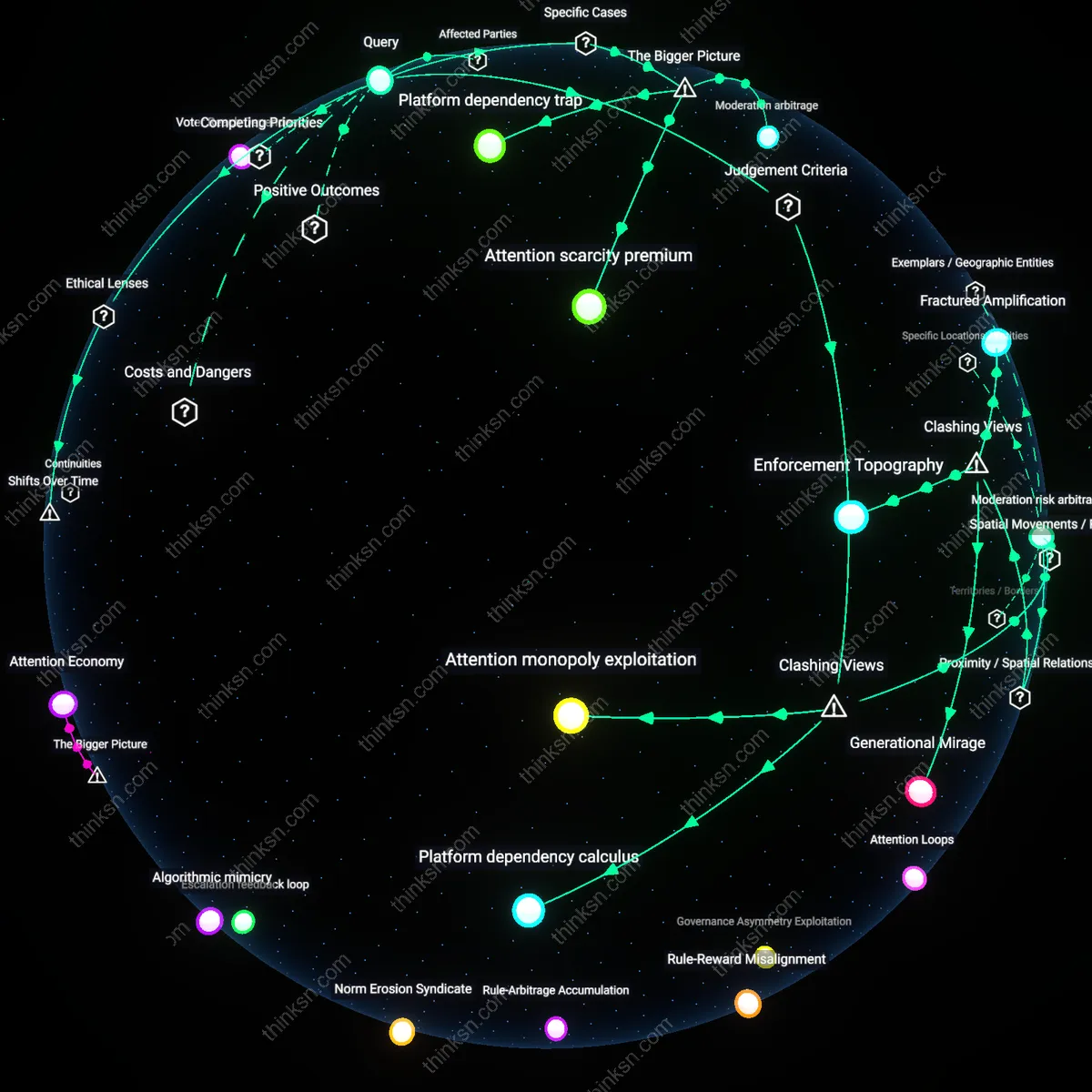

Civic Signal Boost

During the 2022 Kenyan general election, WhatsApp’s partnership with the local fact-checking collective PesaCheck enabled certified civic messages, from polling locations to electoral commission updates, to be algorithmically prioritized within regional group chats, reducing reliance on viral misinformation while maintaining user engagement. This demonstrates that neutral curation is operationally possible when platforms embed trusted local actors into content distribution pipelines, allowing prosocial information to travel natively through the same pathways as sensationalism. What is rarely acknowledged is that “neutrality” in curation can be redefined not as passive non-intervention but as an active architecture that elevates verified civic signals without suppressing speech.

Discursive Redundancy

In 2019, Taiwan’s Digital Minister Audrey Tang deployed a real-time, sentiment-responsive bot network on the PTT Bulletin Board System to reframe polarizing debates on pension reform. The network flooded threads with humorous, consensus-oriented counter-memes that matched the emotional valence of dominant narratives while subtly shifting their frame toward compromise. This shows that algorithmic amplification’s harm to discourse can be disrupted not by removing sensational content but by saturating attention economies with higher-utility alternatives that obey the same virality rules. The underappreciated insight is that balance in public discourse does not require neutrality; it can emerge from strategic, symmetric flooding of meaning.